The WD Black2 Review: World's First 2.5" Dual-Drive

by Kristian Vättö on January 30, 2014 7:00 AM EST

Performance Consistency

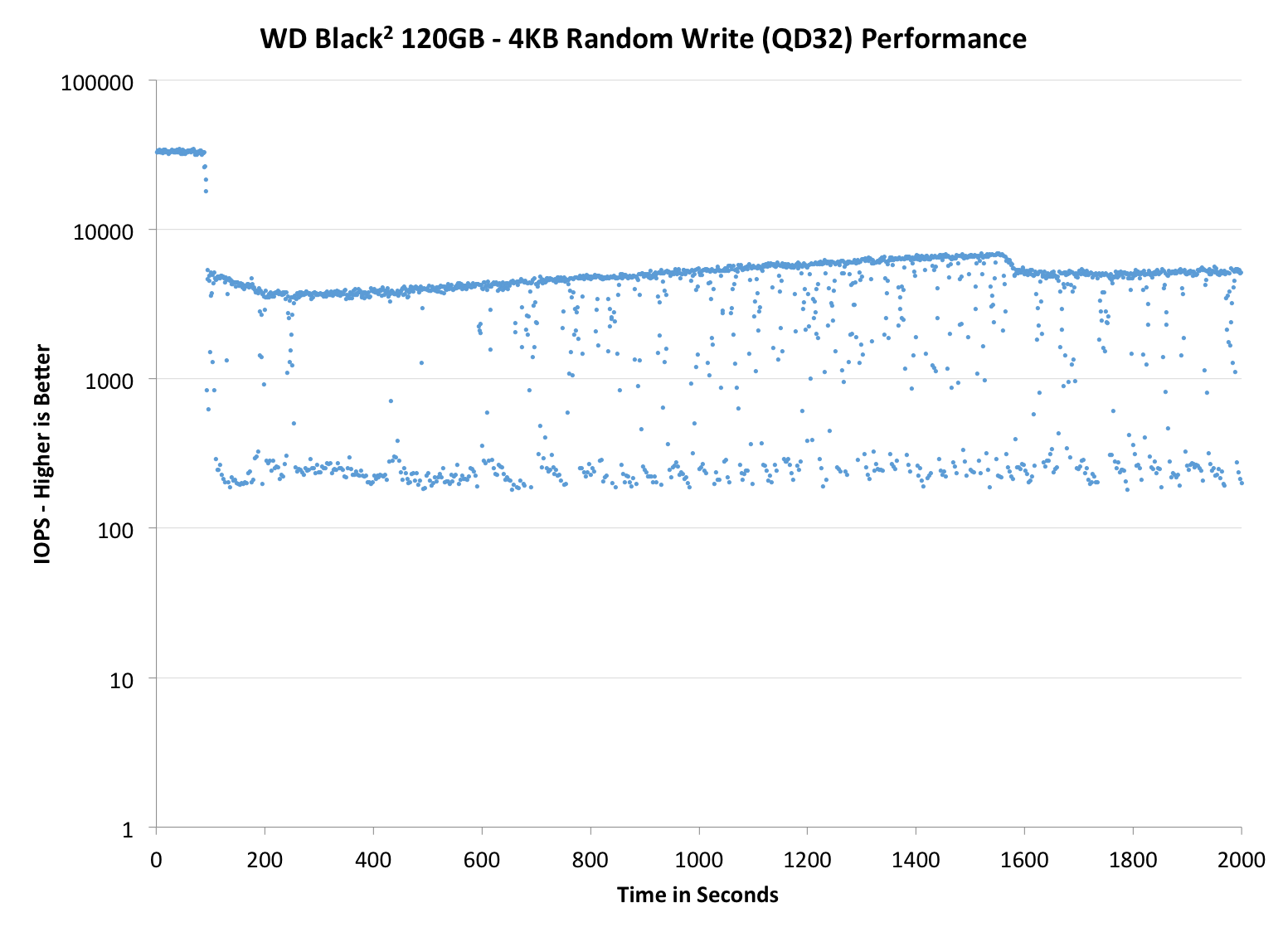

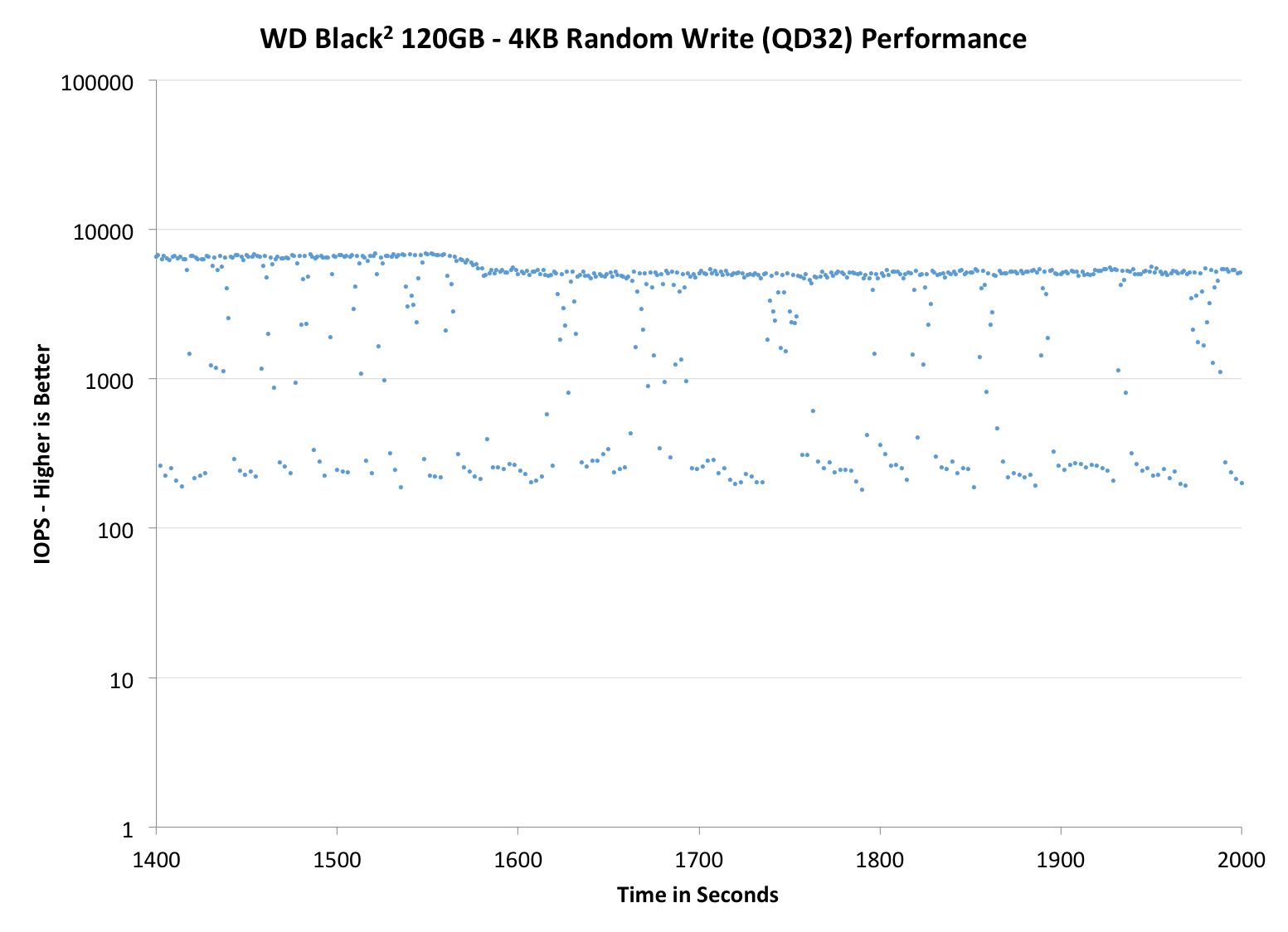

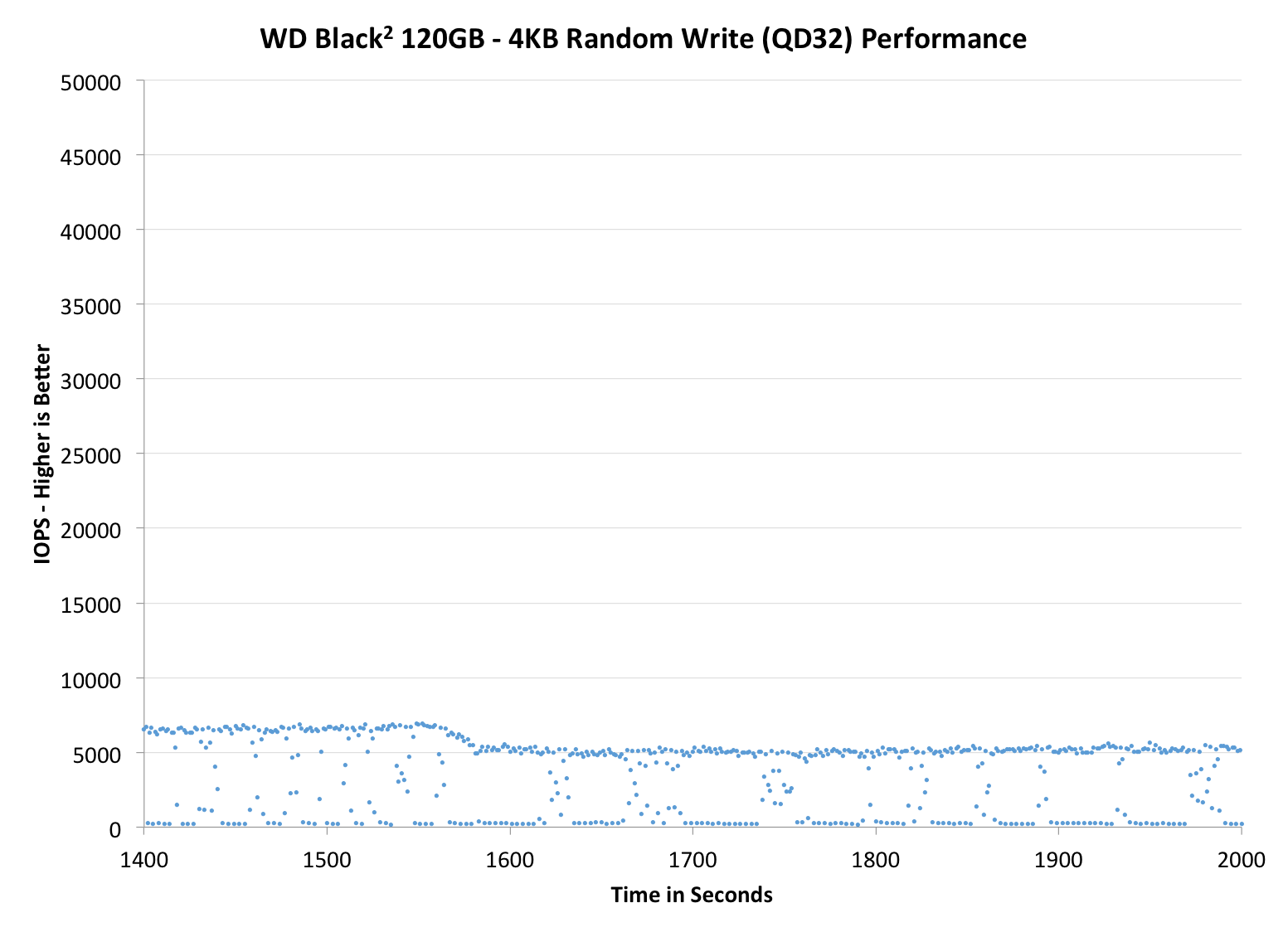

Performance consistency tells us a lot about the architecture of these SSDs and how they handle internal defragmentation. The reason we don't have consistent IO latency with SSDs is that all controllers inevitably have to do some amount of defragmentation or garbage collection in order to continue operating at high speeds. When and how an SSD decides to run its defrag or cleanup routines directly impacts the user experience, as inconsistent performance results in application slowdowns.

To test IO consistency, we fill a secure-erased SSD with sequential data to ensure that all user-accessible LBAs have data associated with them. Next, we kick off a 4KB random write workload across all LBAs at a queue depth of 32 using incompressible data. The test runs for just over half an hour, and we record instantaneous IOPS every second.
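To illustrate what "instantaneous IOPS every second" means, here is a minimal Python sketch that buckets I/O completion timestamps into one-second bins. It is not the tooling used for the review; the function name `iops_per_second` and the sample timestamps are hypothetical.

```python
from collections import Counter


def iops_per_second(completion_times, duration_s):
    """Count completed I/Os in each 1-second bin of the test.

    completion_times: iterable of completion timestamps in seconds,
                      measured from the start of the test (hypothetical input).
    duration_s:       total test length in whole seconds.
    """
    # int() truncates, so a completion at t=3.6 s lands in bin 3.
    counts = Counter(int(t) for t in completion_times if 0 <= t < duration_s)
    # Seconds with no completions must show up as 0 IOPS rather than be skipped,
    # otherwise stalls would disappear from the trace.
    return [counts.get(sec, 0) for sec in range(duration_s)]


# Hypothetical timestamps: three I/Os in second 0, one in second 1,
# none in second 2, two in second 3.
sample = [0.1, 0.4, 0.9, 1.2, 3.5, 3.6]
print(iops_per_second(sample, 4))  # [3, 1, 0, 2]
```

Keeping the empty seconds as explicit zeros matters here, because drops to zero IOPS are exactly the behavior this test is meant to expose.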

We are also testing drives with added over-provisioning by limiting the LBA range. This gives us a look into the drive’s behavior with varying levels of empty space, which is frankly a more realistic approach for client workloads.
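As a rough sketch of how limiting the LBA range leaves extra spare area, the snippet below computes how many LBAs a workload would be allowed to touch. It assumes, for illustration only, that the extra over-provisioning is expressed as a fraction of the user-accessible LBAs; the article does not spell out the exact accounting. The function name and the 120 GB / 512-byte-sector figures are hypothetical.

```python
def limited_lba_count(total_lbas, spare_fraction):
    """Return how many LBAs the workload may write to.

    total_lbas:     number of user-accessible LBAs on the drive.
    spare_fraction: share of those LBAs left untouched (assumed definition
                    of the extra over-provisioning, not taken from the article).
    """
    if not 0 <= spare_fraction < 1:
        raise ValueError("spare_fraction must be in [0, 1)")
    return int(total_lbas * (1 - spare_fraction))


# Hypothetical drive: 120 GB (decimal) with 512-byte sectors.
total = 120 * 10**9 // 512
print(total)                           # 234375000
print(limited_lba_count(total, 0.25))  # 175781250
```

The workload would then be restricted to LBAs 0 through the returned count minus one, leaving the rest of the drive empty after the secure erase.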

Each of the three graphs has its own purpose. The first shows the whole duration of the test on a log scale. The second and third zoom into the beginning of steady-state operation (t=1400s) but on different scales: the second uses a log scale for easy comparison, whereas the third uses a linear scale to better visualize the differences between drives.

For a more detailed description of the test and why performance consistency matters, read our original Intel SSD DC S3700 article.

Drives in the graphs: WD Black2 120GB, Samsung SSD 840 EVO mSATA 1TB, Mushkin Atlas 240GB, Intel SSD 525, Plextor M5M (Default and 25% OP configurations).

The area where low-cost designs usually fall behind is performance consistency, and the JMF667H in the Black2 is no exception. I was actually expecting far worse results, although the JMF667H is certainly one of the worst SATA 6Gbps controllers we've tested lately. The biggest issue is the inability to sustain performance: while the thickest line sits at ~5,000 IOPS, performance constantly drops below 1,000 IOPS and occasionally even to zero. Increasing the over-provisioning helps a bit, although no amount of over-provisioning can fix a design issue this deep.
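The observations above (a dense band around one IOPS level, frequent dips below 1,000 IOPS, occasional zeros) can be quantified from a per-second IOPS trace. The following Python sketch shows one way to do that; the function name, the default thresholds, and the trace are all made up for illustration and are not the review's measured data.

```python
from statistics import median


def consistency_summary(iops, steady_start_s=1400, floor_iops=1000):
    """Summarize the steady-state part of a per-second IOPS trace.

    iops:           list with one IOPS sample per second of the test.
    steady_start_s: second at which steady state is assumed to begin
                    (1400 matches the zoomed graphs; adjust as needed).
    floor_iops:     threshold below which a second counts as a dip.
    """
    steady = iops[steady_start_s:]
    if not steady:
        raise ValueError("trace is shorter than steady_start_s")
    below = sum(1 for v in steady if v < floor_iops)
    zeros = sum(1 for v in steady if v == 0)
    return {
        "median_iops": median(steady),               # roughly the "thickest line"
        "fraction_below_floor": below / len(steady),
        "zero_seconds": zeros,
    }


# Synthetic trace: 1400 s of warm-up followed by 10 s of steady state
# with two dips below 1,000 IOPS and one full stall.
trace = [5000] * 1400 + [5000, 5000, 800, 5000, 0, 5000, 5000, 900, 5000, 5000]
print(consistency_summary(trace))
```

The median is used instead of the mean so that a few deep stalls do not hide where the bulk of the samples sit, while the two dip counters capture the stalls themselves.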

TRIM Validation

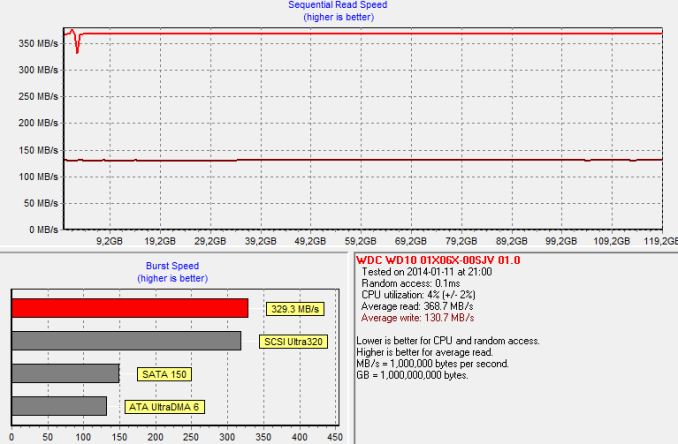

To test TRIM, I first filled all user-accessible LBAs with sequential data and then tortured the drive with 4KB random writes (100% LBA, QD=32) for 30 minutes. After the torture, I TRIM'ed the drive (quick format in Windows 7/8) and ran HD Tach to make sure TRIM is functional.

Based on our sequential Iometer write test, the write performance should be around 150MB/s after a secure erase. TRIM doesn't seem to work perfectly, but performance would likely recover further after some idle time.

100 Comments


Gigaplex - Thursday, January 30, 2014

Once the partitions are set, do they show up in a different Windows box that doesn't have the drivers installed? If so, they're not really drivers, they're just a one-off utility to create the partitions.

arturoh - Friday, January 31, 2014

It does sound like the WD "driver" just sets up the partition table in MBR to point to the correct places. It'd be nice if WD provides a Linux utility or, even better, gives steps using existing Linux tools to correctly setup the MBR.

arturoh - Friday, January 31, 2014

I'd like know if WD plans to provide a utility to set it up under Linux.

Guspaz - Thursday, January 30, 2014

SATA expanders demonstrate that most chipsets do support multiple devices per SATA port.

oranos - Thursday, January 30, 2014

SSD is pretty much standard. HDD is too much of a bottleneck in performance system now.

kepstin - Thursday, January 30, 2014

I'm rather curious whether this dual drive would show up correctly, with space from both disks available, in Linux.

Not that I would pick it up, I've already gone the dual drive route with a SanDisk extreme II and a hard drive in the (former) optical bay.

Kristian Vättö - Thursday, January 30, 2014

It does.

Panzerknacker - Thursday, January 30, 2014

Like I said above, it should, and it's the reason I think it should not require a driver. Would be nice if this can be tested. If someone is gonna test this, please use a OLDER linux distro to make sure that there is no specific driver included.

calyth - Thursday, January 30, 2014

If they required a driver for windows so that the 2 drives shows up on the same partition table, I wouldn't count on Linux support yet.

Unless WD sends a bunch to some linux hw devs ;)

Maltz - Thursday, January 30, 2014

Except that it works fine on a Mac without drivers... once it's partitioned in Windows. I suspect the driver is only important or needed in the partitioning process.