The Xbox One - Mini Review & Comparison to Xbox 360/PS4

by Anand Lal Shimpi on November 20, 2013 8:00 AM EST

Performance - An Update

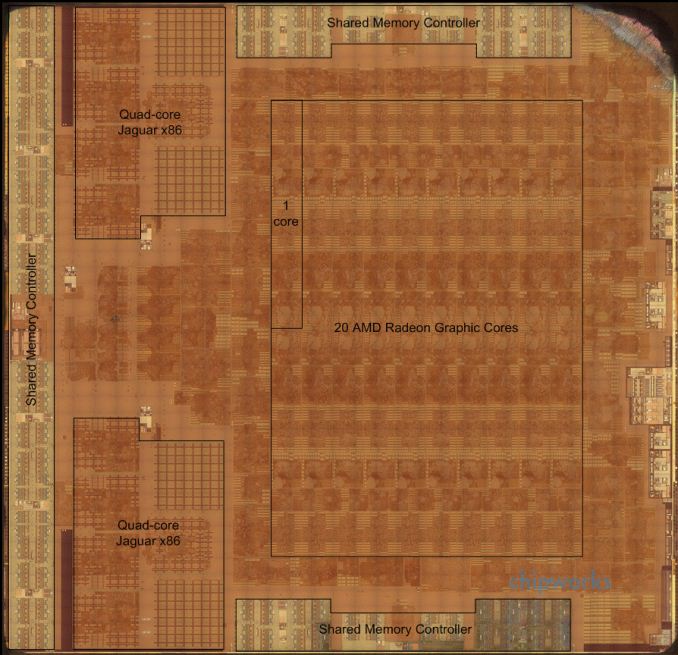

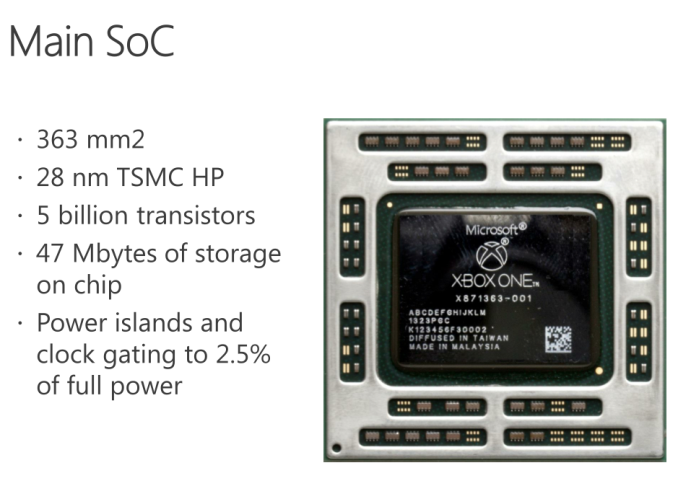

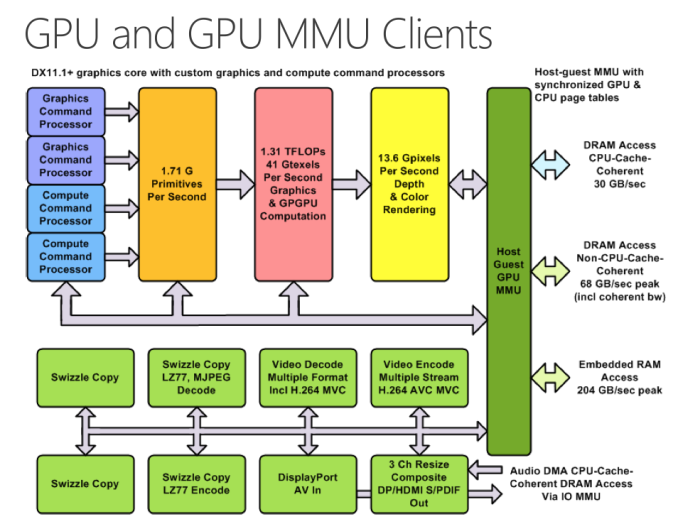

The Chipworks PS4 teardown last week told us a lot about what’s happened between the Xbox One and PlayStation 4 in terms of hardware. It turns out that Microsoft’s silicon budget was actually a little more than Sony’s, at least for the main APU. The Xbox One APU is a 363mm^2 die, compared to 348mm^2 for the PS4’s APU. Both use a similar 8-core Jaguar CPU (2 x quad-core islands), but they feature different implementations of AMD’s Graphics Core Next GPUs. Microsoft elected to implement 12 compute units, two geometry engines and 16 ROPs, while Sony went for 18 CUs, two geometry engines and 32 ROPs. How did Sony manage to fit more compute and ROP partitions into a smaller die area? By not including any eSRAM on-die.

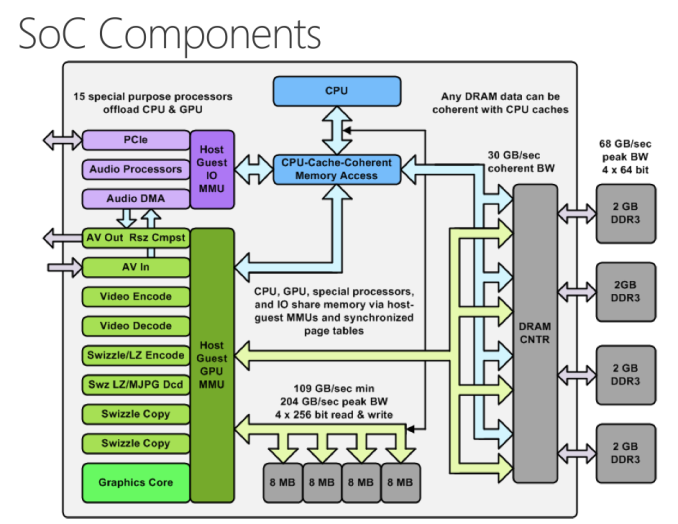

While both APUs implement a 256-bit wide memory interface, Sony chose to use GDDR5 memory running at a 5.5GHz data rate. Microsoft stuck to more conventionally available DDR3 memory running at less than half the speed (2133MHz data rate). To make up for the bandwidth deficit, Microsoft included 32MB of eSRAM on its APU to alleviate some of the GPU bandwidth needs. The eSRAM is accessible in 8MB chunks, with a total of 204GB/s of bandwidth offered (102GB/s in each direction) to the memory. The eSRAM is designed for GPU access only; CPU access requires a copy to main memory.
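The peak system memory bandwidth figures follow directly from bus width and data rate. A minimal sketch of that arithmetic, assuming decimal gigabytes (1 GB = 10^9 bytes) and treating the quoted "MHz data rate" as millions of transfers per second; the function name is illustrative only:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_mt_s: float) -> float:
    """Peak theoretical bandwidth in GB/s (decimal GB, an assumption)."""
    bytes_per_transfer = bus_width_bits / 8          # 256 bits -> 32 bytes
    return bytes_per_transfer * data_rate_mt_s * 1e6 / 1e9

# Figures from the article: both consoles use a 256-bit interface.
xbox_one_ddr3 = peak_bandwidth_gb_s(256, 2133)   # DDR3 at a 2133MHz data rate
ps4_gddr5 = peak_bandwidth_gb_s(256, 5500)       # GDDR5 at a 5.5GHz data rate

print(f"Xbox One DDR3: {xbox_one_ddr3:.1f} GB/s")
print(f"PS4 GDDR5:     {ps4_gddr5:.1f} GB/s")
print(f"DDR3 / GDDR5 data rate ratio: {2133 / 5500:.2f}")
```

These are theoretical peaks; effective bandwidth after overhead and efficiency losses will be lower on both platforms.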

Unlike Intel’s Crystalwell, the eSRAM isn’t a cache - instead it’s mapped to a specific address range in memory. And unlike the embedded DRAM in the Xbox 360, the eSRAM in the One can hold more than just a render target or Z-buffer. Virtually any type of GPU accessible surface/buffer type can now be stored in eSRAM (e.g. z-buffer, G-buffer, stencil buffers, shadow buffer, etc…). Developers could also choose to store things like important textures in this eSRAM. Nothing states that it needs to hold one of these buffers; it can hold anything the developer finds important. It’s also possible for a single surface to be split between main memory and eSRAM.

Sticking important buffers and other frequently used data here can reduce demands on the memory interface, which should help Microsoft get by with only ~68GB/s of system memory bandwidth. Microsoft has claimed publicly that actual bandwidth to the eSRAM is somewhere in the 140 - 150GB/s range, which is likely equal to the effective memory bandwidth (after overhead/efficiency losses) of the PS4’s GDDR5 memory interface. The difference is that you only get that bandwidth to your most frequently used data on the Xbox One. It’s still not clear to me what effective memory bandwidth looks like on the Xbox One. I suspect it’s still a bit lower than on the PS4, but after talking with Ryan Smith (AT’s Senior GPU Editor) I’m now wondering if memory bandwidth isn’t really the issue here.

Microsoft Xbox One vs. Sony PlayStation 4 Spec comparison

| | Xbox 360 | Xbox One | PlayStation 4 |
|---|---|---|---|
| CPU Cores/Threads | 3/6 | 8/8 | 8/8 |
| CPU Frequency | 3.2GHz | 1.75GHz | 1.6GHz |
| CPU µArch | IBM PowerPC | AMD Jaguar | AMD Jaguar |
| Shared L2 Cache | 1MB | 2 x 2MB | 2 x 2MB |
| GPU Cores | | 768 | 1152 |
| GCN Geometry Engines | | 2 | 2 |
| GCN ROPs | | 16 | 32 |
| GPU Frequency | | 853MHz | 800MHz |
| Peak Shader Throughput | 0.24 TFLOPS | 1.31 TFLOPS | 1.84 TFLOPS |
| Embedded Memory | 10MB eDRAM | 32MB eSRAM | - |
| Embedded Memory Bandwidth | 32GB/s | 102GB/s bi-directional (204GB/s total) | - |
| System Memory | 512MB 1400MHz GDDR3 | 8GB 2133MHz DDR3 | 8GB 5500MHz GDDR5 |
| System Memory Bus | 128-bits | 256-bits | 256-bits |
| System Memory Bandwidth | 22.4 GB/s | 68.3 GB/s | 176.0 GB/s |
| Manufacturing Process | | 28nm | 28nm |

In order to accommodate the eSRAM on die, Microsoft not only had to move to a 12 CU GPU configuration, but it also had to drop down to 16 ROPs (half that of the PS4). The ROPs (render outputs/raster operations pipes) are responsible for final pixel output, and at the resolutions these consoles are targeting, having 16 ROPs definitely makes the Xbox One the odd man out in comparison to PC GPUs. Typically AMD’s GPUs targeting 1080p come with 32 ROPs, which is where the PS4 is, but the Xbox One ships with half that. The difference in raw shader performance (12 CUs vs 18 CUs) can definitely show up in games that run more complex lighting routines and other long shader programs on each pixel, but all of the more recent reports of resolution differences between Xbox One and PS4 games at launch are likely the result of being ROP bound on the One. This is probably why Microsoft claimed it saw a bigger increase in realized performance from increasing the GPU clock from 800MHz to 853MHz vs. adding two extra CUs. The ROPs operate at GPU clock, so an increase in GPU clock in a ROP bound scenario would increase performance more than adding more compute hardware.
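The clock-versus-CU trade-off can be illustrated with peak numbers. This is a rough sketch, not a performance model: it assumes 64 shader lanes per GCN compute unit (768 cores / 12 CUs), counts a fused multiply-add as two FLOPs, and assumes each ROP outputs one pixel per clock. The function names are illustrative.

```python
LANES_PER_CU = 64          # 768 GPU cores / 12 CUs on the Xbox One
FLOPS_PER_LANE_CLOCK = 2   # a fused multiply-add counted as 2 FLOPs

def peak_tflops(cus: int, clock_mhz: float) -> float:
    """Peak shader throughput in TFLOPS."""
    return cus * LANES_PER_CU * FLOPS_PER_LANE_CLOCK * clock_mhz * 1e6 / 1e12

def peak_gpixels(rops: int, clock_mhz: float) -> float:
    """Peak pixel fill rate in Gpixels/s, assuming 1 pixel per ROP per clock."""
    return rops * clock_mhz * 1e6 / 1e9

configs = {
    "Xbox One, 12 CUs @ 853MHz (shipping)": (12, 16, 853),
    "Xbox One, 14 CUs @ 800MHz (alternative)": (14, 16, 800),
    "PS4, 18 CUs @ 800MHz": (18, 32, 800),
}

for name, (cus, rops, mhz) in configs.items():
    print(f"{name}: {peak_tflops(cus, mhz):.2f} TFLOPS, "
          f"{peak_gpixels(rops, mhz):.1f} Gpixels/s")
```

Under these assumptions the 14 CU option has more peak shader throughput than the shipping configuration, but only the clock increase raises pixel fill rate, which is what matters when a workload is ROP bound.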

The PS4's APU - Courtesy Chipworks

Microsoft’s admission that the Xbox One dev kits have 14 CUs does make me wonder what the Xbox One die looks like. Chipworks found that the PS4’s APU actually features 20 CUs, despite only exposing 18 to game developers. I suspect those last two are there for defect mitigation, to increase effective yields in the case of bad CUs. I wonder if the same isn’t true for the Xbox One.

At the end of the day Microsoft appears to have ended up with its GPU configuration not for silicon cost reasons, but for platform power/cost and component availability reasons. Sourcing DDR3 is much easier than sourcing high density GDDR5. Sony obviously managed to launch with a ton of GDDR5 just fine, but I can definitely understand why Microsoft would be hesitant to go down that route in the planning stages of Xbox One. To put some numbers in perspective, Sony has shipped 1 million PS4s thus far. That's 16 million GDDR5 chips, or 7.6 Petabytes of RAM. Had both Sony and Microsoft tried to do this, I do wonder if GDDR5 supply would've become a problem. That's a ton of RAM in a very short period of time. The only other major consumers of GDDR5 are video cards, and the list of cards sold in the last couple of months that would ever use that RAM is a narrow one.
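For reference, the GDDR5 totals work out as follows. This is a quick sketch that assumes binary units (1 PB treated as 1024^2 GB), which is what makes 8GB per console come out to roughly 7.6 PB, and 16 chips per console as implied by the chip count above:

```python
CONSOLES_SHIPPED = 1_000_000   # PS4s shipped so far, per the article
CHIPS_PER_CONSOLE = 16         # implied by 16 million chips for 1 million consoles
GB_PER_CONSOLE = 8             # PS4 system memory

total_chips = CONSOLES_SHIPPED * CHIPS_PER_CONSOLE
chip_density_mb = GB_PER_CONSOLE * 1024 // CHIPS_PER_CONSOLE   # per-chip capacity
total_pb = CONSOLES_SHIPPED * GB_PER_CONSOLE / 1024**2         # binary petabytes

print(f"GDDR5 chips: {total_chips:,}")
print(f"Per-chip density: {chip_density_mb} MB")
print(f"Total RAM: {total_pb:.1f} PB")
```

Using decimal units instead would give a flat 8 PB, so the quoted figure depends on which convention is applied.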

Microsoft will obviously have an easier time scaling its platform down over the years (eSRAM should shrink nicely at smaller geometry processes), but that’s not a concern to the end user unless Microsoft chooses to aggressively pass along cost savings.

286 Comments

nathanddrews - Wednesday, November 20, 2013

Nice article, thanks for posting!

Will you be going into any of the media streaming capabilities of the different platforms? I've heard that Sony has abandoned almost all DLNA abilities and doesn't even play 3-D Blu-ray discs? (WTF) Is Microsoft going that route as well or have they expanded their previous offerings? Being able to play MKV Blu-ray rips would be interesting...

Also, what's the deal with 4K and HDMI? As I understand it, the new consoles use HDMI 1.4a, so that means only 4K at 24Hz (30Hz max), so no one is going to be gaming at 4K, but it would allow for 4K movie downloads.

I've spent the last couple years investing heavily into PC gaming (Steam, GOG, etc.) after a long stint of mostly console gaming. A lot of my friends who used to be exclusive console gamers have also made the switch recently. They're all getting tired of being locked into proprietary systems and the lack of customization. I've hooked a bunch of them up with $100 i3/C2Q computers on Craigslist and they toss in a GTX 670 or 7950 (whatever their budget allows) and they're having a blast getting maxed (or near maxed) 1080p gaming with less money spent on hardware and games. Combined with XBMC and Netflix via any browser they prefer, it's definitely a lot easier for non-enthusiasts to get into PC gaming now (thanks, Big Picture Mode!). Obviously, there's still a long way to go to get the level of UI smoothness/integration of a single console, but BPM actually does a pretty good job switching between XBMC, Hyperspin, Chrome, and all that.

SunLord - Wednesday, November 20, 2013

A 4k movie is at least 100+GB and that's just for one movie... No one sane is going to be downloading them, or at least not more than 2 a month.

nathanddrews - Wednesday, November 20, 2013

Except they aren't 100+GB. The 4K movies Sony is offering through its service are 40-60GB for the video with a couple audio tracks. You forget that most Blu-ray video files are in the 20-30GB range, only a handful even get close to 45GB. And that's using H.264, not H.265 which offers double the compression without sacrificing PQ.

Don't measure other people's sanity based upon your own. I download multiple 15-25GB games per month via Steam without even thinking about it. 4K video downloads are happening now and will likely continue with or without your blessing. :/

3DoubleD - Wednesday, November 20, 2013

The thing is, 4k is roughly 4x the pixels of 1080p. Therefore, a 4k video at the appropriate bit-rate will be ~4x the size of the 1080p version. So yes, a 4k movie should be about 80 - 120 GB.

Now the scaling won't be perfect given that we aren't requiring 4x the audio, but the audio on 1080p BRs is a small portion relative to the video.

The 60 GB 4k video will probably be an improvement over a 30 GB 1080p video, but the reality is that it is a bitrate starved version, sort of like 1080p netflix vs 1080p BR.

The thing is, what format is available to deliver full bitrate 4k? Quad layer BRs? Probably not. Digital downloads... not with internet caps. Still, I'm in no rush, my 23 TB (and growing... always growing) media server would be quickly overwhelmed either way. Also, I just bought a Panasonic TC60ST60, so I'm locking into 1080p for the next 5 years to ride out this 4k transition until TVs are big enough or I can install a projector.

nathanddrews - Wednesday, November 20, 2013

When you say "full bitrate 4k", do you even know what you're saying? RED RAW? Uncompressed you're talking many Gbps, several TBs of storage for a feature-length film. DCI 4K? Hundreds of GBs. Sony has delivered on Blu-ray quality picture quality (no visible artifacts) at 4K under 60GB, it's real and it looks excellent. Is it 4K DCI? Of course not, but Blu-ray couldn't match 2K DCI either. There are factors beyond bit rate at play.

Simple arithmetic can not be used to relate bit rate to picture quality, especially when using different codecs... or even the same codec! Using the same bit rate, launch DVDs look like junk compared to modern DVDs. Blu-ray discs today far outshine most launch Blu-ray discs at the same bit rate. There's more to it than just the bit rate.

3DoubleD - Wednesday, November 20, 2013

You certainly know what I meant by "full bitrate" when I spent half my post describing what I meant. Certainly not uncompressed video, that is ridiculous.

There is undoubtedly room for improvements available using the same codec to achieve more efficient encoding of BR video. I've seen significant decreases in bitrate accomplished with negligible impact to image quality with .264 encoding of BR video. That said, to this day these improvements rarely appear on production BR discs, and are instead made by videophile enthusiasts.

If what you're saying is that all production studios (not just Sony) have gotten their act together and are more efficiently encoding BR video, then that's great news! Now when ripping BRs I don't have to re-encode the video more efficiently because they were too lazy to do it in the first place!

If this is the case, then yes, 60 GB is probably sufficient to produce artifact free UHD; however, this practice is contrary to the way BR production has been since the beginning and I'd be surprised if everyone follows suit. Yes, BR PQ/bitrate has been improving over the years, but not to the level of a 60GB feature length movie completely artifact free UHD.

Still, 60 GB is both too large for dual layer BRs and far too large for the current state of internet (with download caps). I applaud Sony for offering an option for the 4K enthusiast, but I'm still unclear as to what the long term game plan will be. I assume a combination of maintaining efficient encoding practices and H.265 will enable them to squeeze UHD content onto a double layer BR? I hope (and prefer) that viable download options appear, but that is mostly up to ISPs removing their download caps unfortunately.

Overall, it's interesting, but still far from accessible. The current extreme effort required to get UHD content and the small benefit (unless you have a massive UHD projector setup) really limits the market. I'm saying this as someone who went to seemingly extreme lengths to get HD content (720p/1080p) prior to the existence of HDDVD and BR. Of course consumers blinded by marketing will still buy 60" UHD TVs and swear they can see the difference sitting 10 - 15+ ft away, but mass adoption is certainly years away. Higher PQ display technology is far more interesting (to me).

Keshley - Wednesday, November 20, 2013

You're assuming that all 45 GB of the data is video, when usually at least half of that is audio. Audio standards haven't changed, still DTS-MA, TrueHD, etc. Typically the actual video portion of a movie is around 10GBs, so we're talking closer to the 60GB number that was mentioned above.

3DoubleD - Wednesday, November 20, 2013

The video portion of a BR is by far the bulk and audio is certainly not half the data. For example, Man of Steel has 21.39 GB of video and the English 7.1 DTS HD track is 5.57 GB. The entire BR is 39 GB; the remainder is a bunch of extra video features and some extra DTS audio tracks for other languages. So keeping the bitrate to pixel ratio the same, 4x scaling gives us ~85 GB. To fit within 60GB, the video portion could only be 54.5GB to leave room for DTS HD audio (English only). That would be ~64% of the bitrate/pixel for UHD compared to 1080p, assuming 4x scaling and the same codec and encoding efficiency. Perhaps in some cases you can get away with less bitrate per pixel given the sheer number of pixels, but it certainly seems on the bitrate starved side to me. Even if video without noticeable artifacts is possible for a 2h20min movie (20 min of credits, so ~2h) like Man of Steel, a longer movie or one that is more difficult to encode without artifacts (grainy, dark) would struggle.

Keep in mind, that is JUST the core video/audio. We've thrown out all the other video that would normally come with a BR (which is fine by me, just give me the core video and audio and I'm happy). If they insist on keeping the extra features on a UHD BR release, they would certainly have to include them on a separate disc since even an average length movie would struggle to fit on a 50GB disc. To fit a BR with just video and English DTS HD audio, we are talking 52% bitrate/pixel for UHD compared to 1080p. We would certainly need .265 encoding in that case.

So I would probably concede that UHD without artifacts with only 60GB is possible for shorter films or if you can get away with less bitrate/pixel due to the higher resolution. For longer films and/or difficult to encode films, I could see this going up towards 100 GB. Putting more effort into encoding efficiency and switching to .265 will certainly be important steps towards making this possible.

Kjella - Friday, November 22, 2013

For what it's worth, BluRay is far beyond the sweet spot in bit rate. Take a UHD video clip, resize to 1080p and compress it to BluRay size. Now compress the UHD video to BluRay size and watch them both on a UHDTV. The UHD clip will look far better than the 1080p clip; at 1080p the codec is resolution starved. It has plenty of bandwidth but not enough resolution to make an optimal encoding. The other part is that if you have a BluRay disc, it doesn't hurt to use it. Pressing the disc costs the same if the video is 40GB total instead of 30GB and it could only get slightly better, while if you're streaming video it matters. Hell, even cartoons are often 20GB when you put them on a BluRay...

ydeer - Thursday, November 21, 2013

I pay 29.99 for my 150/30 Mbit connection with 3 TB of traffic. My average download volume was around 450 GB over the last few months and I sit close enough to my 60" screen (which isn’t 4k - yet) to notice a difference between the two resolutions.

So yes, I would absolutely buy/rent 4k movies if Sony could offer them at a decent bitrate. I would even buy a PS4 for that sole purpose.