The Xbox One - Mini Review & Comparison to Xbox 360/PS4

by Anand Lal Shimpi on November 20, 2013 8:00 AM EST

Performance - An Update

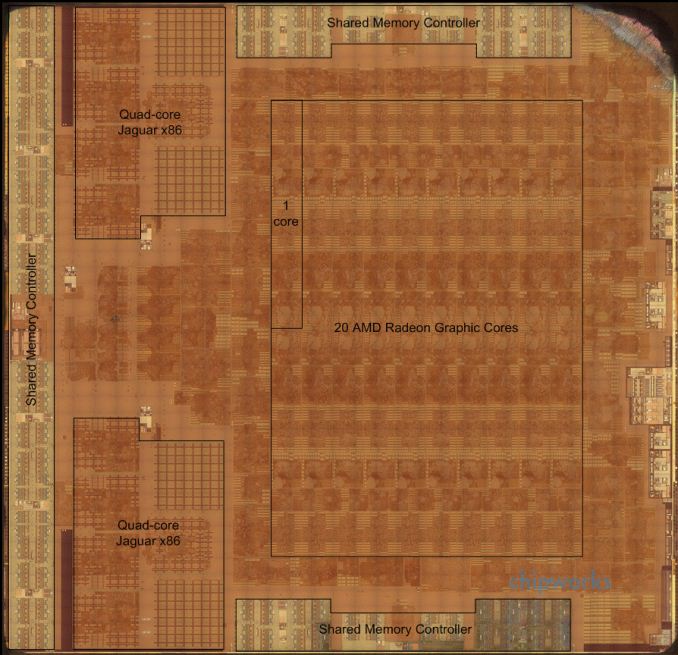

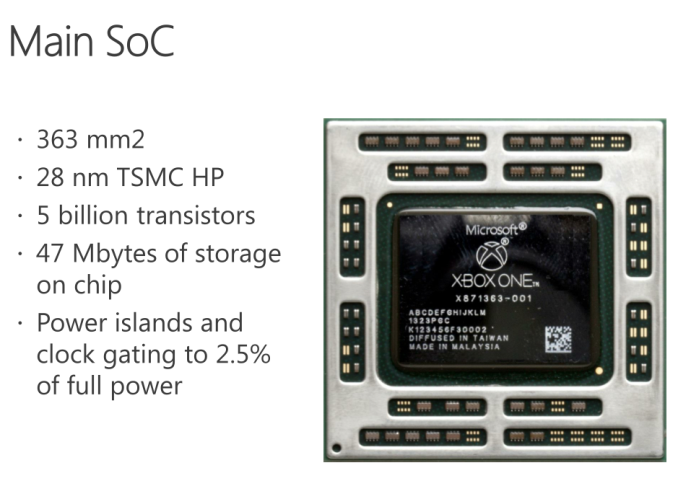

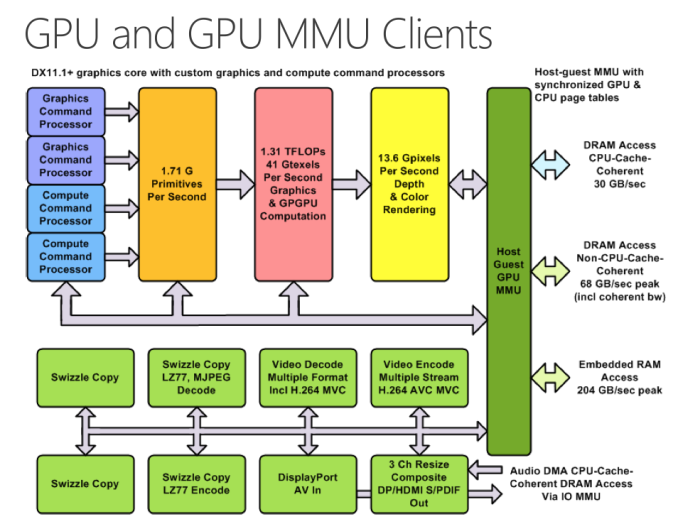

The Chipworks PS4 teardown last week told us a lot about what’s happened between the Xbox One and PlayStation 4 in terms of hardware. It turns out that Microsoft’s silicon budget was actually a little more than Sony’s, at least for the main APU. The Xbox One APU is a 363mm^2 die, compared to 348mm^2 for the PS4’s APU. Both use a similar 8-core Jaguar CPU (2 x quad-core islands), but they feature different implementations of AMD’s Graphics Core Next GPUs. Microsoft elected to implement 12 compute units, two geometry engines and 16 ROPs, while Sony went for 18 CUs, two geometry engines and 32 ROPs. How did Sony manage to fit more compute and ROP partitions into a smaller die area? By not including any eSRAM on-die.

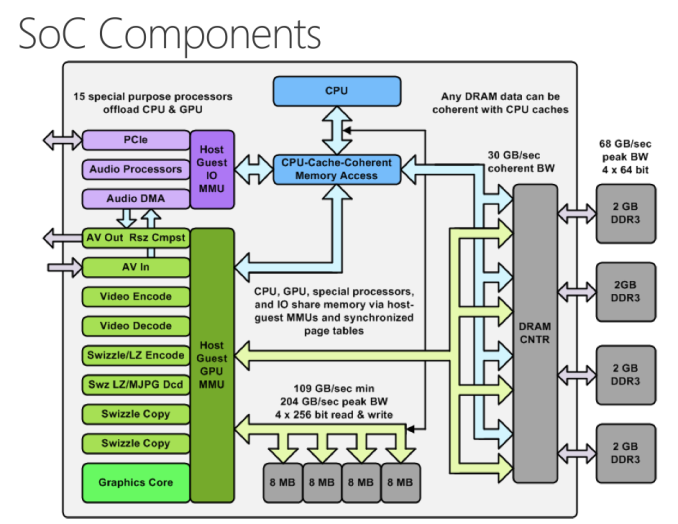

While both APUs implement a 256-bit wide memory interface, Sony chose to use GDDR5 memory running at a 5.5GHz data rate. Microsoft stuck to more conventionally available DDR3 memory running at less than half the speed (2133MHz data rate). To make up for the bandwidth deficit, Microsoft included 32MB of eSRAM on its APU to alleviate some of the GPU bandwidth needs. The eSRAM is accessible in 8MB chunks, with a total of 204GB/s of bandwidth offered (102GB/s in each direction). The eSRAM is designed for GPU access only; CPU access requires a copy to main memory.
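The peak system memory bandwidth of each console follows directly from the bus width and data rate quoted above. Here is a minimal Python sketch of that arithmetic; the function name is my own, and the result is a theoretical peak, not the effective bandwidth a game would see:

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_mt_s: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8  # a 256-bit bus moves 32 bytes per transfer
    return bytes_per_transfer * data_rate_mt_s * 1e6 / 1e9


# Both consoles use a 256-bit interface; only the data rate differs.
xbox_one_ddr3 = peak_bandwidth_gb_s(256, 2133)  # DDR3 at a 2133MHz data rate
ps4_gddr5 = peak_bandwidth_gb_s(256, 5500)      # GDDR5 at a 5.5GHz data rate

print(f"Xbox One DDR3: {xbox_one_ddr3:.1f} GB/s")
print(f"PS4 GDDR5:     {ps4_gddr5:.1f} GB/s")
print(f"Ratio:         {ps4_gddr5 / xbox_one_ddr3:.2f}x")
```

With the same bus width on both sides, the gap comes entirely from the memory type's data rate, which is the deficit the eSRAM is meant to offset.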

Unlike Intel’s Crystalwell, the eSRAM isn’t a cache - instead it’s mapped to a specific address range in memory. And unlike the embedded DRAM in the Xbox 360, the eSRAM in the One can hold more than just a render target or Z-buffer. Virtually any type of GPU accessible surface/buffer type can now be stored in eSRAM (e.g. z-buffer, G-buffer, stencil buffers, shadow buffer, etc…). Developers could also choose to store things like important textures in this eSRAM. Nothing states that it has to hold one of these buffers; it can hold anything the developer finds important. It’s also possible for a single surface to be split between main memory and eSRAM.

Obviously sticking important buffers and other frequently used data here can reduce demands on the memory interface, which should help Microsoft get by with only having ~68GB/s of system memory bandwidth. Microsoft has claimed publicly that actual bandwidth to the eSRAM is somewhere in the 140 - 150GB/s range, which is likely equal to the effective memory bandwidth (after overhead/efficiency losses) to the PS4’s GDDR5 memory interface. The difference is that you only get that bandwidth to your most frequently used data on the Xbox One. It’s still not clear to me what effective memory bandwidth looks like on the Xbox One. I suspect it’s still a bit lower than on the PS4, but after talking with Ryan Smith (AT’s Senior GPU Editor) I’m now wondering if memory bandwidth isn’t really the issue here.

Microsoft Xbox One vs. Sony PlayStation 4 Spec Comparison

| | Xbox 360 | Xbox One | PlayStation 4 |
| --- | --- | --- | --- |
| CPU Cores/Threads | 3/6 | 8/8 | 8/8 |
| CPU Frequency | 3.2GHz | 1.75GHz | 1.6GHz |
| CPU µArch | IBM PowerPC | AMD Jaguar | AMD Jaguar |
| Shared L2 Cache | 1MB | 2 x 2MB | 2 x 2MB |
| GPU Cores | | 768 | 1152 |
| GCN Geometry Engines | | 2 | 2 |
| GCN ROPs | | 16 | 32 |
| GPU Frequency | | 853MHz | 800MHz |
| Peak Shader Throughput | 0.24 TFLOPS | 1.31 TFLOPS | 1.84 TFLOPS |
| Embedded Memory | 10MB eDRAM | 32MB eSRAM | - |
| Embedded Memory Bandwidth | 32GB/s | 102GB/s bi-directional (204GB/s total) | - |
| System Memory | 512MB 1400MHz GDDR3 | 8GB 2133MHz DDR3 | 8GB 5500MHz GDDR5 |
| System Memory Bus | 128-bit | 256-bit | 256-bit |
| System Memory Bandwidth | 22.4 GB/s | 68.3 GB/s | 176.0 GB/s |
| Manufacturing Process | | 28nm | 28nm |
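The peak shader throughput figures in the table can be reproduced from the GPU core counts and clocks. The sketch below assumes each shader core retires one fused multiply-add per clock, counted as two floating-point operations; that is the usual convention for quoting peak TFLOPS, not a measured number. The function and constant names are my own:

```python
# Assumption: one fused multiply-add (2 FLOPs) per shader core per clock.
FLOPS_PER_CORE_PER_CLOCK = 2


def peak_tflops(gpu_cores: int, clock_mhz: float) -> float:
    """Theoretical peak single-precision throughput in TFLOPS."""
    return gpu_cores * FLOPS_PER_CORE_PER_CLOCK * clock_mhz * 1e6 / 1e12


xbox_one_tflops = peak_tflops(768, 853)   # 12 CUs x 64 cores at 853MHz
ps4_tflops = peak_tflops(1152, 800)       # 18 CUs x 64 cores at 800MHz

print(f"Xbox One: {xbox_one_tflops:.2f} TFLOPS")
print(f"PS4:      {ps4_tflops:.2f} TFLOPS")
print(f"PS4 advantage: {(ps4_tflops / xbox_one_tflops - 1) * 100:.0f}%")
```

The Xbox One's higher clock claws back a little of the deficit, but the PS4's 50% larger shader array still dominates the result.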

To accommodate the eSRAM on die, Microsoft not only had to move to a 12 CU GPU configuration, but also had to drop down to 16 ROPs (half that of the PS4). The ROPs (render outputs/raster operations pipes) are responsible for final pixel output, and at the resolutions these consoles are targeting, having 16 ROPs definitely puts the Xbox One as the odd man out in comparison to PC GPUs. AMD’s GPUs targeting 1080p typically come with 32 ROPs, which is where the PS4 is, but the Xbox One ships with half that. The difference in raw shader performance (12 CUs vs 18 CUs) can definitely creep up in games that run more complex lighting routines and other long shader programs on each pixel, but all of the more recent reports of resolution differences between Xbox One and PS4 games at launch are likely the result of being ROP bound on the One. This is probably why Microsoft claimed it saw a bigger increase in realized performance from increasing the GPU clock from 800MHz to 853MHz vs. adding two extra CUs. The ROPs operate at GPU clock, so an increase in GPU clock in a ROP bound scenario would increase performance more than adding more compute hardware.
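To make the ROP argument concrete, here is a rough sketch comparing the two options Microsoft described. It assumes each ROP outputs one pixel per clock, a simplification that gives peak fill rate only, and it takes "two extra CUs" to mean going from 12 to 14 CUs. All names are my own:

```python
def peak_fill_rate_gpixels(rops: int, clock_mhz: float) -> float:
    """Peak pixel fill rate in Gpixels/s, assuming one pixel per ROP per clock."""
    return rops * clock_mhz * 1e6 / 1e9


xbox_one_fill = peak_fill_rate_gpixels(16, 853)
ps4_fill = peak_fill_rate_gpixels(32, 800)

# Option A: raise the GPU clock from 800MHz to 853MHz.
# The ROPs run at GPU clock, so fill rate rises with it.
clock_bump_fill_gain = xbox_one_fill / peak_fill_rate_gpixels(16, 800) - 1

# Option B: go from 12 to 14 CUs at 800MHz.
# Shader throughput rises, but the ROP count and clock are unchanged.
extra_cu_shader_gain = 14 / 12 - 1
extra_cu_fill_gain = 0.0

print(f"Xbox One fill rate: {xbox_one_fill:.1f} Gpixels/s")
print(f"PS4 fill rate:      {ps4_fill:.1f} Gpixels/s")
print(f"Clock bump:  +{clock_bump_fill_gain * 100:.1f}% fill rate")
print(f"Two more CUs: +{extra_cu_shader_gain * 100:.1f}% shader, "
      f"+{extra_cu_fill_gain * 100:.1f}% fill rate")
```

On paper the extra CUs add more raw shader throughput than the clock bump does, so the clock bump only wins if something other than shading, such as pixel output, is the bottleneck.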

The PS4's APU - Courtesy Chipworks


Microsoft’s admission that the Xbox One dev kits have 14 CUs does make me wonder what the Xbox One die looks like. Chipworks found that the PS4’s APU actually features 20 CUs, despite only exposing 18 to game developers. I suspect those last two are there for defect mitigation, to increase effective yields in the case of bad CUs, and I wonder if the same isn’t true for the Xbox One.

At the end of the day Microsoft appears to have ended up with its GPU configuration not for silicon cost reasons, but for platform power/cost and component availability reasons. Sourcing DDR3 is much easier than sourcing high density GDDR5. Sony obviously managed to launch with a ton of GDDR5 just fine, but I can definitely understand why Microsoft would be hesitant to go down that route in the planning stages of Xbox One. To put some numbers in perspective, Sony has shipped 1 million PS4s thus far. That's 16 million GDDR5 chips, or 7.6 Petabytes of RAM. Had both Sony and Microsoft tried to do this, I do wonder if GDDR5 supply would've become a problem. That's a ton of RAM in a very short period of time. The only other major consumers of GDDR5 are video cards, and the number of cards sold in the last couple of months that would ever use that much RAM is a narrow list.
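The GDDR5 volume figures above are easy to check. This sketch assumes 16 chips per console (implied by 16 million chips for 1 million consoles) and treats the 8GB of system memory as 8 x 2^30 bytes; under that binary convention the total comes out at about 7.6 petabytes, while decimal units would give roughly 8.6 PB:

```python
CONSOLES_SHIPPED = 1_000_000
CHIPS_PER_CONSOLE = 16              # implied by 16 million chips for 1 million consoles
BYTES_PER_CONSOLE = 8 * 2**30       # 8GB of GDDR5, binary units (assumption)

total_chips = CONSOLES_SHIPPED * CHIPS_PER_CONSOLE
mib_per_chip = BYTES_PER_CONSOLE // CHIPS_PER_CONSOLE // 2**20
total_bytes = CONSOLES_SHIPPED * BYTES_PER_CONSOLE

total_pib = total_bytes / 2**50     # binary petabytes
total_pb = total_bytes / 1e15       # decimal petabytes, for comparison

print(f"GDDR5 chips:   {total_chips:,}")
print(f"Per chip:      {mib_per_chip} MB")
print(f"Total (2^50):  {total_pib:.1f} PB")
print(f"Total (10^15): {total_pb:.1f} PB")
```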

Microsoft will obviously have an easier time scaling its platform down over the years (eSRAM should shrink nicely at smaller geometry processes), but that’s not a concern to the end user unless Microsoft chooses to aggressively pass along cost savings.

286 Comments


Sabresiberian - Wednesday, November 20, 2013 - link

Sorry about the emotion in the last paragraph, but it irritates me that some console players have to make up excuses for their decision. If you decide to buy a console, that's all good, but don't cut your nose off to spite yourself by purchasing one for reasons that simply aren't true.

Sabresiberian - Wednesday, November 20, 2013 - link

"yourself" is a typo, should be "your face". :)

PliotronX - Thursday, November 21, 2013 - link

That's very true, but then they've always lagged PC gaming. The closed proprietary system is a double edged sword. SDKs designed for a specific system can eke every last drop out of said system but then it's basically set in stone. I honestly don't think most people's eyes are attuned to the blur without GSync but they will notice true 1080p gaming. They (PC, PS4, Xbone) all still serve their roles. Xbone just happens to veer off into Netflix territory a little too hard.

ydeer - Thursday, November 21, 2013 - link

I agree, I’m not as excited about consoles as I used to be. What I am really excited about is SteamOS. Most reasonably priced gaming PCs have the potential to compete with this generation of consoles if Valve (somehow, magically) manages to bring down the overhead using Linux.

Plus you get the community. And a controller that at least has the potential to work better than anything we have used so far (see Civ5 on Steam Controller demo). And holiday sales. Upgradable hardware.

Heck, I can even see myself dual booting SteamOS on a MBP with the Steam Controller to play the latest and greatest games at almost equal quality to "next-gen" consoles, but completely mobile.

Please Valve, don't mess this up.

Wall Street - Thursday, November 21, 2013 - link

First off, 1440p, G-Sync and 120 Hz are all technologies that cost $250+ for the monitor alone and really demand another $300 on the GPU, so they are not comparable to the PS4 or XBox. Secondly, how can you build a gaming rig for $500? $100 is the Windows license. Another $100 gets a PSU, a case and a Blu-ray drive (but a really cheap case and PSU). Another $100 needs to be spent for a HDD and RAM. Now we are at $300 and don't have a Mobo, CPU or RAM. A good CPU and CPU cooler costs $150, even for a cheaper CPU (with a stock cooler, the console would be much quieter than the desktop). At least $50 needs to be spent on a Mobo. This leaves you with only $50 on your $500 budget for a GPU. As you can see, this leaves you with a system that underperforms the consoles. I would also argue that a $500 system needs to cheap out on components leaving you with worse build quality than a console which is more similar to a premium SFF PC (which cost a premium to full sized). Also, this cost analysis doesn't have a monitor or peripherals, so if you don't have a PC or have a laptop, that is at least another $150 (many more people have TVs, and fewer people have monitors sitting around now that laptops have been a majority of PC sales over the past five years).

Hixbot - Sunday, November 24, 2013 - link

PC gaming is superior, but as long as developers leave out the local multiplayer elements of their console counterpart, a console will always have a spot in my home. You know, gaming in the living room with actual friends. I'd hook up my gaming PC to my TV and get some controllers, but there are basically no PC games that offer any decent local multiplayer options.

mikato - Monday, November 25, 2013 - link

They do but you need to have all the computers in the same room. Pain in the butt, but we do it a couple times a year.

Lonesloane - Thursday, November 21, 2013 - link

What about the noise of both new consoles? Anand is not commenting on that in the article, but after my experience with a Xenon 360 this is really important to me. Could you add that information?

JimmiG - Thursday, November 21, 2013 - link

It's funny how PC hardware reviews obsess over tiny differences in memory bandwidth, shader throughput and clock speeds, yet the PS4 having 40% greater shader throughput and 160% more memory bandwidth just doesn't seem to matter...

blzd - Thursday, November 21, 2013 - link

Did you read the article? It was pretty clear and even pointed out to make real world differences. Maybe you thought they'd outright denounce the xb1 for it?