The NVIDIA GeForce GTX 780 Ti Review

by Ryan Smith on November 7, 2013 9:01 AM EST

Compute

Jumping into compute, we’re entering the one area where GTX 780 Ti’s rule won’t be nearly as absolute. Among NVIDIA cards its single precision performance will be unchallenged, but because its double precision performance is artificially limited compared to the compute-focused GTX Titan, GTX 780 Ti will still lose to GTX Titan whenever double precision comes into play. Meanwhile, GTX 780 Ti also has to contend with the fact that AMD’s cards have shown themselves to be far more competitive in our selection of compute benchmarks.
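The double precision gap between GTX 780 Ti and GTX Titan is largely a matter of arithmetic. The Python sketch below estimates theoretical peak throughput as shader cores × 2 FLOPs (one fused multiply-add) × clock, then scales that by an FP64:FP32 rate. The core counts, base clocks, and the 1/24 (GTX 780 Ti) and 1/3 (GTX Titan, with its full-rate double precision mode enabled) FP64 rates are assumptions taken from commonly cited reference specifications, not from this article, and the model ignores boost clocks and any other clock-speed behavior, so treat the numbers as rough upper bounds.

```python
def peak_gflops(cores: int, clock_mhz: float, fp64_ratio: float = 1.0) -> float:
    """Theoretical peak GFLOPS: one fused multiply-add (2 FLOPs) per core per clock,
    scaled by fp64_ratio when estimating double precision throughput."""
    fp32_gflops = cores * 2 * clock_mhz / 1000.0
    return fp32_gflops * fp64_ratio


# Assumed reference specs (base clocks); check against NVIDIA's published figures.
GTX_780_TI = {"cores": 2880, "clock_mhz": 875, "fp64_ratio": 1 / 24}
GTX_TITAN = {"cores": 2688, "clock_mhz": 837, "fp64_ratio": 1 / 3}  # full-rate FP64 mode

for name, spec in (("GTX 780 Ti", GTX_780_TI), ("GTX Titan", GTX_TITAN)):
    sp = peak_gflops(spec["cores"], spec["clock_mhz"])
    dp = peak_gflops(spec["cores"], spec["clock_mhz"], spec["fp64_ratio"])
    print(f"{name}: ~{sp:.0f} GFLOPS FP32, ~{dp:.0f} GFLOPS FP64")
```

Under these assumptions GTX 780 Ti leads on paper in single precision (about 5040 vs. 4500 GFLOPS) but trails Titan in double precision by roughly 7x (about 210 vs. 1500 GFLOPS).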

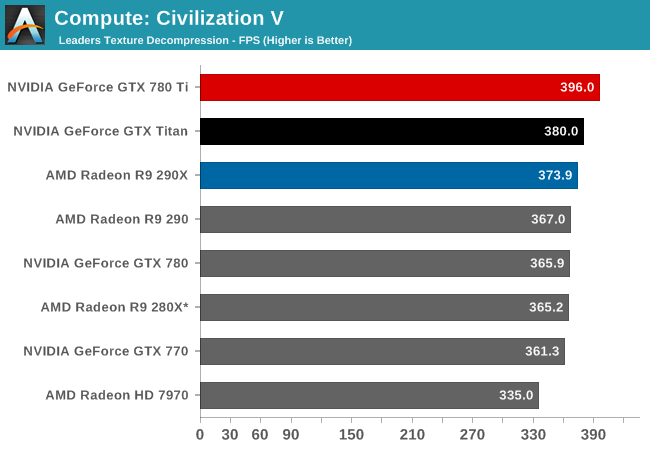

As always we'll start with our DirectCompute game example, Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. While DirectCompute is used in many games, this is one of the only games with a benchmark that can isolate the use of DirectCompute and its resulting performance.
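The article doesn't say which compression format Civilization V uses, so as a hypothetical stand-in the sketch below decodes one block of BC1 (DXT1), a standard GPU texture format. What matters is the shape of the work: every 4×4 block decodes independently of every other block, which is why this kind of decompression suits a DirectCompute shader that can hand blocks to separate threads. This is plain Python for readability, not the game's actual shader, and it uses integer division for the interpolated colours, which may differ by one unit from some hardware decoders.

```python
import struct


def rgb565_to_rgb888(c: int) -> tuple[int, int, int]:
    """Expand a packed 5:6:5 colour to 8 bits per channel by bit replication."""
    r, g, b = (c >> 11) & 0x1F, (c >> 5) & 0x3F, c & 0x1F
    return ((r << 3) | (r >> 2), (g << 2) | (g >> 4), (b << 3) | (b >> 2))


def decode_bc1_block(block: bytes) -> list[list[tuple[int, int, int, int]]]:
    """Decode one 8-byte BC1 (DXT1) block into a 4x4 grid of RGBA texels."""
    # Two little-endian RGB565 endpoints, then 32 bits of 2-bit palette indices.
    c0, c1, indices = struct.unpack("<HHI", block)
    p0, p1 = rgb565_to_rgb888(c0), rgb565_to_rgb888(c1)
    if c0 > c1:
        # Four-colour mode: two extra colours at 1/3 and 2/3 between the endpoints.
        p2 = tuple((2 * a + b) // 3 for a, b in zip(p0, p1))
        p3 = tuple((a + 2 * b) // 3 for a, b in zip(p0, p1))
        palette = [p0 + (255,), p1 + (255,), p2 + (255,), p3 + (255,)]
    else:
        # Three-colour mode: the midpoint plus transparent black.
        p2 = tuple((a + b) // 2 for a, b in zip(p0, p1))
        palette = [p0 + (255,), p1 + (255,), p2 + (255,), (0, 0, 0, 0)]
    # Texel (x, y) uses bits 2*(4*y + x) and 2*(4*y + x) + 1 of the index word.
    return [[palette[(indices >> (2 * (4 * y + x))) & 0b11] for x in range(4)]
            for y in range(4)]


# Hypothetical example block: white and black endpoints, every texel using index 2.
block = struct.pack("<HHI", 0xFFFF, 0x0000, 0xAAAAAAAA)
print(decode_bc1_block(block)[0][0])  # (170, 170, 170, 255)
```

Because no block reads from any other, a texture made of thousands of blocks is a naturally parallel workload.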

Even though we’re largely CPU bound by this point, GTX 780 Ti manages to get a bit more out of Civilization V’s texture decode routine, pushing it to the top of the charts and ahead of both GTX Titan and 290X.

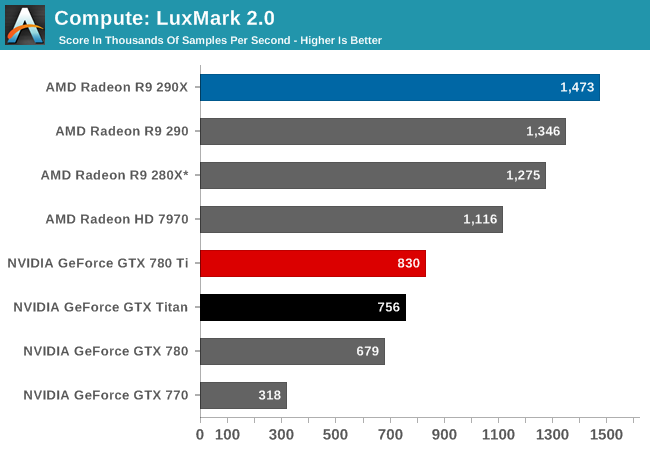

Our next benchmark is LuxMark 2.0, the official benchmark of SmallLuxGPU 2.0. SmallLuxGPU is an OpenCL accelerated ray tracer that is part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years, as ray tracing maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.
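Ray tracing maps well to GPUs largely because every pixel's ray can be traced independently. The minimal Python sketch below casts one primary ray per pixel against a single sphere. It is an illustrative toy, not how SmallLuxGPU works (a production renderer handles full scenes, materials, and multiple light bounces), and the scene setup (camera at the origin looking down +z, sphere centred at z = 3) is an assumption chosen for the example. No iteration of the pixel loop depends on any other, which is the property that lets an OpenCL kernel run one work-item per pixel.

```python
import math


def ray_sphere_hit(origin, direction, center, radius):
    """Distance t to the nearest hit along a unit-length ray, or None on a miss.

    Solves |origin + t*direction - center|^2 = radius^2, i.e. t^2 + 2bt + c = 0.
    """
    ox, oy, oz = (origin[i] - center[i] for i in range(3))
    dx, dy, dz = direction
    b = ox * dx + oy * dy + oz * dz
    c = ox * ox + oy * oy + oz * oz - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):  # nearer intersection first
        if t > 0:
            return t
    return None


def render(width, height, center=(0.0, 0.0, 3.0), radius=1.0):
    """Cast one primary ray per pixel from a camera at the origin looking down +z."""
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # Map the pixel centre onto an image plane at z = 1 spanning [-1, 1].
            u = 2 * (x + 0.5) / width - 1
            v = 1 - 2 * (y + 0.5) / height
            norm = math.sqrt(u * u + v * v + 1)
            direction = (u / norm, v / norm, 1 / norm)
            row.append(ray_sphere_hit((0.0, 0.0, 0.0), direction, center, radius) is not None)
        image.append(row)
    return image


for row in render(21, 11):
    print("".join("#" if hit else "." for hit in row))
```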

With LuxMark, NVIDIA’s ray tracing performance improves further thanks to the additional compute resources at hand. But NVIDIA still doesn’t fare well here, with the GTX 780 Ti falling behind all of our AMD cards in this test.

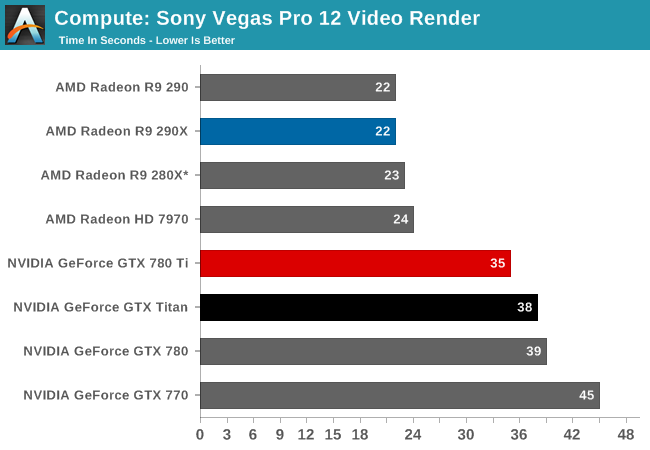

Our 3rd compute benchmark is Sony Vegas Pro 12, an OpenGL and OpenCL video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself and to accelerate the video encoding step. With video encoding being increasingly offloaded to dedicated DSPs these days, we’re focusing on the editing and compositing process, rendering to a low CPU overhead format (XDCAM EX). This specific test comes from Sony, and measures how long it takes to render a video.

As with LuxMark, GTX 780 Ti once again improves on its predecessors. But it’s not enough to make up for AMD’s innate performance advantage in this benchmark, leaving GTX 780 Ti trailing all of the AMD cards.

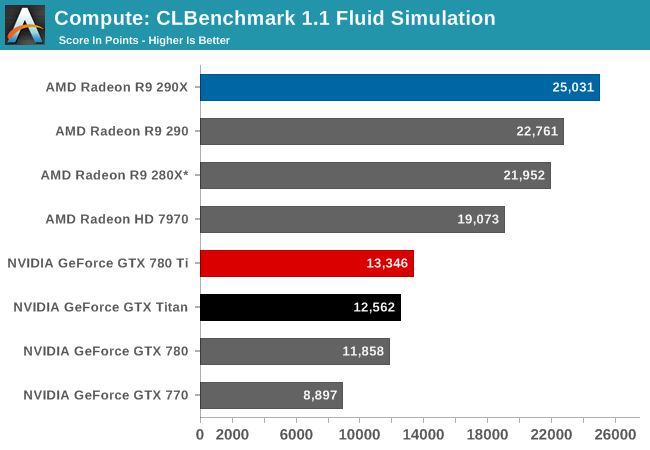

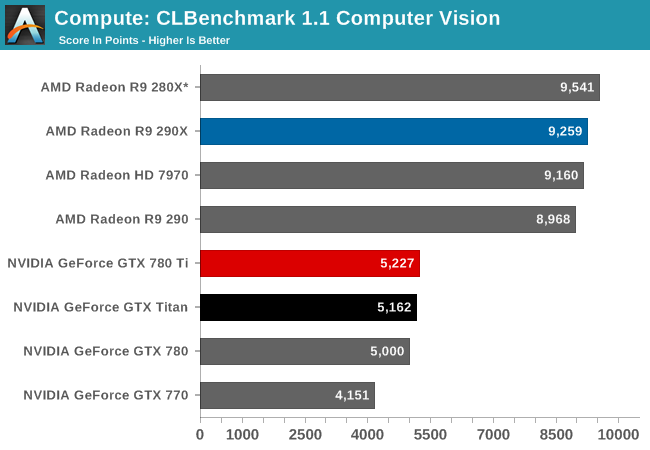

Our 4th benchmark set comes from CLBenchmark 1.1. CLBenchmark contains a number of subtests; we’re focusing on the most practical of them, the computer vision test and the fluid simulation test. The former is a useful proxy for computer imaging tasks where systems are required to parse images and identify features (e.g. humans), while fluid simulations are common in professional graphics work and games alike.
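The article doesn't describe what CLBenchmark's computer vision test computes internally. As a hypothetical example of the kind of kernel such workloads are built from, the Python sketch below applies a Sobel operator, a standard edge-detection stencil used as an early step in many feature-detection pipelines. Each output pixel depends only on its 3×3 neighbourhood in the input, so an OpenCL implementation can compute every pixel in parallel. To keep the sketch short, it returns the squared gradient magnitude (skipping the square root) and leaves border pixels at zero.

```python
def sobel_squared_magnitude(img):
    """Squared Sobel gradient magnitude of a 2D grayscale image (list of rows).

    Border pixels are left at 0; every interior pixel is computed independently
    from its 3x3 neighbourhood.
    """
    h, w = len(img), len(img[0])
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Horizontal gradient: right column minus left column, centre row weighted 2.
            gx = (img[y - 1][x + 1] + 2 * img[y][x + 1] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y][x - 1] - img[y + 1][x - 1])
            # Vertical gradient: bottom row minus top row, centre column weighted 2.
            gy = (img[y + 1][x - 1] + 2 * img[y + 1][x] + img[y + 1][x + 1]
                  - img[y - 1][x - 1] - 2 * img[y - 1][x] - img[y - 1][x + 1])
            out[y][x] = gx * gx + gy * gy
    return out


# A vertical edge: dark on the left, bright on the right.
edge = [[0, 0, 10, 10] for _ in range(4)]
for row in sobel_squared_magnitude(edge):
    print(row)
```

The interior pixels straddling the edge light up (each with a squared magnitude of 1600 here), while flat regions stay at zero.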

CLBenchmark continues to be the same story. GTX 780 Ti improves on NVIDIA’s performance to become their fastest single precision card, but it still falls short of every AMD card in these tests.

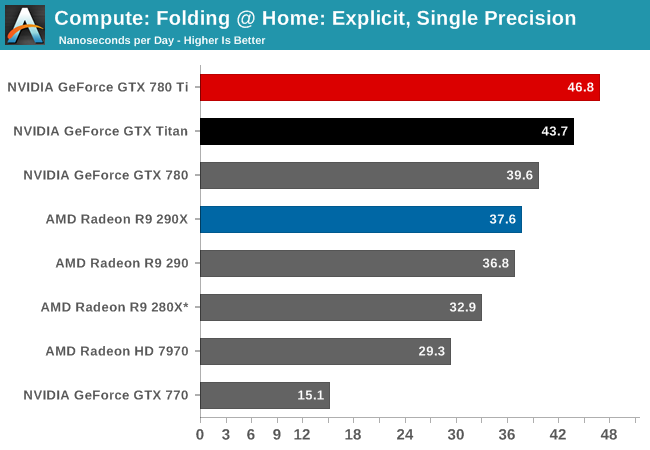

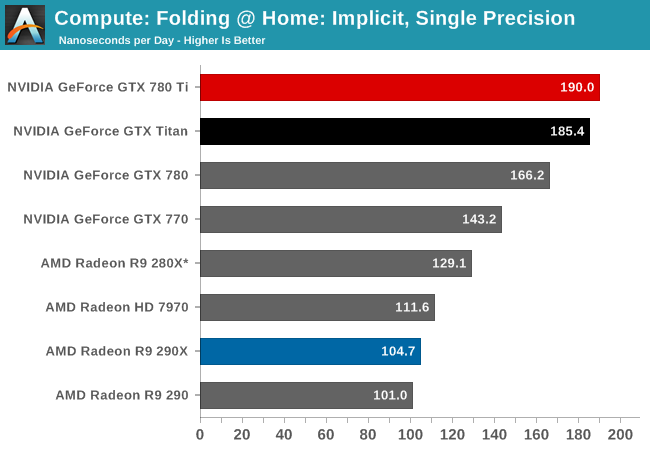

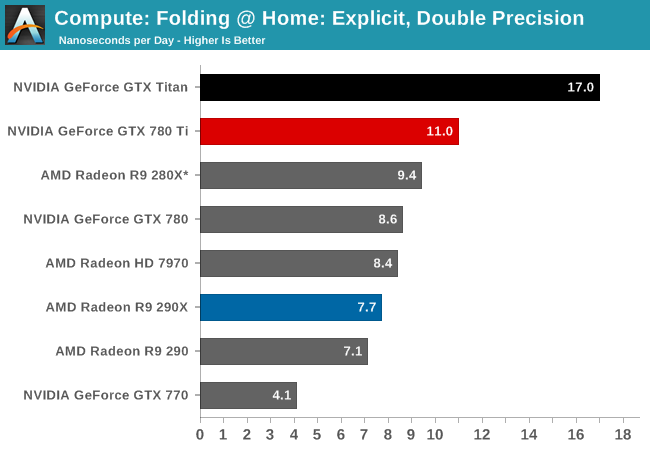

Moving on, our 5th compute benchmark is FAHBench, the official Folding@Home benchmark. Folding@Home is the popular Stanford-backed research and distributed computing initiative that distributes work to millions of volunteer computers over the internet, each of which is responsible for a tiny slice of a protein folding simulation. FAHBench can test both single precision and double precision floating point performance, with single precision being the most useful metric for most consumer cards due to their low double precision performance. Each precision has two modes, explicit and implicit, the difference being whether the surrounding water atoms are explicitly included in the simulation (explicit mode), which adds quite a bit of work and overhead. This is another OpenCL test, as Folding@Home has moved exclusively to OpenCL this year with FAHCore 17.
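Scientific codes care about double precision largely because single precision's 24-bit significand loses accuracy quickly when many small values are accumulated. The Python sketch below is a generic illustration of that effect, not anything taken from FAHBench or Folding@Home's cores: it adds 0.1 one million times, once rounding to IEEE 754 single precision after every addition (emulated with the standard `struct` module) and once in native double precision.

```python
import struct


def to_f32(x: float) -> float:
    """Round a Python float (an IEEE 754 double) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(step: float, count: int, single: bool) -> float:
    """Sum `step` `count` times, rounding to single precision after every addition
    when `single` is True, otherwise keeping full double precision."""
    total = 0.0
    if single:
        step = to_f32(step)
        for _ in range(count):
            total = to_f32(total + step)
    else:
        for _ in range(count):
            total += step
    return total


exact = 100_000.0
f32 = accumulate(0.1, 1_000_000, single=True)
f64 = accumulate(0.1, 1_000_000, single=False)
print(f"float32 error: {abs(f32 - exact):.3f}")
print(f"float64 error: {abs(f64 - exact):.3e}")
```

The single precision total ends up hundreds away from the exact 100,000, while the double precision error is many orders of magnitude smaller. That is the accuracy side of the trade-off; the FAHBench results here measure the speed side.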

At last, with Folding@Home, we see the GTX 780 Ti once again take the top spot. In the single precision tests the GTX 780 Ti further extends NVIDIA’s lead, beating GTX Titan by anywhere from a few percent to over ten percent depending on the specific test. However, even with GTX 780 Ti’s general performance increase, it can’t overcome its innate double precision deficit versus GTX Titan in the double precision test. When it comes to double precision compute, Titan remains king.

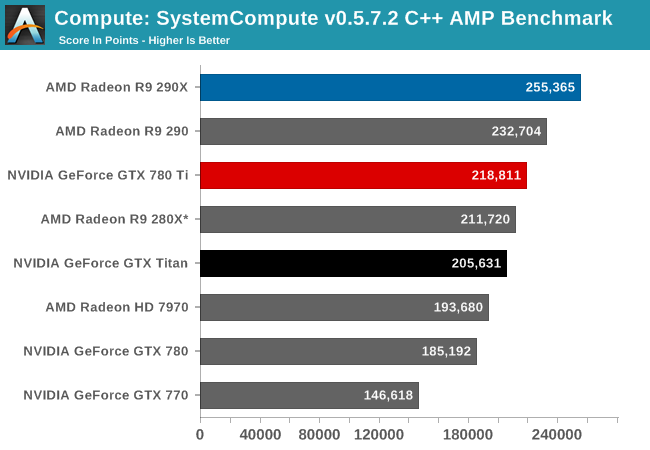

Wrapping things up, our final compute benchmark is an in-house project developed by our very own Dr. Ian Cutress. SystemCompute is our first C++ AMP benchmark, utilizing Microsoft’s simple C++ extensions to allow the easy use of GPU computing in C++ programs. SystemCompute in turn is a collection of benchmarks for several different fundamental compute algorithms, as described in this previous article, with the final score represented in points. DirectCompute is the compute backend for C++ AMP on Windows, so this forms our other DirectCompute test.

Last, in our C++ AMP benchmark we see the GTX 780 Ti take the top spot for an NVIDIA card, but like so many of our earlier compute tests it comes up short versus AMD’s best cards. This isn’t quite as lopsided as some of our other tests, but GTX 780 Ti is stuck competing with the 280X while the 290 and 290X easily outperform NVIDIA’s new flagship.

302 Comments


A5 - Thursday, November 7, 2013

BF4 has a built-in benchmark too, but I have no idea how good it is. I'd guess they're waiting on a patch? If nothing else, there will be BF4 results if/when that Mantle update comes out.

IanCutress - Thursday, November 7, 2013

BF4 has a built in benchmark tool? I can't find any reference to one.

Ryan Smith - Thursday, November 7, 2013

BF3 will ultimately get replaced with BF4 later this month. For the moment with all of the launches in the past few weeks, we haven't yet had the time to sit down and validate BF4, let alone collect all of the necessary data.

1Angelreloaded - Thursday, November 7, 2013

Hell man people run FEAR still as a benchmark because of how brutal it is against GPU/CPU/HDD.

Bakes - Thursday, November 7, 2013

I think it's better to wait until driver performance stabilizes for new applications before basing benchmarks on them. If you don't then early benchmark numbers become useless for comparison sake.

TheJian - Thursday, November 7, 2013

I would argue warhead needs to go. Servers for that game have been EMPTY for ages and ZERO people play it. You can ask to add BF4, but to remove BF3 given warhead is included (while claiming bf3 old) is ridiculous. How old is Warhead? 7-8 years? People still play BF3. A LOT of people. I would argue they need to start benchmarking based on game sales.

Starcraft2, Diablo3, World of Warcraft Pandaria, COD Black ops 2, SplinterCell Blacklist, Assassins Creed 3 etc etc... IE, black ops 2 has over 5x the sales of Hitman Absolution. Which one should you be benchmarking?

Warhead...OLD.

Grid 2 .03 total sales for PC says vgchartz

StarCraft 2 5.2.mil units (just PC).

Which do you think should be benchmarked?

Even Crysis 3 only has .27mil units says vgchartz.

Diablo 3? ROFL...3.18mil for PC. So again, 11.5x Crysis 3.

Why are we not benchmarking games that are being sold in the MILLIONS of units?

WOW still has 7 million people playing and it can slow down a lot with tons of people doing raids etc.

TheinsanegamerN - Friday, November 8, 2013

because any halfway decent machine can run WoW? they use the most demanding games to show how powerful the gpu really is. 5760x1080p with 4xMSAA gets 69 FPS with the 780ti.

why benchmark hitman over black ops? simple, it is not what we call demanding.

they use demanding games. not the super popular games thatll run on hardware from 3 years ago.

powerarmour - Thursday, November 7, 2013

Well, that time on the throne for the 290X lasted about as long as Ned Stark...

Da W - Thursday, November 7, 2013

I look at 4K gaming since i play in 3X1 eyefinity (being +/- 3.5K gaming).

At these resolutions i see an average of 1FPS lead for 780Ti over 290X. For 200$ more.

Power consumption is about the same.

And as far as temperature go, it's temperature AT THE CHIP level. Both cards will heat your room equally if they consume as much power.

The debate is really about the cooler, and Nvidia got an outright lead as far as cooling goes.

JDG1980 - Thursday, November 7, 2013

It seems to me that both Nvidia and AMD are charging too much of a price premium for their top-end cards. The GTX 780 Ti isn't worth $200 more than the standard GTX 780, and the R9 290X isn't worth $150 more than the standard R9 290.

For gamers who want a high-end product but don't want to unnecessarily waste money, it seems like the real competition is between the R9 290 ($399) and the GTX 780 ($499). At the moment the R9 290 has noise issues, but once non-reference cards become available (supposedly by the end of this month), AMD should hold a comfortable lead. That said, the Titan Cooler is indeed a really nice piece of industrial design, and I can see someone willing to pay a bit extra for it.