The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Hawaii: Tahiti Refined

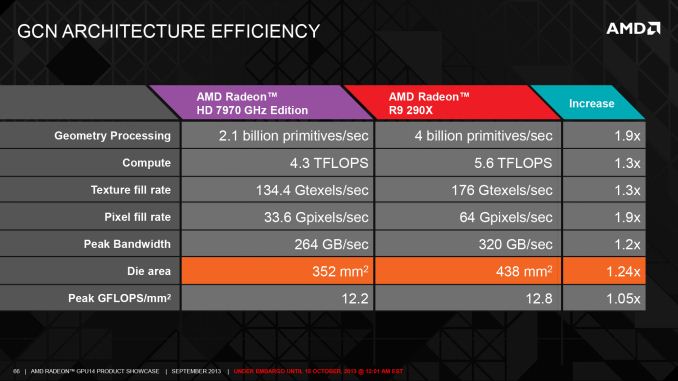

Thus far when we’ve been discussing Hawaii, it’s typically been in comparison to Tahiti, and there’s good reason for that. Besides the obvious parallel of being AMD’s new flagship GPU, finally succeeding Tahiti after just short of 2 years, in terms of design Hawaii looks and acts a lot like an improved Tahiti. The underlying architecture is still Graphics Core Next, and a lot of the compute functionality that gave Tahiti its broad applicability to graphics and compute alike is equally present in Hawaii, so in many ways Hawaii looks and behaves like a bigger Tahiti. But as we’ve seen over the years with these second wind parts, there are a lot of finer details involved in taking an existing architecture and building it bigger, never mind the subtle feature additions that come with Hawaii.

The biggest addition with Hawaii is of course the increased number of functional units. 2 years in and against GPUs like NVIDIA’s GK110, AMD has a clear need to produce a larger, more powerful GPU if they wish to stay competitive with NVIDIA at the high end while also delivering newer, faster products for their regular customers. In doing so there’s a need to identify bottlenecks in the existing design (Tahiti) to figure out what changes will pay off the most for their die size and power consumption cost, and conversely what changes would have little payoff. The end result is that we’re seeing AMD significantly scale up some of the smaller areas of the chip, while taking a more nuanced approach on scaling up the larger areas.

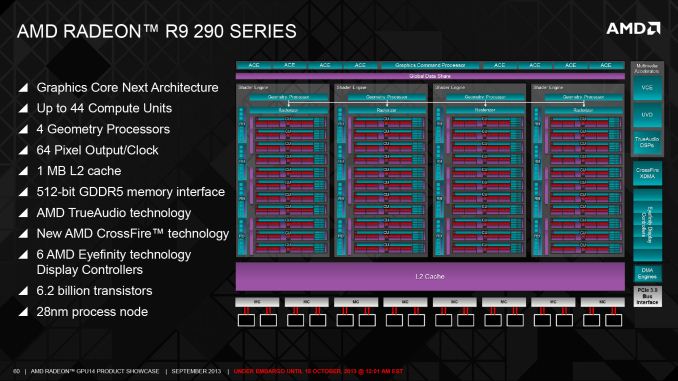

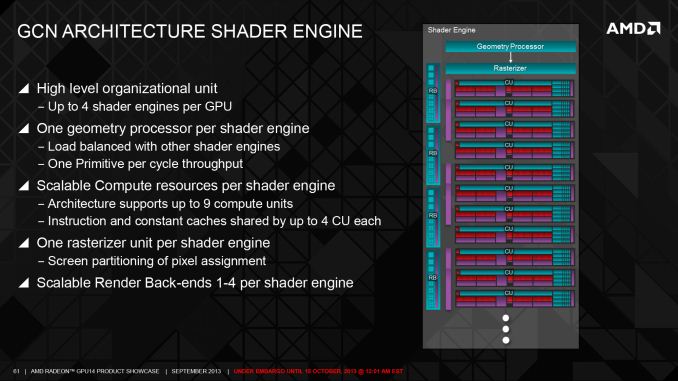

But before we get too deep here, we want to quickly point out that with Hawaii AMD is making a significant change to how they’re logically representing the architecture in public. The change is striking, but it does not mean the underlying low-level organization is nearly as different as the high-level changes would imply. At a high level the biggest change here is that AMD is now segmenting their hardware into “shader engines”. Conceptually the idea is similar to NVIDIA’s SMXes, with each Shader Engine (SE) representing a collection of hardware including shaders/CUs, geometry processors, rasterizers, and L1 cache. Furthermore ROPs are also being worked into the Shader Engine model, with each SE taking on a fraction of the ROPs for the purposes of high level overviews. What remains outside of the SEs is the command processor and ACEs, the L2 cache and memory controllers, and then the various dedicated, non-duplicated functionality such as video decoders, display controllers, DMA controllers, and the PCIe interface.

Moving forward, AMD designs are going to scale up and down both with respect to the number of SEs and in the number of CUs in each SE. This distinction is important because unlike NVIDIA’s SMX model, where the company can only scale down hardware by cutting whole SMXes, AMD can technically maintain up to 4 SEs while scaling down the number of CUs within each SE. So despite what the SE model implies, AMD’s scaling abilities are status quo for GCN inasmuch as they can continue to scale down for lower tier parts without sacrificing geometry or ROP performance. In reality of course the physical layout of Hawaii and other GPUs will deviate by even less, as the ROPs are still going to be tied into the memory controllers, the geometry processors are still closely integrated with the command processor, etc. Still, as a high level model it’s likely a better fit for how the underlying hardware really works, as it provides a more intuitive view on how the number of geometry processors, rasterizers, and ROPs are closely related, or how the individual CUs are lumped together into CU arrays.

With that in mind, we’ll start our low level overview with a look at both the front end and the back end of Hawaii. Of all the aspects of the GPU AMD has scaled up compared to Tahiti, it’s at the front end and the back end that we’ll find the biggest changes, due to the fact that AMD has doubled the number of functional units in most of the elements that reside here.

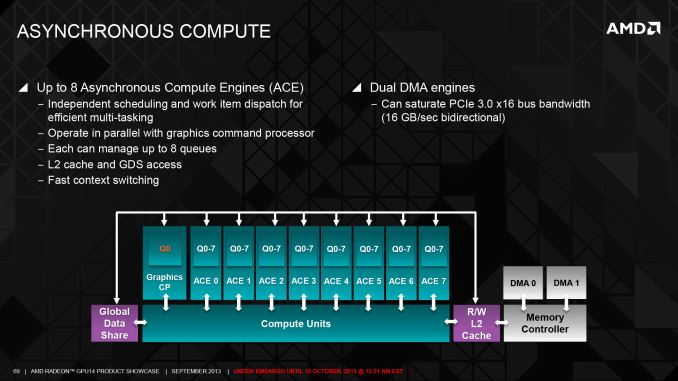

At the very front, in conjunction with the ACE improvements inherent to GCN 1.1, AMD has scaled up the number of ACEs from 2 in Tahiti to 8 in Hawaii. With each ACE now containing 8 work queues this brings the total number of work queues to 64. Unlike most of the other changes we’ll be going over today, the ACE increase has limited applicability for gaming, and while AMD isn’t talking about non-Radeon Hawaii products at this time, given what we know about GCN 1.1 there’s a clear applicability not only towards HSA, but also to more traditional GPU compute setups such as the FirePro S series. For GPU compute the additional ACEs and queues will help improve AMD’s real world compute performance by improving the utilization of the CUs, while the DMA engine improvements that come with the increased number of ACEs will help keep the CUs fed with data from the CPU and other GPUs.

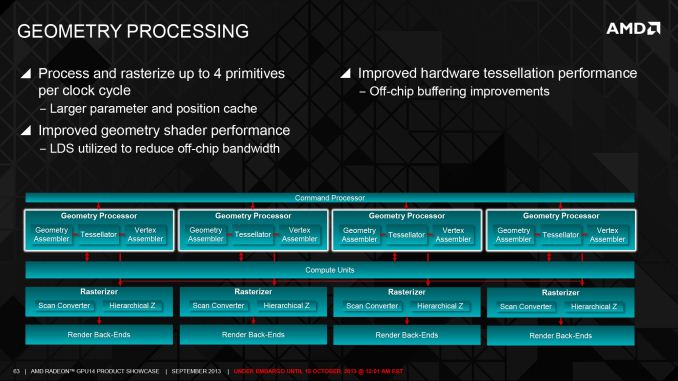

Moving on, there are a number of back end and front end changes AMD has made to improve rendering performance, and the increased number of geometry processors is at the forefront of this. With Hawaii AMD has doubled the number of geometry engines from 2 to 4, and is more closely coupling those with the existing 4 rasterizer setup they inherit. The increase in geometry processors comes at an appropriate time for the company, as the last time the number of geometry processors was increased was with the 6900 series in 2010, when the company moved to 2 such processors. One of the side effects of the new consoles coming out this year is that cross-platform games will be able to use a much larger number of primitives than before – especially with the uniform addition of D3D11-style tessellation – so there’s a clear need to ramp up geometry performance to keep up with where games are expected to go.

Further coupled with this are more generalized improvements designed to improve geometry efficiency overall. Alongside the additional geometry processors AMD has also improved both on-chip and off-chip data flows, with off-chip buffering being improved to further improve AMD’s tessellation performance, while the Local Data Store can now be tapped by geometry shaders to reduce the need to go off-chip at all. More directly applicable is that the inter-stage storage (parameter and position caches) used by the geometry processors has also been increased in order to keep up with the overall increase in the number of processors.

On a side note, with every architectural revision/launch we try to get AMD’s engineers to give us an idea of what aspects they’re most proud of, and while they typically downplay the question (it’s a team effort, after all), for Hawaii the geometry processor changes have been a recurring theme as something the engineering team is particularly proud of. As it turns out adding geometry processors is actually quite a bit harder than it sounds, as the additional processors bring with them the need to balance geometry workloads across the processor cluster. When splitting up the geometry workload there are dependency issues that must be addressed, and to maximize efficiency there are load balancing/partitioning matters that must be taken into account as there’s no guarantee geometry is evenly distributed over the entire viewport. Consequently AMD’s engineers are quite happy with how this turned out given the effort involved.

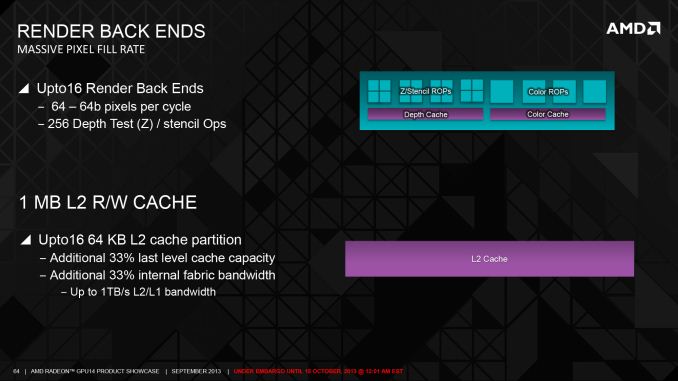

Meanwhile at the other end of the rendering pipeline we have AMD’s back end changes, which have been made in concert with the changes to the front end. The big change here is that for the first time since the 5870 (Cypress) back in 2009, AMD has increased the number of ROPs, going from 32 on Tahiti/Pitcairn to 64 on Hawaii. As ROPs are primarily tasked with jobs that are resolution dependent such as final pixel resolution and depth testing, the workload placed on ROPs has increased much more slowly over the years than the workload placed on shaders or even geometry processors. Similarly, for that reason scaling up the ROPs alone typically doesn’t have a big impact on rendering performance, hence ROP upgrades have come far more sparingly.

With Hawaii the increase in the number of ROPs comes down to a few different factors. To a large extent it’s merely a matter of “it’s time”, where the performance increases finally justify the die space increases. But AMD’s focus on 4K resolution workloads also plays a significant part, as 4K represents a significant increase in the ROP workload, and hence the need for more ROPs to pick up the work. Consequently while we can’t easily compare ROP performance across vendors, increasing the number of ROPs is one of the ways AMD will extend their high resolution performance advantage over NVIDIA, by being sure they have plenty of capacity to chew through 4K scenes.
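To put the 4K point in rough numbers, the short Python sketch below compares pixels per frame at a few resolutions. It assumes that “4K” means 3840x2160 and that ROP work scales roughly linearly with pixel count; both are simplifying assumptions of ours rather than figures from AMD, and the function names are purely illustrative.

```python
# Pixels per frame at a few resolutions. ROP work (depth testing, final
# pixel output) is assumed here to scale roughly with the pixel count.
RESOLUTIONS = {
    "1920x1080": (1920, 1080),
    "2560x1440": (2560, 1440),
    "3840x2160": (3840, 2160),  # assumption: the "4K" target is UHD
}


def pixel_count(width: int, height: int) -> int:
    """Number of pixels in one frame."""
    return width * height


def relative_load(name: str, baseline: str = "1920x1080") -> float:
    """Pixel count of `name` divided by the pixel count of `baseline`."""
    return pixel_count(*RESOLUTIONS[name]) / pixel_count(*RESOLUTIONS[baseline])


if __name__ == "__main__":
    for name, (width, height) in RESOLUTIONS.items():
        print(f"{name}: {pixel_count(width, height):,} pixels, "
              f"{relative_load(name):.2f}x the 1080p pixel count")
```

Under those assumptions a 3840x2160 frame carries four times the pixels of a 1920x1080 frame and 2.25 times those of a 2560x1440 frame, which is the kind of jump that makes a doubling of ROPs easier to justify.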

Working in conjunction with the ROPs of course is the L2 cache, forming the second member of the ROP/L2/MC triumvirate, and like the number of ROPs this is being increased. L2 cache is more closely tied to the memory controllers than the ROPs, so while Tahiti had 32 ROPs and 768KB of L2 paired with 6 memory controllers, Hawaii gets double the ROPs but a smaller 33% increase in the L2 cache in accordance with the 33% increase in memory controllers. The end result is that Hawaii packs a full 1MB of L2 cache, and that the total bandwidth available out of the L2 cache has also been increased by 33% to a full 1TB/sec. The L2 cache plays a role in every aspect of rendering, and as the primary backstop for the ROPs and secondary backstop for the CUs it’s critical to avoiding relatively expensive off-chip memory operations.
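The scaling relationship described above is easy to check with a few lines of Python. This is only a back-of-the-envelope sketch using the figures quoted in this section; the class and function names are our own, and the count of 8 memory controllers for Hawaii is derived from the stated 33% increase over Tahiti’s 6.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BackEnd:
    """Back end resources as quoted in this section (illustrative type)."""
    rops: int
    l2_kb: int
    memory_controllers: int


TAHITI = BackEnd(rops=32, l2_kb=768, memory_controllers=6)
# 8 controllers is derived from the stated 33% increase over Tahiti's 6.
HAWAII = BackEnd(rops=64, l2_kb=1024, memory_controllers=8)


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from `old` to `new`."""
    return (new - old) / old * 100


def l2_per_controller_kb(gpu: BackEnd) -> float:
    """L2 cache capacity per memory controller, in KB."""
    return gpu.l2_kb / gpu.memory_controllers


if __name__ == "__main__":
    print(f"ROPs: +{pct_increase(TAHITI.rops, HAWAII.rops):.0f}%")
    print(f"L2:   +{pct_increase(TAHITI.l2_kb, HAWAII.l2_kb):.0f}%")
    print(f"MCs:  +{pct_increase(TAHITI.memory_controllers, HAWAII.memory_controllers):.0f}%")
    print(f"L2 per controller: {l2_per_controller_kb(TAHITI):.0f}KB (Tahiti), "
          f"{l2_per_controller_kb(HAWAII):.0f}KB (Hawaii)")
```

The useful takeaway is that the L2 capacity per memory controller works out the same on both chips, which is consistent with the L2 scaling with the memory controllers rather than with the ROPs.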

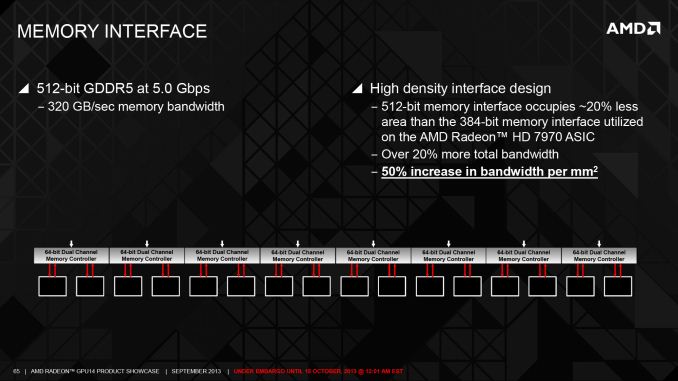

Lastly we have the final member of the ROP/L2/MC triumvirate, which is the memory interface. Tahiti for all of its strengths and weaknesses possesses a very large memory interface (as a percentage of die space), which has helped it reach 6GHz+ memory speeds on a 384-bit memory bus at the cost of die size. As there’s a generally proportional relationship between memory interface size and memory speeds, AMD has made the interesting move of going the opposite direction for Hawaii. Rather than scale up a 384-bit memory controller even more, they opted to scale down an even larger 512-bit memory controller with impressive results.

The result of AMD’s memory interface changes is that between the die space savings from the lower speed controllers coupled with a number of smaller tweaks to improve density, AMD has been able to implement the larger 512-bit memory interface while still reducing the size of the memory interface by 20% as compared to Tahiti. Furthermore these space savings still allow for a meaningful increase in memory bandwidth despite the lower memory clockspeeds, with AMD being able to increase their memory bandwidth by over 10% (as compared to 280X), from 288GB/sec to 320GB/sec. The end result is a very neat and clean (and impressive) improvement in AMD’s memory controllers, with AMD reducing their interface size and increasing their memory bandwidth at the same time. The 512-bit memory bus does have some externalities to it – specifically increased PCB costs and requiring more GDDR5 memory modules than Tahiti (16 vs. 12) – but these are ultimately countered by the die space savings that AMD is realizing from the smaller memory interface.
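The bandwidth figures above follow from the usual relationship between bus width and per-pin data rate, which the Python sketch below works through. The 6.0Gbps rate for the 280X is inferred from the quoted 288GB/sec on a 384-bit bus, and the 32-bit width per GDDR5 module is an assumption on our part that happens to match the 16 vs. 12 module counts; the helper names are illustrative only.

```python
# Assumption: each GDDR5 module contributes a 32-bit slice of the bus.
GDDR5_MODULE_WIDTH_BITS = 32


def bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/sec: bus width times per-pin data rate, over 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def required_data_rate_gbps(bus_width_bits: int, target_gb_s: float) -> float:
    """Per-pin data rate (Gbps) needed to reach a target bandwidth."""
    return target_gb_s * 8 / bus_width_bits


def memory_modules(bus_width_bits: int) -> int:
    """Number of modules needed to populate the full bus width."""
    return bus_width_bits // GDDR5_MODULE_WIDTH_BITS


if __name__ == "__main__":
    tahiti = bandwidth_gb_s(384, 6.0)              # inferred 280X data rate
    hawaii_rate = required_data_rate_gbps(512, 320)
    print(f"384-bit @ 6.0Gbps: {tahiti:.0f}GB/sec")
    print(f"512-bit needs {hawaii_rate:.1f}Gbps per pin for 320GB/sec")
    print(f"Bandwidth gain: {(320 - tahiti) / tahiti * 100:.1f}%")
    print(f"Modules: {memory_modules(384)} (384-bit) vs. {memory_modules(512)} (512-bit)")
```

Put another way, the wider bus lets Hawaii reach 320GB/sec at a noticeably lower per-pin data rate than Tahiti needs for 288GB/sec, which is what allows the slower, denser memory controllers.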

Meanwhile compared to AMD’s front end and back end changes, Hawaii’s CU changes are much more straightforward. Besides optimizing the CUs for die size and giving them the appropriate GCN 1.1 functionality, very little has changed here. The end result is a simple increase in the number of CUs, going from 32 on Tahiti to 44 on Hawaii, with AMD continuing to distribute them evenly over the 4 Shader Engines. Shading/texturing remains the primary bottleneck for most games today, so while the CU increase is straightforward the performance implications are not to be ignored. Much of AMD’s 30% performance increase comes from this 38% increase in CUs. GCN was after all designed from the start to scale up well in this respect, so with Hawaii AMD is executing on those plans.
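For reference, the CU arithmetic can be sketched in Python as follows. The figure of 64 stream processors per CU is not stated in this section and is treated here as an assumption about GCN; everything else comes from the numbers above, and the function names are our own.

```python
TAHITI_CUS = 32
HAWAII_CUS = 44
SHADER_ENGINES = 4
SPS_PER_CU = 64  # assumption: stream processors per GCN CU, not stated above


def cus_per_shader_engine(total_cus: int, shader_engines: int = SHADER_ENGINES) -> int:
    """CUs per Shader Engine, assuming an even split across engines."""
    if total_cus % shader_engines:
        raise ValueError("CUs do not divide evenly across Shader Engines")
    return total_cus // shader_engines


def stream_processors(total_cus: int) -> int:
    """Total stream processors under the assumed SPs-per-CU figure."""
    return total_cus * SPS_PER_CU


if __name__ == "__main__":
    increase = (HAWAII_CUS - TAHITI_CUS) / TAHITI_CUS * 100
    print(f"CU increase: {increase:.1f}%")
    print(f"CUs per Shader Engine on Hawaii: {cus_per_shader_engine(HAWAII_CUS)}")
    print(f"Stream processors: {stream_processors(TAHITI_CUS)} (Tahiti) vs. "
          f"{stream_processors(HAWAII_CUS)} (Hawaii)")
```

The exact increase is 37.5%, which rounds to the 38% quoted above, and the even split comes out to 11 CUs per Shader Engine.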

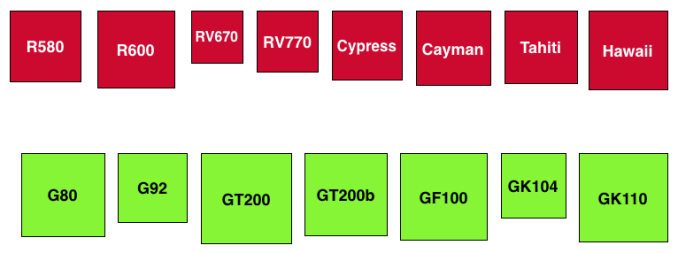

Moving on, having completed our look at the design of Hawaii, let’s discuss the die size of Hawaii a bit. Unlike NVIDIA, AMD doesn’t traditionally go above 400mm2 dies, and for good reason. NVIDIA holds the lion’s share of the high end, high margin workstation market, and while AMD’s market share has been slowly increasing from the historic lows of a couple of years ago it’s still well behind NVIDIA’s. Consequently AMD doesn’t have that high margin market to help bootstrap the production of large GPUs, requiring that they stay smaller to stay within their means.

With Hawaii AMD still isn’t entering the big die race that defines NVIDIA’s flagship GPUs, but AMD is going larger than ever before. At 438mm2 Hawaii is AMD’s biggest GPU yet, and despite AMD’s improvements in area efficiency Hawaii is still 73mm2 (20%) larger than Tahiti. The fact that AMD is able to improve their gaming performance by 30% over Tahiti means that this is a very good tradeoff to make; it just means that AMD is treading new ground in doing so.

Similarly, at 6.2 billion transistors Hawaii is AMD’s largest GPU yet by transistor count, outpacing the 4.31B Tahiti by 1.89B transistors, an increase of 44%. Now transistor counts alone don’t mean much, but the fact that AMD was able to fit 44% more transistors into a die that is only 20% larger is a significant accomplishment for the company.
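The die size and transistor figures can be combined into a rough density comparison, sketched below in Python. Tahiti’s 365mm2 is derived from the numbers above (438mm2 minus 73mm2), and the variable and function names are our own; treat the result as an approximation, since transistor counts and die areas are themselves rounded.

```python
HAWAII_AREA_MM2 = 438
TAHITI_AREA_MM2 = HAWAII_AREA_MM2 - 73   # derived: Hawaii is 73mm2 larger
HAWAII_TRANSISTORS = 6.2e9
TAHITI_TRANSISTORS = 4.31e9


def pct_increase(old: float, new: float) -> float:
    """Percentage increase going from `old` to `new`."""
    return (new - old) / old * 100


def density_m_per_mm2(transistors: float, area_mm2: float) -> float:
    """Transistor density in millions of transistors per square millimeter."""
    return transistors / area_mm2 / 1e6


if __name__ == "__main__":
    tahiti_density = density_m_per_mm2(TAHITI_TRANSISTORS, TAHITI_AREA_MM2)
    hawaii_density = density_m_per_mm2(HAWAII_TRANSISTORS, HAWAII_AREA_MM2)
    print(f"Area:        +{pct_increase(TAHITI_AREA_MM2, HAWAII_AREA_MM2):.0f}%")
    print(f"Transistors: +{pct_increase(TAHITI_TRANSISTORS, HAWAII_TRANSISTORS):.0f}%")
    print(f"Density: {tahiti_density:.1f}M/mm2 (Tahiti) vs. {hawaii_density:.1f}M/mm2 (Hawaii), "
          f"+{pct_increase(tahiti_density, hawaii_density):.0f}%")
```

By this estimate Hawaii’s transistor density is roughly 20% higher than Tahiti’s on the same manufacturing node.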

Meanwhile on a historical basis it’s worth pointing out that while AMD’s “small die” strategy effectively died with Cayman in 2010, this marks the first time since R600 that AMD has dared to go this big. R600, AMD’s previously largest GPU, ended up being rather ill-fated, which in turn spurred on the small die strategy that defined the R700 and Evergreen GPU families. Hawaii won’t be a repeat of R600 – in particular AMD isn’t going to be repeating the unfortunate circumstance of building a large GPU against a new architecture and a new manufacturing node all at the same time – so they are certainly on far more solid ground this time. Ultimately the success of Hawaii will be based on sales and profit margins as always, but based on the performance we’re seeing and the state of AMD’s market, AMD shouldn’t have any trouble justifying a 400mm2 GPU at this point. This is yet another benefit of being a second wind product: AMD gets to build their large GPU against a mature manufacturing process, as opposed to the immature process that Tahiti had to work with.

396 Comments


kyuu - Friday, October 25, 2013 - link

I agree. Ignore at all the complainers; it's great to have the benchmark data available without having to wait for all the rest of the article to be complete. Those who don't want anything at all until it's 100% done can always just come back later.

AnotherGuy - Friday, October 25, 2013 - link

What a beast

zodiacsoulmate - Friday, October 25, 2013 - link

Donno, all the geforce cards looks like sh!t in this review, and 280x/7970 290x looks like haven's god...but my 6990 7970 never really make me happier than my gtx 670 system...

well, whatever

TheJian - Friday, October 25, 2013 - link

While we have a great card here, it appears it doesn't always beat 780, and gets toppled consistently by Titan in OTHER games: http://www.techpowerup.com/reviews/AMD/R9_290X/24....

World of Warcraft (spanked again all resolutions by both 780/titan even at 5760x1080)

Splinter Cell Blacklist (smacked by 780 even, of course titan)

StarCraft 2 (by both 780/titan, even 5760x1080)

Titan adds more victories (780 also depending on res, remember 98.75% of us run 1920x1200 or less):

Skyrim (all res, titan victory at techpowerup) Ooops, 780 wins all res but 1600p also skyrim.

Assassins creed3, COD Black Ops2, Diablo3, FarCry3 (though uber ekes a victory at 1600p, reg gets beat handily in fc3, however hardocp shows 780 & titan winning apples-apples min & avg, techspot shows loss to 780/titan also in fc3)

Hardocp & guru3d both show Bioshock infinite, Crysis 3 (titan 10% faster all res) and BF3 winning on Titan. Hardocp also show in apples-apples Tombraider and MetroLL winning on titan.

http://www.guru3d.com/articles_pages/radeon_r9_290...

http://hardocp.com/article/2013/10/23/amd_radeon_r...

http://techreport.com/review/25509/amd-radeon-r9-2...

Guild wars 2 at techreport win for both 780/titan big also (both over 12%).

Also tweaktown shows lost planet 2 loss to the lowly 770, let alone 780/titan.

I guess there's a reason why most of these quite popular games are NOT tested here :)

So while it's a great card, again not overwhelming and quite the loser depending on what you play. In UBER mode as compared above I wouldn't even want the card (heat, noise, watts loser). Down it to regular and there are far more losses than I'm listing above to 780 and titan especially. Considering the overclocks from all sites, you are pretty much getting almost everything in uber mode (sites have hit 6-12% max for OCing, I think that means they'll be shipping uber as OC cards, not much more). So NV just needs to kick up 780TI which should knock out almost all 290x uber wins, and just make the wins they already have even worse, thus keeping $620-650 price. Also drop 780 to $500-550 (they do have great games now 3 AAA worth $100 or more on it).

Looking at 1080p here (a res 98.75% of us play at 1920x1200 or lower remember that), 780 does pretty well already even at anandtech. Most people playing above this have 2 cards or more. While you can jockey your settings around all day per game to play above 1920x1200, you won't be MAXING much stuff out at 1600p with any single card. It's just not going to happen until maybe 20nm (big maybe). Most of us don't have large monitors YET or 1600p+ and I'm guessing all new purchases will be looking at gsync monitors now anyway. Very few of us will fork over $550 and have the cash for a new 1440p/1600p monitor ALSO. So a good portion of us would buy this card and still be 1920x1200 or lower until we have another $550-700 for a good 1440/1600p monitor (and I say $550+ since I don't believe in these korean junk no-namers and the cheapest 1440p newegg itself sells is $550 acer). Do you have $1100 in your pocket? Making that kind of monitor investment right now I wait out Gsync no matter what. If they get it AMD compatible before 20nm maxwell hits, maybe AMD gets my money for a card. Otherwise Gsync wins hands down for NV for me. I have no interest in anything but a Gsync monitor at this point and a card that works with it.

Guru3D OC: 1075/6000

Hardwarecanucks OC: 1115/5684

Hardwareheaven OC: 1100/5500

PCPerspective OC: 1100/5000

TweakTown OC: 1065/5252

TechpowerUp OC: 1125/6300

Techspot OC: 1090/6400

Bit-tech OC: 1120/5600

Left off direct links to these sites regarding OCing but I'm sure you can all figure out how to get there (don't want post flagged as spam with too many links).

b3nzint - Friday, October 25, 2013 - link

"So NV just needs to kick up 780TI which should knock out almost all 290x uber wins, and just make the wins they already have even worse, thus keeping $620-650 price. Also drop 780 to $500-550"

we're talking about titan killer here.

titan vs titan killer, at res 3840, at high or ultra :

coh2 - 30%

metro - 30%

bio - (10%) but win 3% at medium

bf3 - 15%

crysis 3 - tie

crysis - 10

totalwar - tie

hitman - 20%

grid 2 - 10%+

2816 sp, 64rop, 176tmu, 4gb 512bit. 780 or 780ti won't stand a chance. this is titan killer dude wake up. only then then we're talking CF, SLi and res 5760. But for single card i go for this titan killer. good luck with gsync, im not gave up my dell u2711 yet.

just4U - Friday, October 25, 2013 - link

Well.. you have to put this in context. Those guys gave it their editor's choice award and a overall score of 9.3 They summed it up with this.."

The real highlight of AMD's R9 290X is certainly the price. What has been rumored to cost around $700 (and got people excited at that price), will actually retail for $549! $549 is an amazing price for this card, making it the price/performance king in the high-end segment. NVIDIA's $1000 GTX Titan is completely irrelevant now, even the GTX 780 with its $625 price will be a tough sale."

theuglyman0war - Thursday, October 31, 2013 - link

the flagship gtx *80 $msrp has been $499 for every upgrade I have ever made. After waiting out the 104 fer the 110 chip only to have the insult of the previous 780 pricing meant I will be holding off to see if everything returns to normal with Maxwell. Kind of depressing when others are excited for $550? As far as I know the market still dictates pricing and my price iz $499 if AMD is offering up decent competition to keep the market healthy and respectful.

ToTTenTranz - Friday, October 25, 2013 - link

How isn't this viral?

nader21007 - Friday, October 25, 2013 - link

Radeon R9 290X received Tom’s Hardware’s Elite award, the first time a graphics card has received this honor.

Nvidia: Why?

Wiseman: Because it Outperformed a card that is nearly double it's price (your Titan).

Do you hear me Nvidia? Please don't gouge consumers again.

Viva AMD.

doggghouse - Friday, October 25, 2013 - link

I don't think the Titan was ever considered to be a gamer's card... it was more like "prosumer" card for compute. But it was also marketed to people who build EXTREME! machines for maximum OC scores. The 780 was basically the gamer's card... it has 90-95% of the Titan's gaming capability, but for only $650 (still expensive).

If you want to compare the R9 290X to the Titan, I would look at the compute benchmarks. And in that, it seems to be an apples to oranges comparison... AMD and nVIDIA seem to trade blows depending on the type of compute.

Compared to the 780, the 290X pretty much beats it hands down in performance. If I hadn't already purchased a 780 last month ($595 yay), I would consider the 290X... though I'd definitely wait for 3rd party cards with better heat solutions. A stock card on "Uber" setting is simply way too hot, and too loud!