The AMD Radeon R9 290X Review

by Ryan Smith on October 24, 2013 12:01 AM EST

Posted in: GPUs, AMD, Radeon, Hawaii, Radeon 200

Synthetics

As always we’ll also take a quick look at synthetic performance. The 290X shouldn’t pack any great surprises here since it’s still GCN, and as such bound to the same general rules for efficiency, but we do have the additional geometry processors and additional ROPs to occupy our attention.

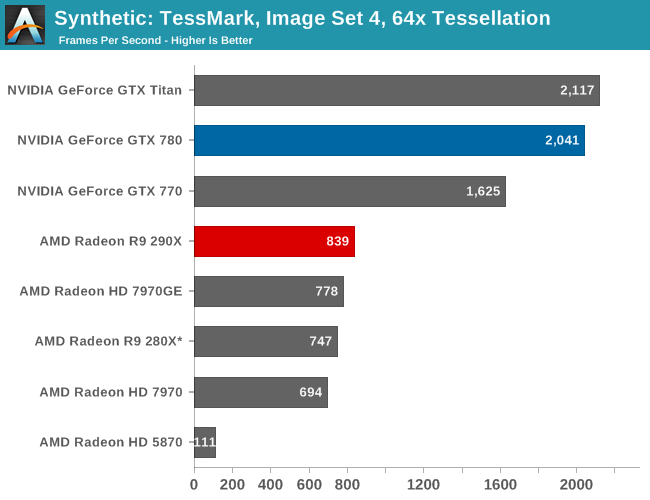

Right off the bat then, the TessMark results are something of a head scratcher. Whereas NVIDIA’s performance here has consistently scaled well with the number of SMXes, AMD’s seeing minimal scaling from those additional geometry processors on Hawaii/290X. Clearly TessMark is striking another bottleneck on 290X beyond simple geometry throughput, though it’s not absolutely clear what that bottleneck is.

This is a tessellation-heavy benchmark as opposed to a simple massive geometry benchmark, so we may be seeing a tessellation bottleneck rather than a geometry bottleneck, as tessellation requires its own set of heavy lifting to generate the necessary control points. The 12% performance gain is much closer to the 11% memory bandwidth gain than anything else, so it may be that the 280X and 290X are having to go off-chip to store tessellation data (we are after all using a rather extreme factor), in which case it’s a memory bandwidth bottleneck. Real world geometry performance will undoubtedly be better than this – thankfully for AMD this is the pathological tessellation case – but it does serve as a reminder of how much more tessellation performance NVIDIA is able to wring out of Kepler. Though the nearly 8x increase in tessellation performance since 5870 shows that AMD has at least gone a long way in 4 years, and considering the performance in our tessellation enabled games AMD doesn’t seem to be hurting for tessellation performance in the real world right now.
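For reference, the 11% memory bandwidth figure can be reproduced with a quick back-of-the-envelope calculation. This is only a sketch: the bus widths and per-pin data rates below are the commonly published specifications for the two cards and are assumptions here, as they are not stated on this page, and the function name is purely illustrative.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width (bits) x per-pin rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


# Assumed specs (not given in this article section):
# 280X: 384-bit bus at 6 Gbps; 290X: 512-bit bus at 5 Gbps.
bw_280x = memory_bandwidth_gb_s(384, 6.0)
bw_290x = memory_bandwidth_gb_s(512, 5.0)

# Relative gain of the 290X over the 280X.
bandwidth_gain = bw_290x / bw_280x - 1

print(f"280X: {bw_280x:.0f} GB/s, 290X: {bw_290x:.0f} GB/s, gain: {bandwidth_gain:.1%}")
# prints "280X: 288 GB/s, 290X: 320 GB/s, gain: 11.1%"
```

Under those assumptions, the wider but slower-clocked bus nets out to roughly an 11% gain, which is the figure the 12% TessMark improvement tracks so closely.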

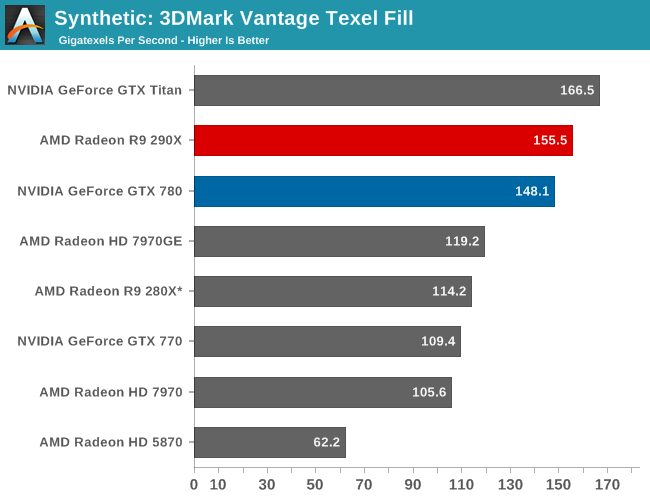

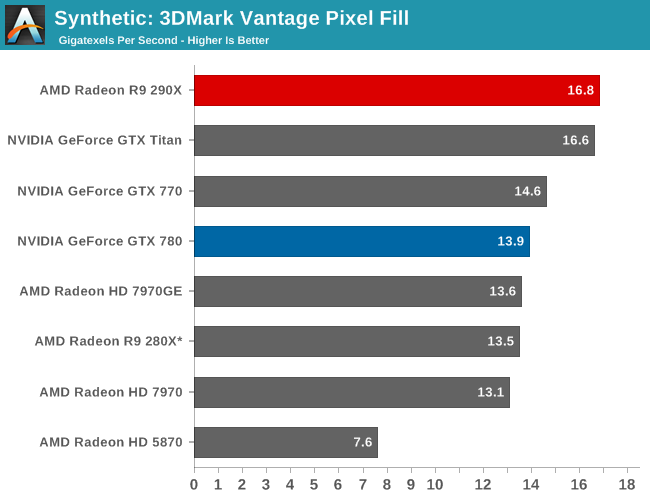

Moving on, we have our 3DMark Vantage texture and pixel fillrate tests, which present our cards with massive amounts of texturing and color blending work. These aren’t results we suggest comparing across different vendors, but they’re good for tracking improvements and changes within a single product family.

Looking first at texturing performance, we can see that texturing performance is essentially scaling 1:1 with what the theoretical numbers say it should. 36% better texturing performance over 280X is exactly in line with the increased number of texture units versus 280X, at the very least proving that 290X isn’t having any trouble feeding the increased number of texture units in this scenario.
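As a rough sketch of what “theoretical” means here, peak texel throughput is simply the number of texture units multiplied by the core clock. The unit counts and clocks below are assumed from the cards’ published specifications rather than taken from this page, both cards are treated as running at their maximum rated clock, and the helper name is illustrative only.

```python
def texel_rate_gtexels_s(texture_units: int, core_clock_ghz: float) -> float:
    """Peak bilinear texel rate in GTexels/s: one texel per unit per clock."""
    return texture_units * core_clock_ghz


# Assumed specs (not given in this article section):
# 280X: 128 texture units, 290X: 176 texture units, both up to 1.0 GHz.
tex_280x = texel_rate_gtexels_s(128, 1.0)
tex_290x = texel_rate_gtexels_s(176, 1.0)

theoretical_gain = tex_290x / tex_280x - 1  # 0.375, i.e. 37.5%
measured_gain = 0.36                        # the 36% quoted in the text

# How much of the theoretical speedup the benchmark actually realized.
scaling_efficiency = (1 + measured_gain) / (1 + theoretical_gain)

print(f"theoretical: {theoretical_gain:.1%}, measured: {measured_gain:.0%}, "
      f"efficiency: {scaling_efficiency:.1%}")
```

With those assumed figures the measured result lands within about a percentage point or two of the theoretical ratio; real sustained clocks can differ from the rated maximum, so this is a ceiling rather than a prediction.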

Meanwhile for our pixel fill rates the results are a bit more in the middle, reflecting the fact that this test is a mix of ROP bottlenecking and memory bandwidth bottlenecking. Remember, AMD doubled the ROPs versus 280X, but only gave it 11% more memory bandwidth. As a result the ROPs’ ability to perform is going to depend in part on how well color compression works and what can be recycled in the L2 cache, as anything else means a trip to the VRAM and running into those lesser memory bandwidth gains. Though the 290X does get something of a secondary benefit here, which is that unlike the 280X it doesn’t have to go through a memory crossbar and any inefficiencies/overhead it may add, since the number of ROPs and memory controllers is perfectly aligned on Hawaii.
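To illustrate why doubling the ROPs with only 11% more bandwidth leaves the pixel test partly bandwidth-bound, here is a simplified sketch of how many bytes of memory bandwidth each ROP has available per clock. The bandwidth, ROP, and clock figures are assumed from published specifications, and the 8 bytes per blended pixel (a 4-byte read plus a 4-byte write for 32-bit color) is a hypothetical workload chosen for illustration, not the actual format used by the benchmark.

```python
def bytes_per_rop_clock(bandwidth_gb_s: float, rops: int, core_clock_ghz: float) -> float:
    """Memory bytes available to each ROP per core clock, ignoring caches and compression."""
    return bandwidth_gb_s / (rops * core_clock_ghz)


# Assumed specs (not given in this article section):
# 280X: 288 GB/s, 32 ROPs; 290X: 320 GB/s, 64 ROPs; both up to 1.0 GHz.
budget_280x = bytes_per_rop_clock(288.0, 32, 1.0)  # 9.0 bytes per ROP-clock
budget_290x = bytes_per_rop_clock(320.0, 64, 1.0)  # 5.0 bytes per ROP-clock

# Hypothetical cost of one alpha-blended 32-bit pixel: read 4 bytes + write 4 bytes.
BLEND_BYTES_PER_PIXEL = 8

# True when raw bandwidth alone could keep every ROP busy on this workload.
rops_fed_280x = budget_280x >= BLEND_BYTES_PER_PIXEL
rops_fed_290x = budget_290x >= BLEND_BYTES_PER_PIXEL

print(f"280X: {budget_280x:.1f} B/ROP/clk, fed by raw bandwidth: {rops_fed_280x}")
print(f"290X: {budget_290x:.1f} B/ROP/clk, fed by raw bandwidth: {rops_fed_290x}")
```

In this simplified model the 290X’s per-ROP bandwidth budget drops well below that of the 280X, which is why color compression and L2 cache reuse matter so much to how close the doubled ROPs can get to their peak.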

396 Comments

DMCalloway - Thursday, October 24, 2013 - link

Once again, against the Titan it's $450 cheaper, not $100. Against the gtx 780 it is a wash on performance at a cheaper price point. Eight months late to the game I'll agree on, however it took time to get in bed with Sony and Micro$oft which was needed if they (AMD) ever hope to get to the point of being able to release 'at a competitive time'. I'm amazed that they are still viable after the financial losses they suffered with the whole Intel paying OEM's to not release their current cpu gen. along side AMD's business. Sure, AMD won the law suit but the financial losses in market share was in the billions, Intel jumped ahead a gen. and the damage was done. Realistically, I believe AMD chose wisely to focus on the console market because the 7970ghz pushed hard wasn't really that far behind a stock gtx780.

Bloodcalibur - Thursday, October 24, 2013 - link

Ever wonder why the Titan costs $350 more than their own GTX 780 while having only a small margin of improvement?

Oh, right, compute performance.

anubis44 - Thursday, October 24, 2013 - link

and in some cases, the R9 290X is as much as 23% faster in 4K resolution than the Titan, or in the words of HardOCP: "at Ultra HD 4K it (R9 290X) just owns the GeForce GTX TITAN."

Bloodcalibur - Thursday, October 24, 2013 - link

Once again, Titan is a gaming/workstation hybrid, that's why it costs $350 more than their own GTX 780 with only a small FPS improvement in gaming.

TheJian - Friday, October 25, 2013 - link

Depends on the games chosen. For instance All 4K:

Guru3d:

Tombraider tied 4k 40fps (they consider this BARELY playable-though advise 60fps)

MOH Warfighter Titan wins 7%

Bioshock Infinite Titan wins 10% (33fps to 30, but again not going to be playable min in teens?)

BF3 TIE (32fps, again avg, so not playable)

The only victory at 4K is Hitman absolution here. So clearly it depends on what your settings are and what games you play. Also note the fps at 4K at hardocp. They can't max settings and every game is a sacrifice of some stuff (or a lot). Even at 2560 Kyle notes all were unplayable with everything on with avg's at 22fps and mins 12fps for all 3 basically...ROFL. How useful is it to win (or even lose) at a res you can't play at?

http://www.techpowerup.com/reviews/AMD/R9_290X/24....

Techpowerup tests all the way to 5760x1080, quoting that unless not tested. Here we go again...LOL

World of Warcraft domination for 780 & Titan (over 20% faster on titan 5760!)

SKYRIM - Both Titan and 780 win 5760

Starcraft2 only went to 2560 but again clean sweep for 780/Titan both over 10%

Splintercell blacklist clean sweep at 2560 & 5760 for Titan AND 780 (>20% for titan both res)

Farcry3 (titan and 780 wins at 5760 but at 22fps who cares...LOL but 10% faster than 290x)

black ops 2 (only went to 2560, but titan wins all res)

Metro TIE (26fps, again neither playable)

Crysis 3 Titan over 10% win (25fps vs. 22, but neither playable...LOL)

At hardocp, metro, tombraider, bf3, and crysis 3 were all UNDER 25fps min on both cards with most coming in at 22fps or so on both. I wish they would benchmark at what they find is PLAYABLE, but even then I'm against 4K if I have to turn all kinds of stuff off in the first place. Only farcry3 was tested at above 30fps...LOL. You need TWO cards for 4K gaming. PERIOD. If you have the money to buy a 4K monitor or two monitors you probably have the cash to do it right and buy 2 cards. Steampowered survey shows this as most have 2 cards above 1920x1200! Bragging about 4K gaming on this card (or even titan) is ridiculous as it just ends up in an exercise of turning crap off that devs wanted me to SEE. I wouldn't care if 290x was 50% faster than Titan if you're running 22fps who cares? Neither is playable. You've proven NOTHING. If we jump off a 100 story building I'll beat you to the bottom...Yeah but umm...We're both still dead right? So what's the point no matter who wins that game?

Funfact: techspot.com tombraider comment (2560/1080p both tested-4xSSAA+16af)

"We expected AMD to do better in Tomb Raider since they supported the title's development, but the R9 290X was 12% slower than the GTX Titan and 3% slower than the GTX 780"

LOL. I hope they do better with BF4 AMD enhancements. Resident Evil 6 shows titan win also.

http://www.techspot.com/review/727-radeon-r9-290x/...

Tomshardware 4K quote:

"In Gaming At 3840x2160: Is Your PC Ready For A 4K Display?, I concluded that you’d want at least two GeForce GTX 780s for 4K. And although the R9 290X is faster than even the $1000 Titan, I maintain that you need a pair in order to crank your settings up to where they should be."

That was their ARMA quote...But it applies to all 4K...TWO CARDS. But their benchmarks are really low compared to everyone else for Titan in the same games. It's like they took 10-15% off Titan's scores. IE, Bioshock infinite at guru3d shows titan winning 10%, but at toms losing by 20% same game, same res...WTF? That's odd right? Skyrim shows NV domination at 4k (780 also). Almost 20% faster for Titan & 780 (they tied) over Uber. Of course they turned off ALL AA modes to get it playable. Again, you can't just judge 4K by one site's games. Clearly you can find the exact opposite at 4K and come back down to reality (a res you can actually play at above 30fps) and titan is smacking them in a ton of games (far more wins than losses). I could find a ton more if needed but you should get the point. TITAN isn't OWNED at 4K and usually when it is as toms says of Metro "the win is largely symbolic though", yeah at 30fps avg it is pointless even turned down!

bronopoly - Thursday, October 24, 2013 - link

Why shouldn't one of the cards you mentioned be bought for 1080p? I don't know about you, but I prefer to get 120 FPS in games so it matches my monitor w/ lightboost enabled.

Bloodcalibur - Thursday, October 24, 2013 - link

Except the Titan is a gaming/workstation hybrid due to its computing ability. Anyone who bought a Titan just for gaming is retarded and paid $350 more than they would have on a 780. Titan shouldn't be compared to 290X for gaming. It's a good card for those who do both gaming and a little bit of computing.

looncraz - Thursday, October 24, 2013 - link

Install a new cooler and the last two of those problems vanish... and you've saved hundreds... you could afford to build a stand-alone water-cooling loop just for the 290x and still have money to spare for a nice dinner.

teiglin - Thursday, October 24, 2013 - link

I haven't finished reading the article yet, but isn't that more than a little hyperbolic? It just means NVIDIA will have to cut back on the amount it gouges for GK110. The fact that it was able to leave the price high for so long is nearly all good for them--it's just a matter of how quickly they adjust their pricing to match.

It will be nice to have a fair fight again at the high-end for a single card.

bill5 - Thursday, October 24, 2013 - link

Heh, I'm the biggest AMD fanboy around, but these top two comments almost smell like marketing.

It's a great card, and the Titan was deffo highly overpriced, but Nvidia can just make some adjustments on price and compete. That 780 Ti they showed will surely be something in that vein.