21.5-inch iMac (Late 2013) Review: Iris Pro Driving an Accurate Display

by Anand Lal Shimpi on October 7, 2013 3:28 AM EST

GPU Performance: Iris Pro in the Wild

The new iMac is pretty good, but what drew me to the system is that it’s among the first implementations of Intel’s Iris Pro 5200 graphics in a shipping system. There are, however, some pretty big differences between what ships in the entry-level iMac and what we tested earlier this year.

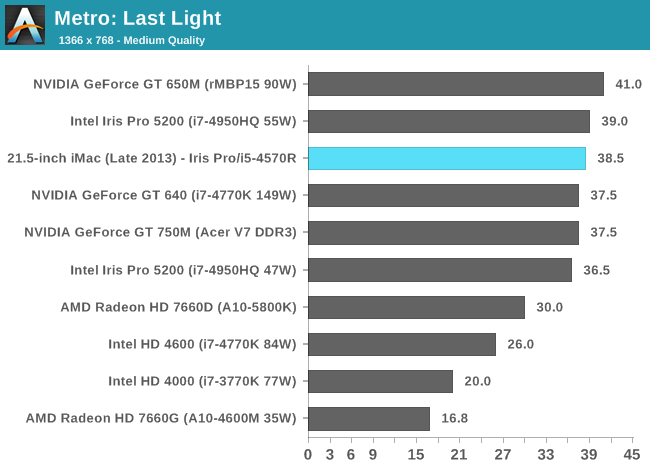

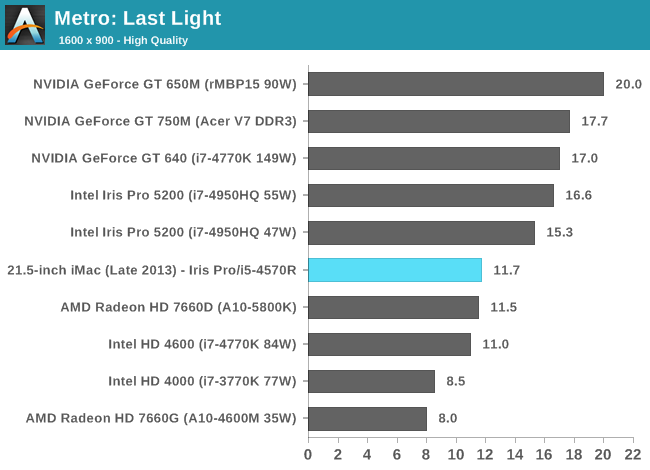

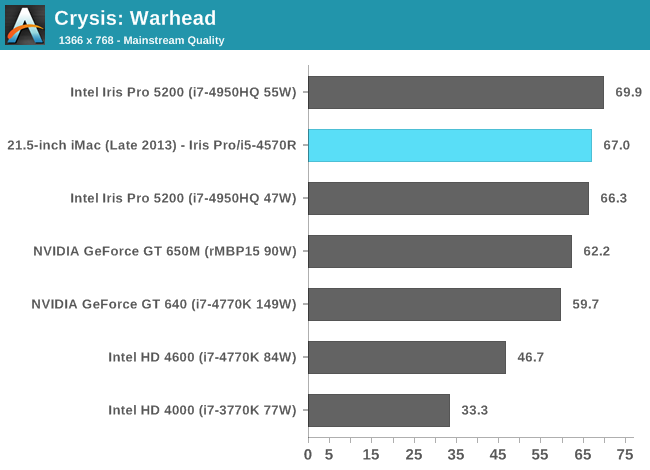

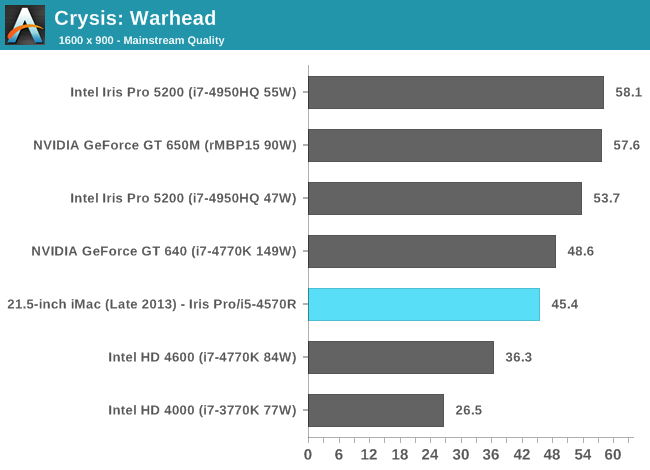

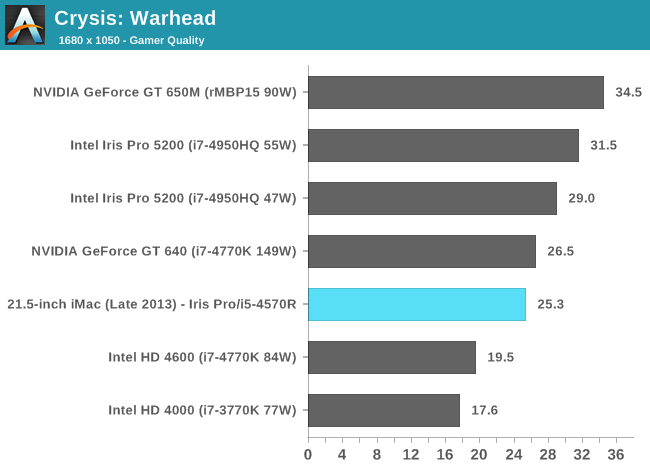

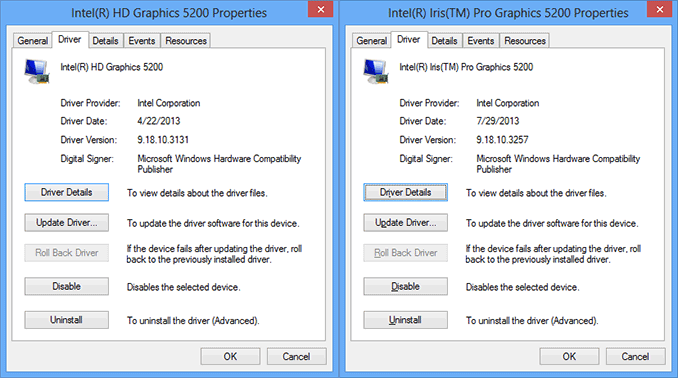

We benchmarked a Core i7-4950HQ, a 2.4GHz 47W quad-core part with a 3.6GHz max turbo and 6MB of L3 cache (in addition to the 128MB eDRAM L4). The new entry-level 21.5-inch iMac offers no CPU options in its $1299 configuration; it ships with a Core i5-4570R. This is a 65W part clocked at 2.7GHz but with a 3GHz max turbo and only 4MB of L3 cache (still 128MB of eDRAM). The 4570R also features a lower max GPU turbo clock of 1.15GHz vs. 1.30GHz for the 4950HQ. In other words, you should expect lower performance across the board from the iMac compared to what we reviewed over the summer. At launch Apple provided a fairly old version of Iris Pro drivers for Boot Camp, so I updated to the latest available driver revision before running any of these tests under Windows.

Iris Pro 5200’s performance is still amazingly potent for what it is. With Broadwell I’m expecting to see another healthy increase in performance, and hopefully we’ll see Intel continue down this path with future generations as well. I do have concerns about the area efficiency of Intel’s Gen7 graphics. I’m not one to normally care about performance per mm^2, but in Intel’s case it’s a concern given how stingy the company tends to be with die area.

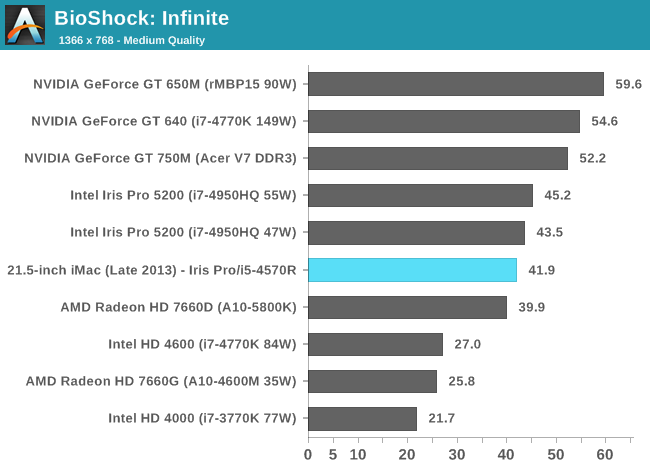

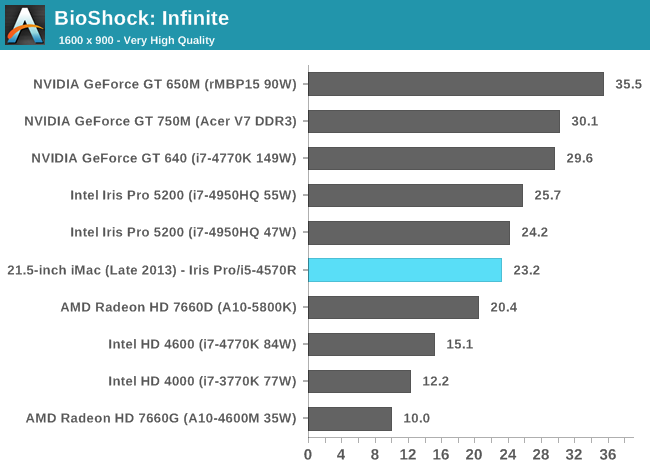

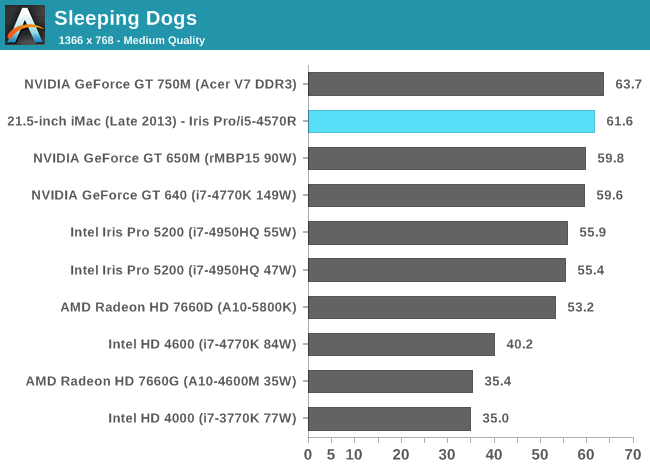

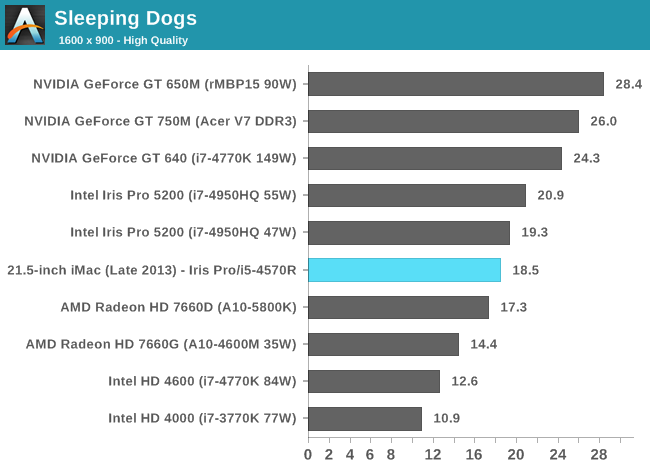

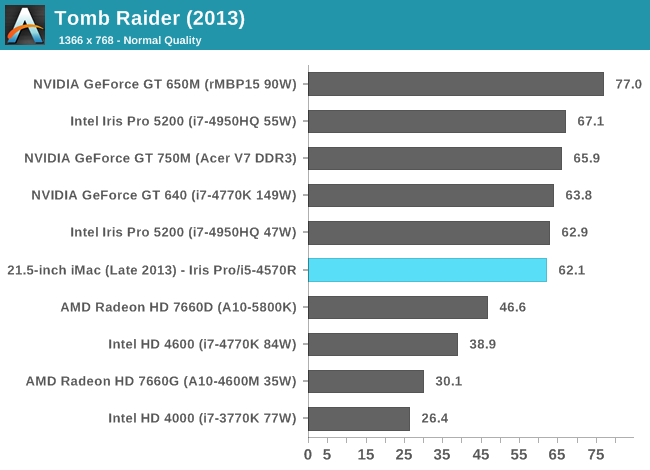

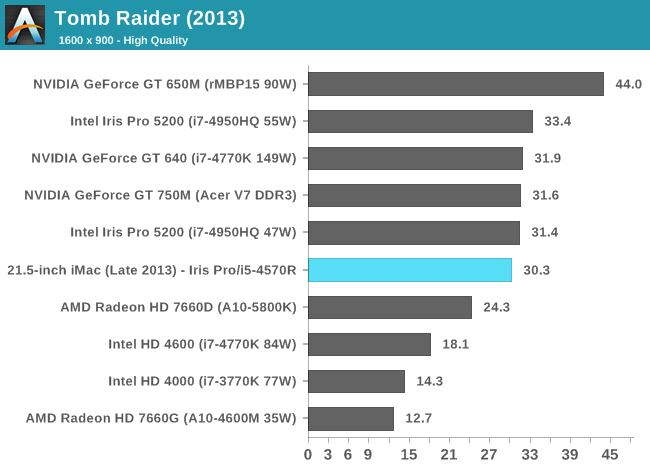

The comparison of note is the GT 750M, as that's likely closest in performance to the GT 640M that shipped in last year's entry-level iMac. With a few exceptions, the Iris Pro 5200 in the new iMac appears to be performance competitive with the 750M. Where it falls short however, it does by a fairly large margin. We noticed this back in our Iris Pro review, but Intel needs some serious driver optimization if it's going to compete with NVIDIA's performance even in the mainstream mobile segment. Low resolution performance in Metro is great, but crank up the resolution/detail settings and the 750M pulls far ahead of Iris Pro. The same is true for Sleeping Dogs, but the penalty here appears to come with AA enabled at our higher quality settings. There's a hefty advantage across the board in Bioshock Infinite as well. If you look at Tomb Raider or Sleeping Dogs (without AA) however, Iris Pro is hot on the heels of the 750M. I suspect the 750M configuration in the new iMacs is likely even faster as it uses GDDR5 memory instead of DDR3.

It's clear to me that the Haswell SKU Apple chose for the entry-level iMac is, understandably, optimized for cost and not max performance. I would've liked to have seen an option with a high-end R-series SKU, although I understand I'm in the minority there.

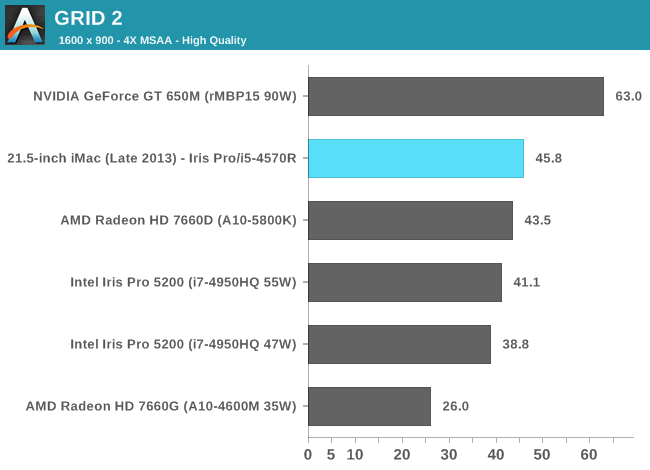

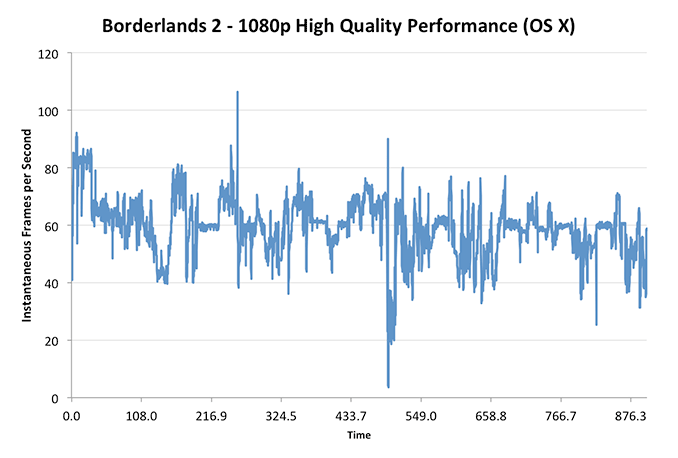

These charts put the Iris Pro’s performance in perspective compared to other dGPUs of note as well as the 15-inch rMBP, but what does that mean for actual playability? I plotted frame rate over time while playing through Borderlands 2 under OS X at 1080p with all quality settings (aside from AA/AF) at their highest. The overall experience running at the iMac’s native resolution was very good:

With the exception of one dip into single digit frame rates (unclear if that was due to some background HDD activity or not), I could play consistently above 30 fps.

Using BioShock Infinite I actually had the ability to run some OS X vs. Windows 8 gaming performance numbers:

OS X 10.8.5 vs. Windows Gaming Performance - BioShock Infinite

| | 1366 x 768 Normal Quality | 1600 x 900 High Quality |
|---|---|---|
| OS X 10.8.5 | 29.5 fps | 23.8 fps |
| Windows 8 | 41.9 fps | 23.2 fps |

Unsurprisingly, when we’re not completely GPU bound there’s actually a pretty large gap between OS X and Windows gaming performance. I’ve heard some developers complain about this in the past, partly blaming it on a lack of lower level API access as OS X doesn’t support DirectX and must use OpenGL instead. In our mostly GPU bound test however, performance is effectively identical between OS X and Windows (23.8 fps vs. 23.2 fps) - at least in BioShock Infinite.
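To put numbers on that gap, here is a minimal Python sketch that computes the relative difference from the frame rates in the table above. The helper name `windows_advantage_pct` is purely illustrative and not something used in our test suite.

```python
# Frame rates (fps) copied from the BioShock Infinite table above.
results = {
    "1366 x 768 Normal Quality": {"OS X 10.8.5": 29.5, "Windows 8": 41.9},
    "1600 x 900 High Quality": {"OS X 10.8.5": 23.8, "Windows 8": 23.2},
}


def windows_advantage_pct(osx_fps: float, windows_fps: float) -> float:
    """Percentage by which Windows leads OS X (negative if OS X leads)."""
    return (windows_fps / osx_fps - 1.0) * 100.0


for setting, fps in results.items():
    delta = windows_advantage_pct(fps["OS X 10.8.5"], fps["Windows 8"])
    print(f"{setting}: Windows 8 {delta:+.1f}% vs. OS X")
```

At the lower setting Windows comes out roughly 42% ahead, while at the higher, mostly GPU bound setting the two are within about 3% of each other.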

127 Comments


elian123 - Monday, October 7, 2013 - link

Anand, could you perhaps indicate when you would expect higher-res iMac displays (as well as PC displays in general, not only all-in-ones)?

solipsism - Monday, October 7, 2013 - link

Before that happens Apple will likely need to get their stand-alone Apple display "high-res". I don't expect it to go 2x like every other one of their displays; instead I would suspect it to be 4K, which is exactly 1.5x over the current 27" display size. Note that Apple mentioned 4K many times when previewing the Mac Pro.

Also, the most common size for quality 4K panels appears to be 31.5" so I wouldn't be surprised to see it move to that size. When the iMacs are to get updated I think each would then most likely use a slightly larger display panel.

mavere - Monday, October 7, 2013 - link

~75% of the stock desktop wallpapers in OSX 10.9 are at 5120x2880.

It's probably the biggest nudge-nudge-wink-wink Apple has ever given for unannounced products.

name99 - Monday, October 7, 2013 - link

Why does Apple have to go to exactly 4K? We all understand the point, and the value, of going to 2x resolution. The only value in going to exactly 4K is cheaper screens (but cheaper screens means crappy lousy looking screens, so Apple doesn't care).

jasonelmore - Monday, October 7, 2013 - link

4k is 16:9 ratio, to do a 16:10 right, they would have to do 5k

repoman27 - Monday, October 7, 2013 - link

iMacs have been 16:9 since 2009, and 3840x2400 (4K 16:10) panels have been produced in the past and work just fine.

repoman27 - Monday, October 7, 2013 - link

Apple is pretty locked in to the current screen sizes and 16:9 aspect ratio by the ID, and I can only imagine they will stick with the status quo for at least one more generation in order to recoup some of their obviously considerable design costs there.

Since Apple sells at best a couple million iMacs of each form factor in a year’s time, they kinda have to source panels for which there are other interested customers; we’re not even close to iPhone or iPad numbers here. Thus I’d reckon we’ll see whatever panels they intend to use in future generations in the wild before those updates happen. As solipsism points out, the speculation that there will be a new ATD with a 31.5”, 3840x2160 panel released alongside the new Mac Pro makes total sense because other vendors are already shipping similar displays.

I actually made a chart to illustrate why a Retina iMac was unlikely anytime soon: http://i.imgur.com/CfYO008.png

I listed the size and resolution of previous LCD iMacs, as well as possible higher resolutions at 21.5” and 27”. Configurations that truly qualify as "Retina" are highlighted in green, and it looks as though pixel doubling will be Apple’s strategy when they make that move. I also highlighted configurations that require two DP 1.1a links or a DP 1.2 link in yellow, and those that demand four DP 1.1a links or two DP 1.2 links in red for both standard CVT and CVT with reduced blanking. The fact that Apple has yet to ship any display that requires more than a single DP 1.1a link, while all of the Retina options at 27" are in the red, is probably reason enough that such a device doesn't exist yet.

I also included the ASUS PQ321Q 31.5" 3840x2160 display, and the Retina MacBook Pros as points of comparison to illustrate the pricing issues that Retina iMacs would face. While there are affordable GPU options that could drive these displays and still maintain a reasonable degree of UI smoothness, the panels themselves either don't exist or would be prohibitively expensive for an iMac.
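As a rough check on the link requirements described above, the following Python sketch estimates the data rate of a few 60Hz modes and sorts them into the same three tiers. It assumes 24 bits per pixel and a simplified CVT reduced-blanking approximation (160 pixels of horizontal blanking and a minimum vertical blanking time of 460 µs); the function names are illustrative, and a real timing calculator would round the pixel clock slightly differently.

```python
import math

BITS_PER_PIXEL = 24           # assumption: 8 bits per channel, RGB
HBR_LINK = 4 * 2.7e9 * 0.8    # DP 1.1a: 4 lanes x 2.7 Gbit/s, 8b/10b coding
HBR2_LINK = 4 * 5.4e9 * 0.8   # DP 1.2: 4 lanes x 5.4 Gbit/s, 8b/10b coding


def cvt_rb_pixel_clock(width, height, refresh_hz=60.0):
    """Approximate CVT reduced-blanking pixel clock in Hz (simplified)."""
    h_total = width + 160                              # fixed horizontal blanking
    line_time = (1.0 / refresh_hz - 460e-6) / height   # seconds per active line
    v_blank = math.floor(460e-6 / line_time) + 1       # lines of vertical blanking
    return h_total * (height + v_blank) * refresh_hz


def link_tier(width, height, refresh_hz=60.0):
    """Classify a mode by the DisplayPort links its data rate would need."""
    rate = cvt_rb_pixel_clock(width, height, refresh_hz) * BITS_PER_PIXEL
    if rate <= HBR_LINK:
        return "one DP 1.1a link"
    if rate <= HBR2_LINK:
        return "two DP 1.1a links or one DP 1.2 link"
    if rate <= 2 * HBR2_LINK:
        return "four DP 1.1a links or two DP 1.2 links"
    return "more than two DP 1.2 links"


for w, h in [(2560, 1440), (3840, 2160), (5120, 2880)]:
    gbps = cvt_rb_pixel_clock(w, h) * BITS_PER_PIXEL / 1e9
    print(f"{w}x{h} @ 60Hz: ~{gbps:.1f} Gbit/s -> {link_tier(w, h)}")
```

Under these assumptions 2560x1440 fits in a single DP 1.1a link, 3840x2160 needs a DP 1.2 link, and 5120x2880 exceeds what one DP 1.2 link can carry.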

name99 - Monday, October 7, 2013 - link

OR what your chart tells us is that these devices will be early adopters of the mythical (but on its way) DisplayPort 1.3?

Isn't it obvious that part of the slew of technologies to arrive when 4K hits the mainstream (as opposed to its current "we expect you to pay handsomely for something that is painful to use" phase) will be an updated DisplayPort spec?

repoman27 - Monday, October 7, 2013 - link

Unlike HDMI 1.4, DisplayPort 1.2 can handle 4K just fine. I'd imagine DP 1.3 should take us to 8K.

What baffles me is that every Mac Apple has shipped thus far with Thunderbolt and either a discrete GPU or Haswell has been DP 1.2 capable, but the ports are limited to DP 1.1a by the Thunderbolt controller. So even though Intel is supposedly shipping Redwood Ridge which has a DP 1.2 redriver, and Falcon Ridge which fully supports DP 1.2, we seem to be getting three generations of Macs where only the Mac Pros can actually output a DP 1.2 signal.

Furthermore, I don't know of any panels out there that actually support eDP HBR2 signaling (introduced in the eDP 1.2 specification in May 2010, clarified in eDP 1.3 in February 2011, and still going strong in eDP 1.4 as of January this year). The current crop of 4K displays appear to be driven by converting a DisplayPort 1.2 HBR2 signal that uses MST to treat the display as 2 separate regions into a ridiculously wide 8 channel LVDS signal. Basically, for now, driving a display at more than 2880x1800 seems to require multiple outputs from the GPU.

And to answer your question about why 4K, the problem is really more to do with creating a panel that has a pixel pitch somewhere in the no man's land between 150 and 190 PPI. Apple does a lot of work to make scaling decent even with straight up pixel doubling, but the in-between pixel densities would be really tricky, and probably not huge sellers in the Windows market. Apple needs help with volume in this case, they can't go it alone and expect anything short of ludicrously expensive.
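For reference, pixel density follows directly from resolution and diagonal size. The Python sketch below works it out for the panel sizes mentioned in this thread; the 150 to 190 PPI range is the "no man's land" described above, and the helper names are just for illustration.

```python
import math


def ppi(width_px, height_px, diagonal_in):
    """Pixels per inch along the diagonal of a panel."""
    return math.hypot(width_px, height_px) / diagonal_in


def in_no_mans_land(density, low=150.0, high=190.0):
    """True if the density falls in the awkward in-between range."""
    return low <= density <= high


panels = [
    ("21.5-inch, 1920x1080", 1920, 1080, 21.5),
    ("27-inch, 2560x1440", 2560, 1440, 27.0),
    ("31.5-inch, 3840x2160", 3840, 2160, 31.5),
    ("27-inch, 3840x2160", 3840, 2160, 27.0),
    ("21.5-inch, 3840x2160 (2x)", 3840, 2160, 21.5),
    ("27-inch, 5120x2880 (2x)", 5120, 2880, 27.0),
]

for name, w, h, diag in panels:
    density = ppi(w, h, diag)
    flag = " <- in between" if in_no_mans_land(density) else ""
    print(f"{name}: {density:.0f} PPI{flag}")
```

A 3840x2160 panel at 27" lands at roughly 163 PPI, squarely in the in-between range, whereas the pixel-doubled options at 21.5" and 27" both come out above 200 PPI.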

name99 - Tuesday, October 8, 2013 - link

My bad. I had in mind the fancier forms of 4K like 10 bits (just possible) and 12 bit (not possible) at 60Hz, or 8bit at 120Hz; not your basic 8 bits at 60Hz. I should have filled in my reasoning.