21.5-inch iMac (Late 2013) Review: Iris Pro Driving an Accurate Display

by Anand Lal Shimpi on October 7, 2013 3:28 AM EST

GPU Performance: Iris Pro in the Wild

The new iMac is pretty good, but what drew me to the system was that it’s among the first implementations of Intel’s Iris Pro 5200 graphics in a shipping system. There are some pretty big differences, however, between what ships in the entry-level iMac and what we tested earlier this year.

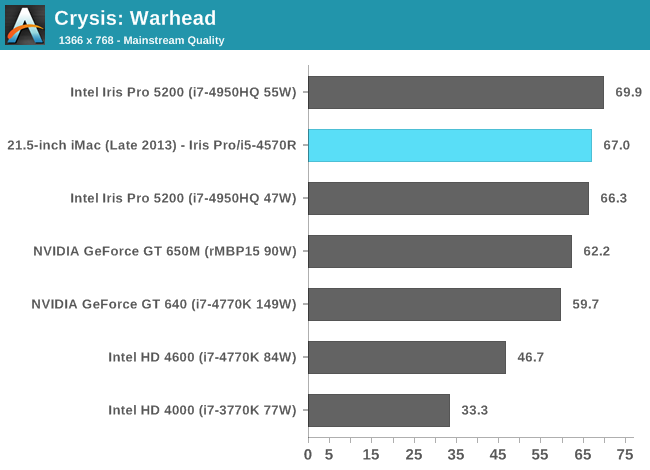

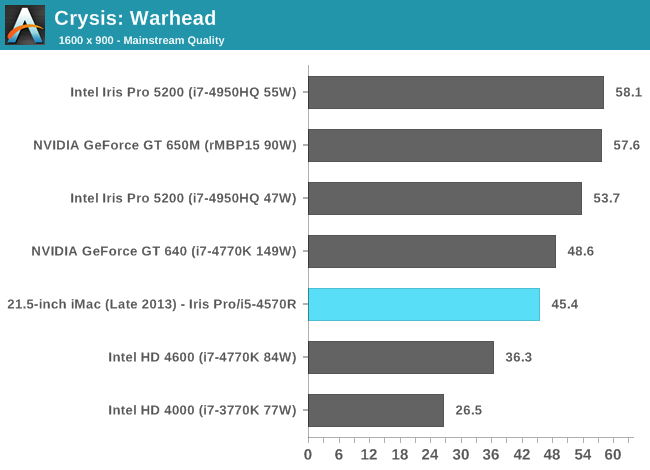

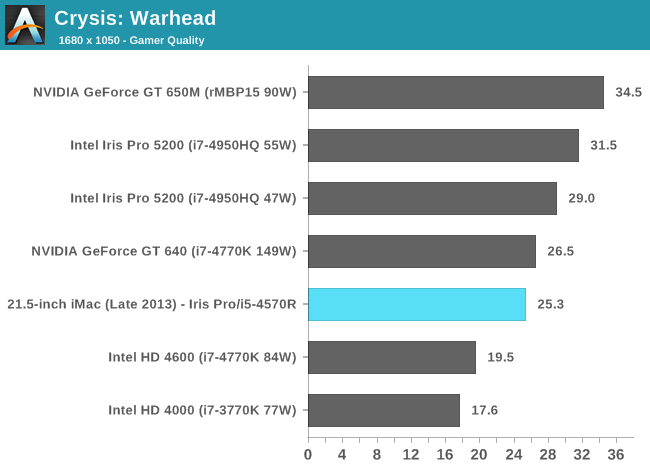

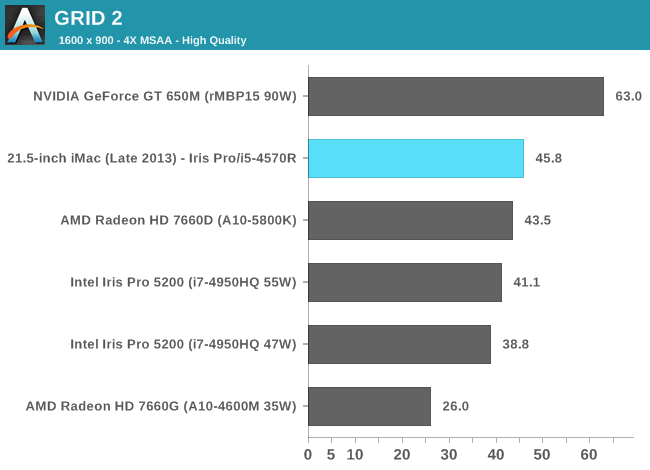

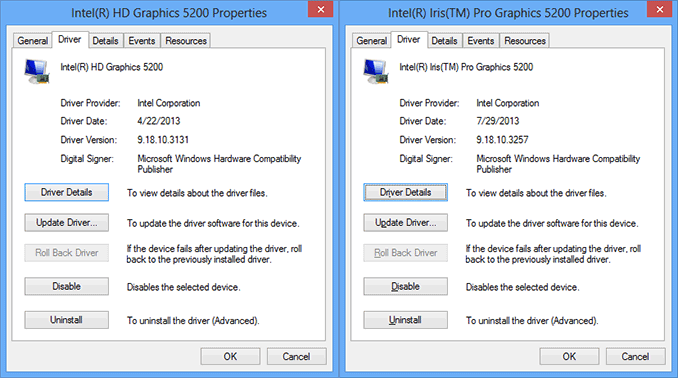

We benchmarked a Core i7-4950HQ, a 2.4GHz 47W quad-core part with a 3.6GHz max turbo and 6MB of L3 cache (in addition to the 128MB eDRAM L4). The new entry-level 21.5-inch iMac offers no CPU options in its $1299 configuration; it ships with a Core i5-4570R. This is a 65W part clocked at 2.7GHz but with a 3GHz max turbo and only 4MB of L3 cache (still 128MB of eDRAM). The 4570R also features a lower max GPU turbo clock of 1.15GHz vs. 1.30GHz for the 4950HQ. In other words, you should expect lower performance across the board from the iMac compared to what we reviewed over the summer. At launch Apple provided a fairly old version of Iris Pro drivers for Boot Camp, so I updated to the latest available driver revision before running any of these tests under Windows.
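For a rough sense of scale, the GPU clock gap between the two parts can be worked out directly from the figures above. This is only a sketch: the function name is hypothetical, and peak turbo clock is at best a loose proxy for delivered frame rate, since CPU clocks, cache size, drivers and thermals all play a part.

```python
# Max GPU turbo clocks quoted in the text, in GHz.
I5_4570R_GPU_TURBO_GHZ = 1.15
I7_4950HQ_GPU_TURBO_GHZ = 1.30


def clock_deficit_pct(slower_ghz, faster_ghz):
    """Percent by which the slower clock trails the faster one."""
    return (1.0 - slower_ghz / faster_ghz) * 100.0


deficit = clock_deficit_pct(I5_4570R_GPU_TURBO_GHZ, I7_4950HQ_GPU_TURBO_GHZ)
print(f"4570R peak GPU clock trails the 4950HQ by {deficit:.1f}%")
```

On paper that is a deficit of a little under 12% in peak GPU clock, before any other differences between the two chips are considered.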

Iris Pro 5200’s performance is still amazingly potent for what it is. With Broadwell I’m expecting to see another healthy increase in performance, and hopefully we’ll see Intel continue down this path with future generations as well. I do have concerns about the area efficiency of Intel’s Gen7 graphics. I’m not one to normally care about performance per mm^2, but in Intel’s case it’s a concern given how stingy the company tends to be with die area.

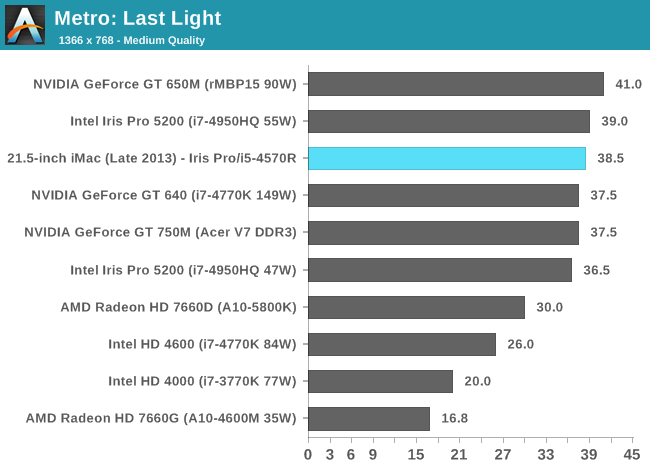

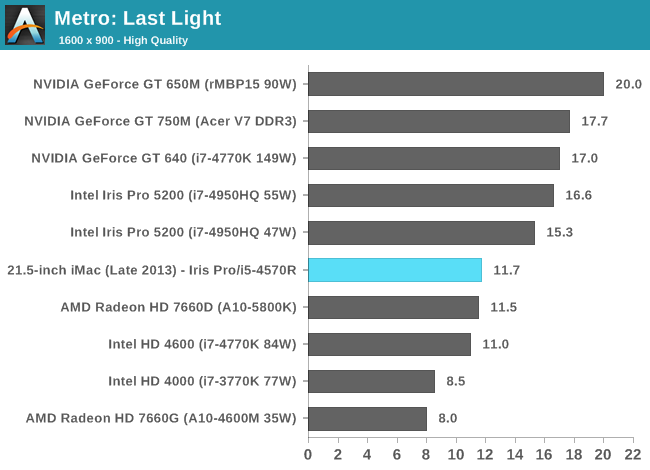

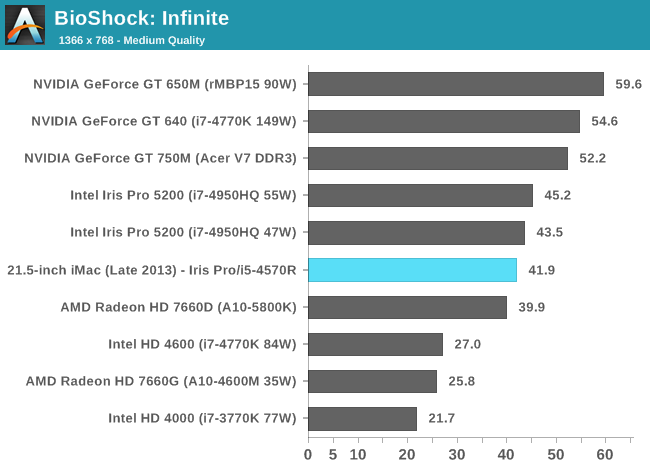

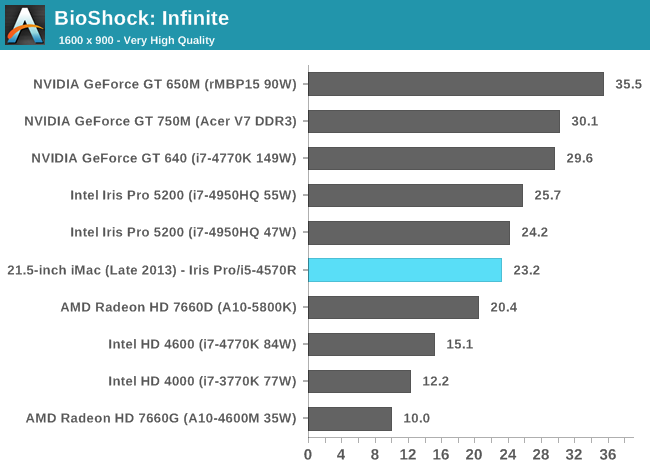

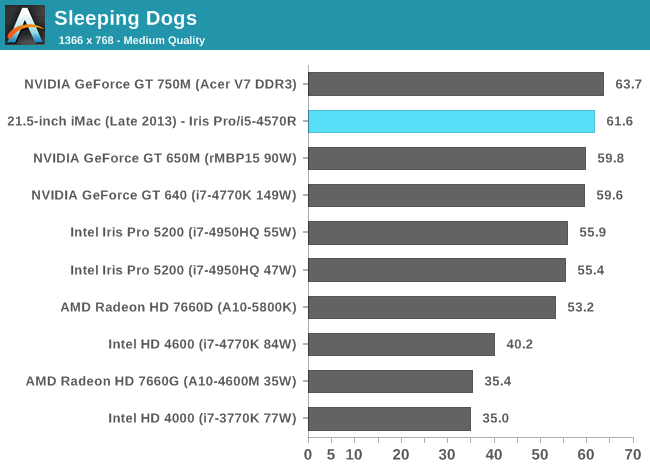

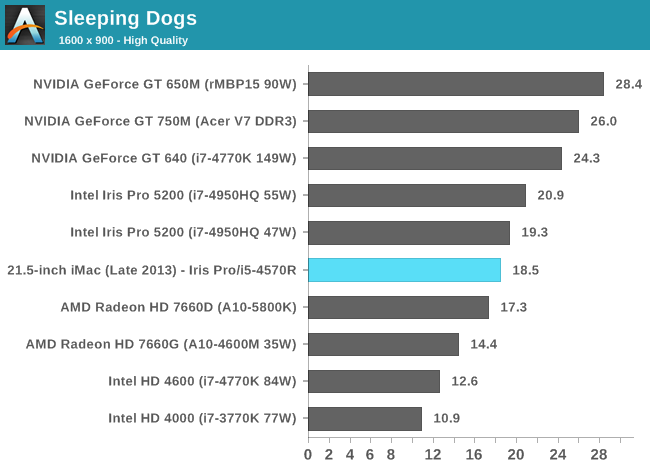

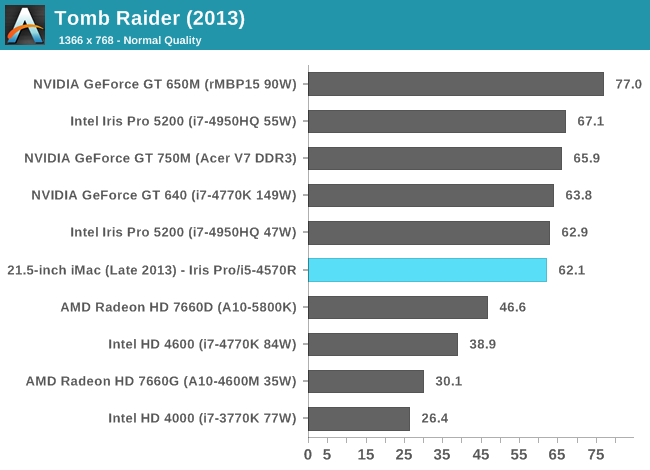

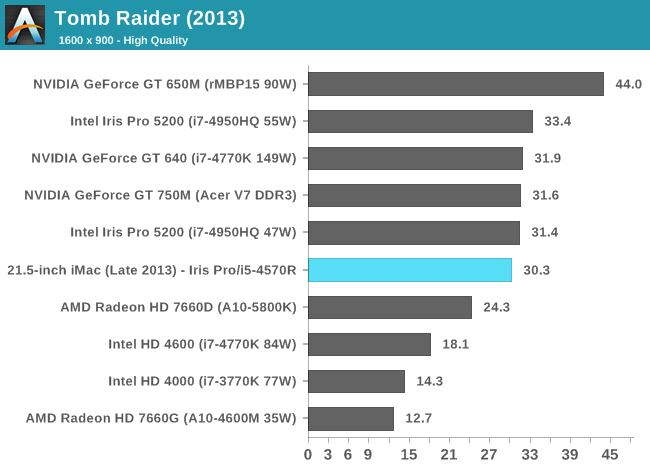

The comparison of note is the GT 750M, as that's likely closest in performance to the GT 640M that shipped in last year's entry-level iMac. With a few exceptions, the Iris Pro 5200 in the new iMac appears to be performance competitive with the 750M. Where it falls short, however, it does so by a fairly large margin. We noticed this back in our Iris Pro review, but Intel needs some serious driver optimization if it's going to compete with NVIDIA's performance even in the mainstream mobile segment. Low resolution performance in Metro is great, but crank up the resolution/detail settings and the 750M pulls far ahead of Iris Pro. The same is true for Sleeping Dogs, but the penalty here appears to come with AA enabled at our higher quality settings. The 750M holds a hefty advantage across the board in BioShock Infinite as well. If you look at Tomb Raider or Sleeping Dogs (without AA), however, Iris Pro is hot on the heels of the 750M. I suspect the 750M configuration in the new iMacs is likely even faster as it uses GDDR5 memory instead of DDR3.

It's clear to me that the Haswell SKU Apple chose for the entry-level iMac is, understandably, optimized for cost and not max performance. I would've liked to have seen an option with a high-end R-series SKU, although I understand I'm in the minority there.

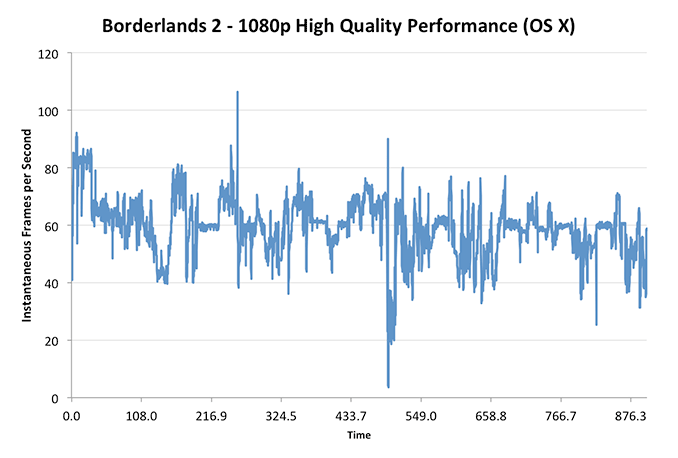

These charts put the Iris Pro’s performance in perspective compared to other dGPUs of note as well as the 15-inch rMBP, but what does that mean for actual playability? I plotted frame rate over time while playing through Borderlands 2 under OS X at 1080p with all quality settings (aside from AA/AF) at their highest. The overall experience running at the iMac’s native resolution was very good:

With the exception of one dip into single digit frame rates (unclear if that was due to some background HDD activity or not), I could play consistently above 30 fps.
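A frame-rate-over-time trace like this one is usually reduced to a handful of summary numbers. The sketch below shows one way to do that from a list of per-frame render times. The function name, the 30 fps target parameter and the sample frame times are all hypothetical and made up for illustration; they are not the measurements from this playthrough.

```python
def summarize_fps(frame_times_ms, target_fps=30.0):
    """Summarize a capture given per-frame render times in milliseconds."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    total_seconds = sum(frame_times_ms) / 1000.0
    # Average fps is frames rendered divided by elapsed time, not the
    # mean of per-frame fps values (which would overweight fast frames).
    average_fps = len(frame_times_ms) / total_seconds
    # The slowest single frame determines the minimum instantaneous fps.
    minimum_fps = 1000.0 / max(frame_times_ms)
    # A frame meets the target if it rendered within the target's budget.
    budget_ms = 1000.0 / target_fps
    on_target = sum(1 for t in frame_times_ms if t <= budget_ms)
    return {
        "average_fps": average_fps,
        "minimum_fps": minimum_fps,
        "share_at_or_above_target": on_target / len(frame_times_ms),
    }


# Hypothetical capture: mostly smooth frames plus one long stall.
sample_frame_times_ms = [25.0, 25.0, 20.0, 125.0, 25.0]
summary = summarize_fps(sample_frame_times_ms)
print(summary)
```

Reporting the minimum alongside the average matters for a trace like the one described here, since a single stall barely moves the average over a long run but is very noticeable during play.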

Using BioShock Infinite I actually had the ability to run some OS X vs. Windows 8 gaming performance numbers:

OS X 10.8.5 vs. Windows Gaming Performance - BioShock Infinite

| | 1366 x 768 Normal Quality | 1600 x 900 High Quality |
|---|---|---|
| OS X 10.8.5 | 29.5 fps | 23.8 fps |
| Windows 8 | 41.9 fps | 23.2 fps |

Unsurprisingly, when we’re not completely GPU bound there’s actually a pretty large performance difference between OS X and Windows gaming performance. I’ve heard some developers complain about this in the past, partly blaming it on a lack of lower level API access as OS X doesn’t support DirectX and must use OpenGL instead. In our mostly GPU bound test however, performance is identical between OS X and Windows - at least in BioShock Infinite.
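To put numbers on that gap, the relative difference can be computed from the BioShock Infinite results in the table above. This is a minimal sketch; the function and variable names are hypothetical, and the only data used are the four frame rates already listed.

```python
# Frame rates copied from the BioShock Infinite table above.
results = {
    "1366 x 768 Normal Quality": {"OS X 10.8.5": 29.5, "Windows 8": 41.9},
    "1600 x 900 High Quality": {"OS X 10.8.5": 23.8, "Windows 8": 23.2},
}


def windows_advantage_pct(osx_fps, win_fps):
    """Percent by which the Windows result exceeds the OS X result."""
    return (win_fps / osx_fps - 1.0) * 100.0


for setting, fps in results.items():
    pct = windows_advantage_pct(fps["OS X 10.8.5"], fps["Windows 8"])
    print(f"{setting}: Windows vs. OS X {pct:+.1f}%")
```

At the lower setting Windows comes out roughly 42% ahead, while at the higher setting the two results sit within about 3% of each other, which is small enough to treat as equivalent for a single run.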

127 Comments


rootheday3 - Monday, October 7, 2013 - link

I don't think this is true. See the die shots here: http://wccftech.com/haswell-die-configurations-int...

I count 8 different die configurations.

Note that the reduction in LLC (CPU L3) on Iris Pro may be because some of the LLC is used to hold tag data for the 128MB of eDRAM. Mainstream Intel CPUs have 2MB of LLC per CPU core, so the die has 8MB of LLC natively. The i7-4770R has all 8MB enabled but 2MB for eDRAM tag ram leaving 6MB for the CPU/GPU to use directly as cache (how it is reported on the spec sheet). The i5s generally have 6MB natively (for either die recovery and/or segmentation reasons) but if 2MB is used for eLLC tag ram, that leaves 4 for direct cache usage.

Given that you get 128MB of eDRAM in exchange for the 2MB LLC consumed as tag ram, seems like a fair trade.

name99 - Monday, October 7, 2013 - link

HT adds a pretty consistent 25% performance boost across an extremely wide variety of benchmarks. 50% is an unrealistic value.

And, for the love of god, please stop with this faux-naive "I do not understand why Intel does ..." crap.

If you do understand the reason, you are wasting everyone's time with your lament.

If you don't understand the reason, go read a fscking book. Price discrimination (and the consequences thereof INCLUDING lower prices at the low end) are hardly deep secret mysteries.

(And the same holds for the "Why oh why do Apple charge so much for RAM upgrades or flash upgrades" crowd. You're welcome to say that you do not believe the extra cost is worth the extra value to YOU --- but don't pretend there's some deep unresolved mystery here that only you have the wit to notice and bring to our attention; AND don't pretend that your particular cost/benefit tradeoff represents the entire world.

And heck, let's be equal opportunity here --- the Windows crowd have their own version of this particular fool, telling us how unfair it is that Windows Super Premium Plus Live Home edition is priced at $30 more than Windows Ultra Extra Pro Family edition.

I imagine there are the equivalent versions of these people complaining about how unfair Amazon S3 pricing is, or the cost of extra Google storage. Always with this same "I do not understand why these companies behave exactly like economic theory predicts; and they try to make a profit in the bargain" idiocy.)

tipoo - Monday, October 7, 2013 - link

Wow, the gaming performance gap between OSX and Windows hasn't narrowed at all. I had hoped, two major OS releases after the Snow Leopard article, it would have gotten better.

tipoo - Monday, October 7, 2013 - link

I wonder if AMD will support OSX with Mantle?

Flunk - Monday, October 7, 2013 - link

Likely not, I don't think they're shipping GCN chips in any Apple products right now.

AlValentyn - Monday, October 7, 2013 - link

Look up Mavericks, it supports OpenGL 4.1, while Mountain Lion is still at 3.2

http://t.co/rzARF6vIbm

Good overall improvements in the Developer Previews alone.

tipoo - Monday, October 7, 2013 - link

ML supports a higher OpenGL spec than Snow Leopard, but that doesn't seem to have helped lessen the real world performance gap.

Sm0kes - Tuesday, October 8, 2013 - link

Got a link with real numbers?

Hrel - Monday, October 7, 2013 - link

The charts show the Iris Pro take a pretty hefty hit any time you increase quality settings. HOWEVER, you're also increasing resolution. I'd be interested to see what happens when you increase resolution but leave detail settings at low-med.

In other words, is the bottleneck the processing power of the GPU (I think it is) or the memory bandwidth? I suspect we could run Mass Effect or something similar at 1080p with medium settings.

Kevin G - Monday, October 7, 2013 - link

"OS X doesn’t seem to acknowledge Crystalwell’s presence, but it’s definitely there and operational (you can tell by looking at the GPU performance results)."

I bet OS X does but not in the GUI. Type the following in terminal:

sysctl -a hw.

There should be a line about the CPU's full cache hierarchy among other cache information.