Memory Scaling on Haswell CPU, IGP and dGPU: DDR3-1333 to DDR3-3000 Tested with G.Skill

by Ian Cutress on September 26, 2013 4:00 PM EST

‘How much does memory speed matter?’ is a question often asked when dealing with mainstream processor lines. Depending on the platform, the answers might very well be different. Similar to our comparisons with Ivy Bridge, today we publish our results for 26 different memory timings across 45 benchmarks, all using a G.Skill memory kit.

In our previous memory scaling article with an Ivy Bridge CPU, testing from DDR3-1333 to DDR3-2400 produced two main findings: (a) the high-end memory kit offered up to a 20% improvement, but (b) this improvement was restricted to certain memory-limited tests. To be more thorough, this article takes a single memory kit, the G.Skill 2x4GB DDR3-3000 12-14-14 1.65V kit, through 26 different combinations of memory speed and CAS latency to see whether one set of timings is a better choice than another. The benchmarks include my standard array of real-world tests, some of which are memory limited, as well as several games on IGP, single-GPU and multi-GPU setups, recording both average and minimum frame rates.

The Problem with Memory Speed

As mentioned in the Ivy Bridge memory scaling article, one of the main issues with reporting memory speeds is the omission of the CAS Latency, or tCL. When a user purchases memory, it comes as a number of sticks, each of a certain size, memory speed, set of subtimings and voltage. In order of importance, the factors that matter are:

1. Amount of memory

2. Number of sticks of memory

3. Placement of those sticks in the motherboard

4. The MHz of the memory

5. If XMP/AMP is enabled

6. The subtimings of the memory

I use this order on the basis that each point takes priority over the points that follow it:

- A system will be slow due to lack of memory before the speed of the memory is an issue (point 1)

- To take advantage of all of the CPU's memory channels, the number of sticks must be a multiple of the number of channels (point 2), known as dual channel/tri channel/quad channel operation.

- To ensure dual (or tri/quad) channel operation, these sticks need to be in the right slots on the motherboard (point 3): most motherboards support two DIMM slots per channel, and we need at least one memory stick in each channel.

- If the MHz of the memory is higher than the CPU is rated for (1333, 1600, 1866+), the user needs to enable XMP/AMP to benefit from the additional speed (points 4 and 5). Otherwise the system will run at the CPU defaults.

- Subtimings, such as tCL, are used in conjunction with the MHz to provide the overall picture when it comes to performance (point 6).
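As a rough illustration of why point 2 matters, here is a minimal sketch of theoretical peak bandwidth, assuming the standard 64-bit (8-byte) width of a DDR3 channel and ignoring all real-world overheads. The function name is ours, for illustration only; measured bandwidth will always be lower than these figures.

```python
# Rough sketch: theoretical peak DDR3 bandwidth.
# Assumes each channel is 64 bits (8 bytes) wide; real throughput is lower.
BYTES_PER_TRANSFER = 8


def peak_bandwidth_gbs(data_rate_mts: float, channels: int) -> float:
    """Peak bandwidth in GB/s (1 GB = 1000 MB) for a data rate and channel count."""
    return data_rate_mts * BYTES_PER_TRANSFER * channels / 1000


for rate in (1600, 3000):
    for channels in (1, 2):
        print(f"DDR3-{rate}, {channels} channel(s): {peak_bandwidth_gbs(rate, channels):.1f} GB/s")
```

Populating only one channel halves the theoretical peak (12.8 GB/s instead of 25.6 GB/s at DDR3-1600), which is why the number and placement of sticks rank above MHz in the list.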

A user can go out and buy two memory kits, both DDR3-2400, but in reality (as shown in this review) they can perform differently and carry different prices. The difference lies in the sub-timings of each kit: one might be 9-11-10 (2400 C9), and the other 11-11-11 (2400 C11). So whenever someone boasts about a particular memory speed, ask for the subtimings.
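Because CL is counted in memory clock cycles, and the DDR3 clock runs at half the data rate, a kit's first-word CAS latency in nanoseconds is CL × 2000 / data rate (MT/s). This minimal sketch compares the two DDR3-2400 kits above, plus the 3000 C12 kit under test; it deliberately ignores tRCD and tRP, which also add latency whenever a new page has to be opened.

```python
def cas_latency_ns(data_rate_mts: float, cl: int) -> float:
    """First-word CAS latency in ns.

    DDR3 transfers twice per clock, so one clock cycle lasts
    2000 / data_rate_mts nanoseconds; CL is counted in those cycles.
    """
    return cl * 2000 / data_rate_mts


kits = [("2400 C9", 2400, 9), ("2400 C11", 2400, 11), ("3000 C12", 3000, 12)]
for name, rate, cl in kits:
    print(f"{name}: {cas_latency_ns(rate, cl):.2f} ns")
```

This prints 7.50 ns, 9.17 ns and 8.00 ns respectively: at the same MHz, the C9 kit answers a CAS request roughly 1.7 ns sooner than the C11 kit.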

G.Skill DDR3-3000 C12 2x4GB Memory Kit: F3-3000C12D-8GTXDG

For this review, G.Skill supplied us with a pair of DDR3 modules from their TridentX range, rated at DDR3-3000. This is at the absolute high end of memory kits, with very few kits going faster in terms of MHz. Of course, leading this MHz race comes at a price premium: $690 for 8 GB. This kit uses single-sided Hynix MFR ICs, known for reaching high MHz, and while each stick has large heat-spreaders, these can be removed, reducing the height from 5.4 cm to 3.9 cm.

Hynix MFR-based memory kits are used by extreme overclockers to hit high MHz numbers. Recently, YoungPro from Australia took one of these memory sticks to DDR3-4400 (13-31-31 sub-timings), reaching #1 in the world in pure MHz.

Test Setup

| Test Setup | |
| --- | --- |
| Processor | Intel Core i7-4770K Retail @ 4.0 GHz (4 Cores, 8 Threads, 3.5 GHz base, 3.9 GHz Turbo) |
| Motherboards | ASRock Z87 OC Formula/AC |
| Cooling | Corsair H80i, Intel Stock Cooler (pre-testing) |
| Power Supply | Corsair AX1200i Platinum PSU |
| Memory | G.Skill TridentX 2x4 GB DDR3-3000 12-14-14 Kit |
| Memory Settings | 1333 C7 to XMP (3000 12-14-14) |
| Discrete Video Cards | AMD HD5970, AMD HD5870 |
| Video Drivers | Catalyst 13.6 |
| Hard Drive | OCZ Vertex 3 256GB |
| Optical Drive | LG GH22NS50 |
| Case | Open Test Bed |
| Operating System | Windows 7 64-bit |
| USB 3 Testing | OCZ Vertex 3 240GB with SATA->USB Adaptor |

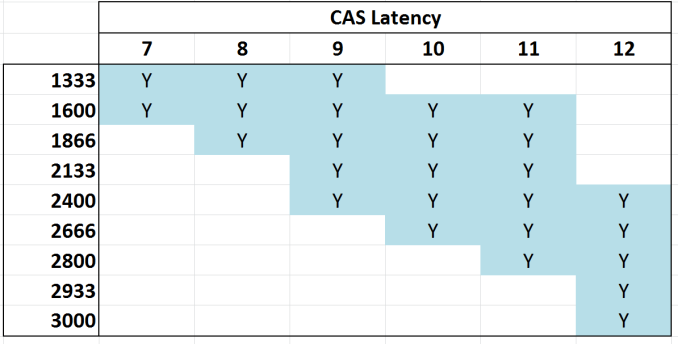

With this test setup, we are using the BIOS to set the following combinations of MHz and subtimings:

Almost all of these combinations are available for purchase. For each combination of MHz and CAS, we first try that CAS value for tRCD and tRP as well, e.g. 2400 9-9-9 1T at 1.65 volts. If this setting is unstable, we move to 9-10-9, 9-10-10, then 9-11-10 and so on until the combination is stable.
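The loosening procedure can be sketched as a simple search. This is a hypothetical illustration: `is_stable` stands in for whatever stability testing is actually performed, the alternating tRCD/tRP pattern is extrapolated from the 9-9-9, 9-10-9, 9-10-10, 9-11-10 example, and the cap of six extra cycles is an arbitrary assumption.

```python
from typing import Callable, Iterator, Optional, Tuple

Timings = Tuple[int, int, int]  # (tCL, tRCD, tRP)


def candidate_timings(cl: int, max_extra: int = 6) -> Iterator[Timings]:
    """Yield CL-CL-CL, then alternately loosen tRCD and tRP by one cycle.

    Assumed pattern, extrapolated from 9-9-9 -> 9-10-9 -> 9-10-10 -> 9-11-10.
    """
    trcd, trp = cl, cl
    yield (cl, trcd, trp)
    for step in range(2 * max_extra):
        if step % 2 == 0:
            trcd += 1  # loosen tRCD first
        else:
            trp += 1   # then catch tRP up
        yield (cl, trcd, trp)


def find_stable(cl: int, is_stable: Callable[[Timings], bool]) -> Optional[Timings]:
    """Return the first candidate accepted by the hypothetical stability check."""
    for timings in candidate_timings(cl):
        if is_stable(timings):
            return timings
    return None  # nothing stable within the assumed cap


# Usage with a fake predicate that only passes once tRCD reaches 11:
print(find_stable(9, lambda t: t[1] >= 11))  # (9, 11, 10)
```

Keeping CL fixed and only relaxing the secondary timings means each tested point still matches the "MHz plus CAS" label it is reported under.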

There is an odd twist when dealing with DDR3-3000. Because Haswell does not offer a DDR3-3000 memory strap, we have to use the DDR3-2933 strap and raise the base clock (BCLK) to 102.3 MHz. Since the BCLK also drives the CPU, this gives a slight advantage in CPU throughput when using DDR3-3000, which does come through in several benchmarks. To keep things even, we reduce the CPU multiplier for 3000 C12 so that the overall system speed stays close to 4.0 GHz, albeit with a slight BCLK advantage remaining.
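The arithmetic behind this workaround can be sketched as follows, assuming the memory data rate and the core clock both scale linearly with BCLK from the 100 MHz default, and treating the strap as a nominal 2933 MT/s. The article does not state which multiplier was used; 39x is simply the nearest whole multiplier to 4.0 GHz at this BCLK, and the function names are illustrative.

```python
def effective_data_rate(strap_mts: float, bclk_mhz: float) -> float:
    """Memory data rate, assuming it scales linearly with BCLK (100 MHz default)."""
    return strap_mts * bclk_mhz / 100.0


def multiplier_for_target(target_core_mhz: float, bclk_mhz: float) -> int:
    """Nearest whole CPU multiplier that keeps the core clock close to the target."""
    return round(target_core_mhz / bclk_mhz)


bclk = 102.3
print(f"Memory: {effective_data_rate(2933, bclk):.0f} MT/s")  # DDR3-2933 strap at 102.3 MHz
mult = multiplier_for_target(4000, bclk)
print(f"CPU: {mult} x {bclk} MHz = {mult * bclk:.1f} MHz")
```

At the stock 40x multiplier the core would run at 4092 MHz; dropping to 39x gives 3989.7 MHz, which keeps the core clock near 4.0 GHz while the memory sits at roughly 3000 MT/s.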

At the time of testing, DDR3-3000 C12 was the highest-MHz memory kit available, but DDR3-3100 C12 kits have since reached the market, pushing prices even higher, to $1000 for 8 GB. The problem at these speeds is that the BCLK overclock needed to reach them also overclocks the CPU, which skews the performance results in favor of the high-end kit.

Benchmarks

For this test, we use the following real world and compute benchmarks:

CPU Real World:

- WinRAR 4.2

- FastStone Image Viewer

- Xilisoft Video Converter

- x264 HD Benchmark 4.0

- TrueCrypt v7.1a AES

- USB 3.0 MaxCPU Copy Test

CPU Compute:

- 3D Particle Movement, Single Threaded and MultiThreaded

- SystemCompute ‘2D Explicit’

- SystemCompute ‘3D Explicit’

- SystemCompute nBody

- SystemCompute 2D Implicit

IGP Compute:

- SystemCompute ‘2D Explicit’

- SystemCompute ‘3D Explicit’

- SystemCompute nBody

- SystemCompute MatrixMultiplication

- SystemCompute 3D Particle Movement

For what should be obvious reasons, there is no point in running synthetic tests when dealing with memory. A synthetic test will tell you if the peak speed or latency is higher or lower – that is not a number that necessarily translates into the real world unless you can detect the type and size of all the memory accesses used within a real world environment. The real world is more complex than a simple boost in memory read/write peak speeds.

For each of the 3D benchmarks, we use an ASUS HD 6950 (flashed to HD 6970) for the single-GPU tests, the HD 4600 in the CPU for IGP, and an HD 5970 + HD 5870 for a lopsided tri-GPU test.

Gaming:

- Dirt 3, Avg and Min FPS, 1360x768

- Bioshock Infinite, Avg and Min FPS, 1360x768

- Tomb Raider, Avg and Min FPS, 1360x768

- Sleeping Dogs, Avg and Min FPS, 1360x768

Firstly, I want to go through enabling XMP in the BIOS of all the major vendors.

89 Comments


Rob94hawk - Friday, September 27, 2013

Avoid DDR3 1600 and spend more for that 1 extra fps? No thanks. I'll stick with my DDR3 1600 @ 9-9-9-24 and I'll keep my Haswell overclocked at 4.7 GHz, which is giving me more fps.

Wwhat - Friday, September 27, 2013

I have RAM that has an XMP profile, but I did NOT enable it in the BIOS, the reason being that although it runs faster, it jumps to 2T and ups the voltage to 1.65v from the default 1.5v, apart from the other latencies going up of course.

Now, 2T is known to not be a great plan if you can avoid it.

So instead I simply tweak the settings to my own needs, because, contrary to this article's suggestion, you can (and overclockers will) set them manually instead of only having the options SPD or XMP.

The difference is that you need to do some testing to see what is stable, which can be quite different from the advised values in the settings chip.

So it's silly to ridicule people for not being some uninformed type with no idea except allowing the SPD/XMP to tell them what to do.

Hrel - Friday, September 27, 2013

Not done yet, but so far it seems 1866 CL 9 is the sweet spot for bang/buck.

I'd also like to add that I absolutely LOVE that you guys do this kind of in depth analyses. Remember when one of you did the PSU review? Actually going over how much the motherboard pulled at idle and load, same for memory on a per DIMM basis. CPU, everything, hdd, add in cards. I still have the specs saved for reference. That info is getting pretty old though, things have changed quite a bit since back then; when the northbridge was still on the motherboard :P

Hint Hint ;)

repoman27 - Friday, September 27, 2013

Ian, any chance you could post the sub-timings you ended up using for each of the tested speeds?

If you're looking at mostly sequential workloads, then CL is indicative of overall latency, but once the workloads become more random / less sequential, tRCD and tRP start to play a much larger role. If what you list as 2933 CL12 is using 12-14-14, then page-empty or page-miss accesses are going to look a lot more like CL13 or CL14 in terms of actual ns spent servicing the requests.

Also, was CMD consistent throughout the tests, or are some timings using 1T and others 2T?

There's a lot of good data in this article, but I constantly struggle with seeing the correlation between real world performance, memory bandwidth, and memory latency. I get the feeling that most scenarios are not bound by bandwidth alone, and that reducing the latency and improving the consistency of random accesses pays bigger dividends once you're above a certain bandwidth threshold. I also made the following chart, somewhat along the lines of those in the article, in order to better visualize what the various CAS latencies look like at different frequencies: http://i.imgur.com/lPveITx.png Of course real world tests don't follow the simple curves of my chart because the latency penalties of various types of accesses are not dictated solely by CL, and enthusiast memory kits are rarely set to timings such as n-n-n-3*n-1T where the latency would scale more consistently.

Wwhat - Sunday, September 29, 2013

Good comment I must say, and interesting chart.

Peroxyde - Friday, September 27, 2013

"#2 Number of sticks of memory"

Can you please clarify? What should be that number? The highest possible? For example, to get 16GB, what is the best sticks combination to recommend? Thanks for any help.

erple2 - Sunday, September 29, 2013

I think that if you have a dual channel memory controller and have a single DIMM, then you should fill up the controller with a second memory stick first.

malphadour - Sunday, September 29, 2013

Peroxyde, Haswell uses a dual channel controller, so in theory (and in some benchmarks I have seen) 2 sticks of 8GB RAM would give the same performance as 4 sticks of 4GB RAM. So go with the 2 sticks, as this lets you fit more RAM in the future should you want to, without having to throw away old sticks. You could also get 1 16GB stick of RAM, and benchmarks I have seen suggest that there is only about a 5% decrease in performance, though for the tiny saving in cost you might as well go dual channel.

lemonadesoda - Saturday, September 28, 2013

I'm reading the benchmarks. And what I see is that in 99% of tests the gains are technical and only measurable to the third significant digit. That means they make no practical noticeable difference. The money is better spent on a different part of the system.

faster - Saturday, September 28, 2013

This is a great article. This is valuable, useful, and practical information for the system builders on this site. Thank you!