Memory Scaling on Haswell CPU, IGP and dGPU: DDR3-1333 to DDR3-3000 Tested with G.Skill

by Ian Cutress on September 26, 2013 4:00 PM ESTOne side I like to exploit on CPUs is the ability to compute and whether a variety of mathematical loads can stress the system in a way that real-world usage might not. For these benchmarks we are ones developed for testing MP servers and workstation systems back in early 2013, such as grid solvers and Brownian motion code. Please head over to the first of such reviews where the mathematics and small snippets of code are available.

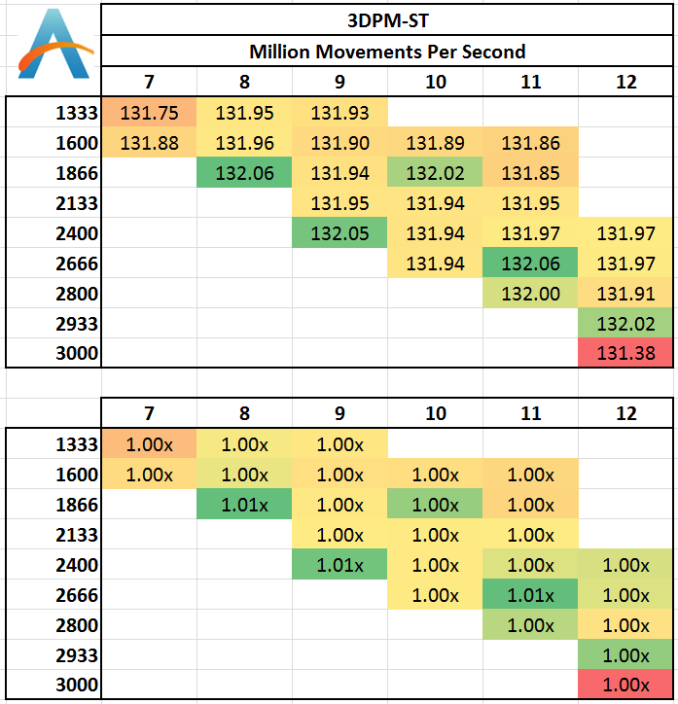

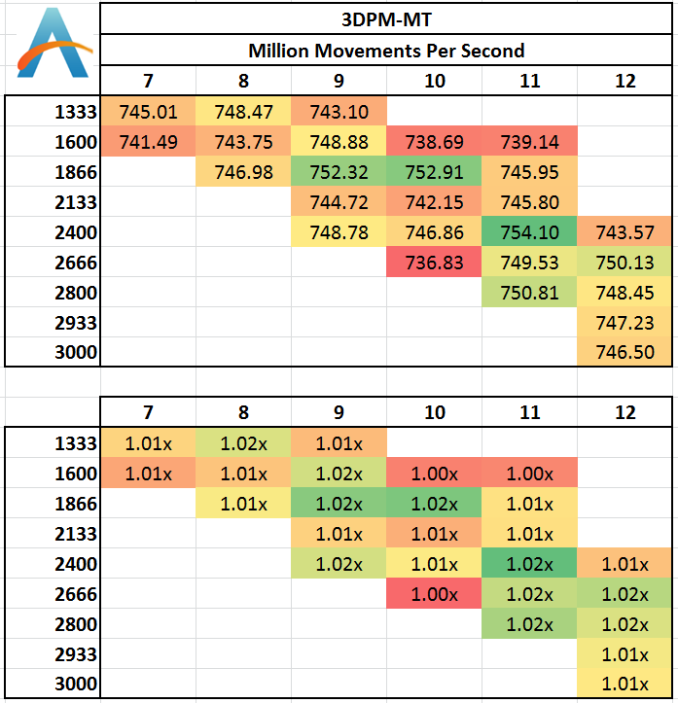

3D Movement Algorithm Test

The algorithms in 3DPM employ uniform random number generation or normal distribution random number generation, and vary in various amounts of trigonometric operations, conditional statements, generation and rejection, fused operations, etc. The benchmark runs through six algorithms for a specified number of particles and steps, and calculates the speed of each algorithm, then sums them all for a final score. This is an example of a real world situation that a computational scientist may find themselves in, rather than a pure synthetic benchmark. The benchmark is also parallel between particles simulated, and we test the single thread performance as well as the multi-threaded performance. Results are expressed in millions of particles moved per second, and a higher number is better.

Single threaded results:

For software that deals with a particle movement at once then discards it, there are very few memory accesses that go beyond the caches into the main DRAM. As a result, we see little differentiation between the memory kits, except perhaps a loose automatic setting with 3000 C12 causing a small decline.

Multi-Threaded:

With all the cores loaded, the caches should be more stressed with data to hold, although in the 3DPM-MT test we see less than a 2% difference in the results and no correlation that would suggest a direction of consistent increase.

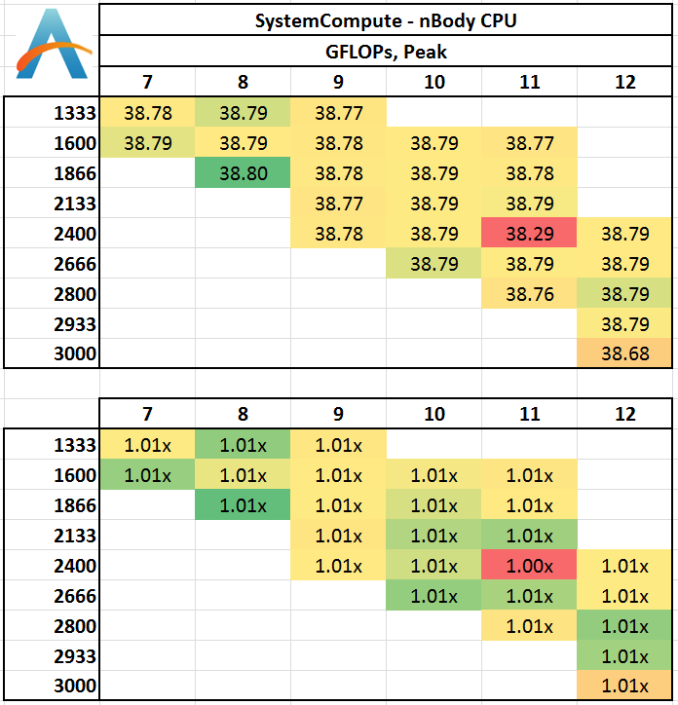

N-Body Simulation

When a series of heavy mass elements are in space, they interact with each other through the force of gravity. Thus when a star cluster forms, the interaction of every large mass with every other large mass defines the speed at which these elements approach each other. When dealing with millions and billions of stars on such a large scale, the movement of each of these stars can be simulated through the physical theorems that describe the interactions. The benchmark detects whether the processor is SSE2 or SSE4 capable, and implements the relative code. We run a simulation of 10240 particles of equal mass - the output for this code is in terms of GFLOPs, and the result recorded was the peak GFLOPs value.

Despite co-interaction of many particles, the fact that a simulation of this scale can hold them all in caches between time steps means that memory has no effect on the simulation.

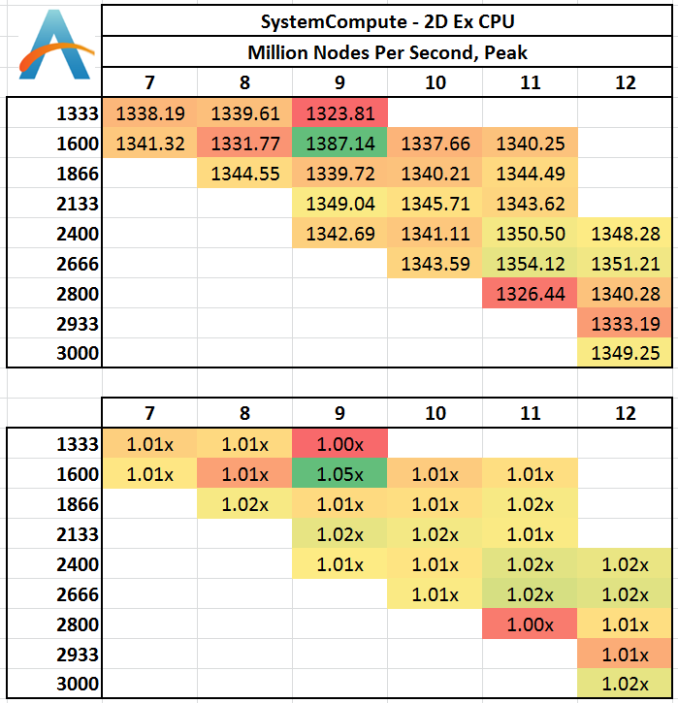

Grid Solvers - Explicit Finite Difference

For any grid of regular nodes, the simplest way to calculate the next time step is to use the values of those around it. This makes for easy mathematics and parallel simulation, as each node calculated is only dependent on the previous time step, not the nodes around it on the current calculated time step. By choosing a regular grid, we reduce the levels of memory access required for irregular grids. We test both 2D and 3D explicit finite difference simulations with 2n nodes in each dimension, using OpenMP as the threading operator in single precision. The grid is isotropic and the boundary conditions are sinks. We iterate through a series of grid sizes, and results are shown in terms of ‘million nodes per second’ where the peak value is given in the results – higher is better.

Two-Dimensional Grid:

In 2D we get a small bump over at 1600 C9 in terms of calculation speed, with all other results being fairly equal. This would statistically be an outlier, although the result seemed repeatable.

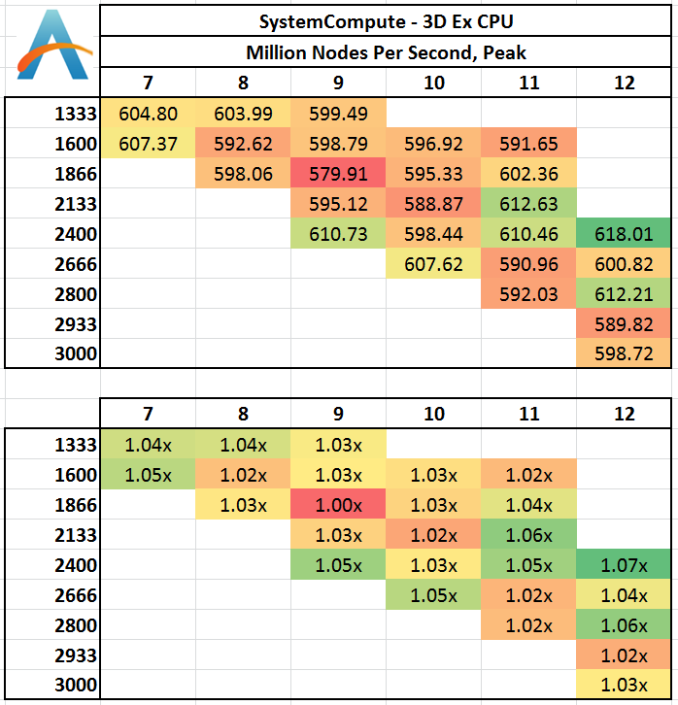

Three Dimensions:

In three dimensions, the memory jumps required to access new rows of the simulation are far greater, resulting in L3 cache misses and accesses into main memory when the simulation is large enough. At this boundary it seems that low CAS latencies work well, as do memory speeds > 2400 MHz. 2400 C12 seems a surprising result.

Grid Solvers - Implicit Finite Difference + Alternating Direction Implicit Method

The implicit method takes a different approach to the explicit method – instead of considering one unknown in the new time step to be calculated from known elements in the previous time step, we consider that an old point can influence several new points by way of simultaneous equations. This adds to the complexity of the simulation – the grid of nodes is solved as a series of rows and columns rather than points, reducing the parallel nature of the simulation by a dimension and drastically increasing the memory requirements of each thread. The upside, as noted above, is the less stringent stability rules related to time steps and grid spacing. For this we simulate a 2D grid of 2n nodes in each dimension, using OpenMP in single precision. Again our grid is isotropic with the boundaries acting as sinks. We iterate through a series of grid sizes, and results are shown in terms of ‘million nodes per second’ where the peak value is given in the results – higher is better.

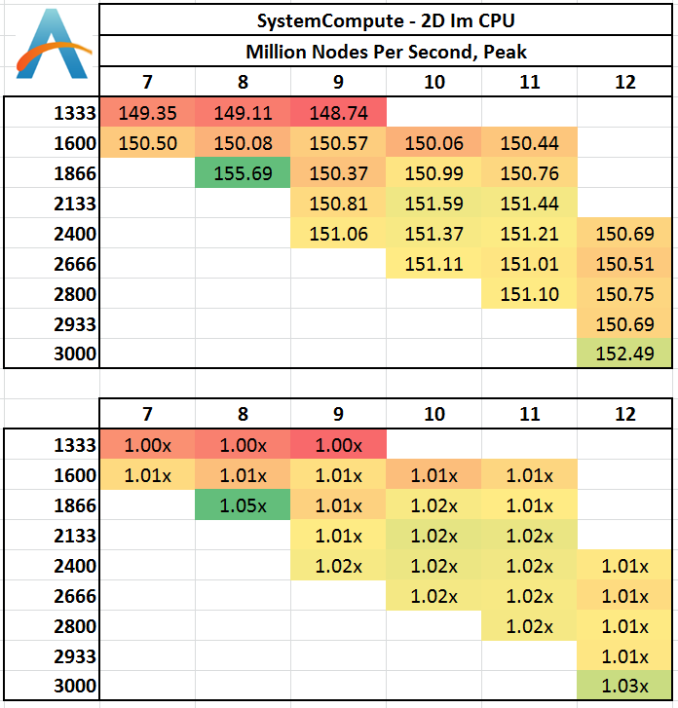

2D Implicit:

Despite the nature if implicit calculations, it would seem that as long as 1333 MHz is avoided, results are fairly similar. 1866 C8 being a surprise outlier.

89 Comments

View All Comments

Nagorak - Thursday, September 26, 2013 - link

Why did memory prices fluctuate so much since the end of last year and now? The Hynix fire looks to have next to no impact, but the price of memory has nearly doubled since last November/December.aryonoco - Thursday, September 26, 2013 - link

Ian, I do not want to disparage your work, please take this as nothing but constructive criticism. You do amazing work and the wealth of technical expertise is very clear in your articles.But you have a terrible writing style. There are so many sentences in your articles that while technically grammatically correct, are the most awkward ways of saying what you mean. Take a very simple sentence: "In terms of real world usage, on our Haswell platform, there are some recommendations to be made." There are so many ways, much simpler, much cleaner, much shorter to say what you said in that sentence.

I really struggle with your writing style. I know journalism isn't really your day job and you have a lot of important things to attend to, but please, if you care about this side job of yours as a technical writer, being technical is only half the story. Please consider improving your writing style to make it more readable.

Bob Todd - Friday, September 27, 2013 - link

I'd wager most of the readers didn't struggle as mightily as you. If you want to critique another's wordsmithery, you might want to find a classier way to do it. Our first exhibit will be sentence one of paragraph two. Surely you could have strung together a couple of words that got your point across without sounding like an ass?Impulses - Friday, September 27, 2013 - link

Yeah, I'm pretty sure that starting a sentence with a But is something you'd typically avoid...Dustin Sklavos - Friday, September 27, 2013 - link

"You have a terrible writing style."Constructive!

How would you like it if someone came to your place of business and told you "Look, I don't mean to disparage your work, but it makes my cat's hair fall out in clumps."

ingwe - Friday, September 27, 2013 - link

Yep. This definitely wasn't constructive.Ian, I don't see anything wrong with your writing, and I would rather you concentrate on getting articles out than on spending lots of extra time on editing your work.

jaded1592 - Sunday, September 29, 2013 - link

Your first sentence is grammatically incorrect. Stones and glass houses...Sivar - Thursday, September 26, 2013 - link

What a great article. Tons of actual data, and the numerous charts weren't stupidly saved as JPG. I love Anandtech.soccerballtux - Thursday, September 26, 2013 - link

so bandwidth starved apps with predictable data requests (h264 p1) really like it but when the CPU has enough data to crunch (winrar) the lower real-world latency time in seconds is worth having.gandergray - Thursday, September 26, 2013 - link

Ian: Thank you for the excellent article. You provide in depth and thorough analysis. Your article will undoubtedly serve as a frequently referenced guide.