Choosing a Gaming CPU October 2013: i7-4960X, i5-4670K, Nehalem and Intel Update

by Ian Cutress on October 3, 2013 10:05 AM EST

The i5-4670K vs i7-4770K Dilemma

The big debate on gaming CPUs always circles around to how many cores a game uses, and whether they are sufficiently utilized to matter. Some users are concerned if a title does not use all the CPU cores, while others would prefer that the CPU is a minimal part of the equation when work can be offloaded onto the GPU. So here is a question:

Do you prefer:

- a game that uses the CPU as much as possible such that the CPU can be a bottleneck, or

- a game that offloads most of the CPU work to the GPU thus making the GPU the primary bottleneck?

I am firmly in the latter camp and like to think that the latter is the result of game optimization, and that some users would focus budgets on GPUs if that is their primary concern for a system. Of course you can have your cake and eat it too with a hex-core system, as long as the wallet stretches.

No matter how much philosophical mumbo-jumbo you want to throw at ‘the ideal situation’, reality always comes back to the question ‘but what should I get today?’. There are plenty of forum posts regarding processor recommendations, especially when it comes to Intel’s flagship mainstream processor, the i7-4770K, and its modified counterpart, the i5-4670K. Whether the hyperthreading of the i7-4770K provides a boost in games over the i5-4670K is a question I wanted to answer, given that the price difference between these processors is around $100 at Newegg today and that money might be better spent on a GPU.

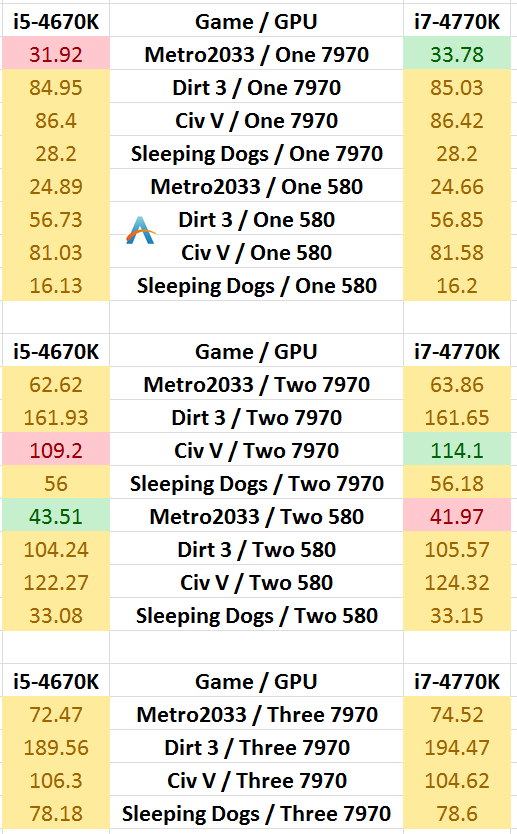

Here is a table comparing all our results with both CPUs in an x8/x8 + x4 motherboard, with a ‘win’ going to the side that has a +3.5% FPS advantage:

In direct comparison, only two benchmarks showed more than a 3.5% FPS advantage for the 4770K, and one actually favored the 4670K.

So in terms of answering the question, for our benchmarks, it would seem that the i5-4670K is the more cost effective choice in buying a Haswell processor.
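The win rule used for that comparison is simple enough to sketch. The snippet below is a minimal illustration, assuming a 'win' means one CPU's average FPS exceeds the other's by more than 3.5%; the function name `classify` and the frame rates in the usage lines are hypothetical placeholders, not our measured data.

```python
WIN_THRESHOLD = 0.035  # a 'win' needs more than a 3.5% FPS advantage


def classify(fps_4770k: float, fps_4670k: float,
             threshold: float = WIN_THRESHOLD) -> str:
    """Return the CPU that 'wins' a benchmark, or 'tie' if neither
    leads by more than the threshold."""
    if fps_4770k > fps_4670k * (1 + threshold):
        return "i7-4770K"
    if fps_4670k > fps_4770k * (1 + threshold):
        return "i5-4670K"
    return "tie"


# Hypothetical frame rates for illustration only (not results from this article).
print(classify(60.0, 59.0))    # lead of about 1.7%, inside the threshold
print(classify(104.0, 100.0))  # lead of 4.0%, outside the threshold
```

With a threshold like this, small run-to-run differences are counted as ties rather than wins, which is why most benchmarks end up undecided between the two chips.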

Nehalem Can Still Be Strong, But Update Soon

Getting a chance to cover a range of Nehalem CPUs was a goal since the first testing started for Part 1, and it is clear to see why performance platforms have that particular name. If you invested in an i7-920, like I did, and are lucky enough to run a nice D0 stepping CPU, then some bases are covered on the single and dual GPU front, although there are some holes where Nehalem is clearly not with the leading pack of CPUs.

Of course with socket 1366 CPUs there are some compromises. The motherboards with these CPUs do not have PCIe 3.0 (which is shown to look important in multi-GPU setups), nor do they have native USB 3.0 / SATA 6 Gbps, meaning you’ll be scrounging around for mid-performing controllers at best. Features such as Thunderbolt are but a wish unless you are willing to upgrade.

The most direct comparison for us is the i7-950 to the i7-4770K – here we have two processors both quad core with hyperthreading, with the 4770K taking a small MHz lead and a large IPC lead. Going back through our benchmark scenarios, the 4770K needs a PLX board to support some nice 3- and 4-way GPU setups, but there is a clear CPU advantage on the side of Haswell. The triple channel memory support of Nehalem was a big plus point when it was launched, but as we can now kit out our dual channel mainstream platforms with 2400 C10 memory with relative ease (or more if you are that way inclined), that memory bandwidth advantage is smaller.

If you were lucky/rich enough to jump on the extreme end of Westmere (i7-980, i7-980X or i7-990X), then having that hex-core system will keep a small advantage in multithreaded tests over the top performing Haswell solution (PovRay on 4770K = 1612.68, on i7-990X = 1636.40). The IPC advantage that Haswell comes with shows itself to be useful in most multi-GPU setups, whereas for our other single and some dual GPU benchmarks the performance difference between the two processors is almost negligible. When you hit three-way GPU configurations, it is all about the lane counts.
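As a quick check on how small that multithreaded advantage is, the gap can be worked out from the two PovRay scores quoted above. This is only an arithmetic sketch; the helper name `percent_lead` is my own.

```python
povray_4770k = 1612.68  # PovRay score quoted above for the i7-4770K
povray_990x = 1636.40   # PovRay score quoted above for the i7-990X


def percent_lead(a: float, b: float) -> float:
    """Percentage by which score a exceeds score b."""
    return (a - b) / b * 100.0


lead = percent_lead(povray_990x, povray_4770k)
print(f"i7-990X lead over i7-4770K: {lead:.2f}%")
```

That works out to a lead of roughly 1.5% for the hex-core part.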

Investing in the i7-4960X

Plunging in at the high end is always expensive. These are the high margin parts that the manufacturers want to promote the virtues of such that users might invest lower down the product stack. When going for more cores, more MHz and a strong IPC contender, the CPU benchmark results are plain to see for anyone needing cores and grunt.

The downside of the i7-4960X is going to be the chipset – we still have X79 on hand, and even when paired with a motherboard refresh there are some limitations that motherboard manufacturers cannot escape. Now that Haswell/Z87 offers a full complement of SATA 6 Gbps and native USB 3.0, the equivalent functionality on X79 has to come via extra controllers. The big upside in the extreme end is the lane allocation, which has some benefits in gaming.

Across our benchmark range, the i7-4960X and i7-4770K are similar in results, where single and dual GPU results are on par with each other across the board. When we start moving into tri-GPU setups, there are several things to consider:

In general:

- the x8/x8 + x4 PCIe allocation on Z87 is bad

- the x8/x4/x4 PCIe allocation on Z87 fares better

- having a PLX chip on a Z87 for x16/x8/x8 is best, but this is more expensive

- You don’t have to worry about this with an i7-4960X

- But going Ivy Bridge-E is more expensive to begin with

The i7-4960X takes the top spot on Dirt 3 tri-GPU, Civ5 tri-GPU and Sleeping Dogs tri-GPU, suggesting that if you want the absolute best frame rates with more than two GPUs, then the i7-4960X is your answer. However, the i7-4770K with a PLX-enabled motherboard will give results almost as good as the i7-4960X (often within 1-2%) for a lower CPU cost.

Next Update: Part 3

As mentioned in the early parts of this article, our next update will focus solely on the AMD midrange. A few of our partners have kindly volunteered processors for testing, as well as a small call to AMD for a few of the major ones and a quick scout on eBay for the less expensive models. As you can imagine, that is quite a list to choose from, but needs must as the devil desires. For sure the A10-6800K and similar processors will be included in as many GPU configurations as possible.

Recommendations for the Games Tested at 1440p/Max Settings

A CPU for Single GPU Gaming:

Intel: i5-4430

AMD: A8-5600K + Core Parking updates

If I were gaming today on a single GPU, the A8-5600K (or non-K equivalent) would strike me as a price competitive choice for frame rates, as long as you are not a big Civilization V player and do not mind the single threaded performance. The A8-5600K scores within a percentage point or two across the board in single GPU frame rates with both a HD7970 and a GTX580, and feels the same in the OS as an equivalent Intel CPU. The A8-5600K will also overclock a little, giving a boost, and comes in at a stout $110, meaning that some of those $$$ can go towards a beefier GPU or an SSD. The only downside is if you are planning some heavy OS work – if the software is Piledriver-aware, all is well, although most processing is not, and perhaps an i3-3225 or FX-8350 might be worth a look.

It is possible to consider the non-IGP versions of the A8-5600K, such as the FX-4xxx variant or the Athlon X4 750K. But as we have not had these chips in to test, it would be unethical to suggest them without having data to back them up. Watch this space, we have processors in the list to test.

A CPU for Dual GPU Gaming:

Intel: i5-4430 / i5-4670K

AMD: FX-8350 + Core Parking Updates

Based on our benchmarks, it again comes down to if you are a Civilization V type gamer, or if the engine your game is based on is similar to Civ5. If the answer is no, then the i5-4430 performs within low single digit % numbers of our top performers, and the FX-8350 puts up a reasonable showing. If the answer is yes, then anything short of the i5-4670K means that performance is being lost.

Looking back through the results, moving to a dual GPU setup obviously has some issues. Various AMD platforms are not certified for dual NVIDIA cards for example, meaning while they may excel for AMD, you cannot recommend them for team Green. There is also the dilemma that while in certain games you can be fairly GPU limited (Metro 2033, Sleeping Dogs), there are others where having the CPU horsepower can double the frame rate (Civilization V).

After the overview, my recommendation for dual GPU gaming comes in at the feet of the i5-4430 and the i5-4670K, depending on your CPU workloads. The price difference between these two processors is around $40, and for that extra we do get an overclockable CPU as well.

A CPU for Tri-GPU Gaming:

i5-4670K with an x8/x4/x4 (AMD) or PLX (NVIDIA) motherboard

By moving up in GPU power we also have to boost the CPU power in order to see the best scaling at 1440p. The CPUs in our testing that provide the top frame rates at this level are the top line Ivy Bridge and Haswell models. For a comparison point, the Sandy/Ivy Bridge-E 6-core results were often very similar, but the price jump to such a setup is prohibitive to all but the most sturdy of wallets. Of course we would suggest Haswell over Ivy Bridge based on Haswell being the newer platform.

As noted in the introduction, using 3-way on NVIDIA with Ivy Bridge will require a PLX motherboard in order to get enough lanes to satisfy the SLI requirement of x8 minimum per GPU. This also raises the bar in terms of price, as PLX motherboards start around the $280 mark. For a 3-way AMD setup, an x8/x4/x4 enabled motherboard performs similarly to a PLX enabled one, and ahead of the slightly crippled x8/x8 + x4 variations. However, investing in a PLX board would help when moving to a 4-way setup, should that be your intended goal.
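The lane requirement can be expressed as a quick check against the three tri-GPU layouts discussed in this article. The sketch below is a simplification under stated assumptions: it only compares slot widths against the x8 SLI minimum and ignores PCIe generation and whether the lanes come from the CPU or the PCH. The function names `parse_lanes` and `meets_minimum` are hypothetical helpers for illustration.

```python
import re
from typing import List

SLI_MIN_LANES = 8  # SLI needs at least x8 on each card's slot, as noted above


def parse_lanes(layout: str) -> List[int]:
    """Extract per-slot lane widths from a string such as 'x8/x8 + x4'."""
    return [int(n) for n in re.findall(r"x(\d+)", layout)]


def meets_minimum(layout: str, min_lanes: int = SLI_MIN_LANES) -> bool:
    """True if every slot in the layout has at least min_lanes lanes."""
    lanes = parse_lanes(layout)
    return bool(lanes) and min(lanes) >= min_lanes


for layout in ("x8/x8 + x4", "x8/x4/x4", "x16/x8/x8"):
    verdict = "meets x8 per slot" if meets_minimum(layout) else "below x8 on at least one slot"
    print(f"{layout}: {verdict}")
```

Only the x16/x8/x8 layout, which on Z87 needs a PLX chip, clears the x8 bar on every slot, which is why the NVIDIA recommendation points at PLX boards while the AMD one can use x8/x4/x4.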

A CPU for Quad-GPU Gaming:

i5-4670K with a PLX motherboard

While our fourth GPU for this update was unfortunately in need of repair, by extension of the tri-GPU results we should say at this point that as long as the game title scales, we need at least the CPU recommendation for the tri-GPU setups in order to make sure the frame rates are in the top echelons. There are a couple of Haswell Z87 motherboards that offer an odd x8/x4/x4 + x4 PCIe lane allocation, although given our tri-GPU results using that PCIe 2.0 x4 from the PCH, I would not be too confident in seeing anything spectacular from those results. We see in our tri-GPU testing that the PLX chip has a positive effect which will only ever be boosted by adding GPUs. For the wallets that open wider than most, the socket 2011 processors are also at your beck and call.

But even still, a four-way GPU configuration is for those few users that have both the money and the physical requirement for pixel power. We are all aware of the law of diminishing returns, and more often than not adding that fourth GPU is taking the biscuit for most resolutions. Despite this, even at 1440p, we see awesome scaling in games like Sleeping Dogs (+73% of a single card moving from three to four cards) and more recently I have seen that four-way GTX680s help give BF3 in Ultra settings a healthy 35 FPS minimum on a 4K monitor. So while four-way setups are insane, there is clearly a usage scenario where it matters to have card number four.
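For clarity on how a scaling figure like that can be read, here is a minimal sketch. It assumes the gain from the extra card is expressed as a percentage of single-card performance, which is one reading of the number quoted above; the function name `added_scaling` and the frame rates are hypothetical and chosen only for illustration.

```python
def added_scaling(fps_single: float, fps_before: float, fps_after: float) -> float:
    """Gain from adding one more card, as a percentage of single-card FPS."""
    return (fps_after - fps_before) / fps_single * 100.0


# Hypothetical frame rates (not measured results): one card, three cards, four cards.
single, three_way, four_way = 20.0, 50.0, 64.0
gain = added_scaling(single, three_way, four_way)
print(f"Fourth card adds {gain:.0f}% of a single card's performance")
```

A value of 100% would mean the extra card contributes as much as the first card did on its own, so anything well above 50% for card number four is strong scaling.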

Next on the Horizon: AMD, Dual Core Haswell, 2014 Updates

By the end of the year, it would make sense to cycle back around to the AMD platforms we have not tested in their entirety, including CPUs like the A10-6800K and the non-IGP oriented Athlon X4 750K. The stack of CPUs under $150 is larger than I originally thought, varying in CPU speed, cores and cache levels. We have a number in for testing which should provide a few interesting data points.

At the beginning of September, Intel formally put on sale the dual core Haswell CPUs that are now populating e-tailers. I would like to get a few in to bolster the area around the i3-3225 which is looking a little forlorn. Samples and prices dependent, I would also like to take a few of these in due course – either this year or beginning of next.

Leading on to next year, I am planning an update to our testing following recommendations from our readers. This includes a driver update (to the latest WHQL), hopefully an update on our NVIDIA GPU side to something around the GTX 760+, and also a game update to coincide with more relevant titles. At present we are looking at Company of Heroes 2, Bioshock Infinite, F1 2012/2013, Tomb Raider, and Sleeping Dogs again. We will stick at 1440p for 2014, and also aim to report minimum frame rates.

If you have any suggestions for our Gaming CPU 2014 update, please forward them on to ian@anandtech.com!

137 Comments

BrightCandle - Thursday, October 3, 2013 - link

So again we see tests with games that are known not to scale with more CPU cores. There are games however that show clear benefits, your site simply doesn't test them. Its not universally true that more cores or HT make a difference but maybe it would be a good idea to focus on those games we know do benefit like Metro last light, Hitman absolution, Medal of honour warfighter and some areas of Crysis 3.

The problem here is that its the games that support more multithreading, so to give true impression you need to test a much wider and modern set of games. To do otherwise is pretty misleading.

althaz - Thursday, October 3, 2013 - link

To test only those games would be more misleading, as the vast majority of games are barely multithreaded at all.

erple2 - Thursday, October 3, 2013 - link

Honestly, if the stats for single GPU's weren't all at about the same level, this would be an issue. It isn't until you get to multiple GPU's - an area that you start to see some differentiation. But that level begins to become very expensive very quickly. I'd posit that if you're already into multiple high-end video cards, the price difference between dual and quad core is relatively insignificant anyway, so the point is moot.

Pheesh - Thursday, October 3, 2013 - link

appreciate the review, but it seems like the choice of games and settings makes the results primarily reflect a GPU constrained situation (1440p max settings for a CPU test?). It would be nice to see some of the newer engines which utilize more cores as most people will be buying CPU for titles in the future. I'm personally more interested in the delta between the CPU's when in CPU bound situations. Early benchmarks of next gen engines have shown larger differences between 8 threads vs 4 threads.

cbrownx88 - Friday, October 4, 2013 - link

amen

TheJian - Sunday, October 6, 2013 - link

Precisely. Also only 2% of us even own 1440p monitors and I'm guessing the super small % of us in a terrible economy that have say $550 to blow on a PC (the price of the FIRST 1440p monitor model you'd actually recognize the name of on newegg-asus model (122reviews) – and the only one with more than 12reviews) would buy anything BUT a monitor that would probably require 2 vid cards to fully utilize anyway. Raise your hand if you're planning on buying a $550 monitor instead of say, buying a near top end maxwell next year? I see no hands. Since 98% of us are on 1920x1200 or LESS (and more to the point a good 60% are less than 1920x1080), I'm guessing we are all planning on buying either a top vid card, or if you're in the 60% or so that have UNDER 1080p, you'll buy a $100-200 monitor (1080p upgrade to 22in-24in) and a $350-450 vid card to max out your game play.

Translation: These results affect less than 2% of us and are pointless for another few years at the very least. I'm planning on buying a 1440p monitor but LONG after I get my maxwell. The vid card improves almost everything I'll do in games. The monitor only works well if I have the VID CARD muscle ALREADY. Most people of the super small 2% running 1440p or up have two vid cards to push the monitors (whatever they have). I don't want to buy a monitor and say "oh crap, all my games got super slow" for the next few years (1440p for me is a purchase once a name brand is $400 at 27in – only $150 away…LOL). I refuse to run anything but native and won't turn stuff off. I don't see the point in buying a beautiful monitor if I have to turn in into crap to get higher fps anyway... :)

Who is this article for? Start writing articles for 98% of your readers, not 2%. Also you'll find the cpu's are far more important where that 98% is running as fewer games are gpu bound. I find it almost stupid to recommend AMD these days for cpus and that stupidity grows even more as vid cards get faster. So basically if you are running 1080p and plan to for a while look at the cpu separation on the triple cards and consider that what you'll see as cpu results. If you want a good indication of what I mean, see the first or second 1440p article here and CTRL-F my nick. I listed all the games previously that part like the red sea leaving AMD cpus in the dust (it's quite a bit longer than CIV 5...ROFL). I gave links to the benchmarks showing all those games.

http://www.anandtech.com/comments/6985/choosing-a-...

There's the comments section on the 2nd 1440p article for the lazy people :)

Note that even here in this article TWO of the games aren't playable on single cards...LOL. 34fps avg in metro 2033 means you'll be hitting low 20's or worse MINIMUM. Sleeping dogs is already under 30fps AVG, so not even playable at avg fps let alone MIN fps you will hit (again TEENS probably). So if you buy that fancy new $550+ monitor (because only a retard or a gambler buys a $350 korean job from ebay etc...LOL) get used to slide shows and stutter gaming for even SINGLE 7970's in a lot of games never mind everything below sucking even more. Raise your hand if you have money for a $550 monitor AND a second vid card...ROFL. And these imaginary people this article is for, apparently should buy a $110 CPU from AMD to pair with this setup...ROFLMAO.

REALISTIC Recommendations:

Buy a GPU first.

Buy a great CPU second (and don't bother with AMD unless you're absolutely broke).

Buy that 1440p monitor if your single card is above 7970 already or you're planning shortly on maxwell or some such card. As we move to unreal 4 engine, cryengine 3.5 (or cryengine 4th gen…whatever) etc next year, get ready to feel the pain of that 1440p monitor even more if you're not above 7970. So again, this article should be considered largely irrelevant for most people unless you can fork over for the top end cards AND that monitor they test here. On top of this, as soon as you tell me you have the cash for both of those, what they heck are you doing talking $100 AMD cpus?...LOL.

And for AMD gpu lovers like the whole anandtech team it seems...Where's the NV portal site? (I love the gpus, just not their drivers):

http://hothardware.com/News/Origin-PC-Ditching-AMD...

Origin AND Valve have abandoned AMD even in the face of new gpus. Origin spells it right out exactly as we already know:

"Wasielewski offered a further clarifying statement from Alvaro Masis, one of Origin’s technical support managers, who said, “Primarily the overall issues have been stability of the cards, overheating, performance, scaling, and the amount of time to receive new drivers on both desktop and mobile GPUs.”

http://www.pcworld.com/article/2052184/whats-behin...

More data, nearly twice the failure rate at another vendor confirming why the first probably dropped AMD. I’d call a 5% rate bad, never mind AMD’s 1yr rate of nearly 8% failure (and nearly 9% over 3yrs). Cutting RMAs nearly in half certainly saves a company some money, never mind all the driver issues AMD still has and has had for 2yrs. A person adds a monitor and calls tech support about AMD eyefinity right? If they add a gpu they call about crossfire next?...ROFL. I hope AMD starts putting more effort into drivers, or the hardware sucks no matter how good the silicon is. As a boutique vendor at the high end surely the crossfire and multi-monitor situation affects them more than most who don't even ship high end stuff really (read:overly expensive...heh)

Note one of the games here performs worse with 3 cards than 2. So I guess even anandtech accidentally shows AMD's drivers still suck for triple's. 12 cpus in civ5 post above 107fps with 2 7970's, but only 5 can do over 107fps with 3 cards...Talk about going backwards. These tests, while wasted on 98% of us, should have at the least been done with the GPU maker who has properly functioning drivers WITH 2 or 3 CARDS :)

CrispySilicon - Thursday, October 3, 2013 - link

What gives Anand?

I may be a little biased here since I'm still rocking a Q6600 (albeit fairly OC'd). But with all the other high end platforms you used, why not use a DDR3 X48/P45 for S775?

I say this because NOBODY who reads this article would still be running a mobo that old with pcie 1.1, especially in multi-gpu configuration.

dishayu - Friday, October 4, 2013 - link

I share you opinion on the matter, although I'm myself still running a Q6600 on a MSI P965 Platinum with an AMD HD6670. :P

ThomasS31 - Thursday, October 3, 2013 - link

Please also add Battlefield 4 to the game tests in the next update(s)/2014.

I think it will be very relevant, based on the beta experience.

tackle70 - Thursday, October 3, 2013 - link

I know you dealt with this criticism in the intro, and I understand the reasoning (consistency, repeatability, etc) but I'm going to criticize anyways...

These CPU results are to me fairly insignificant and not worth the many hours of testing, given that the majority of cases where CPU muscle is important are multiplayer (BF3/Crysis 3/BF4/etc). As you can see even from your benchmark data, these single player scenarios just don't really care about CPU all that much - even in multi-GPU. That's COMPLETELY different in the multiplayer games above.

Pretty much the only single player game I'm aware of that will eat up CPU power is Crysis 3. That game should at least be added to this test suite, in my opinion. I know it has no built in benchmark, but it would at least serve as a point of contact between the world of single player CPU-agnostic GPU-bound tests like these and the world of CPU-hungry multiplayer gaming.