Samsung SSD 840 EVO Review: 120GB, 250GB, 500GB, 750GB & 1TB Models Tested

by Anand Lal Shimpi on July 25, 2013 1:53 PM EST

Posted in: Storage, SSDs, Samsung, TLC, Samsung SSD 840

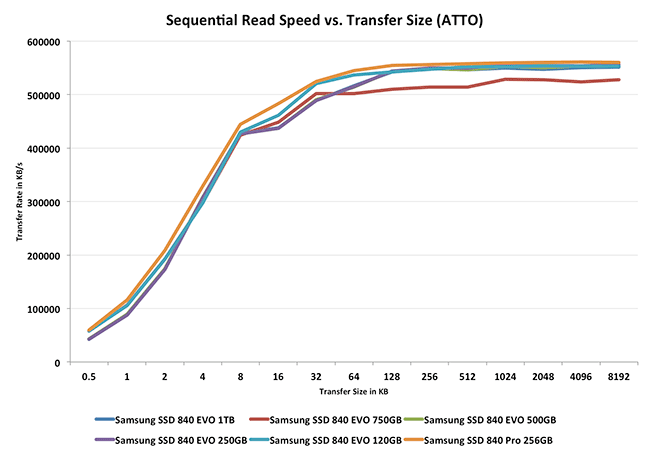

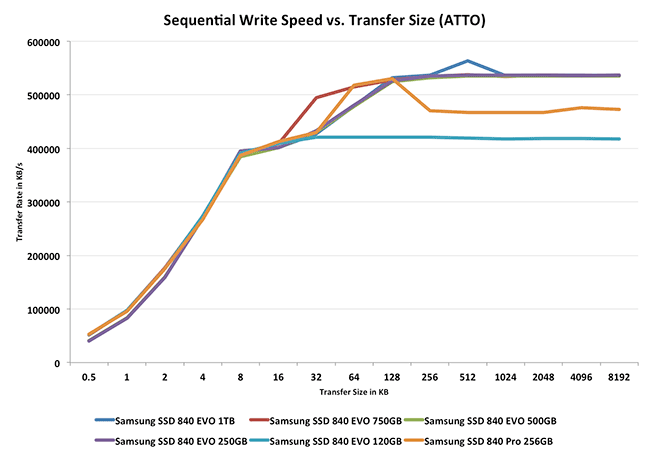

Performance vs. Transfer Size

ATTO is a useful tool for quickly measuring the impact of transfer size on performance. You can get the complete data set in Bench.
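As a rough illustration of what a transfer size sweep measures, here is a minimal Python sketch. It is not how ATTO works internally: the function name `sweep_transfer_sizes` and its parameters are made up for this example, and the writes go through the operating system's cache, so the numbers it prints are not comparable to the results in this review.

```python
import os
import tempfile
import time


def sweep_transfer_sizes(sizes, total_bytes=4 * 1024 * 1024):
    """Return {transfer_size: MB/s} for sequential writes of about total_bytes."""
    results = {}
    for size in sizes:
        payload = os.urandom(size)  # one buffer of the requested transfer size
        fd, path = tempfile.mkstemp()
        try:
            start = time.perf_counter()
            written = 0
            while written < total_bytes:
                written += os.write(fd, payload)
            os.fsync(fd)  # ask the OS to flush to the device before stopping the clock
            elapsed = max(time.perf_counter() - start, 1e-9)
        finally:
            os.close(fd)
            os.remove(path)
        results[size] = written / elapsed / 1e6
    return results


if __name__ == "__main__":
    for size, mbps in sweep_transfer_sizes([512, 4096, 65536, 1048576]).items():
        print(f"{size:>8} B  {mbps:10.1f} MB/s")
```

Small transfers pay a fixed per-request cost many more times for the same amount of data, which is why throughput typically climbs as the transfer size grows.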

I pointed this out in the 4KB random write section, but Samsung continues to do a great job of dealing with low queue depth transfers on the EVO. Performance is consistently great across all of the EVO capacity points. There's also no difference between the EVO's behavior here and the 840 Pro.

137 Comments


ervinshiznit - Thursday, July 25, 2013 - link

Typo? On the TurboWrite page you say "For most light use cases I can see TurboWrite being a great way to deliver more of an MLC experience but on a TLC drive." It should be "deliver more of an SLC experience but on a TLC drive."

ciri - Sunday, July 28, 2013 - link

SLC>MLC>TLC

Guspaz - Thursday, July 25, 2013 - link

The fact that RAPID sees any performance improvement at all illustrates to me a failure of the operating system's disk caching subsystem. That's all that RAPID really is, after all: a replacement for the Windows disk cache.

I'd be curious to see the performance results of RAPID compared to the disk caching subsystems on other platforms, such as Linux and ZFS (which even on Linux has its own cache called the "ARC"). Are the large improvements because Windows disk caching is particularly bad, or because RAPID is a better implementation than anybody else's?

themelon - Thursday, July 25, 2013 - link

Windows is absolutely horrible at filesystem caching and I don't think it does any sort of block caching. It seems to use more of a FIFO algorithm that has no sequential write bypass no matter what you do. ZFS and the two block device caches that were recently integrated into the Linux kernel, bcache and dm-cache, use more of an LRU method. All of them have at least basic sequential bypass detection as well. bcache in particular is tuneable to your load in almost all aspects of performance. Of course these are only block-side caches and currently have no filesystem-specific knowledge.

There is some interesting work going on to track hot spots that will eventually allow for preemptive cache warming and/or hot relocation. Right now it is BTRFS specific but it is being integrated below the filesystem layer so any filesystem will eventually be able to take advantage of it.

ZFS on Linux is a waste of time in my opinion. ZFS's L2ARC and SLOG are great but limited by what I feel are architectural flaws in ZFS itself. I used to love ZFS but the Linux kernel block stack has caught up to it in features and still offers all of the flexibility that it always has.
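The difference between a FIFO cache and an LRU cache with sequential bypass, as described in the comment above, can be shown with a toy simulation. This is only a sketch: the class names, the `bypass_after` threshold and the block numbers are invented for illustration and do not reflect how Windows, bcache, dm-cache or ZFS are implemented.

```python
from collections import OrderedDict


class FifoCache:
    """Evicts the oldest inserted block; a hit does not refresh its position."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.blocks = OrderedDict()

    def access(self, block):
        if block in self.blocks:
            return True
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)  # drop the oldest insertion
        self.blocks[block] = None
        return False


class LruBypassCache:
    """LRU eviction, and stops caching once a sequential run gets long."""

    def __init__(self, capacity, bypass_after=4):
        self.capacity = capacity
        self.bypass_after = bypass_after  # hypothetical threshold, in blocks
        self.blocks = OrderedDict()
        self.last_block = None
        self.run_length = 0

    def access(self, block):
        # Track the length of the current run of consecutive block numbers.
        if self.last_block is not None and block == self.last_block + 1:
            self.run_length += 1
        else:
            self.run_length = 1
        self.last_block = block

        if block in self.blocks:
            self.blocks.move_to_end(block)  # mark as most recently used
            return True
        if self.run_length > self.bypass_after:
            return False  # streaming access: do not pollute the cache
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)  # drop the least recently used
        self.blocks[block] = None
        return False


def hits_after_scan(cache, hot, scan):
    """Warm the cache with hot blocks, run one long scan, then count hot hits."""
    for block in hot:
        cache.access(block)
    for block in scan:
        cache.access(block)
    return sum(cache.access(block) for block in hot)


if __name__ == "__main__":
    hot, scan = [0, 10, 20, 30], range(100, 164)
    print(hits_after_scan(FifoCache(8), hot, scan))       # prints 0
    print(hits_after_scan(LruBypassCache(8), hot, scan))  # prints 4
```

In this toy workload the long sequential scan flushes every hot block out of the FIFO cache, while the bypassing cache stops admitting scan blocks after the first few and keeps the hot set resident.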

aicom - Friday, July 26, 2013 - link

Windows' cache system is better than you give it credit for. It does support sequential bypass (see the FILE_FLAG_SEQUENTIAL_SCAN flag). It works with filesystem drivers through the Cc* APIs in the kernel. It also supports caching files over a network, even with other clients modifying the files. It does standard read-ahead and write-behind and is supplemented by an adaptive prefetcher (SuperFetch).

The reason we're seeing such huge gains is that the programs being tested explicitly ask NOT to be cached. The whole point is to test the drive, so they pass FILE_FLAG_NO_BUFFERING to disable caching on the files being accessed.
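For readers who have not used these flags, here is a hedged Python sketch of how a program might request them when opening a file. The flag values are the ones defined in the Win32 headers and `CreateFileW` is the real Win32 call, but the helper names `create_file_flags` and `open_for_read` are hypothetical, and this is not taken from any benchmark tool's source.

```python
import sys

# Constant values as defined in the Win32 headers.
GENERIC_READ = 0x80000000
FILE_SHARE_READ = 0x00000001
OPEN_EXISTING = 3
FILE_FLAG_NO_BUFFERING = 0x20000000     # bypass the system cache
FILE_FLAG_SEQUENTIAL_SCAN = 0x08000000  # hint: access will be sequential


def create_file_flags(bypass_cache=False, sequential_hint=False):
    """Build the dwFlagsAndAttributes value for CreateFileW."""
    flags = 0
    if bypass_cache:
        flags |= FILE_FLAG_NO_BUFFERING
    if sequential_hint:
        flags |= FILE_FLAG_SEQUENTIAL_SCAN
    return flags


def open_for_read(path, **options):
    """Windows only: open an existing file via CreateFileW with the chosen flags."""
    if sys.platform != "win32":
        raise OSError("CreateFileW is only available on Windows")
    import ctypes
    from ctypes import wintypes

    kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)
    kernel32.CreateFileW.argtypes = [
        wintypes.LPCWSTR, wintypes.DWORD, wintypes.DWORD, wintypes.LPVOID,
        wintypes.DWORD, wintypes.DWORD, wintypes.HANDLE,
    ]
    kernel32.CreateFileW.restype = wintypes.HANDLE
    handle = kernel32.CreateFileW(
        path, GENERIC_READ, FILE_SHARE_READ, None,
        OPEN_EXISTING, create_file_flags(**options), None,
    )
    # INVALID_HANDLE_VALUE is -1 interpreted as a pointer-sized value.
    if handle is None or handle == wintypes.HANDLE(-1).value:
        raise ctypes.WinError(ctypes.get_last_error())
    return handle
```

A benchmark would call something like `open_for_read(path, bypass_cache=True)`, while an ordinary application streaming a large file might pass `sequential_hint=True` instead. Note that reads on a handle opened with FILE_FLAG_NO_BUFFERING must use sector-aligned buffers, offsets and sizes, which this sketch does not show.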

MrSpadge - Saturday, July 27, 2013 - link

Excellent post!

Timur Born - Sunday, July 28, 2013 - link

The question still arises: why does the Anand Storage Bench benefit from RAPID? Is it because ASB also asks for the Windows cache to be bypassed, is it because the Windows cache flushes parts of its pages every second, or does RAPID communicate with the drive (firmware) at a more fundamental level that allows further optimizations?

watersb - Friday, July 26, 2013 - link

Excellent points. I stick with ZFS because I trust it (after many hardware failures but no data loss) and because it is cross-platform.

Mac HFS does "hot relocation", I believe. And NTFS has always tried to keep hot files in the middle of the disk in order to reduce hard disk seek times. So maybe I don't understand what is meant by hot relocation.

piroroadkill - Thursday, July 25, 2013 - link

I agree. I'm pretty sure Windows' own disk caching is terrible. It's pretty poor even on the server side. They really need to work on that shit.

tincmulc - Thursday, July 25, 2013 - link

How is RAPID any better than SuperCache or FancyCache? Not only do they do the same thing, but they can also be configured to use more RAM or to use OS-invisible memory (a 32-bit OS with more than 3GB of RAM), and they work for any drive, even HDDs.