ASUS PQ321Q UltraHD Monitor Review: Living with a 31.5-inch 4K Desktop Display

by Chris Heinonen on July 23, 2013 9:01 AM EST

Since the ASUS has a pair of HDMI inputs, but there is effectively no 4K HDMI content right now, the performance of the internal scaler is essential to know. To test it, I use an Oppo BDP-105 Blu-ray player and the Spears and Munsil HD Benchmark, Version 2. The Oppo has its own 4K scaler so I can easily compare the two and see how the ASUS performs.

First off, the ASUS is poor when it comes to video processing. Common film and video cadences of 3:2 and 2:2 are not detected and deinterlaced correctly. The wedge patterns are full of artifacts and never lock on. Strangely, the ASUS passed the scrolling text test for video over film even though it fails the wedges. It also does a poor job with diagonals, showing very little if any filtering on them and producing lots of jaggies.
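For readers unfamiliar with cadences, here is a minimal sketch of what 3:2 and 2:2 mean. It only models how often each source frame is repeated in the output, and ignores top/bottom field parity. The function name `pulldown` and the frame labels are illustrative assumptions, not anything from the test disc or the monitor's firmware.

```python
from itertools import cycle

def pulldown(frames, cadence):
    """Repeat each source frame according to a repeating cadence, e.g. (3, 2)."""
    fields = []
    for frame, repeat in zip(frames, cycle(cadence)):
        fields.extend([frame] * repeat)
    return fields

film = ["A", "B", "C", "D"]

# 3:2 cadence: frames alternate between 3 and 2 fields each,
# so 4 film frames become 10 fields (24 frames become 60).
print(pulldown(film, (3, 2)))

# 2:2 cadence: every frame is shown for exactly 2 fields.
print(pulldown(film, (2, 2)))
```

A video processor that locks onto the cadence can work out which fields came from the same original frame and weave them back together; one that misses it has to fall back on cruder deinterlacing, which is where the artifacts in the wedge patterns come from.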

Spears and Munsil also has a 1080p scaling pattern to test 4K and higher resolution devices. Compared to the Oppo, the ASUS scaler showed a bit more ringing, but the two were pretty comparable. This becomes very important for watching films or playing video games, as you’ll need to send a 1080p signal to get a 60p frame rate. 24p films will be fine, but concerts, some TV shows and some documentaries are 60i and would then appear choppy if sent at 4K over HDMI.
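As a rough check on why 60p means dropping to 1080p over HDMI, the sketch below compares the pixel clock of a few modes against the link limit. It assumes the HDMI inputs are HDMI 1.4-class with a 340 MHz maximum TMDS clock (the HDMI version is not stated above) and uses the standard CTA-861 total timings, blanking included. The names are illustrative.

```python
# Assumption: HDMI 1.4-class input with a 340 MHz maximum TMDS clock.
HDMI_1_4_MAX_TMDS_MHZ = 340.0

def pixel_clock_mhz(h_total, v_total, refresh_hz):
    """Pixel clock in MHz for a mode, using total (active + blanking) timings."""
    return h_total * v_total * refresh_hz / 1e6

# (total width, total height, refresh rate) per standard CTA-861 timings
modes = {
    "1920x1080 @ 60": (2200, 1125, 60),
    "3840x2160 @ 30": (4400, 2250, 30),
    "3840x2160 @ 60": (4400, 2250, 60),
}

for name, timing in modes.items():
    clock = pixel_clock_mhz(*timing)
    verdict = "fits" if clock <= HDMI_1_4_MAX_TMDS_MHZ else "exceeds the limit"
    print(f"{name}: {clock:.1f} MHz, {verdict}")
```

Under those assumptions, 4K at 30 Hz fits on the link while 4K at 60 Hz does not, so 60p content has to be sent at 1080p and scaled by the display.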

Brightness and Contrast

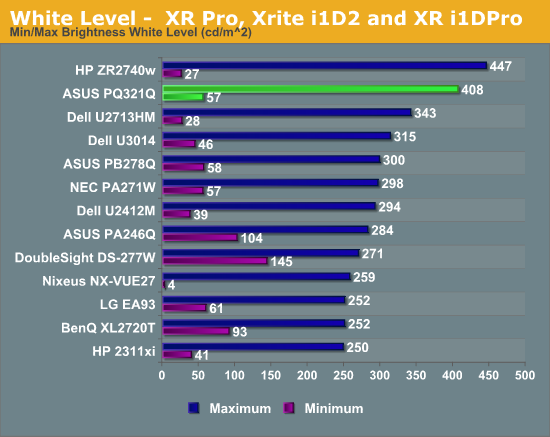

In our preview of the PQ321Q, we looked at how it performed out of the box with the default settings. What we did see is that the PQ321Q can get really, really bright. Cranked up to the maximum I see 408 cd/m2 of light from it. That is plenty no matter how bright an office environment you might work in. At the very bottom of the brightness setting you still get 57 cd/m2. That is low enough that if you are using it for print work or something else in a darkened room, the brightness won’t overwhelm you.

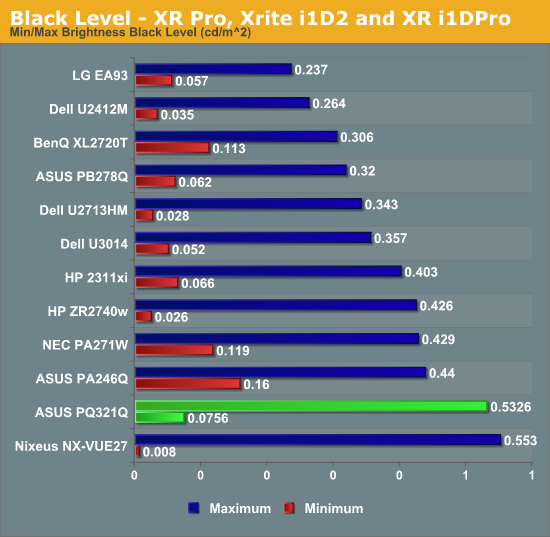

The change to IGZO caused me to wonder how the black levels would behave on the ASUS. If energy flows far more freely, would a slight bit of leakage lead to a higher black level? Or would the overall current be scaled down so that the contrast ratio remains constant?

I’m not certain what the reason is, but the black level of the PQ321Q is a bit higher than I’d like to see. It is 0.0756 cd/m2 at the lowest level and 0.5326 cd/m2 at the highest level. Even with the massive light output of the ASUS that is a bit high.

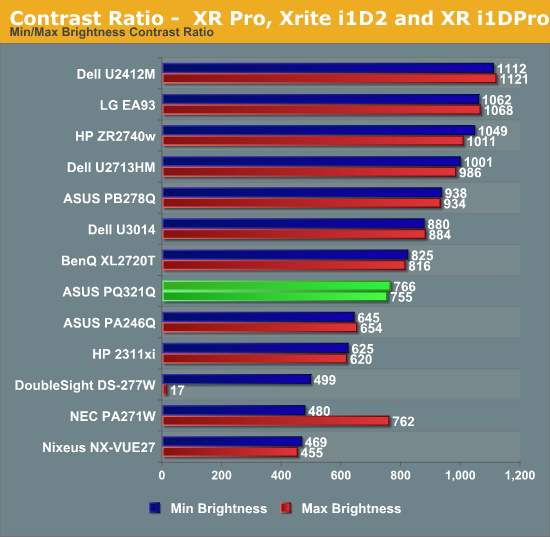

Because of this higher black level, we see Contrast Ratios of 755:1 and 766:1 on the ASUS PQ321Q. These are decent, middle-of-the-pack numbers. I really like to see 1,000:1 or higher, especially when we are being asked to spend $3,500 on a display. Without another IGZO display or 4K display to compare the ASUS to, I can’t be certain if one of those is the cause, or if it is the backlighting system, or something else entirely. I just think we could see improvements in the black level and contrast ratio here.
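The contrast ratio is simply the white level divided by the black level. The short sketch below reproduces the maximum-brightness figure from the measurements quoted above, and back-computes the black level implied at minimum brightness by the reported 755:1 ratio. The function names are illustrative, and the inputs are the rounded numbers from this page rather than raw meter readings.

```python
def contrast_ratio(white_cd_m2, black_cd_m2):
    """Contrast ratio = white luminance / black luminance."""
    return white_cd_m2 / black_cd_m2

def implied_black_level(white_cd_m2, ratio):
    """Black level (cd/m2) implied by a white level and a contrast ratio."""
    return white_cd_m2 / ratio

# Maximum backlight: 408 cd/m2 white, 0.5326 cd/m2 black
print(round(contrast_ratio(408, 0.5326)))      # 766

# Minimum backlight: 57 cd/m2 white at the reported 755:1
print(f"{implied_black_level(57, 755):.4f}")   # 0.0755

# Black level needed at maximum brightness to reach 1,000:1
print(f"{implied_black_level(408, 1000):.3f}")  # 0.408
```

In other words, hitting 1,000:1 at full brightness would take a black level of roughly 0.41 cd/m2 instead of the measured 0.53 cd/m2.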

166 Comments

msahni - Tuesday, July 23, 2013 - link

very costly..... hope these displays become mainstream soon.... Higher resolution/ppi does make a big difference, at least for people using their computers all day...

Even when I jumped from a 1366x768 laptop to a 1920x1080 laptop and then to a rMBP the difference is truly there.... Once you go to the higher resolution working on the lesser one really is a pain...

Cheers

airmantharp - Tuesday, July 23, 2013 - link

The cost is IGZO; 4k panels cost only slightly more than current panels when using other panel types like IPS, VA or PLS.

Death666Angel - Tuesday, July 23, 2013 - link

And where can I buy monitors with the panels you speak of? I'd like a 4k monitor for about 800 €, maybe even 1000 € (I paid 570 for my Samsung 27" 1440p, so that seems fair if the panels only cost slightly more)....

airmantharp - Tuesday, July 23, 2013 - link

Look up Seiko, they're all over the place. 30Hz only at 4k for now, but that's an electronics limitation; the panels are good for 120Hz.

Gunbuster - Monday, July 29, 2013 - link

Seiki

sheh - Tuesday, July 23, 2013 - link

Why does the response time graph show no input lag for the monitor?

Can it accept 10-bit input? Does 10-bit content look any better than 8-bit?

"Common film and video cadences of 3:2 and 2:2 are not properly picked up upon and deinterlaced correctly."

Why expect a computer monitor to have video-specific processing logic?

cheinonen - Tuesday, July 23, 2013 - link

Because I had to change from SMTT (which shows input lag and response time) as our license expired and they're no longer selling new licenses. The Leo Bodnar shows the overall lag, but can't break it up into two separate numbers.

It can accept 10-bit, but I have nothing that uses 10-bit data as I don't use Adobe Creative Suite or anything else that supports it.

The ASUS has a video mode, with a full CMS, to go with the dual HDMI inputs. Since that would indicate they expect some video use for it, testing for 2:2 and 3:2 cadence is fair IMO.

sheh - Wednesday, July 24, 2013 - link

Thanks.

Alas. It'd be interesting to know the lag breakdown. If most is input lag, there's hope for better firmware. :)

Are 10-bit panels usually true 10-bit or 8-bit with temporal dithering?

DanNeely - Thursday, July 25, 2013 - link

some of the current generation high end 2560x1600/1440 panels are 14bit internally and have a programmable LUT to do in monitor calibration instead of at the OS level. (The latter is an inherently lossy operation; the former is much less likely to be.)

mert165 - Tuesday, July 23, 2013 - link

I'd like to know how a Retina MacBook Pro and a new MacBook Air hold up to the 4k display. The Verge com a while back published a demo and the results were not spectacular. Although in their demo they didn't go into depth as to WHY the results were so poor (weak video card, bad DisplayPort drivers, other???)

Could you connect up the new Haswell MacBook Air to see performance?

Thanks!