Intel Iris Pro 5200 Graphics Review: Core i7-4950HQ Tested

by Anand Lal Shimpi on June 1, 2013 10:01 AM EST

Compute Performance

With Haswell, Intel enables full OpenCL 1.2 support in addition to DirectX 11.1 and OpenGL 4.0. Given the ALU-heavy GPU architecture, I was eager to find out how well Iris Pro did in our compute suite.

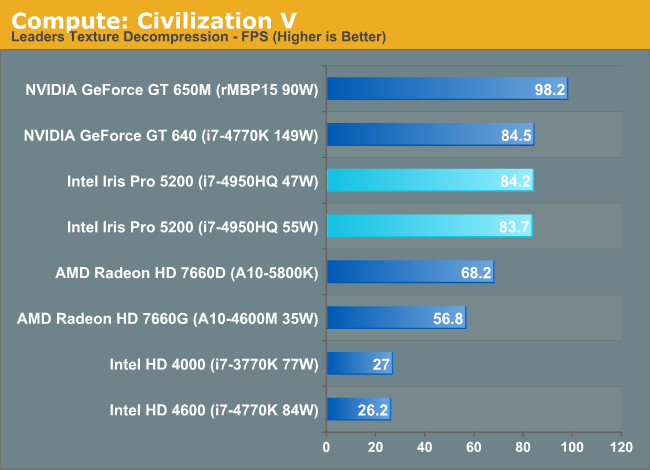

As always we'll start with our DirectCompute game example, Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. While DirectCompute is used in many games, this is one of the only games with a benchmark that can isolate the use of DirectCompute and its resulting performance.

Iris Pro does very well here, tying the GT 640 but losing to the 650M. The latter holds a 16% performance advantage, which I can only assume comes down to memory bandwidth, given the near-identical core/clock configurations of the 650M and GT 640. Crystalwell is clearly doing something, though: Intel's HD 4600 delivers less than 1/3 the performance of Iris Pro 5200 despite having half its execution resources.
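A quick back-of-envelope check makes the HD 4600 comparison explicit. Assume, as a simplification, that performance would scale linearly with execution units if nothing else differed (HD 4600 has 20 EUs to Iris Pro 5200's 40). Let $P$ be the Civ V score and $N$ the EU count:

$$
\frac{P_{\text{HD 4600}}}{P_{\text{Iris Pro}}} < \frac{1}{3}, \qquad \frac{N_{\text{HD 4600}}}{N_{\text{Iris Pro}}} = \frac{1}{2}
$$

$$
\frac{P_{\text{Iris Pro}} / N_{\text{Iris Pro}}}{P_{\text{HD 4600}} / N_{\text{HD 4600}}}
= \frac{P_{\text{Iris Pro}}}{P_{\text{HD 4600}}} \cdot \frac{N_{\text{HD 4600}}}{N_{\text{Iris Pro}}}
> 3 \cdot \frac{1}{2} = 1.5
$$

So each Iris Pro EU delivers more than 1.5× the throughput of an HD 4600 EU in this test. That surplus is what points to Crystalwell, although clock and power differences between the two parts could account for some of it.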

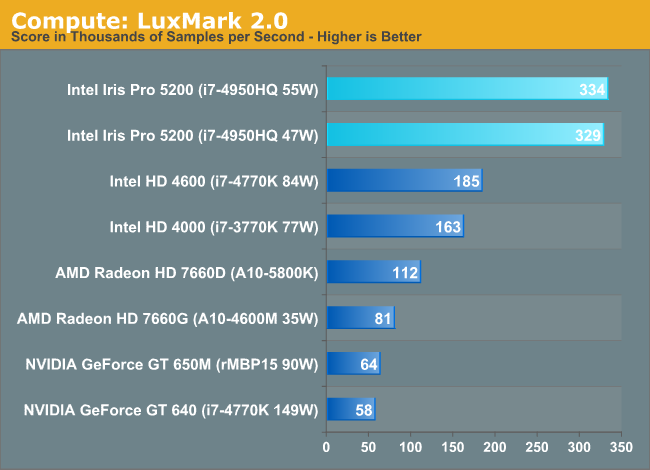

Our next benchmark is LuxMark 2.0, the official benchmark of SmallLuxGPU 2.0. SmallLuxGPU is an OpenCL-accelerated ray tracer that is part of the larger LuxRender suite. Ray tracing has become a stronghold for GPUs in recent years because it maps well to GPU pipelines, allowing artists to render scenes much more quickly than with CPUs alone.
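To illustrate why ray tracing suits GPUs: each pixel's primary ray is independent, so the work is one small function applied across a grid. The sketch below is a toy, assuming a single sphere and an orthographic camera; it is not SmallLuxGPU's code (which path traces full scenes in OpenCL), and the function names are hypothetical.

```python
import math

def hit_sphere(origin, direction, center, radius):
    """Return the nearest positive t where origin + t*direction meets the sphere, else None."""
    # Substituting the ray into |p - center|^2 = radius^2 gives a*t^2 + b*t + c = 0.
    oc = [o - ce for o, ce in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None  # ray misses the sphere entirely
    root = math.sqrt(disc)
    for t in ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a)):
        if t > 0:
            return t  # nearer intersection in front of the origin
    return None  # sphere lies behind the ray origin

def render(width, height, center=(0.0, 0.0, 3.0), radius=1.0):
    """Orthographic camera looking down +z: 1 where a pixel's ray hits the sphere, else 0."""
    image = []
    for y in range(height):
        row = []
        for x in range(width):
            # Map the pixel centre into the square [-1.5, 1.5] x [-1.5, 1.5].
            px = (x + 0.5) / width * 3.0 - 1.5
            py = (y + 0.5) / height * 3.0 - 1.5
            # Nothing here reads another pixel's result, so on a GPU this
            # loop body would be a single independent work-item.
            t = hit_sphere((px, py, 0.0), (0.0, 0.0, 1.0), center, radius)
            row.append(0 if t is None else 1)
        image.append(row)
    return image

for row in render(16, 8):
    print("".join("#" if v else "." for v in row))
```

Because no pixel depends on another, the two nested loops can be flattened into as many parallel work-items as there are pixels, which is exactly the shape of work a wide GPU like GT3 is built for.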

Moving to OpenCL, we see huge gains from Intel. Kepler wasn't NVIDIA's best compute part, but Iris Pro really puts everything else to shame here. We see near-perfect scaling from Haswell GT2 to GT3. Crystalwell doesn't appear to be doing much here; it's all in the additional ALUs.
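"Near-perfect scaling" can be put as a single number: the observed speedup divided by the ideal speedup from the extra execution units. The sketch below assumes higher scores are better and uses GT2's 20 EUs versus GT3's 40; the scores passed in are hypothetical placeholders, not our LuxMark results, and `scaling_efficiency` is an illustrative helper name.

```python
def scaling_efficiency(score_small, score_big, units_small, units_big):
    """Observed speedup divided by the ideal (linear) speedup from added units.

    1.0 means perfectly linear scaling; values below 1.0 mean the extra
    units are partly held back (for example by bandwidth or clocks).
    Assumes a higher score is better.
    """
    observed = score_big / score_small
    ideal = units_big / units_small
    return observed / ideal

GT2_EUS, GT3_EUS = 20, 40  # Haswell GT2 (HD 4600) vs GT3 (Iris Pro 5200)

# Hypothetical placeholder scores, NOT measured LuxMark numbers.
print(scaling_efficiency(100.0, 190.0, GT2_EUS, GT3_EUS))  # 1.9x observed vs 2x ideal
```

An efficiency close to 1.0 is what "it's all in the additional ALUs" means: doubling the EUs roughly doubles the result, leaving little for the eDRAM to explain.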

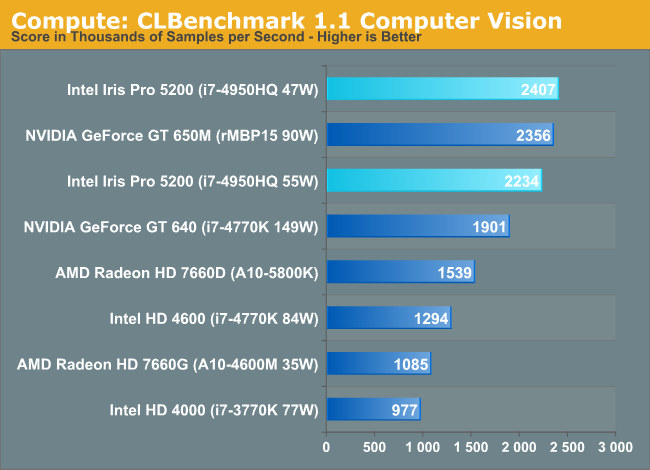

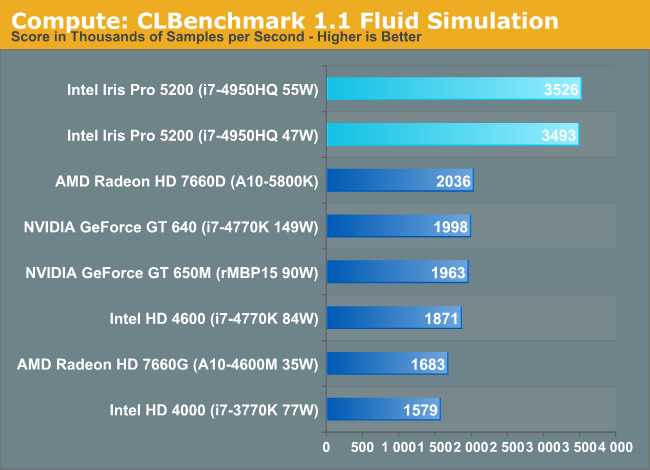

Our third benchmark set comes from CLBenchmark 1.1. CLBenchmark contains a number of subtests; we’re focusing on the most practical of them, the computer vision test and the fluid simulation test. The former is a useful proxy for computer imaging tasks where systems are required to parse images and identify features (e.g. humans), while fluid simulations are common in professional graphics work and games alike.
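Computer vision workloads parallelize the same per-pixel way. As an illustration only (CLBenchmark's actual kernels are not described here), a Sobel edge filter computes each output pixel from its own 3×3 neighbourhood, so every pixel could be one GPU work-item. A minimal pure-Python sketch, with `sobel_magnitude` as a hypothetical name:

```python
def sobel_magnitude(img):
    """Squared Sobel gradient magnitude for the interior pixels of a 2D list.

    Border pixels are left at 0. Each output pixel reads only its 3x3
    neighbourhood and writes only itself, so all pixels are independent.
    """
    h, w = len(img), len(img[0])
    gx_k = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]   # horizontal gradient kernel
    gy_k = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]   # vertical gradient kernel
    out = [[0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            gx = gy = 0
            for j in range(3):
                for i in range(3):
                    p = img[y + j - 1][x + i - 1]
                    gx += gx_k[j][i] * p
                    gy += gy_k[j][i] * p
            out[y][x] = gx * gx + gy * gy  # squared magnitude avoids a sqrt
    return out

# A vertical edge between columns 1 and 2 lights up only near the edge.
edge = [[0, 0, 10, 10, 10] for _ in range(5)]
for row in sobel_magnitude(edge):
    print(row)
```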

Once again, Iris Pro does a great job here, outpacing everything else by roughly 70% in the Fluid Simulation test.

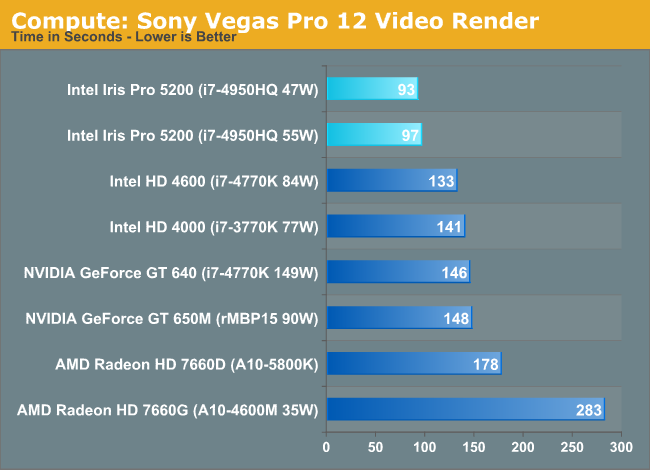

Our final compute benchmark is Sony Vegas Pro 12, an OpenGL- and OpenCL-accelerated video editing and authoring package. Vegas can use GPUs in a few different ways, the primary uses being to accelerate the video effects and compositing process itself, and the video encoding step. With video encoding increasingly being offloaded to dedicated DSPs these days, we’re focusing on the editing and compositing process, rendering to a low-CPU-overhead format (XDCAM EX). This specific test comes from Sony and measures how long it takes to render a video.

Iris Pro rounds out our compute comparison with another win. In fact, all of the Intel GPU solutions do a good job here.

177 Comments


TheJian - Sunday, June 2, 2013

This is useless at anything above 1366x768 for games (and even that is questionable, as I don't think you were posting minimum fps here). It will also be facing Richland shortly, not AMD's aging Trinity. And the claims of catching a 650M...ROFL. Whatever, Intel. I wouldn't touch a device today with less than 1600x900, and I want to be able to output to at least 1080p when at home (if not higher, on a 22in or 24in monitor). Discrete is clearly here to stay. I have a Dell i9300 (GeForce 6800) from ~2005 that is more potent and runs 1600x900 stuff fine; I think it has 256MB of memory. My dad has an i9200 (Radeon 9700 Pro with 128MB, I think) that this Iris would have trouble with. Intel has a ways to go before they can claim to take out even the low-end discrete cards. You are NOT going to game on this crap and enjoy it, never mind trying to use HDMI/DVI out to a higher-res monitor at home. Good for perhaps the NICHE road warrior market, not much more. But hey, at least it plays quite a bit of the GOG games catalog now...LOL. Icewind Dale and Baldur's Gate should run fine :)

wizfactor - Sunday, June 2, 2013

Shimpi's guess as to what will go into the 15-inch rMBP is interesting, but I have a gut feeling that it will not be the case. Despite the huge gains Iris Pro has over the existing HD 4000, it is still a step back from last year's GT 650M. I doubt Apple will be able to convince its customers to spend $2199 on a computer that has less graphics performance than last year's (now discounted) model. Despite its visual similarity to an Air, the rMBP still has performance as a priority, so my guess is that Apple will stick to discrete for the time being.

That being said, I think Iris Pro opens up a huge opportunity for the 15-inch rMBP lineup, namely a lower-priced entry model that finally breaks the $2000 barrier. In other words, while the $2199 price point may be too high to switch entirely to an iGPU, Apple might be able to pull it off at $1799. Want a 15-inch Retina Display? Here's a more affordable model with decent performance. Want a discrete GPU? You can get that at the existing $2199 price point.

As far as the 13-inch version is concerned, my guesses are rather murky. I would agree with the others that a quad-core Haswell with Iris Pro is the best-case scenario for the 13-inch model, but it might be too high an expectation for Apple engineers to live up to. I think Apple's minimum target with the 13-inch rMBP should be dual-core Haswell with Iris 5100. This way, Apple can stick to a lower TDP via dual-core, and while Iris isn't as strong as Iris Pro, its gain over HD 4000 is enough to justify the upgrade. Of course, there's always the chance that Apple has temporary exclusivity on an unannounced dual-core Haswell with Iris Pro, the same way it had exclusivity with ULV Core 2 Duo years ago with MBA, but I prefer not to make Haswell models out of thin air.

BSMonitor - Monday, June 3, 2013

You are assuming that the next MBP will have the same chassis size. If thin is in, the dGPU-less Iris Pro is EXTREMELY attractive for heat/power considerations. More likely is the end of the separate thicker MBP and thin MBAir lines. Almost certainly, starting in two weeks we'll have just one line: MBP, all with Retina, all the thickness of the MBAir, from 11" up to 15".

TheJian - Sunday, June 2, 2013

As far as encoding goes, why do you guys ignore CUDA? http://www.extremetech.com/computing/128681-the-wr...

Extremetech's last comment:

"Avoid MediaEspresso entirely."

So the one you pick is the worst of the bunch to show GPU power....jeez. You guys clearly have a CS6 suite license, so why not run Adobe Premiere, which uses CUDA, against the same video render you use in Sony's Vegas? Surely you can rip the same video in both to find out why you'd seek a CUDA-enabled app to rip with. Handbrake looks like it will also be supporting CUDA shortly. Or heck, try Freemake (yes, free with CUDA). Anything besides ignoring CUDA and acting like this is what a user would get at home. If I owned an NV card (and I don't in my desktop), I'd seek CUDA for everything I did that I could find. Freemake just put out another update on 5/29, a few days ago.

http://www.tested.com/tech/windows/1574-handbrake-...

2.5 years ago it was equal; my guess is they've improved CUDA use by now. You've gotta love Adam and Jamie... :) Glad they branched out past just the MythBusters show.

xrror - Sunday, June 2, 2013

I have a bad suspicion that one of the reasons you won't see a desktop Haswell part with eDRAM is that it would pretty much euthanize socket 2011 on the spot.

If Intel does actually release a "K" part with it enabled, I wonder how restrictive or flexible the frequency ratios on the eDRAM will be?

Speaking of socket 2011, I wonder if/when Intel will ever refresh it from Sandy-E?

wizfactor - Sunday, June 2, 2013

I wouldn't call myself an expert on computer hardware, but isn't it possible that Iris Pro's bottleneck at 1600x900 could be attributed to insufficient video memory? Sure, that eDRAM is a screamer as far as latency is concerned, but if the game is running at higher resolutions and using HD textures, that 128MB would fill up really quickly, and the chip would be forced to swap often. Better not to have to keep loading and unloading stuff in memory, right?

Others note the similarity between Crystalwell and the Xbox One's 32MB cache, but let's not forget that the Xbox One has its own video memory; Iris Pro does not, or, put another way, it's only got 128MB of it. At a time when PC games demand 512MB of video RAM or more, shouldn't the bottleneck affecting Iris Pro be obvious? 128MB of RAM is sure as hell a lot more than 0, but if games demand at least four times as much memory, wouldn't Iris Pro still be forced to use regular RAM to compensate? This sounds to me like what's causing Iris Pro to choke at higher resolutions.

If I am right about Crystalwell, it is still very impressive that Iris Pro was able to get within reach of the GT 650M with so little memory to work with. It could also explain why Iris Pro does so much better in Crysis: Warhead, where the minimum requirements are more lenient with video memory (256MB minimum). If I am wrong, however, somebody please correct me; I would love to have more discussion on this matter.

BSMonitor - Monday, June 3, 2013

Me thinks thou not know what thou talking about ;)

F_A - Monday, June 3, 2013

The video memory is stored in main memory, which is 4GB and above (so the minimum specs of Crysis are clearly met). The point is bandwidth. The article says there are roughly 50GB/s when the cache is run at 1.6 GHz.

So ramping it up in the future makes the new Iris 5300, I suppose.

glugglug - Tuesday, June 4, 2013

Video cards may have 512MB to 1GB of video memory for marketing purposes, but you would be hard pressed to find a single game title that makes use of more than 128MB.

tipoo - Wednesday, January 21, 2015

Uhh, what? Games can use far more than that; seeing them push past 2GB is common. But what matters is how much of that memory needs high bandwidth, and that's where 128MB of cache can be a good enough solution for most games.
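The working-set argument can be checked with rough arithmetic: full-screen render targets at these resolutions are small next to 128MB, while texture pools can be far larger. The sketch assumes 4 bytes per pixel (RGBA8 colour or 32-bit depth) and a hypothetical count of six full-screen buffers (colour, depth, four G-buffer targets); real engines vary widely, and `render_target_mib` is an illustrative name.

```python
def render_target_mib(width, height, bytes_per_pixel=4, targets=1):
    """Memory taken by `targets` full-screen buffers of one format, in MiB."""
    return width * height * bytes_per_pixel * targets / (1024 * 1024)

EDRAM_MIB = 128  # Crystalwell eDRAM capacity

for w, h in [(1366, 768), (1600, 900), (1920, 1080)]:
    # Hypothetical: colour + depth + 4 extra G-buffer targets = 6 buffers.
    mib = render_target_mib(w, h, targets=6)
    print(f"{w}x{h}: {mib:.1f} MiB of render targets "
          f"({mib / EDRAM_MIB:.0%} of {EDRAM_MIB} MiB)")
```

Under these assumptions even 1080p render targets fit comfortably in the eDRAM, which is consistent with the idea that the bandwidth-hungry part of a frame can live in a 128MB cache while bulk textures stay in system memory.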