The Crucial/Micron M500 Review (960GB, 480GB, 240GB, 120GB)

by Anand Lal Shimpi on April 9, 2013 9:59 AM EST

Power Consumption

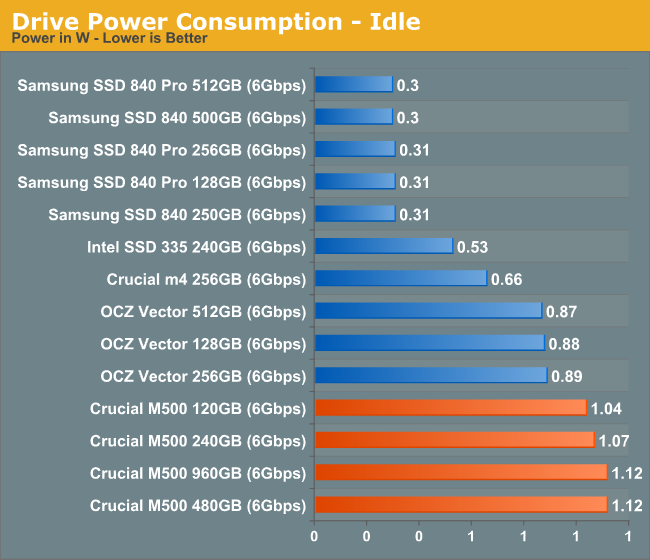

The M500 supports the new Device Sleep (DevSleep) standard, which will see platform support with Haswell this year. Crucial claims DIPM-enabled idle power as low as 80mW; however, even with DIPM enabled on our testbed we weren't able to get anything south of ~1W at idle. I'm digging to see if this is an M500 issue or one specific to our testbed, but Crucial is confident that in a notebook you'd see very little idle power consumption with the M500. Supporting DevSleep is important, as it'll quickly become a must-have feature for Haswell notebooks.

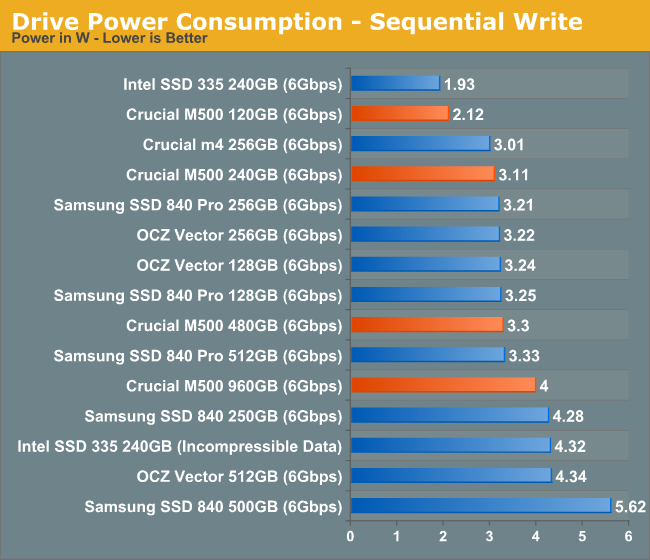

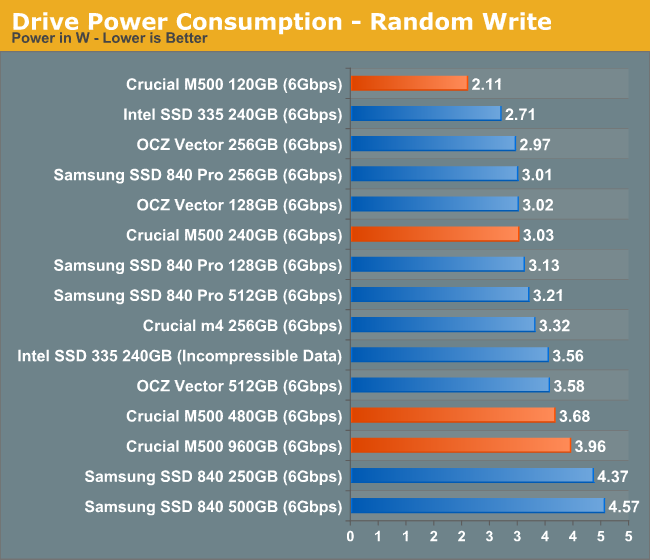

Load power looks excellent, which gives me hope that Crucial's idle power is indeed as good as they claim. The M500 is a direct competitor to Samsung's SSD 840 Pro when it comes to power consumption under load. Given how power efficient the 840 Pro is, the M500 is in good company.

111 Comments


mayankleoboy1 - Wednesday, April 10, 2013 - link

Thanks! These look much better, and more realworld+consumer usage.

metafor - Wednesday, April 10, 2013 - link

I'd be very interested to see an endurance test for this drive and how it compares to the TLC Samsung drives. One of the bigger selling points of 2-level MLC is that it has a much longer lifespan, isn't it?

73mpl4R - Wednesday, April 10, 2013 - link

Thank you for a great review. If this is a product that paves the way for better drives with 128Gbit dies, then this is most welcome. The encryption is interesting as well, gonna check it out.

raclimja - Wednesday, April 10, 2013 - link

Power consumption is through the roof. Very disappointed with it.

toyotabedzrock - Wednesday, April 10, 2013 - link

If you wrote 1.5 TB of data for this test, then you used 2% of the drive's write life in 10-11 hours.

As a heavy multitasker this worries me greatly, especially if you edit large video files.

Solid State Brain - Wednesday, April 10, 2013 - link

As I wrote in one of the comments above, they probably state 72 TiB of maximum supported writes for liability and commercial reasons. They don't want users to be using these as enterprise/professional drives (and chances are that if you write more than 40 GiB/day continuously for 5 years you're not a normal consumer). Most people barely write 1.5 TiB in 6 months of use anyway. So even if 72 TiB doesn't seem like much, it's actually quite a lot of writes.

Taking into account drive and NAND specifications, and an average write amplification of 2.0x (although for sequential workloads such as video editing this should be much closer to 1.0x), a realistic estimate of minimum drive endurance would be:

120 GB => 187.5 TiB

240 GB => 375.0 TiB

480 GB => 750.0 TiB

960 GB => 1.46 PiB

Of course, it's not that these drives will stop working after 3000 write cycles. They will go on as long as uncorrectable write errors (which increase as the drive gets used) remain within usable margins.
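
The figures in this estimate can be reproduced with a short calculation. The sketch below is only an illustration, not necessarily how the commenter arrived at them: it assumes raw NAND capacities of 128, 256, 512 and 1024 GiB for the four models (an assumption that happens to reproduce the listed numbers), together with the 3000 write cycles and 2.0x write amplification quoted in the comment. The function and variable names are hypothetical.

```python
GIB_PER_TIB = 1024
TIB_PER_PIB = 1024


def estimated_endurance_tib(raw_nand_gib, pe_cycles=3000, write_amplification=2.0):
    """Host writes (TiB) before the NAND reaches its rated program/erase cycles."""
    nand_writes_gib = raw_nand_gib * pe_cycles
    host_writes_gib = nand_writes_gib / write_amplification
    return host_writes_gib / GIB_PER_TIB


def rated_writes_per_day_gib(rated_tib, years):
    """Average daily host writes (GiB) that use up a rated total in `years`."""
    return rated_tib * GIB_PER_TIB / (years * 365)


# Assumed raw NAND per model (GiB); not stated in the comment itself.
RAW_NAND_GIB = {"120 GB": 128, "240 GB": 256, "480 GB": 512, "960 GB": 1024}

for model, raw_gib in RAW_NAND_GIB.items():
    tib = estimated_endurance_tib(raw_gib)
    if tib >= TIB_PER_PIB:
        print(f"{model} => {tib / TIB_PER_PIB:.2f} PiB")
    else:
        print(f"{model} => {tib:.1f} TiB")

# The 72 TiB rating spread over 5 years, in GiB per day.
print(f"72 TiB over 5 years: {rated_writes_per_day_gib(72, 5):.1f} GiB/day")
```

Setting `write_amplification=1.0` doubles each estimate, which corresponds to the sequential-workload case mentioned in the comment.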

glugglug - Wednesday, April 10, 2013 - link

It is very easy to come up with use cases where a "normal" user will end up hitting the 72TB of writes quickly.

The most obvious example is a user who is using this large SSD to transition from a large HDD without it being "just a boot drive", so they archive a lot of stuff.

Depending on MSSE settings, it will likely uncompress everything into C:\Windows\Temp when it runs its nightly scan.

You don't want to know how much of my X-25M G1's lifespan I killed in about 6 months' time before finding out about that and junctioning my temp directories off of the SSD.

Solid State Brain - Wednesday, April 10, 2013 - link

I am currently using a Samsung 840 250GB with TLC memory, without any hard disk installed in my system. I use it for everything from temp files to virtual machines to torrents. I even reinstalled the entire system a few times because I hopped between Linux and Windows "just because". I haven't performed any "SSD optimization" either. A purely plug&play usage, and it isn't a "boot drive" either. Furthermore, my system is always on. Not quite a normal usage I'd say.

In 47 days of usage I've written 2.12 TiB and used 10 write cycles out of 1000. This translates to 13 years of drive life at my current usage rate.

My usage graph + SMART data:

http://i.imgur.com/IwWZ9Kg.png

Temp directories alone aren't going to kill your SSD, not directly at least. It likely was something caused by some anomalous write-happy application, not Windows by itself.
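
The 13-year projection in this comment is a straight-line extrapolation from the quoted SMART figures (47 days, 10 of 1000 write cycles used, 2.12 TiB written). A minimal sketch of that arithmetic follows, assuming wear continues at the same constant rate; the function names are hypothetical and are not taken from any SMART tool.

```python
GIB_PER_TIB = 1024


def projected_life_years(days_elapsed, cycles_used, rated_cycles):
    """Total drive life in years if write cycles keep being consumed at the observed rate."""
    cycles_per_day = cycles_used / days_elapsed
    return rated_cycles / cycles_per_day / 365


def average_daily_writes_gib(host_writes_tib, days_elapsed):
    """Average host writes per day in GiB over the observation window."""
    return host_writes_tib * GIB_PER_TIB / days_elapsed


# Figures quoted in the comment above.
years = projected_life_years(days_elapsed=47, cycles_used=10, rated_cycles=1000)
daily = average_daily_writes_gib(host_writes_tib=2.12, days_elapsed=47)

print(f"Projected life: {years:.1f} years")
print(f"Average host writes: {daily:.1f} GiB/day")
```

The result lands just under 13 years, in line with the figure quoted in the comment. A linear projection like this ignores any change in workload or write amplification over the life of the drive.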

juhatus - Wednesday, April 10, 2013 - link

What overprovisioning would you recommend for a 256GB M4 with BitLocker: 10, 15 or 25%? Also, what M4 firmware did you use for the comparison with the M500? And are there any benefits to the M500 with BitLocker on Windows 7? Thanks for the review, please add 25% results for the M4 too :)

Solid State Brain - Wednesday, April 10, 2013 - link

Increasing overprovisioning only matters when you write to the drive continuously and never (or rarely) issue a TRIM by the time an amount of data roughly equal to the free space (in practice less, depending on workload and drive conditions) has been written.

This almost never happens in real-life usage by the target userbase of such a drive. It matters for servers, for those who for one reason or another (like high-definition video editing) perform many sustained writes, or for those working in an environment without TRIM support (which isn't the case for Windows 7/8, although it can be for MacOS or Linux, where it has to be manually enabled).

AnandTech's SSD benchmarks aren't very realistic for most users, and the same can be said for their OP recommendations.