Westmere-EP to Sandy Bridge-EP: The Scientist Potential Upgrade

by Ian Cutress on March 4, 2013 9:30 AM EST. Posted in: CPUs, Xeon, Westmere-EP, Sandy Bridge-EP

Grid Solvers

For any theoretical evaluation of physical events, we mathematically track a volume and monitor how the properties within that volume (speed, temperature, concentration) evolve. How each property changes over time is defined by the equations of the system, which typically describe the rate of energy transfer, of motion, or of change in some other property.
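As one concrete example of such an equation (chosen here for illustration; the exact models used are described in the earlier review), diffusion of a concentration c with a constant diffusion coefficient D through a 2D volume follows Fick's second law:

```latex
\frac{\partial c}{\partial t} = D\left(\frac{\partial^{2} c}{\partial x^{2}} + \frac{\partial^{2} c}{\partial y^{2}}\right)
```

The left-hand side is the rate of change at a point. The right-hand side says that rate is set by how the concentration curves across its immediate neighborhood, which is exactly what a grid solver approximates from the values stored at neighboring nodes.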

The volume itself is divided into smaller sections or ‘nodes’, each of which holds the values of the system's properties at that point. The volume can be split in a variety of ways: regularly by squares (finite difference), irregularly by squares (finite difference with variable distance modifiers), or irregularly by triangles (finite element), to name three, although many other methods exist. More often than not the system has a point of action where stuff is happening (heat transfer at a surface or a surface-bound reaction), meaning that some areas of the system are more important than others and the grid solver should focus on those areas, which is the advantage over a regular finite difference grid. This usually comes at the expense of increased computational complexity and irregular memory accesses, but it affords a faster simulation overall: for a 10^6 node simulation volume, for example, we might only have to calculate 1000 variable-distance points rather than 1 million regular ones. Another point to note is that if the system is symmetrical about an axis (or the center), the simulation and grid are often reduced by a dimension to improve simulation throughput (as O(n) < O(n^2) < O(n^3)).
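To make the 1000 points versus 1 million comparison concrete, here is a minimal sketch of one common way to focus nodes near an active surface: a geometrically expanding 1D grid. The function names, the expansion factor `gamma`, and the idea that the surface sits at x = 0 are all assumptions for illustration, not the article's own grid code.

```python
def uniform_node_count(length: float, h: float) -> int:
    """Nodes on a regular 1D grid of spacing h covering [0, length]."""
    return round(length / h) + 1


def expanding_grid(length: float, h_min: float, gamma: float) -> list[float]:
    """Hypothetical 1D expanding grid for an active surface at x = 0.

    The spacing starts at h_min next to the surface, where stuff is
    happening, and grows by a factor gamma per node out into the bulk.
    """
    if gamma <= 1.0:
        raise ValueError("gamma must be > 1 for the grid to expand")
    xs = [0.0]
    h = h_min
    while xs[-1] < length:
        # Clamp the last node so the grid ends exactly at the far boundary.
        xs.append(min(xs[-1] + h, length))
        h *= gamma
    return xs


if __name__ == "__main__":
    grid = expanding_grid(1.0, 1e-6, gamma=1.05)
    print("regular grid nodes:  ", uniform_node_count(1.0, 1e-6))
    print("expanding grid nodes:", len(grid))
```

With these arbitrary parameters the expanding grid keeps the same 1e-6 spacing at the surface but needs only a few hundred nodes instead of about a million. The price is that the spacing differs from node to node, so the update equations need the variable distance modifiers mentioned above.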

Boundary conditions can also affect the simulation: because the simulated volume is finite, the behavior at its edges has to be defined. The boundary may be a repeating boundary (the volume is one unit of a larger whole, so entering one side comes out the other), a reflecting boundary (rate of change at the boundary is zero), a sink (boundary is constantly 0), an input (boundary is constantly 1) or a reactive zone (rate of change is defined by kinetics or another property). Again, there are many more boundary conditions depending on the simulation at hand. Because boundary nodes have to be treated differently from interior nodes, they can cause extra memory reads, additional calculations at certain points, or fewer calculations by virtue of constant values.
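The sketch below shows how four of these boundary types might be applied to the two end nodes of a 1D grid, treating `u[0]` and `u[-1]` as boundary (ghost) nodes. The function name and the string labels are hypothetical, the reflecting case uses a simple first-order zero-gradient copy, and the reactive zone is left out because it depends on the kinetics of the particular system.

```python
def apply_boundary(u: list[float], kind: str) -> None:
    """Set the end nodes u[0] and u[-1] of a 1D grid in place.

    Interior nodes are u[1:-1]. The 'kind' labels are hypothetical names
    for the boundary conditions described in the text.
    """
    if kind == "sink":          # boundary is constantly 0
        u[0] = u[-1] = 0.0
    elif kind == "input":       # boundary is constantly 1
        u[0] = u[-1] = 1.0
    elif kind == "reflecting":  # zero gradient: copy the nearest interior value
        u[0], u[-1] = u[1], u[-2]
    elif kind == "repeating":   # periodic: leaving one side re-enters the other
        u[0], u[-1] = u[-2], u[1]
    else:
        raise ValueError(f"unknown boundary kind: {kind}")
```

The constant cases never read other nodes, while the reflecting and repeating cases do, and the repeating case reads from the far end of the array. That is the kind of extra, less local memory traffic the paragraph above refers to.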

A final point concerns simulations involving time. For the scenarios I simulated in research, time could be handled either as a pushing structure (every node in the next time step is based on the surrounding nodes ‘pushing’ the values of the previous time step) or as a pulling structure (each calculation of the next time step requires pulling a matrix of values from the previous time step), also known as explicit and implicit respectively. By their nature, explicit simulations are embarrassingly parallel but have stability restrictions on the time step relative to the node spacing; implicit simulations are only partially parallel and require larger memory jumps, but have far fewer restrictions, which allows more to be simulated in less time. Choosing between these two methods is often one of the first decisions for the sorts of simulation I will be testing.
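The restriction on explicit simulations can be shown with the textbook explicit (forward-time, centered-space) diffusion scheme. With the dimensionless step λ = DΔt/Δx², this scheme is only stable for λ ≤ 1/(2d) in d dimensions. The sketch below is an illustration under those assumptions, not the article's benchmark code: it computes that limit and runs a 1D simulation from a single spike just below and just above it.

```python
def max_stable_dt(D: float, dx: float, dims: int) -> float:
    """Largest stable time step for explicit (FTCS) diffusion on a uniform
    isotropic grid: D*dt/dx**2 <= 1/(2*dims)."""
    return dx * dx / (2 * dims * D)


def explicit_steps_1d(lam: float, n_nodes: int, steps: int) -> list[float]:
    """Explicit 1D diffusion from a unit spike with sink boundaries.

    lam = D*dt/dx**2 is the dimensionless time step.
    """
    u = [0.0] * n_nodes
    u[n_nodes // 2] = 1.0
    for _ in range(steps):
        new = u[:]  # end nodes are never updated, so they stay 0.0 (sinks)
        for i in range(1, n_nodes - 1):
            # Each new value depends only on values from the previous step.
            new[i] = u[i] + lam * (u[i - 1] - 2 * u[i] + u[i + 1])
        u = new
    return u


stable = explicit_steps_1d(0.4, 21, 200)    # lam below the 1D limit of 0.5
unstable = explicit_steps_1d(0.6, 21, 200)  # lam above it
print(max(abs(x) for x in stable), max(abs(x) for x in unstable))
```

At λ = 0.4 the spike simply spreads out and decays. At λ = 0.6 the highest-frequency mode is amplified by more than 1 each step and the values blow up. Halving Δx cuts the allowed Δt by a factor of four, which is why explicit codes are bounded by node size.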

All of the simulations used in this article were described in our previous GA-7PESH1 review in terms of both mathematics and code. For the sake of brevity, please refer back to that article for more information.

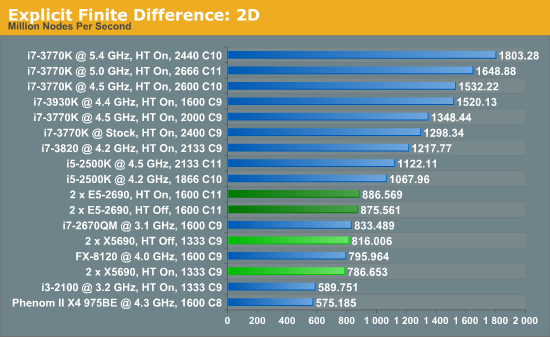

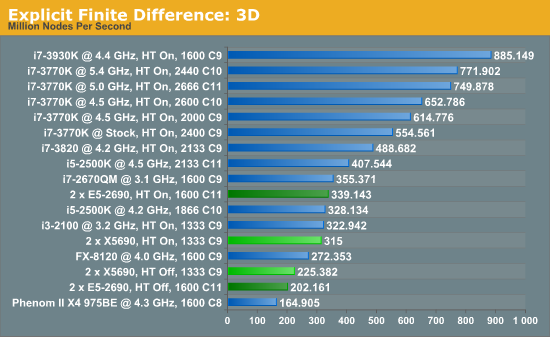

Explicit Finite Difference

For any grid of regular nodes, the simplest way to calculate the next time step is to use the values of the nodes around each point. This makes for easy mathematics and parallel simulation, as each node calculated depends only on the previous time step, not on its neighbors in the time step currently being calculated. By choosing a regular grid, we also avoid the irregular memory accesses that irregular grids require. We test both 2D and 3D explicit finite difference simulations with 2^n nodes in each dimension, using OpenMP as the threading API in single precision. The grid is isotropic and the boundary conditions are sinks.
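The independence described above can be checked directly. The serial Python sketch below (a hypothetical function, not the OpenMP code used in the benchmark) performs one explicit diffusion step on a square grid with sink edges. Because every new value reads only from `old`, computing the rows in reverse order gives exactly the same result. That property is what lets the outer loop be split across threads, e.g. with `#pragma omp parallel for` in C or C++.

```python
def explicit_step_2d(old: list[list[float]], lam: float,
                     row_order=None) -> list[list[float]]:
    """One explicit (FTCS) diffusion step on a square grid with sink edges.

    Every new value reads only from `old`, so rows can be computed in any
    order, or by different threads, without changing the result.
    """
    n = len(old)
    new = [[0.0] * n for _ in range(n)]  # edge nodes stay 0.0 (sinks)
    rows = range(1, n - 1) if row_order is None else row_order
    for i in rows:
        for j in range(1, n - 1):
            new[i][j] = old[i][j] + lam * (
                old[i - 1][j] + old[i + 1][j]
                + old[i][j - 1] + old[i][j + 1]
                - 4.0 * old[i][j]
            )
    return new


n = 2 ** 4  # power-of-two nodes per side
grid = [[0.0] * n for _ in range(n)]
grid[n // 2][n // 2] = 1.0
forward = explicit_step_2d(grid, 0.2)
backward = explicit_step_2d(grid, 0.2, row_order=reversed(range(1, n - 1)))
print(forward == backward)
```

λ = 0.2 is chosen to sit inside the 2D stability limit of 0.25 for this scheme. The 3D version adds two more neighbors per node, which increases the memory reads per calculation.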

The 6-core X5690s definitely perform below the 8-core E5-2690s here, and with the X5690s it pays to have HyperThreading turned off; leaving it on costs 3.5% in performance. Compared to the E5-2690s, the X5690s are only 8% down for that 25% price difference.

In three dimensions, the E5-2690s still have the advantage with HT enabled, by 7.7%. With HT disabled, the dual X5690 system performs 11.4% better than its Sandy Bridge-EP counterpart. The nature of the 3D simulation means a single CPU system tends to perform much better, however.

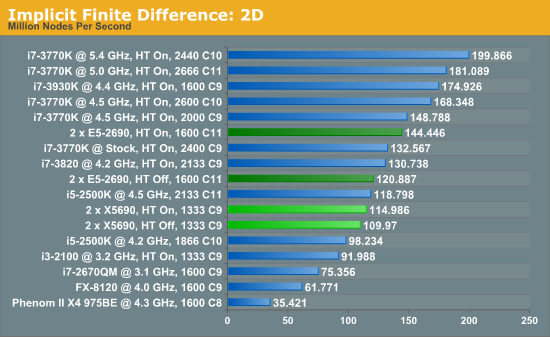

Implicit Finite Difference (with the Alternating Direction Implicit method)

The implicit method takes a different approach from the explicit method: instead of calculating each unknown in the new time step directly from known values in the previous time step, we consider that an old point can influence several new points, which leads to a set of simultaneous equations. This adds to the complexity of the simulation: the grid of nodes is solved as a series of rows and columns rather than points, reducing the parallelism of the simulation by a dimension and drastically increasing the memory requirements of each thread. The upside, as noted above, is the less stringent stability rules related to time steps and grid spacing. For this we simulate a 2D grid of 2^n nodes in each dimension, using OpenMP in single precision. Again our grid is isotropic with the boundaries acting as sinks.
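Solving a row (or column) at a time usually means solving a tridiagonal system, and the standard tool for that is the Thomas algorithm. The sketch below is an assumption-laden illustration, not the article's code. It pairs a Thomas solver with a hypothetical `implicit_row_step` that advances one row of a diffusion problem implicitly with sink ends. ADI applies a solve like this to every row, then every column, each half time step; the full Peaceman–Rachford scheme also includes an explicit term in the other direction, which is omitted here. Note that the forward sweep performs up to two divisions per unknown, so division throughput matters for this kind of solver.

```python
def thomas(a, b, c, d):
    """Solve a tridiagonal system with the Thomas algorithm in O(n).

    Row i reads: a[i]*x[i-1] + b[i]*x[i] + c[i]*x[i+1] = d[i]
    (a[0] and c[-1] are unused).
    """
    n = len(d)
    cp = [0.0] * n  # modified upper-diagonal coefficients
    dp = [0.0] * n  # modified right-hand side
    cp[0] = c[0] / b[0]
    dp[0] = d[0] / b[0]
    for i in range(1, n):  # forward sweep: up to two divisions per unknown
        m = b[i] - a[i] * cp[i - 1]
        cp[i] = c[i] / m if i < n - 1 else 0.0
        dp[i] = (d[i] - a[i] * dp[i - 1]) / m
    x = [0.0] * n
    x[-1] = dp[-1]
    for i in range(n - 2, -1, -1):  # back substitution
        x[i] = dp[i] - cp[i] * x[i + 1]
    return x


def implicit_row_step(row, lam):
    """Hypothetical implicit (backward Euler) diffusion step along one row.

    Solves -lam*u[i-1] + (1 + 2*lam)*u[i] - lam*u[i+1] = old[i] for the
    interior nodes; the end nodes are sinks held at 0.
    """
    m = len(row) - 2
    interior = thomas([-lam] * m, [1.0 + 2.0 * lam] * m, [-lam] * m, row[1:-1])
    return [0.0] + interior + [0.0]


row = [0.0] * 21
row[10] = 1.0
for _ in range(200):
    row = implicit_row_step(row, 50.0)  # far beyond the explicit limit of 0.5
print(max(row))
```

Even with λ = 50, a hundred times the explicit 1D limit, the solution stays bounded. That is the relaxed stability the paragraph above describes, paid for with a sequential sweep along each row.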

The IPC and increased memory bandwidth of the E5-2690 system come through here, with the X5690s being 20% slower. The dual CPU nature of the system is still at odds with the code, however, as a single i7-3930K at stock should perform similarly.

44 Comments


jamyryals - Monday, March 4, 2013

Element is an acceptable term in this case. Anyone confusing a finite element with a chemical element would do well to read up on these types of mathematical models anyway.

Your other points are well made, and highlight the difficulty in creating meaningful benchmarks.

Kevin G - Monday, March 4, 2013

I agree that the usage of the word element is technically correct. The thing that threw me off more was its usage in conjunction with particle. When I read that paragraph I had to do a double take to get the proper context. My issue here is more a small editorial quibble than a technical one. :)

IanCutress - Tuesday, March 5, 2013

A majority of the results in the graphs (essentially all the overclocked ones) were on systems out of my control: several users from the Overclock.net HWBot team helped on that one and offered me insight into their setups. Unfortunately I do not have access to a vast array of sockets and systems for comparison.

The implicit calculations have a fair few division elements per loop, as noted in the previous article where I posted the code (http://www.anandtech.com/show/6533/8): for each time step there are >2 divisions per node calculation. Technically, the non-CS scientist might not know what is inside the silicon regarding Ivy's better divider.

Don't forget the whole point of a review of something like this was to look at the scenario I was in. We went and ordered dual Nehalem systems (E5520s) just because of all the threads. Looking back on it now, I wish we had stuck to single processor systems based on the code we were writing.

Regarding the built-in Ivy PRNG, as noted in the previous review, the code wasn't hand written for each processor. It was written once and applied over. We didn't get extra time or money to find the best way to simulate something, we just had to simulate.

Regarding element and particle, I use them almost synonymously in the text. I like to use 'element' to describe the motion of one point in the simulation, but my Chemistry supervisor thought I was being an idiot when we were dealing with chemicals, despite my pleas that element was a CS term. He preferred the term particle as a midway point between the two (and also not to confuse the chemistry people reading our papers), and mentally I have equated the two, which is not always the best thing.

For XVC, I'm not sure why there is such a difference. With HT on, we have 24 threads to do 33 videos, which is one batch of 24 then another of 9 (put your turbos in where appropriate). Without HT, we're slightly faster per core (if we're lucky; not at all if not), but we have batches of 12, 12 and then 9. Again, apply turbos where appropriate. That's just how the program runs: it decides whether to commit one thread per video or multiple threads per video. If it is coding more videos than half the available threads, it does one thread per video; if there are enough threads that each video can get two, it applies two. So the set of 9 videos when HT is on probably gets two threads per video, rather than one thread per video for the 9 videos when HT is off.
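The batching described above can be written out as a toy model. This is a sketch of the rule as Ian describes it, not XVC's actual scheduler. The function names are hypothetical, and the 24 and 12 thread counts are the dual 6-core X5690 system with and without HT.

```python
def batches(videos: int, threads: int) -> list[int]:
    """Split a queue of videos into batches of at most `threads` videos."""
    sizes = []
    remaining = videos
    while remaining > 0:
        size = min(remaining, threads)
        sizes.append(size)
        remaining -= size
    return sizes


def threads_per_video(batch_size: int, threads: int) -> int:
    """Two threads per video if every video in the batch can get two,
    otherwise one (the rule as described, not XVC's source)."""
    return 2 if 2 * batch_size <= threads else 1


for label, threads in (("HT on", 24), ("HT off", 12)):
    plan = [(size, threads_per_video(size, threads)) for size in batches(33, threads)]
    print(label, plan)
# HT on [(24, 1), (9, 2)]
# HT off [(12, 1), (12, 1), (9, 1)]
```

Under this model, HT on means two sequential batches instead of three, and the final batch gets two threads per video. That accounts for some of the gap, but as the reply below points out, not for a difference as large as the one measured.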

Ian

Kevin G - Tuesday, March 5, 2013

The thing with Ivy Bridge's improved division unit is that it can explain some of the speed-up. Glancing at the code, those operations don't seem to be common enough to make such a noticeable impact. (The real test would be to compile, disassemble and then count the number of division instructions.) The other thing about Ivy Bridge's divider is that its performance gains are 'free' in the sense that they don't require rewriting or recompiling code to take advantage of. It is an architectural tweak that benefits existing code.

Upon release, Nehalem was a very good platform, and it is still respectable today. I think the issue is that consumer systems have been catching up. Looking at the charts, the only consumer system that's roughly the same age as the E5520s was an overclocked Phenom II X4, and the dual socket Xeon showed an advantage there. The problem I'm seeing is that the code isn't scaling across multiple sockets and memory controllers very well. Solving that would put performance closer to expectations. If possible, I would suggest enabling memory mirroring across sockets to see if that solves some of the scaling issues. The code wouldn't have to be written to be NUMA aware, but usable memory in the system is halved.
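The "disassemble and count" test suggested above could look like this minimal sketch. It assumes the disassembly is saved as text in GNU `objdump -d` style (for example from a hypothetical `objdump -d ./solver`). It counts only the x86 SSE floating-point divides and their AVX `v`-prefixed forms, not integer divides. The sample lines are made up for illustration.

```python
import re
from collections import Counter

# x86 floating-point divide mnemonics: SSE (divss/divsd/divps/divpd) and the
# VEX-encoded AVX forms (vdivss, ...). Integer divides are not counted.
FP_DIV = re.compile(r"\b(v?div(?:ss|sd|ps|pd))\b")


def count_fp_divides(disassembly: str) -> Counter:
    """Count floating-point divide instructions in disassembler text output."""
    counts = Counter()
    for line in disassembly.splitlines():
        match = FP_DIV.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


# Made-up sample in GNU objdump style.
sample = """\
  401136:\tf3 0f 5e c1          \tdivss  %xmm1,%xmm0
  40113a:\tc5 fa 5e c2          \tvdivss %xmm2,%xmm0,%xmm0
  40113e:\tf3 0f 59 c1          \tmulss  %xmm1,%xmm0
"""
print(count_fp_divides(sample))
```

A static count only shows how many divide instructions exist in the binary. To see how often they run inside the hot loop, you would still need a profiler or a manual look at which loop each one sits in.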

If the NUMA problem is not practical to solve, then going single socket makes sense. However, I would expand the discussion to include RAS. I would not recommend a single highly overclocked system to run scientific simulations as the reliability simply isn't there. One way around that is to get two similarly configured systems, run the simulation twice and compare the results for redundancy. With some of these heavily overclocked systems costing less than half the dual Xeon's price tag and running the code twice as fast, it is worth considering such a mirrored configuration. Other options to consider would be a single 8 core Xeon on socket 2011 or one of the quad core Xeons on socket 1155, gaining ECC memory support and forgoing the second system.

The XVC results can see some improvements from queuing, but those benefits should be able to carry over to the non-HT results with a software tweak. (Most software like that can accept such tuning parameters, but I'm personally unfamiliar with XVC.) The results are falling outside the realm of reason. It is like cooling a gas until you realize you're at -20 kelvin: at that point you have to realize something is erroneous. At best HT can double performance, and the results are roughly five times faster. Turbo is a factor, but that would benefit the non-HT results more as utilization is lower (i.e. fewer transistors switching, less heat, more turbo boost).

toyotabedzrock - Monday, March 4, 2013

It looks like Intel forgot about HT on Sandy Bridge.

IanCutress - Tuesday, March 5, 2013

i5-2500K is a 4C/4T processor.

Ian

TeXWiller - Monday, March 4, 2013

Ian, have you tried playing with the NUMA options of the boards?

IanCutress - Tuesday, March 5, 2013

NUMA was enabled in the BIOS, I made sure before I tested :) I also looked at various ways to keep the top turbo in force through all loading, but the limited BIOS options relating to clock speed on server boards are not up to scratch compared to consumer products (as you would expect).

Ian

TeXWiller - Tuesday, March 5, 2013

I was thinking about the improved bandwidth between the processors in the E5 family. Some applications might prefer node-interleaved memory instead.

alpha754293 - Monday, March 4, 2013

re: OpenMP vs. MPI

Multithreaded codes using OpenMP are known to be quite a lot slower than a proper MPI code. In the testing that I've done, the difference can be as much as 40% because the OpenMP code simply cannot keep the CPU/FPU units occupied long enough. I've never really dug in deep as to WHY that is (I'm sooo NOT a programmer), but as an end user, that's a HUGE difference.

Secondly, depending on how you write your MPI code, some of them can be VERY efficient at using multicore/multiprocessor systems. It depends on the code, the nature and physics of the problem, and a whole bunch of other things. (LS-DYNA, for example, scales VERY well with the number of processors and/or cores.) And my research is showing about an 11-17% benefit with HTT enabled on a 3930K (I don't have 8-core Xeons to play with). :(

Conversely, I've also seen some MPI codes that don't parallelize nearly as well. It SAYS that it's MPI, but it looks more like an OpenMP implementation for the parallelization.

Part of it also depends on how much data dependency there is - does the information of one depend on the results or the information/data of another (either on spatial or temporal terms)?

Third, I've had many arguments about this. A single socket, multi-core processor is still a parallel multicore system. Yes, you don't have to deal with NUMA, but unless you have a LOT of traffic going between your two sockets (something which NO ONE has been able to tell me how to measure so far), chances are either OpenMP OR MPI can scale to a single multi-core processor or to multiple multi-core processors. It shouldn't really care (unless you've hard-coded the domain decomposition and the number of "partitions" or "divisions" it makes for the parallelization).
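To illustrate the "hard-coded decomposition" caveat, here is a minimal sketch of a static 1D block decomposition whose partition count is a runtime parameter. The function name is hypothetical. The approach (contiguous, near-equal blocks of rows) is a common one in both OpenMP static scheduling and MPI domain decomposition, but it is not taken from any specific code mentioned here.

```python
def partition_rows(n_rows: int, parts: int) -> list[tuple[int, int]]:
    """Split rows [0, n_rows) into `parts` contiguous half-open ranges
    whose sizes differ by at most one.

    Because `parts` is a parameter rather than a constant baked into the
    code, the same program can use one socket's cores or several sockets'.
    """
    base, extra = divmod(n_rows, parts)
    ranges = []
    start = 0
    for p in range(parts):
        size = base + (1 if p < extra else 0)  # first `extra` blocks get one more row
        ranges.append((start, start + size))
        start += size
    return ranges


print(partition_rows(10, 3))
# [(0, 4), (4, 7), (7, 10)]
```

Each worker then updates only its own block of rows and exchanges its edge rows with its neighbors. Those boundary exchanges are the traffic that crosses sockets on a dual-CPU board.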

I think that the statement/comment you wrote about how some of the benchmarks or some types of simulations/processes favour a single-CPU setup isn't QUITE accurate, only because your single-socket, multi-core CPUs were quite highly overclocked. (I've got my 3930K up to 4.5 GHz, and I just re-enabled C1E/EIST in order to cut my idle power consumption.)

[brb...to be continued]