NVIDIA Tegra 4 Architecture Deep Dive, Plus Tegra 4i, Icera i500 & Phoenix Hands On

by Anand Lal Shimpi & Brian Klug on February 24, 2013 3:00 PM EST

Silicon makers almost always put together a reference design of their own for testing their hardware, optimizing their software stack, and generally having something to build to. Increasingly we’ve seen these vendors then take that reference design and do something with it beyond just keeping it for their own internal use. After all, if you’ve built and qualified a device, it makes sense to do something with it. While NVIDIA isn’t going to sell the FFRD directly, it’s a platform they can hand off to OEMs wanting to implement a smartphone platform with Tegra 4 or 4i relatively quickly.

To that end, NVIDIA has crafted Phoenix, which is their very own FFRD (Form Factor Reference Design) for both Tegra 4 and 4i versions. The high level specifications are what you’d expect for something from this current generation, with a 5-inch 1080p display, LTE, relatively thin profile, and of course a Tegra 4 SoC inside.

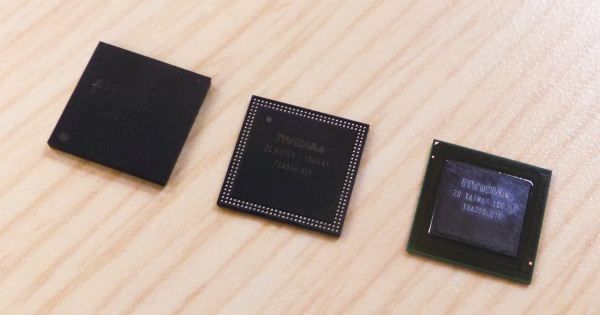

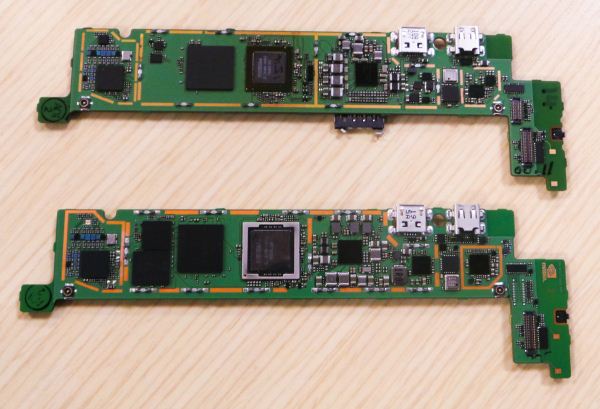

There are actually three different versions of the Phoenix: one with Tegra 4, one with Tegra 4i without PoP (using an external DRAM package instead), and one with Tegra 4i and PoP memory. All of them have the same PCB geometry inside, just a different SoC, and in the case of the Tegra 4 version, an external Icera i500 modem. NVIDIA showed us an image of their Tegra 4 Phoenix PCB, and in addition showed us the Tegra 4i non-PoP and Tegra 4 PCBs in the flesh. The Tegra 4 version has to include both the Icera i500 and an MCP DRAM plus NAND of its own adjacent to it, right next to the DRAM for the Tegra 4. On the Tegra 4i version there’s simply unused space in the region occupied by those packages.

Glancing at the Tegra 4i package, we can also get Grey’s actual internal codename, which isn’t T30 series or T40 but rather T8A. The rest of the platform is basically what you’d expect for a modern device, and the PCB follows the rather typical L-shaped design that’s common right now across the entire segment.

NVIDIA also showed a Tegra 4i based version of the Phoenix playing a version of Riptide 2 at 1080p with even more graphical assets (real time lighting, shadows, and improved water simulation) enabled over the previous version of Riptide optimized for Tegra 3.

I didn’t get too much time to play with the Phoenix – like any reference design from any of the players in this space it’s more of a function over form piece of equipment for developers or the silicon vendor themselves to get easy access to the insides – but superficially it’s the right kind of stuff for a smartphone right now.

75 Comments

xsacha - Saturday, March 23, 2013 - link

Tegra4i uses Cortex-A9. Krait is similar to Cortex-A15. The Krait obviously uses way more power and gives way more performance clock-for-clock. So you are comparing apples and oranges here. The 1.9GHz Krait quad-core is roughly equivalent to 2.5GHz+ in a Tegra 4i.

name99 - Monday, February 25, 2013 - link

"But in favor of quad-core: software might start using cores a little more effectively w/time--Google and Apple are apparently trying to make WebKit able to do things like HTML parsing and JavaScript garbage collection in the background, and Microsoft's browser team backgrounds JavaScript compilation"

It would be wise to design for the technology we have today, not the dream of technology we may one day have. As I have stated elsewhere, there is ample evidence that on the desktop, even today, multiple threads running on more than two cores at once is very rare. (More precisely:

- many apps are multithreaded, but those threads tend to be mostly async IO type threads, mostly waiting

- there is a mild win to having three cores available, but it's not much advantage over two cores

- the situation has improved a little over ten years ago (when the first SMT P4s started appearing), when there was little advantage to two cores over one. But most of the improvement is the result of OS vendors moving as much as possible of what they do (GUI, IO, etc) onto the second core.)

The only real code that utilizes multiple cores is video-encoding. In particular both games and photo processing do not use nearly as much multi-core as people imagine.

The situation for mobile is the same, only a little worse because there are fewer simultaneous heavyweight apps running.

Given these facts, and the way code is actually structured today, 4 cores make very little sense.

SMT makes sense, mainly in that its power and area footprint is very low, so it's a win on those occasions when the OS can make use of it. Beyond that, if you have excess transistors available, beefed up vectors (wider registers, and wider units) probably makes more sense. You'll notice that these recommendations parallel what Intel has done over the past few years --- they are not idiots, and desktop code is very similar to mobile code.

As for parallel web browsing, people have been publishing about it for years now; but the real world results remain unimpressive. It remains an unfortunate fact that the things that have been converted to parallel don't seem to be, for most sites, the things that are actually gating performance. A similar problem exists with PDF display (still not as snappy as I would like on an iPad3) --- the simple and obvious things you can imagine for parallelizing the rendering aren't the things that are usually the problem.

In both cases, the ideal situation would be to restart with totally redesigned file formats that are non-serial in nature; but that seems to be a "boil-the-ocean" strategy that no-one wants to commit to yet. (Though it would be nice if Apple and Adobe could get together to redefine a PDF2.0 file format that was explicitly parallel, and that seems rather easier than fixing the web.)

Krysto - Sunday, February 24, 2013 - link

It seems Nvidia really pulled off making Tegra 4's GPU 6x faster than Tegra 3, and with 5 Cortex A15 cores and 6x more GPU cores, all in the same size. Pretty impressive. But still quite disappointing for lack of OpenGL ES 3.0 and OpenCL support. I really hope they plan on supporting them in Tegra 5 along with the new 64-bit CPU and Maxwell-based GPU cores.

Mike1111 - Sunday, February 24, 2013 - link

I would really like to see an analysis/comparison of companion core (Nvidia) vs. big.LITTLE (Samsung).

lmcd - Sunday, February 24, 2013 - link

BIG.little (fixed it for ARM) isn't even in reference device stage yet is it?

Krysto - Monday, February 25, 2013 - link

No need to fix it. The "opposite" style naming is intentional. It's ironic. Get it?

phoenix_rizzen - Monday, February 25, 2013 - link

Exynos 5 Octa, which is A15/A7 big.LITTLE, has been demoed. Tegra 4, which is A15 plus a companion core, has been demoed.

Neither are commercially available, neither are in shipping products, neither are available to consumers.

IOW, the Cortex-A15 variations for big.LITTLE have passed the reference stage, and are in the "find companies to use them to build devices" stage. They'll be in consumers' grubby little hands before Christmas 2013.

tviceman - Sunday, February 24, 2013 - link

GPU performance ended up better than I thought it would after the subdued announcement and leaked early prototype benchmarks. Good to see.

wongwarren - Monday, February 25, 2013 - link

I wonder which is faster. This or the Snapdragon 600.

varad - Monday, February 25, 2013 - link

Snapdragon 600:

http://www.anandtech.com/show/6792/lg-optimus-g-pr...

Tegra 4:

http://www.anandtech.com/show/6787/nvidia-tegra-4-...

So if the metric is simply raw performance [since you asked "faster"], looks like the Tegra 4 will win easily against the Snapdragon 600.

A better/fair comparison would be when we have performance numbers for Snapdragon 600 in a tablet or Tegra 4 in a phone.