Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST - Posted in

- Smartphones

- camera

- Android

- Mobile

The Camera Module & CMOS Sensor Trends

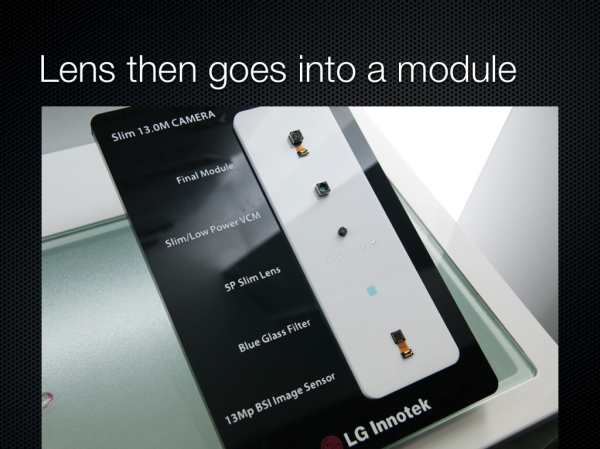

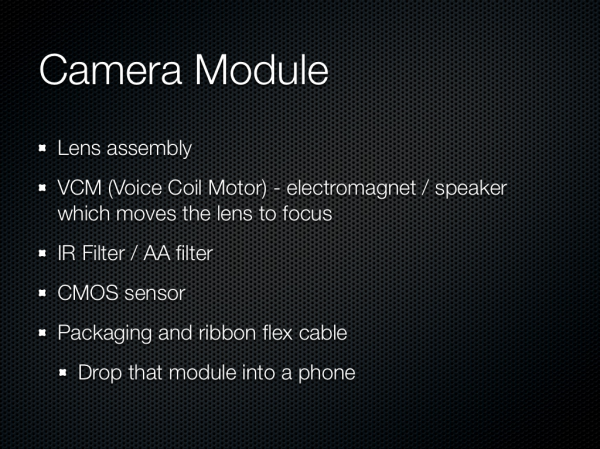

So after we have the lenses, what do they go into? It turns out there is some standardization, and the standard packaging is called a module. The module consists of our lens system, an IR filter, a voice coil motor (VCM) for focusing, and finally the CMOS sensor and its fanout ribbon cable. Fancier systems with optical image stabilization (OIS) will contain a more complicated VCM and also a MEMS gyro somewhere in the module.

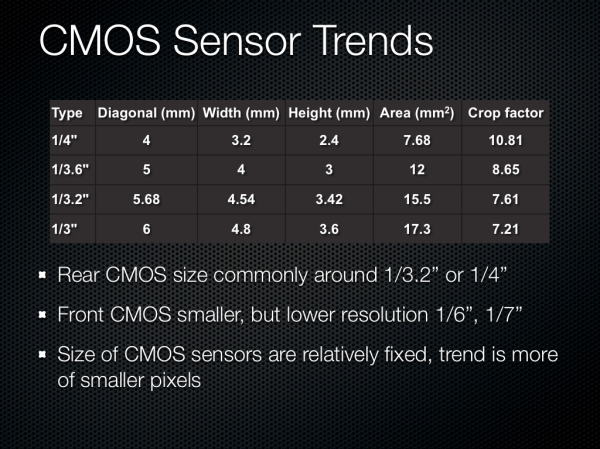

Onto the CMOS, which is of course the image sensor itself. Most smartphone CMOS sensors end up between 1/4" and 1/3" in optical format, which is pretty small. There are certainly outliers, but at the high end this is by far the prevailing trend. Optical format, again, is something we need to look up in a table or get from the manufacturer, since the nominal "inch" figure doesn't directly match the sensor's physical dimensions. Front facing sensors are, unsurprisingly, much smaller. The size of the CMOS in most smartphones has stayed relatively fixed because a larger sensor would necessitate a thicker optical system, so the real way to increase megapixel counts has been to shrink the pixels.
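To make the fixed-size, shrinking-pixel tradeoff concrete, here is a minimal Python sketch that converts an optical format into approximate sensor dimensions and counts how many square pixels of a given pitch fit. It assumes a rule of thumb of roughly 18 mm of diagonal per format "inch" for small sensors and a 4:3 aspect ratio; the 1/3.2" format is only an example inside the 1/4" to 1/3" range, and real sensors deviate from these figures, which is why the manufacturer's table remains the authority.

```python
import math

# Assumption: for small sensors, one "inch" of optical format corresponds to
# roughly 18 mm of sensor diagonal. This is a rule of thumb, not a standard.
MM_PER_FORMAT_INCH = 18.0


def sensor_size_mm(format_denominator, aspect=(4, 3)):
    """Approximate (width, height) in mm for a 1/x-inch optical format."""
    diagonal = MM_PER_FORMAT_INCH / format_denominator
    w, h = aspect
    hyp = math.hypot(w, h)  # diagonal of the aspect-ratio triangle
    return diagonal * w / hyp, diagonal * h / hyp


def megapixels(width_mm, height_mm, pitch_um):
    """Whole pixels of a square pitch (microns) that fit, in megapixels."""
    cols = int(width_mm * 1000 / pitch_um)
    rows = int(height_mm * 1000 / pitch_um)
    return cols * rows / 1e6


# Example format (assumed): 1/3.2", which works out to 4.5 x 3.375 mm here.
width, height = sensor_size_mm(3.2)
for pitch in (1.75, 1.4, 1.1):
    print(f"{pitch} um pixels -> {megapixels(width, height, pitch):.1f} MP")
```

Under these assumptions the same die area holds about 5.0 MP at 1.75 microns, 7.7 MP at 1.4 microns and 12.5 MP at 1.1 microns, roughly matching the 5, 8 and 13 MP sensor generations.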

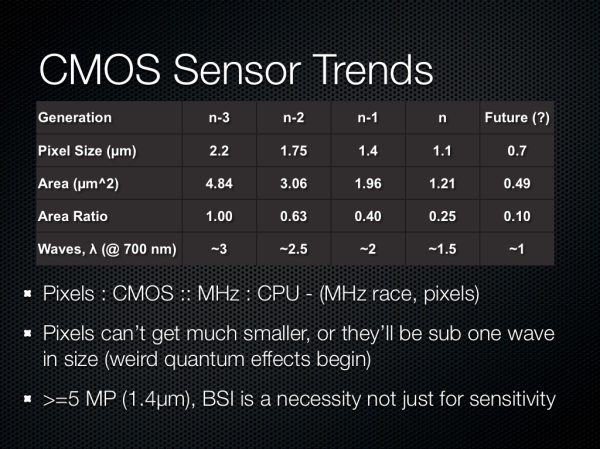

The trend in pixel size has been pretty easy to follow, with each generation moving to a smaller pixel to drive megapixel counts up. The current generation of pixels is around 1.1 microns square. Basically any 13 MP smartphone, like the Optimus G, is shipping 1.1 micron pixels, and interestingly enough others are using 1.1 micron pixels at 8 MP to enable thinner modules, like the thinner Optimus G option or the Nexus 4. The previous generation of 8 MP sensors used 1.4 micron pixels, and before that, at 5 MP, we were talking about 1.65 or 1.75 micron pixels. Those are pretty tiny pixels: if you stop and think about a wave of very red light at around 700 nm, a 1.1 micron pixel is only about 1.5 wavelengths across, a 1.4 micron pixel around 2, and so forth. There really isn't much smaller you can go, since it doesn't make sense to go smaller than one wavelength.
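The wavelength comparison is simple arithmetic. A short Python sketch divides each pixel pitch from the paragraph above by a 700 nm red wavelength; the generation labels are just shorthand, and 1.75 microns is used for the 5 MP era.

```python
# Pixel pitches in microns for each sensor generation described in the text.
PITCHES_UM = {"5 MP": 1.75, "8 MP": 1.4, "13 MP": 1.1}
RED_WAVELENGTH_UM = 0.700  # "very red" light at roughly 700 nm


def pitch_in_wavelengths(pitch_um, wavelength_um=RED_WAVELENGTH_UM):
    """How many wavelengths of light span one pixel."""
    return pitch_um / wavelength_um


for generation, pitch in PITCHES_UM.items():
    waves = pitch_in_wavelengths(pitch)
    print(f"{generation}: {pitch} um = {waves:.2f} wavelengths")
```

This prints 2.50, 2.00 and 1.57 wavelengths respectively; at 1.1 microns a pixel is already within a factor of two of the one-wavelength floor.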

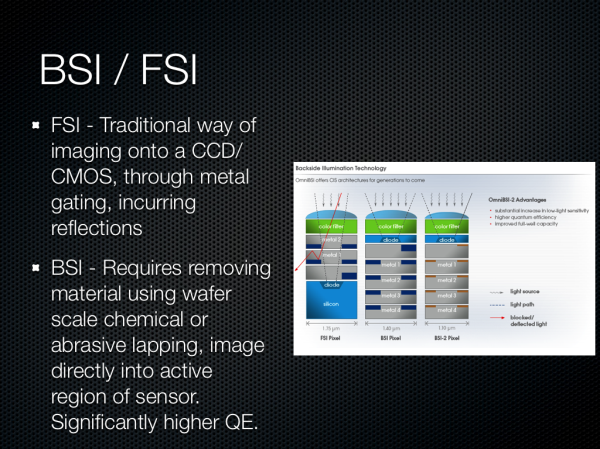

There was a lot of talk about the difference between backside illumination (BSI) and front side illumination (FSI) as well. BSI images directly through the silicon into the active region of the pixel, whereas FSI images through the metal wiring layers, which cause reflections and reduce the light-collecting area, and thus lose light. BSI has been around for a while in industrial and scientific applications that want the highest quantum efficiency (the fraction of photons converted to electrons), and while it was adopted in smartphones to increase the sensitivity (quantum efficiency) of these pixels, there's an even more important reason. With pixels this small in 2D profile (e.g. 1.4 × 1.4 microns), the actual geometry of a front side illuminated pixel began to look something like a long hallway, or a very tall cylinder. The result would be quantum blur: a photon imaged onto the surface of the pixel and converted to an electron might not map to the appropriate active region underneath, since the electron takes an almost random walk for some distance. In addition, the numerical aperture of these pixels wouldn't be nearly good enough for the optical systems they would be paired with.

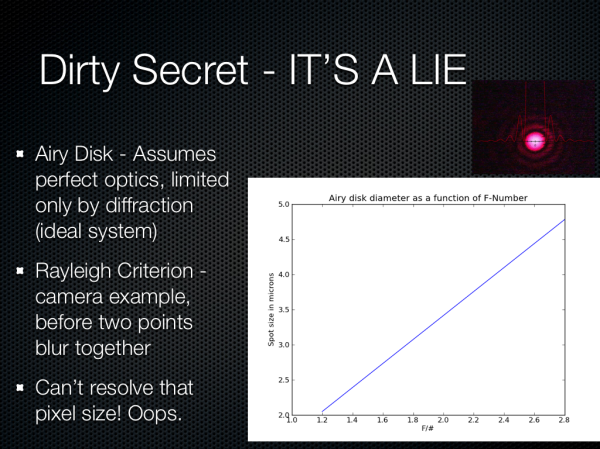

Around the time I received the One X and One S last year, I became curious about whether we could ever see nice bokeh (blurry background) with an F/2.0 system and small pixels. While trapped on some flight somewhere, I finally got bored enough to go quantify this, and a side effect was the question of whether an ideal, diffraction limited system (no aberrations, as if we had perfect optics) could even resolve a spot the size of the pixels on these sensors.

It turns out that we can't, really. If we look at the Airy disk diameter formed by a perfect, diffraction limited HTC One X or One S camera system (I chose these parameters since at the time this was, and still is, the best system on paper), we get a spot size of around 3.0 microns. There's some fudge factor here, since a Bayer color filter array sits atop the CMOS and its output is then demosaiced (interpolated), more on that later, so we're close to the right size, but 1.1 microns is clearly oversampling.
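For an ideal circular aperture, the Airy disk diameter out to the first dark ring is 2.44 λN, where λ is the wavelength and N the f-number; half of that, 1.22 λN, is the Rayleigh separation between two just-resolved points. The Python sketch below evaluates both for an F/2.0 lens and counts how many pixels span the disk. The sample wavelengths are assumptions for illustration, and the result moves with wavelength: green light near 550 nm gives about 2.7 microns, while a 3.0 micron diameter corresponds to light a little longer than 600 nm.

```python
F_NUMBER = 2.0  # the F/2.0 aperture discussed in the text


def airy_diameter_um(f_number, wavelength_um):
    """Diameter of the Airy disk to its first null: 2.44 * lambda * N."""
    return 2.44 * wavelength_um * f_number


def rayleigh_separation_um(f_number, wavelength_um):
    """Rayleigh criterion (radius of the first null): 1.22 * lambda * N."""
    return 1.22 * wavelength_um * f_number


# Assumed sample wavelengths (blue, green, red) in microns.
for wavelength in (0.45, 0.55, 0.65):
    d = airy_diameter_um(F_NUMBER, wavelength)
    r = rayleigh_separation_um(F_NUMBER, wavelength)
    # How many 1.1 and 1.4 micron pixels fit across the disk diameter.
    print(f"{wavelength * 1000:.0f} nm: Airy diameter {d:.2f} um, "
          f"Rayleigh {r:.2f} um, {d / 1.1:.1f} px @1.1 um, "
          f"{d / 1.4:.1f} px @1.4 um")
```

Whether "resolving a spot" should be judged against the diameter or the radius is a choice of criterion; the sketch reports both so the pixel comparison can be made either way.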

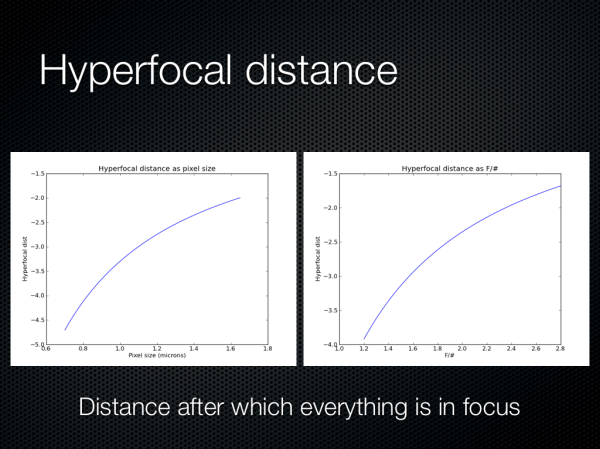

Oh, and here are some hyperfocal distance plots as a function of pixel size and F/# for the same system. It turns out that with your average smartphone, everything from quite close to the camera out to infinity is in focus, so you have to be pretty close to the subject to get a nice bokeh effect.
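Hyperfocal distance follows the standard thin-lens approximation H = f²/(N c) + f, where f is the focal length, N the f-number and c the acceptable circle of confusion; focusing at H keeps everything from roughly H/2 to infinity acceptably sharp. The Python sketch below is not the calculation behind these plots: the 3.6 mm focal length is a hypothetical value, and the candidate values of c (the pixel pitches, plus a spot about the size of an F/2.0 Airy disk in green light) are assumptions. The choice of c changes the answer a lot.

```python
FOCAL_MM = 3.6  # hypothetical smartphone focal length (assumption)


def hyperfocal_mm(focal_mm, f_number, coc_mm):
    """Hyperfocal distance H = f^2 / (N * c) + f, all lengths in mm."""
    return focal_mm ** 2 / (f_number * coc_mm) + focal_mm


# Candidate circles of confusion in microns (assumptions): the pixel pitches
# from the text, plus a ~2.7 um spot similar to an F/2.0 Airy disk in green.
for coc_um in (1.1, 1.4, 1.75, 2.7):
    for n in (2.0, 2.8):
        h = hyperfocal_mm(FOCAL_MM, n, coc_um / 1000)
        # Focused at H, depth of field runs from about H/2 to infinity.
        print(f"c = {coc_um} um, F/{n}: H = {h / 1000:.2f} m, "
              f"sharp from ~{h / 2000:.2f} m")
```

Whatever c is chosen, H falls as the tolerated blur grows or the aperture is stopped down, and a focal length of only a few millimetres keeps H at a few metres or less, which is why background blur needs a close subject.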

60 Comments

ssj3gohan - Sunday, February 24, 2013

Couple of comments on this and your rant in the podcast :)

First of all, you're lauding HTC for their larger pixel size and lamenting the move towards smaller pixels. But isn't it true that effective resolution, especially when your pixels are significantly smaller than the Airy disk, is basically a function of integration area? The only downside to using smaller pixels is that you increase the effect of read noise and decrease fill factor. In an ideal world, a 100MP phone camera with the same sensor size as a 10MP one would make pictures that are just as good. With read noise being essentially absent nowadays, I don't see the reason to particularly bash on 13MP phone cameras compared to larger-pixel but same-integration-area sensors. They make the same pictures, just take up a little less space on the SD card.

Of course, you could make the argument that it's wrong to give in to the 'moar megapixels!' consumer side of things and try to educate people that sometimes less is more.

Next, you say that refractive index and focal length is essentially what limits the focal length for very thin cameras, but this can be alleviated by using diffractive optics (not yet, but in the future). We may very well see 3mm-thickness 35mm focal length equivalent camera modules with large sensors someday. It's technically possible, especially with, as you said, nanodiamonds and other very high refractive index synthetic lens materials in the making.

Next, about the resolving power. There's the Airy disk and Rayleigh's criterion, but this is not the end of resolving power. It does make sense to oversample beyond this point; you will get extra image information. It becomes exponentially less as you increase the megapixel count, but you can still get about 150% extra image information by oversampling beyond the size of the Airy disk. Again, in an ideal world without drawbacks to doing so, this does make sense.

tuxRoller - Sunday, February 24, 2013

Especially with the use of metamaterials that make use of negative indices of refraction to allow you to resolve detail beyond the diffraction limit?

ssj3gohan - Monday, February 25, 2013

Well, keep in mind that the reason you can resolve beyond the diffraction limit is the fact that the geometrical properties of the sensor and optics differ. Optics will by definition cause Gaussian blur as their defect mode, while the sensor has square and offset pixels. These areas do not overlap perfectly, so in order to perfectly image that blurry optical image you need pixels that are smaller than the fundamental size of the diffraction pattern (Airy disk).

These optical effects don't go away when you're using metamaterials/quantum optics/etc. Light will still be a wave that will not necessarily enter the sensor perfectly perpendicularly.

UltraTech79 - Monday, February 25, 2013

I have seen many, many reviews of lenses and the technical details of digital imaging etc., and almost every time the article would have really shitty JPG images. I found it highly ironic. Kudos to you for using PNG throughout this quality article.

AnnihilatorX - Monday, February 25, 2013

I was reading the review of Sony's Xperia Z at TechRadar, and I was astonished at how poorly the 13MP Exmor RS sensor performs. Frankly, the image looks blurry, more like a 5MP shot scaled up, with heavy noise even in a well lit scene: http://mos.futurenet.com/techradar/art/mobile_phon...

While I don't really care too much about smartphone cameras, and I use my budget DSLR (cheaper than a smartphone) for my photography pleasure, I was wondering whether the MP race and new-gen smartphones could eliminate the need for me to lug a DSLR around. If this article is correct about the physical limitations of smartphone camera technology, it looks like there is still a future for DSLRs.

danacee - Monday, February 25, 2013

Traditional, aka -crap- P&S cameras are clearly at a disadvantage now, with only the still very useful optical zoom keeping them alive. However, high end 'big' sensor P&S cameras such as the not-too-young Sony RX100 are still many, many generations ahead of smartphone cameras; even the Nokia PureView has terrible image quality next to it.

pandemonium - Tuesday, February 26, 2013

I am surprised at the lack of mention of Carl Zeiss lenses in here. If you're going to make an article about lens quality and cameraphone technology, why wouldn't you include the best in the market for such? Or are we disputing that fact?

Also, not all cameraphones suffer as much from dramatic lens flare discoloration issues as said "very popular phone."

ShieTar - Tuesday, February 26, 2013

Sure, you get a 3µm diffraction spot on your camera, and with 1.1µm pixels it gets oversampled. But that does not have to be a waste. As long as the diffraction pattern is well characterised, you can remove the diffraction effect through a deconvolution as part of your ISP. This even remains true for near-field optical effects that occur once your pixel size gets close to or below the image wavelength. As long as such corrections are implemented, and as long as your per-pixel noise is small enough for these algorithms to work, decreasing the pixel size does make a certain sense.

Once noise becomes a larger problem than resolution, the smaller pixels hurt though, by wasting light through the larger crop factor and also by increasing the overall read-out noise. When exactly that point is reached depends on the light conditions you want to use your camera in, so it would be interesting to understand for which kind of conditions smartphone cameras are being optimised.

rwei - Wednesday, February 27, 2013

hurr hurr

theSuede - Wednesday, February 27, 2013

I don't know where your Rayleigh limit comes from, but in real world optics, Rayleigh is 1.22 × F# × wavelength, giving 1.3 µm for green (550 nm) light in an F2.0 lens.

But maybe it's your interpretation of Rayleigh that is wrong, and that's where the error stems from. From the graphs, you show the spot resolution limit as 2× Rayleigh, and it isn't. Spot resolution is 1× Rayleigh, giving an F2.0 lens a maximum resolution of the aforementioned 1.3 µm, NOT 2.6 µm.

The definition of Rayleigh:

"Two point sources are regarded as just resolved when the principal diffraction maximum of one image coincides with the first minimum of the other."

"Just resolved" in this case means a resulting MTF of about 7%, i.e. the minimum distance between two peaks at which you can still resolve that they are two, not one large spot, is equal to the RADIUS of the first null of the Airy disk, not the diameter. This is quite a common error made by people from the "E" side of ElectrOptics.