Understanding Camera Optics & Smartphone Camera Trends, A Presentation by Brian Klug

by Brian Klug on February 22, 2013 5:04 PM EST

Posted in: Smartphones, camera, Android, Mobile

The Camera Module & CMOS Sensor Trends

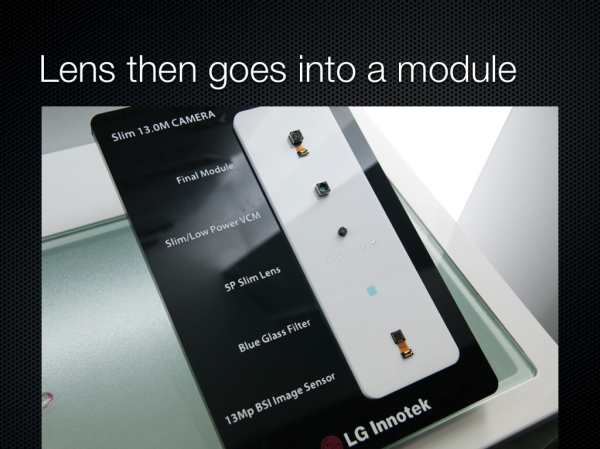

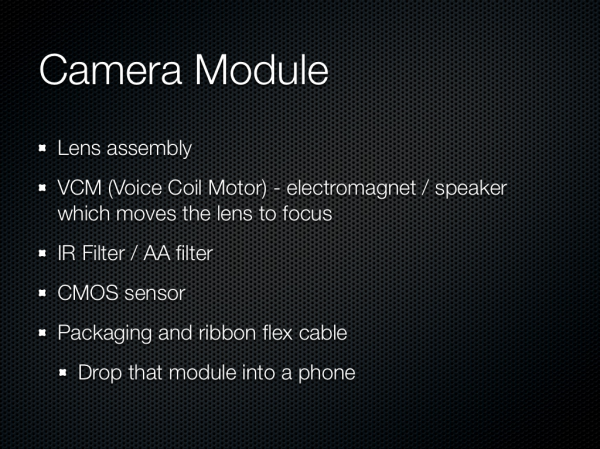

So after we have the lenses, what do they go into? It turns out there is some standardization here, and the standardized package is called a module. The module consists of our lens system, an IR filter, a voice coil motor (VCM) for focusing, and finally the CMOS sensor and fanout ribbon cable. Fancy systems with OIS will contain a more complicated VCM and also a MEMS gyro somewhere in the module.

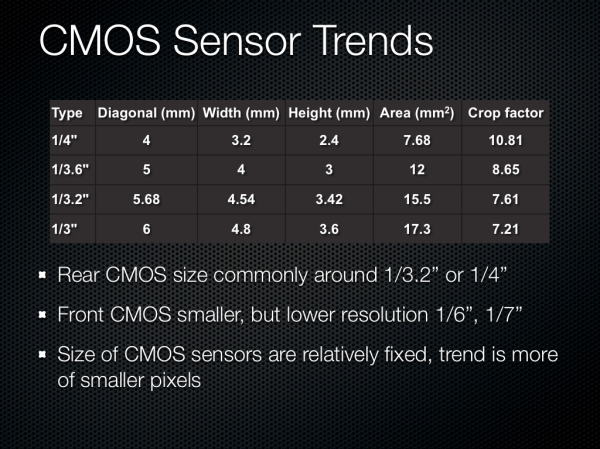

Onto CMOS, which is of course the image sensor itself. Most smartphone CMOS sensors end up being between 1/4" and 1/3" in optical format, which is pretty small. There are some outliers for sure, but at the high end this is by far the prevailing trend. Optical format is again something we need to look up in a table or consult the manufacturer about. Front facing sensors are way smaller, unsurprisingly. The size of the CMOS in most smartphones has been relatively fixed because going to a larger sensor would necessitate a thicker optical system, so the real way megapixel counts have gone up is through smaller pixels.

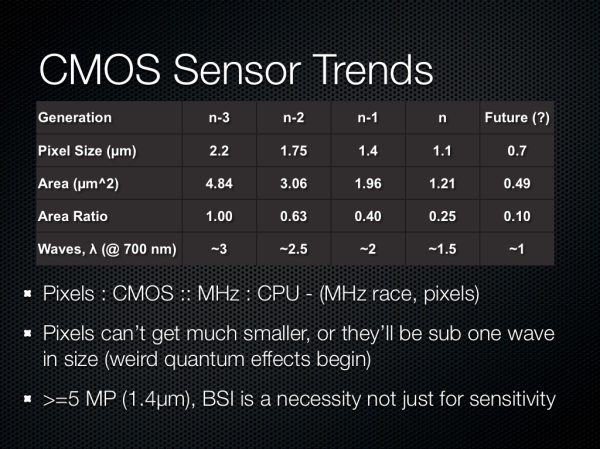

The trend in pixel size has been pretty easy to follow, with each generation going to a smaller pixel to drive megapixel counts up. The current generation of pixels is around 1.1 microns square. Basically any 13 MP smartphone is shipping 1.1 micron pixels, like the Optimus G, and interestingly enough others are using 1.1 micron pixels at 8 MP to drive thinner modules, like the thinner Optimus G option or Nexus 4. The previous generation of 8 MP sensors used 1.4 micron pixels, and before that at 5 MP we were talking 1.65 or 1.75 micron pixels. Those are pretty tiny pixels, and if you stop and think about a wave of very red light at around 700 nm, we’re talking about 1.5 waves across a 1.1 micron pixel, around 2 waves at 1.4 microns, and so forth. There’s really not much smaller you can go; it doesn’t make sense to go smaller than one wave.
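The wave counts quoted above are just pixel pitch divided by wavelength. As a minimal sketch of that arithmetic (the function and constant names are made up for illustration, and 700 nm is the "very red" wavelength used in the text):

```python
# Pixel pitches mentioned in the text, in microns.
PIXEL_PITCHES_UM = [1.75, 1.65, 1.4, 1.1]

# "Very red" light, per the text: about 700 nm = 0.7 microns.
RED_WAVELENGTH_UM = 0.7


def waves_per_pixel(pitch_um, wavelength_um=RED_WAVELENGTH_UM):
    """Return how many wavelengths fit across one side of a pixel."""
    return pitch_um / wavelength_um


if __name__ == "__main__":
    for pitch in PIXEL_PITCHES_UM:
        waves = waves_per_pixel(pitch)
        print(f"{pitch:.2f} um pixel: {waves:.2f} waves of 700 nm light")
```

Shorter wavelengths (green, blue) fit more waves across the same pixel, so red is the limiting case here.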

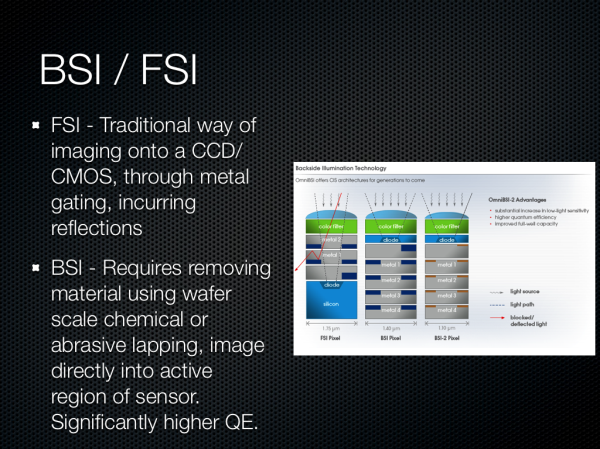

There was a lot of talk about the difference between backside illumination (BSI) and front side illumination (FSI) for systems as well. BSI images directly through silicon into the active region of the pixel, whereas FSI images through metal layers, which incur reflections and leave a smaller open area, and thus lose light. BSI has been around for a while in the industrial and scientific fields for applications wanting the highest quantum efficiency (conversion of photons to electrons), and while it was adopted in smartphones to increase the sensitivity (quantum efficiency) of these pixels, there’s an even more important reason. With pixels this small in 2D profile (e.g. 1.4 x 1.4 microns), the actual geometry of a pixel began to look something like a long hallway, or a very tall cylinder. The result would be quantum blur, where a photon imaged onto the surface of the pixel and converted to an electron might not necessarily map to the appropriate active region underneath; it takes an almost random walk for some distance. In addition, the numerical aperture of these pixels wouldn’t be nearly good enough for the systems they would be paired with.

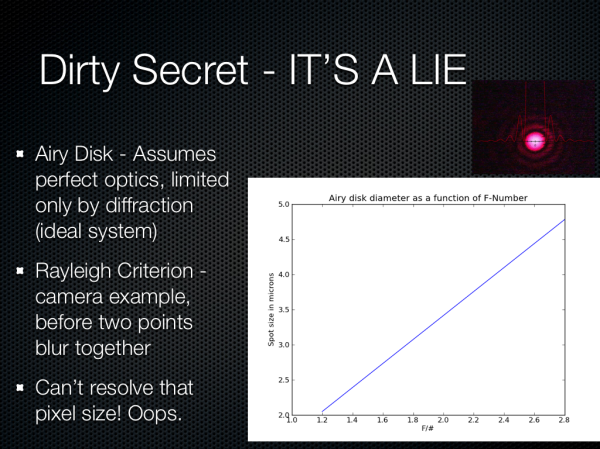

Around the time I received the One X and One S last year, I finally became curious about whether we could ever see nice bokeh (blurry background) with an F/2.0 system and small pixels. While trapped on some flight somewhere, I finally got bored enough to go quantify what this would be, and a side effect of this was the question of whether an ideal, diffraction limited system (no aberrations, as if we had perfect optics) could even resolve a spot the size of the pixels on these sensors.

It turns out that we can’t, really. If we look at the Airy disk diameter formed by a perfect diffraction limited HTC One X or S camera system (the parameters I chose since at the time this was, and still is, the best system on paper), we get a spot size around 3.0 microns. There’s some fudge factor here since interpolation takes place thanks to the Bayer grid atop the CMOS, which then gets demosaiced (more on that later), so we’re close to being at around the right size, but obviously 1.1 microns is just oversampling.
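For anyone who wants to play with the numbers, here is a minimal sketch using the standard first-null Airy disk diameter, d = 2.44 · λ · N, where N is the F/#. The wavelength behind the 3.0 micron figure isn't stated above, so the wavelengths below are assumptions, and the function names are made up for illustration:

```python
def airy_disk_diameter_um(f_number, wavelength_um):
    """Airy disk diameter out to the first dark ring, in microns.

    Uses the standard diffraction result d = 2.44 * wavelength * F/#.
    """
    return 2.44 * wavelength_um * f_number


def pixels_across_spot(f_number, wavelength_um, pixel_pitch_um):
    """How many pixel widths the Airy disk diameter spans."""
    return airy_disk_diameter_um(f_number, wavelength_um) / pixel_pitch_um


if __name__ == "__main__":
    f_number = 2.0  # F/2.0, as on the One X / One S
    # Assumed wavelengths (not given in the text): green and red.
    for wavelength_um in (0.55, 0.70):
        d = airy_disk_diameter_um(f_number, wavelength_um)
        print(f"lambda = {wavelength_um:.2f} um: Airy diameter = {d:.2f} um")
        for pitch_um in (1.4, 1.1):
            n = pixels_across_spot(f_number, wavelength_um, pitch_um)
            print(f"  {pitch_um} um pixels: spot spans {n:.1f} pixels")
```

Across visible wavelengths at F/2.0 this lands in the 2 to 3.5 micron range, which is consistent with the roughly 3.0 micron spot quoted above. Note that the Airy radius (1.22 · λ · N) is half this value, so it matters which of the two a given chart is reporting.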

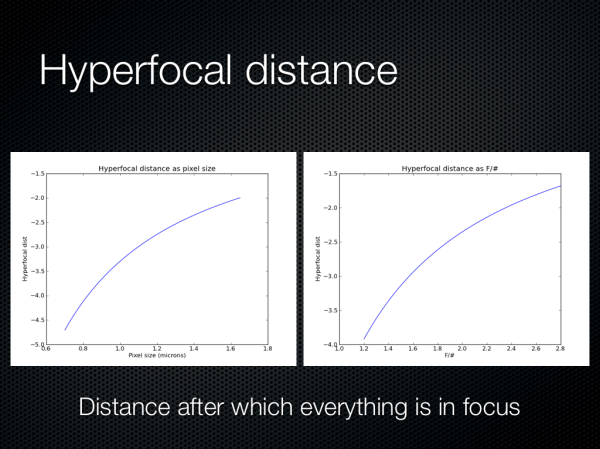

Oh, and also here are some hyperfocal distance plots as a function of pixel size and F/# for the same system. It turns out that everything beyond a fairly short distance from your average smartphone is in focus, so you have to be pretty close to the subject to get a nice bokeh effect.
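A rough way to reproduce this kind of plot is the usual hyperfocal formula, H = f² / (N · c) + f, with the circle of confusion c tied to pixel size. The sketch below is only that: the 3.63 mm focal length and the choice of c = 2 pixels are assumptions that don't come from the text above, and the function name is made up for illustration.

```python
def hyperfocal_distance_mm(focal_length_mm, f_number, pixel_pitch_um, coc_pixels=2.0):
    """Hyperfocal distance in mm, with the circle of confusion set by pixel size.

    H = f^2 / (N * c) + f, where c = coc_pixels * pixel pitch.
    coc_pixels = 2 is an assumption, not a value from the article.
    """
    coc_mm = coc_pixels * pixel_pitch_um / 1000.0  # microns -> mm
    return focal_length_mm ** 2 / (f_number * coc_mm) + focal_length_mm


if __name__ == "__main__":
    focal_length_mm = 3.63  # assumed smartphone focal length, not from the text
    for f_number in (2.0, 2.4, 2.8):
        for pitch_um in (1.75, 1.4, 1.1):
            h_m = hyperfocal_distance_mm(focal_length_mm, f_number, pitch_um) / 1000.0
            print(f"F/{f_number}, {pitch_um} um pixels: hyperfocal ~ {h_m:.2f} m")
```

When focused at H, everything from about H/2 out to infinity is acceptably sharp, which is why subjects have to be very close before the background falls visibly out of focus.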

60 Comments


MrSpadge - Sunday, February 24, 2013 - link

You're right, conceptually one would "only" need to adapt a multi-junction solar cell for spatial resolution, i.e. small pixels. This would introduce shadowing for the bottom layers similar to front side illumination again, though. Which might be countered with vias through the chips, at the cost of making manufacturing more expensive. And the materials and their processing become way more expensive in general, as they will be CMOS incompatible III-V composites.

And worst: one could only gain a 3 times higher light sensitivity at maximum, so currently it's probably not worth the effort.

mdar - Thursday, February 28, 2013 - link

I think you are talking about Foveon sensors, used by Sigma to make some of their DSLR cameras. Since photons of different colors have different energies, they use this principle to detect color. Not sure how they do it (probably by checking at which depth the electron is generated), but there is a lot of information on the web about it.

fuzzymath10 - Saturday, February 23, 2013 - link

one of the non-traditional imaging sensors around is the foveon x3 sensor. each pixel can sense all three primary colours rather than relying on bayer interpolation. it does have many limitations though.

evonitzer - Wednesday, February 27, 2013 - link

Yeah, like only being in Sigma cameras that use Sigma mounts. Who on earth buys those things? The results are stunning to see, but they need some, well, design wins, to use the parlance of cell phones.

They also need to make tiny sensors. AFAIK they only have the APS-C one, and those won't be showing up in phones anytime soon. :)

ShieTar - Tuesday, February 26, 2013 - link

The camera equivalent of a 3 LCD projector does exist, for example in so-called "Multi-Spectral Imager" instruments for space missions. The light entering the camera aperture is split into spectral bands by dichroic mirrors, and then imaged on a number of CCDs.

The problem with this approach is that it takes considerable engineering effort to make sure that all the CCDs are aligned to each other with sub-pixel accuracy. Of course the cost of multiple CCDs and the space demand for the more complex optical system make this option quite irrelevant for mobile devices.

nerd1 - Friday, February 22, 2013 - link

I wonder who actually tested the captured image using proper analysing software (DxO for example) to see how much they ACTUALLY resolve?

And I don't think we get a diffraction limit of 3um - see the chart here

http://egami.blog.so-net.ne.jp/2011-07-11

We have 1.34um at f2.0 and 1.88um at f2.8.

Typical 8MP sensors have 1.4um photosites, so 8MP sensors look like an ideal spot for f2 optics. (Yes, 13MP @ 1.1um is just a marketing gimmick, I think.)

In comparison, 36MP Nikon D800 has 4.9um photosite size, which is diffraction limited between f5.6 and f8.

jjj - Friday, February 22, 2013 - link

"smartphones are or are poised to begin displacing the role of a traditional point and shoot camera"

That started quite a while ago, so a rather disappointing "trends" section. Was waiting for some actual features, ways to get there, and more talk about video since it's becoming a lot more important.

Johnmcl7 - Friday, February 22, 2013 - link

Agreed, the presentation feels a bit out of date for current technology, particularly as you say phone cameras have been displacing compact cameras for years - I'd say right back to the N95, which offered a decent 5MP AF camera and was released before the first iPhone.

I'm also surprised to see pretty much no mention of Nokia even though they've very much been pushing the camera limits. Their ultra high resolution Pureview camera showed you could have a very high number of pixels and high image quality (which this article seems to claim isn't possible even with lower resolution devices), and the Lumia 920 is an interesting step forward in having a physical image stabilisation system.

Also with regards to shallow depth of field with F2, that's just not going to happen on a camera phone because depth of field is primarily a function of the actual focal length (not the equivalent focal length) so to get a proper shallow depth of field effect (as in not shooting at very close macro distances) a camera phone would need a massive aperture many stops wider than F2 to counter the very short focal length.

John

Tarwin - Saturday, February 23, 2013 - link

Actually it makes sense he doesn't mention all that. He's talking about trends, the Pureview did not fit into the trends, both in quality and sensor size.

Optical image stabilization doesn't fit in either as it only affects image quality in less than ideal situations such as no tripods/shaky hands. But he did mention the need for extra parts in the module configuration should that be part of the setup.

And in his defence of the comment of displacing P&S cameras, he says "smartphones are or are poised to begin displacing the role" so he's not saying that they aren't doing it already, he gives you a choice in perspectives. Also I don't think you can say the N95 displaced P&S at the market level, only in casual use.

Manabu - Friday, February 22, 2013 - link

What about the "large" sensor 41MP Nokia 808 phone? It is sure an interesting outlier.

And point & shoot cameras still have the advantage of optical zoom, better handling, and can have bigger sensors. Just look at S110, LX7 or RX100 cameras. But budget super-compact cameras are indeed going extinct.