NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Power, Temperature, & Noise

Last but certainly not least, we have our obligatory look at power, temperature, and noise. Next to price and performance of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason to ignore the noise.

It’s for that reason that GPU manufacturers also seek to keep power usage down, and under normal circumstances there’s a pretty clear relationship between power consumption, heat generated, and the amount of noise the fans will generate to remove that heat. At the same time however this is an area that NVIDIA is focusing on for Titan, as a premium product means they can use premium materials, going above and beyond what more traditional plastic cards can do for noise dampening.

GeForce GTX Titan Voltages

| Titan Max Boost | Titan Base | Titan Idle |
|---|---|---|
| 1.1625v | 1.012v | 0.875v |

Stopping quickly to take a look at voltages, Titan’s peak stock voltage is 1.1625v, which corresponds to its highest speed bin of 992MHz. As the clockspeeds go further down these voltages drop, to a load low of 0.95v at 744MHz. That peak ends up being a bit less than the GTX 680 and most other desktop Kepler cards, which go up just a bit higher to 1.175v. Since NVIDIA is classifying 1.175v as an “overvoltage” on Titan, it looks like GK110 isn’t going to be quite as tolerant of voltages as GK104 was.

GeForce GTX Titan Average Clockspeeds

| Max Boost Clock | 992MHz |
|---|---|
| DiRT:S | 992MHz |
| Shogun 2 | 966MHz |
| Hitman | 992MHz |
| Sleeping Dogs | 966MHz |
| Crysis | 992MHz |
| Far Cry 3 | 979MHz |
| Battlefield 3 | 992MHz |
| Civilization V | 979MHz |

One thing we quickly notice about Titan is that, thanks to GPU Boost 2.0 and the shift from a primarily power-based boost system to a temperature-based one, it hits its maximum speed bin far more often and sustains it for longer. This is especially true since Titan no longer has a concept of a power target, and any power limits are based entirely on TDP. Half of our games have an average clockspeed of 992MHz, or in other words they never triggered a power or thermal condition that would require Titan to scale back its clockspeed. For the rest of our tests the worst clockspeed was all of 2 bins (26MHz) lower at 966MHz, with this being a mix of hitting both thermal and power limits.
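The bin arithmetic here is easy to check with a few lines of Python. This is only a sketch: the uniform 13MHz bin size is an assumption inferred from the "2 bins (26MHz)" figure, and the names are ours rather than anything NVIDIA exposes. The clockspeeds are the averages from the table above.

```python
# Average clockspeeds (MHz) per game, taken from the table above.
MAX_BOOST_MHZ = 992
# Assumption: every boost bin is the same size, inferred from "2 bins (26MHz)".
BIN_MHZ = 13

avg_clocks = {
    "DiRT:S": 992,
    "Shogun 2": 966,
    "Hitman": 992,
    "Sleeping Dogs": 966,
    "Crysis": 992,
    "Far Cry 3": 979,
    "Battlefield 3": 992,
    "Civilization V": 979,
}


def bins_below_max(clock_mhz, max_mhz=MAX_BOOST_MHZ, bin_mhz=BIN_MHZ):
    """Number of whole boost bins between a clockspeed and the top bin."""
    return (max_mhz - clock_mhz) // bin_mhz


bins = {game: bins_below_max(mhz) for game, mhz in avg_clocks.items()}
at_max = [game for game, n in bins.items() if n == 0]  # never left the top bin
worst = max(bins.values())                             # deepest average drop

print(f"{len(at_max)} of {len(bins)} games averaged the top bin")
print(f"worst case: {worst} bins ({worst * BIN_MHZ}MHz) below max")
```

Since these are averages, a result does not have to land on a bin boundary; here they all happen to.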

On a side note, it’s worth pointing out that these are well in excess of NVIDIA’s official boost clock for Titan. With Titan boost bins being based almost entirely on temperature, the average boost speed for Titan is going to be more dependent on environment (intake) temperatures than GTX 680 was, so our numbers are almost certainly a bit higher than what one would see in a hotter environment.

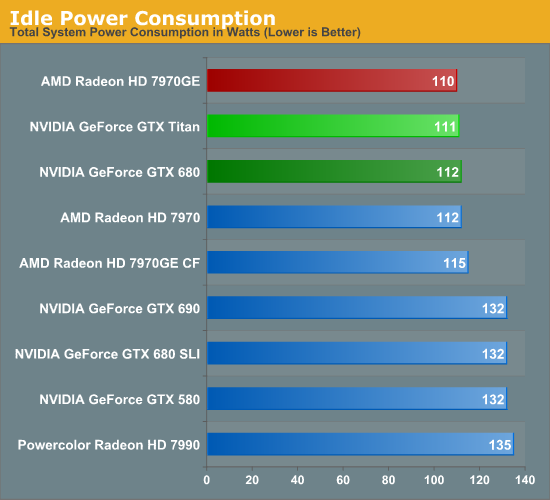

Starting as always with a look at power, there’s nothing particularly out of the ordinary here. AMD and NVIDIA have become very good at managing idle power through power gating and other techniques, and as a result idle power has come down by leaps and bounds over the years. At this point we still typically see some correlation between die size and idle power, but that’s a few watts at best. So at 111W at the wall, Titan is up there with the best cards.

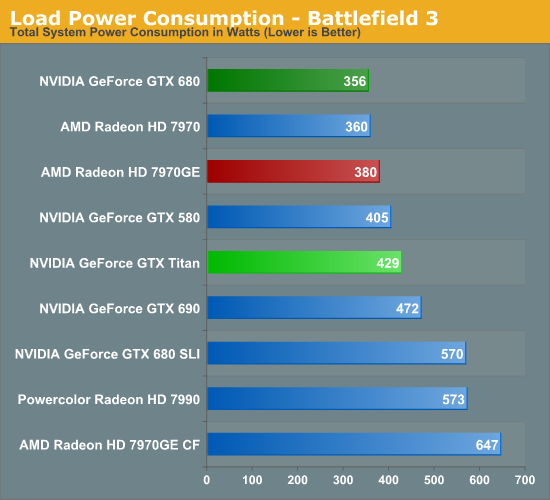

Moving on to our first load power measurement, as we’ve dropped Metro 2033 from our benchmark suite we’ve replaced it with Battlefield 3 as our game of choice for measuring peak gaming power consumption. BF3 is a difficult game to run, but overall it presents a rather typical power profile, which makes it one of the best representatives of all the games in our benchmark suite.

In any case, as we can see Titan’s power consumption comes in below all of our multi-GPU configurations, but higher than any other single-GPU card. Titan’s 250W TDP is 55W higher than GTX 680’s 195W TDP, and with a 73W difference at the wall this isn’t too far off. A bit more surprising is that it’s drawing nearly 50W more than our 7970GE at the wall, given the fact that we know the 7970GE usually gets close to its TDP of 250W. At the same time since this is a live game benchmark, there are more factors than just the GPU in play. Generally speaking, the higher a card’s performance here, the harder the rest of the system will have to work to keep said card fed, which further increases power consumption at the wall.
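As a rough sanity check on the TDP and wall numbers above, the sketch below compares the two deltas. The PSU efficiency is a hypothetical value chosen for illustration and is not something we measured. TDPs are also not direct measurements of power draw, so treat the result as a ballpark only.

```python
# Figures quoted in the text.
TITAN_TDP_W = 250
GTX680_TDP_W = 195
WALL_DELTA_W = 73  # Titan minus GTX 680 at the wall under Battlefield 3

# Hypothetical PSU efficiency, assumed for illustration only.
ASSUMED_PSU_EFFICIENCY = 0.87

# Difference between the two cards' rated TDPs.
tdp_delta_w = TITAN_TDP_W - GTX680_TDP_W

# Wall power includes PSU conversion losses, so the extra power actually
# delivered to the system is smaller than the wall delta.
dc_delta_w = WALL_DELTA_W * ASSUMED_PSU_EFFICIENCY

# Whatever is left over is attributable to the rest of the system
# (CPU, memory) working harder to feed the faster card, plus TDP slack.
remainder_w = dc_delta_w - tdp_delta_w

print(f"TDP delta:       {tdp_delta_w}W")
print(f"DC-side delta:   {dc_delta_w:.1f}W (assumed efficiency)")
print(f"Rest of system:  {remainder_w:.1f}W")
```

Under that assumed efficiency, most of the gap between the 55W TDP delta and the 73W wall delta disappears into PSU losses, leaving only a few watts for the rest of the system.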

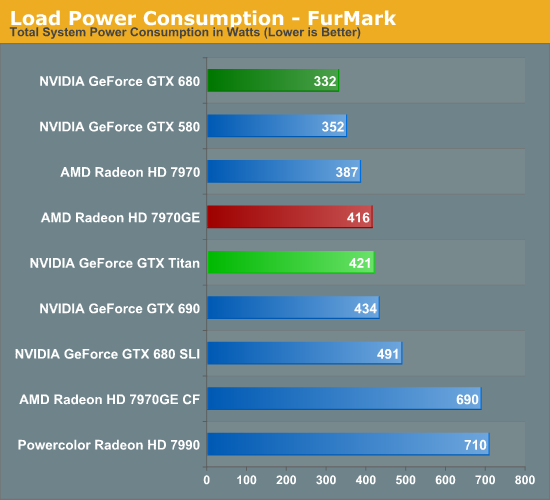

Moving to Furmark our results keep the same order, but the gap between the GTX 680 and Titan widens, while the gap between Titan and the 7970GE narrows. Titan and the 7970GE shouldn’t be too far apart from each other in most situations due to their similar TDPs (even if NVIDIA and AMD TDPs aren’t calculated in quite the same way), so in a pure GPU power consumption scenario this is what we would expect to see.

Titan for its part is the traditional big NVIDIA GPU, and while NVIDIA does what they can to keep it in check, at the end of the day it’s still going to be among the more power hungry cards in our collection. Power consumption itself isn’t generally a problem with these high end cards so long as a system has the means to cool it and doesn’t generate much noise in doing so.

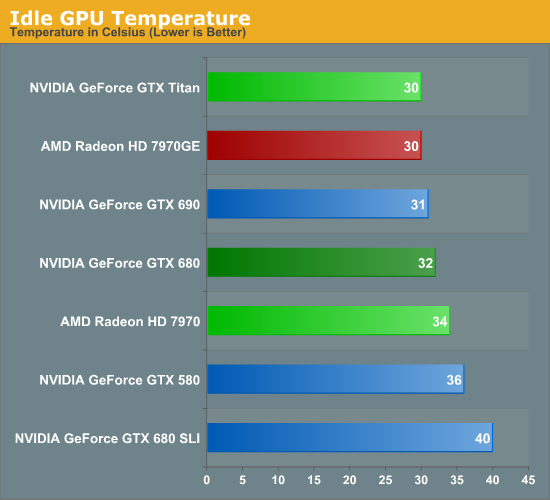

Moving on to temperatures, for a single card idle temperatures should be under 40C for anything with at least a decent cooler. Titan for its part is among the coolest at 30C; its large heatsink combined with its relatively low idle power consumption makes it easy to cool here.

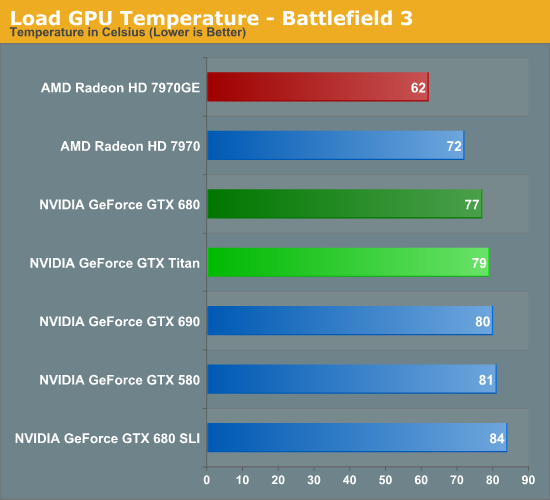

Because Titan’s boost mechanisms are now temperature based, Titan’s temperatures are going to naturally gravitate towards its default temperature target of 80C as the card raises and lowers clockspeeds to maximize performance while keeping temperatures at or under that level. As a result just about any heavy load is going to see Titan within a couple of degrees of 80C, which makes for some very predictable results.
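To illustrate why a temperature-based boost system settles near its target, here is a toy simulation. It is not NVIDIA's algorithm: the thermal model, the response rate, the 836MHz floor, and the one-bin-per-step control rule are all assumptions made up for this sketch. Only the 80C target, the 992MHz top bin, and the 13MHz bin size (inferred from the clockspeed data) come from the article.

```python
BIN_MHZ = 13                                  # inferred bin size
MAX_CLOCK_MHZ = 992                           # top boost bin
MIN_CLOCK_MHZ = MAX_CLOCK_MHZ - 12 * BIN_MHZ  # 836MHz, hypothetical floor
TARGET_C = 80.0                               # default temperature target
AMBIENT_C = 25.0                              # assumed intake temperature
# Toy model: full load at the top bin would settle 61C above ambient.
HEAT_C_PER_MHZ = 61.0 / MAX_CLOCK_MHZ
RESPONSE = 0.1                                # fraction of the gap closed per step


def steady_state_temp(clock_mhz, load):
    """Temperature the card would settle at if the clock never changed."""
    return AMBIENT_C + load * HEAT_C_PER_MHZ * clock_mhz


def simulate(load, steps=300):
    """Return a list of (clock_mhz, temp_c) pairs, one per time step."""
    clock = MAX_CLOCK_MHZ
    temp = AMBIENT_C
    history = []
    for _ in range(steps):
        # Temperature drifts toward the steady state for the current clock.
        temp += RESPONSE * (steady_state_temp(clock, load) - temp)
        if temp >= TARGET_C:
            # At or over target: step down one bin.
            clock = max(MIN_CLOCK_MHZ, clock - BIN_MHZ)
        elif clock < MAX_CLOCK_MHZ:
            # Under target with headroom: step back up one bin.
            clock = min(MAX_CLOCK_MHZ, clock + BIN_MHZ)
        history.append((clock, temp))
    return history


heavy = simulate(load=1.0)  # would exceed 80C at the top bin
light = simulate(load=0.5)  # never gets near 80C

print("heavy load, last step:", heavy[-1])
print("light load, last step:", light[-1])
```

In this model the light load never leaves the top bin, while the heavy load trades clockspeed for temperature and hovers within a few degrees of the target, which is the same qualitative behavior we see from Titan under any heavy load.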

Looking at our other cards, while the various NVIDIA cards are still close in performance the 7970GE ends up being quite a bit cooler due to its open air cooler. This is typical of what we see with good open air coolers, though with NVIDIA’s temperature based boost system I’m left wondering if perhaps those days are numbered. So long as 80C is a safe temperature, there’s little reason not to gravitate towards it with a system like NVIDIA’s, regardless of the cooler used.

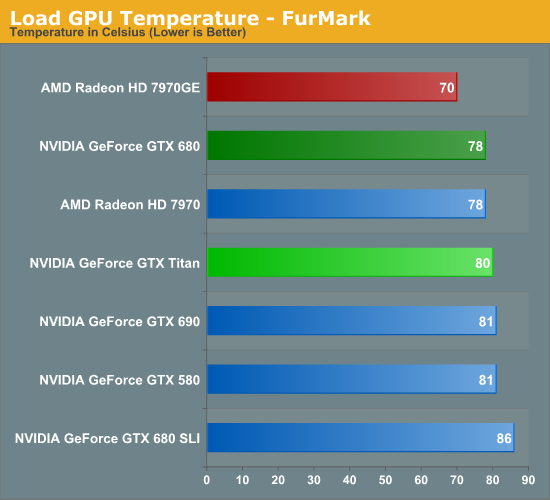

With Furmark we see everything pull closer together as Titan holds fast at 80C while most of the other cards, especially the Radeons, rise in temperature. At this point Titan is clearly cooler than a GTX 680 SLI, 2C warmer than a single GTX 680, and still a good 10C warmer than our 7970GE.

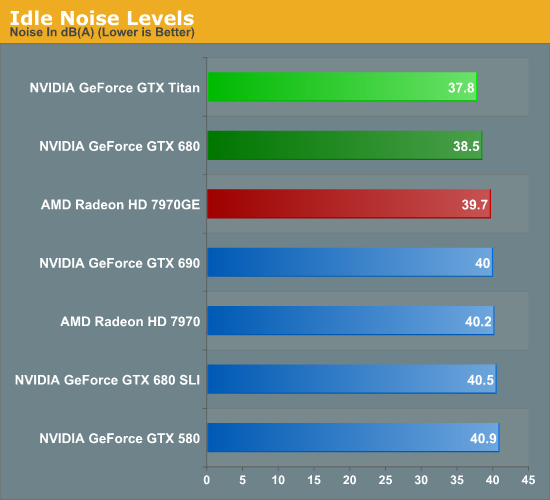

Just as with the GTX 690, one of the things NVIDIA focused on was construction choices and materials to reduce noise generated. So long as you can keep noise down, then for the most part power consumption and temperatures don’t matter.

Simply looking at idle shows that NVIDIA is capable of delivering on their claims. At 37.8dB Titan is the quietest actively cooled high-end card we’ve measured yet, besting even the luxury GTX 690 and the also well-constructed GTX 680. Though really with the loudest setup being all of 40.5dB, none of these setups is anywhere near loud at idle.

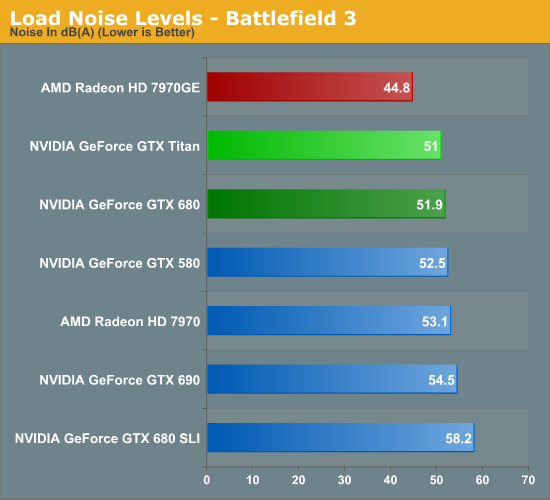

It’s with load noise that we finally see the full payoff of Titan’s build quality. At 51dB it’s only marginally quieter than the GTX 680, but as we recall from our earlier power data, Titan is drawing just over 70W more than GTX 680 at the wall. In other words, despite the fact that Titan is drawing significantly more power than GTX 680, it’s still as quiet as or quieter than the aforementioned card. This coupled with Titan’s already high performance is Titan’s true power in NVIDIA’s eyes; it’s not just fast, but despite its speed and despite its TDP it’s as quiet as any other blower based card out there, allowing them to get away with things such as Tiki and tri-SLI systems with reasonable noise levels.

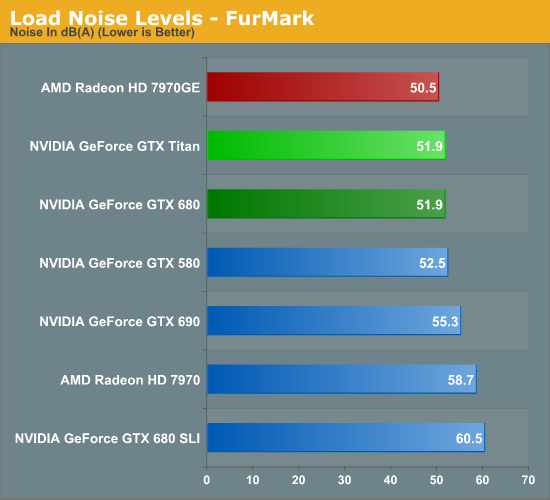

Much like what we saw with temperatures under Furmark, noise under Furmark has our single-GPU cards bunching up. Titan goes up just enough to tie GTX 680 in our pathological scenario, meanwhile our multi-GPU cards start shooting up well past Titan, while the 7970GE jumps up to just shy of Titan. This is a worst case scenario, but it’s a good example of how GPU Boost 2.0’s temperature functionality means that Titan quite literally keeps its cool and thereby keeps its noise in check.

Of course we would be remiss not to point out that in all these scenarios the open air cooled 7970GE is still quieter, and in our gaming scenario by quite a bit. Not that Titan is loud, but it doesn’t compare to the 7970GE. Ultimately we get to the age old debate between blowers and open air coolers; open air coolers are generally quieter, but blowers allow for more flexibility with products, and are more lenient with cases with poor airflow.

Ultimately Titan is a blower so that NVIDIA can do concept PCs like Tiki, which is something an open air cooler would never be suitable for. For DIY builders the benefits may not be as pronounced, but this is also why NVIDIA is focusing so heavily on boutique systems where the space difference really matters. AMD’s best blower-capable card, realistically speaking, is the vanilla 7970, a less power hungry but also much less powerful card.

337 Comments

cliffnotes - Thursday, February 21, 2013

Price is a disgrace. Can we really be surprised though ? We saw the 680 release and knew then they were selling their mid ranged card as a flagship with a flagship price. We knew then the real flagship was going to come at some point. I admit I assumed they would replace the 680 with it and charge maybe 600 or 700. Can't believe they're trying to pawn it off for 1000. Looks like nvidia has decided to try and reshape what the past flagship performance level is worth. 8800gtx,280,285,480,580 all 500-600, we all know gtx680 is not a proper flagship and was their mid-range. Here is the real one and..... 1000

Outrageous.

ogreslayer - Thursday, February 21, 2013

Problem here is this gen none of the reviewers chewed out AMD for the 7970. This led Nvidia to think it was totally fine to release GK104 for $500 which was still cheaper then a 7970 but not where that die was originally slotted and to do this utter insanity with a $1000 solution that is more expensive then solutions that are faster then it. 7950 3-way Crossfire, GTX690, GTX660Ti 3 Way SLI, GTX670SLI and GTX680SLI are all better options for anyone who isn't spending $3000 on cards as even dual card you are better off with the GTX690s in SLI. Poor form Nvidia, poor form. But poor form to every reviewer who gives this an award of any kind. It's time to start taking pricing and availability into the equation.

I think I'd have much less of an issue if partners had access to GK110 dies binned for slightly lower clocks and limited to 3GB at 750-800. I'd wager you'd hit close to the same performance window at a more reasonable price that people wouldn't have scoffed at. GTX670SLI is about $720...

HisDivineOrder - Thursday, February 21, 2013

Pretty much agree. GPU reviewers of late have been so forgiving toward nVidia and AMD for all kinds of crap. They don't seem to have the cahoneys to put their foot down and say, "This far, no farther!" They just keep bowing their head and saying, "Can I have s'more, please?" Pricing is way out of hand, but the reviewers here and elsewhere just seem to be living in a fantasy world where these prices make even an iota of sense.

That said, the Titan is a halo card and I don't think 99% of people out there are even supposed to be considering it.

This is for that guy you read about on the forum thread who says he's having problems with quad-sli working properly. This is for him to help him spend $1k more on GPU's than he already would have.

So then we can have a thread with him complaining about how he's not getting optimal performance from his $3k in GPU's. And how, "C'mon, nVidia! I spent $3k in your GPU's! Make me a custom driver!"

Which, if I'd spent 3k in GPU's, I'd probably want my very own custom driver, too.

ronin22 - Thursday, February 21, 2013

For 3k, you can pay a good developer (all cost included) for about 5 days, to build your custom driver. Good luck with that :D

CeriseCogburn - Tuesday, February 26, 2013

I can verify that programmer pricing personally. Here is why we have crap amd crashing and driver problems only worsening still.

33% CF failure, right frikkin now.

Driver teams decimated by losing financial reality.

"Investing" as our many local amd fanboy retard destroyers like to proclaim, in an amd card, is one sorry bet on the future.

It's not an investment.

If it weren't for the constant crybaby whining about price in a laser focused insane fps only dream world of dollar pinching beyond the greatest female coupon clipper in the world's OBSESSION level stupidity, I could stomach an amd fanboy buying Radeons at full price and not WHINING in an actual show of support for the failing company they CLAIM must be present for "competition" to continue.

Instead our little hoi polloi amd ragers rape away at amd's failed bottom line, and just shortly before screamed nVidia would be crushed out of existence by amd's easy to do reduction in prices.... it went on and on and on for YEARS as they were presented the REAL FACTS and ignored them entirely.

Yes, they are INSANE.

Perhaps now they have learned to keep their stupid pieholes shut in this area, as their meme has been SILENCED for it's utter incorrectness.

Thank God for small favors YEARS LATE.

Keep crying crybabies, it's all you do now, as you completely ignore amd's utter FAILURE in the driver department and are STUPID ENOUGH to unconsciously accept "the policy" about dual card usage here, WHEN THE REALITY IS NVIDIA'S CARDS ALWAYS WORK AND AMD'S FAIL 33% OF THE TIME.

So recommending CROSSFIRE cannot occur, so here is thrown the near perfect SLI out with the biased waters.

ANOTHER gigantic, insane, lie filled BIAS.

Congratulations amd fanboys, no one could possibly be more ignorant nor dirtier. That's what lying and failure is all about, it's all about amd and their little CLONES.

CeriseCogburn - Saturday, February 23, 2013

Prices have been going up around the world for a few years now. Of course mommies basement has apparently not been affected by the news.

trajan2448 - Thursday, February 21, 2013

Awesome card! best single GPU on the planet at the moment. Almost 50% better in frame latencies than 7970. Crossfire, don't make me laugh. here's an analysis. Many of the frames "rendered" by the 7970 and especially Crossfire aren't visible. http://www.pcper.com/reviews/G...

CeriseCogburn - Thursday, February 21, 2013

So amd has been lying, and the fraps boys have been jiving for years now.... It's coming out - the BIG LIE of the AMD top end cards... LOL

Fraudster amd and their idiot fanboys are just about finished.

http://www.pcper.com/reviews/Graphics-Cards/NVIDIA...

LOL- shame on all the dummy reviewers

Alucard291 - Sunday, February 24, 2013

What you typed here sounds like sarcasm. And you're actually serious aren't you?

That's really cute. But can you please take your comments to 4chan/engadget where they belong.

CeriseCogburn - Sunday, February 24, 2013

Ok troll, you go to wherever the clueless reign. You will fit right in. Those aren't suppositions I made, they are facts.