NVIDIA’s GeForce GTX Titan Review, Part 2: Titan's Performance Unveiled

by Ryan Smith & Rahul Garg on February 21, 2013 9:00 AM EST

Power, Temperature, & Noise

Last but certainly not least, we have our obligatory look at power, temperature, and noise. Next to price and performance, of course, these are some of the most important aspects of a GPU, due in large part to the impact of noise. All things considered, a loud card is undesirable unless there’s a sufficiently good reason to ignore the noise.

It’s for that reason that GPU manufacturers also seek to keep power usage down: under normal circumstances there’s a pretty clear relationship between power consumption, heat generated, and the amount of noise the fans will generate to remove that heat. At the same time, however, noise is an area NVIDIA is focusing on for Titan, as a premium product means they can use premium materials, going above and beyond what more traditional plastic cards can do for noise dampening.

GeForce GTX Titan Voltages

| Titan Max Boost | Titan Base | Titan Idle |
|---|---|---|
| 1.1625V | 1.012V | 0.875V |

Stopping quickly to take a look at voltages, Titan’s peak stock voltage is 1.1625V, which corresponds to its highest speed bin of 992MHz. As clockspeeds go farther down, these voltages drop, to a load low of 0.95V at 744MHz. This ends up being a bit less than the GTX 680 and most other desktop Kepler cards, which go up just a bit higher to 1.175V. Since NVIDIA is classifying 1.175V as an “overvoltage” on Titan, it looks like GK110 isn’t going to be quite as tolerant of high voltages as GK104 was.

GeForce GTX Titan Average Clockspeeds

| | Clockspeed |
|---|---|
| Max Boost Clock | 992MHz |
| DiRT:S | 992MHz |
| Shogun 2 | 966MHz |
| Hitman | 992MHz |
| Sleeping Dogs | 966MHz |
| Crysis | 992MHz |
| Far Cry 3 | 979MHz |
| Battlefield 3 | 992MHz |
| Civilization V | 979MHz |

One thing we quickly notice about Titan is that, thanks to GPU Boost 2 and its shift from a primarily power-based boost system to a temperature-based one, Titan hits its maximum speed bin far more often and sustains it for longer. This is especially true since there’s no longer a concept of a power target with Titan; any power limits are based entirely on TDP. Half of our games have an average clockspeed of 992MHz, meaning they never triggered a power or thermal condition that would require Titan to scale back its clockspeed. For the rest of our tests the worst clockspeed was all of 2 bins (26MHz) lower, at 966MHz, the result of a mix of thermal and power limits.
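The bin arithmetic above can be checked against the clockspeed table. The sketch below assumes a uniform 13MHz bin size, which is what the "2 bins (26MHz)" figure implies, and counts how many bins each game's average clockspeed sits below the 992MHz top bin. Because these are averages, a non-integer result would indicate time split across bins.

```python
# Average clockspeeds (MHz) taken from the table above.
MAX_BOOST_MHZ = 992
BIN_MHZ = 13  # ASSUMPTION: uniform bin size, implied by "2 bins (26MHz)"

averages = {
    "DiRT:S": 992,
    "Shogun 2": 966,
    "Hitman": 992,
    "Sleeping Dogs": 966,
    "Crysis": 992,
    "Far Cry 3": 979,
    "Battlefield 3": 992,
    "Civilization V": 979,
}


def bins_below_max(avg_mhz: int, max_mhz: int = MAX_BOOST_MHZ, step: int = BIN_MHZ) -> float:
    """Number of boost bins the average clock sits below the top bin."""
    return (max_mhz - avg_mhz) / step


for game, mhz in averages.items():
    print(f"{game:15s} {mhz}MHz  {bins_below_max(mhz):.1f} bin(s) below max")

# Count the games that never left the top bin on average.
at_max = sum(1 for mhz in averages.values() if mhz == MAX_BOOST_MHZ)
print(f"{at_max} of {len(averages)} games averaged the top bin")
```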

On a side note, it’s worth pointing out that these averages are well in excess of NVIDIA’s official boost clock for Titan. With Titan’s boost bins based almost entirely on temperature, its average boost speed is going to depend more on environmental (intake) temperatures than GTX 680’s did, so our numbers are almost certainly a bit higher than what one would see in a hotter environment.

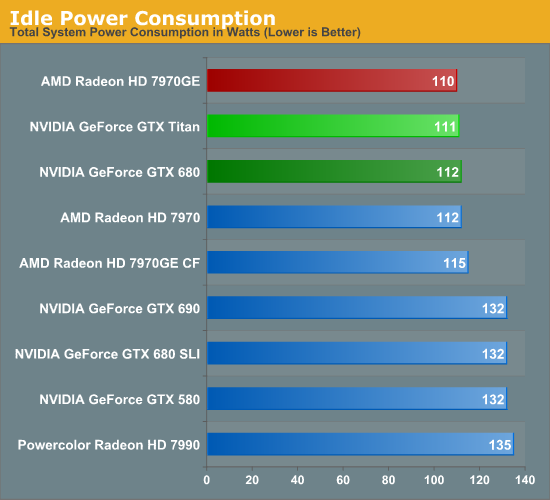

Starting as always with a look at power, there’s nothing particularly out of the ordinary here. AMD and NVIDIA have become very good at managing idle power through power gating and other techniques, and as a result idle power has come down by leaps and bounds over the years. At this point we still typically see some correlation between die size and idle power, but that’s a few watts at best. So at 111W at the wall, Titan is up there with the best cards.

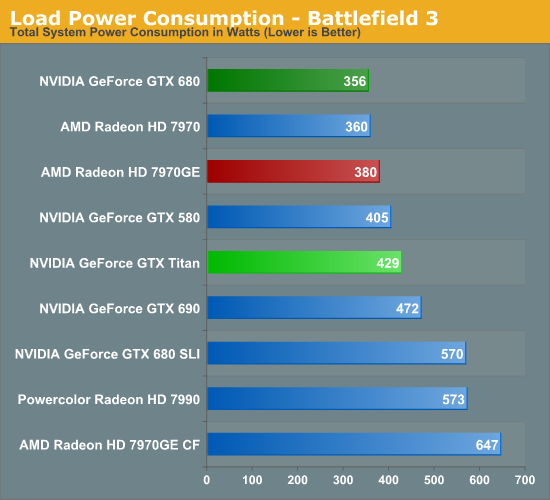

Moving on to our first load power measurement: since we’ve dropped Metro 2033 from our benchmark suite, we’ve replaced it with Battlefield 3 as our game of choice for measuring peak gaming power consumption. BF3 is a demanding game to run, but overall it presents a rather typical power profile, which makes it one of the best representatives of all the games in our benchmark suite.

In any case, as we can see, Titan’s power consumption comes in below all of our multi-GPU configurations, but higher than any other single-GPU card. Titan’s 250W TDP is 55W higher than GTX 680’s 195W TDP, and with a 73W difference at the wall this isn’t too far off. A bit more surprising is that it’s drawing nearly 50W more than our 7970GE at the wall, given that we know the 7970GE usually gets close to its TDP of 250W. At the same time, since this is a live game benchmark, there are more factors than just the GPU in play. Generally speaking, the higher a card’s performance here, the harder the rest of the system has to work to keep said card fed, which further increases power consumption at the wall.
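To see why a 55W TDP gap can plausibly show up as a 73W gap at the wall, recall that a wall meter reads AC input, so any DC-side difference is inflated by power supply losses. The sketch below assumes an 87% efficient PSU (the review does not state the efficiency of the test bed's supply) and that both cards run at exactly their TDP, which is a simplification; whatever is left over is a rough estimate of the extra load on the rest of the system.

```python
TITAN_TDP_W = 250
GTX680_TDP_W = 195
WALL_DELTA_W = 73  # measured Titan vs. GTX 680 difference at the wall (BF3)

PSU_EFFICIENCY = 0.87  # ASSUMPTION: typical efficiency for a quality PSU at this load


def wall_watts(dc_watts: float, efficiency: float = PSU_EFFICIENCY) -> float:
    """AC power drawn at the wall to deliver dc_watts to the components."""
    return dc_watts / efficiency


tdp_delta = TITAN_TDP_W - GTX680_TDP_W          # 55W on the DC side
expected_wall_delta = wall_watts(tdp_delta)     # what 55W looks like at the wall
residual = WALL_DELTA_W - expected_wall_delta   # left over for CPU/platform load

print(f"TDP delta: {tdp_delta}W")
print(f"Expected wall delta at {PSU_EFFICIENCY:.0%} efficiency: {expected_wall_delta:.1f}W")
print(f"Remainder attributed to the rest of the system: {residual:.1f}W")
```

Under these assumptions PSU losses account for most of the gap, and the remaining watts or so line up with the point above that a faster card makes the rest of the system work harder.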

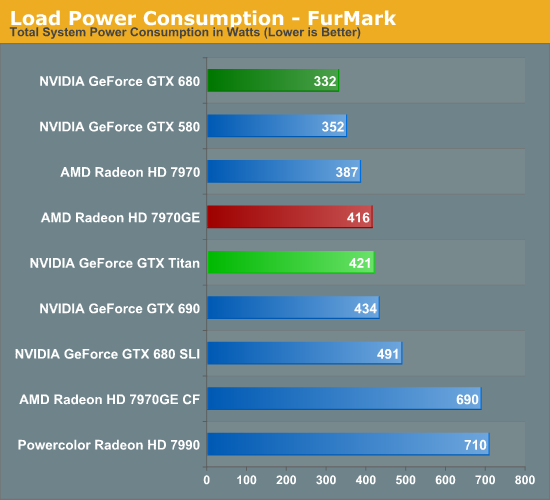

Moving to Furmark our results keep the same order, but the gap between the GTX 680 and Titan widens, while the gap between Titan and the 7970GE narrows. Titan and the 7970GE shouldn’t be too far apart from each other in most situations due to their similar TDPs (even if NVIDIA and AMD TDPs aren’t calculated in quite the same way), so in a pure GPU power consumption scenario this is what we would expect to see.

Titan for its part is the traditional big NVIDIA GPU, and while NVIDIA does what they can to keep it in check, at the end of the day it’s still going to be among the more power hungry cards in our collection. Power consumption itself isn’t generally a problem with these high end cards so long as a system has the means to cool it and doesn’t generate much noise in doing so.

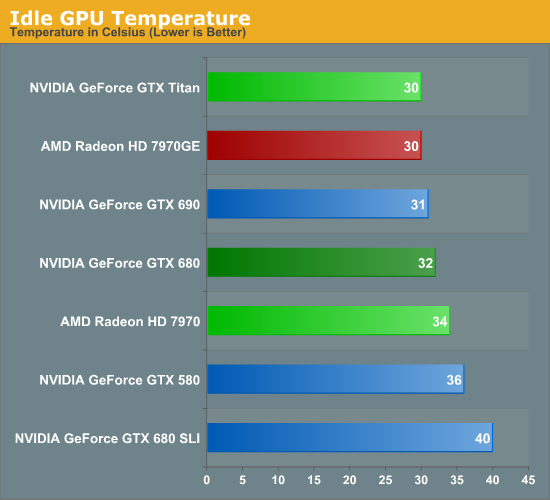

Moving on to temperatures, for a single card idle temperatures should be under 40C for anything with at least a decent cooler. Titan for its part is among the coolest at 30C; its large heatsink combined with its relatively low idle power consumption makes it easy to cool here.

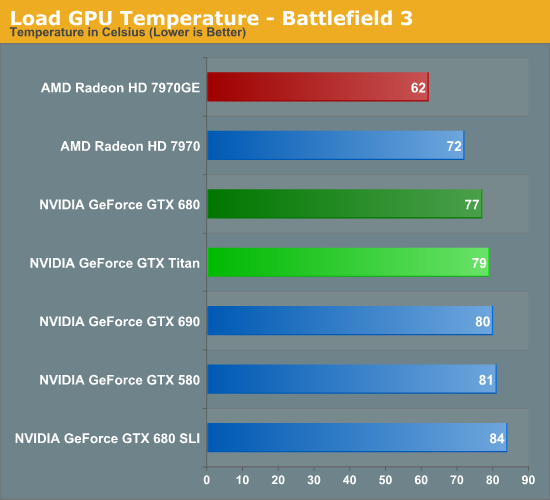

Because Titan’s boost mechanisms are now temperature based, Titan’s temperatures are going to naturally gravitate towards its default temperature target of 80C as the card raises and lowers clockspeeds to maximize performance while keeping temperatures at or under that level. As a result just about any heavy load is going to see Titan within a couple of degrees of 80C, which makes for some very predictable results.
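The feedback loop described above can be illustrated with a toy simulation. This is a hypothetical model, not NVIDIA's GPU Boost 2.0 algorithm. The controller drops one 13MHz bin when the GPU is above the 80C target and adds one when it is below. A first-order thermal model then moves temperature toward an equilibrium that rises with clockspeed. The ambient temperature, thermal lag, 837MHz floor, and `c_per_mhz` load/cooling coefficient are all made-up values for illustration.

```python
MAX_MHZ = 992         # top speed bin observed in the review
MIN_MHZ = 837         # ASSUMPTION: hypothetical floor for the controller
BIN_MHZ = 13          # bin size implied by "2 bins (26MHz)"
TEMP_TARGET_C = 80.0  # default temperature target
AMBIENT_C = 25.0      # ASSUMPTION: intake temperature
ALPHA = 0.2           # ASSUMPTION: fraction of the gap to equilibrium closed per tick


def next_clock(clock_mhz: int, temp_c: float) -> int:
    """Drop one bin when above the target, add one when below, within limits."""
    if temp_c > TEMP_TARGET_C:
        return max(MIN_MHZ, clock_mhz - BIN_MHZ)
    if temp_c < TEMP_TARGET_C:
        return min(MAX_MHZ, clock_mhz + BIN_MHZ)
    return clock_mhz


def simulate(c_per_mhz: float, ticks: int = 200) -> tuple[int, float]:
    """Run the loop; c_per_mhz stands in for how hot the load and cooler run the GPU."""
    clock, temp = MAX_MHZ, 30.0  # start at idle temperature, top bin
    for _ in range(ticks):
        clock = next_clock(clock, temp)
        equilibrium = AMBIENT_C + c_per_mhz * clock  # steady-state temp at this clock
        temp += ALPHA * (equilibrium - temp)         # first-order thermal lag
    return clock, temp


print(simulate(0.05))  # light load: never reaches 80C, stays at the top bin
print(simulate(0.06))  # heavy load: settles several bins down, hovering near 80C
```

With a light load the model never reaches 80C and holds its top bin, much like the games that averaged 992MHz. With a heavier load the clockspeed walks down until temperature hovers around the target, which mirrors how Titan's load temperatures cluster around 80C.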

Looking at our other cards, while the various NVIDIA cards are still close to one another, the 7970GE ends up being quite a bit cooler thanks to its open air cooler. This is typical of what we see with good open air coolers, though with NVIDIA’s temperature based boost system I’m left wondering if perhaps those days are numbered. So long as 80C is a safe temperature, there’s little reason not to gravitate towards it with a system like NVIDIA’s, regardless of the cooler used.

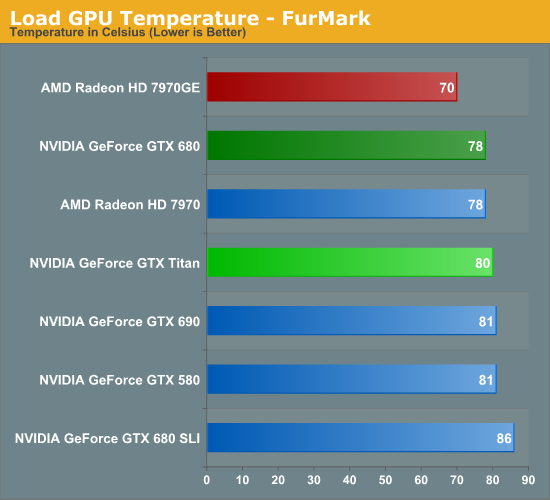

With Furmark we see everything pull closer together as Titan holds fast at 80C while most of the other cards, especially the Radeons, rise in temperature. At this point Titan is clearly cooler than a GTX 680 SLI, 2C warmer than a single GTX 680, and still a good 10C warmer than our 7970GE.

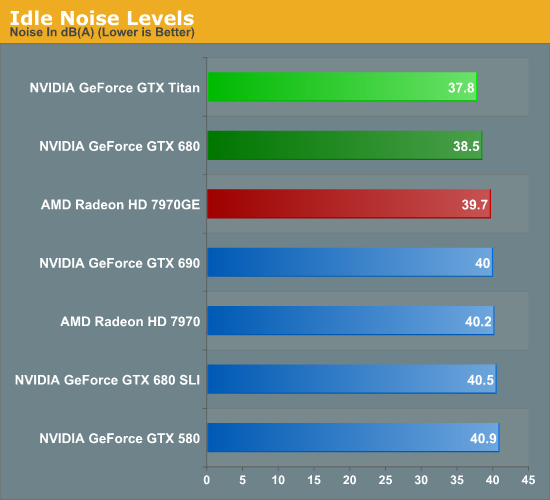

Just as with the GTX 690, one of the things NVIDIA focused on was construction choices and materials to reduce the noise generated. So long as you can keep noise down, power consumption and temperatures for the most part don’t matter.

Simply looking at idle shows that NVIDIA is capable of delivering on their claims. At 37.8dB, Titan is the quietest actively cooled high-end card we’ve measured yet, besting even the luxury GTX 690 and the similarly well-constructed GTX 680. Though really, with the loudest setup measuring all of 40.5dB, none of these setups is anywhere near loud at idle.
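Since decibels are logarithmic, small-looking differences in these charts are larger than they appear. As a rough aid for reading them, the sketch below converts a level difference into a sound power ratio, 10^(ΔdB/10). It also gives an approximate perceived-loudness ratio, using the common rule of thumb that +10dB sounds about twice as loud; that second figure is an approximation, not a measurement.

```python
def power_ratio(db_delta: float) -> float:
    """Acoustic power ratio corresponding to a level difference in dB."""
    return 10 ** (db_delta / 10)


def loudness_ratio(db_delta: float) -> float:
    """Approximate perceived-loudness ratio (rule of thumb: +10dB ~ twice as loud)."""
    return 2 ** (db_delta / 10)


titan_idle_db = 37.8    # Titan at idle
loudest_idle_db = 40.5  # loudest idle setup in this test
delta = loudest_idle_db - titan_idle_db

print(f"{delta:.1f}dB -> {power_ratio(delta):.2f}x sound power, "
      f"~{loudness_ratio(delta):.2f}x perceived loudness")
```

By this rough measure, the 2.7dB idle spread is almost twice the sound power but only about a fifth louder to the ear, which fits the observation that none of these setups is loud at idle.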

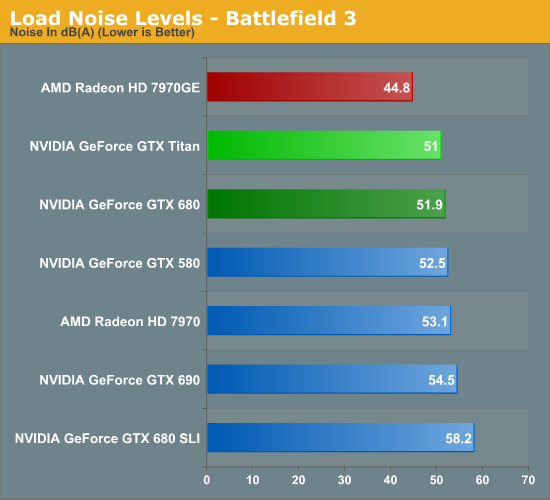

It’s with load noise that we finally see the full payoff of Titan’s build quality. At 51dB it’s only marginally quieter than the GTX 680, but as we recall from our earlier power data, Titan is drawing 73W more than GTX 680 at the wall. In other words, despite drawing significantly more power than GTX 680, Titan is still as quiet as or quieter than that card. This, coupled with Titan’s already high performance, is Titan’s true strength in NVIDIA’s eyes: it’s not just fast, but despite its speed and despite its TDP it’s as quiet as any other blower-based card out there, allowing NVIDIA to get away with things such as Tiki and tri-SLI systems at reasonable noise levels.

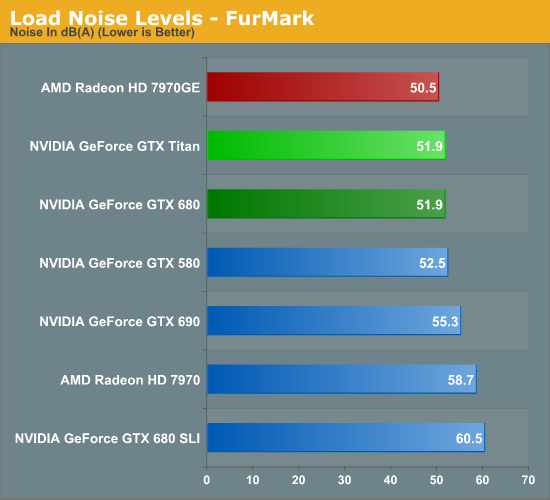

Much like what we saw with temperatures under Furmark, noise under Furmark has our single-GPU cards bunching up. Titan goes up just enough to tie GTX 680 in our pathological scenario, while our multi-GPU cards shoot up well past Titan and the 7970GE jumps to just shy of Titan. This is a worst-case scenario, but it’s a good example of how GPU Boost 2.0’s temperature functionality means that Titan quite literally keeps its cool and thereby keeps its noise in check.

Of course, we would be remiss not to point out that in all these scenarios the open air cooled 7970GE is still quieter, and in our gaming scenario by quite a bit. Not that Titan is loud, but it doesn’t compare to the 7970GE. This brings us to the age-old debate between blowers and open air coolers: open air coolers are generally quieter, but blowers allow for more flexibility with products and are more forgiving of cases with poor airflow.

Ultimately Titan is a blower so that NVIDIA can do concept PCs like Tiki, which is something an open air cooler would never be suitable for. For DIY builders the benefits may not be as pronounced, but this is also why NVIDIA is focusing so heavily on boutique systems where the space difference really matters. AMD, by contrast, realistically has the vanilla 7970 as its best blower-capable card, and that is a less power hungry but also much less powerful card.

337 Comments


chizow - Saturday, February 23, 2013

I haven't used this rebuttal in a long time, I reserve it for only the most deserving, but you sir are retarded. Everything you've written above is anti-progress; you've set Moore's law and semiconductor progress back 30 years with your asinine rants. If idiots like you were running the show, no one would own any electronic devices because we'd be paying $50,000 for toaster ovens.

CeriseCogburn - Tuesday, February 26, 2013

Yeah that's a great counter you idiot... as usual when reality barely glints a tiny bit through your lying tin foiled dunce cap, another sensationalistic pile of bunk is what you have. A great cover for a cornered doofus.

When you finally face your immense error, you'll get over it.

hammer256 - Thursday, February 21, 2013

Not to sound like a broken record, but for us in scientific computing using CUDA, this is a godsend.

The GTX 680 release was a big disappointment for compute, and I was worried that this was going to be the trend going forward with Nvidia: nerfed compute cards for consumers that focus on graphics, and compute-heavy professional cards for the HPC space.

I was worried that the days of cheap compute are gone. These days might still be numbered, but at least for this generation Titan is going to keep it going.

ronin22 - Thursday, February 21, 2013

+1

PCTC2 - Thursday, February 21, 2013

For all of you complaining about the $999 price tag: it's like the GTX 690 (or even the 8800 Ultra, for those who remember it). It's a flagship luxury card for those who can afford it.

But that's beside the real point. This is a K20 without the price premium (and without some of the valuable Tesla features). For researchers on a budget, using homegrown GPGPU compute code that isn't validated to run only on Tesla cards, these are a godsend. Some professional programs will benefit from having a Tesla over a GTX card, but these days researchers are trying to reach into HPC space without the price premium of true HPC enterprise hardware. The GTX Titan is a good middle point. For the price of a Quadro K5000 and a single Tesla K20c card, they can purchase 4 GTX Titans and still have some money to spare. They don't need SLI. They just need the raw compute power these cards are capable of. So as entry-level GPU compute workstation cards, these hit the mark for those wanting to enter GPU compute on a budget. As a graphics card for your gaming machine, average gamers need not apply.

ronin22 - Thursday, February 21, 2013

"average gamers need not apply"

If only people had read this before posting all this hate.

Again, gamers, this card is not for you. Please get the cr*p out of here.

CeriseCogburn - Tuesday, February 26, 2013

You have to understand, the review sites themselves have pushed the blind fps mentality now for years, not to mention insanely declared statistical percentages ripened with over-interpretation on the now contorted and controlled crybaby whiners. It's what they do every time; they feel it gives them the status of consumer advisor, Nader protege, fight-the-man activist, and knowledgeable enthusiast.

Unfortunately that comes down to the ignorant demands we see here, twisted with as many lies and conspiracies as are needed to increase the personal faux outrage.

Dnwvf - Thursday, February 21, 2013

In absolute terms, this is the best non-Tesla compute card on the market.

However, looking at flops/$, you'd be better off buying 2 7970Ghz Radeons, which would run around $60 less and give you more total Flops. Look at the compute scores - Titan is generally not 2x a single 7970. And in some of the compute scores, the 7970 wins.

2 7970ghz (not even in crossfire mode, you don't need that for OpenCL), will beat the crap out of Titan and cost less. They couldn't run AOPR on the AMD cards..but everybody knows from bitcoin that Amd cards rule over nvidia for password hashing ( just google bitcoin bit_align_int to see why).

There's an article on Tom's Hardware where they put a bunch of nvidia and amd cards through a bunch of compute benchmarks, and when amd isn't winning, the gtx 580 generally beats the 680...most likely due to its 512 bit bus. Titan is still a 384 bit bus...can't really compare on price because Phi costs an arm and a leg like Tesla, but you have to acknowledge that Phi is probably gonna rock out with its 512 bit bus.

Gotta give Nvidia kudos for finally not crippling fp64, but at this price point, who cares? If you're looking to do compute and have a GPU budget of $2K, you could buy:

An older Tesla

2 Titans

-or-

Build a system with 2 7970Ghz and 2 Gtx 580.

And the last system would be the best...compute on the amd cards for certain algorithms, on the nvidia cards for the others, and pci bandwidth issues aside, running multiple complex algorithms simultaneously will rock because you can enqueue and execute 4 OpenCL kernels simultaneously. You'd have to shop around for a while to find some 580's though.

Gamers aren't gonna buy this card unless they're spending Daddy's money, and serious compute folk will realize quickly that if they buy a mobo that will fit 2 or 4 double-width cards, depending on GPU budget, they can get more flops per dollar with a multiple-card setup (think of it as a micro-sized GPU compute cluster). Don't believe me? Google Jeremi Gosney oclhashcat.

I'm not much for puns, but this card is gonna flop. (sorry)

DanNeely - Thursday, February 21, 2013

Has any eta on when the rest of the Kepler refresh is due leaked out yet?

HisDivineOrder - Thursday, February 21, 2013

It's way out of my price range, first and foremost.

Second, I think the pricing is a mistake, but I know where they are coming from. They're using the same Intel school of thought on SB-E compared to IB. They price it out the wazoo and only the most luxury of the luxury gamers will buy it. It doesn't matter that the benchmarks show it's only mostly better than its competition down at the $400-500 range and not the all-out destruction you might think it capable of.

The cost will be so high it will be spoken of in whispers and with wary glances around, fearful that the Titan will appear and step on you. It'll be rare and rare things are seen as legendary just so long as they can make the case it's the fastest single-GPU out there.

And they can.

So in short, it's like those people buying hexacore CPU's from Intel. You pay out the nose, you get little real gain and a horrible performance per dollar, but it is more marketing than common sense.

If nVidia truly wanted to use this product to service all users, they would have priced it at $600-700 and moved a lot more. They don't want that. They're fine with the 670/680 being the high end for a majority of users. Those cards have to be cheap to make by now and with AMD's delays/stalls/whatever's, they can keep them the way they are or update them with a firmware update and perhaps a minor retooling of the fab design to give it GPU Boost 2.

They've already set the stage for that imho. If you read the way the article is written about GPU Boost 2 (both of them), you can see nVidia is setting up a stage where they introduce a slightly modified version of the 670 and 680 with "minor updates to the GPU design" and GPU Boost 2, giving them more headroom to improve consistency with the current designs.

Which again would be stealing from Intel's playbook of supplementing SB-E with IB mainstream cores.

The price is obscene, but the only people who should actually care are the ones who worship at the altar of AA. Start lowering that and suddenly even a 7950 is way ahead of what you need.