NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

GPU Boost 2.0: Temperature Based Boosting

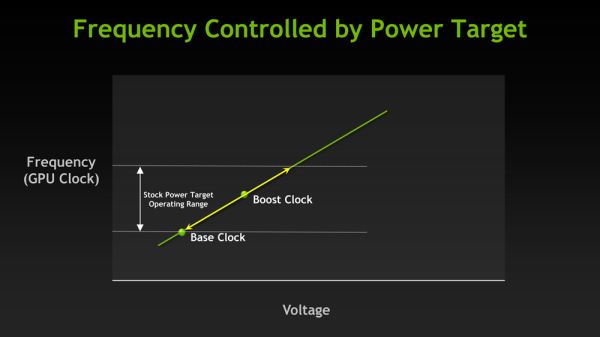

With the Kepler family NVIDIA introduced their GPU Boost functionality. Present on the desktop GTX 660 and above, boost allows NVIDIA’s GPUs to turbo up to frequencies above their base clock so long as there is sufficient power headroom to operate at those higher clockspeeds and the voltages they require. Boost, like turbo and other implementations, is essentially a form of performance min-maxing, allowing GPUs to offer higher clockspeeds for lighter workloads while still staying within their absolute TDP limits.

The first iteration of GPU Boost was based almost entirely around power considerations. With the exception of an automatic 1 bin (13MHz) step down in high temperatures to compensate for increased power consumption, whether GPU Boost could boost and by how much depended on how much power headroom was available. So long as there was headroom, GPU Boost could boost up to its maximum boost bin and voltage.
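As a rough illustration of that power-first logic, the Python sketch below picks the highest boost bin that fits in the remaining power headroom and then applies the single-bin step down when the GPU is hot. The function names, the linear `watts_per_bin` cost, and the `is_hot` flag are assumptions made for illustration; only the 13MHz bin size comes from the text above, and the real algorithm is not public.

```python
BIN_STEP_MHZ = 13  # bin size quoted above for GPU Boost 1


def boost1_bin(power_headroom_w, watts_per_bin, max_bin, is_hot):
    """Return the boost bin (0 = base clock) for a toy GPU Boost 1 model.

    watts_per_bin is a hypothetical, constant power cost per bin; real
    hardware would not be this linear.
    """
    if power_headroom_w <= 0:
        return 0
    # Highest bin the available power headroom can pay for.
    affordable_bins = int(power_headroom_w // watts_per_bin)
    chosen = min(affordable_bins, max_bin)
    # The one temperature-related exception: drop a single bin when hot.
    if is_hot:
        chosen = max(chosen - 1, 0)
    return chosen


def boost_offset_mhz(bin_index):
    """Clock gained over the base clock at a given bin."""
    return bin_index * BIN_STEP_MHZ
```

In this toy model temperature only ever costs one bin, which is the point of contrast with the temperature-driven approach in GPU Boost 2.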

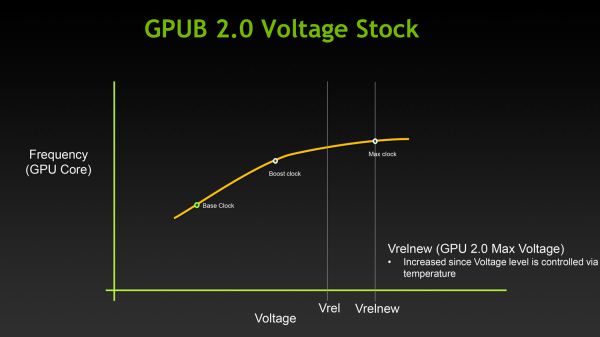

For Titan, GPU Boost has undergone a small but important change that has significant ramifications for how GPU Boost works and how much it boosts by. With GPU Boost 2, NVIDIA has essentially moved on from a power-based boost system to a temperature-based boost system. Or perhaps more precisely, a system that is predominantly temperature based but is also capable of taking power into account.

When it came to GPU Boost 1, its greatest weakness as explained by NVIDIA is that it essentially made conservative assumptions about temperatures and the interplay between high temperatures and high voltages in order to keep from seriously impacting silicon longevity. The end result was that NVIDIA was picking boost bin voltages based on the worst case temperatures, which meant those conservative assumptions about temperatures translated into conservative voltages.

So how does a temperature based system fix this? By better mapping the relationship between voltage, temperature, and reliability, NVIDIA can allow for higher voltages – and hence higher clockspeeds – by being able to finely control which boost bin is hit based on temperature. As temperatures start ramping up, NVIDIA can ramp down the boost bins until an equilibrium is reached.
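The ramp-down-to-equilibrium behaviour described above can be sketched as a small feedback loop. This is a minimal toy model, not NVIDIA's controller: the linear thermal model in `steady_temp_c`, the 80°C target, the 12-bin ceiling, and the 2°C margin are all hypothetical values chosen for illustration, and the 13MHz bin size is carried over from the GPU Boost 1 description as an assumption.

```python
BASE_MHZ = 837      # Titan base clock from the article
BIN_STEP_MHZ = 13   # bin size quoted for GPU Boost 1; assumed unchanged here


def steady_temp_c(bin_index, base_temp_c=70.0, rise_per_bin_c=1.5):
    """Hypothetical linear model: each boost bin adds a fixed temperature rise."""
    return base_temp_c + rise_per_bin_c * bin_index


def next_bin(current_bin, temp_c, temp_target_c, max_bin, margin_c=2.0):
    """Step down when over target; step up only when clearly below it."""
    if temp_c > temp_target_c:
        return max(current_bin - 1, 0)
    if temp_c < temp_target_c - margin_c:
        return min(current_bin + 1, max_bin)
    return current_bin


def settle(max_bin, temp_target_c, steps=50):
    """Start at the top bin and iterate until the bin stops changing."""
    current = max_bin
    for _ in range(steps):
        proposed = next_bin(current, steady_temp_c(current), temp_target_c, max_bin)
        if proposed == current:
            break
        current = proposed
    return current


equilibrium = settle(max_bin=12, temp_target_c=80.0)
print(equilibrium, BASE_MHZ + equilibrium * BIN_STEP_MHZ)  # prints: 6 915
```

The margin is there so the loop settles on one bin instead of bouncing between two adjacent bins; it needs to be at least as large as the per-bin temperature rise in this model.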

Of course total power consumption is still a technical concern here, though much less so. Technically NVIDIA is watching both the temperature and the power consumption and clamping down when either limit is hit. But since GPU Boost 2 does away with the concept of separate power targets – sticking solely with the TDP instead – in the design of Titan there’s quite a bit more room for boosting, since it can keep on boosting right up until the point it hits the 250W TDP limit. Our Titan sample can boost its clockspeed by up to 19% (837MHz to 992MHz), whereas our GTX 680 sample could only boost by 10% (1006MHz to 1110MHz).
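The boost percentages quoted above follow directly from the base and maximum boost clocks, and the "clamp when either limit is hit" rule is a simple OR of two checks. The sketch below works through both; the function names are illustrative, and the 80°C temperature limit used in the example call is an assumed value, while the 250W TDP and the clockspeeds come from the text.

```python
def boost_percent(base_mhz, max_boost_mhz):
    """Boost headroom as a percentage of the base clock."""
    return (max_boost_mhz - base_mhz) / base_mhz * 100.0


def must_clamp(temp_c, power_w, temp_limit_c, tdp_w):
    """True when either the temperature limit or the TDP has been reached."""
    return temp_c >= temp_limit_c or power_w >= tdp_w


print(round(boost_percent(837, 992), 1))    # Titan sample: prints 18.5
print(round(boost_percent(1006, 1110), 1))  # GTX 680 sample: prints 10.3

# Cool but at the 250W TDP: the power check alone forces a clamp.
print(must_clamp(temp_c=70, power_w=250, temp_limit_c=80, tdp_w=250))  # prints True
```

The Titan figure comes out at about 18.5%, which rounds to the 19% quoted above.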

Ultimately, however, whether GPU Boost 2 is power sensitive is actually a control panel setting, meaning that power sensitivity can be disabled. By default GPU Boost will monitor both temperature and power, but 3rd party overclocking utilities such as EVGA Precision X can prioritize temperature over power, at which point GPU Boost 2 can actually ignore TDP to a certain extent to focus on temperature. So if nothing else there’s quite a bit more flexibility with GPU Boost 2 than there was with GPU Boost 1.

Unfortunately, because GPU Boost 2 is only implemented in Titan, it’s hard to evaluate just how much “better” this is in any quantitative sense. We will be able to present specific Titan numbers on Thursday, but other than saying that our Titan maxed out at 992MHz at its highest boost bin of 1.162V, we can’t directly compare it to how the GTX 680 handled things.

157 Comments

Wolfpup - Tuesday, February 19, 2013 - link

I think so too. And IMO this makes sense...no one NEEDS this card, the GTX 680 is still awesome, and still competitive where it is. They can be selling these elsewhere for more, etc.

Now, who wants to buy me 3 of them to run Folding @ Home on :-D

IanCutress - Tuesday, February 19, 2013 - link

Doing some heavy compute, this card could pay for itself in a couple of weeks over a 680 or two. On the business side, it all comes down to 'does it make a difference to throughput', and if you can quantify that and cost it up, then it'll make sense. Gaming, well that's up to you. Folding... I wonder if the code needs tweaking a little.

wreckeysroll - Tuesday, February 19, 2013 - link

Price is going to kill this card. See the powerpoints in the previews for performance. Titan is not too much faster than what they have on the market now, so not just the same price as a 690 but 30% slower as well.

Game customers are not pro customers.

This card could have been nice before someone slipped a gear at nvidia and thought gamers would eat this $1000 rip-off. A few will like anything, not many though. Big error was made here on pricing this for $1000. A sane price would have sold many more than this lunacy.

Nvidia dropped the ball.

johnthacker - Tuesday, February 19, 2013 - link

People doing compute will eat this up, though. I went to NVIDIA's GPU Tech Conference last year, people were clamoring for a GK110 based consumer card for compute, after hearing that Dynamic Parallelism and HyperQ were limited to the GK110 and not on the GK104.

They will sell as many as they want to people doing compute, and won't care at all if they aren't selling them to gamers, since they'll be making more profit anyway.

Nvidia didn't drop the ball, it's that they're playing a different game than you think.

TheJian - Wednesday, February 20, 2013 - link

Want to place a bet on them being out of stock on the day they're on sale? I'd be shocked if you can get one in a day if not a week.

I thought the $500 Nexus 10 would slow some down but I had to fight for hours to get one bought and sold out in most places in under an hour. I believe most overpriced apple products have the same problem.

They are not trying to sell this to the middle class ya know.

Asus prices the ares 2 at $1600. They only made 1000 last I checked. These are not going to sell 10 million and selling for anything less would just mean less money, and problems meeting production. You price your product at what you think the market will bear. Not what Wreckeysroll thinks the price should be. Performance like a dualchip card is quite a feat of engineering. Note the Ares2 uses like ~475watts. This will come in around 250w. Again, quite a feat. That's around ~100 less than a 690 also.

Don't forget this is a card that is $2500 of compute power. Even Amazon had to buy 10000 K20's just to get a $1500 price on them, and had to also buy $500 insurance for each one to get that deal. You think Amazon is a bunch of idiots? This is a card that fixes 600 series weakness and adds substantial performance to boot. It would be lunacy to sell it for under $1000. If we could all afford it they'd make nothing and be out of stock in .5 seconds...LOL

chizow - Friday, February 22, 2013 - link

Except they have been selling this *SAME* class of card for much <$1000 for the better part of a decade. *SAME* size, same relative performance, same cost to produce. Where have you been and why do you think it's now OK to sell it for 2x as much when nothing about it justifies the price increase?

CeriseCogburn - Sunday, February 24, 2013 - link

LOL same cost to produce....You're insane.

Gastec - Wednesday, February 27, 2013 - link

You forget about the "bragging rights" factor. Perhaps Nvidia won't make many GTX Titan but all those they do make will definitely sell like warm bread. There are enough "enthusiasts" and other kinds of trolls out there (most of them in United States) willing to give anything to show to the Internet their high scores in various benchmarks and/or post a flashy picture with their shiny "rig".

herzwoig - Tuesday, February 19, 2013 - link

Unacceptable price.

Less than promised performance.

Pro customers will get a Tesla, that is what those cards are for with the attenuate support and drivers. Nvidia is selling this as a consumer gaming play card and trying to reshape the high end gaming SKU as an even more premium product (doubly so!!)

Terrible value and whatever performance it has going for it is eroded by the nonsensical pricing strategy. Surprising level of miscalculation on the greed front from nvidia...

TheJian - Wednesday, February 20, 2013 - link

They'll pay $2500 to get that unless they buy 10000 like amazon (which still paid $2000/card). Unacceptable for you, but I guess you're not their target market. You can get TWO of these for the price of ONE tesla at $2000 and ONLY if you buy 10000 like amazon. Heck if buying one Tesla, I get two of these, a new I7-3770K+ a board...LOL. They're selling this as a consumer card with Tesla performance (sans support/insurance). Sounds like they priced it right in line with nearly every other top of the line card released for years in this range. 7990, 690 etc...on down the line.

Less than promised performance? So you've benchmarked it then?

"Terrible value and whatever performance it has going for it"

So you haven't any idea yet right?...Considering a 7990 costs a $1000 too basically, and uses 475w vs. 250w, while being 1/2 the size this isn't so nonsensical. This card shouldn't heat up your room either. There are many benefits, you just can't see beyond those AMD goggles you've got on.