NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

Titan For Compute

Titan, as we briefly mentioned before, is not just a consumer graphics card. It is also a compute card and will essentially serve as NVIDIA’s entry-level compute product for both the consumer and pro-sumer markets.

The key enabler for this is that Titan, unlike any consumer GeForce card before it, will feature full FP64 performance, allowing GK110’s FP64 potency to shine through. Previous NVIDIA cards either had very few FP64 CUDA cores (GTX 680) or artificial FP64 performance restrictions (GTX 580), in order to maintain the market segmentation between cheap GeForce cards and more expensive Quadro and Tesla cards. NVIDIA will still be maintaining this segmentation, but in new ways.

| NVIDIA GPU Comparison | Fermi GF100 | Fermi GF104 | Kepler GK104 | Kepler GK110 |
|---|---|---|---|---|
| Compute Capability | 2.0 | 2.1 | 3.0 | 3.5 |
| Threads/Warp | 32 | 32 | 32 | 32 |
| Max Warps/SM(X) | 48 | 48 | 64 | 64 |
| Max Threads/SM(X) | 1536 | 1536 | 2048 | 2048 |
| Register File | 32,768 | 32,768 | 65,536 | 65,536 |
| Max Registers/Thread | 63 | 63 | 63 | 255 |
| Shared Mem Config | 16K, 48K | 16K, 48K | 16K, 32K, 48K | 16K, 32K, 48K |
| Hyper-Q | No | No | No | Yes |
| Dynamic Parallelism | No | No | No | Yes |

We’ve covered GK110’s compute features in depth in our look at Tesla K20, so we won’t go into great detail here. As a reminder, along with beefing up its functional unit counts relative to GF100, GK110 has several feature improvements that raise compute efficiency and the resulting performance. Relative to the GK104-based GTX 680, Titan brings with it a much higher limit on registers per thread (255), not to mention a number of new instructions such as the shuffle instructions that allow intra-warp data sharing. But most of all, Titan brings with it NVIDIA’s marquee Kepler compute features: HyperQ and Dynamic Parallelism, which allow for a greater number of hardware work queues and for kernels to dispatch other kernels, respectively.
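To give a feel for what the higher per-thread register limit implies, here is a rough sketch built from the table's figures (register file size, threads per warp, max warps per SMX). It estimates how many warps can stay resident when every thread uses a given number of registers. It assumes registers are the only limiting resource and ignores the hardware's real allocation granularity, so the results are upper bounds; the function name is ours, not NVIDIA's.

```python
def register_limited_warps(regs_per_thread, register_file=65_536,
                           threads_per_warp=32, max_warps=64):
    """Upper bound on resident warps per SM(X) when registers are the only limit.

    Simplification: real hardware allocates registers in coarser chunks,
    so actual occupancy can be lower than this estimate.
    Defaults are the GK104/GK110 values from the comparison table.
    """
    regs_per_warp = regs_per_thread * threads_per_warp
    return min(max_warps, register_file // regs_per_warp)


if __name__ == "__main__":
    for regs in (32, 63, 255):
        warps = register_limited_warps(regs)
        print(f"{regs:3d} regs/thread -> {warps:2d} warps "
              f"({warps * 32} threads) per SMX")
```

Under this simplified model, a kernel that uses all 255 registers per thread leaves room for only 8 warps (256 threads) per SMX, so the higher limit is best seen as a way to avoid register spills in heavy kernels rather than something every kernel should use.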

With that said, there is a catch. NVIDIA has stripped GK110 of some of its reliability and scalability features in order to maintain the Tesla/GeForce market segmentation, which means Titan for compute is left for small-scale workloads that don’t require Tesla’s greater reliability. ECC memory protection is of course gone, but also gone are HyperQ’s MPI functionality and GPU Direct’s RDMA functionality (DMA between the GPU and 3rd party PCIe devices). Other than ECC, these are much more market-specific features, and as such, while Titan is effectively locked out of highly distributed scenarios, this should be fine for smaller workloads.

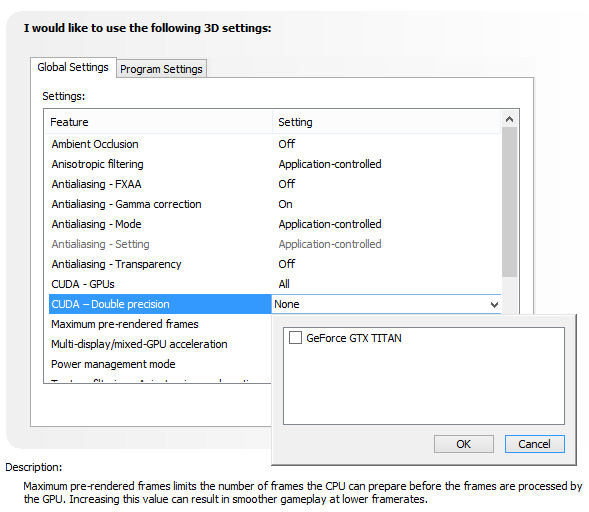

There is one other quirk to Titan’s FP64 implementation, however: it needs to be enabled (or rather, uncapped). By default Titan is actually restricted to 1/24 FP32 performance, like the GTX 680 before it. Doing so allows NVIDIA to keep clockspeeds higher and power consumption lower, knowing the apparently power-hungry FP64 CUDA cores can’t run at full load on top of all of the other functional units that can be active at the same time. Consequently NVIDIA makes FP64 an enable/disable option in their control panel, controlling whether FP64 is operating at full speed (1/3 FP32) or reduced speed (1/24 FP32).

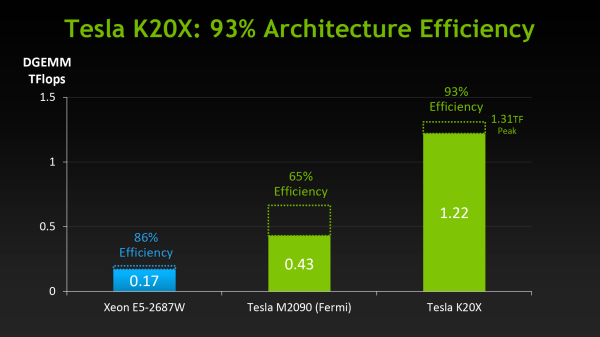

The penalty for enabling full speed FP64 mode is that NVIDIA has to reduce clockspeeds to keep everything within spec. For our sample card this manifests itself as GPU Boost being disabled, forcing our card to run at 837MHz (or lower) at all times. And while we haven't seen it first-hand, NVIDIA tells us that in particularly TDP constrained situations Titan can drop below the base clock to as low as 725MHz. This is why NVIDIA’s official compute performance figures are 4.5 TFLOPS for FP32, but only 1.3 TFLOPS for FP64. The former is calculated around the base clock speed, while the latter is calculated around the worst case clockspeed of 725MHz. The actual execution rate is still 1/3.
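The two official figures can be reproduced with a back-of-the-envelope calculation. The sketch below assumes Titan's published configuration of 14 SMXes with 192 FP32 CUDA cores and 64 FP64 CUDA cores each (those counts are not stated on this page), and that each core retires one fused multiply-add, counted as 2 FLOPs, per clock. The constant and function names are ours.

```python
SMX_COUNT = 14       # assumption: enabled SMXes on Titan
FP32_PER_SMX = 192   # assumption: FP32 CUDA cores per SMX
FP64_PER_SMX = 64    # assumption: FP64 CUDA cores per SMX (1/3 of FP32)
FLOPS_PER_FMA = 2    # one fused multiply-add counts as two FLOPs


def peak_tflops(cores_per_smx, clock_mhz):
    """Theoretical peak throughput in TFLOPS at the given clock."""
    flops = SMX_COUNT * cores_per_smx * FLOPS_PER_FMA * clock_mhz * 1e6
    return flops / 1e12


fp32 = peak_tflops(FP32_PER_SMX, 837)  # base clock from the article
fp64 = peak_tflops(FP64_PER_SMX, 725)  # worst-case clock from the article

if __name__ == "__main__":
    print(f"FP32 @ 837MHz: {fp32:.2f} TFLOPS")  # 4.50
    print(f"FP64 @ 725MHz: {fp64:.2f} TFLOPS")  # 1.30
```

The FP64 figure comes out below one third of the FP32 figure only because the two are quoted at different clocks; at equal clocks the ratio in this model is exactly 1/3.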

Unfortunately there’s not much else we can say about compute performance at this time, as going much further requires being able to reference specific performance figures. So we’ll follow this up on Thursday with those figures and a performance analysis.

157 Comments


vacaloca - Wednesday, February 20, 2013

I'm assuming TCC driver would not work stock... if it's anything like the GTX 480 that could be BIOS/softstraps modded to work as Tesla C2050, it might be possible to get the HyperQ MPI, GPU Direct RDMA, and TCC support by doing the same except with a K20 or K20X BIOS. This would probably mean that the display outputs on the Titan card would be bricked. That being said, it's not entirely trivial... see below for details: https://devtalk.nvidia.com/default/topic/489965/cu...

tjhb - Thursday, February 21, 2013

That's an amazing thread. How civilised, that NVIDIA didn't nuke it.

I'm only interested in what is directly supported by NVIDIA, so I'll use the new card for both display and compute.

Thanks!

Arakageeta - Wednesday, February 20, 2013

Thanks! I wasn't able to find this information anywhere else.

Looks like the cheapest current-gen dual-copy engine GPU out there is still the Quadro K5000 (GK104-based) for $1800. For a dual-copy engine GK110, you need to shell out $3500. That's a steep price for a small research grant!

Shadowmaster625 - Tuesday, February 19, 2013

For the same price as this thing, AMD could make a 7970 with a FX8350 all on the same die. Throw in 6GB of GDDR5 and 288GB/sec memory bandwidth and a custom ITX board and you'd have a generic PC gaming "console". Why don't they just release their own "AMDStation"?

Ananke - Tuesday, February 19, 2013

They will. It's called SONY Play Station 4 :)

da_cm - Tuesday, February 19, 2013

"Altogether GK110 is a massive chip, coming in at 7.1 billion transistors, occupying 551m2 on TSMC’s 28nm process."

Damn, gonna need a bigger house to fit that one in :D.

Hrel - Tuesday, February 19, 2013

I've still never even seen a monitor that has a display port. Can someone please make a card with 4 HDMI ports, PLEASE!

Kevin G - Tuesday, February 19, 2013

Odd, I have two different monitors at home and a third at work that'll accept a DP input.

They do carry a bit of a premium over those with just DVI though.

jackstar7 - Tuesday, February 19, 2013

Well, I've got Samsung monitors that can only do 60Hz via HDMI, but 120Hz via DP. So I'd much rather see more DisplayPort adoption.

Hrel - Thursday, February 21, 2013

I only ever buy monitors with HDMI on them. I think anything beyond 1080p is silly. (lack of native content) Both support WAY more than 1080p, so I see no reason to spend more. I'm sure if I bought a 2560x1440 monitor it'd have DP. But I won't ever do that. I'd buy a 19200x10800 monitor though; one day.