NVIDIA's GeForce GTX Titan, Part 1: Titan For Gaming, Titan For Compute

by Ryan Smith on February 19, 2013 9:01 AM EST

Titan For Compute

Titan, as we briefly mentioned before, is not just a consumer graphics card. It is also a compute card and will essentially serve as NVIDIA’s entry-level compute product for both the consumer and prosumer markets.

The key enabler for this is that Titan, unlike any consumer GeForce card before it, will feature full FP64 performance, allowing GK110’s FP64 potency to shine through. Previous NVIDIA cards either had very few FP64 CUDA cores (GTX 680) or artificial FP64 performance restrictions (GTX 580), in order to maintain the market segmentation between cheap GeForce cards and more expensive Quadro and Tesla cards. NVIDIA will still be maintaining this segmentation, but in new ways.

| NVIDIA GPU Comparison | Fermi GF100 | Fermi GF104 | Kepler GK104 | Kepler GK110 |
|---|---|---|---|---|
| Compute Capability | 2.0 | 2.1 | 3.0 | 3.5 |
| Threads/Warp | 32 | 32 | 32 | 32 |
| Max Warps/SM(X) | 48 | 48 | 64 | 64 |
| Max Threads/SM(X) | 1536 | 1536 | 2048 | 2048 |
| Register File | 32,768 | 32,768 | 65,536 | 65,536 |
| Max Registers/Thread | 63 | 63 | 63 | 255 |
| Shared Mem Config | 16K / 48K | 16K / 48K | 16K / 32K / 48K | 16K / 32K / 48K |
| Hyper-Q | No | No | No | Yes |
| Dynamic Parallelism | No | No | No | Yes |

We’ve covered GK110’s compute features in depth in our look at Tesla K20, so we won’t go into great detail here. As a reminder, along with beefing up functional unit counts relative to GF100, GK110 has several feature improvements that further improve compute efficiency and the resulting performance. Relative to the GK104-based GTX 680, Titan brings with it a much greater number of registers per thread (255, up from 63), not to mention a number of new instructions such as the shuffle instructions that allow intra-warp data sharing. But most of all, Titan brings with it Kepler’s marquee compute features: Hyper-Q and Dynamic Parallelism, which allow for a greater number of hardware work queues and for kernels to dispatch other kernels, respectively.
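To put the register numbers in context, here is a rough Python sketch of how many warps can stay resident on one SM(X) when every thread uses the maximum register count. The figures come from the comparison table above; the helper name `resident_warps` is our own, and the model is a simplification that ignores register allocation granularity as well as the shared memory and block limits that also affect occupancy.

```python
WARP_SIZE = 32

# (register file per SM/SMX, max registers per thread, max warps per SM/SMX)
# Values taken from the comparison table; the dict itself is illustrative.
GPUS = {
    "GF100": (32768, 63, 48),
    "GK104": (65536, 63, 64),
    "GK110": (65536, 255, 64),
}

def resident_warps(regfile, regs_per_thread, max_warps):
    """Whole warps that fit if each thread uses regs_per_thread registers.

    Simplified model: only the register file and the warp limit are considered.
    """
    threads = regfile // regs_per_thread
    return min(threads // WARP_SIZE, max_warps)

for name, (regfile, max_regs, max_warps) in GPUS.items():
    warps = resident_warps(regfile, max_regs, max_warps)
    print(f"{name}: {warps} of {max_warps} warps at {max_regs} registers/thread")
```

Under these assumptions a GK110 kernel that uses all 255 registers per thread fits only 8 of 64 warps per SMX. The higher limit is best seen as a way to avoid register spilling in kernels that need it, at a cost in occupancy.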

With that said, there is a catch. NVIDIA has stripped GK110 of some of its reliability and scalability features in order to maintain the Tesla/GeForce market segmentation, which means Titan for compute is left for small-scale workloads that don’t require Tesla’s greater reliability. ECC memory protection is of course gone, but also gone are Hyper-Q’s MPI functionality and GPUDirect’s RDMA functionality (DMA between the GPU and third-party PCIe devices). Other than ECC these are much more market-specific features, and as such, while Titan is effectively locked out of highly distributed scenarios, this should be fine for smaller workloads.

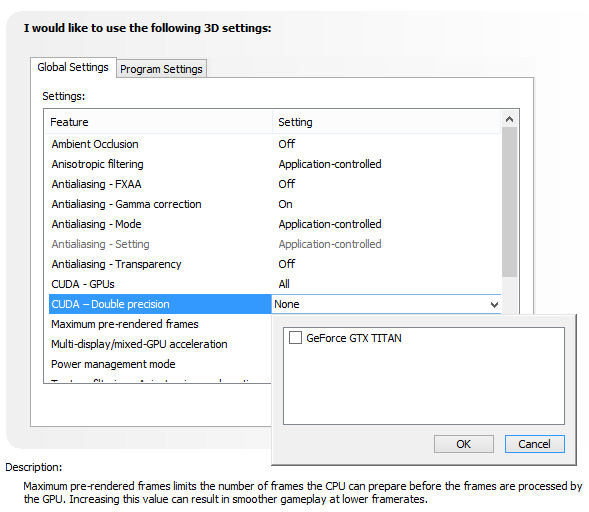

There is one other quirk to Titan’s FP64 implementation, however: it needs to be enabled (or rather, uncapped). By default Titan is actually restricted to 1/24 of its FP32 rate, like the GTX 680 before it. Doing so allows NVIDIA to keep clockspeeds higher and power consumption lower, knowing the apparently power-hungry FP64 CUDA cores can’t run at full load on top of all of the other functional units that can be active at the same time. Consequently NVIDIA makes FP64 an enable/disable option in their control panel, controlling whether FP64 operates at full speed (1/3 FP32) or reduced speed (1/24 FP32).

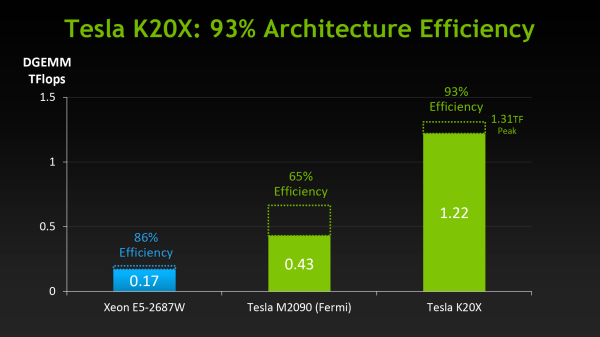

The penalty for enabling full speed FP64 mode is that NVIDIA has to reduce clockspeeds to keep everything within spec. For our sample card this manifests itself as GPU Boost being disabled, forcing our card to run at 837MHz (or lower) at all times. And while we haven't seen it first-hand, NVIDIA tells us that in particularly TDP constrained situations Titan can drop below the base clock to as low as 725MHz. This is why NVIDIA’s official compute performance figures are 4.5 TFLOPS for FP32, but only 1.3 TFLOPS for FP64. The former is calculated around the base clock speed, while the latter is calculated around the worst case clockspeed of 725MHz. The actual execution rate is still 1/3.
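The arithmetic behind those two figures can be sketched in a few lines of Python. This assumes the usual peak-throughput convention of 2 FLOPs (one fused multiply-add) per CUDA core per clock and Titan's 2688 CUDA cores; the function name `peak_tflops` is ours, purely for illustration.

```python
CUDA_CORES = 2688  # Titan's FP32 CUDA core count

def peak_tflops(clock_mhz, cores=CUDA_CORES, rate=1.0):
    """Theoretical peak in TFLOPS: 2 FLOPs (one FMA) per core per clock.

    `rate` scales the result for FP64, e.g. 1/3 of the FP32 rate.
    """
    return 2 * clock_mhz * 1e6 * cores * rate / 1e12

fp32_base = peak_tflops(837)               # FP32 at the 837MHz base clock
fp64_worst = peak_tflops(725, rate=1 / 3)  # FP64 at the 725MHz worst case
fp64_base = peak_tflops(837, rate=1 / 3)   # FP64 if the base clock holds

print(f"FP32 @ 837MHz: {fp32_base:.2f} TFLOPS")
print(f"FP64 @ 725MHz: {fp64_worst:.2f} TFLOPS")
print(f"FP64 @ 837MHz: {fp64_base:.2f} TFLOPS")
```

The first two results round to NVIDIA's 4.5 and 1.3 TFLOPS figures. The third shows that a card holding its base clock in FP64 mode would land at about 1.5 TFLOPS, so the official FP64 number is a guaranteed floor, not a typical value.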

Unfortunately there’s not much else we can say about compute performance at this time, as going any further requires referencing specific performance figures. So we’ll follow this up on Thursday with those figures and a performance analysis.

157 Comments


bigboxes - Tuesday, February 19, 2013 - link

This is Wreckage we're talking about. He's trolling. Nothing to see here. Move along.

chizow - Tuesday, February 19, 2013 - link

I agree with his title, that AMD is at fault at the start of all of this, but not necessarily with the rest of his reasonings. Judging from your last paragraph, you probably agree to some degree as well.

This all started with AMD's pricing of the 7970, plain and simple. $550 for a card that didn't come anywhere close to justifying the price against the last-gen GTX 580, a good card but completely underwhelming in that flagship slot.

The 7970 pricing allowed Nvidia to:

1) price their mid-range ASIC, GK104, at flagship SKU position

2) undercut AMD to boot, making them look like saints at the time and

3) delay the launch of their true flagship SKU, GK100/110 nearly a full year

4) Jack up the prices of the GK110 as an ultra-premium part.

I saw #4 occurring well over a year ago, which was my biggest concern over the whole 7970 pricing and GK104 product placement fiasco, but I had no idea Nvidia would be so usurious as to charge $1k for it. I was expecting $750-800....$1k....Nvidia can go whistle.

But yes, long story short, Nvidia's greed got us here, but AMD definitely started it all with the 7970 pricing. None of this happens if AMD prices the 7970 in-line with their previous high-end in the $380-$420 range.

TheJian - Wednesday, February 20, 2013 - link

You realize you're dogging amd for pricing when they lost 1.18B for the year correct? Seriously you guys, how are you all not understanding they don't charge ENOUGH for anything they sell? They had to lay off 30% of the workforce, because they don't make any money on your ridiculous pricing. Your idea of pricing is KILLING AMD. It wasn't enough they laid off 30%, lost their fabs, etc...You want AMD to keep losing money by pricing this crap below what they need to survive? This is the same reason they lost the cpu war. They charged less for their chips for the whole 3yrs they were beating Intel's P4/presHOT etc to death in benchmarks...NV isn't charging too much, AMD is charging too LITTLE.

AMD has lost 3-4B over the last 10yrs. This means ONE thing. They are not charging you enough to stay in business.

This is not complicated. I'm not asking you guys to do calculus here or something. If I run up X bills to make product Y, and after selling Y can't pay X I need to charge more than I am now or go bankrupt.

Nvidia is greedy because they aren't going to go out of business? Without Intel's money they are making 1/2 what they did 5yrs ago. I think they should charge more, but this is NOT gouging or they'd be making some GOUGING like profits correct? I guess none of you will be happy until they are both out of business...LOL

chizow - Wednesday, February 20, 2013 - link

1st of all, AMD as a whole lost money, AMD's GPU division (formerly ATI) has consistently operated at a small level of profit. So comparing GPU pricing/profits impact on their overall business is obviously going to be lost in the sea of red ink on AMD's P&L statement.

Secondly, the massive losses and devaluation of AMD has nothing to do with their GPU pricing, as stated, the GPU division has consistently turned a small profit. The problem is the fact AMD paid $6B for ATI 7 years ago. They paid way too much, most sane observers realized that 7 years ago and over the past 5-6 years it's become more obvious. The former ATI's revenue and profits did not justify the $6B price tag and as a result, AMD was *FORCED* to write down their assets as there were some obvious valuation issues related to the ATI acquisition.

Thirdly, AMD has said this very month that sales of their 7970/GHz GPUs in January 2013 alone exceeded sales of those cards in the previous *TWELVE MONTHS* prior. What does that tell you? It means their previous price points that steadily dropped from $550>500>$450 were more than the market was willing to bear given the product's price:performance relative to previous products and the competition. Only after they settled in on that $380/$420 range for the 7970/GHz edition along with a very nice game bundle did they start moving cards in large volume.

Now you do the math, if you sell 12x as many cards in 1 month at $100 profit instead of 1/12x as many cards at $250 profit over the course of 1 year, would you have made more money if you just sold the higher volume at a lower price point from the beginning? The answer is yes. This is a real business case that any Bschool grad will be familiar with when performing a cost-value-profit analysis.

CeriseCogburn - Sunday, February 24, 2013 - link

Wow, first of all, basic common sense is all it takes, not some stupid idiot class for losers who haven't a clue and can't do 6th grade math.

Unfortunately, in your raging fanboy fever pitch, you got the facts WRONG.

AMD said it sold more in January than any other SINGLE MONTH of 2012 including "Holiday Season" months.

Nice try there spanky, the brain farts just keep a coming.

frankgom23 - Tuesday, February 19, 2013 - link

Who wants to pay more for less

no new features..., this is a paper launch of a useless board for the consumer, I don't even need to see official benchmarks, I'm completely disappointed.

Maybe it's time to go back to ATI/AMD.

imaheadcase - Tuesday, February 19, 2013 - link

If you would actually READ the article you would know why.

I love how people cry a river without actually knowing how the card will perform yet.

CeriseCogburn - Sunday, February 24, 2013 - link

Yes, go back, your true home is with losers and fools and crashers and bankrupt idiots who cannot pay for their own stuff.

The last guy I talked to who installed a new AMD card for his awesome Eyefinity monitors gaming setup struggled for several days encompassing dozens of hours to get the damned thing stable, exclaimed several times he had finally achieved, and yet, the next day at it again, and finally took the thing, walked outside and threw it up against the brick wall "shattering it into 150 pieces" and "he's not going dumpster diving" he tells me, to try to retrieve a piece or part of it which might help him repair one of the two other DEAD upper range amd cards ( of 4 dead amd cards in the house ) he recently bought for mega gaming system.

ROFL

Yeah man, not kidding. He doesn't like nVidia by the way. He still is an amd fanboy.

He is a huge gamer with multiple systems all running all day and night - and his "main" is "down"... needless to say it was quite stressful for him and has done nothing good for the very long friendship.

LOL - Took it and in a seeing red rage and smashed that puppy to smithereens against the brick wall.

So please, head back home, lots of lonely amd gamers need support.

iMacmatician - Tuesday, February 19, 2013 - link

"For our sample card this manifests itself as GPU Boost being disabled, forcing our card to run at 837MHz (or lower) at all times. This is why NVIDIA’s official compute performance figures are 4.5 TFLOPS for FP32, but only 1.3 TFLOPS for FP64. The former assumes that boost is enabled, while the latter is calculated around GPU Boost being disabled. The actual execution rate is still 1/3."

But the 837 MHz base and 876 MHz boost clocks give 2·(876 MHz)·(2688 CCs) = 4.71 SP TFLOPS and 2·(837 MHz)·(2688 CCs)·(1/3) = 1.50 DP TFLOPS. What's the reason for the discrepancies?

Ryan Smith - Tuesday, February 19, 2013 - link

Apparently in FP64 mode Titan can drop down to as low as 725MHz in TDP-constrained situations. Hence 1.3TFLOPS, since that's all NVIDIA can guarantee.