Making Sense of the Intel Haswell Transactional Synchronization eXtensions

by Johan De Gelas on September 20, 2012 12:15 AM EST

Easy to Use?

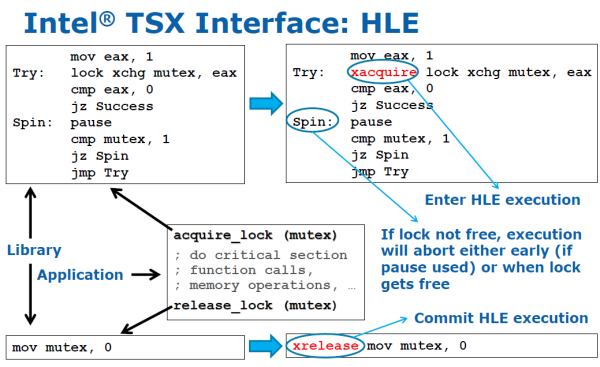

As we mentioned, HLE is backwards compatible. How does that work? If developers rely on libraries like glibc for locking, Intel claims only those libraries have to be changed. Take a look at the slide below:

Notice the rectangle labeled "application". For the developer of an application that uses dynamic linking, nothing changes. The library simply has to place the xacquire prefix on the instruction that takes each lock (here a spinlock) and the xrelease prefix on the instruction that releases it. So (dynamically) linking to the new library and running on an Intel Haswell CPU should let your application use TSX. If you run the same application with a TSX-enabled library on a previous-generation CPU, it will still run but will not use TSX (HLE).
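To make the library-side change concrete, here is a minimal sketch of a spinlock carrying the two HLE hints. It assumes GCC's x86-specific `__ATOMIC_HLE_ACQUIRE` and `__ATOMIC_HLE_RELEASE` memory-model flags (GCC 4.8 and later). The `hle_spinlock` type and function names are hypothetical and are not taken from glibc or from Intel's slide. Where the flags are not defined, the sketch falls back to a plain atomic spinlock.

```c
#include <stdbool.h>

/* Assumption: GCC on x86 defines these HLE flags. Elsewhere they are
 * defined as 0 here, so the code degrades to an ordinary atomic spinlock. */
#ifndef __ATOMIC_HLE_ACQUIRE
#define __ATOMIC_HLE_ACQUIRE 0
#define __ATOMIC_HLE_RELEASE 0
#endif

/* Hypothetical lock type: 0 = free, 1 = held. */
typedef struct { int locked; } hle_spinlock;

/* Try once to take the lock. With the HLE flag, the compiler emits an
 * XACQUIRE-prefixed exchange; CPUs without HLE ignore the prefix. */
static inline bool hle_spin_trylock(hle_spinlock *l)
{
    return __atomic_exchange_n(&l->locked, 1,
                               __ATOMIC_ACQUIRE | __ATOMIC_HLE_ACQUIRE) == 0;
}

/* Take the lock, spinning until it is available. */
static inline void hle_spin_lock(hle_spinlock *l)
{
    while (!hle_spin_trylock(l)) {
        /* Wait with plain loads until the lock looks free, then retry. */
        while (__atomic_load_n(&l->locked, __ATOMIC_RELAXED))
            ;
    }
}

/* Release the lock. With the HLE flag, the store carries XRELEASE. */
static inline void hle_spin_unlock(hle_spinlock *l)
{
    __atomic_store_n(&l->locked, 0,
                     __ATOMIC_RELEASE | __ATOMIC_HLE_RELEASE);
}
```

Application code keeps calling lock and unlock exactly as before; only the two atomic operations inside the library differ, which is why the application itself does not need to change.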

29 Comments


dealcorn - Thursday, September 20, 2012 - link

The graphs indicate the benefit of HLE versus no HLE gets bigger as the number of threads increases. However, they lack scaling on the horizontal axis: I have no sense of the benefit at real-world numbers like 8 threads, 12 threads and 16 threads.

Is the primary benefit of RTM greater CPU performance or programmer productivity?

GatoRat - Thursday, September 20, 2012 - link

With the right application, you get both. With the wrong application, you get an apparent increase in productivity at the cost of performance. In the end, you still need smart, experienced and expensive engineers to know when to use this technology and when not to.

GatoRat - Thursday, September 20, 2012 - link

It fails to properly explain what STM is. Unfortunately, most of the descriptions I've read make the same fundamental mistake made in this article--you don't lock a data structure or variables, you lock code. This difference is important because it means you can control it very exactly. These same articles then basically argue that STM is beneficial to lazy programmers who use locks too broadly. Yet programmers who do that aren't going to use STM, which is far more complicated and for which the benefits are dubious outside of some very narrow applications.

My own experience is that non-locking algorithms are highly problematic and rarely give a performance advantage over using carefully designed locking algorithms. Moreover, in several situations a crudely designed locking algorithm can perform extremely fast due to the decreased complexity of the overall algorithm.

Bottom line is that what Intel has been doing, even with Nehalem, is fantastic, but thinking this will obviate the need for spending a lot of time and effort and brain power is delusional. On the other hand, it will help in the myriad of situations where management contradicts reality and says "it's good enough."

GatoRat - Thursday, September 20, 2012 - link

Incidentally, STM imposes a very real overhead which can easily cause worse performance than the alternative. Between the overhead and the increased complexity in code, in lightly loaded systems, the performance will always be worse.

The hype is similar to that of parallel programming and will have just as dismal results. Like with parallel programming, you need a certain mindset and a problem which is conducive to being solved in that manner. I recently worked on a problem which simply had too many threads and asynchronous events due to the underlying (and well known) framework. STM would have been a disaster. I designed a pseudo-parallel architecture, but was never able to implement and test it due to the project being put on hiatus (by new management that was utterly clueless.)

clarkn0va - Thursday, September 20, 2012 - link

You know these new extensions will succeed and rapidly gain market share because the marketers managed to use a capital X in the name.

glugglug - Thursday, September 20, 2012 - link

Other than real lock-contention, where HLE will net a small improvement even over fine-grained locks, the situations where it won't work (i.e. running out of L1 cache lines) come into play just as much with only 1 thread running, and the code that gets hit by these will lose its single-threaded performance. Worst case would be single threaded performance hit near 50%, but I think that would be extremely rare. Still, the amount of multithreaded gain is deceptive when you are hindering the single threaded performance.

Also, in order to avoid that single threaded hit you end up going back to fine grained locks -- the coarse locks are more likely to hit the restrictions and have to re-run.

JonBendtsen - Friday, September 21, 2012 - link

Where is the scaling on the graphs? Without scales the graph is useless.

wwwcd - Friday, September 21, 2012 - link

Conclusion: what is needed is double or quadruple the amount of first-level cache, rather than continuing to torture software with tricks to optimize the use of a capacity that has been too small since time immemorial. Of course, this should be accompanied by the necessary improvements to the design of the CPU cores and adjustments to the sizes of the other cache levels.

It is also necessary to increase the throughput of the caches, by widening the bus, by increasing their frequency, or by both methods.

mallik79 - Monday, September 24, 2012 - link

I work on improving performance on a large commercial database.

The challenge seems to be multi-CPU more than multi-core.

Bottlenecks get magnified by adding CPUs instead of cores.

(We have custom spinlock code and do not use system-provided primitives.)

However many cores you add to a CPU, people still want huge multi-CPU boxes to run their databases.

So does this transactional memory caching make life tougher for multi-CPU systems? If the lock is nearer to a particular CPU, does it make the cores of the other CPUs starve?

Thanks for all the in-depth reviews.