The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’re finally to the point where games are using DX10’s functionality as a baseline rather than an addition. Not surprisingly BF3 is one of the best looking games in our suite, but as with past Battlefield games that beauty comes with a high performance cost.

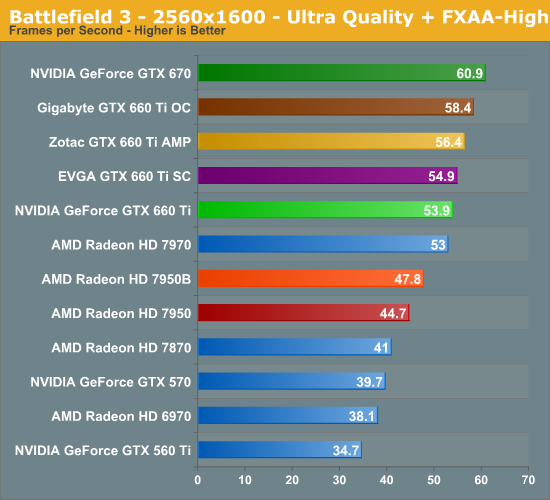

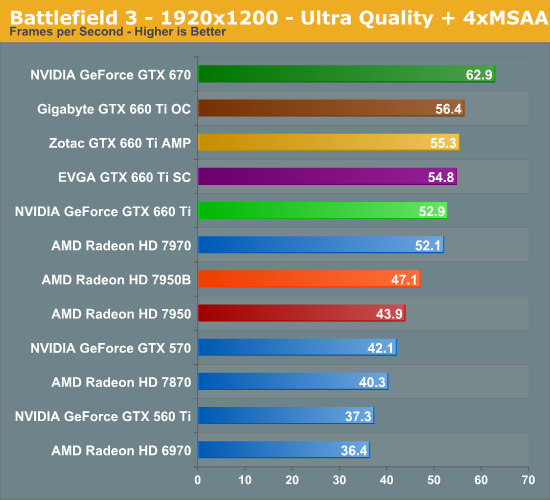

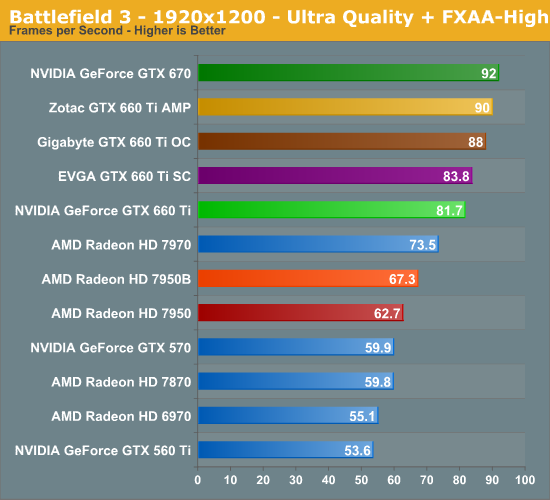

The reduction in memory bandwidth and ROP throughput coming from the GTX 670 comes with roughly an 11% performance cost here, just about splitting the difference between the best and worst case scenarios. This is important for the GTX 660 Ti since it means the card doesn’t surrender NVIDIA’s performance advantage in BF3. At 1920 with FXAA that means the GTX 660 Ti has a huge 30% performance lead over the 7950, and even the 7970 falls behind the GTX 660 Ti. The only real disappointment here is that 1920 with MSAA isn’t quite playable – 53fps means that framerates will bottom out in the mid-20s, which isn’t desirable.

Meanwhile the factory overclocked cards continue to up the ante, and this ends up being another game where factory overclocks offer a decent improvement. Zotac tops the factory cards at 10%, followed by Gigabyte and EVGA. We’re once again seeing the impact of Zotac’s memory overclock, and how in memory bandwidth limited situations it’s more important than Gigabyte’s higher power target, though Gigabyte does come close.

313 Comments


skgiven - Friday, August 17, 2012 - link

Thanks for the review Ryan. Always appreciated.

It's a bit annoying that AMD recently updated their drivers, but no card. I wonder how long that driver update was held back?

No doubt NVidia will find similar gaming improvements in the months to come. I expect any issues that may arise due to the asymmetric bandwidth to GDDR5 ratio will again be quickly overcome. People should remember that the CUDA issue is limited to fp64 (double precision); single precision is greater than previous generations.

Ryan Smith - Saturday, August 18, 2012 - link

I have no reason to believe that AMD has been holding back their driver updates. From our perspective they look to be pushing them out as soon as they're ready.

Shark321 - Friday, August 17, 2012 - link

from this review: http://www.computerbase.de/artikel/grafikkarten/20...

you see that the 3GB edition of the 660 Ti is not faster than the 2GB version in 1920x1080.

CeriseCogburn - Sunday, August 19, 2012 - link

The amd fanboys have finally had to shut up their 8 month long lie after the 4G 680's, even though all the data was there from the very 1st 600 series nVidia release, which still wins in the highest resolutions the reviewers test in.

Now the idea is down to some future fantasy, even though the cores are smoked out before they can use the ram, as Ryan FINALLY after 8 months admits above in a post.

Of course, an honest person like myself has said it from day one.

falc0ne - Saturday, August 18, 2012 - link

From what I'm seeing, it's hardly worth overclocking the 660 Ti. What you get is just a few extra frames, 5 FPS on average, with quite a high increase in load power and temperature.

I don't believe it's really worth it. This just seems to be the case with most video cards in my opinion.

You just push it to its limits, probably shorten its life, for what?

The only good possible outcome is in titles where your FPS are under 30-35 and you need a bump to make the game smooth. What I prefer to that is simply lower the resolution a bit, disable shadows and other effects you don't stare at while you're busy playing.

What do you say?

CeriseCogburn - Sunday, August 19, 2012 - link

A certain review site comments on maximum overclocking and its ability to provide any gameplay improvements whatsoever. Often it does not. In a few rare cases, you can actually turn up one more feature in a game because of it, or have a frame rate playable when it was unplayable.

The truth is there are so many drooling idiots willing to "love" whatever their fanboyism tells them to buy, that they will go to the ends of the earth to proclaim their few percent fps advantage that is notionally possible, if they had the monster rig and cpu the reviewer uses, and the gigantic screen, and the excessive ram, and fastest SSD, and supremely clean and defragged fresh install, and the years of OC stability settings under the belt, which they of course do not have, likely not even a single one of the above.

So they go on in rampant fps only fashion, only at the highest peak of perf on maxi system specs they don't own, and IGNORE every other feature set of the cards. Every single other thing is GONE from the mind of the fanboy - unless of course their fanboy fave uses some obscure thing they WON'T and DON'T use - that they just put down for the last few years when their enemy was best at it - like "compute".

So this is what they do.

Then after getting their junk, and OC'ing, and having instability issues, they slap it back to stock so their games don't crash, you know after bragging and posting for a night or two, and destroying their electric bill they so methodically whined about for so long before their fanboy card turned into the housefire.

So then they're stuck with their crap that does one thing that doesn't matter, and does not improve their gameplay whatsoever. Doesn't matter, that melted mind that brought them to the purchase is still swirling and ignorance is bliss, and boy are they happy they did the right thing and made the right choice.

Then they tell themselves TXAA sucks, PhysX sucks, auto overclock sucks, target frame rate sucks, smooth gaming sucks, driver improvements back to 6 year old series 6 sucks, adaptive v-sync sucks, 3+1 surround and 3d monitors capabilities sucks, cooler and quieter sucks, and they just got the bestest hardware eva' as they saved $15 or spent $30 more bucks, either way, kneeling toward Dubai is called for.

See, that's how it works.

Even gaming IQ sucks, because who can see that ?

CeriseCogburn - Sunday, August 19, 2012 - link

I failed to mention as well, although the jaggies really suck, TXAA sucks and is unneeded and really unwanted because nVidia screwed up and did not make it supersharp, and super sharp is very, very important.

Of course, we also know, that indeed, AMD's morphological antialiasing is pretty cool, and an awesome feature, and the blur is not bad at all, and having the words and letters in huds and panels blurred is ok, because the performance advantage is well, well worth it.

Yes, that's exactly what we were told.

TheJian - Sunday, August 19, 2012 - link

http://store.steampowered.com/hwsurvey/

Click the line for Primary Display Resolution.

All of the resolutions above 1920x1200 total less than 2%. 1920x1080 and 1920x1200 are 29.x %. Everything else is below this. For all your talk about running out of rops, bandwidth etc...You're wasting most people's time. I'd say every benchmark from 1920x1200 and below is the only thing that matters unless you're after a 27in+ comparison. My 24/22 setup is 1920x1200 (dell 2407HC) and 1680x1050 (LG W2242T). Even the 27in I want is only 1920x1200 and I don't want higher (if only because I want to game in the same on both and it's just all around easier, not to mention a good card can run almost ANY game at this res).

It's nice to note changes at 2560x whatever, but who really cares? How many of you have 27in monitors? Why does anandtech tout this as anything special. You should be touting what we USE (not maybe what you special reviewers use, who I guess all have 27in+ or multi monitors in spanned resolutions...which again is NOT a large portion of users at steampowered.com). I don't use steam at all (it's a virus...LOL) but I do consult the stats they have (which are great).

I'd submit that the winner at 1920x1200 is what's important as most will never go over it. Find out what you can afford at that res and prep for future monitor purchases in that regard. If you don't ever plan on a 27in ignore 2560x+. You'd be looking at the wrong benchmarks and making an improper decision. I'd also submit that 97% (per the steampowered survey) of your users are NOT paying $301+ for their cards (I thought I paid a lot for my Radeon 5850 at $260). It's pretty much $299 and below. A good 90% on that survey have 2GB or less of memory on the card (not many with SLI/Crossfire I guess). I'd rather see these results totally removed, and add more games to the testing. Perhaps an article dedicated to large resolutions should be written, but including it and bashing cards that fall off where nobody cares anyway is kind of pointless.

It's kind of like reviewing motherboards with 2+ vid slots. How many people have SLI/Crossfire in use? I have to buy a pointless/expensive motherboard to get all the ports I need (every time I buy a board! - try to get a board with all the trimmings and a SINGLE pcie x16 setup). I've yet to meet someone who actually OWNS 2 video cards and using sli/crossfire. PCIe 2.1 (or 2.0) x1 is 500MB/s (not Mbit), so what would I need 2 x16's for unless SLI/Crossfire is going to be used. Are motherboard makers catering to 5% of the market or what? The same can be said about testing boards in sli/crossfire. Review sites should complain more about wasting our money on slots that are never used. It takes a top of the line SSD to take out an X1 slot's bandwidth. Wireless etc will never need more than x1 before I'm dead...LOL.
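The 500MB/s figure quoted for a PCIe 2.x x1 link can be reproduced with a short calculation. This is a minimal sketch, assuming the published PCIe 2.0 signalling rate of 5 GT/s per lane and 8b/10b line encoding (neither number is stated in the comment above); the function name is made up for illustration.

```python
def pcie_lane_bandwidth_mb_s(transfer_rate_gt_s: float,
                             payload_bits: int = 8,
                             line_bits: int = 10) -> float:
    """Usable one-direction bandwidth of a single PCIe lane, in MB/s.

    Assumes 8b/10b encoding by default (PCIe 1.x/2.x): every 10 bits on
    the wire carry 8 bits of payload.
    """
    raw_bits_per_s = transfer_rate_gt_s * 1e9            # one bit per transfer per lane
    usable_bits_per_s = raw_bits_per_s * payload_bits / line_bits
    return usable_bits_per_s / 8 / 1e6                   # bits -> bytes -> MB


x1_mb_s = pcie_lane_bandwidth_mb_s(5.0)   # PCIe 2.0: 5 GT/s per lane
x16_mb_s = 16 * x1_mb_s                   # lanes scale linearly

print(f"PCIe 2.0 x1:  {x1_mb_s:.0f} MB/s per direction")
print(f"PCIe 2.0 x16: {x16_mb_s:.0f} MB/s per direction")
```

Under those assumptions an x1 link works out to 500 MB/s and an x16 link to 8000 MB/s in each direction, which is the gap the comment is pointing at.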

Ryan Smith - Sunday, August 19, 2012 - link

Hi Jian;

As I'm sure you're aware, our primary readership for video card articles are enthusiasts. So while we cover a broad spectrum of hardware on the whole, for video cards our testing methodologies are going to lean towards those methods best suited for people buying the product - enthusiasts.

To that end, 2560 monitors have become quite popular with enthusiasts. In recent months this especially goes for the $400 2560x1440 "Catleap" monitor (and other monitors based on the same LG panel), marking the first time such a high resolution IPS monitor has been available below $500. 1920 remains the most important resolution for gamers, but when we're talking about $300+ cards there is a significant minority running larger monitors.

Finally, in case you missed it in the article, we did quickly discuss optimal resolutions and what we would be focusing on:

"For a $300 performance card the most important resolution is typically going to be 1920x1080/1200, however in some cases these cards should be able to cover 2560x1440/1600 at a reasonable framerate. To that end, we’ll be focusing on 1920x1200 for the bulk of our review."

And 1920x1200 is what we based our concluding recommendations on.

TheJian - Tuesday, August 21, 2012 - link

So you don't write for 98% of your readership? You're expecting us to believe you're hitting the 2560x1600 so hard due to a monitor I can't even buy on newegg? Seriously? I already pointed out NO 24in monitor at newegg (68 of them) runs above 1920x1200, and out of 52 monitors at newegg that are 27in only 11 of those run at 2560x1440, NONE at 2560x1600. Why not run your benchmarks at that res then if it's all about catleap? Again, you may think your readership is "enthusiast" but I'd argue that if you're calling enthusiasts only people with a $688-$2300 monitor (see why it's not $400 below) you're not in tune with your readership or what enthusiast means. Read on, it's going to get worse, using YOUR WORDS. It's long people, but WORTH THE READ ;)

The monitor: Available at Amazon from 4 SELLERS. 3 from Korea, and one from New Zealand...ROFL. 3 are "JUST LAUNCHED" WITH NO REVIEWS. Ok the one has 2 reviews in the last 12 months, but consider that just started too...The 4th,:

http://lowellmac.ecrater.com/help.php

No FAQ (BLANK PAGE) or ABOUT (BLANK PAGE) pages, and it looks like the store went up so quick they must be fraudulent. Contact? A GMAIL ACCOUNT! You can't even dial this joint...er...I mean can't dial this DUDE. :)

You couldn't come up with a better defense than a product (popular with enthusiasts? WHO?) that I can't even buy in the USA? Who the heck is buying $400 monitors from places with no phone, a BLANK about, a BLANK faq page, and ZERO reviews? Really? Where did you buy yours?...ROFL. KOREA? Refunds? From the only one I can dig up above: "Refunds and returns are generally not accepted unless product differs from description." from the only page they seem to have taken the time to fill in...Seriously Ryan? Are you freaking kidding me. I've never even heard of a YAMAKASI CATLEAP monitor...Now I know why. It doesn't exist in America...ONLY ONE REVIEW of the actual product from Aug 2nd. Probably from the guy that started his "JUST LAUNCHED" website...LOL. Which brings us to all the ones priced HIGHER than your $400. Amazon has an HP IPS for $688 (newegg too, this is the cheapest ANYWHERE!), Dell IPS for $800. Google that thing. In fact, ALL 2560x1440 monitors (11) at newegg are $690-2300! So you can't get into this club for under $690. So you benchmark based on Monitors you can't get (for the price you said, breaking the $400 barrier) from countries I would never give a credit card to (not without LIVING there). Raise your hand if you're one of the 2% that first, has a 27incher, then even more special the person Ryan wrote the review for who apparently has $688-2300 or this ONE "CATLEAP" owner....What's that like a decimal point of your readership? Yeah...I would write for that many people too.

By "significant minority" you mean .2% of the 2% right? :) So you think most of your readership are in that .2% of the 2% then? Really? Do people with $680-$2300 for monitors only have $300 for a vid card to push it? Do they really dicker over $20 as you insinuate in your review conclusion? For such a popular monitor I find it hard to believe newegg doesn't even sell it. Don't you? Amazon doesn't sell it either (only through marketplace from no-namers in KOREA...Not Amazon themselves). Nope didn't miss your statements, used them all over the place here in the comments section already :) You really should have just said, "I've written a biased article, I'm sorry, I retract it, now go away. thanks..."

Get ready...I'm going to use your words and benchmarks, easy for you to follow :)

Here we go:

"Coupled with the tight pricing between all of these cards, this makes it very hard to make any kind of meaningful recommendation here for potential buyers. Compared to the 7870 the GTX 660 Ti is a solid buy if you can spare the extra $20, though it’s not going to be a massive difference."

OK, so super enthusiasts care about $20 (but have $688-$2300 for a monitor or that POPULAR $400 available nowhere), but even if you buy this it won't make much difference in your conclusion. "if you can spare the $20"...They can buy that $688-$2300 monitor though..LOL You're not making sense here....Aren't these "potential buyers" buying $688-$2300 monitors? Isn't that what you said? But lets really break it down past the monitor $688-$2300, or nonexistent monitor garbage from companies with ZERO reviews and from Korea or New Zealand:

This is YOUR data:

7870 @2560x1600 unplayable at Warhead (25fps, min18.9fps),

"At 38.8fps it’s playable, but it’s definitely not a great experience" You said that about 1920x1200 on this game! You weren't done there though: "So for anyone wanting to partake in this classic, an AMD card is the way to go and it doesn’t matter which; even the 7870 is marginally faster."

MARGINALLY FASTER? Only over the REF card you can't get. TWO others in the list, it LOST by your MARGINALLY SLOWER. Come again? Even the REF card only lost 39.9fps (7870) to 38.8. ONE FPS! No other 660 wins or loses by more than .5 fps (that's 1/2 fps out of 40!) Have you heard of margin of error? This is the definition of it. $299 gets you ZOTAC AMP SPEEDS at newegg. Not to mention this game is from 2008 and Crysis 2 would be a LOSS.

Metro2033 28fps (min will be less, unplayable), Dirt3 74fps (but the 660's beat it anyway), shogun 2, again unplayable (19.1fps), arkham city 45fps (but beaten by 15%) but minimums are going to push unplayable [scratch that check hardocp below, the 7950 hits 10-15fps in this game min for quite a bit! with max 64fps] - TOTALLY UNPLAYABLE BATMAN 2560x1600, portal 2 37.3 (again 58+ for 660's-but 50% faster wont be noticed) so minimums here will hit unplayable probably also. Battlefield 3, 41fps (again 660's 56 jeez...no difference) again minimums may hit below 30fps in a MULTI Player game like Battlefield 3 it's UNPLAYABLE, elder is a wash at 76fps, Civ5 can run 68.8 but again beaten by 660 70+fps. So for MAYBE half of these games people will be running at your enthusiast res. But it's a $688-$2300 monitor (unless you're crazy and buy from korea) that they will turn down their graphics for so they can run on their shiny new monitor...Yep that's enthusiast mentality alright. I'm rich, on the bleeding edge but I'll turn down my graphics and run OUTSIDE the native res of my expensive toy. I think most buy $600 video cards and $300 monitors, not the other way around. Plenty of 27in 1920x1080 for $250-600 (the other 41 on newegg, NONE at 1920x1200). So this card really is NOT for enthusiasts thereby making it a race at 1920x1200 for this card 7870. Well really 1920x1080, since NO 27in on newegg.com is 1920x1200 and besides you just said RIGHT ABOVE THIS MSG "And 1920x1200 is what we based our concluding recommendations on. " We're about to test the truth of that statement Ryan :)

660TI vs. 7870 @1920x1200

Civ5 >3% faster

Skyrim >6% faster

Battlefield3 >37% faster (above 50% or so in FXAA High!!)

Portal 2 >62% faster (same in 2560x...even though it's useless IMHO)

Batman Arkham >22% faster

Shogun 2 >31% faster

Dirt3 >11% faster

Metro 2033>15% faster

Warhead ~wash (all 660 38.8fps-40.4 & 7870 is 39.9) WASH

STARCRAFT 2: 7970ghz (108FPS) VS. GTX670 (121FPS) @1920x1200

http://www.anandtech.com/show/6096/evga-geforce-gt...

So even though you left it out (lame excuse, use the same one you did here last month!), we can guestimate it would be just behind or FASTER than the gtx670 as it's beaten a few times by the OC'd 660's (I.E. shogun2 3fps faster than gtx670) in this review for the 660 TI. Which BTW as I've just shown SLAUGHTERED the 7970GHZ edition! NO wonder you left it out! The 7950 scored 88.2! in that test, so around 40-50% faster in STARCRAFT2 vs a card that isn't even the 7870 we're talking about for $20 ryan thinks you should save! So what 55% faster than 7870 in starcraft 2? Nah, never would notice that would they? Save your $20, none of these speed increases (some above 50%) matter at all...LOL.

So for $20 you won't notice the difference (in this res this card would be bought for) with these phenomenal results vs. the 7870. Faster in everything, 31%, 37%, 62%, 15%, 11%, 22% & 50%+ (starcraft2)...People won't notice a card running this much faster in most of the games you tested? You really want to stand by that statement? Only a retard wouldn't buy this performance gain almost across the board. It would be killed in Crysis 2 also at this res since 7870 can't do what the 7970 series can in warhead already and Crysis 2 doesn't work like Warhead does in AMD's favor as I've shown in other comments here. I'm sure it will do well in Borderlands 2 that comes free with it also as it's a TWIMTBP game (another 50% game?). MASSIVE DIFFERENCE HERE PAL. "if you can spare the $20" though you've just bought your shiny $688-$2300 monitor somehow...ROFL. OK. Still "hard to make any kind of meaningful recommendation."??? C'mon...Seriously? You made one that makes NO SENSE.

"What’s different about this launch compared to the launches before it is that AMD was finally prepared; this isn’t going to be another NVIDIA blow-out." REALLY? Does anyone believe this statement after following my points here?

"As it stands, AMD’s position correctly reflects their performance; the GTX 660 Ti is a solid and relatively consistent 10-15% faster than the 7870"

Didn't I just prove that BS? 31%, 37%, 62%, 15%, 11%, 22% & 50%+. Well you got two right, out of the bunch. But otherwise it's 22%+ for EVERYTHING you tested. OH and note while writing this I see AMD just hacked all their prices because - Well, they KNOW THE TRUTH. They did the math like I did and re-adjusted IMMEDIATELY. 10 hours ago...LOL.

"AMD has already bracketed the GTX 660 Ti by positioning the 7870 below it and the 7950 above it, putting them in a good position to fend off NVIDIA." ? REALLY? Why did they just drop prices (10 hours ago...ROFL) across the board?

"while the 7950 is anywhere between a bit faster to a bit slower depending on what benchmarks you favor." HERE WE GO AGAIN PEOPLE: Follow along:

Civ5 <5% slower

Skyrim >7% faster

Battlefield3 >25% faster (above 40% or so in FXAA High)

Portal 2 >54% faster (same in 2560x...even though it's useless IMHO)

Batman Arkham >6% faster

Shogun 2 >25% faster

Dirt3 >6% faster

Metro 2033 =WASH (ztac 51.5 vs. 7950 51...margin of error..LOL)

Crysis Warhead =WASH (ref 7950 (66.9) lost to ref 660 (67.1), and 7950B 73.1 vs 72.5/70.9/70.2fps for other 3 660's) this is a WASH either way.

STARCRAFT 2: 7950 (88.2fps) VS. GTX670 (121.2fps) @1920x1200

So another roughly 37% victory for 660TI extrapolated? I'll give you 5 frames for the Boost version...Which still makes it ~30% faster in Starcraft 2.
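The "roughly 37%" and "~30%" figures above follow from the two framerates quoted in the comment. A minimal sketch of that arithmetic is below; it uses the GTX 670 score as a stand-in for the 660 Ti exactly as the commenter does (that substitution is the commenter's extrapolation, not a measured result), and the helper names are made up for illustration.

```python
def percent_faster(card_fps: float, baseline_fps: float) -> float:
    """How much faster `card_fps` is than `baseline_fps`, in percent."""
    return (card_fps / baseline_fps - 1.0) * 100.0


def percent_slower(card_fps: float, baseline_fps: float) -> float:
    """How much slower `card_fps` is than `baseline_fps`, in percent."""
    return (1.0 - card_fps / baseline_fps) * 100.0


GTX670_FPS = 121.2      # StarCraft 2 @ 1920x1200, as quoted in the comment
HD7950_FPS = 88.2       # same test, as quoted in the comment
BOOST_ALLOWANCE = 5.0   # the commenter's "5 frames for the Boost version"

lead_vs_7950 = percent_faster(GTX670_FPS, HD7950_FPS)
lead_vs_7950b = percent_faster(GTX670_FPS, HD7950_FPS + BOOST_ALLOWANCE)
deficit_7950 = percent_slower(HD7950_FPS, GTX670_FPS)

print(f"GTX 670 vs 7950:        {lead_vs_7950:.1f}% faster")
print(f"GTX 670 vs 7950 + 5fps: {lead_vs_7950b:.1f}% faster")
print(f"7950 vs GTX 670:        {deficit_7950:.1f}% slower")
```

Note that the direction of the comparison matters: a card that is about 37% faster than its rival leaves that rival only about 27% slower, so percentages quoted in opposite directions are not interchangeable.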

So vs. the 7950B which you MADE UP YOUR MIND ON, here's your quote:

"If we had to pick something, on a pure performance-per-dollar basis the 7950 looks good both now and in the future"

we have victories of 25% (bf3), 54%(P2), 7%(skyrim), 25% (shog2) 30%+ (sc2), 6% (dirt3)

1 loss at <5% in CIV5 and the rest washes (less than 3%) ...But YOU think people should buy the card that gets its butt kicked or is a straight up wash. You said your recommendations were based on 1920x1200...NOT 2560x1600...Well, suck it up, this is the truth here in your OWN benchmarks...Yet you've ignored them and LIED. Let me quote you from ABOVE again lest you MISSED your own words:

"And 1920x1200 is what we based our concluding recommendations on. "

Then explain to me how you can have a card that wins in ONE game at <5% and BEATEN in 6 games by OVER 6%, with 4 of those 6 games BEATEN by >25%, and yet still come up with this ridiculous statement (again your conclusion):

"On the other hand due to the constant flip-flopping of the GTX 660 Ti and 7950 on our benchmarks there is no sure-fire recommendation to hand down there. If we had to pick something, on a pure performance-per-dollar basis the 7950 looks good both now and in the future"

Are you smoking crack or just BIASED? Being paid by AMD? Well? The 7950 is NOT cheaper than the 660TI. Can you explain your math sir? I'm confused. What "flip-flopping of the GTX 660 Ti and 7950"?? You're making these statements on 1920x1200 right? OR should I quote you again??...LOL.

More bias just keeps going in that article too (if anyone still has questions):

"But the moment efficiency and power consumption start being important the GTX 660 Ti is unrivaled, and this is a position that is only going to improve in the future when 7950B cards start replacing 7950 cards."

So wait a minute: It's hot, sucks juice and it only wins ONE game by <5%, loses 4 games by >25%, another 2 games > 6% and you still recommended the 7950 "If we had to pick something, on a pure performance-per-dollar basis the 7950 looks good both now and in the future" WHOA...Why in the future with all these losses?

Maybe it's this next statement?: "in particular we suspect it’s going to weather newer games better than the GTX 660 Ti and its relatively narrow memory bus."

Are we back to concluding based on 2560x1600 again? Which again, no monitor UNDER 30inches uses. Even the 27in (11 of 52) only use 2560x1440. And with all the losses at 1920x1200 how can you conclude anything but this 1920x1200 resolution and below are NEVER memory constrained or it would be LOSING. You have to push the card above 27in monitor resolutions to get this difference to EVER show up. Even then, it's a VERY questionable argument. Or should I run through those scores for you too? Back to your BS:

"As we mentioned in our discussion on pricing, performance cards are where we see the market shift from RICH ENTHUSIASTS who buy cards virtually every generation to more practical buyers who only buy every couple of generations."

OK, so you benchmarked at 2560 and beat it like a dead horse because of "enthusiasts" who by your own words (in the freaking conclusion again) are "RICH ENTHUSIASTS" would buy a $300 card? I make ~$50K/yr and paid $260 for my Radeon 5850 and have a 24in and 22in in use (24in can be had for $170, 22in ~$120). I'm not in the top5% of wealthy, heck I don't even crack the top 25%...LOL. I'm about to buy a $300 660TI though. You won't see me buy a $700-$2300 monitor ANY time soon. But YOUR "RICH ENTHUSIASTS" buy these and then spend $300 on a card. No, that guy just bought 3 24's and a GTX690 (or two) because the rich are running 5760x1200 with these (a single card even). OR that RICH guy bought a GTX690 and one of your $700-2300 IPS 27in monitors. Rich, by the very definition, don't buy the 4th rung in video cards. WE have a 660/670/680 & 690. But you suggest the "RICH ENTHUSIASTS" would buy 4th place? Put the crack pipe down, they aren't buying this card to run 2560x1600. They already own one or two GTX 680/690's. I wouldn't even call you RICH if you had anything less than a 690. You say they buy every year and I'm sure the RICH make far more than 100K, so probably have $1000 lying around for a GTX 690. I mean we're talking a 2% market share above 1920x1200 (check steampowered.com), by that % we're talking millionaires here. As of 2011 we have 11 million millionaires in USA. A LOT higher than 2% and I think they all have >$300 for a yearly card. They buy 2 of whatever or #1 single. :) Buying a $300 card to a millionaire "ENTHUSIAST" would be EMBARRASSING.

WORSE: You have people thinking they can OC the crap out of these still:

http://www.anandtech.com/show/6152/amd-announces-n...

"The 7950 on the other hand is largely composed of salvaged GPUs that failed to meet 7970 specifications. GPUs that failed due to damaged units aren’t such a big problem here, but GPUs that failed to meet clockspeed targets are another matter. As a result of the fact that AMD is working with salvaged GPUs, AMD has to apply a lot more voltage to a 7950 to guarantee that those poorly clocking GPUs will correctly hit the 925MHz boost clock."

Don't expect HUGE overclocks on all chips...The deck is stacked against you. You listening RUSSIAN? ;) RYAN's words...NOT MINE :)

Let's look outside anandtech to further prove the 1920x1200 point or even make the whole BANDWIDTH POINT MOOT :) Can you OC a 3GB 660TI memory?

http://hardocp.com/article/2012/08/21/galaxy_gefor...

WOW, 7.71ghz MEMORY vs. 6ghz (6008) for normal REF 660TI. 185GB/sec! This card is also $339 and has 3GB...LOL. OMG...What just happened? :)

Raising JUST the memory got them 10.4% in battlefield 3 @ 2560x1600! LOL.

1920x1080 got 12% JUST FROM THE MEMORY. What bottleneck? So you can already go another 1100mhz higher (even with an extra GB of mem) than the ZOTAC AMP in this review at anandtech even though RYAN hasn't shown why I would need it, nice to know it's all there just in case :) AMP=6.6ghz, this is 7.71ghz!
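The 185GB/sec figure can be checked against the memory clocks quoted above. This is a minimal sketch, assuming the GTX 660 Ti's published 192-bit memory bus (the width is not stated in this thread) and treating the quoted clocks as effective GDDR5 data rates; the function name is made up for illustration.

```python
def memory_bandwidth_gb_s(effective_rate_mhz: float,
                          bus_width_bits: int = 192) -> float:
    """Peak memory bandwidth in GB/s.

    effective_rate_mhz: effective per-pin data rate (e.g. 6008 for "6GHz" GDDR5).
    bus_width_bits:     memory bus width; 192 is an assumption taken from the
                        card's published spec, not from this thread.
    """
    bits_per_second = effective_rate_mhz * 1e6 * bus_width_bits
    return bits_per_second / 8 / 1e9   # bits -> bytes -> GB


# Data rates as quoted in the comments above.
for label, rate_mhz in [("Reference 660 Ti", 6008),
                        ("Zotac AMP", 6600),
                        ("HardOCP Galaxy overclock", 7710)]:
    print(f"{label:26s} {rate_mhz} MHz -> {memory_bandwidth_gb_s(rate_mhz):.1f} GB/s")
```

Under that assumption the reference card lands at about 144.2 GB/s, the Zotac AMP at about 158.4 GB/s, and the 7.71GHz overclock at about 185.0 GB/s, which matches the number cited from HardOCP.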

SKYRIM PLAYERS TAKE NOTE:

"In Skyrim at 8X MSAA plus FXAA, which is a very high setting for this card, at stock frequencies we got 44.2 FPS. Overclocking the memory boosted performance by 11%." - NOTE THE MINIMUM OF 35FPS AVG 49 MAX 65. 2560x

"We ended up with a GPU offset of +110, which put us at a baseclock of 1116MHz or GPU Boost of 1195MHz. The actual frequency though while gaming was running at 1298MHz, which we feel safe calling 1.3GHz. This was about 10MHz slower than it was when overclocking the GPU alone."

CHECK THAT OUT, 1298, CARD OVERCLOCKING ITS OVERCLOCK BEHIND YOUR BACK BY 100MHZ MORE! What you buy out of the box is GUARANTEED, your card may do far more all by itself, no need to do anything unlike 7950's. Check it out people, with 8xMSAA/16af HIGHEST in game settings possible@2560

Zotac Anandtech 4xmsaa=75.9 HARDOCP 8xMSAA=67! Minfps=37! So you can double your MSAA and STILL not run into a memory bandwidth issue despite ryan harping, harping, harping,...Did I mention he beat it like a dead horse? They couldn't tell it from the GTX670!

Witcher 2 @2560 faster on GTX660, but unplayable on both at min 18/16...ROFL proving more, these cards are NOT for 2560x1600. Batman Arkham hit min 10fps at 2560x1600 on 7950. Look how much time it spends below 25fps in the graph, it's almost half the time between 10fps and 25fps. OUCH. NOT BUILT FOR 2560x1600, not sure the 7970 is either at these fps..LOL

Are you getting the point RYAN? You won't be running many things at 2560x1600 with fps in the 10 range. How many more take a 54fps hit like batman on Radeon 7950? This whole bandwidth thing is a NON issue because these just are NOT built for this res. Like I said, people usually run hi-res with TWO cards and ABOVE 2560x1600. You can verify that at Steampowered.com 2560x1600 is a DECIMAL point. Because most opt for two cheaper cards and run a LOT higher. But just for giggles:

"In Batman we experienced large improvements in performance with the overclock on the GALAXY GTX 660 Ti. We are performing 22% faster than the GTX 670 in this game with the overclocked GTX 660 Ti, and 55% faster than the Radeon HD 7950."

It never dropped below 28fps. Bandwidth issue? @2560x1600 the 7950 dropping to 10fps while 660TI is up 22% on the GTX670ref and well...10fps...LOL. Say what? 55% faster @2560x1600? I thought this card had a memory bandwidth problem? Only if you say so ryan...ROFLMAO.

CLASS DISMISSED. Anything left to say to defend yourself RYAN? At least an OOOPS? "I'm rewriting my analysis? My conclusion was all wrong JIAN" ? :)

Please accept my spelling grammar errors if there are any...it's 5:49am in AZ :)

I sincerely hope you have the GUTS to respond and defend this somehow.