Khronos Announces OpenGL ES 3.0, OpenGL 4.3, ASTC Texture Compression, & CLU

by Ryan Smith on August 6, 2012 9:00 AM ESTOpenGL 4.3 Specification Also Released

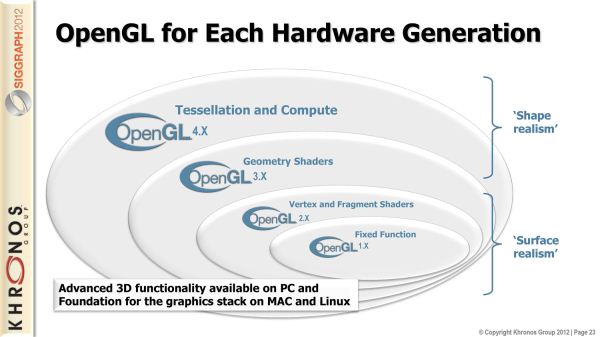

Alongside OpenGL ES 3.0, desktop OpenGL is also being iterated upon today with the launch of OpenGL 4.3. Unlike OpenGL ES this is only a much smaller point update. This is not to say that it’s unimportant, but it will bring a smaller list of new features to the table.

At the same time though, this also marks what may potentially be an interesting inflection point for OpenGL gaming on the desktop. On Windows OpenGL usage has never been lower; the only AAA game engine still based on OpenGL is id Tech 5, which with the termination of licensing by id is only used internally. Khronos of course would like to change that, so when Valve Software says that they’re going to be porting Source over to Linux (thereby increasing the audience for OpenGL games) it’s of quite some interest to Khronos. At the same time desktop OpenGL still has a long way to go to recapture its glory days in the late 90’s and early 2000’s, so Khronos is doing what they can to spur that on.

OpenGL ES 3.0 Superset & ETC Texture Compression

Moving on to looking at OpenGL 4.3’s features, unsurprisingly, one of the big additions to OpenGL 4.3 is to add the necessary features to make it a proper superset of OpenGL ES 3.0. One of Khronos’s missteps with OpenGL ES 2.0 was that it wasn’t until OpenGL 4.1 in 2010 that desktop OpenGL become a proper superset of OpenGL ES 2.0, which they’re rectifying this time around by launching OpenGL ES and its equivalent OpenGL at the same time.

Because OpenGL ES 3.0 is largely taken from desktop OpenGL in the first place, there aren’t a ton of changes due to this. But there is one: texture compression. The aforementioned standardization around ETC applies to both OpenGL ES and OpenGL, which means that starting with OpenGL 4.3, desktop OpenGL will have a standard texture compression format.

Practically speaking, this won’t make a huge difference to desktop developers right now. Because S3TC is a required part of the Direct3D specification and all desktop GPUs support Direct3D, S3TC has been a de-facto OpenGL standard for nearly 10 years now. And because few developers will target OpenGL 4.3 right away, that won’t change. But this means that developers targeting 4.3 do finally have a choice in texture compression, and developers doing cross-platform development with OpenGL ES can use the same texture compression format in both cases.

It’s worth noting though that just because a GPU “supports” ETC doesn’t mean it has hardware support. NVIDIA has told us that they’ll be 4.3 compliant, but they’re handling ETC by decompressing the texture in their drivers before sending it over to the GPU in an uncompressed format, and while AMD wasn’t able to get back to us in time it’s almost certainly the same story over there. For ports of OpenGL ES games this isn’t going to be a problem (dGPUs have plenty of high-bandwidth memory), but it means S3TC will remain the de-facto standard desktop OpenGL texture compression format for now.

OpenGL Compute Shaders

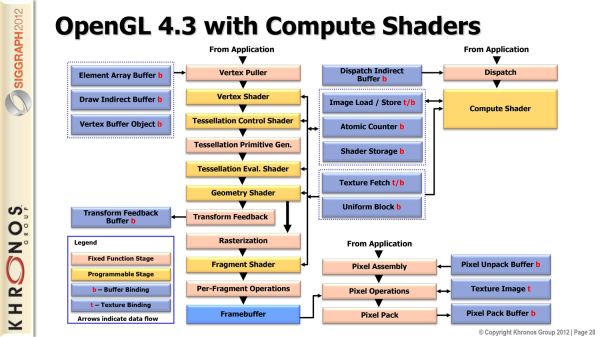

Moving on, while OpenGL ES 3.0 compatibility is a big deal for OpenGL, it’s actually not the biggest feature addition for OpenGL 4.3. For that we turn to compute shaders.

As a bit of background, when meaningful compute functionality was introduced for GPUs, Microsoft and Khronos went in two separate directions. Khronos of course created OpenCL, a full-featured ANSI C based API for compute. Microsoft on the other hand introduced compute shaders, which was a special class of HLSL designed for compute. OpenCL is far more flexible, but flexibility has its price. Specifically, implementing compute functionality in OpenGL games often wasn’t as easy as the equivalent functionality using a Direct3D compute shader, and the overhead of OpenCL limited performance.

The end result is that Khronos has decided to implement compute shaders in OpenGL in order to bridge this gap. OpenCL of course remains as Khronos’s premiere compute API for both stand-alone compute applications and OpenGL games/applications that need the full flexibility, for but games that don’t need that level of flexibility and only want to run compute work on a GPU there is now another option.

Like Direct3D’s compute shader functionality, OpenGL’s compute shader functionality is geared towards relatively simple pixel operations, where approaching an algorithm in a compute manner (instead of a graphics manner) allows for faster execution. The compute shaders themselves will be written in GLSL rather than C, underscoring the fact that this is an extension of OpenGL’s shading framework rather than their compute framework. The target for this functionality will be games and other applications which perform compute “close to the pixel”, taking advantage of the faster shared memory and greater thread count that compute shaders offer.

Since OpenGL’s compute shader functionality is being spurred on by Direct3D, it should come as no surprise that OpenGL’s compute shaders are going to be a very close implementation of Direct3D’s compute shaders. Specifically, OpenGL’s compute shader functionality is being advertised by Khronos as matching D3D11’s compute shader functionality. The differences between HLSL and GLSL mean that there’s no straightforward portability, but it underscores the fact that this is the OpenGL analog of D3D’s compute shader functionality.

New Texture & Buffer Features

Moving on, OpenGL 4.3 will also be introducing some new texture and buffer features. On the texture side of things, one new feature will be texture views, which allow for a texture to be “viewed” in a different way by interpreting its results in a different manner, all without needing to duplicate the texture for modification. As for buffers, 4.3 introduces support for reading and writing to very large buffers across all shader types and all stages. The idea behind this is that it’s an efficient way for those new compute shaders to communicate with the graphics pipeline, given the large amount of data that can be in flight with a compute shader.

Wrapping things up, for some time now Khronos has been working on bringing OpenGL into alignment with Direct3D in order to close the feature and developer gap that has been created between the two. As Khronos correctly notes, with the addition of compute shader functionality OpenGL is now a true superset of Direct3D. If desktop OpenGL is going to see a resurgence in the next few years, it’s now in a far better position to pull that off than it was in before.

46 Comments

View All Comments

whooleo - Monday, August 6, 2012 - link

Concerning the desktop, OpenGL is hardly being used in games due to DirectX being better and because many of the Linux and Mac OS X users aren't gamers. I mean there are games on Mac OS X but not many gamers on Macs. Plus it shows, Apple's graphics drivers aren't where they should be compared to Linux and Windows along with the graphics cards they include with their PCs. Enough about Mac OS X, Linux also has too many issues with graphics cards and drivers to be friendly enough to the average gamer. This is just a summary of the reasons why desktop OpenGL adoption is low. Now don't get me wrong, OpenGL can be a great open source alternative to DirectX but some things need to be addressed first.bobvodka - Tuesday, August 7, 2012 - link

Just a minor correction; OpenGL isn't 'open source' it is an 'open standard' - you get no source code for it :)whooleo - Tuesday, August 7, 2012 - link

Whoops! Thanks for the correction!beginner99 - Tuesday, August 7, 2012 - link

maybe I got it all wrong but aren't the normal gaming gpus rather lacking in OpenGL performance? I always thought that that was one of the factors the workstations cards differ in (due to driver). Wouldn't that impact game performance as well?Cogman - Tuesday, August 7, 2012 - link

> As OpenCL was designed to be a relatively low level language (ANSI C)OpenCL was BASED on C99. It is not, however, C99. They are two different languages (in other words, you can't take OpenCL code and throw it into a C compiler and vice versa).

Sorry for the nit pick, however, it is important to note that OpenCL is its own language (Just like Cuda is its own language).

UrQuan3 - Thursday, August 16, 2012 - link

Maybe someone can set me straight on this. Years ago, I had a PowerVR card for my desktop (Kyro II). While this was not a high end card by any means, I seem to remember a checkmark box "Force S3TC Compression". The card would then load the uncompressed textures from the game and compress them using S3TC before putting them in video RAM. The FPS performance increase was very noticeable although load times went up a little.Am I confused about that? If I'm not, why isn't that more common? Seems like that would solve the problem of supporting multiple compression schemes. Of course, if a compression scheme isn't general purpose, that could cause problems.