Intel Core i5 3470 Review: HD 2500 Graphics Tested

by Anand Lal Shimpi on May 31, 2012 12:00 AM EST

Posted in: CPUs, Intel, Ivy Bridge, GPUs

Intel's HD 2500 & Quick Sync Performance

What makes the 3470 particularly interesting to look at is the fact that it features Intel's HD 2500 processor graphics. The main difference between the 2500 and 4000 is the number of compute units on-die:

| Intel Processor Graphics Comparison | Intel HD 2500 | Intel HD 4000 |
|---|---|---|
| EUs | 6 | 16 |
| Base Clock | 650MHz | 650MHz |
| Max Turbo | 1150MHz | 1150MHz |

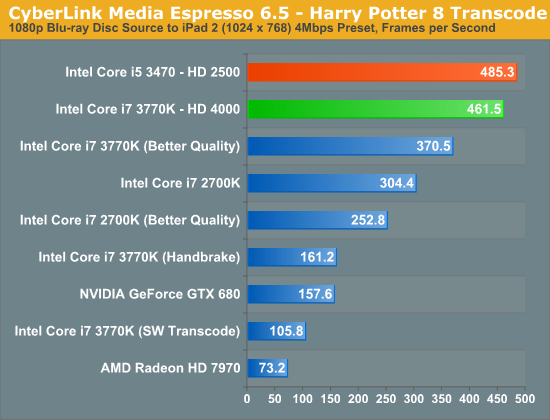
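As a rough sanity check on the comparison above, the sketch below works out how much raw EU capacity separates the two parts. It assumes throughput scales linearly with EU count times clock speed, which is a simplification of ours and not something Intel or this review claims. The `Gpu` class and `advantage` helper are hypothetical names used only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    """Specs taken from the comparison table; the class itself is illustrative."""
    name: str
    eus: int
    base_mhz: int
    turbo_mhz: int

    def relative_throughput(self, clock_mhz: int) -> int:
        # Assumption: throughput is proportional to EU count x clock.
        # Real workloads are also limited by memory bandwidth, drivers, etc.
        return self.eus * clock_mhz


HD2500 = Gpu("Intel HD 2500", eus=6, base_mhz=650, turbo_mhz=1150)
HD4000 = Gpu("Intel HD 4000", eus=16, base_mhz=650, turbo_mhz=1150)


def advantage(a: Gpu, b: Gpu) -> float:
    """Ratio of a's to b's relative throughput at max turbo."""
    return a.relative_throughput(a.turbo_mhz) / b.relative_throughput(b.turbo_mhz)


if __name__ == "__main__":
    ratio = advantage(HD4000, HD2500)
    print(f"{HD4000.name} vs {HD2500.name}: {ratio:.2f}x the EU-clock product")
```

Because both parts share the same base and turbo clocks, the ratio reduces to the EU counts alone (16 to 6). Treat it as an upper bound on the gap, not a prediction of measured frame rates.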

At 6 EUs, Intel's HD 2500 has the same number of EUs as the previous generation HD 2000. Even so, Intel claims that performance should be around 10-20% faster than HD 2000 in 3D games. Given that Intel's HD 4000 is only getting close to the minimum level of 3D performance we'd like to see from Intel, chances are the 2500 will not impress. We'll get to quantifying that shortly, but the good news is Quick Sync performance is retained:

The HD 2500 does a little better than our HD 4000 here, but that's just normal run-to-run variance. Quick Sync does rely heavily on the EU array for transcode work, but it looks like the workload itself isn't heavy enough to distinguish between the 6 EU HD 2500 and the 16 EU HD 4000. If your only need for Intel's processor graphics is transcode work, the HD 2500 appears indistinguishable from the HD 4000.

The bad news is I can't say the same about its 3D graphics performance.

67 Comments


duploxxx - Thursday, May 31, 2012 - link

You could ask why even bother to add a GPU here; it is utter crap. Now let's have a look at the other review to see what they left in the U 17W parts ......

PrinceGaz - Thursday, May 31, 2012 - link

Because most people don't buy a PC to play the latest games or do 3D rendering work with it.

CeriseCogburn - Monday, June 11, 2012 - link

Correct, PrinceGaz, but then we have the AMD fanboy contingent that, for some inexplicably insane fantasy reason, now wants to pretend Llano, Trinity and HD graphics are gaming items... The whole place has gone bonkers. Buy a freaking video card; every *********** motherboard has one 16x slot in it.

mother of god !

ananduser - Thursday, May 31, 2012 - link

I noticed you ran a BF3 bench and assumed that the game is playable on a HD4000. Have you actually played the game with the HD4000 on a 32 player map? I dare you to try and update this review and say that BF3 is "playable" on a HD4000.

JarredWalton - Thursday, May 31, 2012 - link

"The HD 4000 delivered a nearly acceptable experience in Battlefield 3..." I wouldn't call that saying the game is "playable". Obviously, the more people there are on a map the worse it gets, but if you're playing BF3 on 32 player maps (or you plan to), I'd hope you understand that you'll need as much GPU (and quite a bit of CPU) as you can throw at the game.

That said, I'll edit the paragraph to note that we're discussing single-player performance, and multiplayer is a different beast.

ananduser - Thursday, May 31, 2012 - link

Well, when one sees 37 fps in BF3, one might wrongly think: "OMG, 37 FPS on a thin and low-powered [insert ultrabook brand]; I'm going tomorrow and buying it."

I think that playing the game for 5-10 minutes with FRAPS enabled and providing the highest/lowest FPS count for each hardware setup is more revealing than running built-in engine demos.

CeriseCogburn - Friday, June 1, 2012 - link

Don't forget this applies to AMD Trinity, whose crappy CPU will cave in on a 32 player server.

ananduser - Thursday, May 31, 2012 - link

Oh, and I doubt single-player performance will ever touch that 37 data point either.

JarredWalton - Thursday, May 31, 2012 - link

On the quad-core desktop IVB chips it surely will -- that's why the result is in the charts -- but for laptops? Nope. I think the best result I've gotten (at minimum details and 1366x768) is around 25-26FPS in BF3.

ltcommanderdata - Thursday, May 31, 2012 - link

"Intel has backed OpenCL development for some time and currently offers an OpenCL 1.1 runtime for their CPUs, however an OpenCL runtime for Ivy Bridge will not be available at launch. As a result Ivy Bridge is limited to DirectCompute for the time being, which limits just what kind of compute performance testing we can do with Ivy Bridge."

http://software.intel.com/en-us/articles/vcsource-...

The Intel® SDK for OpenCL Applications 2012 has been available for several weeks now and is supposed to include GPU OpenCL support for Ivy Bridge. Isn't that sufficient to enable you to run your OpenCL benchmarks?

http://downloadcenter.intel.com/Detail_Desc.aspx?a...

If not, the beta drivers for Windows 8 also support Windows 7 and add both GPU OpenCL 1.1 support and full OpenGL 4.0 support, including tessellation, so they would allow you to run your OpenCL benchmarks and a Unigine Heaven OpenGL tessellation comparison.