The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

While compute functionality could technically be shoehorned into DirectX 10 GPUs such as Sandy Bridge through DirectCompute 4.x, neither Intel's nor AMD's DX10 GPUs were really meant for the task, and even NVIDIA's DX10 GPUs paled in comparison to what they've achieved with their DX11 generation GPUs. As a result Ivy Bridge is the first truly compute-capable GPU from Intel. This marks an interesting step in the evolution of Intel's GPUs, as originally projects such as Larrabee Prime were supposed to help Intel bring together CPU and GPU computing by creating an x86-based GPU. With Larrabee Prime canceled, however, that task falls to the latest rendition of Intel's GPU architecture.

With Ivy Bridge Intel will be supporting both DirectCompute 5, which is dictated by DX11, and the more general compute-focused OpenCL 1.1. Intel has backed OpenCL development for some time and currently offers an OpenCL 1.1 runtime for their CPUs; however, an OpenCL runtime for Ivy Bridge will not be available at launch. As a result Ivy Bridge is limited to DirectCompute for the time being, which limits just what kind of compute performance testing we can do with Ivy Bridge.

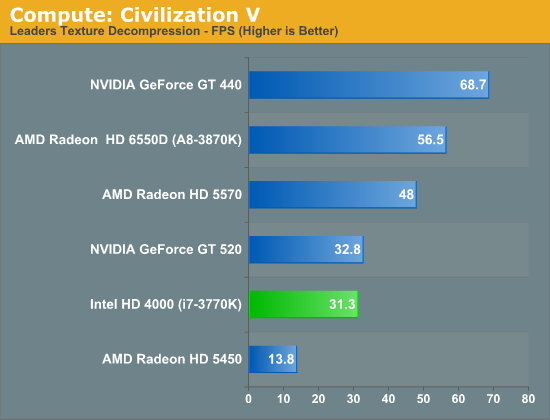

Our first compute benchmark comes from Civilization V, which uses DirectCompute 5 to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. And while games that use GPU compute functionality for texture decompression are still rare, the technique is becoming increasingly common, as it's a practical way to pack textures in the most suitable manner for shipping rather than being limited to DX texture compression.

As we alluded to in our look at Civilization V's performance in game mode, Ivy Bridge ends up being compute limited here. It's well ahead of the even more DirectCompute-anemic Radeon HD 5450, in spite of the fact that it can't take a lead in game mode, but it's slightly trailing the GT 520, which has a similar amount of compute performance on paper. This largely confirms what we know from the specs for HD 4000: it can pack a punch in pushing pixels, but given a shader-heavy scenario it's going to have a great deal of trouble keeping up with Llano and its much greater shader performance.

But with that said, Ivy Bridge is still reaching 55% of Llano's performance here, thanks to AMD's overall lackluster DirectCompute performance on their pre-7000 series GPUs. As a result Ivy Bridge versus Llano isn't nearly as lopsided as the paper specs tell us; Ivy Bridge won't be able to keep up in most situations, but in DirectCompute it isn't necessarily a goner.

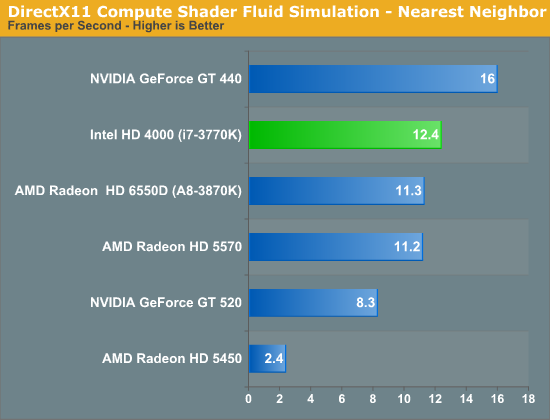

And to prove that point, we have our second compute test: the Fluid Simulation Sample in the DirectX 11 SDK. This program simulates the motion and interactions of a 16k-particle fluid using a compute shader, with a choice of several different algorithms. In this case we’re using an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.
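
To make the O(n^2) approach and the role of shared memory concrete, here is a minimal CPU-side sketch in Python. It is not the SDK sample's shader: the function names, the 2D particle layout, the `(h^2 - r^2)^3` weighting, and the tile size are all assumptions chosen for illustration. The tiled version does the same all-pairs work, but visits neighbors one tile at a time, which is roughly how a compute shader would stage particle data in shared memory so that every thread in a group can reuse it.

```python
import random


def density_naive(points, h):
    """O(n^2) reference: every particle visits every other particle."""
    h2 = h * h
    out = []
    for (xi, yi) in points:
        total = 0.0
        for (xj, yj) in points:
            r2 = (xi - xj) ** 2 + (yi - yj) ** 2
            if r2 < h2:
                # Illustrative smoothing weight (an assumption, not the SDK's exact kernel).
                total += (h2 - r2) ** 3
        out.append(total)
    return out


def density_tiled(points, h, tile_size=64):
    """Same O(n^2) work, but neighbors are visited one tile at a time.

    On a GPU, a thread group would copy each tile into shared memory once
    and all of its threads would read from that copy; the local list `tile`
    stands in for that cache here.
    """
    h2 = h * h
    n = len(points)
    out = [0.0] * n
    for group_start in range(0, n, tile_size):  # one "thread group"
        group_end = min(group_start + tile_size, n)
        for tile_start in range(0, n, tile_size):
            tile = points[tile_start:tile_start + tile_size]  # "shared memory" load
            for i in range(group_start, group_end):
                xi, yi = points[i]
                total = out[i]
                for (xj, yj) in tile:
                    r2 = (xi - xj) ** 2 + (yi - yj) ** 2
                    if r2 < h2:
                        total += (h2 - r2) ** 3
                out[i] = total
    return out


if __name__ == "__main__":
    rng = random.Random(0)
    pts = [(rng.random(), rng.random()) for _ in range(500)]
    print(density_tiled(pts, 0.1) == density_naive(pts, 0.1))
```

The arithmetic is identical in both versions; what changes is the memory access pattern, which is why a test like this ends up leaning so heavily on how fast the GPU can serve shared data.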

Thanks in large part to its new dedicated L3 graphics cache, Ivy Bridge does exceptionally well here. The framerate of this test is entirely arbitrary, but what isn't is the performance relative to other GPUs; Ivy Bridge is well within the territory of budget-level dGPUs such as the GT 430 and Radeon HD 5570, and for the first time is ahead of Llano, taking a lead just shy of 10%. The fluid simulation sample is a very special case (most compute shaders won't be nearly this heavily reliant on shared memory performance), but it's the perfect showcase for Ivy Bridge's ideal performance scenario. Ultimately this is just as much a story of AMD losing due to poor DirectCompute performance as it is Intel winning due to a speedy L3 cache, but it shows what is possible. The big question now is what OpenCL performance is going to be like, since AMD's OpenCL performance doesn't have the same kind of handicaps as their DirectCompute performance.

Synthetic Performance

Moving on, we'll take a few moments to look at synthetic performance. Synthetic performance is a poor tool to rank GPUs—what really matters is the games—but by breaking down workloads into discrete tasks it can sometimes tell us things that we don't see in games.

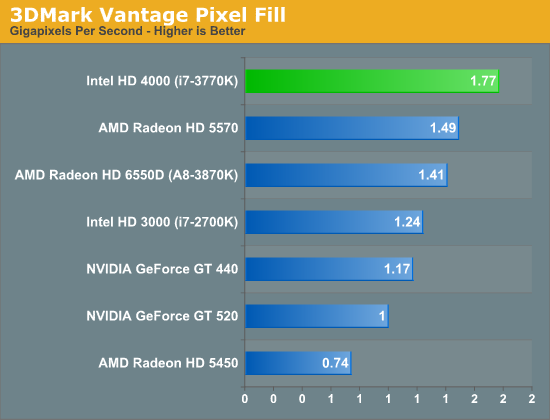

Our first synthetic test is 3DMark Vantage’s pixel fill test. Typically this test is memory bandwidth bound as the nature of the test has the ROPs pushing as many pixels as possible with as little overhead as possible, which in turn shifts the bottleneck to memory bandwidth so long as there's enough ROP throughput in the first place.

It's interesting to note here that as DDR3 clockspeeds have crept up over time, IVB now has as much memory bandwidth as most entry-to-mainstream level video cards, where 128-bit DDR3 is common. Or on a historical basis, at this point it has half as much bandwidth as powerhouse video cards of yesteryear such as the 256-bit GDDR3 based GeForce 8800GT.

Altogether, with 29.6GB/sec of memory bandwidth available to Ivy Bridge with our DDR3-1866 memory, Ivy Bridge ends up being able to push more pixels than Llano, more pixels than the entry-level dGPUs, and even more pixels than the budget-level dGPUs such as GT 440 and Radeon HD 5570, which have just as much dedicated memory bandwidth. Or put in numbers, Ivy Bridge is pushing 42% more pixels than Sandy Bridge and 25% more pixels than the otherwise more powerful Llano. And since pixel fillrates are so memory bandwidth bound, Intel's L3 cache is almost certainly once again playing a role here; however, it's not clear to what extent that's the case.
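
As a rough sanity check on the bandwidth comparison, theoretical peak bandwidth is simply the transfer rate multiplied by the bus width in bytes. The sketch below is illustrative only: the helper name is made up, the 128-bit figure assumes dual-channel DDR3 (two 64-bit channels), and the 1800 MT/s GDDR3 data rate for the GeForce 8800GT is an assumption not stated above. With nominal DDR3-1866 numbers the formula lands at roughly 29.9GB/sec, close to the figure quoted above, and about half of the 8800GT's result.

```python
def peak_bandwidth_gb_s(mega_transfers_per_sec, bus_width_bits):
    """Theoretical peak bandwidth in GB/s (10^9 bytes per second).

    peak = transfers per second * bytes moved per transfer
    """
    bytes_per_transfer = bus_width_bits / 8
    return mega_transfers_per_sec * 1e6 * bytes_per_transfer / 1e9


# Assumption: dual-channel DDR3-1866, i.e. 2 x 64-bit = 128-bit at 1866 MT/s.
ivy_bridge = peak_bandwidth_gb_s(1866, 128)

# Assumption: 256-bit GDDR3 at an effective 1800 MT/s for the GeForce 8800GT.
geforce_8800gt = peak_bandwidth_gb_s(1800, 256)

if __name__ == "__main__":
    print(f"Ivy Bridge (DDR3-1866, 128-bit): {ivy_bridge:.1f} GB/s")
    print(f"GeForce 8800GT (GDDR3, 256-bit): {geforce_8800gt:.1f} GB/s")
    print(f"Ratio: {ivy_bridge / geforce_8800gt:.2f}")
```

These are paper numbers; the bandwidth the GPU can actually use is lower, since on an integrated part the memory bus is shared with the CPU cores.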

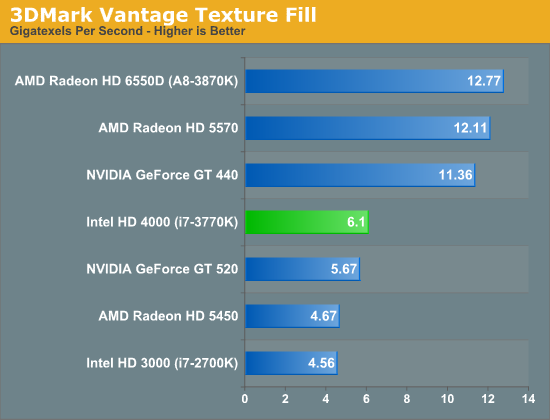

Moving on, our second synthetic test is 3DMark Vantage’s texture fill test, which provides a simple FP16 texture throughput test. FP16 textures are still fairly rare, but it's a good look at worst case scenario texturing performance.

After Ivy Bridge's strong pixel fillrate performance, its texture fillrate brings us back down to earth. At this point performance is once again much closer to entry-level GPUs, and also well behind Llano. Here we see that Intel's texture performance also increases exactly linearly with the increase in EUs from Sandy Bridge to Ivy Bridge, indicating that those texture units are being put to good use, but at the same time it means Ivy Bridge has a long way to go to catch Llano's texture performance, achieving only 47% of Llano's performance here. The good news for Intel here is that texture size (and thereby texel density) hasn't increased much over the past couple of years in most games; the bad news is that we're finally starting to see that change as dGPUs get more VRAM.

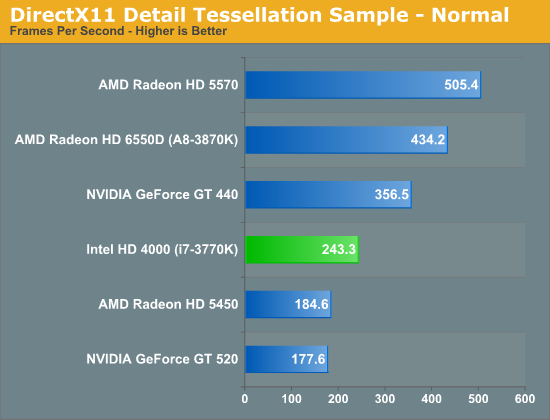

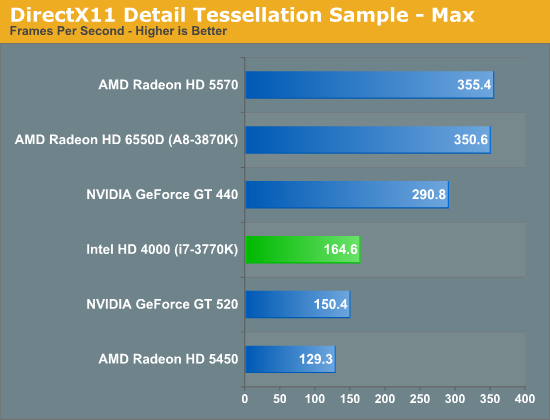

Our final synthetic test is the set of settings we use with Microsoft’s Detail Tessellation sample program out of the DX11 SDK. Since IVB is the first Intel iGPU with tessellation capabilities, it will be interesting to see how well IVB does here, as IVB is going to be the de facto baseline for DX11+ games in the future. Ideally we want to have enough tessellation performance here so that tessellation can be used on a global level, allowing developers to efficiently simulate their worlds with fewer polygons while still using many polygons on the final render.

The results here are actually pretty decent. Compared to what we've seen with shader and texture performance, where Ivy Bridge is largely tied at the hip with the GT 520, at lower tessellation factors Ivy Bridge manages to clearly overcome both the GT 520 and the Radeon HD 5450. Per unit of compute performance, Intel looks to have more tessellation performance than AMD or NVIDIA, which means Intel is setting a pretty good baseline for tessellation performance. Tessellation performance at high tessellation factors does dip however, with Ivy Bridge giving up much of its performance lead over the entry-level dGPUs, but still managing to stay ahead of both of its competitors.

173 Comments

hechacker1 - Monday, April 23, 2012 - link

VT-d is interesting if you run ESXi or a Linux-based hypervisor, as they allow you to use VT-d to directly assign hardware to the virtual machines. I think you can even share hardware with it.

In Linux, for example, you could host Windows and assign it a real GPU and get full performance from it.

A while ago I built a machine with that idea in mind, but the software bits weren't in place just yet.

I too wish for an overclockable VT-d part.

terragb - Monday, April 23, 2012 - link

Just to add to this, all the processors do support VT-x which is the potentially performance enhancing spec for virtualization.

JimmiG - Monday, April 23, 2012 - link

Really annoying how Intel decides seemingly at random which parts get VT-d and which don't.

Why do you get it with the $174 i5 3450, but not with the "one CPU to rule them all", everything-but-the-kitchen-sink, $313 i7 3770K?

It's also a stupid way to segment your product line, since 99% of the people buying systems with these CPUs won't even know what it does.

This means AMD also gets some of my money when I upgrade - I'll just build a cheap Bulldozer system for my virtualization needs. I can't really use my Phenom II X4 for that after upgrading - it uses too much power and it's dependent on DDR-2 RAM, which is hard to find and expensive.

dcollins - Monday, April 23, 2012 - link

VT-d is required to support Intel's Trusted Execution Platform, which is used by many OEMs to provide business management tools. That's why the low end CPUs have support and the enthusiast SKUs do not. VT-d provides no benefit to Desktop users right now because desktop virtualization packages do not support it.

I agree that it is frustrating having to sacrifice future-proofing for overclocking, but Intel's logic kind of makes sense. Remember, any features that can be disabled will increase yields which means lower prices (or higher margins).

JimmiG - Tuesday, April 24, 2012 - link

VirtualBox, which is one of the most popular desktop virtualization packages, does support VT-d. In fact it's required for 64-bit guests and guests with more than one CPU being virtualized.

Does VT-d really use so many transistors that disabling it increases yields? AMD keep their hardware virtualization features enabled even in their lowest-end CPUs (even those where entire cores have been disabled to increase yields)

dgingeri - Monday, April 23, 2012 - link

"I took the last Harry Potter Blu-ray, stripped it of its DRM and used Media Espresso to make it playable on an iPad 2 (1024 x 768 preset)."

I wouldn't admit that in print, if I were you. The DMCA goblins will come and get you.

p05esto - Monday, April 23, 2012 - link

They can say they're just kidding and used it as an example, because they would "never" actually do that. I think pirate cops would need more than talk to go to court. Imagine how bad this site would rip into them if they said anything, lol.

XJDHDR - Monday, April 23, 2012 - link

Why? No-one loses money from transcode benchmarks. Besides, piracy is the real problem. If it didn't exist, there would be no DRM to strip away.

dgingeri - Monday, April 23, 2012 - link

Sure, nobody loses any money, but the entertainment industry pushed DMCA through, and they will use it if they think they could get any profit out of it. It's one law, out of many, that isn't there to protect anyone. It's there so the MPAA and RIAA can screw people over.

copyrightforreal - Monday, April 23, 2012 - link

Don't pretend you know shit about copyright law when you don't.

Ripping a DVD you own is NOT illegal under the DMCA or Copyright act.

Wikipedia article that even you will be able to comprehend:

http://en.wikipedia.org/wiki/Ripping#Circumvention...