AMD Radeon HD 7970 Review: 28nm And Graphics Core Next, Together As One

by Ryan Smith on December 22, 2011 12:00 AM EST

Posted in: GPUs, AMD, Radeon, ATI, Radeon HD 7000

Managing Idle Power: Introducing ZeroCore Power

AMD has been on an idle power crusade for years now. Their willingness to be early adopters of new memory standards has allowed them to offer competitive products on narrower (and thereby cheaper) memory buses, but the tradeoff is that they get to experience the problems that come with the first revision of any new technology.

The most notable case where this has occurred would be the Radeon HD 4870 and 4890, the first cards to use GDDR5. The memory performance was fantastic; the idle power consumption was not. At the time AMD could not significantly downclock their GDDR5 products, resulting in idle power usage that approached 50W. Since then Cypress introduced a proper idle mode, allowing AMD to cut their idle power usage to 27W, while AMD has continued to further refine their idle power consumption.

With the arrival of Southern Islands comes AMD’s latest iteration of their idle power saving technologies. For 7970 AMD has gotten regular idle power usage down to 15W, roughly 5W lower than it was on the 6900 series. This is accomplished through a few extra tricks such as framebuffer compression, which reduce the amount of traffic that needs to move over the relatively power hungry GDDR5 memory bus.

However the big story with Southern Islands for idle power consumption isn’t regular idle, rather it’s “long idle.” Long idle is AMD’s term for any scenario where the GPU can go completely idle, that is, where it doesn’t need to do any work at all. For desktop computers this would primarily be when the display is put to sleep, as the GPU does not need to do any work when the display itself can’t show anything.

Currently video cards based on AMD’s GPUs can cut their long idle power consumption by a couple of watts by turning off any display transmitters and their clock sources, but the rest of the GPU needs to be minimally powered up. This is what AMD seeks to change.

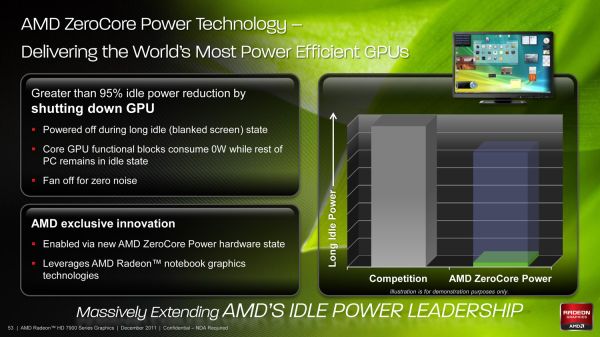

With Southern Islands AMD is introducing ZeroCore Power, their long idle power saving technology. By implementing power islands on their GPUs AMD can now outright shut off most of the functional units of a GPU when the GPU is going unused, leaving only the PCIe bus interface and a couple other components active. By doing this AMD is able to reduce their power consumption from 15W at idle to under 3W in long idle, a power level low enough that in a desktop the power consumption of the video card becomes trivial. So trivial in fact that with under 3W of heat generation AMD doesn’t even need to run the fan – ZeroCore Power shuts off the fan as it’s rendered an unnecessary device that’s consuming power.

Ultimately ZeroCore Power isn’t a brand new concept, but this is the first time we’ve seen something quite like this on the desktop. Even AMD will tell you the idea is borrowed from their mobile graphics technology, where they need to be able to power down the GPU completely for power savings when using graphics switching capabilities. But unlike mobile graphics switching AMD isn’t fully cutting off the GPU, rather they’re using power islands to leave the GPU turned on in a minimal power state. As a result the implementation details are very different even if the outcomes are similar. At the same time a technology like this isn’t solely developed for desktops so it remains to be seen how AMD can leverage it to further reduce power consumption on the eventual mobile Southern Islands GPUs.

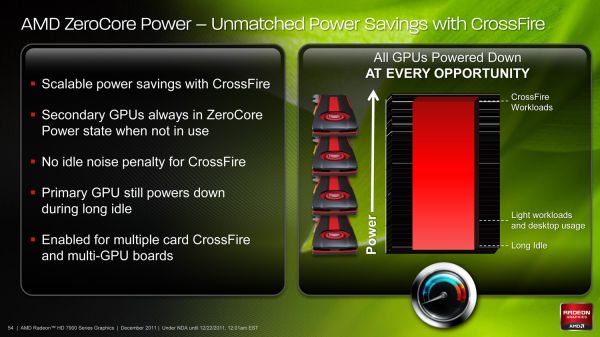

Of course as impressive as sub-3W long idle power consumption is on a device with 4.3B transistors, at the end of the day ZeroCore Power is only as cool as the ways it can be used. For gaming cards such as the 7970 AMD will be leveraging it not only as a way to reduce power consumption when driving a blanked display, but more importantly will be leveraging it to improve the power consumption of CrossFire. Currently AMD’s Ultra Low Power State (ULPS) can reduce the idle power usage of slave cards to a lower state than the master card, but the GPUs must still remain powered up. Just as with long idle, ZeroCore Power will change this.

Fundamentally there isn’t a significant difference between driving a blank display and being a slave card in CrossFire; in both situations the video card is doing nothing. So AMD will be taking ZeroCore Power to its logical conclusion by coupling it with CrossFire; ZeroCore Power will put CrossFire slave cards in the ZCP power state whenever they’re not in use. This not only means reducing the power consumption of the slave cards, but just as with long idle, turning off the fan too. As AMD correctly notes, this virtually eliminates the idle power penalty for CrossFire and completely eliminates the idle noise penalty. With ZCP, CrossFire is now no noisier and only ever so slightly more power hungry than a single card at idle.
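To make the idle arithmetic concrete, here is a minimal Python sketch of the behavior described above. It is an illustration only, not AMD's implementation: the `CardState` type and the `idle_states` and `total_power` helpers are hypothetical names, the 15W figure is the regular idle number quoted for the 7970, and "under 3W" is treated as a 3W upper bound.

```python
from dataclasses import dataclass

IDLE_W = 15.0     # regular idle figure quoted for the 7970
ZCP_MAX_W = 3.0   # "under 3W" in ZeroCore Power, used here as an upper bound


@dataclass
class CardState:
    role: str       # "master" or "slave"
    state: str      # "idle" or "zcp"
    power_w: float  # upper-bound estimate in watts
    fan_on: bool


def idle_states(num_cards: int, display_on: bool) -> list:
    """Return the assumed idle state of each card in a CrossFire setup."""
    if num_cards < 1:
        raise ValueError("need at least one card")
    cards = []
    for i in range(num_cards):
        role = "master" if i == 0 else "slave"
        # Slaves drop to ZCP whenever idle; the master only does so
        # once the display is blanked (long idle).
        in_zcp = role == "slave" or not display_on
        cards.append(CardState(
            role=role,
            state="zcp" if in_zcp else "idle",
            power_w=ZCP_MAX_W if in_zcp else IDLE_W,
            fan_on=not in_zcp,  # ZCP also stops the fan
        ))
    return cards


def total_power(cards) -> float:
    """Sum the upper-bound power estimates across all cards."""
    return sum(c.power_w for c in cards)
```

Under these assumptions a two-card setup with the display on comes out at no more than 18W against 15W for a single card, with only the master's fan spinning, which matches the "ever so slightly more power hungry" description.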

Furthermore the benefits of ZCP in CrossFire apply not only to multiple cards, but to multi-GPU cards too. When AMD launches their eventual multi-GPU Tahiti card the slave GPU can be put in a ZCP state, leaving only the master GPU and the PCIe bridge active. Couple that with ZCP on the master GPU when in long idle, and even a beastly multi-GPU card should be able to reduce its long idle power consumption to under 10W after accounting for the PCIe bridge.

Meanwhile as for load power consumption, not a great deal has changed from Cayman. AMD’s PowerTune throttling technology will be coming to the entire Southern Islands lineup, and it will be implemented just as it was in Cayman. This means it remains operationally the same by calculating the power draw of the card based on load, and then altering clockspeeds in order to keep the card below its PowerTune limit. For the 7970 the limit is the same as it was for the 6970: 250W, with the ability to raise or lower it by 20% in the Catalyst Control Center.
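As a rough sketch of the idea, the Python below models the adjustable limit and a simple clock response. The 250W base limit and the 20% adjustment range come from the text; everything else is an assumption made for illustration, including the function names, the proportional scaling rule, and the 300MHz clock floor. AMD's actual power estimation and clock selection logic is not described here and is certainly more involved.

```python
BASE_LIMIT_W = 250.0  # PowerTune limit quoted for the 7970
MAX_ADJUST = 0.20     # Catalyst Control Center allows +/-20%


def powertune_limit(adjust: float = 0.0) -> float:
    """Return the effective limit in watts for an adjustment in [-0.20, 0.20]."""
    if not -MAX_ADJUST <= adjust <= MAX_ADJUST:
        raise ValueError("adjustment must be within +/-20%")
    return BASE_LIMIT_W * (1.0 + adjust)


def throttle_clock(estimated_w: float, clock_mhz: float, limit_w: float,
                   min_clock_mhz: float = 300.0) -> float:
    """Pick a clock that keeps the estimated power at or below the limit.

    Assumes, purely for illustration, that power scales linearly with
    clockspeed; min_clock_mhz is a hypothetical floor.
    """
    if estimated_w <= limit_w:
        return clock_mhz  # under the limit: leave the clock alone
    scaled = clock_mhz * limit_w / estimated_w
    return max(min_clock_mhz, scaled)
```

With the slider at its extremes the limit ranges from 200W to 300W. In this toy model a load estimated at 275W against the default 250W limit would be clocked down by roughly 9%, while the same load with the limit raised by 20% would run unthrottled.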

On that note, at this time the only way to read the core clockspeed of the 7970 is through AMD’s drivers, which don’t reflect the current status of PowerTune. As a result we cannot currently tell when PowerTune has started throttling. If you recall our 6970 results we did find a single game that managed to hit PowerTune’s limit: Metro 2033. So we have a great deal of interest in seeing if this holds true for the 7970 or not. Looking at frame rates this may be the case, as we picked up 1.5fps on Metro after raising the PowerTune limit by 20%. But at 2.7% this is on the edge of being typical benchmark variability so we’d need to be able to see the core clockspeed to confirm it.
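The Metro numbers can be sanity-checked with a little arithmetic. The Python sketch below uses only the two figures from the text (a 1.5fps gain that amounts to 2.7%); the helper names and the noise threshold passed to `is_within_noise` are assumptions for illustration, since no specific run-to-run variance figure is given.

```python
def implied_baseline_fps(gain_fps: float, gain_fraction: float) -> float:
    """Back out the baseline frame rate from an absolute and relative gain."""
    if gain_fraction <= 0:
        raise ValueError("gain_fraction must be positive")
    return gain_fps / gain_fraction


def is_within_noise(gain_fraction: float, noise_fraction: float) -> bool:
    """True if a gain is no larger than the assumed run-to-run variability."""
    return abs(gain_fraction) <= noise_fraction


baseline = implied_baseline_fps(1.5, 0.027)
print(f"implied baseline: {baseline:.1f} fps")
```

A 1.5fps gain at 2.7% implies a baseline in the mid-50fps range. Whether that gain is meaningful depends entirely on the variability you assume: with a hypothetical 3% noise band it would not be distinguishable from noise, which is why reading the core clockspeed directly would be the more reliable test.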

292 Comments


Zingam - Thursday, December 22, 2011

And at the time when it is available in D3D. AMD's implementation won't be compatible... :D That's sounds familiar. So will have to wait for another generation to get the things right.

Ryan Smith - Thursday, December 22, 2011

As for your question about FP64, it's worth noting that of the FP64 rates AMD listed for GCN, "0" was not explicitly an option. It's quite possible that anything using GCN will have at a minimum 1/16th FP64.

Sind - Thursday, December 22, 2011

Excellent review thanks Ryan. Looking forward to see what the 7950 performance and pricing will end up. Also to see what nv has up their sleeves. Although I can't shake the feeling amd is holding back.

chizow - Thursday, December 22, 2011

Another great article, I really enjoyed all the state-of-the-industry commentary more than the actual benchmarks and performance numbers.

One thing I may have missed was any coverage at all of GCN. Usually you guys have all those block diagrams and arrows explaining the changes in architecture. I know you or Anand did a write-up on GCN awhile ago, but I may have missed the link to it in this article. Or maybe put a quick recap in there with a link to the full write-up.

But with GCN, I guess we can close the book on AMD's past Vec5/VLIW4 archs as compute failures? For years ATI/AMD and their supporters have insisted it was the better compute architecture, and now we're on the 3rd major arch change since unified shaders, while Nvidia has remained remarkably consistent with their simple SP approach. I think the most striking aspect of this consistency is that you can run any CUDA or GPU accelerated apps on GPUs as old as G80, while you even noted you can't even run some of the most popular compute apps on 7970 because of arch-specific customizations.

I also really enjoyed the ISV and driver/support commentary. It sounds like AMD is finally serious about "getting in the game" or whatever they're branding it nowadays, but I have seen them ramp up their efforts with their logo program. I think one important thing for them to focus on is to get into more *quality* games rather than just focusing on getting their logo program into more games. Still, as long as both Nvidia and AMD are working to further the compatibility of their cards without pushing too many vendor-specific features, I think that's a win overall for gamers.

A few other minor things:

1) I believe Nvidia will soon be countering MLAA with a driver-enabled version of their FXAA. While FXAA is available to both AMD and Nvidia if implemented in-game, providing it driver-side will be a pretty big win for Nvidia given how much better performance and quality it offers over AMD's MLAA.

2) When referring to active DP adapter, shouldn't it be DL-DVI? In your blurb it said SL-DVI. Its interesting they went this route with the outputs, but providing the active adapter was definitely a smart move. Also, is there any reason GPU mfgs don't just add additional TMDS transmitters to overcome the 4x limitation? Or is it just a cost issue?

3) The HDMI discussion is a bit fuzzy. HDMI 1.4b specs were just finalized, but haven't been released. Any idea whether or not SI or Kepler will support 1.4b? Biggest concern here is for 120Hz 1080p 3D support.

Again, thoroughly enjoyed reading the article, great job as usual!

Ryan Smith - Thursday, December 22, 2011

Thanks for the kind words.

Quick answers:

2) No, it's an active SL-DVI adapter. DL-DVI adapters exist, but are much more expensive and more cumbersome to use because they require an additional power source (usually USB).

As for why you don't see video cards that support more than 2 TMDS-type displays, it's both an engineering and a cost issue. On the engineering side each TMDS source (and thus each supported TMDS display) requires its own clock generator, whereas DisplayPort only requires 1 common clock generator. On the cost side those clock generators cost money to implement, but using TMDS also requires paying royalties to Silicon Image. The royalty is on the order of cents, but AMD and NVIDIA would still rather not pay it.

3) SI will support 1080P 120Hz frame packed S3D.

ericore - Thursday, December 22, 2011

Core Next: It appears AMD is playing catchup to Nvidia's Cuda, but to an extent that halves the potential performance metrics; I see no other reason why they could not have achieved at varying 25-50% improvement in FPS. That is going to cost them, not just for marginally better performance 5-25%, but they are price matching GTX 580 which means less sales though I suppose people who buy 500$ + GPUs buy them no matter what. Though in this case, they may wait to see what Nvidia has to offer.

Other New AMD GPUs: Will be releasing in February and April are based on the current architecture, but with two critical differences; smaller node + low power based silicon VS the norm performance based silicon. We will see very similar performance metrics, but the table completely flips around: we will see them, cheaper, much more power efficient and therefore very quiet GPUs; I am excited though I would hate to buy this and see Nvidia deliver where AMD failed.

Thanks Anand, always a pleasure reading your articles.

Angrybird - Thursday, December 22, 2011

any hint on 7950? this card should go head to head with gtx580 when it release. good job for AMD, great review for Ryan!

ericore - Thursday, December 22, 2011

I should add with over 4 billion transistors, they've added more than 35% more transistors but only squeeze 5-25% improvement; unacceptable. That is a complete fail in that context relative to advancement in gaming. Too much catchup with Nvidia.

Finally - Thursday, December 22, 2011

...that saying? It goes like this:

If you don't show up for a race, you lose by default.

Your favourite company lost, so their fanboys may become green of envydia :)

Besides that - I'd never shell out more than 150€ for a petty GPU, so neither company's product would have appealed to me...

piroroadkill - Thursday, December 22, 2011

Wait, catchup? In my eyes, they were already winning. 6950 with dual BIOS, unlock it to 6970.. unbelievable value.. profit??

Already has a larger framebuffer than the GTX580, so...