The Bulldozer Aftermath: Delving Even Deeper

by Johan De Gelas on May 30, 2012 1:15 AM EST

Zooming in on SPEC CPU2006: the Good

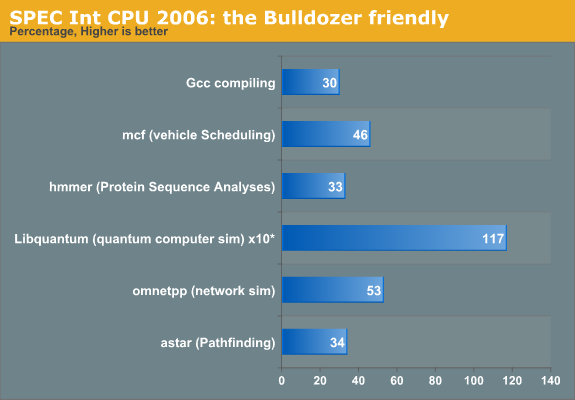

We filtered out the benchmarks that showed at least a 30% improvement over Magny-Cours (based on the K10 core). Remember that the Bulldozer architecture was designed to deliver 33% more cores in the same power envelope while keeping IPC at roughly 95% of K10's. The rest of the performance gain was supposed to come from higher clock speeds. Those clock speed increases did not materialize in the real world, and we kept the clock speed the same anyway to focus on the architecture. So while a 30-35% performance increase is good, anything over 35% indicates that the Bulldozer architecture handles that particular type of software better than Magny-Cours.
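As a back-of-the-envelope check on those thresholds, the sketch below multiplies the design targets quoted above (33% more cores, roughly 95% of K10's IPC) at equal clock speed. It assumes throughput scales perfectly with core count, which real workloads rarely do; the function names are hypothetical, and the 30%/35% cut-offs in `classify_gain` simply restate the paragraph's reasoning rather than any AMD or SPEC methodology.

```python
def expected_speedup(core_ratio: float, ipc_ratio: float, clock_ratio: float = 1.0) -> float:
    """Throughput of the new chip relative to the old one.

    Simplifying assumption: throughput scales linearly with core count,
    per-core IPC and clock speed.
    """
    return core_ratio * ipc_ratio * clock_ratio


def classify_gain(speedup: float) -> str:
    """Label a measured speedup (new score / old score) using the article's cut-offs."""
    gain = speedup - 1.0
    if gain > 0.35:
        return "architecture handles this workload better"
    if gain >= 0.30:
        return "good"
    return "below 30%"


# 33% more cores (ratio 4/3), IPC at ~95% of K10, same clock speed.
target = expected_speedup(core_ratio=4 / 3, ipc_ratio=0.95)
print(f"expected gain at equal clocks: {target - 1:.1%}")
```

At equal clocks the design targets alone predict a gain of only about 27%, which is consistent with treating results above 35% as a sign that the architecture itself suits the workload better.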

The Libquantum score is the most spectacular. Bulldozer performs more than twice as fast as Magny-Cours, and its score of 2750 is not that far from the almighty Xeon E5-2660 at 2.2GHz (3310): Bulldozer is only 17% slower here.
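The "17% slower" figure is measured relative to the Xeon's score; stated the other way round, the Xeon is about 20% faster. A quick check using the two scores quoted above (the helper names are hypothetical):

```python
def percent_slower(score: float, reference: float) -> float:
    """How much lower `score` is than `reference`, as a fraction of `reference`."""
    return 1.0 - score / reference


def percent_faster(score: float, reference: float) -> float:
    """How much higher `score` is than `reference`, as a fraction of `reference`."""
    return score / reference - 1.0


bulldozer, xeon_e5_2660 = 2750, 3310  # libquantum scores from the text
print(f"Bulldozer is {percent_slower(bulldozer, xeon_e5_2660):.0%} slower")  # 17%
print(f"Xeon is {percent_faster(xeon_e5_2660, bulldozer):.0%} faster")       # 20%
```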

At first sight, nothing should make Libquantum run very fast on Bulldozer. Libquantum contains a high proportion of branches (27%), and we have seen before that although Bulldozer has a somewhat improved branch predictor, its deeper pipeline and higher branch misprediction penalty can cause a lot of trouble. In fact, Perlbench (23%), Sjeng Chess (21%), and Gobmk (Go AI, 21%) are branchy software and are among the worst-performing tests on Bulldozer. Luckily, Libquantum's branches are much easier to predict: libquantum is among the programs with the lowest branch misprediction rates (fewer than six per 1000 instructions).
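The two figures quoted for libquantum, 27% branches and fewer than six mispredictions per 1000 instructions, can be combined into a per-branch prediction accuracy. A minimal sketch of that arithmetic (the function name is hypothetical):

```python
def branch_prediction_accuracy(branch_fraction: float, mpki: float) -> float:
    """Fraction of branches predicted correctly.

    branch_fraction: share of all instructions that are branches (0.27 = 27%).
    mpki: branch mispredictions per 1000 instructions.
    """
    branches_per_kilo_instr = branch_fraction * 1000
    return 1.0 - mpki / branches_per_kilo_instr


# libquantum: 27% branches, fewer than 6 mispredictions per 1000 instructions,
# so this result is a lower bound on its prediction accuracy.
print(f"{branch_prediction_accuracy(0.27, 6):.1%}")  # 97.8%
```

In other words, at least 97.8% of libquantum's roughly 270 branches per 1000 instructions are predicted correctly, so its high branch count rarely triggers Bulldozer's higher misprediction penalty.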

We all know that Bulldozer deals with loads and stores much better than Magny-Cours. However, libquantum has the lowest (!) share of loads and stores (19%: 14% loads, 5% stores), so Bulldozer's improved memory-level parallelism is not the answer. The table below gives an idea of the instruction mix of SPEC CPU2006int.

| SPEC Int 2006 Application | IPC* | Branches (%) | Stores (%) | Loads (%) | Total Loads/Stores (%) |
|---|---|---|---|---|---|
| perlbench | 1.67 | 23 | 12 | 24 | 36 |
| Bzip compression | 1.43 | 15 | 9 | 26 | 35 |
| Gcc | 0.83 | 22 | 13 | 26 | 39 |
| mcf | 0.28 | 19 | 9 | 31 | 40 |
| Go AI | 1.00 | 21 | 14 | 28 | 42 |
| hmmer | 1.67 | 8 | 16 | 41 | 57 |
| Chess | 1.25 | 21 | 8 | 21 | 29 |
| libquantum | 0.43 | 27 | 5 | 14 | 19 |
| h264 encoding | 2.00 | 8 | 12 | 35 | 47 |
| omnetpp | 0.38 | 21 | 18 | 34 | 52 |
| astar | 0.56 | 17 | 5 | 27 | 32 |
| XML processing | 0.66 | 26 | 9 | 32 | 41 |

* IPC as measured on Core 2 Duo.
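The load/store totals are simply the sum of the store and load columns. The sketch below recomputes them from the table's data and ranks the benchmarks, confirming that libquantum has the smallest share of memory operations (the names `MIX` and `load_store_share` are hypothetical):

```python
# (branches %, stores %, loads %) per benchmark, copied from the table above.
MIX = {
    "perlbench": (23, 12, 24),
    "Bzip compression": (15, 9, 26),
    "Gcc": (22, 13, 26),
    "mcf": (19, 9, 31),
    "Go AI": (21, 14, 28),
    "hmmer": (8, 16, 41),
    "Chess": (21, 8, 21),
    "libquantum": (27, 5, 14),
    "h264 encoding": (8, 12, 35),
    "omnetpp": (21, 18, 34),
    "astar": (17, 5, 27),
    "XML processing": (26, 9, 32),
}


def load_store_share(name: str) -> int:
    """Percentage of instructions that are loads or stores."""
    _branches, stores, loads = MIX[name]
    return stores + loads


# Rank from least to most memory-operation heavy.
ranked = sorted(MIX, key=load_store_share)
for name in ranked:
    print(f"{name:<18} {load_store_share(name):>3}%")
```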

Libquantum has a relatively high number of cache misses on most CPUs, as it works with a 32MB data set, so it benefits from a larger cache. The 8MB L3 (vs. 6MB) might have boosted performance a bit, but the main reason is Bulldozer's vastly improved prefetching. According to researchers from the University of Texas at Austin and Microsoft, the prefetch requests in libquantum are very accurate. If you check AMD's own publications, you'll notice that AMD made two major improvements to the single-threaded performance of the Bulldozer architecture compared to its predecessors: an improved Turbo Core and vastly improved prefetching.
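Why would prefetches be so accurate for a program like libquantum? The article does not describe Bulldozer's prefetcher, so the sketch below uses a generic textbook per-PC stride prefetcher instead; it is not AMD's design, and the class, its parameters and the test streams are hypothetical. It prefetches the next cache line once it has seen the same address stride twice in a row, and it measures accuracy as useful prefetches divided by prefetches issued. A regular sweep through a large array (a simplified stand-in for a streaming workload) yields near-perfect accuracy, while random addresses give the prefetcher nothing to learn.

```python
import random
from dataclasses import dataclass, field


@dataclass
class StridePrefetcher:
    """Generic per-PC stride prefetcher (illustrative; not Bulldozer's design)."""
    line_size: int = 64
    table: dict = field(default_factory=dict)   # pc -> (last_addr, stride, confident)
    pending: set = field(default_factory=set)   # prefetched lines not yet used
    issued: int = 0
    useful: int = 0

    def access(self, pc: int, addr: int) -> None:
        line = addr // self.line_size
        # A demand access that hits a prefetched line counts as a useful prefetch.
        if line in self.pending:
            self.pending.remove(line)
            self.useful += 1
        last, stride, confident = self.table.get(pc, (None, 0, False))
        if last is not None:
            new_stride = addr - last
            # Trust the stride only if it repeats (and is non-zero).
            confident = new_stride == stride and new_stride != 0
            stride = new_stride
        if confident:
            target = (addr + stride) // self.line_size
            if target != line and target not in self.pending:
                self.pending.add(target)
                self.issued += 1
        self.table[pc] = (addr, stride, confident)

    def accuracy(self) -> float:
        return self.useful / self.issued if self.issued else 0.0


def run(addresses, pc=0x400):
    """Feed one instruction's address stream through a fresh prefetcher."""
    pf = StridePrefetcher()
    for addr in addresses:
        pf.access(pc, addr)
    return pf.accuracy()


DATA_SET = 32 * 2**20  # 32MB, the libquantum data-set size quoted above
sequential = [i * 64 for i in range(1000)]
rng = random.Random(42)
scattered = [rng.randrange(0, DATA_SET, 64) for _ in range(1000)]

print(f"sequential sweep: {run(sequential):.1%}")
print(f"random accesses:  {run(scattered):.1%}")
```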

Next, let's look at the excellent mcf result. mcf is by far the most memory-intensive SPEC CPU Int benchmark. It misses the L1 data cache about five times as often as the other benchmarks do on average: its hit rate is lower than 70%! mcf also misses the last-level cache up to eight times as often as the other benchmarks. Clearly mcf is a prime candidate to benefit from Bulldozer's vastly improved load/store units.
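The textbook average memory access time (AMAT) formula shows why a sub-70% L1 hit rate is so costly: every miss adds the average latency of the levels below. In this sketch the cache and DRAM latencies and the L2 miss rate are hypothetical round numbers, not measurements of Bulldozer or Magny-Cours; only the roughly 30% L1 miss rate for mcf, and a "typical" rate five times lower, follow from the text.

```python
def amat(levels, memory_latency):
    """Average memory access time in cycles.

    levels: list of (hit_latency_cycles, miss_rate), ordered from L1 outward.
    memory_latency: cycles to reach DRAM after the last cache level misses.
    """
    time = memory_latency
    # Start from DRAM and work back toward L1: each level costs its hit
    # latency plus, on a miss, the average time of everything below it.
    for hit_latency, miss_rate in reversed(levels):
        time = hit_latency + miss_rate * time
    return time


# Hypothetical: L1 4 cycles, L2 20 cycles with a 50% miss rate, DRAM 200 cycles.
typical = amat([(4, 0.06), (20, 0.5)], 200)   # five times fewer L1 misses than mcf
mcf_like = amat([(4, 0.30), (20, 0.5)], 200)  # L1 hit rate below 70%
print(f"typical: {typical:.1f} cycles, mcf-like: {mcf_like:.1f} cycles")
```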

Omnetpp is not that extreme, but its instruction mix is 52% loads and stores, and its L2 and last-level cache miss rates are twice as high as those of the rest of the pack. In contrast to mcf, omnetpp has far fewer branch mispredictions, despite a similarly high percentage of branches (about 20%). To be more precise: it has only about one third as many branch mispredictions! So while omnetpp relies somewhat less on the memory subsystem, and therefore benefits less from Bulldozer's improved load/store units, it also suffers much less from Bulldozer's higher branch misprediction penalty. This most likely explains why Bulldozer makes a slightly larger step forward over the previous AMD architecture in omnetpp than it does in mcf.
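The trade-off in that argument can be made concrete with the usual estimate of misprediction cost: stall cycles per 1000 instructions ≈ mispredictions per 1000 instructions × penalty in cycles. The penalties and mcf's misprediction rate below are hypothetical; the only relationship taken from the text is that omnetpp has roughly one third as many mispredictions as mcf. Whatever the absolute numbers, the extra cost of a longer penalty scales with the misprediction count, so omnetpp pays about a third of what mcf pays.

```python
def mispredict_stall_cycles(mpki: float, penalty_cycles: float) -> float:
    """Cycles lost per 1000 instructions to branch mispredictions."""
    return mpki * penalty_cycles


def extra_cost_of_longer_penalty(mpki: float, old_penalty: float, new_penalty: float) -> float:
    """Additional stall cycles per 1000 instructions when the penalty grows."""
    return mispredict_stall_cycles(mpki, new_penalty) - mispredict_stall_cycles(mpki, old_penalty)


# Hypothetical inputs: a shorter vs a longer misprediction penalty, and mcf's MPKI.
OLD_PENALTY, NEW_PENALTY = 12, 20
mcf_mpki = 9.0
omnetpp_mpki = mcf_mpki / 3  # "about one third as many" mispredictions

mcf_extra = extra_cost_of_longer_penalty(mcf_mpki, OLD_PENALTY, NEW_PENALTY)
omnetpp_extra = extra_cost_of_longer_penalty(omnetpp_mpki, OLD_PENALTY, NEW_PENALTY)
print(f"mcf: +{mcf_extra:.0f} cycles/1000 instr, omnetpp: +{omnetpp_extra:.0f}")
```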

84 Comments


Spunjji - Wednesday, June 6, 2012

Agreed. That will be nice!

haukionkannel - Wednesday, May 30, 2012

Very nice article! Can we get a more thorough explanation of the µop cache? It seems to be an important part of Sandy Bridge, and you predict that it would help Bulldozer... How complex is it to do, and how heavily has it been licensed?

JohanAnandtech - Thursday, May 31, 2012

Don't think there is a license involved. AMD has their own "macro ops", so they can do a macro-op cache. Unfortunately I cannot answer your question on how easy it is to do off the top of my head; I would have to do some research first.

name99 - Thursday, May 31, 2012

Oh for fsck's sake. The stupid spam filter won't let me post a URL.

Do a google search for

sandy bridge Real World Technologies

and look at the main article that comes up.

SocketF - Friday, June 1, 2012

It is already planned: AMD has a patent for something like that; google for "Redirect Recovery Cache". Dresdenboy already found it back in 2009: http://citavia.blog.de/2009/10/02/return-of-the-tr...

The BIG Question is:

Why did AMD not implement it yet?

My guess is that they were already very busy with the whole CMT approach. Maybe Streamroller will bring it, there are some credible rumors in that direction.

yuri69 - Wednesday, May 30, 2012

Howdy, first of all thanks for the effort to investigate the shortcomings of this microarchitecture :)

Quoting M. Butler (BD's chief architect): 'The pipeline within our latest "Bulldozer" microarchitecture is approximately 25 percent deeper than that of the previous generation architectures. ' This gives us 12 stages on K8/K10 => 12 * 1.25 = 15.

Btw, all the major architectural improvements and features for the upcoming BD successor line were set in stone a long time ago. Remember, it takes 4-5 years for a general purpose CPU to go from the initial draft to mass availability. The stage when you can still move and bend things seems to be around half of this period.

BenchPress - Wednesday, May 30, 2012

"This means that Bulldozer should be better at extracting ILP (Instruction Level Parallelism) out of code that has low IPC (Instructions Per Clock)."

This should be reversed. ILP is inherent to the code, and it's the hardware's job to extract it and achieve a high IPC.

Arnulf - Wednesday, May 30, 2012

Ugh, so much crap in a single article... this should never have been posted on AT.

You weren't promised anything. You came across a website put up by some "fanboy" dumbass and you're actually using it as a reference. Why not quote some actual references (such as transcripts of the conference where T. Seifert clearly stated that gains are expected to be in line with the core count increase, i.e. ~33%) instead of rehashing this Fruehe nonsense?

erikvanvelzen - Wednesday, May 30, 2012

Yes, AMD totally set out to make a completely new architecture with a massive increase in transistors per core but zero gains in IPC.

Don't fool yourself.

Homeles - Wednesday, May 30, 2012

It's a more intelligent analysis than your sorry ass could ever produce. Getting hung up on one quote... really?