The Bulldozer Review: AMD FX-8150 Tested

by Anand Lal Shimpi on October 12, 2011 1:27 AM EST

The Pursuit of Clock Speed

Thus far I have pointed out that a number of resources in Bulldozer have gone down in number compared to their abundance in AMD's Phenom II architecture. Many of these tradeoffs were made in order to keep die size in check while adding new features (e.g. wider front end, larger queues/data structures, new instruction support). Everywhere from the Bulldozer front-end through the execution clusters, AMD's opportunity to increase performance depends on both efficiency and clock speed. Bulldozer has to make better use of its resources than Phenom II as well as run at higher frequencies to outperform its predecessor. As a result, a major target for Bulldozer was to be able to scale to higher clock speeds.

AMD's architects called this pursuit a low gate count per pipeline stage design. By reducing the number of gates per pipeline stage, you reduce the time spent in each stage and can increase the overall frequency of the processor. If this sounds familiar, it's because Intel used similar logic in the creation of the Pentium 4.

Where Bulldozer differs, AMD insists, is that the design didn't aggressively pursue frequency like the P4, but rather aggressively pursued gate count reduction per stage. According to AMD, the former results in power problems while the latter is more manageable.
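To make the gate-count argument concrete, here is a rough sketch of how fewer gates per stage translates into a shorter cycle and a higher potential clock. The per-gate delay and latch overhead are purely illustrative assumptions, not figures from AMD or Intel, and the function name is hypothetical.

```python
# Toy model: cycle time = (gates per stage * delay per gate) + fixed latch overhead.
# gate_delay_ps and latch_overhead_ps are made-up illustrative values,
# not measurements of any real AMD or Intel process.

def max_frequency_ghz(gates_per_stage: int,
                      gate_delay_ps: float = 15.0,
                      latch_overhead_ps: float = 45.0) -> float:
    """Return the highest clock (GHz) a pipeline stage could sustain in this model."""
    cycle_time_ps = gates_per_stage * gate_delay_ps + latch_overhead_ps
    return 1000.0 / cycle_time_ps  # a 1000 ps cycle corresponds to 1 GHz


if __name__ == "__main__":
    for gates in (24, 20, 16, 12):
        print(f"{gates} gates/stage -> {max_frequency_ghz(gates):.2f} GHz")
```

Note that the fixed latch overhead does not shrink with the stage, so in this model each further reduction in gate count buys proportionally less frequency.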

AMD's target for Bulldozer was a 30% higher frequency than the previous generation architecture. Unfortunately that's a fairly vague statement and I couldn't get AMD to commit to anything more specific, but if we look at the top-end Phenom II X6 at 3.3GHz, a 30% increase in frequency would put Bulldozer at roughly 4.3GHz.

Unfortunately 4.3GHz isn't what the top-end AMD FX CPU ships at. The best we'll get at launch is 3.6GHz, a meager 9% increase over the outgoing architecture. Turbo Core does get AMD close to those initial frequency targets; however, the turbo frequencies are typically seen only for very short periods of time.
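The arithmetic behind those two figures is simple enough to check directly. The sketch below uses only the clock speeds quoted above; the function names are hypothetical.

```python
# Frequency target vs. shipping frequency, using the figures quoted in the text.

PHENOM_II_X6_GHZ = 3.3   # top-end Phenom II X6
TARGET_UPLIFT = 0.30     # AMD's stated 30% frequency target
FX_8150_BASE_GHZ = 3.6   # FX-8150 base clock at launch


def target_frequency_ghz(baseline_ghz: float, uplift: float) -> float:
    """Clock speed implied by a fractional uplift over a baseline."""
    return baseline_ghz * (1.0 + uplift)


def uplift_percent(baseline_ghz: float, new_ghz: float) -> float:
    """Percentage frequency increase of new_ghz over baseline_ghz."""
    return (new_ghz / baseline_ghz - 1.0) * 100.0


if __name__ == "__main__":
    target = target_frequency_ghz(PHENOM_II_X6_GHZ, TARGET_UPLIFT)
    actual = uplift_percent(PHENOM_II_X6_GHZ, FX_8150_BASE_GHZ)
    print(f"Implied target: {target:.2f} GHz")
    print(f"Actual base-clock uplift: {actual:.1f}%")
```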

As you may remember from the Pentium 4 days, a significantly deeper pipeline can bring with it significant penalties. We have two prior examples of architectures that increased pipeline length over their predecessors: Willamette and Prescott.

Willamette doubled the pipeline length of the P6 and was supposed to make up for it with a corresponding increase in clock frequency. If you do less per clock cycle, you need to throw more clock cycles at the problem to have a neutral impact on performance. Although Willamette ran at higher clock speeds than the outgoing P6 architecture, the increase in frequency was gated by process technology. It wasn't until Northwood arrived that Intel could hit the clock speeds required to truly put distance between its newest and older architectures.

Prescott lengthened the pipeline once more, this time quite significantly. Much to our surprise however, thanks to a lot of clever work on the architecture side Intel was able to keep average instructions executed per clock constant while increasing the length of the pipe. This enabled Prescott to hit higher frequencies and deliver more performance at the same time, without starting at an inherent disadvantage. Where Prescott did fall short however was in the power consumption department. Running at extremely high frequencies required very high voltages and as a result, power consumption skyrocketed.

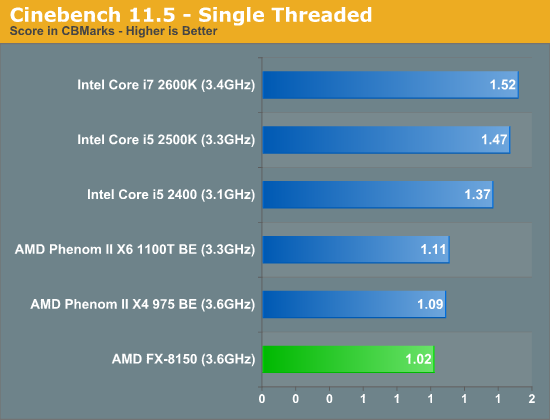

AMD's goal with Bulldozer was to have IPC remain constant compared to its predecessor, while increasing frequency, similar to Prescott. If IPC can remain constant, any frequency increases will translate into performance advantages. AMD attempted to do this through a wider front end, larger data structures within the chip and a wider execution path through each core. In many senses it succeeded, however single threaded performance still took a hit compared to Phenom II:

At the same clock speed, Phenom II is almost 7% faster per core than Bulldozer according to our Cinebench results. This takes into account all of the aforementioned IPC improvements. Despite AMD's efforts, IPC went down.

A slight reduction in IPC however is easily made up for by an increase in operating frequency. Unfortunately, it doesn't appear that AMD was able to hit the clock targets it needed for Bulldozer this time around.
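To put rough numbers on that tradeoff, the sketch below treats single-threaded performance as IPC multiplied by frequency. It assumes performance scales linearly with clock speed and that the single Cinebench result (rounded to 7%) is representative, both of which are simplifications; the function names are hypothetical.

```python
# Simple model: single-threaded performance ~ IPC x frequency.
# Assumes linear scaling with clock speed and one representative IPC figure.

PHENOM_II_GHZ = 3.3
PHENOM_II_IPC_ADVANTAGE = 1.07  # "almost 7% faster per core" at equal clocks


def bulldozer_vs_phenom(bulldozer_ghz: float,
                        phenom_ghz: float = PHENOM_II_GHZ,
                        ipc_advantage: float = PHENOM_II_IPC_ADVANTAGE) -> float:
    """Bulldozer per-core performance relative to Phenom II (1.0 = parity)."""
    return (bulldozer_ghz / phenom_ghz) / ipc_advantage


def break_even_ghz(phenom_ghz: float = PHENOM_II_GHZ,
                   ipc_advantage: float = PHENOM_II_IPC_ADVANTAGE) -> float:
    """Clock Bulldozer needs just to match Phenom II per core in this model."""
    return phenom_ghz * ipc_advantage


if __name__ == "__main__":
    print(f"Break-even clock: {break_even_ghz():.2f} GHz")
    for ghz in (3.6, 4.3):
        print(f"At {ghz} GHz: {bulldozer_vs_phenom(ghz):.3f}x Phenom II per core")
```

Under these assumptions the break-even clock is about 3.53GHz, so a 3.6GHz Bulldozer core comes out only around 2% ahead of a 3.3GHz Phenom II core, while the 4.3GHz target would have been worth a bit over 20%.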

We've recently reported on GlobalFoundries' issues with 32nm yields. I can't help but wonder if the same type of issues that are impacting Llano today are also holding Bulldozer back.

430 Comments

psiboy - Monday, February 6, 2012 - link

What kind of retarded person would benchmark at 1024 x 768 on an enthusiast site where every one owns at least 1 1920 x 1080 monitor as they are 1. Dirt cheap and 2. The single biggest selling resolution for quite some time now... Real world across the board benches at 1920 x 1080 please!

mumbles - Sunday, February 12, 2012 - link

I am not trying to discount the reviewer, the performance of Sandy Bridge, or games as a test of general application performance. I have no connection to company mentioned really anywhere on this site. I am just a software engineer with a degree in computer science who wants to let the world know why these metrics are not a good way to measure relative performance of different architectures.

The world has changed drastically in the hardware world and the software world has no chance to keep up with it these days. Developing software implementations that utilize multiprocessors efficiently is extremely expensive and usually is not prioritized very well these days. Business requirements are the primary driver in even the gaming industry and "performs well enough on high end equipment(or in the business application world, on whatever equipment is available)" is almost always as good as a software engineer will be allowed time for on any task.

In performance minded sectors like gaming development and scientific computing, this results in implementations that are specific to hardware architectures that come from whatever company decides to sponsor the project. nVidia and Intel tend to be the ones that engage in these activities most of the time. Testing an application on a platform it was designed for will always yield better results than testing it on a new platform that nobody has had access to even develop software on. This results in a biased performance metric anytime a game is used as a benchmark.

In business applications, the concurrency is abstracted out of the engineer's view. We use messaging frameworks to process many small requests without having to manage concurrency at all. This is partly due to the business requirements changing so fast that optimizing anything results in it being replaced by something else instead. The underlying frameworks are typically optimized for abstraction instead of performance and are not intended to make use of any given hardware architecture. Obviously almost all of these systems use Java to achieve this, which is great because JIT takes care of optimizing things in real time for the hardware it is running on and the operations the software uses.

As games are developed for this architecture it will probably see far better benchmark results than the i series in those games which will actually be optimized for it.

A better approach to testing these architectures would be to develop tests that actually utilize the strengths of the new design rather than see how software optimized for some other architecture will perform. This is probably way more than an e-mag can afford to do, but I feel an injustice is being done here based on reading other people's comments that seem to put stock in this review as indication of actual performance of this architecture in the future, which really none of these tests indicate.

I bet this architecture actually does amazing things when running Java applications. Business application servers and gaming alike. Java makes heavy use of integer processing and concurrency, and this processor seems highly geared towards both.

And I just have to add, CINEBENCH is probably almost 100% floating point operations. This is probably why the Bulldozer does not perform any better than the Phenom II x4.

Also, AMD continues to impress on the value measurement. Check out the PassMarks per dollar on this bad boy:

http://www.cpubenchmark.net/cpu.php?cpu=AMD+FX-815...

djangry - Sunday, February 19, 2012 - link

Beware !!!! this chip is junk.

I love Amd with all my heart and soul.

This fx chip is a black screen machine.

It breaks my heart to write this.

I am sending it back and trying to snag the last x6 phenom 2 's

I can find.

The fact that this chip is a dud is too well hidden.

When I called newegg they told me your the second one today with

horror stories about this chip.

msi would not come clean ...this chip is a turkey....

yet they were nice.

I will waste no more time with this nonsense.

my 754's work better.

We need honesty about the failure of this chip and the fact windows pulled the hot fix.

tlb bug part two.

Even linux users say after grub goes in Black screens.

Why isn't the industry coming clean on this issue.

Amd's 939 kicked Intel butt for 3 years- till they got it together,we need Amd ,but I do not like hidden issues and lack of disclosure.

Buyer beware!

AMDiamond - Monday, March 5, 2012 - link

Guys you are already upset because you spent your lunch money on Intel and even with higher this and that boards and memory AMD (even with half as much memory onboard [32GB] & Intel has [64GB] ) Intel is misquoting thier performance again...no matter what you say AMD= Dodge as to Intel=Cheverolet ..and when it gets down to AMD on the game versus Intel ...Intel has another hardcore asswhipping behind and ahead... its the same thing as a Dx4 processor(versus the pentium) even though Pentium had 1 comprehesion level higher ..when running the same programs DooM for example Pentium couldn't run DooM anywhere near as good as a simple DX4 amd..same stays true ...this Bulldozer has already broken unmatched records...AMD only lacks in 1 area..when you install windows the intel drivers already match at least 80 percent performance of Intel ...where AMD needs a specific narrow driver to run...once that driver is matched ..AMD =General Lee versus (Smokey & the) Bandits POS =Intel's comaro and its true ashamed that Intel even with 2x as much ddr3 memory ..cant even pickup the torch when AMD is smoking a Jet on the highway to hell for Intel -Hahahamauhahaha...sorry as intel qx9650 ahahahaahahahahahahahhahahah

AMDiamond - Monday, March 5, 2012 - link

watch AMD take Diablo 3 (1 expansion by the next/it will be so ) Intel always lags hard on gaming compared to a weaker AMD class...point proven ...everest has alot of false benchmarks for Intel example NWN2 Phenom x3 8400 (triple core hasa bench 10880) yet a Intel Core 2 Duo e7500 has a bench of 12391 thats a 2.9ghtz cpu versus a 2.1ghtz CPU ..ok the kicker is intel is a dell amd is an aspire..DDR2 memory on the AMD and ddr3 memory on the intel ..all the intel bus features say higher (like they always do) but try running the same dammned video board on both systems then try running 132 NWN2 maps each medium size...no way the intel can do it ..the AMD can run the game editor and the maps at once..Intel is selling you a number AMD is selling you true frames per second..but your going to say oh but my Intel is a better core and this and that..ok now lets compare the price of the 2 systems...Intel was $2,500 the AMD was $400 ..why do you think that phenom just stomps the ass off that intel?(always has always will)

zkeng - Wednesday, May 9, 2012 - link

I work as a building architect and use this CPU on my Linux workstation, in a Fractal Design define mini micro atx case, with 8GB ram and AMD radeon hd 6700 GPU.

I usually have several applications running at the same time. Typically BricsCAD, a file manager, a web browser with a few tabs, Gimp image editor, music player, our business system and sometimes Virtualbox as well with a virtual machine.

I do a lot of 3D projects and use Thea Render for photo rendering of building designs.

I use conky system monitor to watch the processor load and temperature.

These are my thoughts about the performance:

Runs cool and the noise level is low, because the processor can handle several applications without taking any stress at all.

Usually runs at only a few % average load for heavy business use (graphics and CAD in my case).

When working you get the feeling that this processor has good torque. Eight cores means most of the time every application can have at least one dedicated core and there is no lag even with lots of apps running. I think this will be a great advantage even if you use a lot of older single core business applications.

The fact that this processor has rather high power consumption at full load is a factor to take into consideration if you put it under a lot of constant load (and especially if you overclock).

For any use except really heavy duty CPU jobs (compiling software, photo rendering, video encoding) temporary load peaks will be taken care of in a few seconds, and you will typically see your processor working at only 1,4 GHz clock frequency. When idle the power consumption of this CPU is actually pretty low and temporary load peaks will make very little difference in total power consumption.

I sometimes photo render jobs for up to 32 hours and think of myself as a CPU demanding user, but still most of the time when my computer is running, it will be at idle frequency. I consider the idle power consumption to be by far the most important value of comparison between processors for 90% of all users. This is not considered in many benchmarks.

It is really nice to fire up Thea Render, use the power of all cores for interactive rendering mode while testing different materials on a design and then start an unbiased photo rendering and watch all eight cores flatten out with 100% load at 3,6 GHz.

Not only does this processor photo render slightly faster compared to my colleague's Intel Sandy Bridge. What is really nice is that I can run, let's say, four renderings at the same time in the background, for a sun study, and then fire up BricsCAD to do drawing work while waiting. Trying to do this was a disaster with my last i5 processor. It forced me to do renderings during the night (out of business hours) or to borrow another work station during rendering jobs because my work station was locked up by more than one instance of the rendering application.

....................

To summarize, this is by far the best setup (CPU included) I have ever used on a work station. Affordable price, reasonably small case, low noise level, completely modular, i will be able to upgrade in the future without changing my am3+ mother board. The CPU is fast and offers superb multi tasking. This is the first processor I have ever used that also offers good multi tasking under heavy load (photo rendering + cad at the same time)

This is a superb CPU for any business user who likes to run several apps at the same time. It is also really fast with multi core optimized software.

AMD FX-8150 is my first AMD desktop processor and I like it just as much as I dislike their fusion APUs on the laptop market. Bulldozer has all the power where it is best needed, perfectly adapted to my work flow.

la'quv - Wednesday, August 29, 2012 - link

I don't know what it is with all this hype destroying amd's reputation. The bulldozer architecture is the best cpu design I have seen in years. I guess the underdog is not well respected. The bulldozer architecture has more pipelines and schedulers than the Core 2. The problem is code is compiled intel optimized not amd optimized. These benchmarks for a bunch of applications I don't use have no bearing on my choice to buy a cpu, there are some benchmarks where an i5 will outperform an i7 so what valid comparisons are we making here. The bulldozer cpu's are dirt cheap and people expect them to be cheaper and don't require high clock speed ram and run on cheaper motherboards. AMD is expected to keep up with intel on the manufacturing process. Cutting corners and going down to 32nm then 22nm as quickly as possible does not produce stable chips. I have my kernel compiled AMD64 and it is not taxed by anything I am doing.

brendandaly711 - Friday, September 6, 2013 - link

AMD still hasn't been able to pull out of the rut that INTEL left them in after the Sandy Bridge breakthrough. I am a (not so proud) owner of an FX-4100 in one of my pc's and an 8150 in the other. The 4100 compares to an ivy bridge i3 or a sandy bridge i5. I will give AMD partial credit, though, the 8150 performs at the ivy bridge's i5 level for almost identical prices.

Nfarce - Sunday, September 20, 2020 - link

And here we are in 2020 some 9 years after this review and 7 years after your comment and AMD still hasn't been able to equal Intel as an equal gaming performance contender. AMD's only saving face is the fact that now higher resolution demands of 1440p and now 4K essentially make any modern game GPU bound and more dependent on the GPU power.

BlueB - Wednesday, October 5, 2022 - link

I always come back to this review every few years just to have a good laugh looking back at this turd architecture, and especially at genius comments like:

"You don't get the architecture"; "it's a server CPU"; "it's because Windows scheduler"; etc., etc.

No, it wasn't any of those things. The CPU's a turd. It was a turd then, it's a turd now, and it will be a turd no matter what. It wasn't more future-proof than either Sandy or Ivy, 2600Ks from 11 years ago still run circles around it in both single and multi-threaded apps, old and new. The class action lawsuit against AMD was the cherry on top.

It really never gets old to read through the golden comment section here and chuckle at all the visionary comments which tried to defend this absolute failure of an architecture. It's an excellent article, and together with its comment section will always have a special place in my heart.