The Intel SSD 710 (200GB) Review

by Anand Lal Shimpi on September 30, 2011 8:53 PM EST. Posted in: Storage, SSDs, Intel, Intel SSD 710

Enterprise Storage Bench - Oracle Swingbench

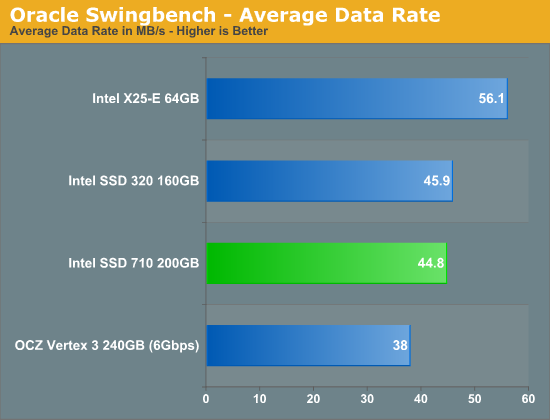

We begin with a popular benchmark from our server reviews: Oracle Swingbench. This is a fairly typical OLTP workload that models servers under a light to medium load of 100 to 150 concurrent users. The database is fairly small at 10GB, but the workload is absolutely brutal.

Swingbench consists of over 1.28 million read IOs and 3.55 million write IOs. Measured in GB, the read/write ratio is nearly 1:1, which means the reads are on average larger than the writes. Parallelism in this workload comes through aggregating IOs, as 88% of the operations in this benchmark are 8KB or smaller. This test is actually something we use in our CPU reviews, so its queue depth averages only 1.33. We will be following up with a version that features a much higher queue depth in the coming weeks.
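To make the IO mix concrete, the short sketch below works through the arithmetic using only the two IO counts quoted above. It assumes the read and write data volumes are exactly equal (the text says "nearly 1:1"), so the size ratio it prints is an approximation rather than a measured figure.

```python
# IO counts quoted in the text above.
read_ios = 1.28e6
write_ios = 3.55e6

total_ios = read_ios + write_ios
write_share = write_ios / total_ios

# Assumption: read and write byte volumes are exactly equal ("nearly 1:1"
# in the text). Under that assumption the average read must be larger than
# the average write by the ratio of the IO counts.
read_to_write_size_ratio = write_ios / read_ios

print(f"total IOs: {total_ios / 1e6:.2f} million")
print(f"write share of IOs: {write_share:.1%}")
print(f"average read size / average write size: {read_to_write_size_ratio:.2f}x")
```

In other words, writes make up roughly three quarters of the operations, and each read moves a bit under three times as much data as each write.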

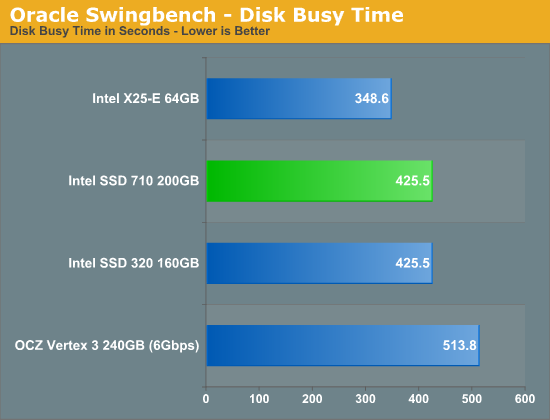

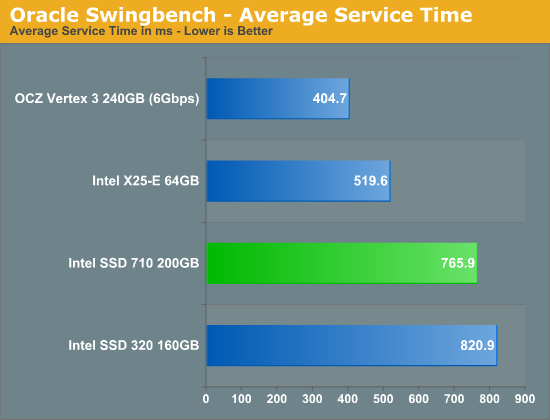

The X25-E offers 25% higher performance than the SSD 710 in our first enterprise benchmark. Here the 710 is actually about the same speed as the 320, which isn't surprising given the two drives share the same controller. The 710 obviously has the endurance advantage over the 320. Note that even SandForce's SF-2281 isn't able to outperform the 710 in our Swingbench test. As we discovered in our Z-Drive R4 review, average service time is a better indicator of heavy load performance than simply looking at average data rate in this benchmark:

Here we see that the Vertex 3 manages to chew through IOs much quicker than the 710, despite lower overall throughput. SandForce's real-time data compression/dedupe likely plays a major role here. The 710 does pull ahead of the 320, likely due to firmware optimizations for server rather than client workloads. The X25-E continues to hold onto a significant performance advantage over the 710 thanks to its higher random write performance.

68 Comments


cdillon - Friday, September 30, 2011 - link

I must be missing some important detail behind their decision to use MLC. MLC holds exactly twice as much information per cell as SLC, which means you can get twice the storage with the same number of chips. However, they are reserving up to 60% of the MLC NAND as spare area while still achieving a LOWER write-life than the SLC-based X25-E which only needs 20% spare. Why not continue to use lower-density SLC with a smaller spare area? The total capacity would only be slightly lower while achieving at least another 500GB of write life, if not more, and would probably also bring the 4KB Random Write numbers back up to X25-E levels.

cdillon - Friday, September 30, 2011 - link

Oops, I meant to say "at least another 500TB of write life" instead of 500GB.

Stahn Aileron - Saturday, October 1, 2011 - link

More than likely it has to do with production yields. Anand mentions SLC and MLC are physically identical, it's just how you address them. SLC seems to be very high quality NAND while MLC is the low end. MLC-HET (or eMLC) seems to be the middle of the pack in terms of overall quality.

Unless you can get SLC yields that consistently outpace MLC-HET yields by a factor of 2, it's not very economical in the long run for the same capacity.

Also, chip manufacturing is a pretty fixed cost at the wafer level from my understanding (at least once you hit mass production on a mature process). For SLC vs MLC, you can either use double the SLC chips to match MLC capacities (higher cost) or use the same number of chips and sacrifice capacity. Intel seems to be trying to get the best of both worlds (higher capacity at the same or lower costs). (All that while maximizing their production capacity and ability to meet demand as needed as a side benefit.)

Obviously I could be wrong. That's all conjecture based on what little I know of the industry as a consumer.
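The capacity trade-off debated in this thread can be sketched in a few lines. This is a rough illustration only: it assumes spare area is expressed as a fraction of raw NAND, takes the 60% and 20% figures from the comment above at face value, and uses a hypothetical helper name, `usable_capacity`.

```python
def usable_capacity(raw_capacity: float, spare_fraction: float) -> float:
    """User-visible capacity when spare_fraction of the raw NAND is held back."""
    if not 0.0 <= spare_fraction < 1.0:
        raise ValueError("spare_fraction must be in [0, 1)")
    return raw_capacity * (1.0 - spare_fraction)


# Normalise to one set of chips: it stores 1 unit as SLC and 2 units as MLC.
slc_usable = usable_capacity(1.0, 0.20)  # 20% spare, as quoted for the X25-E
mlc_usable = usable_capacity(2.0, 0.60)  # "up to 60%" spare, as quoted for the 710

print(f"SLC usable capacity: {slc_usable:.2f} units")
print(f"MLC usable capacity: {mlc_usable:.2f} units")
```

Under these assumptions the two configurations come out about even at the 60% upper bound, and any smaller MLC spare area tips the capacity advantage toward MLC for the same chip count.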

ckryan - Friday, September 30, 2011 - link

Anand, thanks for the awesome Intel SMART data tip.

I've joined in the XtremeSystems SSD endurance test. By writing a simulated desktop workload to the drive over and over, for months on end, eventually a drive will become read only. So far, only one drive has become RO, and that was a Samsung 470 with an apparent write amplification of 5+ (this was the only SSD I've ever heard of that this has happened to outside of a lab). Another drive (a 64GB Crucial M4) has gone through almost 10,000 PE cycles, and still doesn't have any reallocated sectors -- but all of the drives have performed well, and many have hundreds of TBs on them. I chose a SF 2281 with Toshiba toggle NAND, but I'm having some issues with it (like it won't stop dropping out/or BSOD if it's the system drive). Though it takes months and months of 24/7 writing, I think the process is both interesting and likely to put many users at ease concerning drive longevity. I don't think consumers should be worrying about the endurance of 25nm NAND, but I do start wondering what will happen with the advent of next generation flash. If you want to worry about NAND, worry about sync vs async or toggle, but don't sweat the conservative PE ratings -- it seems like the controller itself plays a super important role in the preservation of NAND in addition to the NAND itself and spare area. Obviously, increasing spare area is always a good idea if you have a particularly brutal workload, but it's not a terrible idea in many other settings... it's not just for RAID0 you know.

The only real SSD endurance test takes place in a user's machine (or server), and I have no doubt that any modern SSD will last at least the better part of a decade -- at least as far as the flash is concerned (and probably much, much longer). You'll get mad at your SF's BSoDs and throw it out the window before you ever make a dent in the flash's lifespan. The only exception is if your drive isn't aligned (and especially without TRIM). Under these conditions don't expect your drive to last very long, as WA jumps by double-digit factors.

http://www.xtremesystems.org/forums/showthread.php...
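The endurance reasoning in this comment can be put into a back-of-the-envelope calculation. The sketch below is illustrative only: the 64GB capacity and 10,000 PE cycles come from the comment, while the 3,000-cycle rating, the write amplification values, and the 20GB/day of host writes are hypothetical inputs chosen to show how the numbers move. It also ignores spare area and uses decimal units.

```python
def nand_write_budget_tb(capacity_gb: float, pe_cycles: float) -> float:
    """Total NAND writes (TB) after cycling every cell pe_cycles times."""
    return capacity_gb * pe_cycles / 1000.0


def years_of_life(capacity_gb: float, pe_cycles: float,
                  write_amplification: float, host_gb_per_day: float) -> float:
    """Years until the PE budget is exhausted at a steady host write rate."""
    if write_amplification <= 0 or host_gb_per_day <= 0:
        raise ValueError("write_amplification and host_gb_per_day must be positive")
    host_budget_gb = capacity_gb * pe_cycles / write_amplification
    return host_budget_gb / host_gb_per_day / 365.0


# From the comment: a 64GB drive that has gone through almost 10,000 PE cycles.
print(f"NAND writes absorbed: ~{nand_write_budget_tb(64, 10_000):.0f} TB")

# Hypothetical inputs: 3,000 rated cycles, 20 GB/day of host writes.
for wa in (1.5, 15.0):
    years = years_of_life(64, 3_000, wa, 20)
    print(f"write amplification {wa:>4}: ~{years:.1f} years")
```

A tenfold jump in write amplification cuts the estimated lifetime by the same factor, which is the effect the comment warns about for misaligned drives without TRIM.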

Movieman420 - Friday, September 30, 2011 - link

Yup. And you can expect the current SF BSOD problem to vanish when Intel fixes its drivers and OROMs. What a coincidence, eh?

http://thessdreview.com/latest-buzz/sandforce-driv...

JarredWalton - Saturday, October 1, 2011 - link

Ha! An educated guess in this case feels more like a pipe dream. If Intel is willing to jump on the SF controller bandwagon, I will be amazed. Then again, they've got the 510 using a non-Intel controller, so anything is possible.

ckryan - Saturday, October 1, 2011 - link

It kinda makes sense though. Intel using a SandForce controller (or possibly "SandForce-esque") but with their firmware and NAND would be tough to stop. The SF controller (when it works -- in my case not always that often) yields benefits to consumer and enterprise workloads alike. Further, it could help bridge Intel into smaller process NAND with about the same overall TBW due to compression (my 60GB has about 85TB host to ~65TB NAND writes). That's not a small amount over the lifespan of a drive. Along with additional overprovisioning, Intel could conceivably make a drive with sub-25nm NAND last as long as the 34nm stuff with those two advantages.

There's nothing really stopping SF now except for the not-so-little stability issues. I thought it was much rarer than it actually is (it's rare when it happens to someone else and an epidemic when it happens to you). With that heinous hose-beast no longer lurking in the closet, SandForce could end up being the only contender. Until such time as they get the problems resolved, whether or not you have problems is just a crapshoot... no seeming rhyme or reason, almost -- but not quite -- completely random. If Intel could bring that missing link to SF it would be a boon to consumers, but Intel could just as well buy SandForce to get rid of them. Either is just as likely, and conspiracy theorists would say that Intel is purposely causing issues with SF drives so they don't have to buy them (or don't have to pay as much). In the end, most consumers would just be happy if the 2281-powered drives they already have worked like the drive it was always meant to be.
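The host-versus-NAND write figures quoted in this comment translate directly into a write amplification factor. The sketch below uses only those two numbers (85TB host, ~65TB NAND); the helper name `write_amplification` is hypothetical, and the result describes this one commenter's drive rather than SandForce controllers in general.

```python
def write_amplification(nand_writes_tb: float, host_writes_tb: float) -> float:
    """Ratio of data physically written to NAND to data the host asked to write."""
    if host_writes_tb <= 0:
        raise ValueError("host_writes_tb must be positive")
    return nand_writes_tb / host_writes_tb


# Figures quoted in the comment above for a 60GB SandForce drive.
wa = write_amplification(nand_writes_tb=65.0, host_writes_tb=85.0)

print(f"write amplification: {wa:.2f}")
print(f"NAND writes avoided relative to host writes: {1 - wa:.0%}")
```

A factor below 1.0 means the controller wrote less to the flash than the host sent, which is consistent with the compression the comment describes.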

Movieman420 - Saturday, October 1, 2011 - link

Guess you didn't follow the story over to VR Zone either. It's a done deal. Cherryville is SandForce 2200. It should be announced before long imo.

rishidev - Friday, September 30, 2011 - link

Why even bother to make a 200GB drive at this point in time?

AMD fanboys get abused for masturbating.

So what? I'm supposed to buy a $1300 Intel "WOOOW" SSD?

TheSSDReview - Saturday, October 1, 2011 - link

Because Intel wants to hit the enthusiast market. The new drives won't be anywhere near the price of these 710 series drives and will command product confidence, as Intel drives always have. It is a win-win for SF, since many have taken comfort in speculation about controller troubles rather than examining other possible causes.

The question then becomes one of an SF purchase, we think.