The Crucial m4 (Micron C400) SSD Review

by Anand Lal Shimpi on March 31, 2011 3:16 AM EST

Random Read/Write Speed

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, and thus we have the four Iometer tests we use in all of our reviews.

Note that we've updated our C300 results on our new Sandy Bridge platform for these Iometer tests. As a result you'll see some higher scores for this drive (mostly with our 6Gbps numbers) for direct comparison to the m4 and other new 6Gbps drives we've tested.

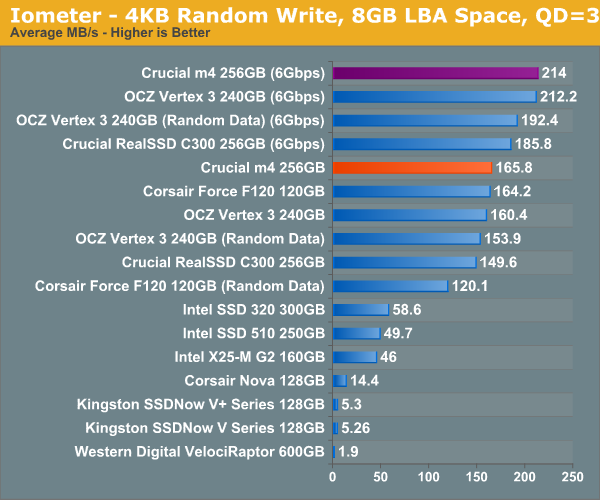

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data for each write as well as fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
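For a rough feel of how far apart the two data patterns are, the sketch below compresses a repetitive 4KB buffer and a fully random one. It uses zlib purely as a stand-in: SandForce's real-time compression is proprietary, and the buffer contents here are illustrative assumptions, not the data Iometer actually writes.

```python
import os
import zlib

BLOCK_SIZE = 4 * 1024  # 4KB, matching the transfer size used in the test


def compressed_fraction(block: bytes) -> float:
    """Return the compressed size as a fraction of the original size."""
    return len(zlib.compress(block)) / len(block)


# Hypothetical "highly compressible" payload: a short pattern repeated to fill 4KB.
repetitive_block = (b"ANANDTECH" * BLOCK_SIZE)[:BLOCK_SIZE]

# Fully random payload: effectively incompressible.
random_block = os.urandom(BLOCK_SIZE)

print(f"repetitive: {compressed_fraction(repetitive_block):.2%} of original size")
print(f"random:     {compressed_fraction(random_block):.2%} of original size")
```

A controller that compresses before writing has far less to commit to NAND in the first case than in the second, which is why both data patterns are reported.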

If there's one thing Crucial focused on with the m4 it's random write speeds. The 256GB m4 is our new king of the hill when it comes to random write performance. It's actually faster than a Vertex 3 when writing highly compressible data. It doesn't matter if I run our random write test for 3 minutes or an hour, the performance over 6Gbps is still over 200MB/s.

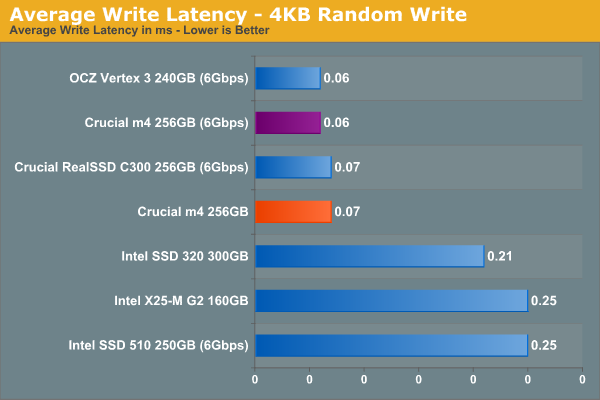

Let's look at average write latency during this 3 minute run:

On average it takes Crucial 0.06ms to complete three 4KB writes spread out over an 8GB LBA space. The original C300 was pretty fast here already at 0.07ms—it's clear that these two drives are very closely related. Note that OCZ's Vertex 3 has a similar average latency but it's not actually writing most of the data to NAND—remember this is highly compressible data, most of it never hits NAND.
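As a quick sanity check, the latency and throughput figures can be tied together with Little's Law. The sketch assumes the queue stays full at three outstanding IOs and that the reported average latency applies to each IO; both are assumptions about how the numbers are reported, not something stated by Iometer here.

```python
def throughput_mb_per_s(queue_depth: int, avg_latency_ms: float, io_size_bytes: int = 4096) -> float:
    """Estimate throughput via Little's Law: IOPS = outstanding IOs / average latency."""
    iops = queue_depth / (avg_latency_ms / 1000.0)
    return iops * io_size_bytes / 1_000_000  # decimal megabytes per second


# m4 figures from the text: three concurrent 4KB writes, 0.06ms average latency.
m4_estimate = throughput_mb_per_s(queue_depth=3, avg_latency_ms=0.06)
print(f"estimated m4 random write throughput: {m4_estimate:.1f} MB/s")
```

Under those assumptions the estimate lands at roughly 205MB/s, which is consistent with the "over 200MB/s" random write result quoted earlier.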

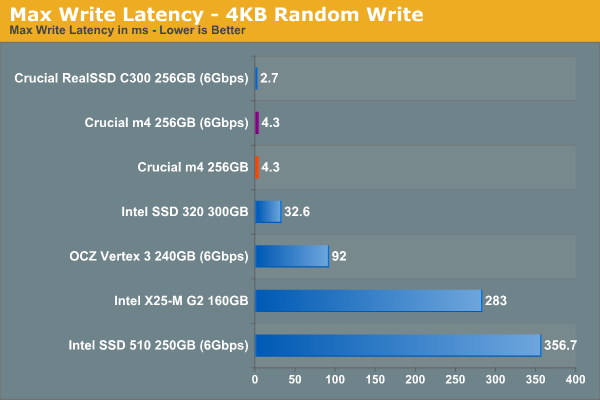

Now let's look at max latency during this same 3 minute period:

You'll notice a huge increase in max latency compared to average latency. That's because this is when a lot of drives do some real-time garbage collection. If you don't periodically clean up your writes you'll end up increasing max latency significantly. You'll notice that even the Vertex 3 with SandForce's controller has a pretty high max latency in comparison to its average latency. This is where the best controllers do their work. However, not all OSes deal with this occasional high latency blip all that well. I've noticed that OS X in particular doesn't handle unexpectedly high write latencies very well, usually resulting in you having to force-quit an application.
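To see why average and max latency can sit so far apart, consider a toy set of samples. The numbers below are hypothetical and chosen only for illustration; they are not measurements from any drive in this review.

```python
from statistics import mean

# Hypothetical latency samples in milliseconds (not measured data):
# 9,999 fast 4KB writes plus a single stall while the drive cleans up blocks.
FAST_WRITE_MS = 0.06
STALL_MS = 250.0
latencies_ms = [FAST_WRITE_MS] * 9_999 + [STALL_MS]

average_ms = mean(latencies_ms)
worst_ms = max(latencies_ms)

print(f"average latency: {average_ms:.3f} ms")
print(f"max latency:     {worst_ms:.1f} ms")
```

A single long stall barely moves the average, yet it is exactly the kind of pause an application (or the user) notices.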

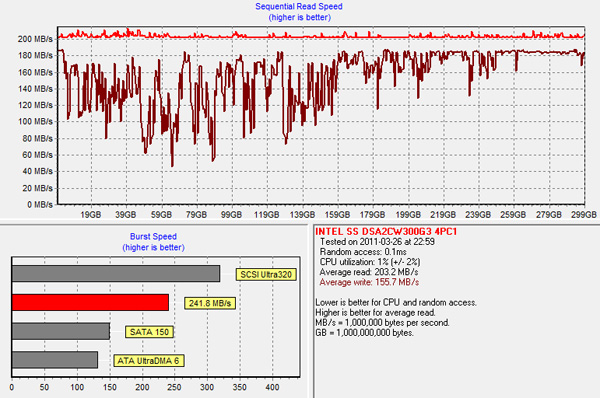

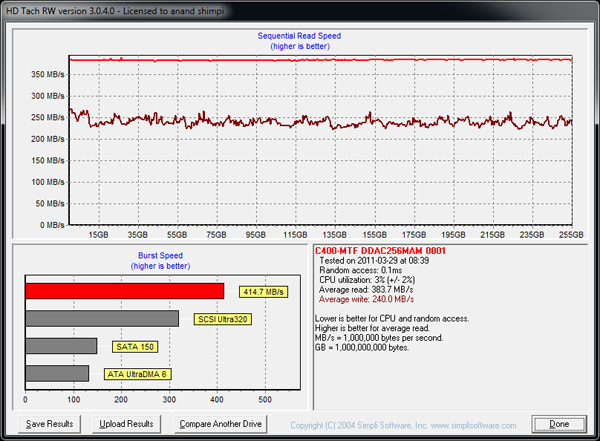

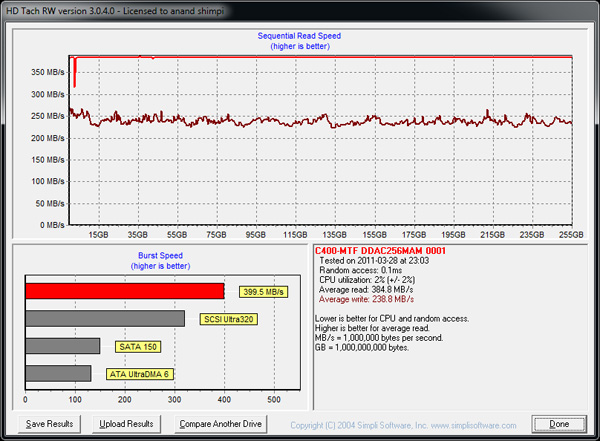

Note the extremely low max latency of the m4 here: 4.3ms. Either the m4 is ultra quick at running through its garbage collection routines or it's putting off some of the work until later. I couldn't get a clear answer from Crucial on this one, but I suspect it's the latter. I'm going to break the standard SSD review mold here for a second and take you through our TRIM investigation. Here's what a clean sequential pass looks like on the m4:

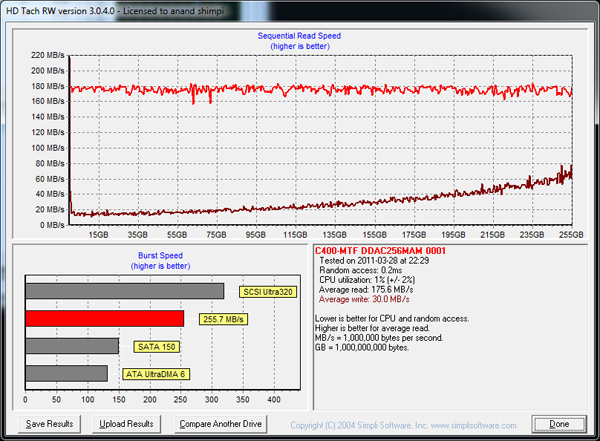

Average read speeds are nearing 400MB/s, average write speed is 240MB/s. The fluctuating max write speed indicates some clean up work is being done during the sequential write process. Now let's fill the drive with data, then write randomly across all LBAs at a queue depth of 32 for 20 minutes and run another HDTach pass:

Ugh. This graph looks a lot like what we saw with the C300. Without TRIM the m4 can degrade to a very, very low performance state. Windows 7's Resource Monitor even reported instantaneous write speeds as low as 2MB/s. The good news is the performance curve trends upward: the m4 is trying to clean up its performance. Write sequentially to the drive and its performance should start to recover. The bad news is that Crucial appears to be putting off this garbage collection work for a bit too long. Remember that the trick to NAND management is balancing wear leveling with write amplification. Clean blocks too quickly and you burn through program/erase cycles. Clean them too late and you risk high write amplification (and reduced performance). Each controller manufacturer decides the best balance for its SSD. Typically the best controllers do a lot of intelligent write combining and organization early on and delay cleaning as much as possible. The C300 and m4 both appear to push the limits of delayed block cleaning, however. Based on the very low max random write latencies from above I'd say that Crucial is likely doing most of the heavy block cleaning during sequential writes and not during random writes. Note that in this tortured state, max random write latencies can reach as high as 1.4 seconds.
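Write amplification is commonly expressed as the ratio of data actually written to NAND to data the host asked to write. The sketch below is a minimal illustration of that ratio; the function name and the byte counts are hypothetical and chosen only to show the arithmetic, not taken from the m4 or any other drive.

```python
def write_amplification(host_bytes: float, relocated_bytes: float) -> float:
    """Return NAND bytes written per byte the host asked to write.

    relocated_bytes is the extra data the controller copies while cleaning
    blocks (garbage collection), on top of the host's own writes.
    """
    if host_bytes <= 0:
        raise ValueError("host_bytes must be positive")
    return (host_bytes + relocated_bytes) / host_bytes


GIB = 1024 ** 3

# Hypothetical workloads, chosen only to show the arithmetic.
light_cleanup = write_amplification(host_bytes=10 * GIB, relocated_bytes=2 * GIB)
heavy_cleanup = write_amplification(host_bytes=10 * GIB, relocated_bytes=30 * GIB)

print(f"light cleanup: {light_cleanup:.1f}x")
print(f"heavy cleanup: {heavy_cleanup:.1f}x")
```

The more valid data the controller has to relocate for each block it frees, the higher the ratio, and the more NAND wear and lost performance each host write costs.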

Here's a comparison of the same torture test run on Intel's SSD 320:

The 320 definitely suffers, just not as badly as the m4. Remember the higher max write latencies from above? I'm guessing that's why. Intel seems to be doing more cleanup along the way.

And just to calm all fears, if we do a full TRIM of the entire drive, performance goes back to normal on the m4:

What does all of this mean? It means that it's physically possible for the m4, if hammered with a particularly gruesome workload (or a mostly naughty workload for a longer period of time), to end up in a pretty poor performance state. I had the same complaint about the C300 if you'll remember from last year. If you're running an OS without TRIM support, then the m4 is a definite pass. Even with TRIM enabled and a sufficiently random workload, you'll want to skip the m4 as well.

I suspect for most desktop workloads this worst case scenario won't be a problem and with TRIM the drive's behavior over the long run should be kept in check. Crucial still seems to put off garbage collection longer than most SSDs I've played with, and I'm not sure that's necessarily the best decision.

Forgive the detour; now let's get back to the rest of the data.

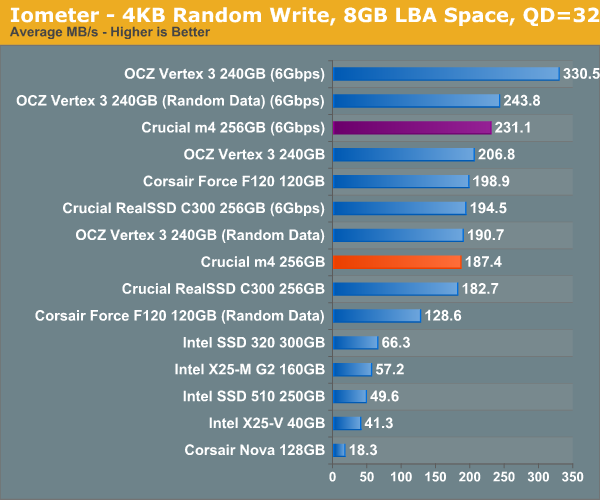

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 to 5, higher depths are possible in heavy I/O (and multi-user) workloads:

High queue depth 4KB random write numbers continue to be very impressive, although here the Vertex 3 actually jumps ahead of the m4.

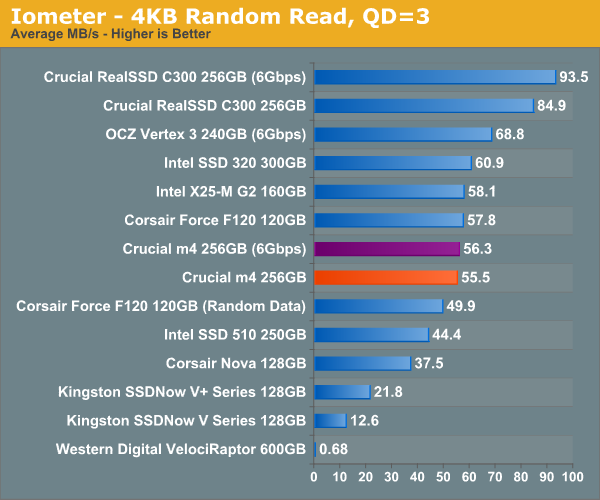

Random read performance is actually lower than on the C300. Crucial indicated that it reduced random read performance in favor of increasing sequential read performance on the m4. We'll see what this does to real world performance shortly.

103 Comments

dingo99 - Thursday, March 31, 2011 - link

While it's great that you test overall drive performance before and after a manually-triggered TRIM, it's unfortunate that you do not test real-world TRIM performance amidst other drive operations. You've mentioned often that Crucial drives like C300 need TRIM, but you've missed the fact that C300 is a very poor performer *during* the TRIM process. If you try to use a C300-based system on Win7 while TRIM operations are being performed (Windows system image backup from SSD to HD, for example) you will note significant stuttering due to the drive locking up while processing its TRIMs. Disable Win7 TRIM, and all the stuttering goes away. Sadly, the limited TRIM tests you perform now do not tell the whole story about how the drives will perform in day-to-day usage.

Anand Lal Shimpi - Thursday, March 31, 2011 - link

I have noticed that Crucial's drives tend to be slower at TRIMing than the competition. I suspect this is a side effect of very delayed garbage collection and an attempt to aggressively clean blocks once the controller receives a TRIM instruction.

I haven't seen this as much in light usage of either the C300 or m4 but it has definitely cropped up in our testing, especially during those full drive TRIM passes.

jinino - Thursday, March 31, 2011 - link

Is it possible to include MB with AMD's 890 series chipset to test SATA III performance? Thanks

whatwhatwhat2011 - Thursday, March 31, 2011 - link

I continue to be frustrated by the lack of actual, task-oriented, real world benchmarking for SSDs. That is to say, tests that execute common tasks (like booting, loading applications, doing disk intensive desktop tasks like audio/video/photo editing) and reporting exactly how long those tasks took (in seconds) using different disks.

This is really what we care about when we buy SSDs. Sequential read and write numbers are near irrelevant in real world use. The same could be said for IOPs measurements, which have so many variables involved. I understand that your storage bench is supposed to satisfy this need, but I don't think that it does. The numbers it returns are still abstract values that, in effect, don't really communicate to the reader what the actual performance difference is.

Bringing it home, my point is that while we all understand that going from an HDD system drive to an SSD results in an enormous performance improvement, we really have no idea how much better a SF-2200 based Vertex 3 is than an Indilinx Vertex 1 in real world use. Sure, we understand that it's tons faster in the benches, but if that translates to application loading times that are only 1 second faster, who really cares to make that upgrade?

In particular, I'm thinking of Sandforce drives. They really blow the doors off benchmarking suites, but how does that translate to real world performance? Most of the disk intensive desktop tasks out there involve editing photos and videos that are generally speaking already highly compressed (ie, incompressible).

Anand, you are a true leader in SSD performance analysis. I hope that you'll take the lead once again and put an end to this practice of reporting benchmark numbers that - while exciting to compare - are virtually useless when it comes to making buying decisions.

In the interest of being positive and helpful, here are a few tasks that I'd love to see benched and compared going forward (especially between high end HDDs, second gen SSDs and third gen SSDs).

1. Boot times (obviously you'd have to standardize the mobo for useful results).

2. Application load times for "heavy" apps like Photoshop, After Effects, Maya, AutoCAD, etc

3. Load times for large Lightroom 3 catalogs using RAW files (which are generally incompressible) and large video editing project files (which include a variety of read types) using the various AVC-flavored acquisition codecs out there.

4. BIG ONE HERE: the real world performance delta for using SSDs as cache drives for content creation apps that deal mostly with incompressible data (like Lightroom and Premiere).

Thanks again for the great work. And I apologize for the typos. Day job's a-callin'.

MilwaukeeMike - Thursday, March 31, 2011 - link

I think part of the reason you don't see much of this is the difficulty in standardizing it. You’d like to see AutoCAD, but I’d like to see Visual Studio or RSA. I have seen game loading screens in reviews, and I’d like to see that again. Especially since you don’t really know how much data is being loaded. Will a game level load in 5 seconds vs 25 in a standard HD, or is it more like 15 vs 25? I’d also prefer to see a VelociRaptor on the graph because I own one, but that’s just getting picky. However, I’m sure not going to buy a SSD without knowing this stuff.

whatwhatwhat2011 - Thursday, March 31, 2011 - link

That's a very valid point, but I don't see much in the way of even an effort in this regard. I certainly wouldn't complain if the application loading tests included a bunch of software that I never use just so long as that software has similar loading characteristics and times as the software I do use. Or anything, really, that gives me some idea of the actual difference in user experience.

I have an ugly hunch that there isn't really much (or any) difference between a first gen and third gen SSD in terms of the actual user experience. My personal experience has more or less confirmed this, but that's just anecdotal. These benchmark numbers, as it is, don't tell us much about what is going on with the user's experience.

They do, however, get people excited about buying new SSDs every year. They're hundreds of megabytes per second faster! And I love megabytes.

Chloiber - Friday, April 1, 2011 - link

I do agree. Most SSD tests still lack these kinds of tests.

On what should I base my decision when buying an SSD? As the AnandStorage Benches show, the results can be completely different (just compare the new suite (which isn't "better", it's just "different") with the old one!). Completely different results! And it's still just a benchmark where we don't actually know what has been benched. Yes, Anand provides some numbers, but it's not transparent enough. It's still ONE scenario.

I'd also like to see more simple benchmarks. Sit behind your computer and use a stop watch. Yes, it's more work than using simple tools, but the result is worth WAY more than "YABT" (yet another benchmark tool).

Well yes. Maybe the results are very close. But that's exactly what I want to know. I am very sorry, but right now, I only see synthetic benchmarks in these tests which can't tell me anything.

- Unzipping

- Copying

- Installing

- Loading times of applications (even multiple apps at once)

That's the kind of things I care about. And a trace benchmark is nice, but there is still a layer of abstraction that I just do not want.

whatwhatwhat2011 - Friday, April 1, 2011 - link

It's really gratifying to hear other users sharing my thoughts! I have a hunch we're onto something here.

Anand, I hate to sound harsh - as you've clearly put a ton of work into this - but your storage bench is really a step in the wrong direction. Yes, it consists of real world tasks and produces highly replicable results.

But the actual test pattern itself is simply not realistic. Only a small percentage of users ever find themselves doing tasks like that, and even those power users are only capable of producing workloads like this every once in a while (when they're highly, highly caffeinated, I would suppose).

Even more damning, the values it returns are not helpful when making buying decisions. So the Vertex 3 completes the heavy bench some 4-5 minutes ahead of the Crucial m4. What does that actually mean in terms of my user experience?

See, the core of the issue here is really why people buy SSDs. Contrary to the marketing justification, I don't think anyone buys SSDs for productivity gains (although that might be how they justify the purchase to themselves as well).

So what are you really getting with an SSD? Confidence and responsiveness. The sort of confidence that comes with immediate responsiveness. Much like how a good sports car will respond immediately to every single touch of the pedals or wheel, we expect a badass computer to respond immediately to our inputs. Until SSDs came along, this simply wasn't a reality.

So the question really is: is one SSD going to make my computer faster, smoother and more responsive than another?

seapeople - Friday, April 1, 2011 - link

How many times must Anand answer this question? Here's your answer:

Question: What's the difference between all these SSD's and how they boot/load application X?

Answer: For every SSD from x25m-g2 on THERE IS VERY LITTLE DIFFERENCE.

Anand could spend a lot of time benchmarking how long it takes to start up Photoshop or boot up Windows 7 for these SSD's, but then we'd just get a lot of graphs that vary from 7 seconds to 9 seconds, or 25 seconds to 28 seconds. Or you could skew the graphs with a mechanical hard drive which would be 2-5x the loading time.

In short, the synthetic (or even real-life) torture tests that Anand shows here are the only tests which would show a large difference between these drives, and for everything else you keep asking about there would be very little difference. This is why it sucks that SSD performance is still increasing faster than price is dropping; SSD's are really fast enough to beat hard drives at anything, so the only important factor for most real world situations is the price and how much storage capacity you can live with.

whatwhatwhat2011 - Friday, April 1, 2011 - link

I'm not sure that I understand your indignation. If useful, effective real world benchmarks would demonstrate little difference between SSDs, how is that a waste of anyone's time? If anything, that is exactly the information that both consumers and technologists need.

Consumers would be able to make better buying decisions, gauging the real-world benefits (or not) of upgrading from one SSD to another, later generation SSD.

Manufacturers and technologists would benefit from having to confront the fact that performance bottlenecks clearly exist elsewhere in the system - either in the hardware I/O subsystems, or in software itself that is still designed to respond to HDD levels of latency. If consumers refused to upgrade from one SSD to another, based upon useful test data that revealed this diminishing real-world benefit, that would also help motivate manufacturers to move on price, instead of focusing on MORE MEGABYTES!

This charade that is currently going on - in which artificial benchmarks and torture tests are being used to exaggerate the difference between drives - certainly makes for exciting reading, but it does little to inform anyone.

Anand is a leader in this subject matter. I post here as opposed to other sites that are guilty of the same because I have a hunch that only he has the resources and enthusiasm necessary to tackle this issue.