The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Intel HD Graphics 2000/3000 Performance

I dusted off two low-end graphics cards for this comparison: a Radeon HD 5450 and a Radeon HD 5570. The 5450 is a DX11 part with 80 SPs and a 64-bit memory bus. The SPs run at 650MHz and the DDR3 memory interface has a 1600MHz data rate. That’s more compute power than the Intel HD Graphics 3000 but less memory bandwidth than a Sandy Bridge if you assume the CPU cores aren’t consuming more than half of the available memory bandwidth. The 5450 will set you back $45 at Newegg and is passively cooled.

The Radeon HD 5570 is a more formidable opponent. Priced at a whopping $70, this GPU comes with 400 SPs and a 128-bit memory bus. The core clock remains at 650MHz and the DDR3 memory interface has an 1800MHz data rate. This is more memory bandwidth and much more compute than the HD Graphics 3000 can offer.
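To put rough numbers on the memory bandwidth comparison, here is a minimal sketch that turns the bus widths and data rates quoted above into theoretical peak bandwidth. The function name is my own, and the figures are peak values that ignore real-world efficiency; Sandy Bridge is left out because the system memory speed isn't stated here.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mhz: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8       # bus width in bytes
    transfers_per_second = data_rate_mhz * 1e6    # effective data rate
    return bytes_per_transfer * transfers_per_second / 1e9

# Bus widths and data rates as quoted for the two discrete cards.
hd5450_gbs = peak_bandwidth_gbs(64, 1600)
hd5570_gbs = peak_bandwidth_gbs(128, 1800)

print(f"Radeon HD 5450: {hd5450_gbs:.1f} GB/s")
print(f"Radeon HD 5570: {hd5570_gbs:.1f} GB/s")
print(f"5570 / 5450:    {hd5570_gbs / hd5450_gbs:.2f}x")
```

On paper the 5570 has 2.25x the bandwidth of the 5450, on top of five times the SPs at the same core clock.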

Based on what we saw in our preview I’d expect performance similar to the Radeon HD 5450 and significantly lower than the Radeon HD 5570. Both of these cards were paired with a Core i5-2500K to remove any potential CPU bottlenecks.

On the integrated side we have a few representatives. AMD’s 890GX is still the cream of the crop for AMD integrated for at least a few more months. I paired it with a 6-core 1100T to keep the CPU from impacting things.

Representing Clarkdale I have a Core i5-661 and 660. Both chips run at 3.33GHz but the 661 has a 900MHz GPU while the 660 runs at 733MHz. These are the fastest representatives with last year’s Intel HD Graphics, but given the margin of improvement I didn’t feel the need to show anything slower.

And finally from Sandy Bridge we have three chips: the Core i7-2600K and Core i5-2500K, both with Intel HD Graphics 3000 (but different turbo modes), and the Core i3-2100 with HD Graphics 2000.

Nearly all of our test titles were run at the lowest quality settings available in game at 1024x768. We ran with the latest drivers available as of 12/30/2010. Note that all of the screenshots used below were taken on Intel's HD Graphics 3000. For a comparison of IQ between it and the Radeon HD 5450 I've zipped up originals of all of the images here.

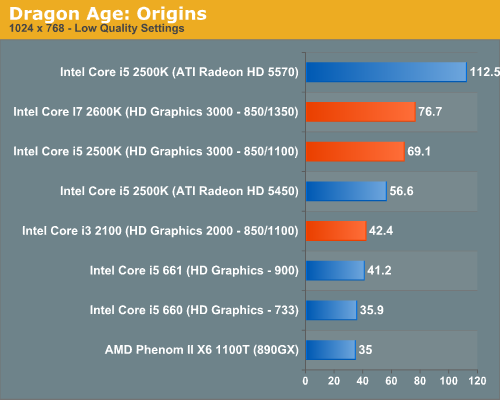

Dragon Age: Origins

DAO has been a staple of our integrated graphics benchmark for some time now. The third/first person RPG is well threaded and is influenced both by CPU and GPU performance.

We ran at 1024x768 with graphics and texture quality both set to low. Our benchmark is a FRAPS runthrough of our character through a castle.

The new Intel HD Graphics 2000 is roughly the same performance level as the highest clock speed HD Graphics offered with Clarkdale. The Core i3-2100 and Core i5-661 deliver about the same level of performance here. Both are faster than AMD’s 890GX and all three of them are definitely playable in this test.

HD Graphics 3000 is a huge step forward. At 71.5 fps it’s 70% faster than Clarkdale’s integrated graphics, and fast enough that you can actually crank up some quality settings if you’d like. The higher end HD Graphics 3000 is also 26% faster than a Radeon HD 5450.

What Sandy Bridge integrated graphics can’t touch however is the Radeon HD 5570. At 112.5 fps, the 5570’s compute power gives it a 57% advantage over Intel’s HD Graphics 3000.
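For anyone who wants to check the percentages, the arithmetic is sketched below. Only the 71.5 fps and 112.5 fps figures come from the text; the helper names are hypothetical, and the Clarkdale and Radeon HD 5450 frame rates are back-calculated from the rounded percentages, so treat them as approximations and not as measured results.

```python
def percent_faster(a_fps: float, b_fps: float) -> float:
    """How much faster a is than b, in percent."""
    return (a_fps / b_fps - 1) * 100

def implied_baseline(fps: float, pct_faster: float) -> float:
    """Frame rate of the slower part, given the faster part and its lead."""
    return fps / (1 + pct_faster / 100)

HD3000_FPS = 71.5    # quoted above
HD5570_FPS = 112.5   # quoted above

lead_5570 = percent_faster(HD5570_FPS, HD3000_FPS)
clarkdale_est = implied_baseline(HD3000_FPS, 70)  # approximation, not measured
hd5450_est = implied_baseline(HD3000_FPS, 26)     # approximation, not measured

print(f"5570 lead over HD 3000: {lead_5570:.0f}%")
print(f"Implied Clarkdale IGP:  ~{clarkdale_est:.0f} fps")
print(f"Implied Radeon HD 5450: ~{hd5450_est:.0f} fps")
```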

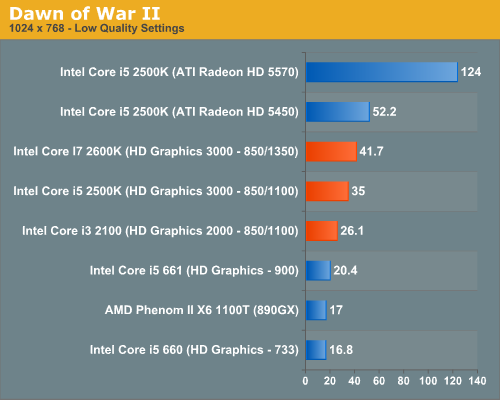

Dawn of War II

Dawn of War II is an RTS title that ships with a built in performance test. I ran at the lowest quality settings at 1024x768.

Here the Core i7-2600K and 2500K fall behind the Radeon HD 5450. The 5450 manages a 25% lead over the HD Graphics 3000 on the 2600K. It's interesting to note the tangible performance difference enabled by the higher max graphics turbo frequency of the 2600K (1350MHz vs. 1100MHz). It would appear that Dawn of War II is largely compute bound on these low-end GPUs.
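One way to sanity check the compute bound theory is to compare the measured frame rate gap against the gap in maximum graphics turbo clocks. The sketch below uses only the two clocks quoted above; the helper names are hypothetical, and the efficiency function is meant to be fed with the frame rate gain read off the chart.

```python
# Maximum graphics turbo clocks quoted in the text.
TURBO_2600K_MHZ = 1350
TURBO_2500K_MHZ = 1100

def clock_advantage_pct(fast_mhz: float, slow_mhz: float) -> float:
    """Clock speed advantage of the faster part, in percent."""
    return (fast_mhz / slow_mhz - 1) * 100

def scaling_efficiency(measured_gain_pct: float, clock_gain_pct: float) -> float:
    """Fraction of the clock advantage that shows up as frame rate."""
    return measured_gain_pct / clock_gain_pct

ceiling = clock_advantage_pct(TURBO_2600K_MHZ, TURBO_2500K_MHZ)
print(f"2600K graphics clock advantage: {ceiling:.1f}%")
```

If both chips actually hold their maximum turbo clocks, the advantage of roughly 23% is the most a purely compute bound test should gain on the 2600K. An efficiency close to 1.0 would support the compute bound reading, while a much lower value would point to a bottleneck elsewhere.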

Compared to last year's Intel HD Graphics, the performance improvement is huge. Even the HD Graphics 2000 is almost 30% faster than the fastest Intel offered with Clarkdale. While I wouldn't view Clarkdale as being useful graphics, at the performance levels we're talking about now game developers should at least be paying attention to Intel's integrated graphics.

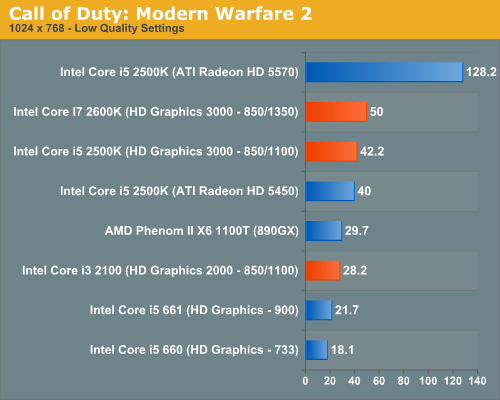

Call of Duty: Modern Warfare 2

Our Modern Warfare 2 benchmark is a quick FRAPS run through a multiplayer map. All settings were turned down/off and we ran at 1024x768.

The Intel HD Graphics 3000 enabled chips are able to outpace the Radeon HD 5450 by at least 5%. The 2000 model isn't able to do as well, losing out to even the 890GX. On the notebook side this won't be an issue but for desktops with integrated graphics, it is a problem as most will have the lower end GPU.

The performance improvement over last year's Clarkdale IGP is at least 30%, and more if you compare to the more mainstream Clarkdale SKUs.

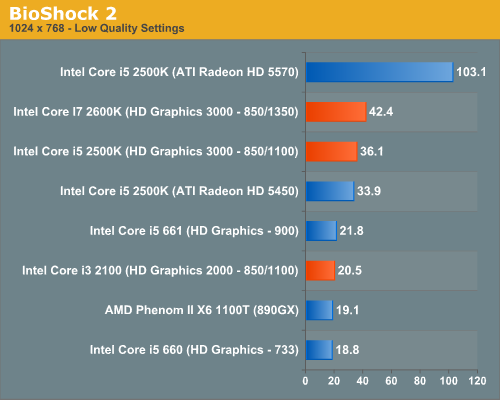

BioShock 2

Our test is a quick FRAPS runthrough in the first level of BioShock 2. All image quality settings are set to low, resolution is at 1024x768.

Once again the HD Graphics 3000 GPUs are faster than the Radeon HD 5450; it's the 2000 model that's slower. In this case the Core i3-2100 is actually slightly slower than last year's Core i5-661.

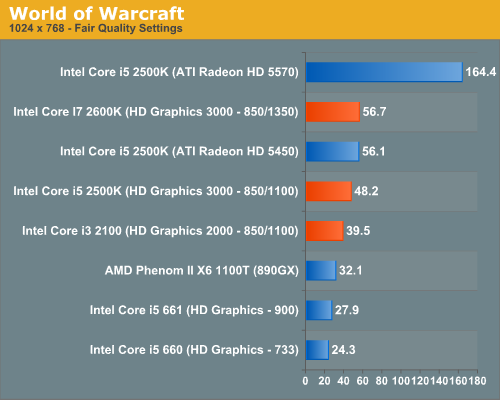

World of Warcraft

Our WoW test is run at fair quality settings (with weather turned down all the way) on a lightly populated server in an area where no other players are present to produce repeatable results. We ran at 1024x768.

The high-end HD Graphics 3000 SKUs do very well vs. the Radeon HD 5450 once again. We're at more than playable frame rates in WoW with all of the Sandy Bridge parts, although the two K-series SKUs are obviously a bit smoother.

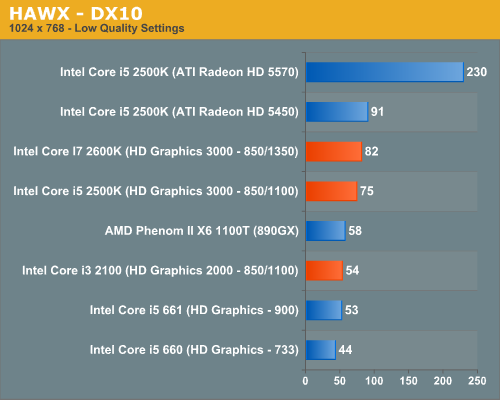

HAWX

Our HAWX performance tests were run with the game's built in benchmark in DX10 mode. All detail settings were turned down/off and we ran at 1024x768.

The Radeon HD 5570 continues to be completely untouchable. While Sandy Bridge can compete in the ~$40-$50 GPU space, anything above that is completely out of its reach. That isn't too bad considering Intel spent all of 114M transistors on the SNB GPU, but I do wonder if Intel will be able to push up any higher in the product stack in future GPUs.

Once again the HD Graphics 2000 GPU is a bit too slow for my tastes, just barely edging out the fastest Clarkdale GPU.

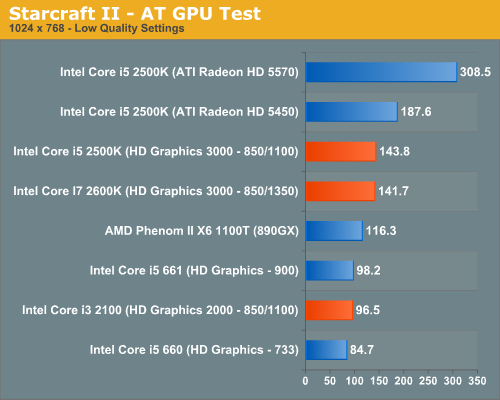

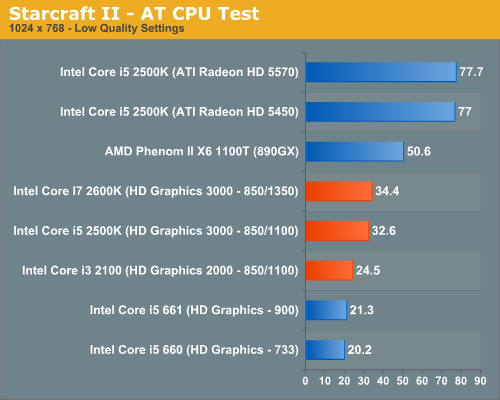

Starcraft II

We have two Starcraft II benchmarks: a GPU and a CPU test. The GPU test is mostly a navigate-around-the-map test, as scrolling and panning around tends to be the most GPU bound in the game. Our CPU test involves a massive battle of six armies in the center of the map, stressing the CPU more than the GPU. At these low quality settings, however, both benchmarks are influenced by CPU and GPU.

Starcraft II is really a strong point of Sandy Bridge's graphics. It's more than fast enough to run one of the most popular PC games out today. You can easily crank up quality settings or resolution without turning the game into a slideshow. Of course, low quality SC2 looks pretty weak compared to medium quality, but it's better than nothing.

Our CPU test actually ends up being GPU bound with Intel's integrated graphics; AMD's 890GX is actually faster here:

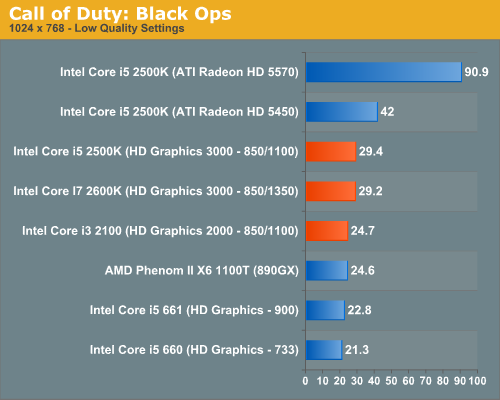

Call of Duty: Black Ops

Call of Duty: Black Ops is basically unplayable on Sandy Bridge integrated graphics. I'm guessing this is not a compute bound scenario but rather an optimization problem for Intel. You'll notice there's hardly any difference between the performance of the 2000 and 3000 GPUs, indicating a bottleneck elsewhere. It could be memory bandwidth. Despite the game's near-30fps frame rate, there's way too much stuttering and jerkiness during the game to make it enjoyable.
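An average frame rate can hide this kind of stutter. The sketch below uses two made-up frame time traces (hypothetical numbers for illustration, not measurements from this test) that work out to the same average fps but would feel very different in play.

```python
from statistics import mean

def summarize(frame_times_ms):
    """Return (average fps, worst frame time in ms) for a trace."""
    avg_fps = 1000 / mean(frame_times_ms)
    worst_ms = max(frame_times_ms)
    return avg_fps, worst_ms

# Hypothetical traces: both average 34 ms per frame.
smooth = [34.0] * 10
stuttery = [20.0] * 8 + [90.0] * 2

for name, trace in (("smooth", smooth), ("stuttery", stuttery)):
    avg_fps, worst_ms = summarize(trace)
    print(f"{name:8s} avg {avg_fps:.1f} fps, worst frame {worst_ms:.0f} ms")
```

Both traces report about 29 fps on average, but the second one spends two frames at 90 ms each, which is the sort of hitch that makes a near-30fps result unpleasant to play.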

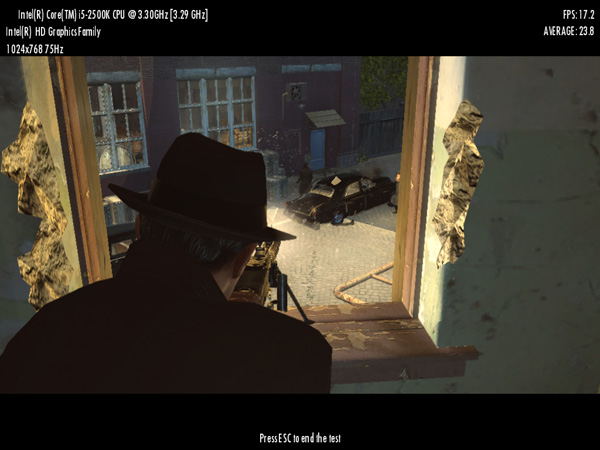

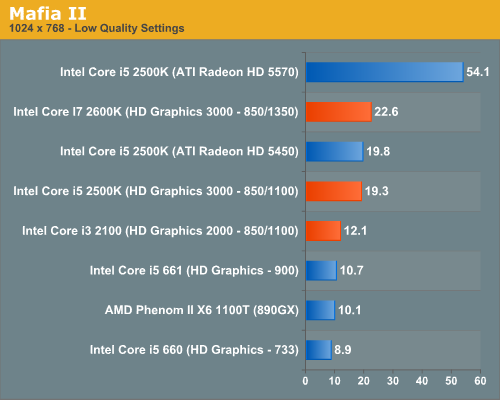

Mafia II

Mafia II ships with a built in benchmark which we used for our comparison.

Frame rates are pretty low here, definitely not what I'd consider playable. That's true across the board though; you need to spend at least $70 on a GPU to get a playable experience here.

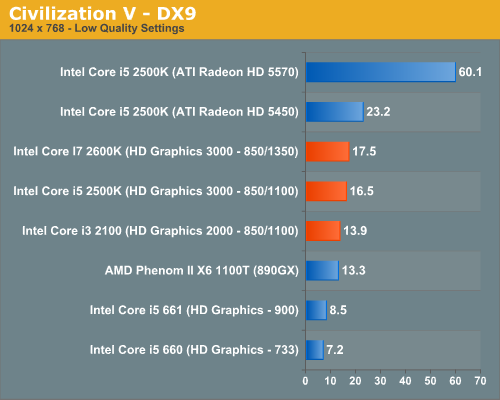

Civilization V

For our Civilization V test we're using the game's built in lateGameView benchmark. The test was run in DX9 mode with everything turned down at 1024x768:

Performance here is pretty low. Even a Radeon HD 5450 isn't enough to get you smooth frame rates; a discrete GPU is just necessary for some games. Civ V does have the advantage of not depending on high frame rates, though; the mouse input is decoupled from rendering, so you can generally interact with the game even at low frame rates.

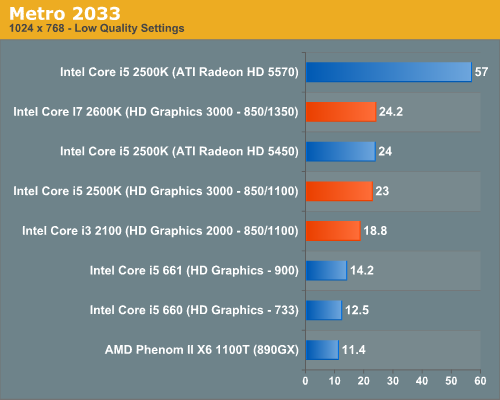

Metro 2033

We're using the Metro 2033 benchmark that comes with the patched game. Occasionally I noticed rendering issues at the Metro 2033 menu screen but I couldn't reproduce the problem regularly on Intel's HD Graphics.

Metro 2033 and many newer titles are just not playable at smooth frame rates on anything this low-end. Intel integrated graphics as well as low-end discrete GPUs are best paired with older games.

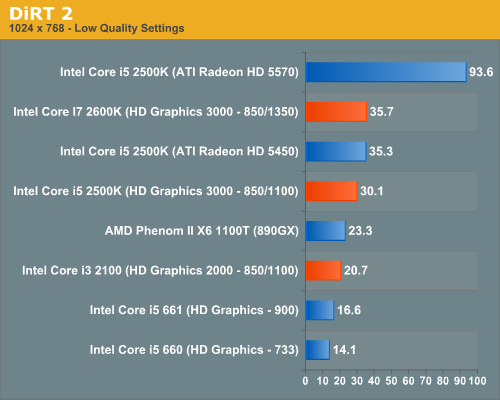

DiRT 2

Our DiRT 2 performance numbers come from the demo's built-in benchmark:

DiRT 2 is another game that needs compute power, and the faster 2600K gets a decent boost from the higher clock speed. Frame rates are relatively consistent as well, though you'll get dips into the low 20s and teens at times, so at these settings the game is borderline playable. (Drop to Ultra Low if you need higher performance.)

283 Comments


nuudles - Monday, January 3, 2011 - link

Anand, I'm not the biggest Intel fan (due to their past grey area dealings) but I don't think the naming is that confusing. As I understand it they will move to the 3x00 series with Ivy Bridge, basically the higher the second number the faster the chip.

It would be nice if there was something in the name to easily tell consumers the number of cores and threads, but the majority of consumers just want the fastest chip for their money and don't care how many cores or threads it has.

The ix part tells enthusiasts the number of cores/threads/turbo with the i3 having 2/4/no, the i5 having 4/4/yes and i7 4/8/yes. I find this much simpler than the 2010 chips which had some dual and some quad core i5 chips for example.

I think AMD's GPUs have a sensible naming convention (except for the 68/6900 renaming) without the additional i3/i5/i7 modifier, using the second number as the tier indicator while maintaining the rule of thumb of "a higher number within a generation means faster". If Intel adopted something similar it would have been better.

That said I wish they would stick with a naming convention for at least 3 or 4 generations...

nimsaw - Monday, January 3, 2011 - link

"...but until then you either have to use the integrated GPU alone or run a multimonitor setup with one monitor connected to Intel’s GPU in order to use Quick Sync"

So have you tested the transcoding with QS by using an H67 chipset based motherboard? The test rig never mentions any H67 motherboard. I am somehow not able to follow how you got the scores for the transcode test. How do you select the codepath if switching graphics on a desktop motherboard is not possible? Please throw some light on it as I am a bit confused here. You say that QS gives a better quality output than the GTX 460, so does that mean I need not invest in a discrete GPU if I am not gaming? Moreover, why should I be forced to use the discrete GPU in a P67 board when, according to your tests, Intel QS is giving a better output?

Anand Lal Shimpi - Monday, January 3, 2011 - link

I need to update the test table. All of the Quick Sync tests were run on Intel's H67 motherboard. Presently if you want to use Quick Sync you'll need to have an H67 motherboard. Hopefully Z68 + switchable graphics will fix this in Q2.

Take care,

Anand

7Enigma - Monday, January 3, 2011 - link

I think this needs to be a front page comment because it is a serious deficiency that all of your reviews fail to properly describe. I read them all and it wasn't until the comments came out that this was brought to light. Seriously SNB is a fantastic chip but this CPU/mobo issue is not insignificant for a lot of people.

Wurmer - Monday, January 3, 2011 - link

I haven't read through all the comments and sorry if it's been said but I find it weird that the most "enthusiast" chip, the K, comes with the better IGP when most people buying this chip will for the most part end up buying a discrete GPU.

Akv - Monday, January 3, 2011 - link

It's being said in reviews from China to France to Brazil, etc.

nimsaw - Monday, January 3, 2011 - link

Strangely enough I also have the same query. What is the point of better integrated graphics when you cannot use them on a P67 mobo?

Also I came across this screen shot

http://news.softpedia.com/newsImage/Intel-Sandy-Br...

where on the right hand corner you have a drop down menu which has Intel Quick Sync selected. Will you see a discrete GPU if you expand it? Does it not mean switching between graphics solutions? In the review it's mentioned that switchable graphics is still to find its way into desktop mobos.

sticks435 - Tuesday, January 4, 2011 - link

It looks like that drop down is dithered, which means it's only displaying the QS system at the moment, but has a possibility to select multiple options in the future or maybe if you had 2 graphics cards etc.

HangFire - Monday, January 3, 2011 - link

You are comparing video and not chipsets, right?

I also take issue with the statement that the 890GX (really HD 4290) is the current onboard video cream of the crop. Test after test (on other sites) show it to be a bit slower than the HD4250, even though it has higher specs.

I also think Intel is going to have a problem with folks comparing their onboard HD3000 to AMD's HD 4290, it just sounds older and slower.

No word on Linux video drivers for the new HD2000 and HD3000? Considering what a mess KMS has made of the old i810 drivers, we may be entering an era where accelerated onboard Intel video is no longer supported on Linux.

mino - Wednesday, January 5, 2011 - link

Actually, 890GX is just a re-badged 780G from 2008 with sideport memory.

And no, HD4250 is NOT faster. While some specific implementation of 890GX without sideport _might_ be slower, it would also be cheaper and not really a "proper" representative.

(890GX without sideport is like saying an i3 with dual channel RAM is "faster" in games than an i5 with single channel RAM ...)