The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Quick Sync: The Best Way to Transcode

Currently Intel’s Quick Sync transcode is only supported by two applications: Cyberlink’s Media Espresso 6 and Arcsoft’s Media Converter 7. Both of these are video-to-go applications targeted at users who want to take high resolution/high bitrate content and transcode it to more compact formats for use on smartphones, tablets, media streamers and gaming consoles. The intended market is not users who are attempting to make high quality archives of Blu-ray content. As a result, there’s no support for multi-channel audio; both applications are limited to 2-channel MP3 or AAC output. There’s also no support for transcoding to anything higher than the main profile of H.264.

Intel indicates that these are not hardware limitations of Quick Sync, but rather limitations of the transcoding software. To that extent, Intel is working with developers of video editing applications to bring Quick Sync support to applications that have a more quality-oriented usage model. These applications are using Intel’s Media SDK 2.0 which is publicly available. Intel says that any developer can get access to and use it.

For the purposes of this comparison I’ve used Media Converter 7, but that’s purely a personal preference thing. The performance and image quality should be roughly identical between the two applications as they both use the same APIs. Jarred's look at Mobile Sandy Bridge will focus on MediaEspresso.

Where image quality isn’t consistent, however, is between transcoding methods in either application. Both applications support four codepaths: ATI Stream, Intel Quick Sync, NVIDIA CUDA, and x86. While you can set any of these codepaths to the same transcoding settings, the method by which they arrive at the transcoded image will differ. This makes sense given how different all four target architectures are (e.g. a Radeon HD 6870 doesn’t look anything like an NVIDIA GeForce GTX 460). Each codepath makes a different set of performance vs. quality tradeoffs which we’ll explore in this section.

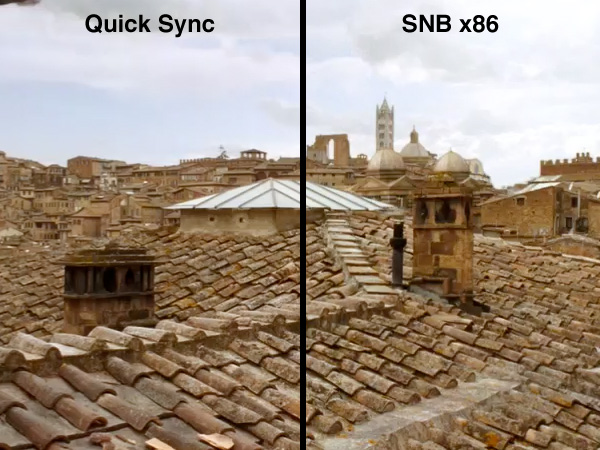

The first, less obvious difference is that transcoding on the Sandy Bridge CPU cores instead of Quick Sync actually produces a different image. The image quality is slightly better on the x86 path, but the two are similar.

The reason for the image quality difference is easy to understand. CPUs are inherently not very parallel beasts. We get tremendous speedup on highly parallel tasks on multi-core CPUs, but compared to a GPU’s ability to juggle hundreds or thousands of threads, even a 6-core CPU doesn’t look too wide. As a result of this serial vs. parallel difference, transcoding algorithms optimized for CPUs are very computationally efficient. They have to be, because you can’t rely on hundreds of cores running in parallel when you’re running on a CPU.

Take the same code and run it on a GPU and you’ll find that the majority of your execution resources are wasted. A new codepath is needed that can take advantage of the greater amount of compute at your disposal. For example, a GPU can evaluate many different compression modes in parallel whereas on a CPU you generally have to pick a balance between performance and quality up front regardless of the content you’re dealing with.
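To make the point about evaluating many compression modes concrete, here is a toy sketch. It is not how Quick Sync, CUDA or any real encoder is implemented: the 4-pixel block, the three prediction modes and every function name are made up for illustration. It scores each candidate mode by sum of absolute differences and contrasts committing to one mode up front with scoring every candidate, which is the kind of independent work that wide hardware could run in parallel.

```python
# Toy illustration only: hypothetical block, hypothetical prediction modes.

def sad(block, prediction):
    """Sum of absolute differences between a block and its prediction."""
    return sum(abs(a - b) for a, b in zip(block, prediction))

def predict(mode, left, top):
    """Build a 4-pixel prediction from neighboring pixels (illustrative modes)."""
    if mode == "dc":          # flat prediction from the average of the neighbors
        avg = (left + sum(top)) // (1 + len(top))
        return [avg] * 4
    if mode == "horizontal":  # repeat the pixel to the left
        return [left] * 4
    if mode == "vertical":    # copy the pixels above
        return list(top)
    raise ValueError(mode)

MODES = ["dc", "horizontal", "vertical"]

def fixed_mode_cost(block, left, top, mode="dc"):
    """Commit to one mode up front, regardless of content."""
    return mode, sad(block, predict(mode, left, top))

def best_mode_cost(block, left, top):
    """Score every candidate and keep the cheapest.
    Each candidate is independent, so the scoring could run in parallel."""
    costs = {m: sad(block, predict(m, left, top)) for m in MODES}
    best = min(costs, key=costs.get)
    return best, costs[best]

block = [10, 20, 30, 40]
left, top = 12, [11, 19, 31, 42]
print(fixed_mode_cost(block, left, top))  # one mode, chosen blindly
print(best_mode_cost(block, left, top))   # cheapest of all candidates
```

In this made-up case the exhaustive search finds a much cheaper prediction than the fixed choice, at the cost of three times the scoring work.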

There’s also one more basic difference between code running on the CPU vs. integrated GPU. At least in Intel’s case, certain math operations can be performed with higher precision on Sandy Bridge’s SSE units vs. the GPU’s EUs.

Intel tuned the PSNR of the Quick Sync codepath to be as similar to the x86 codepath as possible. The result is, as I mentioned above, quite similar.
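For readers unfamiliar with the metric, PSNR (peak signal-to-noise ratio) is the standard way to put a number on how far an encoded frame drifts from its reference. The sketch below is the textbook definition for 8-bit samples, not Intel's tuning procedure, and the sample pixel values are invented for the example.

```python
import math

def psnr(reference, encoded, peak=255):
    """PSNR in dB between two equal-length sequences of 8-bit pixel values."""
    if len(reference) != len(encoded):
        raise ValueError("frames must be the same size")
    # Mean squared error over all samples.
    mse = sum((r - e) ** 2 for r, e in zip(reference, encoded)) / len(reference)
    if mse == 0:
        return math.inf  # identical frames
    return 10 * math.log10(peak ** 2 / mse)

# Invented sample data: one reference row and two encodes of it.
reference = [100, 100, 100, 100]
close_encode = [101, 99, 100, 100]
rough_encode = [110, 90, 105, 95]

print(psnr(reference, close_encode))
print(psnr(reference, rough_encode))
```

A higher value means the encode is numerically closer to the reference. Two codepaths tuned to similar PSNR can still look different to the eye, which is why the screenshots matter.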

Now let’s tackle the other GPUs. When I first started my Quick Sync investigations I did a little experiment. Without forming any judgments of my own, I quickly transcoded a ~15Mbps 1080p movie into an iPhone 4 compatible 720p H.264 at 4Mbps. I then trimmed it down to a single continuous 4 minute scene and passed the movie along to six AnandTech editors. I sent the editors three copies of the 4 minute scene: one transcoded on a GeForce GTX 460, one using Intel’s Quick Sync, and one using the standard x86 codepath. I named the three movies numerically and told no one which platform was responsible for each output. All I asked for was feedback on which ones they thought were best.

Here are some of the comments I received:

“Wow... there are some serious differences in quality. I'm concerned that the 1.mp4 is the accelerated transcode, in which case it looks like poop..”

“Video 1: Lots of distracting small compression blocks, as if the grid was determined pre-encoding (I know that generally there are blocks, but here the edges seem to persist constantly). Persistent artifacts after black. Quality not too amazing, I wouldn't be happy with this.”

Video one, which many assumed was Quick Sync, actually came from the GeForce GTX 460. The CUDA codepath, although extremely fast, actually produces a much worse image. Videos 2 and 3 were outputs from Sandy Bridge, and the editors generally didn’t agree on which one of those two looked better, just that they were definitely better than the first video.

To confirm whether or not this was a fluke I set up three different transcodes. Lossy video compression is hard to get right when you’re transcoding scenes that are changing quickly, so I focused on scenes with significant movement.

The first transcode involves taking the original Casino Royale Blu-ray, stripping it of its DRM using AnyDVD HD, and feeding that into MC7 as a source. The output in this case was a custom profile: 15Mbps 1080p main profile H.264. This is an unrealistic usage model simply because the output file only had 2-channel audio, making it suitable only for PC use and likely a waste of bitrate. I simply wanted to see how the various codepaths looked and performed with an original BD source.

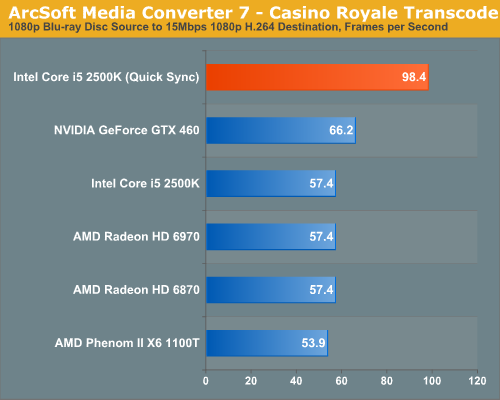

Let’s look at performance first. The entire movie has around 200,000 frames; the transcoding frame rate is below:

As we’ve been noting in our GPU reviews for quite some time now, there’s no advantage to transcoding on a GPU faster than the $200 mainstream parts. Remember that the transcode process isn’t infinitely parallel; we are ultimately bound by the performance of the sequential components of the algorithm. As a result, the Radeon HD 6970 offers no advantage over the 6870 here. Both of these AMD GPUs end up being just as fast as a Core i5-2500K.
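The sequential-bottleneck argument is Amdahl's law. A minimal sketch follows; the 80% parallel fraction is an assumption picked purely for illustration, not a measured property of any transcoder tested here.

```python
def amdahl_speedup(parallel_fraction, parallel_speedup):
    """Overall speedup when only the parallel part of the work gets faster."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / parallel_speedup)

# Assumed for illustration: 80% of the transcode parallelizes.
PARALLEL_FRACTION = 0.8

for gpu_speedup in (10, 20, 1000):
    overall = amdahl_speedup(PARALLEL_FRACTION, gpu_speedup)
    print(f"parallel part {gpu_speedup}x faster -> overall {overall:.2f}x")
```

Under that assumption the overall gain can never reach 5x no matter how wide the GPU is, so doubling the parallel hardware buys very little once the serial 20% dominates.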

NVIDIA’s GPUs offer a 15.7% performance advantage, but as I mentioned earlier, the advantage comes at the price of decreased quality (which we’ll get to in a moment).

Intel’s Quick Sync is untouchable though. It’s 48% faster than NVIDIA’s GeForce GTX 460 and 71% faster than the Radeon HD 6970. I don’t want to proclaim that discrete GPU based transcoding is dead, but based on these results it sure looks like it. What about image quality?
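The percentages quoted above compose multiplicatively, which gives a quick sanity check. The sketch assumes NVIDIA's 15.7% advantage is measured against the Radeon HD 6970 result; that reading is an inference from the text, since the chart itself is not reproduced here.

```python
def compose(*ratios):
    """Multiply relative-speed ratios together (e.g. A/B times B/C gives A/C)."""
    result = 1.0
    for ratio in ratios:
        result *= ratio
    return result

nvidia_over_amd = 1.157        # "15.7% performance advantage" (assumed vs. the 6970)
quicksync_over_nvidia = 1.48   # "48% faster than NVIDIA's GeForce GTX 460"

quicksync_over_amd = compose(nvidia_over_amd, quicksync_over_nvidia)
percent_faster = (quicksync_over_amd - 1) * 100
print(round(percent_faster))   # prints 71
```

The result lines up with the 71% figure quoted for Quick Sync over the Radeon HD 6970.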

My image quality test scene isn’t anything absurd. Bond and Vesper are about to meet Mathis for the first time. Mathis walks towards the two and the camera pans to follow him. With only one character and the camera both moving at a predictable rate, using some simple motion estimation most high quality transcoders should be able to handle this scene without getting tripped up too much.

| Intel Core i5-2500K (x86) | Intel Quick Sync | NVIDIA GeForce GTX 460 | AMD Radeon HD 6870 |
| --- | --- | --- | --- |
| Download: PNG | Download: PNG | Download: PNG | Download: PNG |

Comparing the shots above the only real outlier is NVIDIA’s GeForce GTX 460. The CUDA path clearly errs on the side of performance vs. quality and produces a far noisier image. The ATI Stream codepath produces an image that’s very close to the standard x86 and Quick Sync output. In fact, everything but the GTX 460 does well here.

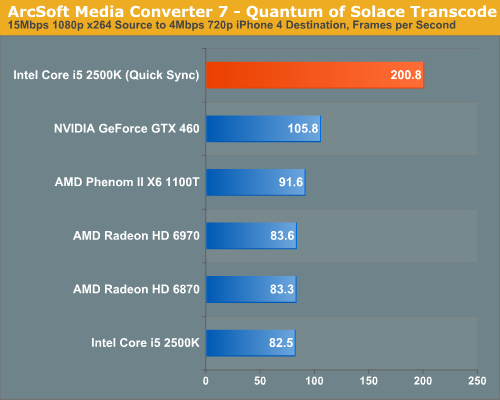

The next test uses an already transcoded 15Mbps 1080p x264 rip of Quantum of Solace Blu-ray disc. For many this is likely what you’ll have stored on your movie server rather than a full 50GB Blu-ray rip. Our destination this time is the iPhone 4. The settings are as follows: 4Mbps 720p H.264.

At only 4Mbps there’s a lot of compression going on, so image quality isn’t going to be nearly as good as in the previous test. Performance is considerably higher as the encoders are able to discard more data and optimize for performance over absolute quality. The entire movie has 152,000 frames that are transcoded in this test:

The six-core Phenom II X6 1100T is faster than the Core i5-2500K thanks to the latter’s lack of Hyper-Threading. Both are around the speed of the Radeon HD 6870.

The GeForce GTX 460 is faster than any standalone x86 CPU, regardless of core count. However once again, Quick Sync blows them all out of the water. At 200 frames per second Quick Sync is more than twice the speed of a standard Core i5-2500K or even the Phenom II X6 1100T. And it’s nearly twice as fast as the GTX 460.

The image quality comparison scene is also far more stressful on the transcoders. There’s a lot of unpredictable movement going on as Bond is in pursuit of a double agent at the beginning of the film.

| Intel Core i5-2500K (x86) | Intel Quick Sync | NVIDIA GeForce GTX 460 | AMD Radeon HD 6870 |
| --- | --- | --- | --- |
| Download: PNG | Download: PNG | Download: PNG | Download: PNG |

The image quality story is about the same for AMD’s GPUs and the x86 path; however, Quick Sync delivers a noticeably worse quality image. It’s nowhere near as bad as the GTX 460, but it’s just not as sharp as what you get from the software or ATI Stream codepaths.

The problem here seems to be that when transcoding from a lower quality source, the tradeoffs NVIDIA makes are amplified. Even Quick Sync isn’t perfect here. I’d say Quick Sync is closer to the pure x86 path than CUDA. Given the tremendous performance advantage I’d say the tradeoff is probably worth it in this case.

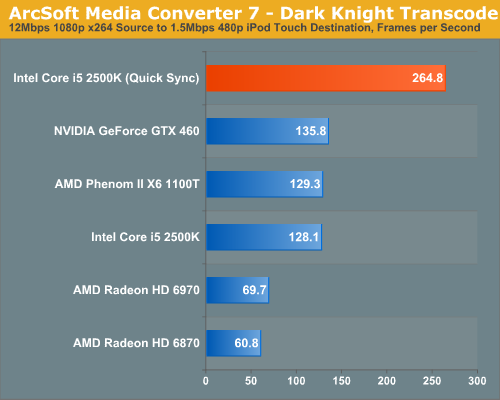

For our final test we’ve got a 12Mbps 1080p x264 rip of The Dark Knight. Our target this time is a 640x480, 1.5Mbps iPod Touch compatible format.

Surprisingly enough the 6970 shows a slight performance advantage compared to the 6870 in this test, but still not enough to approach the speed of the x86 CPUs in this test. Quick Sync is almost 4x faster than the Radeon HD 6970 and twice as fast as everything else.

Our Dark Knight image quality test is also the most strenuous of the review. We’re looking at a very dark, high motion scene with a sudden explosion. The frame we’re looking at is right after the Joker fires a rocket at the rear of a police car. The sudden explosion casts light everywhere which can’t be predicted based on the previous frame.

| Intel Core i5-2500K (x86) | Intel Quick Sync | NVIDIA GeForce GTX 460 | AMD Radeon HD 6870 |
| --- | --- | --- | --- |
| Download: PNG | Download: PNG | Download: PNG | Download: PNG |

The GeForce GTX 460 looks horrible here. The output looks like an old film; it’s simply inexcusable.

The Radeon HD 6870 produces a frame that has similar sharpness to the x86 codepath, but with muted colors. Quick Sync maintains color fidelity but loses the sharpness of the x86 path, similar to what we saw in the previous test. In this case the loss of sharpness does help smooth out some aliasing in the paint on the police car but otherwise is undesirable.

Overall, based on what I’ve seen in my testing of Quick Sync, it isn’t perfect but it does deliver a good balance of image quality and performance. With Quick Sync enabled you can transcode a ~2.5 hour Blu-ray disc in around 35 minutes. If you’ve got a lower quality source (e.g. a 15GB Blu-ray re-encode), you can plan on doing a full movie in around 13 minutes. Quick Sync will chew through TV shows in a couple of minutes, without a tremendous loss in quality.
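The time estimates follow from simple frames-over-frame-rate arithmetic. The sketch below uses only figures quoted in this section (152,000 frames at 200 frames per second, and roughly 200,000 frames in around 35 minutes). The implied Blu-ray frame rate is derived from those two numbers, not a measured result, and the function names are just for illustration.

```python
def transcode_minutes(frames, fps):
    """Minutes needed to transcode `frames` at a sustained rate of `fps`."""
    return frames / fps / 60

def implied_fps(frames, minutes):
    """Average frame rate implied by finishing `frames` in `minutes`."""
    return frames / (minutes * 60)

# Lower quality source: 152,000 frames at the quoted 200 fps.
print(round(transcode_minutes(152_000, 200), 1))  # prints 12.7

# Blu-ray source: about 200,000 frames in about 35 minutes.
print(round(implied_fps(200_000, 35)))            # prints 95
```

So "around 13 minutes" is 152,000 frames at 200 frames per second, and the 35 minute Blu-ray figure works out to an average of roughly 95 frames per second.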

With CUDA on NVIDIA GPUs we had to choose between high quality or high performance. (Perhaps other applications will do the transcode better as well, but at least Arcsoft's Media Converter 7 has serious image quality problems with CUDA.) With Quick Sync you can have both, and better performance than we’ve ever seen from any transcoding solution in desktops or notebooks.

Quick Sync with a Discrete GPU

There’s just one hangup to all of this Quick Sync greatness: it only works if the processor’s GPU is enabled. In other words, on a desktop with a single monitor connected to a discrete GPU, you can’t use Quick Sync.

This isn’t a problem for mobile since Sandy Bridge notebooks should support switchable graphics, meaning you can use Quick Sync without waking up the discrete GPU. However there’s no standardized switchable graphics for desktops yet. Intel indicated that we may see some switchable solutions in the coming months on the desktop, but until then you either have to use the integrated GPU alone or run a multimonitor setup with one monitor connected to Intel’s GPU in order to use Quick Sync.

283 Comments


-=Hulk=- - Monday, January 3, 2011 - link

That's crazy, are the chipsets PCI-e line still limited to v1 (250MB/s) speed or what????

http://images.anandtech.com/reviews/cpu/intel/sand...

mino - Monday, January 3, 2011 - link

No, you read it wrong.

There are altogether 8 PCIe 2.0 lines and all can be used independently, aka "PCIe x1".

The CPU-Chipset bandwidth however is a basic PCIe x4 link, so do not expect wonders if more devices are in heavy use ...

-=Hulk=- - Monday, January 3, 2011 - link

No!

Look at the PCI-e x16 from the CPU. Intel indicates a bandwidth of 16GB/s per line. That means 1GB/s per line.

But PCI-e v2 has a bandwidth of 500MB/s per line only. That means that the values that Intel indicates for the PCI-e lines are the sum of the upload AND download bandwidth of the PCI-e.

That means that the PCI-e lines of the chipset run at 250MB/s speed! That is the bandwidth of PCI-e v1, and Intel has done the same bullshit with the P55/H57: it indicates that they are PCI-e v2 but limits their speed to the values of PCI-e v1:

P55 chipset (look at the 2.5GT/s !!!) :

"PCI Express* 2.0 interface:

Offers up to 2.5GT/s for fast access to peripheral devices and networking with up to 8 PCI Express* 2.0 x1 ports, configurable as x2 and x4 depending on motherboard designs.

http://www.intel.com/products/desktop/chipsets/p55... "

P55, also 500MB/s per line as for the P67

http://benchmarkreviews.com/images/reviews/motherb...

Even for the ancient ICH7 Intel indicates 500MB/s per line, but at that time PCI-e v2 didn't even exist... That's because it's the sum of the upload and download speed of the PCI-e v1.

http://img.tomshardware.com/us/2007/01/03/the_sout...

DanNeely - Monday, January 3, 2011 - link

Because 2.0 speed for the southbridge lanes has been reported repeatedly (along with a 2x speed DMI bus to connect them), my guess is an error when making the slides with bidirectional BW listed on the CPU and unidirectional BW on the southbridge.

jmunjr - Monday, January 3, 2011 - link

Intel's sell out to big media and putting DRM in Sandy Bridge means I won't be getting one of these puppies. I don't care how fast it is...

Exodite - Monday, January 3, 2011 - link

Uh, what exactly are you referencing?

If it's TXT it's worth noting that the interesting chips, the 2500K and 2600K, don't even support it.

chirpy chirpy - Tuesday, January 11, 2011 - link

I think the OP is referring to Intel Insider, the not-so-secret DRM built into the Sandy Bridge chips. I can't believe people are overlooking the fact that Intel is attempting to introduce DRM at the CPU level and all everyone has to say is "wow, I can't WAIT to get one of dem shiny new uber fast Sandy Bridges!"

I for one applaud and welcome our benevolent DRM overlords.....

http://www.pcmag.com/article2/0,2817,2375215,00.as...

nuudles - Monday, January 3, 2011 - link

I have a q9400, if I compare it to the 2500K in bench and average (straight average) all scores the 2500K is 50% faster. The 2500K has a 24% faster base clock, so all the architecture improvements plus faster RAM, more cache and turbo mode gained only ~20% or so on average, which is decent but not awesome taking into account the c2q is a 3+ year old design (or is it 4 years?). I know that the idle power is significantly lower due to power gating so due to hurry up and wait it consumes less power (can't remember c2q 45nm load power, but it was not much higher than these Core 2011 chips).

So 50%+ faster sounds good (both chips occupy the same price bracket), but after equating clock speeds (yes it would increase load and idle power on the c2q) the improvement is not massive but still noticeable.

I will be holding out for Bulldozer (possibly slightly slower, especially in lightly threaded workloads?) or Ivy Bridge as mine is still fast enough to do what I want, rather spend the money on adding a SSD or better graphics card.

7Enigma - Monday, January 3, 2011 - link

I think the issue with the latest launch is the complete and utter lack of competition for what you are asking. Anand's showed that the OC'ing headroom for these chips is fantastic.....and due to the thermals even possible (though not recommended by me personally) on the stock low-profile heatsink.

That tells you that they could have significantly increased the performance of this entire line of chips but why should they when there is no competition in sight for the near future (let's ALL hope AMD really produces a winner in the next release) or we're going to be dealing with a plodding approach with INTEL for a while. In a couple months when the gap shrinks (again hopefully by a lot) they simply release a "new" batch with slightly higher turbo frequencies (no need to up the base clocks as this would only hurt power consumption with little/no upside), and bam they get essentially a "free" release.

It stinks as a consumer, but honestly probably hurts us enthusiasts the least since most of us are going to OC these anyways if purchasing the unlocked chips.

I'm still on a C2D @ 3.85GHz but I'm mainly a gamer. In a year or so I'll probably jump on the respin of SDB with even better thermals/OC potential.

DanNeely - Monday, January 3, 2011 - link

CPUs need to be stable in Joe Sixpack's unairconditioned trailer in Alabama during August after the heatsink is crusted in cigarette tar and dust, in one of the horrible computer desks that stuff the tower into a cupboard with just enough open space in the back for wires to get out; not just in a 70F room where all the dust is blown out regularly and the computer has good airflow. Unless something other than temperature is the limiting factor on OC headroom that means that large amounts of OCing can be done easily by those of us who take care of their systems.

Since Joe also wants to get 8 or 10 years out of his computer before replacing it the voltages need to be kept low enough that electromigration doesn't kill the chip after 3 or 4. Again that's something that most of us don't need to worry about much.