AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Tweaking PowerTune

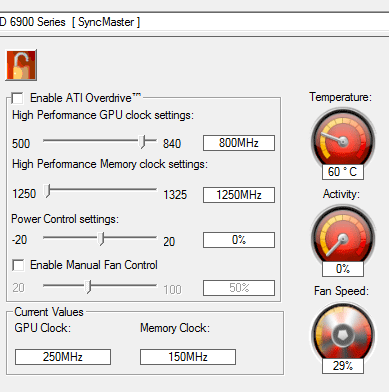

While the primary purpose of PowerTune is to keep the power consumption of a video card within its TDP in all cases, AMD has realized that PowerTune isn’t necessarily something everyone wants, and so they’re making it adjustable in the Overdrive control panel. With Overdrive you’ll be able to adjust the PowerTune limits both up and down by up to 20% to suit your needs.

We’ll start with the case of increasing the PowerTune limits. While AMD does not allow users to completely turn off PowerTune, they’re offering the next best thing by allowing you to increase the PowerTune limits. Acknowledging that not everyone wants to keep their cards at their initial PowerTune limits, AMD has included a slider with the Overdrive control panel that allows +/- 20% adjustment to the PowerTune limit. In the case of the 6970 this means the PowerTune limit can be adjusted to anywhere between 200W and 300W, the latter being the ATX spec maximum.
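The slider arithmetic above is easy to check. The following is a minimal sketch, not anything AMD ships: the function name `powertune_limit` and the constants are hypothetical, and the only inputs are the 250W stock limit and the +/- 20% range quoted in this article.

```python
STOCK_LIMIT_W = 250        # stock PowerTune limit for the 6970, per the article
MAX_ADJUST_PERCENT = 20    # Overdrive slider range, per the article


def powertune_limit(stock_w: int, adjust_percent: int) -> float:
    """Return the PowerTune limit in watts after a slider adjustment.

    Hypothetical helper for illustration only; it models the slider as a
    simple percentage applied to the stock limit.
    """
    if abs(adjust_percent) > MAX_ADJUST_PERCENT:
        raise ValueError(f"adjustment must be within +/-{MAX_ADJUST_PERCENT}%")
    return stock_w * (100 + adjust_percent) / 100


if __name__ == "__main__":
    for pct in (-20, 0, 20):
        print(f"{pct:+d}% -> {powertune_limit(STOCK_LIMIT_W, pct):.0f}W")
```

Working in whole percentage points keeps the arithmetic exact for the values used here, which is why the adjustment is passed as an integer rather than a fraction.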

Ultimately the purpose of raising the PowerTune limit depends on just how far you raise it. A slight increase can bring a slight performance advantage in any game/application that is held back by PowerTune, while going the whole nine yards to 20% is for all practical purposes disabling PowerTune at stock clocks and voltages.

We’ve already established that at the stock PowerTune limit of 250W only FurMark and Metro 2033 are PowerTune limited, with only the former limited in any meaningful way. So with that in mind we increased our PowerTune limit to 300W and re-ran our power/temperature/noise tests to look at the full impact of using the 300W limit.

| Radeon HD 6970: PowerTune Performance | PowerTune 250W | PowerTune 300W |
|---|---|---|
| Crysis Temperature | 78 | 79 |
| FurMark Temperature | 83 | 90 |
| Crysis Power | 340W | 355W |
| FurMark Power | 361W | 422W |

As expected, power and temperature both increase with FurMark with PowerTune at 300W. At this point FurMark is no longer constrained by PowerTune and our 6970 runs at 880MHz throughout the test. Overall our power consumption measured at the wall increased by 60W, while the core clock for FurMark is 46.6% faster. It was under this scenario that we also “uncapped” PowerTune for Metro, when we found that even though Metro was being throttled at times, the performance impact was impossibly small.

Meanwhile we found something interesting when running Crysis. Even though Crysis is not impacted by PowerTune, Crysis’ power consumption still crept up by 15W. Performance is exactly the same, and yet here we are with slightly higher power consumption. We don’t have a good explanation for this at this point – PowerTune only affects the core clock (and not the core voltage), and we never measured Crysis taking a hit at 250W or 300W, so we’re not sure just what is going on. However we’ve already established that FurMark is the only program realistically impacted by the 250W limit, so at stock clocks there’s little reason to increase the PowerTune limit.
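For readers who want to reproduce the deltas quoted above, here is a small sketch that recomputes them from the table. The dictionary layout and the name `power_delta` are assumptions made for illustration; the readings are the wall-power figures from this page, so they describe the whole test system rather than the card alone.

```python
# Wall power in watts, keyed by workload and then by PowerTune limit (W).
# Values are copied from the 250W vs. 300W table in this article.
WALL_POWER_W = {
    "Crysis": {250: 340, 300: 355},
    "FurMark": {250: 361, 300: 422},
}


def power_delta(workload: str, from_limit: int, to_limit: int) -> int:
    """Change in measured wall power when moving between two limits."""
    readings = WALL_POWER_W[workload]
    return readings[to_limit] - readings[from_limit]


if __name__ == "__main__":
    for name in WALL_POWER_W:
        print(f"{name}: {power_delta(name, 250, 300):+d}W at the wall")
```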

This does bring up overclocking however. Due to the limited amount of time we had with the 6900 series we have not been able to do a serious overclocking investigation, but as clockspeed is a factor in the power equation, PowerTune is going to impact overclocking. You’re going to want to raise the PowerTune limit when overclocking, otherwise PowerTune is liable to bring your clocks right back down to keep power consumption below 250W. The good news for hardcore overclockers is that while AMD set a 20% limit on our reference cards, partners will be free to set their own tweaking limits – we’d expect high-end cards like the Gigabyte SOC, MSI Lightning, and Asus Matrix lines to all feature higher limits to keep PowerTune from throttling extreme overclocks.

Meanwhile there’s a second scenario AMD has thrown at us for PowerTune: tuning down. Although we generally live by the “more is better” mantra, there is some logic to this. Going back to our dynamic range example, by shrinking the dynamic power range, power hogs at the top of the spectrum get pushed down, but thanks to AMD’s ability to use higher default core clocks, power consumption of low impact games and applications goes up. In essence power consumption gets just a bit worse because performance has improved.

Traditionally V-sync has been used as the preferred method of limiting power consumption by limiting a card’s performance, but V-sync introduces additional input lag and the potential for skipped frames when triple-buffering is not available, making it a suboptimal solution in some cases. Thus if you wanted to keep a card at a lower performance/power level for any given game/application but did not want to use V-sync, you were out of luck unless you wanted to start playing with core clocks and voltages manually. By being able to turn down the PowerTune limits however, you can now constrain power consumption and performance on a simpler basis.

As with the 300W PowerTune limit, we ran our power/temperature/noise tests with the 200W limit to see what the impact would be.

| Radeon HD 6970: PowerTune Performance | PowerTune 250W | PowerTune 200W |
|---|---|---|
| Crysis Temperature | 78 | 71 |
| FurMark Temperature | 83 | 71 |
| Crysis Power | 340W | 292W |
| FurMark Power | 361W | 292W |

Right off the bat everything is lower. FurMark is now at 292W, and quite surprisingly Crysis is also at 292W. This plays off of the fact that most games don’t cause a card to approach its limit in the first place, so bringing the ceiling down will bring the power consumption of more power hungry games and applications down to the same power consumption levels as lesser games/applications.

Although not whisper quiet, our 6970 is definitely quieter at the 200W limit than the default 250W limit thanks to the lower power consumption. However the 200W limit also impacts practically every game and application we test, so performance is definitely going to go down for everything if you do reduce the PowerTune limit by the full 20%.

| Radeon HD 6970: PowerTune Crysis Performance | PowerTune 250W | PowerTune 200W |
|---|---|---|
| 2560x1600 | 36.6 | 28 |
| 1920x1200 | 51.5 | 43.3 |
| 1680x1050 | 63.3 | 52 |

At 200W, you’re looking at roughly 76% to 84% of the stock performance for Crysis, depending on resolution. The exact value will depend on just how heavy of a load the specific game/application was in the first place.
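The relative performance at each resolution can be recomputed directly from the Crysis table. This is a rough sketch using only the numbers on this page; the name `relative_performance` and the data layout are hypothetical.

```python
# Crysis results from the table above: (250W limit, 200W limit).
CRYSIS_RESULTS = {
    "2560x1600": (36.6, 28.0),
    "1920x1200": (51.5, 43.3),
    "1680x1050": (63.3, 52.0),
}


def relative_performance(stock: float, limited: float) -> float:
    """Performance at the reduced limit as a fraction of stock."""
    return limited / stock


if __name__ == "__main__":
    for resolution, (stock, limited) in CRYSIS_RESULTS.items():
        ratio = relative_performance(stock, limited)
        print(f"{resolution}: {ratio:.1%} of stock performance")
```

The ratios differ by resolution, which fits the point that the impact depends on how heavy the load was to begin with.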

168 Comments


529th - Sunday, December 19, 2010 - link

Great job on this review. Excellent writing and easy to read. Thanks

marc1000 - Sunday, December 19, 2010 - link

yes, that's for sure. we will have to wait a little to see improvements from VLIW4. but my point is the "VLIW processors" count, they went up by 20%. with all other improvements, I was expecting a little more performance, just that. but on the other hand, I was reading the graphs, and decided that 6950 will be my next card. it has double the performance of 5770 in almost all cases. that's good enough for me.

Iketh - Friday, December 24, 2010 - link

This is how they've always reviewed new products? And perhaps the biggest reason AT stands apart from the rest? You must be new to AT??

WhatsTheDifference - Sunday, December 26, 2010 - link

the 4890? I see every nvidia config, never a card overlooked there, ever, but the ATI's (then) top card is conspicuously absent. long as you include the 285, there's really no excuse for the omission. honestly, what's the problem?

PeteRoy - Friday, December 31, 2010 - link

All games released today are in the graphic level of the year 2006, how many games do you know that can bring the most out of this card? Crysis from 2007?

Hrel - Tuesday, January 11, 2011 - link

So when are all these tests going to be re-run at 1920x1080 cause quite frankly that's what I'm waiting for. I don't care about any resolution that doesn't work on my HDTV. I want 1920x1080, 1600x900 and 1280x720. If you must include uber resolutions for people with uber money then whatever; but those people know to just buy the fastest card out there anyway so they don't really need performance numbers to make up their mind. Money is no object so just buy nvidia's most expensive card and ur off.

AKP1973 - Thursday, October 13, 2011 - link

Have you guys noticed the "load GPU temp" of the 6870 in XFIRE?... It produced so very low heat than any enthusiast card in a multi-GPU setup. That's one of the best XFIRE card in our time today if you value price, performance, cool temp, and silence.!

Travisryno - Wednesday, April 26, 2017 - link

It's dishonest referring to enhanced 8x as 32x. There are industry standards for this, which AMD, NEC, 3DFX, SGI, SEGA AM2, etc..(everybody) always follow(ed), then nVidia just makes their own...Just look how convoluted it is..