AMD’s Radeon HD 6870 & 6850: Renewing Competition in the Mid-Range Market

by Ryan Smith on October 21, 2010 10:08 PM EST

For a while now we’ve been trying to establish a proper cross-platform compute benchmark suite to add to our GPU articles. It’s not been entirely successful.

While GPUs have been compute capable in some form since 2006 with the launch of G80, and AMD significantly improved their compute capabilities in 2009 with Cypress, the software has been slow to catch on. From gatherings such as NVIDIA’s GTC we’ve seen first-hand how GPU computing is being used in the high-performance computing market, but the consumer side hasn’t materialized as quickly: the right situations for using GPU computing aren’t as straightforward, and many developers are unwilling to tie themselves to a single platform in the process.

2009 saw the ratification of OpenCL 1.0 and the launch of DirectCompute, and while the launch of these cross-platform APIs removed some of the roadblocks, we heard as recently as last month from Adobe and others that there’s still work to be done before companies can confidently deploy GPU compute accelerated software. The immaturity of OpenCL drivers was cited as one cause; however, there’s also the fact that a lot of computers simply don’t have a suitable compute-capable GPU. It’s Intel that’s the world’s biggest GPU vendor, after all.

So here in the fall of 2010 our search for a wide variety of GPU compute applications hasn’t panned out quite like we expected it to. Widespread adoption of GPU computing in consumer applications is still around the corner, so for the time being we have to get creative.

With that in mind we’ve gone ahead and cooked up a new GPU compute benchmark suite based on the software available to us. On the consumer side we have the latest version of Cyberlink’s MediaEspresso video encoding suite and an interesting sub-benchmark from Civilization V. On the professional side we have SmallLuxGPU, an OpenCL-based ray tracer. We don’t expect this to be the be-all and end-all of GPU computing benchmarks, but it gives us a place to start and allows us to cover both cross-platform APIs and NVIDIA & AMD’s platform-specific APIs.

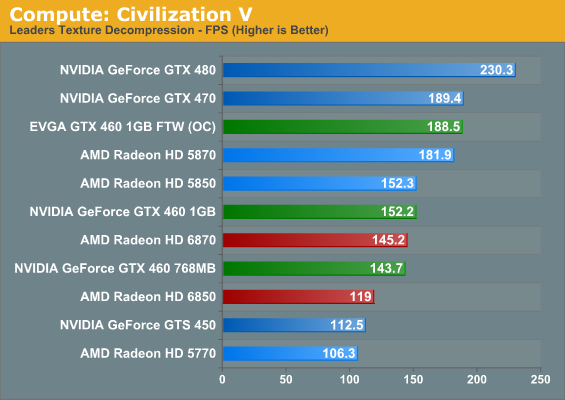

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of their texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes.

In our look at Civ V’s performance as a game, we noted that it favors NVIDIA’s GPUs at the moment, and this may be part of the reason why. NVIDIA’s GPUs clean up here, particularly when compared to the 6800 series and its reduced shader count. Furthermore, within each GPU family the results are very straightforward, with the order following the relative compute power of each GPU. To be fair to AMD, they made a conscious decision not to chase GPU computing performance with the 6800 series, but as a result it fares poorly here.

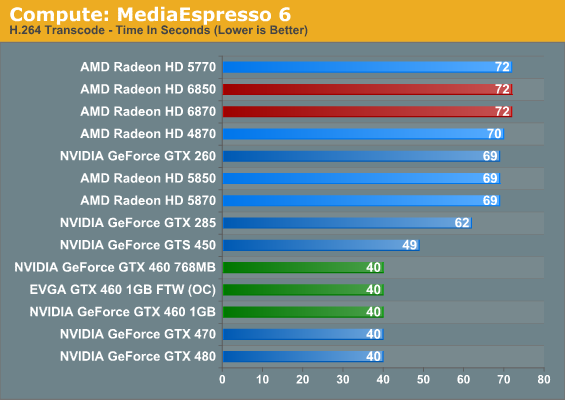

Our second compute benchmark is Cyberlink’s MediaEspresso 6, the latest version of their GPU-accelerated video encoding suite. MediaEspresso 6 doesn’t currently utilize a common API, and instead has codepaths for both AMD’s APP (née Stream) and NVIDIA’s CUDA APIs, which gives us a chance to test each API with a common program bridging them. As we’ll see this doesn’t necessarily mean that MediaEspresso behaves similarly on both AMD and NVIDIA GPUs, but for MediaEspresso users it is what it is.

We decided to go ahead and use MediaEspresso in this article not knowing what we’d find, and it turns out the results were both more and less than we were expecting at the same time. While our charts don’t show it, video transcoding isn’t all that GPU intensive with MediaEspresso; once we achieve a certain threshold of compute performance on a GPU (such as a GTX 460 in the case of an NVIDIA card), the rest of the process is CPU bottlenecked. As a result, all of our Fermi-based NVIDIA cards from the GTX 460 up take just as long to encode our sample video, and while the AMD cards show some stratification, it’s on the order of only a couple of seconds. From this it’s clear that with Cyberlink’s technology having a GPU is going to help, but it can’t completely offload what’s historically been a CPU-intensive activity.
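To make that plateau concrete, here is a toy model of a pipelined, GPU-assisted encode. It is only a sketch under stated assumptions, not a description of how Cyberlink’s software actually works: we assume the CPU and GPU stages overlap perfectly, so whichever stage is slower sets the pace, and every name and number in it (the function names, the 60 seconds of CPU work, the GPU work units) is hypothetical.

```python
"""Toy model of a pipelined GPU-assisted encode (all numbers hypothetical)."""


def encode_time(cpu_seconds: float, gpu_work: float, gpu_speed: float) -> float:
    """Wall-clock time when the CPU and GPU stages overlap in a pipeline.

    The slower stage sets the pace, so the encode can never finish faster
    than the CPU-bound portion, no matter how fast the GPU is.
    """
    gpu_seconds = gpu_work / gpu_speed
    return max(cpu_seconds, gpu_seconds)


def saturation_speed(cpu_seconds: float, gpu_work: float) -> float:
    """GPU speed beyond which a faster GPU no longer shortens the encode."""
    return gpu_work / cpu_seconds


if __name__ == "__main__":
    cpu_s, work = 60.0, 120.0  # hypothetical: 60 s of CPU work, 120 units of GPU work
    for speed in (1.0, 2.0, 4.0, 8.0):  # relative GPU throughput, work units per second
        print(f"GPU speed {speed:>4}: {encode_time(cpu_s, work, speed):6.1f} s")
    print("plateau reached at GPU speed", saturation_speed(cpu_s, work))
```

In this model, doubling GPU throughput from 1 to 2 halves the encode time, but every step past that point changes nothing, which is the same flattening we see from the GTX 460 on up.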

As for an AMD/NVIDIA cross comparison, the results are straightforward but not particularly enlightening. It turns out that MediaEspresso 6 is significantly faster on NVIDIA GPUs than it is on AMD GPUs, but since we’ve already established that MediaEspresso 6 is CPU limited when using these powerful GPUs, it doesn’t say anything about the hardware. AMD and NVIDIA both provide common GPU video encoding frameworks for their products that Cyberlink taps into, and it’s here where we believe the difference lies.

In particular, we see MediaEspresso 6 reach 50% CPU utilization (4 cores) when used with an NVIDIA GPU, while it only reaches 13% CPU utilization (1 core) with an AMD GPU. At this point it would appear that the CPU portions of NVIDIA’s GPU encoding framework are multithreaded while AMD’s framework is single-threaded. And since the performance bottleneck for video encoding still lies with the CPU, this would be why the NVIDIA GPUs do so much better than the AMD GPUs in this benchmark.
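Those utilization figures line up neatly with whole cores once you assume the test CPU exposes eight logical threads (a quad-core chip with Hyper-Threading). That thread count is our assumption for this sketch rather than something MediaEspresso reports, and the helper names are hypothetical. Utilization is then just busy threads divided by logical threads:

```python
"""Convert between task-manager CPU utilization and busy hardware threads."""

LOGICAL_THREADS = 8  # assumption: quad-core CPU with Hyper-Threading


def utilization_pct(busy_threads: float, logical_threads: int = LOGICAL_THREADS) -> float:
    """Overall CPU utilization when `busy_threads` threads are fully loaded."""
    return 100.0 * busy_threads / logical_threads


def busy_threads(utilization: float, logical_threads: int = LOGICAL_THREADS) -> float:
    """Approximate number of fully loaded threads behind a utilization reading."""
    return utilization / 100.0 * logical_threads


if __name__ == "__main__":
    print(utilization_pct(4))  # NVIDIA path: four busy threads
    print(utilization_pct(1))  # AMD path: one busy thread
    print(busy_threads(13))    # the 13% reading maps back to roughly one thread
```

Four fully loaded threads come out to 50%, and a single thread comes out to 12.5%, which is the 13% we observed after rounding.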

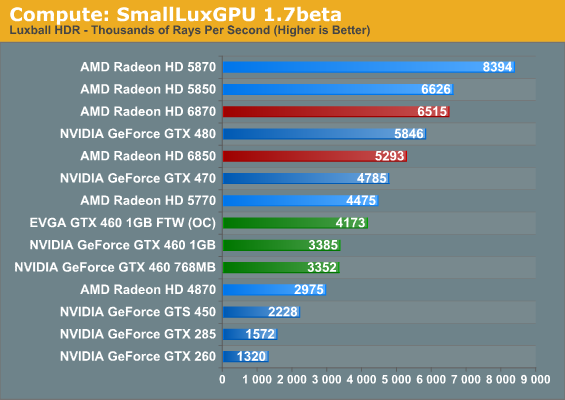

Our final GPU compute benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. While it’s still in beta, SmallLuxGPU recently hit a milestone by implementing a complete ray tracing engine in OpenCL, allowing them to fully offload the process to the GPU. It’s this ray tracing engine we’re testing.

Compared to our other two GPU computing benchmarks, SmallLuxGPU follows the theoretical performance of our GPUs much more closely. As a result, our Radeon GPUs, with their difficult-to-utilize VLIW5 design, end up topping the charts by a significant margin, while the fastest comparable NVIDIA GPU is still 10% slower than the 6850. Ultimately, what we’re looking at amounts to a best-case scenario for these GPUs, and this is as good an example as any that in the right circumstances AMD’s VLIW5 shader design can go toe-to-toe with NVIDIA’s compute-focused design and still win.
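“Theoretical performance” here means peak shader throughput, conventionally estimated as ALU count × 2 FLOPs (one multiply-add per clock) × shader clock. The sketch below applies that formula to the commonly published reference specifications for a few of these cards, and adds a deliberately crude model of VLIW5 packing: each 5-wide bundle only does useful work in the slots the compiler manages to fill. The slot-fill figure is a hypothetical parameter, not something we measured, and the class and function names are ours.

```python
"""Peak single-precision throughput and a crude VLIW5 utilization model."""
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    alus: int          # stream processors (AMD) or CUDA cores (NVIDIA)
    shader_mhz: float  # clock the ALUs run at

    def peak_gflops(self) -> float:
        # One multiply-add (2 FLOPs) per ALU per clock; MHz -> GFLOPS via /1000.
        return self.alus * 2 * self.shader_mhz / 1000.0


def vliw5_effective_gflops(peak: float, filled_slots: float) -> float:
    """Scale peak throughput by how many of the 5 VLIW slots hold useful work."""
    if not 0.0 <= filled_slots <= 5.0:
        raise ValueError("a VLIW5 bundle has between 0 and 5 filled slots")
    return peak * filled_slots / 5.0


CARDS = [  # reference shader counts and clocks as commonly published
    Gpu("Radeon HD 6870", 1120, 900),
    Gpu("Radeon HD 6850", 960, 775),
    Gpu("GeForce GTX 480", 480, 1401),
    Gpu("GeForce GTX 460 1GB", 336, 1350),
]

if __name__ == "__main__":
    for card in CARDS:
        print(f"{card.name:<20} {card.peak_gflops():7.1f} GFLOPS peak")
    # Hypothetical: the compiler fills 3.5 of 5 slots on average.
    hd6850 = CARDS[1].peak_gflops()
    print(f"HD 6850 at 3.5/5 slots: {vliw5_effective_gflops(hd6850, 3.5):.1f} GFLOPS")
```

A workload like SmallLuxGPU that keeps most slots busy lets the Radeons cash in their much larger peak figures, while code that leaves slots empty drags them down toward (or below) NVIDIA’s scalar designs, which don’t depend on bundle packing.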

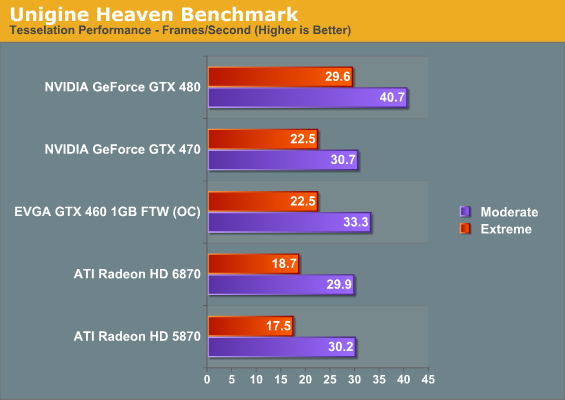

At the other end of the spectrum from GPU computing performance is GPU tessellation performance, used exclusively for graphical purposes. For the Radeon 6800 series, AMD enhanced their tessellation unit to offer better tessellation performance at lower tessellation factors. In order to analyze the performance of AMD’s enhanced tessellator, we’re using the Unigine Heaven benchmark and Microsoft’s DirectX 11 Detail Tessellation sample program to measure the tessellation performance of a few of our cards.
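Low versus high tessellation factors matter so much because the amount of geometry grows roughly with the square of the factor. The sketch below uses a simplified model, a triangle patch subdivided into a regular grid with N segments per edge, which yields N² triangles. Direct3D 11’s fixed-function tessellator actually uses a ring-based pattern, so its exact counts differ; the roughly quadratic growth is the point here, and the function names are hypothetical.

```python
"""Simplified geometry amplification for a uniformly tessellated triangle patch."""


def triangles_uniform(factor: int) -> int:
    """Triangles from a regular grid with `factor` segments per edge."""
    if factor < 1:
        raise ValueError("tessellation factor must be at least 1")
    return factor * factor


def vertices_uniform(factor: int) -> int:
    """Vertices in the same grid: a triangular number, (N+1)(N+2)/2."""
    if factor < 1:
        raise ValueError("tessellation factor must be at least 1")
    return (factor + 1) * (factor + 2) // 2


if __name__ == "__main__":
    # Direct3D 11 caps tessellation factors at 64.
    for n in (1, 4, 16, 64):
        print(f"factor {n:>2}: {triangles_uniform(n):>5} triangles, "
              f"{vertices_uniform(n):>5} vertices")
```

Going from factor 16 to factor 64 multiplies the triangle count by 16 in this model, which is why a tessellator tuned for low factors can still fall behind at the extreme end.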

Since Heaven is a synthetic benchmark at the moment (the DX11 engine isn’t currently used in any games), we’re less concerned with performance relative to NVIDIA’s cards and more concerned with performance relative to the 5870. Compared to the 5870, the 6870 ends up being slightly slower when using moderate amounts of tessellation, while it pulls ahead when using extreme amounts of tessellation. Considering that the 6870 is around 7% slower in games than the 5870, this is actually quite an accomplishment for Barts, and one that we can easily trace back to AMD’s tessellator improvements.

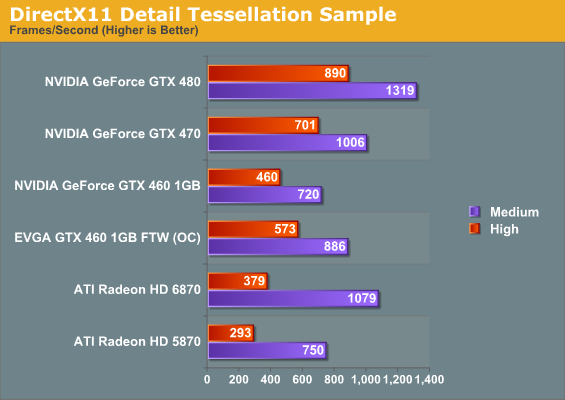

Our second tessellation test is Microsoft’s DirectX 11 Detail Tessellation sample program, which is a much more straightforward test of tessellation performance. Here we’re simply looking at the framerate of the program at different tessellation levels, specifically level 7 (the default level) and level 11 (the maximum level). Here AMD’s tessellation improvements become even more apparent, with the 6870 handily beating the 5870. In fact our results are very close to AMD’s own internal results – at level 7 the 6870 is 43% faster than the 5870, while at level 11 that improvement drops to 29% as the increased level leads to an increasingly large tessellation factor. However this also highlights the fact that AMD’s tessellation performance still collapses at high factors compared to NVIDIA’s GPUs, making it all the more important for AMD to encourage developers to use more reasonable tessellation factors.
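Percentage comparisons like these are easy to misread, because “X% faster” and “X% slower” are not symmetric. As a small sketch (the helper names and the 100 fps baseline are hypothetical), the 43% figure means the 6870 delivers 1.43× the 5870’s framerate, and the 6870 being about 7% slower in games means the 5870 is roughly 7.5% faster:

```python
"""Helpers for reading relative-performance percentages."""


def percent_faster(fps_a: float, fps_b: float) -> float:
    """How much faster A is than B, as a percentage of B's framerate."""
    return (fps_a / fps_b - 1.0) * 100.0


def flip_slower(percent_slower: float) -> float:
    """If A is X% slower than B, return how much faster B is than A."""
    ratio = 1.0 - percent_slower / 100.0  # A's framerate relative to B's
    return (1.0 / ratio - 1.0) * 100.0


def frame_time_saving(percent_faster_fps: float) -> float:
    """Percent reduction in frame time implied by an X% higher framerate."""
    return (1.0 - 1.0 / (1.0 + percent_faster_fps / 100.0)) * 100.0


if __name__ == "__main__":
    baseline = 100.0  # hypothetical 5870 framerate
    print(f"{percent_faster(1.43 * baseline, baseline):.1f}% faster")
    print(f"7% slower one way = {flip_slower(7.0):.1f}% faster the other")
    print(f"43% more fps = {frame_time_saving(43.0):.1f}% less time per frame")
```

Seen as frame time, a 43% framerate advantage works out to about 30% less time spent on each frame.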

197 Comments


Chris Peredun - Friday, October 22, 2010

Not bad, but consider that the average OC from the AT GTX 460 review was 24% on the core. (No memory OC was tried.) http://www.anandtech.com/show/3809/nvidias-geforce...

thaze - Friday, October 22, 2010

German magazine "PC Games Hardware" states that the 68xx cards need "high quality" driver settings in order to reach 58xx image quality. Supposedly AMD confirmed changes regarding the driver's default settings. Therefore they've tested in "high quality" mode and got less convincing results.

Details (German): http://www.pcgameshardware.de/aid,795021/Radeon-HD...

Ryan Smith - Friday, October 22, 2010

Unfortunately I don't know German well enough to read the article, and Google translations of technical articles are nearly worthless. What I can tell you is that the new texture quality slider is simply a replacement for the old Catalyst AI slider, which only controlled Crossfire profiles and texture quality in the first place. High quality mode disables all texture optimizations, which would be analogous to disabling CatAI on the 5800 series. So the default setting of Quality would be equivalent to the 5800 series setting of CatAI Standard.

thaze - Saturday, October 30, 2010

"High quality mode disables all texture optimizations, which would be analogous to disabling CatAI on the 5800 series. So the default setting of Quality would be equivalent to the 5800 series setting of CatAI Standard."

According to computerbase.de, this is the case with Catalyst 10.10. But they argue that the 5800's image quality suffered in comparison to previous drivers, and the 6800 only reaches this reduced level of quality. Both of them now need manual tweaking (6800: high quality mode; 5800: CatAI disabled) to deliver Catalyst 10.9's default quality.

tviceman - Friday, October 22, 2010

I would really like more sites (including Anandtech) to investigate this. If the benchmarks around the web using default settings with the 6800 cards are indeed NOT apples-to-apples comparisons vs. Nvidia's default settings, then all the reviews aren't doing fair comparisons.

thaze - Saturday, October 30, 2010

computerbase.de also subscribes to this view after having invested more time into image quality tests. Translation of a part of their summary:

" [...] on the other hand, the textures' flickering is more intense. That's because AMD has lowered the standard anisotropic filtering settings to the level of AI Advanced in the previous generation. An incomprehensible step for us, because modern graphics cards provide enough performance to improve the image quality.

While there are games that hardly show any difference, others suffer greatly from flickering textures. At least it is (usually) possible to reach the previous AF quality with the "High Quality" function. The Radeon HD 6800 can still handle the quality of the previous generation after manual switching, but the standard quality is worse now!

Since we will not support such practices, we decided to test every Radeon HD 6000 card with the about five percent slower high-quality settings in the future, so the final result is roughly comparable with the default setting from Nvidia."

(They also state that Catalyst 10.10 changes the 5800's AF-quality to be similar to the 6800's, both in default settings, but again worse than default settings in older drivers.)

Computer Bottleneck - Friday, October 22, 2010

The boost in low tessellation factor performance really caught my eye. I wonder what kind of implications this will have for game designers if AMD and Nvidia decide to take different paths on this?

I have been under the impression that boosting low tessellation factor performance is good for system-on-a-chip development, because tessellating a low-quality model out to a high-quality model saves memory bandwidth.

DearSX - Friday, October 22, 2010

Unless the 6850 overclocks a good 25%, which is what reference 460s seem to overclock on average, it doesn't seem to be any better overall to me. Less noise, heat, price and power, but also less overclocked performance? I'll need to wait and see. Overclocking a 460 presents a pretty good deal at current prices, which will probably continue to drop too.

Goty - Friday, October 22, 2010

Did you miss the whole part where the stock 6870 is basically faster than (or at worst on par with) the overclocked 460 1GB? What do you think is going to happen when you overclock the 6870 AT ALL?

DominionSeraph - Friday, October 22, 2010

The 6870 is more expensive than the 1GB GTX 460. Apples to apples would be DearSX's point -- 6850 vs 1GB GTX 460. They offer about the same performance at about the same price -- ~$185 for the 6850 w/ shipping and ~$180 for the 1GB GTX 460 after rebate. The 6850 has the edge in price/performance at stock clocks, but the GTX 460 overclocks well. The 6850 would need to consistently overclock ~20% to keep its advantage over the GTX 460.