SandForce Announces Next-Gen SSDs, SF-2000 Capable of 500MB/s and 60K IOPS

by Anand Lal Shimpi on October 7, 2010 9:30 AM EST

NAND Support: Everything

The SF-2000 controllers are NAND-manufacturer agnostic: both ONFI 2 and Toggle interfaces are supported. Let’s talk about what this means.

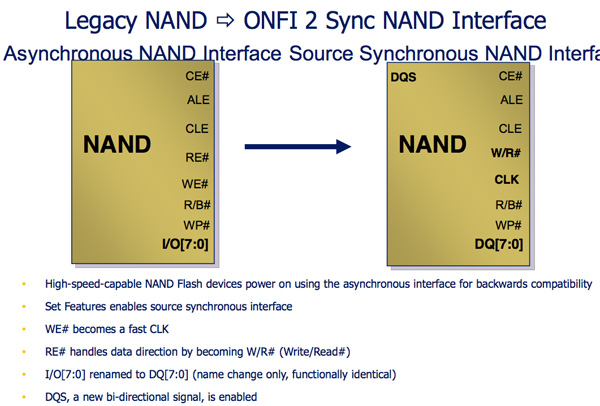

Legacy NAND is written in a very straightforward manner: a write enable (WE) signal is sent to the NAND, and once the WE signal is high, data can latch to the NAND.

Both ONFI 2 and Toggle NAND add another signal to the NAND interface: DQS, a data strobe. The Write Enable signal is still present, but it’s now used only for latching commands and addresses; DQS is used for data transfers. Instead of only transferring data when the DQS signal is high, ONFI 2 and Toggle NAND transfer data on both the rising and falling edges of the DQS signal. This should sound a lot like DDR to you, because it is.
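To make the single-edge versus double-edge distinction concrete, here is a toy Python model. It is an illustration only, not a timing-accurate rendering of the ONFI 2 or Toggle specifications; the function names and the sampled waveform are made up. `latch()` captures a byte on every rising edge (one transfer per cycle, as in the legacy scheme) or on every edge (two transfers per cycle, as with DQS).

```python
def edges(strobe):
    """Yield (index, kind) for every transition in a sampled strobe waveform."""
    for i in range(1, len(strobe)):
        if strobe[i - 1] == 0 and strobe[i] == 1:
            yield i, "rising"
        elif strobe[i - 1] == 1 and strobe[i] == 0:
            yield i, "falling"


def latch(strobe, data, both_edges):
    """Return the bytes captured on rising edges only, or on both edges."""
    captured = []
    for i, kind in edges(strobe):
        if kind == "rising" or both_edges:
            captured.append(data[i])
    return captured


# Four full strobe cycles, sampled twice per cycle (hypothetical waveform).
strobe = [0, 1] * 4 + [0]
data = list(range(len(strobe)))  # byte value presented on the bus at each sample

sdr = latch(strobe, data, both_edges=False)  # single-edge: one transfer per cycle
ddr = latch(strobe, data, both_edges=True)   # double-edge: two transfers per cycle
print(len(sdr), len(ddr))  # 4 8
```

Same strobe frequency, twice the transfers: that doubling is the whole point of moving data onto both edges.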

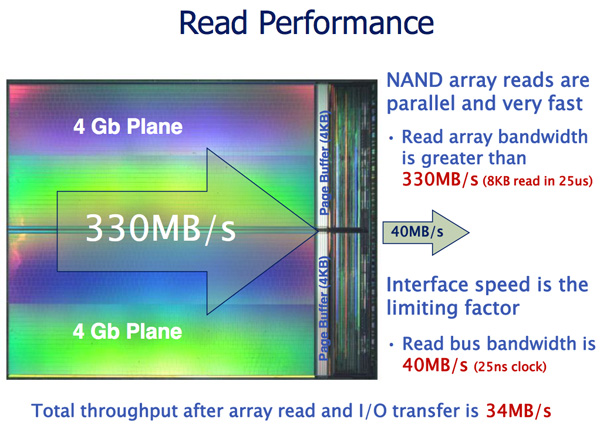

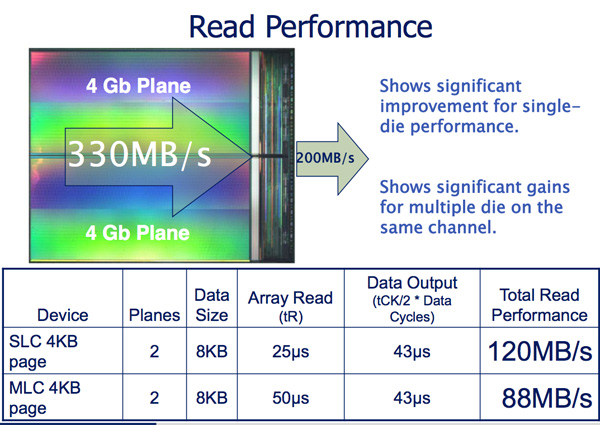

The benefit is tremendous. Whereas the current interface to NAND is limited to 40MB/s per device, ONFI 2 and Toggle increase that to 166MB/s per device.
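Per-device figures like these come from simple arithmetic: bytes per transfer × strobe frequency × transfers per cycle. The sketch below assumes an 8-bit bus for both interfaces, a 40MHz single-edge legacy interface, and an 83MHz DQS that transfers on both edges. Those clock figures are assumptions chosen to reproduce the quoted numbers, not values given in this article.

```python
def device_bandwidth_mb_s(bus_width_bits, strobe_mhz, transfers_per_cycle):
    """Peak per-device interface bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * strobe_mhz * transfers_per_cycle


# Assumed: 8-bit bus, 40MHz single-edge legacy vs 83MHz double-edge DQS.
legacy = device_bandwidth_mb_s(8, 40, 1)
onfi2_toggle = device_bandwidth_mb_s(8, 83, 2)
print(legacy, onfi2_toggle)  # 40.0 166.0
```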

Micron indicates that a dual-plane NAND array can be read from at up to 330MB/s and written to at 33MB/s. By implementing an interface compliant with both ONFI 2 and Toggle, SandForce immediately gets a huge boost in potential performance. Now it’s just a matter of managing it all.
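One rough way to read Micron's numbers is that a device can go no faster than its slower stage, the array or the interface. This deliberately crude model (the function name is hypothetical, and it ignores command overhead, ECC and cache operations) suggests that the faster interface lifts per-device reads substantially, while per-device writes stay array-bound at 33MB/s.

```python
def effective_rate(array_mb_s, interface_mb_s):
    """Crude model: a device runs no faster than its slower stage."""
    return min(array_mb_s, interface_mb_s)


ARRAY_READ_MB_S, ARRAY_WRITE_MB_S = 330, 33  # Micron's dual-plane figures

for name, bus_mb_s in (("legacy", 40), ("ONFI 2/Toggle", 166)):
    read = effective_rate(ARRAY_READ_MB_S, bus_mb_s)
    write = effective_rate(ARRAY_WRITE_MB_S, bus_mb_s)
    print(f"{name}: read {read}MB/s, write {write}MB/s")
# legacy: read 40MB/s, write 33MB/s
# ONFI 2/Toggle: read 166MB/s, write 33MB/s
```

Under that simplification, extra write bandwidth has to come from keeping more die busy at once.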

The controller accommodates the faster NAND interface by supporting more active NAND die concurrently: the SF-2000 controllers can activate twice as many die in parallel as the SF-1200/1500. This should result in some pretty hefty performance gains, as you’ll soon see. The controller itself is also faster and has more internal memory and larger buffers to sustain the higher expected bandwidth.
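A back-of-the-envelope sketch of why die-level parallelism matters: striping writes across die adds bandwidth until the host link becomes the cap. The die counts (8 and 16) are hypothetical, chosen only to illustrate "twice as many die", and are not SandForce's actual figures. The model also ignores SandForce's compression, which reduces how much is physically written, and assumes a SATA 6Gbps host link carrying about 600MB/s of payload.

```python
import math


def aggregate_mb_s(active_die, per_die_mb_s, host_link_mb_s):
    """Striping across die adds bandwidth until the host link caps it."""
    return min(active_die * per_die_mb_s, host_link_mb_s)


def die_needed(target_mb_s, per_die_mb_s):
    """Smallest number of die that can sustain the target rate."""
    return math.ceil(target_mb_s / per_die_mb_s)


HOST_LINK_MB_S = 600  # assumed: SATA 6Gbps payload after 8b/10b encoding
PER_DIE_WRITE = 33    # Micron's dual-plane write figure

print(die_needed(500, PER_DIE_WRITE))  # 16
for die in (8, 16):  # hypothetical "before" and "twice as many" die counts
    print(die, aggregate_mb_s(die, PER_DIE_WRITE, HOST_LINK_MB_S))
# 8 264
# 16 528
```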

Initial designs will be based on 34nm eMLC from Micron and 32nm eMLC from Toshiba. The controller supports 25nm NAND as well, so we’ll see a transition there once yields are high enough. Note that SandForce, like Intel, will be using enterprise-grade MLC (eMLC) instead of SLC for the server market. Demand pretty much requires it, and luckily, with a good enough controller and NAND, eMLC looks like it may be able to handle a server workload.

84 Comments


mino - Thursday, October 7, 2010

Not really. Remember how 5W VIA chips crushed 130W quad-cores by hardware-accelerating it. Encryption is not that hard for specialized circuitry.

DesktopMan - Thursday, October 7, 2010

"The SF-1200/1500 controllers have a real time AES-128 encryption engine. Set a BIOS password and the drive should be locked unless you supply that password once again (note I haven’t actually tried this)."

Why don't you? No site I've found has, and it would differentiate AnandTech. Surely it's of interest to anyone with a laptop?

theagentsmith - Thursday, October 7, 2010

As a SandForce SSD owner (60GB Corsair Force), I hope they won't forget SF-1200 customers and will release firmware that fixes drives randomly disappearing, both while the computer is working and while it's idle. There is a 30-page thread about this on the Corsair forums.

So far my drive has gotten stuck three times since the end of July, so you can live with it even if it's a bit annoying, but there are reports of drives being suddenly erased. Firmware is the key here, and as Anand has already shown, it isn't flawless.

As for SF-2000 drives, it would be interesting to see whether, from a consumer perspective, there is any benefit to switching from an "old" SF-1200 drive. Half a gigabyte per second is pretty astounding, but if it only makes an application load less than a second faster, I suppose Intel's 25nm drives could be the better buy because of their cost per gigabyte.

beginner99 - Friday, October 8, 2010

Interesting, and so are the other comments. I didn't know that SF drives had so many issues. Well, I'm glad now that I went conservative and bought an Intel 80GB drive. No issues so far, and it's fast enough for me. My PC now boots faster than most of my other devices, like my mobile phone (and I don't even have one of those fancy ones).

Makaveli - Thursday, October 7, 2010

Thank you for posting your real-world experience, agent smith. I've seen a lot of people talking about these drives, but also a lot of people saying that once you hit data the drive can't compress, it starts to get slower than Intel drives.

I will be waiting for real benchmarks as well, because right now those are just controller specs and the actual retail product might be slower. I also highly doubt they will release consumer drives that read/write at 500/500, or that such drives would actually live up to this performance.

After looking at the Intel specs again, I understand why they aren't going balls out for speed: reliability and capacity are bigger driving factors in the current market. People want prices to go down and capacities to go up more than they want 500MB/s.

Q1 2011 will be interesting... A lot of people said in 2009 that 2010 would be the year of the SSD; well, I think they were off by a year.
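The slowdown described above follows from how a compression-based controller saves work: it only writes less NAND when the data actually shrinks. SandForce's algorithms are proprietary, so this sketch uses Python's standard `zlib` purely as a stand-in, with random bytes standing in for already-compressed or encrypted files and an assumed 4KB write size.

```python
import os
import zlib

PAGE = 4096  # assumed write size in bytes

# Highly repetitive data, padded to exactly one page.
compressible = b"SandForce " * (PAGE // 10) + b"x" * (PAGE % 10)
# Random bytes behave like JPEG/MP3/video or encrypted files: no redundancy left.
incompressible = os.urandom(PAGE)

for name, payload in (("compressible", compressible), ("incompressible", incompressible)):
    stored = len(zlib.compress(payload))
    print(f"{name}: {len(payload)} bytes in, {stored} bytes after zlib ({stored / PAGE:.0%})")
```

On the random input, zlib's output is slightly larger than the input, so a compressing controller gains nothing there and has to write at least the full amount of data.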

toast2 - Thursday, October 7, 2010

After they announced their first-generation product, which was extremely buggy, it took 1.5 years to make it work.

Let's see how long this one takes.

Rumor is that they have issues with sequential bandwidth.

Sublym3 - Friday, October 8, 2010

Just a small question: is normal drive imaging/cloning still possible on SandForce-based drives? And are there any limitations on the type of drive that the image can be restored to?

"SandForce’s controller gets around the inherent problems with writing to NAND by simply writing less. Using real time compression and data deduplication algorithms, the SF controllers store a representation of your data and not the actual data itself. The reduced data stored on the drive is also encrypted and stored redundantly across the NAND to guarantee against data loss from page-level or block-level failures. Both of these features are made possible by the fact that there’s simply less data to manage."

When I read that, it sounds like you would not get a proper image of a drive. I am currently using Acronis software to image my own computers, which use Intel SSDs, and everything seems to work fine.

Keatah - Friday, October 15, 2010

You would get a drive image just fine. All the remapping and compression and redundant storing and encryption AND wear-leveling happen behind the scenes.

Acronis would only see the data and 'sectors'. Sector 1 *IS* sector 1, regardless of where it is stored on the drive. Sector 1's data may be spread across several pages or blocks and chips. But that is irrelevant.

If you ask for data at sector 1, you get data from sector 1. Simple as that. So yes, it will work just fine.
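The point about sectors can be illustrated with a toy flash translation layer. Everything here (`ToyFTL`, its methods, the slot layout) is hypothetical and far simpler than SandForce's real, undisclosed design: it models only remapping, compression (via `zlib`) and deduplication, not encryption, redundancy, wear leveling or garbage collection. The property that matters for imaging is that the host addresses logical blocks, so reading every LBA back returns the logical data no matter how it is stored internally.

```python
import hashlib
import zlib


class ToyFTL:
    """Hypothetical flash translation layer: the host addresses logical block
    addresses (LBAs); the drive keeps compressed, deduplicated blobs in
    physical slots of its own choosing."""

    def __init__(self):
        self.lba_map = {}  # LBA -> physical slot index
        self.slots = []    # append-only "NAND": compressed blobs
        self.by_hash = {}  # content hash -> slot index, for deduplication

    def write(self, lba, data):
        digest = hashlib.sha256(data).digest()
        slot = self.by_hash.get(digest)
        if slot is None:                  # unseen content: compress and append
            self.slots.append(zlib.compress(data))
            slot = len(self.slots) - 1
            self.by_hash[digest] = slot
        self.lba_map[lba] = slot          # out-of-place update: just remap

    def read(self, lba):
        return zlib.decompress(self.slots[self.lba_map[lba]])


drive = ToyFTL()
drive.write(0, b"boot sector" * 40)
drive.write(1, b"A" * 512)
drive.write(2, b"A" * 512)        # duplicate of LBA 1: no new slot
drive.write(0, b"new boot" * 64)  # overwrite lands in a fresh slot

# An imaging tool only ever asks for LBAs, so it gets the logical data back.
image = [drive.read(lba) for lba in range(3)]
assert image == [b"new boot" * 64, b"A" * 512, b"A" * 512]
print(len(drive.slots))  # 3
```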

HachavBanav - Friday, October 8, 2010

2 facts:

- A single NAND (dual plane) reads at 330MB/s and writes at 33MB/s

- Controllers look capable of aggregating the bandwidth of more and more NAND

Hypothesis for 2012:

- A quad-plane NAND may read at 660MB/s and write at 66MB/s (using 16KB pages)

- Controllers may read/write from 8 NAND simultaneously (think of a 128KB stripe)

==> Reads at 4GB/s and writes at 500MB/s may be expected!

This clearly means we are facing an INTERFACE bandwidth bottleneck!!!

SAS 3Gb/s or SATA II, with their 300MB/s, are just ridiculous

...but SAS 6Gb/s and SATA III, with their 600MB/s, already look outdated

What's next for those SSD interfaces?

- SAS or SATA 12Gb/s? Not mature enough!

- 16Gb FC? It's always been so pricey!

- 100Gb Ethernet with embedded iSCSI? That would be a revolution!

- 40Gb InfiniBand? A good challenger!
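A quick check of that hypothesis against SATA link speeds, taking the commenter's speculative 2012 figures as inputs. SATA 3Gb/s and 6Gb/s use 8b/10b encoding, so every data byte occupies 10 bits on the wire, which is where the 300MB/s and 600MB/s payload figures come from. Note that 8 × 660MB/s actually works out to about 5.3GB/s, above the 4GB/s quoted.

```python
def sata_payload_mb_s(line_rate_gbps):
    """Payload rate of a SATA link in MB/s: with 8b/10b encoding, each data
    byte occupies 10 bits on the wire."""
    return line_rate_gbps * 1000 / 10


# The commenter's speculative 2012 figures, taken as assumptions.
per_nand_read, per_nand_write, nand_count = 660, 66, 8
read_total = per_nand_read * nand_count    # 5280 MB/s
write_total = per_nand_write * nand_count  # 528 MB/s

for gbps in (3, 6):
    link = sata_payload_mb_s(gbps)
    print(f"SATA {gbps}Gb/s: {link:.0f}MB/s link, "
          f"reads capped at {min(read_total, link):.0f}MB/s, "
          f"writes capped at {min(write_total, link):.0f}MB/s")
```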

Chloiber - Friday, October 8, 2010

I think the only possible "short-term" solution will be PCIe... But to be honest, I think we need to stop somewhere. No home user needs 4GB/s. I'd rather have a really stable, cheap 1GB/s drive with robust firmware than an unstable 4GB/s thing I don't need.

Of course, faster is always better, and there will be a time when 4GB/s + stable + cheap is possible, but seriously... today's computers are too slow to handle this (I'm talking about IOPS now). You probably won't see a difference between a 100k IOPS drive and a 30k IOPS drive with a hexa-core CPU. The bottleneck in real-world performance isn't the drive anymore; it's the CPU (at least with about 2-3 drives on an external controller).

So yeah, to be honest, I don't really care about huuuuuge numbers anymore; all I want is a cheap, really stable, bug-free, big drive with nice performance.
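To put these IOPS figures in perspective, here is the arithmetic relating IOPS, throughput and per-request time. The 4KB transfer size is an assumption (a common size for random-I/O ratings), and the per-I/O budget assumes requests are handled one at a time. Real systems overlap requests across queues and cores, so treat this only as a rough illustration of why host-side overhead starts to matter at high IOPS.

```python
def iops_profile(iops, block_bytes=4096):
    """Return (throughput in MB/s, microseconds available per I/O if serial).

    block_bytes defaults to an assumed 4KB random-I/O transfer size.
    """
    mb_per_s = iops * block_bytes / 1e6  # 1 MB = 10**6 bytes
    budget_us = 1e6 / iops               # one second split evenly across I/Os
    return mb_per_s, budget_us


# 30K and 100K are the figures from the comment; 60K is the SF-2000 headline.
for iops in (30_000, 60_000, 100_000):
    mb_per_s, budget_us = iops_profile(iops)
    print(f"{iops:>7,} IOPS: {mb_per_s:6.1f}MB/s, {budget_us:4.1f}us per I/O")
```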