ZFS - Building, Testing, and Benchmarking

by Matt Breitbach on October 5, 2010 4:33 PM EST- Posted in

- IT Computing

- Linux

- NAS

- Nexenta

- ZFS

Benchmarks

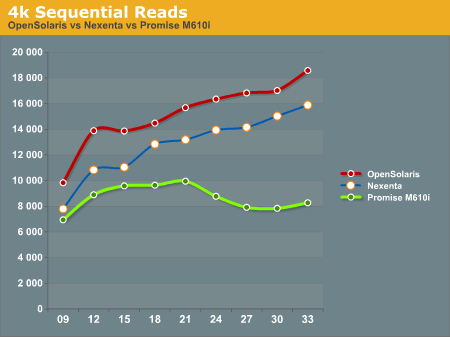

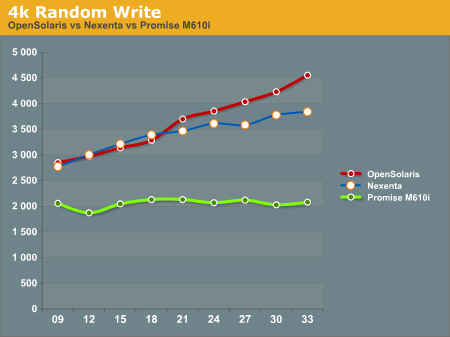

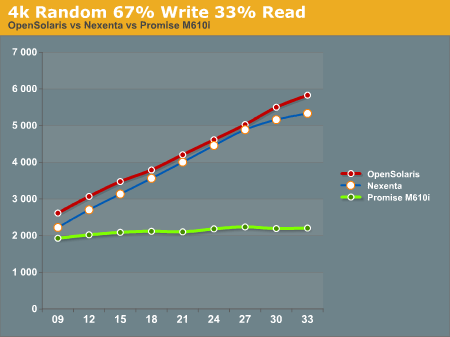

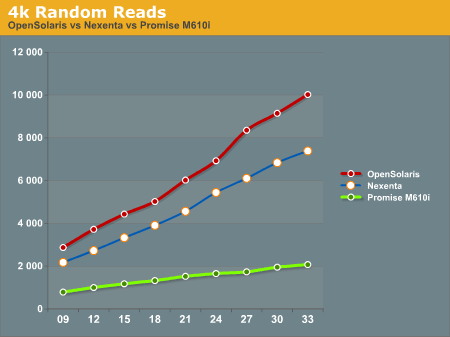

After running our tests on the ZFS system (under both Nexenta and OpenSolaris) and the Promise M610i, we came up with the following results. All graphs have IOPS on the Y-axis and disk queue length on the X-axis.
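Because the graphs report IOPS rather than bandwidth, it can help to convert between the two when comparing tests run at different block sizes. The snippet below is a minimal sketch of that arithmetic; the function name and the IOPS figure are hypothetical and are not taken from our results.

```python
def iops_to_mib_per_s(iops, block_size_kib):
    """Convert an IOPS figure to throughput in MiB/s for a fixed block size."""
    return iops * block_size_kib / 1024


# Hypothetical IOPS value, chosen only to illustrate the conversion.
example_iops = 10_000
for block_kib in (4, 8, 16, 32):
    mib_s = iops_to_mib_per_s(example_iops, block_kib)
    print(f"{block_kib}k blocks at {example_iops} IOPS = {mib_s:.1f} MiB/s")
```

The same IOPS number represents eight times as much data moved at 32k as at 4k, which is worth keeping in mind when the curves look lower in the larger-block tests.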

In the 4k Sequential Read test, we see that the OpenSolaris and Nexenta systems both outperform the Promise M610i by a significant margin when the disk queue is increased. This is a direct effect of the L2ARC cache. Interestingly enough, the OpenSolaris and Nexenta systems seem to trend identically, but the Nexenta system is measurably slower than the OpenSolaris system. We are unsure as to why this is, as they are running on the same hardware and the build of Nexenta we ran was based on the same build of OpenSolaris that we tested. We contacted Nexenta about this performance gap, but they did not have any explanation. One hypothesis we had is that the Nexenta software uses more memory for things like the web GUI, leaving less ARC available to the Nexenta solution than to a regular OpenSolaris solution.
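One way to examine the ARC hypothesis would be to compare the ARC statistics that both systems expose through `kstat -p zfs:0:arcstats`. The sketch below parses that output from a string; the sample text and its numbers are made up for illustration, and we did not run this comparison as part of the tests.

```python
def parse_arcstats(kstat_output):
    """Parse `kstat -p zfs:0:arcstats` text into {statistic: integer value}."""
    stats = {}
    for line in kstat_output.splitlines():
        fields = line.split()
        # Expect "module:instance:name:statistic <value>"; skip anything else,
        # including non-numeric entries such as the "class" line.
        if len(fields) != 2 or not fields[1].isdigit():
            continue
        statistic = fields[0].rsplit(":", 1)[-1]
        stats[statistic] = int(fields[1])
    return stats


# Hypothetical sample output; the values are invented for this example.
SAMPLE = """\
zfs:0:arcstats:c_max    11811160064
zfs:0:arcstats:class    misc
zfs:0:arcstats:size     9663676416
"""

stats = parse_arcstats(SAMPLE)
gib = 1024 ** 3
print(f"ARC size: {stats['size'] / gib:.1f} GiB of {stats['c_max'] / gib:.1f} GiB max")
```

Running the same check on both operating systems under identical load would show whether the ARC really is smaller on the Nexenta install.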

In the 4k Random Write test, again the OpenSolaris and Nexenta systems come out ahead of the Promise M610i. The Promise box seems to be nearly flat, an indicator that it is reaching the limits of its hardware quite quickly. The OpenSolaris and Nexenta systems write faster as the disk queue increases. This seems to indicate a better re-ordering of data to make the writes more sequential on the disks.
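A flat IOPS curve has a direct consequence for latency. Assuming the load generator keeps a fixed number of I/Os outstanding, Little's Law gives mean latency as queue depth divided by IOPS, so a system whose IOPS stop rising simply makes each request wait longer. The figures below are hypothetical and only illustrate the relationship.

```python
def mean_latency_ms(queue_depth, iops):
    """Little's Law: outstanding I/Os = IOPS x latency, so latency = depth / IOPS."""
    return queue_depth / iops * 1000.0


# Hypothetical curves: one array that flattens out, one that keeps scaling.
flat_curve = {1: 1000, 8: 1100, 32: 1100}
scaling_curve = {1: 1000, 8: 6000, 32: 16000}

for depth in (1, 8, 32):
    flat = mean_latency_ms(depth, flat_curve[depth])
    scaling = mean_latency_ms(depth, scaling_curve[depth])
    print(f"queue depth {depth:2d}: flat {flat:5.1f} ms, scaling {scaling:5.1f} ms")
```

In this made-up example the flat system's latency grows almost in step with queue depth, while the scaling system's latency rises far more slowly.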

The 4k 67% Write 33% Read test again gives the edge to the OpenSolaris and Nexenta systems, while the Promise M610i nearly flatlines. This is most likely a result of both re-ordering writes and the very effective L2ARC caching.

4k Random Reads again come out in favor of the OpenSolaris and Nexenta systems. While the Promise M610i does increase its performance as the disk queue increases, it's nowhere near the levels of performance that the OpenSolaris and Nexenta systems can deliver with their L2ARC caching.

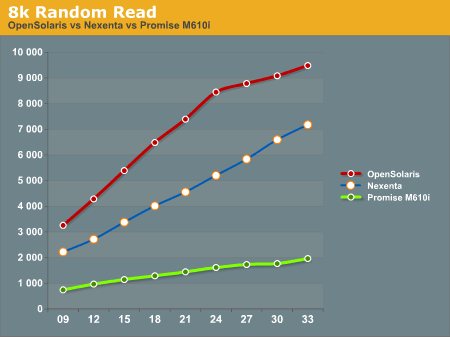

8k Random Reads indicate a similar trend to the 4k Random Reads with the OpenSolaris and Nexenta systems outperforming the Promise M610i. Again, we see the OpenSolaris and Nexenta systems trending very similarly but with the OpenSolaris system significantly outperforming the Nexenta system.

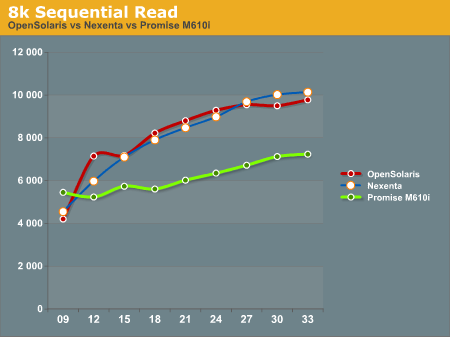

8k Sequential reads have the OpenSolaris and Nexenta systems trailing at the first data point, and then running away from the Promise M610i at higher disk queues. It's interesting to note that the Nexenta system outperforms the OpenSolaris system at several of the data points in this test.

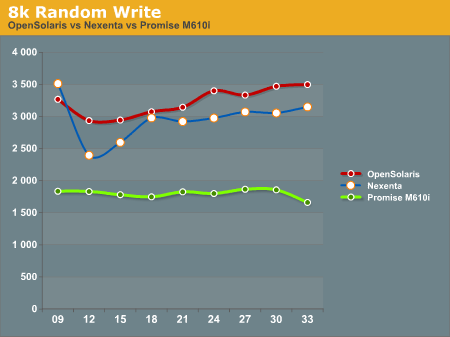

8k Random writes play out like most of the other tests we've seen with the OpenSolaris and Nexenta systems taking top honors, with the Promise M610i trailing. Again, OpenSolaris beats out Nexenta on the same hardware.

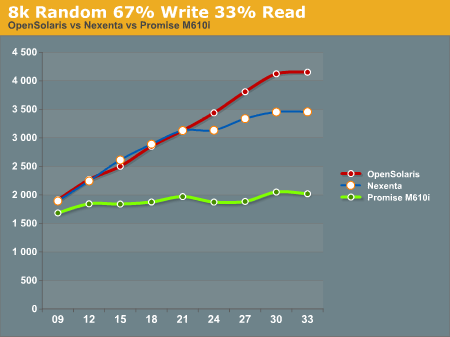

8k Random 67% Write 33% Read again favors the OpenSolaris and Nexenta systems, with the Promise M610i trailing. While the OpenSolaris and Nexenta systems start off nearly identical for the first 5 data points, at a disk queue of 24 or higher the OpenSolaris system steals the show.

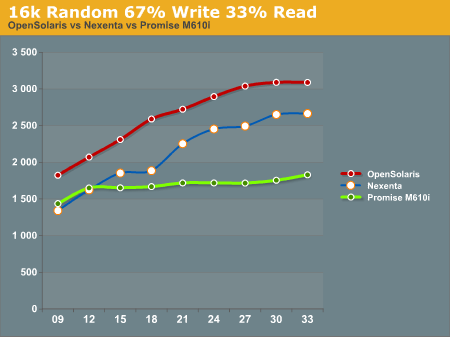

16k Random 67% Write 33% Read gives us a show that we're familiar with. OpenSolaris and Nexenta both soundly beat the Promise M610i at higher disk queues. Again, we see the pattern of the OpenSolaris and Nexenta systems trending nearly identically, but the OpenSolaris system outperforming the Nexenta system at all data points.

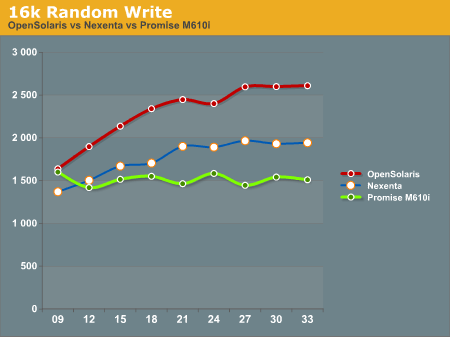

16k Random write shows the Promise M610i starting off faster than the Nexenta system and nearly on par with the OpenSolaris system, but quickly flattening out. The Nexenta box again trends higher, but cannot keep up with the OpenSolaris system.

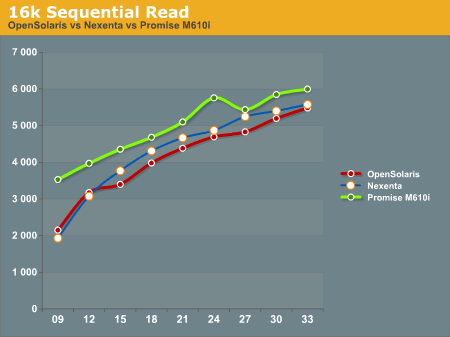

The 16k Sequential read test is the first test that we see where the Promise M610i system outperforms OpenSolaris and Nexenta at all data points. The OpenSolaris system and the Nexenta system both trend upwards at the same rate, but cannot catch the M610i system.

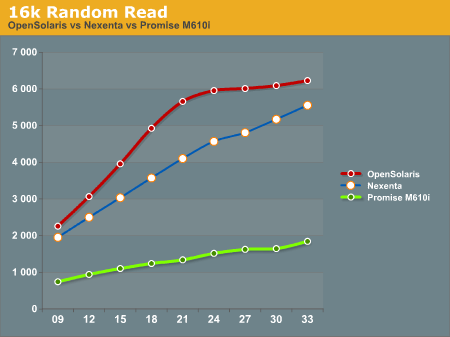

The 16k Random Read test goes back to the same pattern that we've been seeing, with the OpenSolaris and Nexenta systems running away from the Promise M610i. Again we see the OpenSolaris system take top honors with the Nexenta system trending similarly, but never reaching the performance metrics seen on the OpenSolaris system.

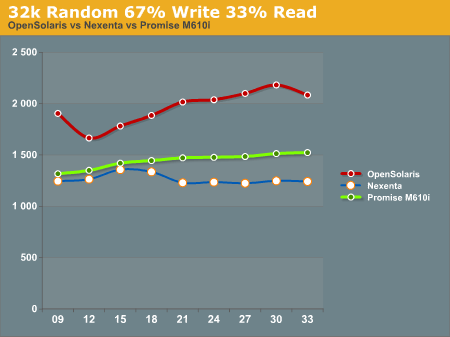

32k Random 67% Write 33% read has the OpenSolaris system on top, with the Promise M610i in second place, and the Nexenta system trailing everything. We're not really sure what to make of this, as we expected the Nexenta system to follow similar patterns to what we had seen before.

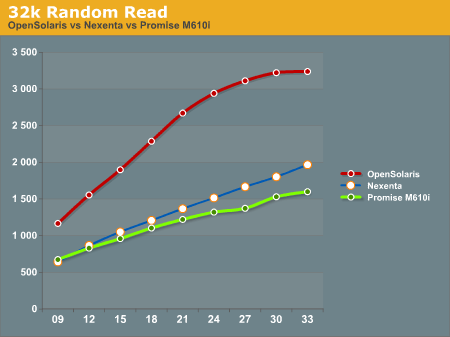

32k Random Read has the OpenSolaris system running away from everything else. On this test the Nexenta system and the Promise M610i are very similar, with the Nexenta system edging out the Promise M610i at the highest queue depths.

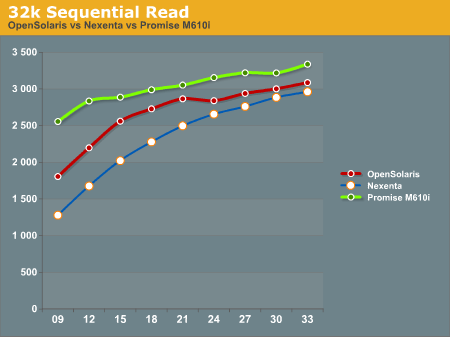

32k Sequential Reads proved to be a strong point for the Promise M610i. It outperformed the OpenSolaris and Nexenta systems at all data points. Clearly there is something in the Promise M610i that helps it excel at 32k Sequential Reads.

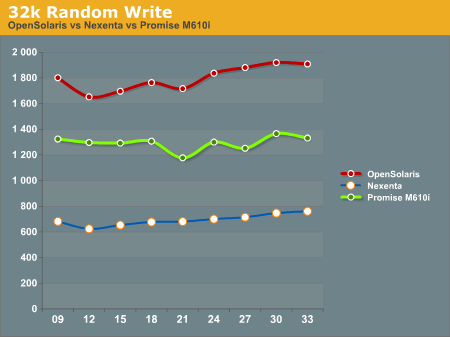

32k random writes have the OpenSolaris system on top again, with the Promise M610i in second place, and the Nexenta system trailing far behind. All of the graphs trend similarly, with little dips and rises, but not ever moving much from the initial reading.

After all the tests were done, we had to sit down and take a hard look at the results and try to formulate some ideas about how to interpret this data. We will discuss this in our conclusion.

102 Comments


Penti - Wednesday, October 6, 2010 - link

And a viable alternative still isn't available. How are Nexenta and the community supposed to get driver support and support for new hardware when Oracle has closed the development kernel (SXDE is closed source)? That means they maybe, just maybe, can use the retail Solaris 11 kernel if it's released in a functioning form that can be piped in with existing software and distro. They aren't going to develop it themselves, and the vendors have no reason to give the code/drivers to anybody but Oracle. Continuing the OpenSolaris kernel means creating a new operating system. It means you won't get the latest ZFS updates and tools any more, at least not until they are in the normal S11 release. It means you can't expect the latest driver updates and so on either. You can continue to use it on today's hardware, but tomorrow it might be useless; you might not find working configurations.

It's not clear that Nexenta can actually develop their own operating system, rather than just a distro; it means they have to create their own OS with their own kernel eventually, with their own drivers and so on. And it's not clear how much code Oracle will let slip out. It's just clear that they will keep it under wraps until official releases. It is clear, however, that there won't be any distro for them to base it on, and any and all forks would be totally dependent on what Nexenta (Illumos) manage to do. It will quickly get outdated without updates flowing all the time, and they came from Sun.

andersenep - Wednesday, October 6, 2010 - link

OpenIndiana/Illumos runs the same latest and greatest pool/ZFS versions as the most recent Solaris 10 update.

Work continues on porting newer pool/ZFS versions to FreeBSD, which has plenty of driver support (better than OpenSolaris ever did).

A stated goal of the Illumos project is to maintain 100% binary compatibility with Solaris. If Oracle decides to break that compatibility, intentionally or not, it will truly become a fork. Development will still continue.

Even if no further development is made on ZFS, it's still an absolutely phenomenal filesystem. How many years now has Apple been using HFS+? FAT is still around in everything. If all development on ZFS stopped today, it would still remain an absolutely viable filesystem for many years to come. There is nothing else currently out there that even comes close to its feature set.

I don't see how ZFS being under Oracle's control makes it any worse than any other open source filesystem. The source is still out there, and people are free to do what they want with it within the CDDL terms.

This idea that, just because the OpenSolaris DISTRO has been discontinued, everything that went into it is no longer viable is silly. It is like calling Linux dead because Mandriva is dead.

Guspaz - Wednesday, October 6, 2010 - link

Thanks for mentioning OpenIndiana. I've been eagerly awaiting IllumOS to be built into an actual distribution to give me an upgrade path for my home OpenSolaris file server, and I look forward to upgrading to the first stable build of OpenIndiana.

I'm currently running a dev build of OpenSolaris since the Realtek network driver was broken in the latest stable build of OpenSolaris (for my chipset, at least).

Mattbreitbach - Wednesday, October 6, 2010 - link

I believe all of the current Hypervisors support this. Hyper-V does, as does XenServer. I have not done extensive testing with ESXi, but I would imagine that it supports it also.

joeribl - Wednesday, October 6, 2010 - link

"Nexenta is to OpenSolaris what OpenFiler or FreeNAS is to Linux."

FreeNAS has always been FreeBSD based, not Linux. It does however provide ZFS support.

Mattbreitbach - Wednesday, October 6, 2010 - link

I should have caught that - thanks for the info. I've edited the article to reflect as such.

vermaden - Wednesday, October 6, 2010 - link

... with deduplication and other features; you can grab an ISO build or a VirtualBox appliance here: http://blog.vx.sk/archives/9-Pomozte-testovat-ZFS-...

It would be great to see how FreeBSD performs (8.1 and 9-CURRENT) on that hardware. I can help you configure FreeBSD for these tests if you would like; for example, by default FreeBSD does not enable AHCI mode for SATA drives, which increases random performance a lot.

Anyway, great article about ZFS performance on a nice piece of hardware.

Mattbreitbach - Wednesday, October 6, 2010 - link

In Hyper-V it is called a Differencing disk - you have a parent disk that you build, and do not modify. You then create a "differencing disk". That disk uses the parent disk as its source, and writes any changes out to the differencing disk. This way you can maintain all core OS files in one image, and write any changes out to child disks. This allows the storage system to cache any core OS components once, and any access to those core components comes directly from the cache.

I believe that Xen calls it a differencing disk also, but I do not currently have a Xen Hypervisor running anywhere that I can check quickly.
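As a rough illustration of the parent/child idea described above, here is a toy model in Python. It is only a sketch of the concept, not how Hyper-V or Xen implement it, and the class and method names are hypothetical.

```python
class ParentDisk:
    """Read-only base image shared by every child disk."""

    def __init__(self, blocks):
        self._blocks = dict(blocks)

    def read(self, block_number):
        return self._blocks.get(block_number, b"")


class DifferencingDisk:
    """Child disk: stores only the blocks that differ from the parent."""

    def __init__(self, parent):
        self.parent = parent
        self.changes = {}

    def write(self, block_number, data):
        # Writes never touch the parent; they land in the child only.
        self.changes[block_number] = data

    def read(self, block_number):
        # Serve changed blocks from the child, everything else from the parent.
        if block_number in self.changes:
            return self.changes[block_number]
        return self.parent.read(block_number)


base = ParentDisk({0: b"bootloader", 1: b"core OS files"})
vm_a = DifferencingDisk(base)
vm_b = DifferencingDisk(base)
vm_a.write(1, b"patched OS files")

print(vm_a.read(1))  # the block changed by vm_a
print(vm_b.read(1))  # still the shared parent block
```

Every unmodified read from either child lands on the same parent blocks, which is why a storage cache only needs to hold the core OS data once.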

gea - Wednesday, October 6, 2010 - link

new: Version 0.323

napp-it ZFS appliance with Web-UI and online-installer for NexentaCore and OpenIndiana

Napp-it, a project to build a free "ready to run" ZFS Web and NAS appliance with Web-UI and online installer, now supports NexentaCore and OpenIndiana (free successor of OpenSolaris) from version 0.323 onward. With its online installer, you will have your ZFS server running with all services and tools within minutes.

Features

NAS Fileserver with AFP (incl. Time Machine and Zero Config), SMB with ACLs, AD-Support and User/Groups

SAN Server with iSCSI (Comstar) and NFS for Xen or VMware ESXi

Web-Server, FTP

Database-Server

Backup-Server

newest ZFS-Features (highest security with parity and Copy On Write, Deduplication, Raid-Z3, unlimited Snapshots via Windows previous Version, working ACLs, Online Pooltest with Datarefresh, Hybridpools, expandable Datapools=simply add Controller or Disks,............)

included Tools:

bonnie Pool-Performancetest

iperf Net-Performancetest

midnight commander

ndmpcopy Backup

rsync

smartmontools

socat

unzip

Management:

remote via Web-UI and Browser

Howto with NexentaCore:

1. insert NexentaCore CD and install

2. login as root and enter:

wget -O - www.napp-it.org/nappit | perl

During first installation you have to enter a MySQL password and select Apache with the space key

Howto with OpenIndiana (free successor of OpenSolaris):

1. Insert OpenIndiana CD and install

2. login as admin, open a terminal and enter su to get root permissions and enter:

wget -O - www.napp-it.org/nappit | perl

AFP-Server is currently installed only on Nexenta.

That's all, no step 3!

You can now remotely manage this Mac/PC NAS appliance via Browser

Details

www.napp-it.org

running Installation

www.napp-it.org/pop_en.html

Mattbreitbach - Wednesday, October 6, 2010 - link

Very neat - I am installing OpenIndiana on our hardware right now and will test out the Napp-it application.