ZFS - Building, Testing, and Benchmarking

by Matt Breitbach on October 5, 2010 4:33 PM EST- Posted in

- IT Computing

- Linux

- NAS

- Nexenta

- ZFS

Benchmarks

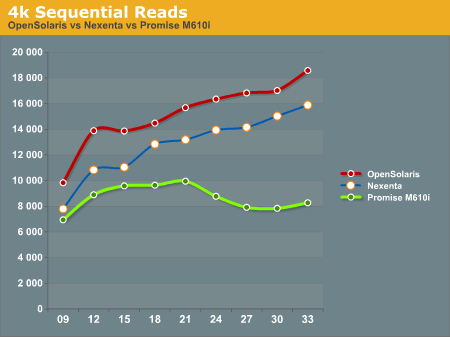

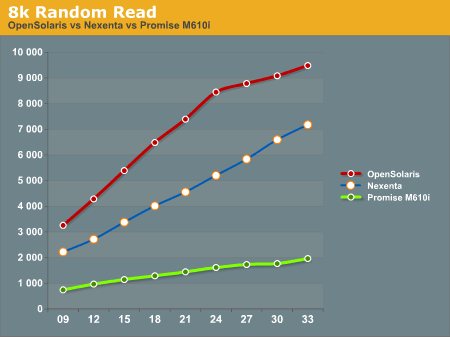

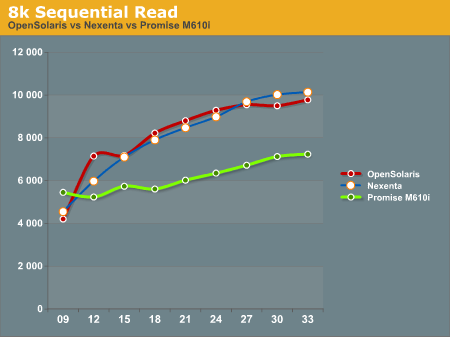

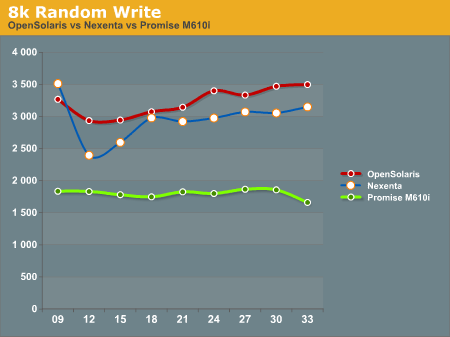

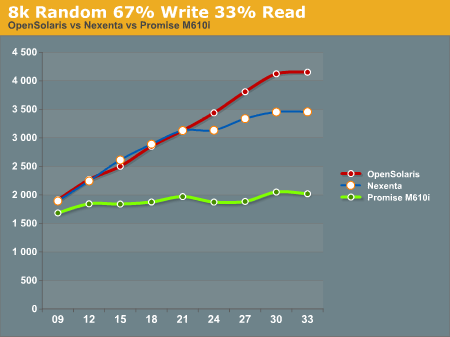

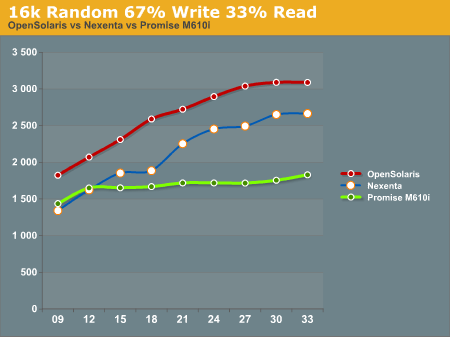

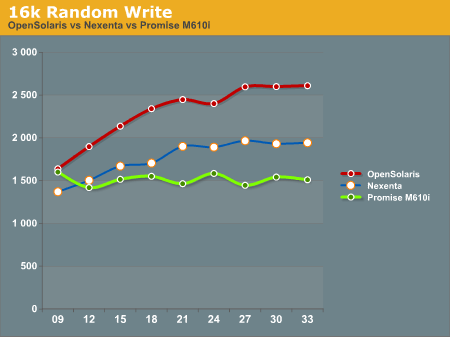

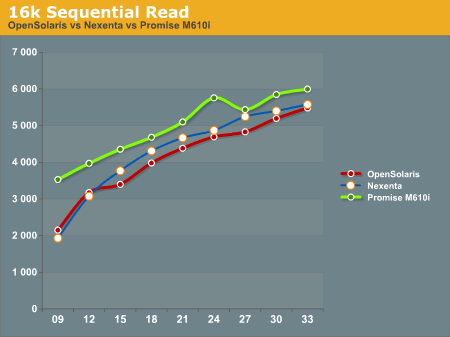

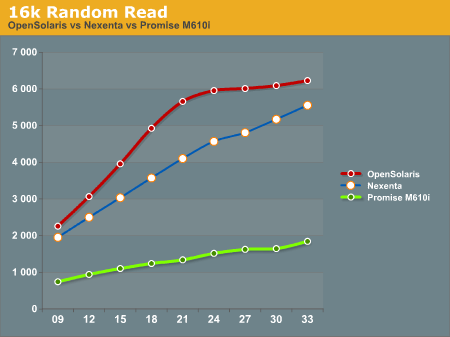

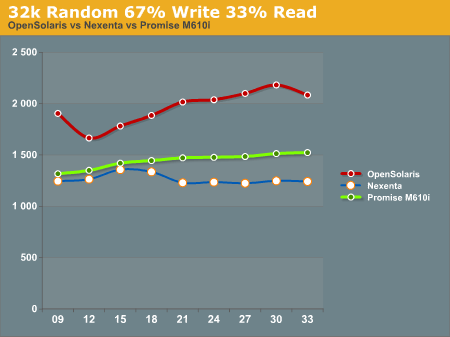

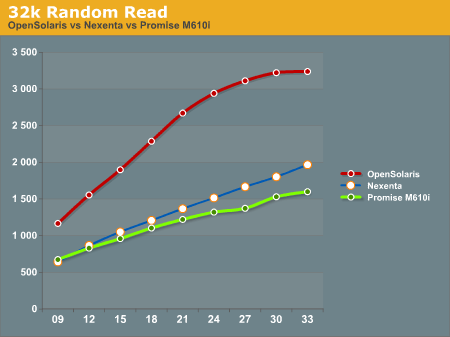

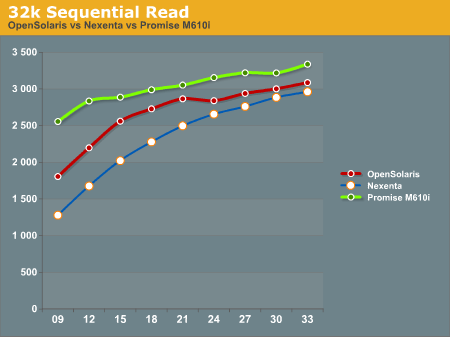

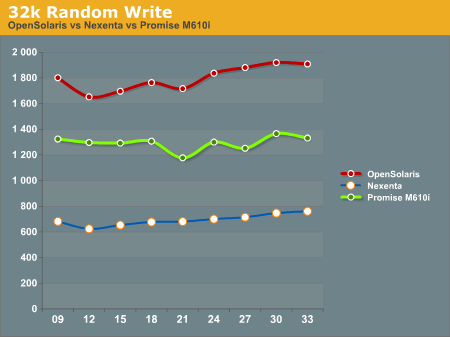

After running our tests on the ZFS system (both under Nexenta and OpenSolaris) and the Promise M610i, we came up with the following results. All graphs have IOPS on the Y-axis and disk queue length on the X-axis.
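Because every graph plots IOPS against queue length, two derived figures can help when comparing results across block sizes: throughput (IOPS multiplied by block size) and average latency (queue length divided by IOPS, per Little's Law, assuming the load generator keeps the queue full). The sketch below is only an illustration; the function names and the IOPS value are hypothetical and are not measurements from our tests.

```python
def throughput_mib_s(iops, block_size_bytes):
    """Convert an IOPS figure at a given block size into MiB/s."""
    return iops * block_size_bytes / (1024 * 1024)


def avg_latency_ms(iops, queue_depth):
    """Little's Law: average latency = outstanding I/Os / completion rate."""
    return queue_depth * 1000.0 / iops


if __name__ == "__main__":
    example_iops = 10_000  # hypothetical value, NOT taken from the graphs
    for block_kib in (4, 8, 16, 32):
        mib_s = throughput_mib_s(example_iops, block_kib * 1024)
        print(f"{block_kib:>2}k blocks at {example_iops} IOPS = {mib_s:.1f} MiB/s")
    for depth in (1, 8, 32):
        ms = avg_latency_ms(example_iops, depth)
        print(f"queue length {depth:>2} at {example_iops} IOPS = {ms:.2f} ms average latency")
```

Read this way, a curve that stays flat as the queue length grows means that each request is simply waiting longer, which is what a saturated device looks like.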

In the 4k Sequential Read test, we see that the OpenSolaris and Nexenta systems both outperform the Promise M610i by a significant margin when the disk queue is increased. This is a direct effect of the L2ARC cache. Interestingly enough the OpenSolaris and Nexenta systems seem to trend identically, but the Nexenta system is measurably slower than the OpenSolaris system. We are unsure as to why this is, as they are running on the same hardware and the build of Nexenta we ran was based on the same build of OpenSolaris that we tested. We contacted Nexenta about this performance gap, but they did not have any explanation. One hypothesis that we had is that the Nexenta software is using more memory for things like the web GUI, and maybe there is less ARC available to the Nexenta solution than to a regular OpenSolaris solution.

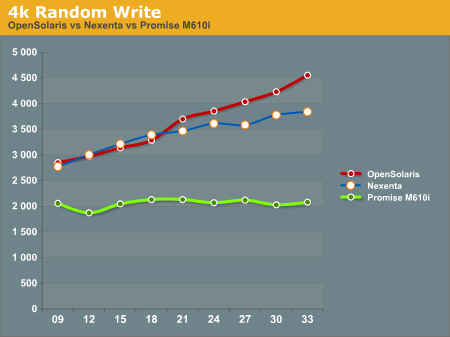

In the 4k Random Write test, again the OpenSolaris and Nexenta systems come out ahead of the Promise M610i. The Promise box seems to be nearly flat, an indicator that it is reaching the limits of its hardware quite quickly. The OpenSolaris and Nexenta systems write faster as the disk queue increases. This seems to indicate a better re-ordering of data to make the writes more sequential on the disks.

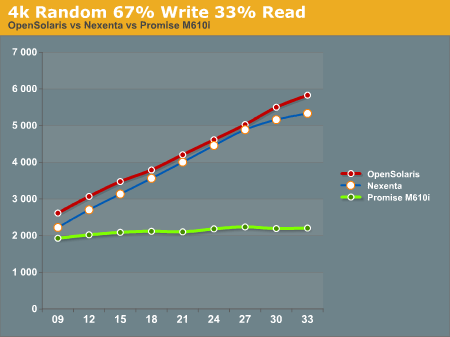

The 4k 67% Write 33% Read test again gives the edge to the OpenSolaris and Nexenta systems, while the Promise M610i is nearly flatlined. This is most likely a result of both re-ordering writes and the very effective L2ARC caching.

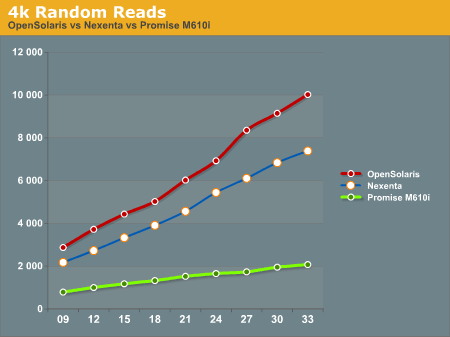

4k Random Reads again come out in favor of the OpenSolaris and Nexenta systems. While the Promise M610i does increase its performance as the disk queue increases, it's nowhere near the levels of performance that the OpenSolaris and Nexenta systems can deliver with their L2ARC caching.

8k Random Reads indicate a similar trend to the 4k Random Reads with the OpenSolaris and Nexenta systems outperforming the Promise M610i. Again, we see the OpenSolaris and Nexenta systems trending very similarly but with the OpenSolaris system significantly outperforming the Nexenta system.

8k Sequential reads have the OpenSolaris and Nexenta systems trailing at the first data point, and then running away from the Promise M610i at higher disk queues. It's interesting to note that the Nexenta system outperforms the OpenSolaris system at several of the data points in this test.

8k Random writes play out like most of the other tests we've seen with the OpenSolaris and Nexenta systems taking top honors, with the Promise M610i trailing. Again, OpenSolaris beats out Nexenta on the same hardware.

8k Random 67% Write 33% Read again favors the OpenSolaris and Nexenta systems, with the Promise M610i trailing. While the OpenSolaris and Nexenta systems start off nearly identical for the first 5 data points, at a disk queue of 24 or higher the OpenSolaris system steals the show.

16k Random 67% Write 33% Read gives us a show that we're familiar with. OpenSolaris and Nexenta both soundly beat the Promise M610i at higher disk queues. Again we see the pattern of the OpenSolaris and Nexenta systems trending nearly identically, but the OpenSolaris system outperforming the Nexenta system at all data points.

16k Random write shows the Promise M610i starting off faster than the Nexenta system and nearly on par with the OpenSolaris system, but quickly flattening out. The Nexenta box again trends higher, but cannot keep up with the OpenSolaris system.

The 16k Sequential read test is the first test that we see where the Promise M610i system outperforms OpenSolaris and Nexenta at all data points. The OpenSolaris system and the Nexenta system both trend upwards at the same rate, but cannot catch the M610i system.

The 16k Random Read test goes back to the same pattern that we've been seeing, with the OpenSolaris and Nexenta systems running away from the Promise M610i. Again we see the OpenSolaris system take top honors with the Nexenta system trending similarly, but never reaching the performance metrics seen on the OpenSolaris system.

32k Random 67% Write 33% read has the OpenSolaris system on top, with the Promise M610i in second place, and the Nexenta system trailing everything. We're not really sure what to make of this, as we expected the Nexenta system to follow similar patterns to what we had seen before.

32k Random Read has the OpenSolaris system running away from everything else. On this test the Nexenta system and the Promise M610i are very similar, with the Nexenta system edging out the Promise M610i at the highest queue depths.

32k Sequential Reads proved to be a strong point for the Promise M610i. It outperformed the OpenSolaris and Nexenta systems at all data points. Clearly there is something in the Promise M610i that helps it excel at 32k Sequential Reads.

32k random writes have the OpenSolaris system on top again, with the Promise M610i in second place, and the Nexenta system trailing far behind. All of the graphs trend similarly, with little dips and rises, but not ever moving much from the initial reading.

After all the tests were done, we had to sit down and take a hard look at the results and try to formulate some ideas about how to interpret this data. We will discuss this in our conclusion.

102 Comments


L. - Wednesday, March 16, 2011 - link

Too bad you already have the 15k drives.

2) I wanted to say this earlier, but I'm quite confident that SLC is NOT required for a SLOG device, as with current wear leveling, unless you actually write more than <MLC disk capacity> / day there is no way you'll ever need the SLC's extended durability.

3) Again, MLC SSD's, good stuff

4) Yes again

5) not too shabby

6) Why use 15k or 7k2 rpm drives in the first place

All in all nice project, just too bad you have to start from used equipment.

In my view, you can easily trash both your similar system and Anandtech's test system and simply go for what the future is going to be anyway :

Raid-10 MLC drives, 48+RAM, 4 CPU's (yes those MLC's are going to perform so much faster you will need this - quite a fair chance you'll need AMD stuff on that as 4-socket is their place) and mainly and this is the hardest part, sata 6 Gb/s * many with a controller that can actually handle the bandwidth.

Overall you'd get a much simpler, faster and cleaner solution (might need to upgrade your networking though to match with the rest).

L. - Wednesday, March 16, 2011 - link

Of course, 6 months later ... it's not the same equation ;) Sorry for the necro

B3an - Tuesday, October 5, 2010 - link

I like seeing stuff like this on Anand. It's a shame it dont draw as much interest as even the poor Apple articles.

Tros - Tuesday, October 5, 2010 - link

Actually, I was just hoping to see a ZFS vs HFS+ comparison for the higher-end Macs. But with the given players (Oracle, Apple), I don't know if the drivers will ever be officially released.

Taft12 - Wednesday, October 6, 2010 - link

Doesn't it? This interests me greatly and judging by the number of comments is as popular as any article about the latest video or desktop CPU tech

greenguy - Wednesday, October 6, 2010 - link

I have to say, kudos to you Anand for featuring an article about ZFS! It is truly the killer app for filesystems right now, and nothing else is going to come close to it for quite some time. What use is performance if you can't automatically verify that your data (and the system files that tells your system how to manipulate that data) was what it was the last time you checked?

You picked up on the benefits of the SSD (low latency) before anyone else, it is no wonder you've figured out the benefits of ZFS too earlier than most of your compatriots as well. Well done.

elopescardozo - Tuesday, October 5, 2010 - link

Hi Matt,

Thank you for the extensive report. In your testing results there are a few unexpected results. I find the difference between Nexenta and Open Solaris hard to understand, unless it is due to misalignment of the IO in the case of Nexenta.

A zvol (the basis for an iSCSI volume) is created on top of the ZFS pool with a certain block size. I believe the default is 8kB. Next you initialize the volume and format it with NTFS. By default the NTFS structure starts at sector 63 (sixty three, not a typo!), which means that every other 4kB cluster (the NTFS allocation size) falls over a zvol block boundary. That has a serious impact on performance. I saw a report of 70% improvement after proper alignment.

Is it possible that the Open Solaris and Nexenta pools were different in this respect, either because of different zvol block size (e.g. 8kB for Nexenta, 128kB for Open Solaris – larger blocks means less “boundary cases”) or differences in how the volumes were initialized and formatted?

mbreitba - Tuesday, October 5, 2010 - link

It's possible that the sector alignment could be a problem, but I believe the build that we tested, the default sector size was set to 128kB, which was identical to OpenSolaris. If that has changed, then we should re-test with the newest build to see if that makes any differences.

cdillon - Tuesday, October 5, 2010 - link

Windows Server 2008 aligns all newly created partitions at 1MB, so his NTFS block access should have been properly aligned by default.

Mattbreitbach - Tuesday, October 5, 2010 - link

I was unaware that Windows 2008 correctly aligned NTFS partitions now. Thanks for the info!
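The alignment arithmetic raised in this thread can be checked with a short calculation. The sketch below is a rough illustration only: it assumes 512-byte sectors (not stated in the thread), uses the 4kB NTFS cluster size and the zvol block sizes quoted above, and the function name is hypothetical. It counts how many clusters straddle a zvol block boundary for a given partition start offset.

```python
SECTOR_BYTES = 512  # assumption: legacy 512-byte sectors


def straddling_fraction(partition_offset, cluster_size, block_size, clusters=4096):
    """Fraction of filesystem clusters that span two underlying blocks."""
    straddling = 0
    for i in range(clusters):
        start = partition_offset + i * cluster_size
        end = start + cluster_size - 1  # last byte of this cluster
        if start // block_size != end // block_size:
            straddling += 1
    return straddling / clusters


if __name__ == "__main__":
    legacy_offset = 63 * SECTOR_BYTES  # partition starting at sector 63
    aligned_offset = 1024 * 1024       # partition starting at 1MB

    for label, offset, block in (
        ("sector 63, 8kB zvol blocks", legacy_offset, 8 * 1024),
        ("sector 63, 128kB zvol blocks", legacy_offset, 128 * 1024),
        ("1MB offset, 8kB zvol blocks", aligned_offset, 8 * 1024),
    ):
        fraction = straddling_fraction(offset, 4096, block)
        print(f"{label}: {fraction:.1%} of 4kB clusters cross a block boundary")
```

Under these assumptions, a start at sector 63 puts half of the 4kB clusters across an 8kB block boundary, about 3% across a 128kB boundary, and a 1MB start offset puts none across either.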