SeaMicro Announces SM10000 Server with 512 Atom CPUs and Low Power Consumption

by Anand Lal Shimpi on June 14, 2010 1:38 PM EST. Posted in IT Computing, CPUs, SeaMicro

Understanding the SeaMicro Architecture

The secret begins at the motherboard level. It all starts with a single-core Intel Atom Z530 (1.6GHz, Silverthorne) and US15 chipset (Poulsbo). Astute readers will recognize this as the previous-generation Intel Atom platform for MIDs, codenamed Menlow. SeaMicro chose single-core Menlow and not the newer Pine Trail platform in order to hit its power targets. Moorestown would probably be a good fit as well, but the chips only recently started shipping. Hanging off Poulsbo is 2GB of DDR2 memory.

SeaMicro excludes the I/O hub and instead connects its custom ASIC over Poulsbo’s PCIe x2 interface. The custom ASIC emulates all I/O features; everything from SATA to Gigabit Ethernet is handled by the SeaMicro chip. As far as the Atom CPU is concerned, it has a bunch of I/O devices that hang off of Poulsbo. The virtualized I/O is a key part of making SeaMicro’s technology work.

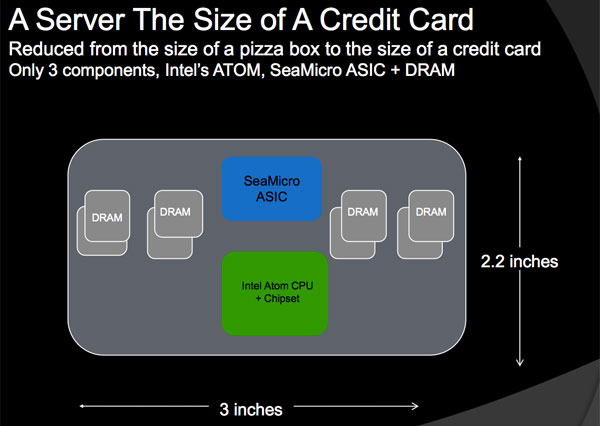

Those three chips (+ DRAM) make up the basic building block of an SM10000 server. They occupy a PCB area about the size of a credit card: 2.2” x 3”. Since this basic building block is physically autonomous, SeaMicro refers to it as a single server.

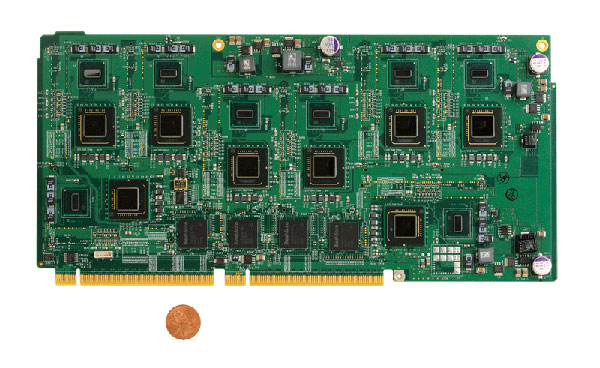

SeaMicro then takes eight of these server building blocks and puts them on a card measuring 5” x 11”. Instead of using one SeaMicro ASIC per Atom, the ratio is one ASIC per two Atom processors.

Eight Atom "servers", four SeaMicro ASICs and a 32-lane electrical PCIe interface to the rest of the box

Each one of these cards has a pair of electrical PCIe x16 connectors that plug into the SM10000’s back plane.

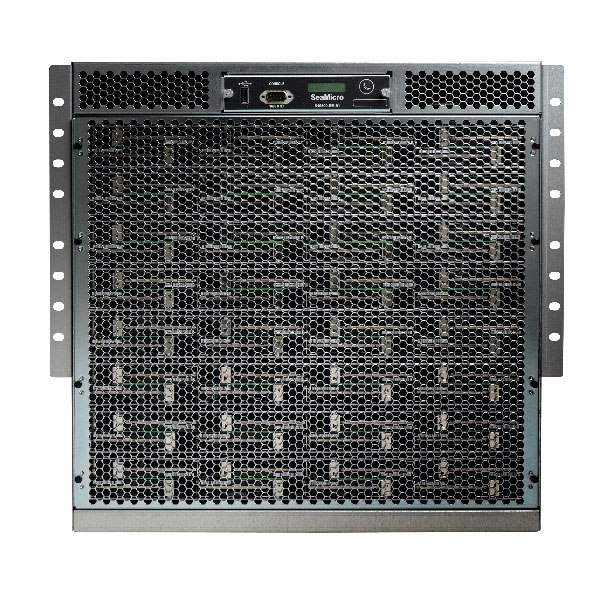

A single SM10000 can support up to 64 of these cards, which is how you end up with 512 Atom CPUs in a 10U chassis. Intra-system communication occurs over a multidimensional torus bus interface. The link is built by connecting all of the SM ASICs together, allowing each Atom server to communicate with any other server in the system.

Despite being well connected, the server architecture doesn’t support shared memory (each Atom has exclusive access to its 2GB of DRAM). The torus interface is instead used to share the virtualized I/O amongst all of the servers. If server/CPU 0 wants to access the virtual HDD on server 206, it can. Each hop takes 8 microseconds so it’s fairly low latency for storage and network I/O but not fast enough for memory.
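To get a feel for what 8 microseconds per hop means, here is a rough sketch. SeaMicro only describes the fabric as a multidimensional torus, so the 8 x 8 x 4 arrangement of the 256 ASICs below is purely an assumption for illustration, as is the idea that latency scales linearly with the number of hops. The function names are hypothetical.

```python
HOP_LATENCY_US = 8        # per-hop latency quoted in the article
TORUS_DIMS = (8, 8, 4)    # ASSUMPTION: 256 ASICs laid out as 8 x 8 x 4


def ring_distance(a, b, size):
    """Shortest distance between two positions on a ring with wraparound."""
    d = abs(a - b)
    return min(d, size - d)


def torus_hops(src, dst, dims=TORUS_DIMS):
    """Minimum hop count between two ASIC coordinates on the torus."""
    return sum(ring_distance(s, t, n) for s, t, n in zip(src, dst, dims))


def latency_us(src, dst, dims=TORUS_DIMS):
    """Estimated one-way latency, assuming latency = hops * 8 microseconds."""
    return torus_hops(src, dst, dims) * HOP_LATENCY_US


# Worst case: the destination sits half-way around every ring.
worst_hops = sum(n // 2 for n in TORUS_DIMS)
print(worst_hops, "hops,", worst_hops * HOP_LATENCY_US, "microseconds")
```

Under that assumed layout the worst case is 10 hops, or 80 microseconds. That is negligible next to a hard drive seek, but far slower than a local DRAM access, which is consistent with the fabric carrying I/O rather than memory traffic.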

Since each Atom CPU is paired with 2GB of memory, the total machine has a terabyte of DDR2 memory. But like I said earlier, the memory isn’t shared, so each server is limited to 2GB. This in itself imposes a restriction on the type of applications you’ll run on an SM10000. If you need more than 2GB of memory per server in your rack, the SM10000 isn’t for you.
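The headline numbers all fall out of the per-card figures. A quick sanity check, using only the counts quoted in the article (the variable names are mine):

```python
CARDS_PER_CHASSIS = 64   # maximum cards in one SM10000
ATOMS_PER_CARD = 8       # eight Atom "servers" per card
ATOMS_PER_ASIC = 2       # one SeaMicro ASIC per two Atoms
DRAM_PER_ATOM_GB = 2     # DDR2 attached to each Poulsbo

atoms = CARDS_PER_CHASSIS * ATOMS_PER_CARD
asics = atoms // ATOMS_PER_ASIC
total_dram_gb = atoms * DRAM_PER_ATOM_GB

print(f"{atoms} Atoms, {asics} ASICs, {total_dram_gb} GB of DRAM")
```

That works out to 512 Atoms, 256 ASICs and 1024GB of DRAM, which is where the terabyte figure comes from. Keep in mind it is 512 separate 2GB pools, not one pool.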

Poulsbo’s memory controller doesn’t support ECC, which is fine for MIDs but can be a problem for some enterprise customers. SeaMicro claims that most of its customers aren’t bothered by the lack of ECC. There’s no hope for future ECC support unless Intel eventually embraces the Atom platform for servers.

Networking

SeaMicro not only wants to replace some of your server hardware with its boxes, but also some of Cisco’s networking equipment. A single SM10000 is designed to replace your top-of-rack switch.

The idea is you’d take the uplink provided to your backbone and plug it directly into one of the ports on the back of the SM10000. All load balancing, terminal server and switching functionality is handled by the SM10000 itself. It’s all Linux based so you should be able to add a firewall as well.

On the back of the machine you’ll see rows of Ethernet ports, up to 64 to be exact. On each one of these cards is a separate CPU that is used to handle all of the network functionality of the server. It helps SeaMicro justify the pricing of the server, as you’re replacing not only your server hardware but also some expensive networking gear.

Each server has a physical Gigabit Ethernet interface on it. A fully populated SM10000 can have up to 64 Gigabit Ethernet ports, or it can be configured to have 16 10GbE ports. If you don’t need that much bandwidth you can just use the Ethernet ports you need.
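It’s worth working out how much external bandwidth that leaves per Atom. The sketch below assumes the worst case where all 512 servers push traffic out of the box at once and share the uplinks evenly; it ignores protocol overhead and any traffic that stays inside the chassis. The function name is hypothetical.

```python
SERVERS = 512  # fully populated SM10000


def per_server_mbps(ports, gbps_per_port, servers=SERVERS):
    """Even share of total uplink bandwidth per server, in Mbps."""
    return ports * gbps_per_port * 1000 / servers


gige = per_server_mbps(ports=64, gbps_per_port=1)
ten_gige = per_server_mbps(ports=16, gbps_per_port=10)

print(f"64 x GbE:   {gige} Mbps per server")
print(f"16 x 10GbE: {ten_gige} Mbps per server")
```

With every server busy, the 64-port Gigabit configuration gives each Atom about 125 Mbps to the outside world, and the 16-port 10GbE configuration about 312.5 Mbps.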

The networking is fully virtualized so each Atom “server” gets its own IP address and thinks it has its own connection to the outside world.

Storage

SeaMicro’s ASIC virtualizes four SATA ports per Atom processor. The SM10000 can support up to 64 physical 2.5” HDDs or SSDs. The customer will configure the machine to determine what four physical disks or slices of disks will map to each Atom CPU.
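SeaMicro hasn’t shown what its configuration interface looks like, so the following is only a sketch of the bookkeeping involved, not the real tool. The `Slice` type, the `validate` function and the 500GB drive size are all assumptions; the limits of four virtual disks per Atom and 64 physical disks come from the article.

```python
from dataclasses import dataclass

MAX_PHYSICAL_DISKS = 64   # from the article
PORTS_PER_ATOM = 4        # virtual SATA ports per Atom, from the article


@dataclass(frozen=True)
class Slice:
    disk: int      # physical disk index, 0..63
    size_gb: int   # capacity carved out of that disk


def validate(mapping, disk_size_gb):
    """Check a hypothetical {atom_id: [Slice, ...]} layout.

    Returns the GB allocated on each physical disk. Raises ValueError if an
    Atom has more than four virtual disks, a disk index is out of range, or
    a physical disk is overcommitted.
    """
    used = {}
    for atom, slices in mapping.items():
        if len(slices) > PORTS_PER_ATOM:
            raise ValueError(f"atom {atom}: more than {PORTS_PER_ATOM} virtual disks")
        for s in slices:
            if not 0 <= s.disk < MAX_PHYSICAL_DISKS:
                raise ValueError(f"atom {atom}: no such disk {s.disk}")
            used[s.disk] = used.get(s.disk, 0) + s.size_gb
    for disk, gb in used.items():
        if gb > disk_size_gb:
            raise ValueError(f"disk {disk}: {gb} GB allocated, {disk_size_gb} GB available")
    return used


# Example: every Atom gets one 16GB boot slice, eight Atoms per physical disk.
layout = {atom: [Slice(disk=atom // 8, size_gb=16)] for atom in range(512)}
usage = validate(layout, disk_size_gb=500)  # ASSUMPTION: 500GB drives
print(len(usage), "disks in use,", usage[0], "GB allocated on disk 0")
```

In this example all 64 disks are in use with 128GB allocated on each, which fits the web-server scenario where storage holds the OS and little else.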

The SM ASIC emulates RAID-0, but nothing more. SeaMicro states this is because its target market is to replace dozens of simple servers that have limited or no storage. If you’re replacing a couple hundred web servers that only use their storage for OS and little else, the SeaMicro approach makes sense.

OS

Linux is fully supported today, but currently there’s no official Windows support. SeaMicro claims the box works just fine running a VM with Windows Server installed; however, Microsoft doesn’t officially support the configuration. SeaMicro is working with Microsoft on fixing that, but for now, if you want support, you need to be running Linux.

53 Comments

nofumble62 - Tuesday, June 15, 2010

So now this server need only 4 racks. Cheaper, more energy efficient.

I think they have demonstrated something like 80 cores on a chip couple years ago. You bet it is coming.

joshua4000 - Tuesday, June 15, 2010

I thought current AMD/Intel CPUs will save some power due to reduced clock speeds when unused. Wasn't there that core-parking thingy introduced with Win7 as well?

So why opt for a slower solution which will probably take a good amount of time longer to finish the same task?

piroroadkill - Tuesday, June 15, 2010

Why would I want a bunch of shite servers? Initially, I thought they were just unified into one big ass server, or maybe a couple of servers.

But this as I understand it, is effectively a bunch of netbooks in an expensive box. No.

LoneWolf15 - Tuesday, June 15, 2010

Silly question, but...

Say I run VMWare ESX on this box. Is it possible to dedicate more than one server board to support a single virtual machine, or at least, more than one server board to running the VMWare OS itself? Or am I looking at it all wrong?

It seems like even if I could, VMWare's limit on how many CPU sockets you can have for a given license might be a limiting factor.

cdillon - Tuesday, June 15, 2010

ESX and other virtualization systems can cluster, but not in that way. Each Atom on the SM10000 would have to run its own copy of the virtualization software. That's not what you want to do. The SM10000 is really the *opposite* of virtualization. Instead of taking fewer, faster CPUs and splitting them up into smaller virtual CPUs, which is a very flexible way of doing things, they're using far more slower CPUs in a completely inflexible way. I'm having a really hard time thinking of any actual benefits of this thing compared to virtualization on commodity servers.

cl1020 - Tuesday, June 15, 2010

Same here. For the money you could easily build out 500 VMs on commodity blade servers running ESX and have a much more flexible solution.

I just don't see any scenario where this would be a better solution.

mpschan - Tuesday, June 15, 2010

Many people here are talking about how virtualization is a better solution and how they can't envision a market for this.

Personally, I think that this isn't a bad first design for a server of this type. Sure, it could be better. But I doubt they whipped up this idea yesterday. They probably spent a great deal of time designing this sucker from the ground up.

The market that will find this useful might be small, but it also might be large enough to fund the next version. And who knows what that might be capable of. Better cross-"server" computation, better access to other CPUs' memory, maybe other CPU options, ECC, etc.

MGSsancho - Tuesday, June 15, 2010

Would be an awesome server if you want to index things. 64 nics on it? yes I know 1 server could easy connect to thousands of connections but this beast has a market. maybe for server testing; use this box as a client node, have each atom have 25 concurrent connections. that is 12,800 connections to your server array. great for testing IMO. or how about network testing; with 64 nics you hook up 10 or so nics to each switch and test your setup. you can mix it up with 10gb Ethernet nics as well. I personally see this device being amazing for testing.

ReaM - Thursday, June 17, 2010

Hi,

Atom has a horrible performance per watt value!!!

Using simple mobile core2duo will save you more power!!!

I mean, read every Atom test, the mobile chips are far better. (as I can remember up to 9 times more efficient, for the same performance)

this product is a failure

Oxford Guy - Friday, June 18, 2010

"The things that suck about the atom:

1. double precision. Use a double, and the Atom will grind to a halt.

2. division. Use rcp + mul instead.

3. sqrt. Same as division.

All of those produce unacceptable stalls, and annihilate your performance immediately. So don't use them!

Now, you'd imagine those are insurmountable, but you'd be wrong. If you use the Intel compiler, restrict yourself to float or int based SSE instructions only, avoid the list of things that kill performance, and make extreme use of OpenMP, they really can start punching above their weight. Sure they'll never come close to an i7, but they aren't *that* bad if you tune your code carefully. In fact, the biggest problem I've found with my Atom330 system is not the CPU itself, but good old fashioned memory bandwidth. The memory bandwidth appears to be about half that of Core2 (which makes sense since it doesn't support dual channel memory), and for most people that will cripple the performance long before the CPU runs out of grunt.

The biggest problem with them right now is that they are so different architecturally from any other x86/x64 CPU that all apps need to be re-compiled with relevant compiler switches for them. Code optimised for a Core2 or i7 performs terribly on the atom."

How do these drawbacks compare to the ARM, Tegra, and Apple chips?