SeaMicro Announces SM10000 Server with 512 Atom CPUs and Low Power Consumption

by Anand Lal Shimpi on June 14, 2010 1:38 PM EST

Posted in: IT Computing, CPUs, SeaMicro

Understanding the SeaMicro Architecture

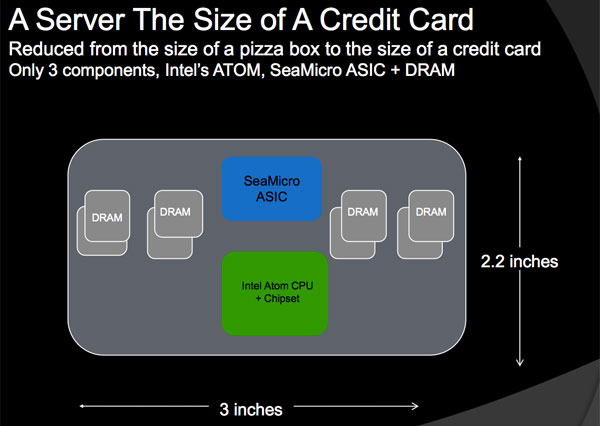

The secret begins at the motherboard level. It all starts with a single-core Intel Atom Z530 (1.6GHz, Silverthorne) and US15 chipset (Poulsbo). Astute readers will recognize this as the previous-generation Intel Atom platform for MIDs, codenamed Menlow. SeaMicro chose single-core Menlow and not the newer Pine Trail platform in order to hit its power targets. Moorestown would probably be a good fit as well, but the chips only recently started shipping. Hanging off Poulsbo is 2GB of DDR2 memory.

SeaMicro excludes the I/O hub and instead connects its custom ASIC over Poulsbo’s PCIe x2 interface. The custom ASIC emulates all I/O features; everything from SATA to Gigabit Ethernet is handled by the SeaMicro chip. As far as the Atom CPU is concerned, it has a bunch of I/O devices that hang off of Poulsbo. The virtualized I/O is a key part of making SeaMicro’s technology work.

Those three chips (+ DRAM) make up the basic building block of an SM10000 server. They occupy a PCB area about the size of a credit card: 2.2” x 3”. Since this basic building block is physically autonomous, SeaMicro refers to it as a single server.

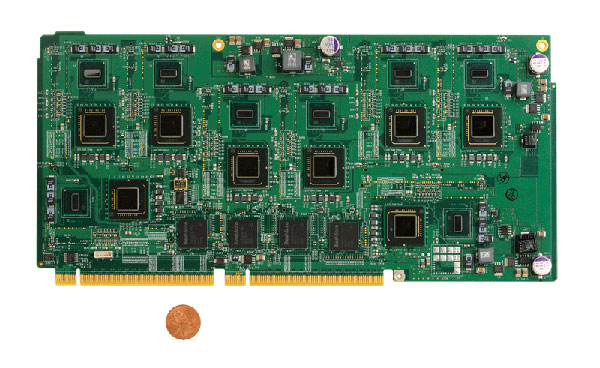

SeaMicro then takes eight of these server building blocks and puts them on a card measuring 5” x 11”. Instead of using one SeaMicro ASIC per Atom, the ratio is one ASIC per two Atom processors.

Eight Atom "servers", four SeaMicro ASICs and a 32-lane electrical PCIe interface to the rest of the box

Each one of these cards has a pair of electrical PCIe x16 connectors that plug into the SM10000’s back plane.

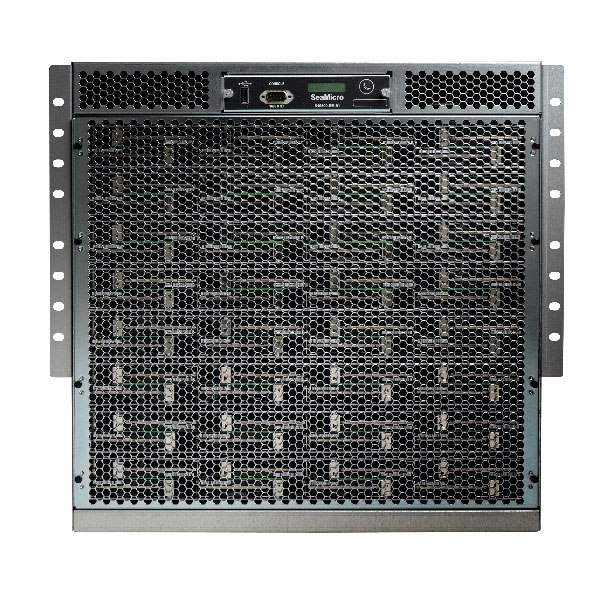

A single SM10000 can support up to 64 of these cards, which is how you end up with 512 Atom CPUs in a 10U chassis. Intra-system communication occurs over a multidimensional torus bus interface. The link is built by connecting all of the SM ASICs together, allowing each Atom server to communicate with any other server in the system.

Despite being well connected, the server architecture doesn’t support shared memory (each Atom has exclusive access to its 2GB of DRAM). The torus interface is instead used to share the virtualized I/O amongst all of the servers. If server/CPU 0 wants to access the virtual HDD on server 206, it can. Each hop takes 8 microseconds so it’s fairly low latency for storage and network I/O but not fast enough for memory.
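To get a feel for what 8 microseconds per hop means, here is a minimal sketch of hop counting on a torus. SeaMicro hasn't disclosed the dimensions of its fabric, so the 8 x 8 x 4 layout below (which happens to hold the 256 ASICs of a full box) is purely an assumption for illustration, as are the function names.

```python
from typing import Sequence

HOP_LATENCY_US = 8  # per-hop latency quoted in the article


def torus_hops(a: Sequence[int], b: Sequence[int], dims: Sequence[int]) -> int:
    """Minimum hop count between two nodes on a torus with wraparound links."""
    if not (len(a) == len(b) == len(dims)):
        raise ValueError("coordinates and dims must have the same length")
    hops = 0
    for x, y, n in zip(a, b, dims):
        d = abs(x - y) % n
        hops += min(d, n - d)  # take the shorter way around each ring
    return hops


def path_latency_us(a: Sequence[int], b: Sequence[int], dims: Sequence[int]) -> int:
    """Fabric latency in microseconds, counting only the per-hop cost."""
    return torus_hops(a, b, dims) * HOP_LATENCY_US


# Hypothetical 8 x 8 x 4 arrangement of ASICs (assumed, not from SeaMicro).
DIMS = (8, 8, 4)

if __name__ == "__main__":
    src, dst = (0, 0, 0), (7, 3, 2)
    print(torus_hops(src, dst, DIMS), "hops,", path_latency_us(src, dst, DIMS), "us")
```

Under those assumed dimensions the farthest pair of ASICs is 10 hops apart, so a worst-case trip would cost around 80 microseconds: comfortable next to a disk seek, hopeless next to a DRAM access.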

Since each Atom CPU is paired with 2GB of memory, the total machine has a terabyte of DDR2 memory. But like I said earlier, the memory isn’t shared so you have a 2GB maximum limit on each server. This in itself imposes a restriction on the type of applications you’ll run on an SM10000. If you need more than 2GB of memory per server in your rack, the SM10000 isn’t for you.
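The totals for a fully populated chassis follow directly from the per-card numbers. A quick sketch of the arithmetic, using only figures from the article (the constant and function names are my own):

```python
CARDS_PER_CHASSIS = 64
SERVERS_PER_CARD = 8      # eight Atom "servers" per card
ATOMS_PER_ASIC = 2        # one SeaMicro ASIC per two Atoms
DRAM_PER_SERVER_GB = 2    # not shared between servers

servers = CARDS_PER_CHASSIS * SERVERS_PER_CARD
asics = servers // ATOMS_PER_ASIC
total_dram_gb = servers * DRAM_PER_SERVER_GB


def fits_on_one_server(working_set_gb: float) -> bool:
    """The total is large, but each workload only ever sees its own 2GB."""
    return working_set_gb <= DRAM_PER_SERVER_GB


if __name__ == "__main__":
    print(f"{servers} servers, {asics} ASICs, {total_dram_gb} GB of DRAM in total")
    print("3GB working set fits:", fits_on_one_server(3))
```

The last check is the important one: a terabyte in aggregate does nothing for a single process that needs 3GB.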

Poulsbo’s memory controller doesn’t support ECC, which is fine for MIDs but can be a problem for some enterprise customers. SeaMicro claims that most of its customers aren’t bothered by the lack of ECC. There’s no hope for future ECC support unless Intel eventually embraces the Atom platform for servers.

Networking

SeaMicro not only wants to replace some of your server hardware with its boxes, but also some of Cisco’s networking equipment. A single SM10000 is designed to replace your top-of-rack switch.

The idea is you’d take the uplink provided to your backbone and plug it directly into one of the ports on the back of the SM10000. All load balancing, terminal server and switching functionality is handled by the SM10000 itself. It’s all Linux-based so you should be able to add a firewall as well.

On the back of the machine you’ll see rows of Ethernet ports, up to 64 to be exact. On each one of these cards is a separate CPU that is used to handle all of the network functionality of the server. It helps SeaMicro justify the pricing of the server as you’re replacing not only your server hardware but also some expensive networking gear.

Each server has a physical Gigabit Ethernet interface on it. A fully populated SM10000 can have up to 64 Gigabit Ethernet ports, or it can be configured to have 16 10GbE ports. If you don’t need that much bandwidth you can just use the Ethernet ports you need.
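It's worth working out what those uplink options mean per Atom. The sketch below assumes a fully populated box with all 512 servers pushing traffic at once and the uplinks shared evenly, which is a worst case of my own choosing rather than anything SeaMicro has specified.

```python
SERVERS = 512

# Aggregate external bandwidth for the two configurations, in Gbps.
UPLINK_OPTIONS_GBPS = {
    "64 x 1GbE": 64 * 1,
    "16 x 10GbE": 16 * 10,
}


def per_server_mbps(total_gbps: float, servers: int = SERVERS) -> float:
    """Even share of the uplinks per server, in Mbps (assumes all are active)."""
    return total_gbps * 1000 / servers


if __name__ == "__main__":
    for name, total in UPLINK_OPTIONS_GBPS.items():
        print(f"{name}: {total} Gbps total, {per_server_mbps(total):.1f} Mbps per server")
```

In practice not every server will be saturating its link at the same moment, so the real per-server figure will usually be better than this floor.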

The networking is fully virtualized so each Atom “server” gets its own IP address and thinks it has its own connection to the outside world.

Storage

SeaMicro’s ASIC virtualizes four SATA ports per Atom processor. The SM10000 can support up to 64 physical 2.5” HDDs or SSDs. The customer will configure the machine to determine what four physical disks or slices of disks will map to each Atom CPU.
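SeaMicro didn't show its configuration tooling, so the following is only a hypothetical sketch of what such a mapping could look like: each server gets one to four slices, handed out round-robin across the physical drives. The `Slice` type and `build_mapping` function are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List

PHYSICAL_DISKS = 64
VIRTUAL_PORTS_PER_SERVER = 4
SERVERS = 512


@dataclass(frozen=True)
class Slice:
    disk: int   # physical drive index, 0..63
    index: int  # slice number within that drive


def build_mapping(
    servers: int = SERVERS,
    ports_used: int = 1,
    disks: int = PHYSICAL_DISKS,
) -> Dict[int, List[Slice]]:
    """Give each server `ports_used` slices, spread round-robin over the drives."""
    if not 1 <= ports_used <= VIRTUAL_PORTS_PER_SERVER:
        raise ValueError("each Atom sees at most four virtual SATA ports")
    next_slice = [0] * disks  # next free slice number on each drive
    mapping: Dict[int, List[Slice]] = {}
    cursor = 0
    for server in range(servers):
        assigned = []
        for _ in range(ports_used):
            disk = cursor % disks
            assigned.append(Slice(disk, next_slice[disk]))
            next_slice[disk] += 1
            cursor += 1
        mapping[server] = assigned
    return mapping


if __name__ == "__main__":
    mapping = build_mapping()
    sharing_disk_0 = sum(1 for s in mapping.values() if s[0].disk == 0)
    print("servers sharing drive 0:", sharing_disk_0)
```

With one slice per server, a full box puts eight servers on each drive, which is tolerable for boot-and-log workloads and a good illustration of why this isn't a storage-heavy platform.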

The SM ASIC emulates RAID-0, but nothing more. SeaMicro states this is because its target market is to replace dozens of simple servers that have limited or no storage. If you’re replacing a couple hundred web servers that only use their storage for OS and little else, the SeaMicro approach makes sense.

OS

Linux is fully supported today but currently there’s no official Windows support. SeaMicro claims the box works just fine running a VM with Windows Server installed; however, Microsoft doesn’t officially support the configuration. SM is working with Microsoft on fixing that but for now, if you want support, you need to be running Linux.

53 Comments


datasegment - Monday, June 14, 2010

Looks to me like the savings go up, but the amount of power used steadily *increases* when CPU usage drops... I'm guessing the charts and titles are mismatched?

JarredWalton - Monday, June 14, 2010

No... it's a poorly made marketing chart IMO. Look at the number of systems listed:

1000 Dell vs. 40 SeaMicro = $1084110 ($52.94 per "server")

2000 Dell vs. 80 SeaMicro = $1638120 ($39.99 per "server")

4000 Dell vs. 160 SeaMicro = $2718720 ($33.19 per "server")

Based on that, the savings per "server" actually drops at lower workloads -- that's with 512 Atom chips in each SeaMicro server. It's also a bit of a laugh that 20480 Atom chips are "equal" to 8000 Nehalem cores (dual socket + quad-core, plus Hyper-Threading) under 100% load. Although they're probably using the slowest/cheapest E5502 CPU as a comparison point (dual-core, no HTT). Under 100% load, the Nehalem cores are far more useful than Atom.

cdillon - Monday, June 14, 2010

Virtualization has already solved the problem they've attempted to solve, and I think virtualization does a better job at it. For example, I'm going to be implementing desktop virtualization at my workplace by next year, and have been looking at blade systems to house the virtualization hosts. You can pack at least twice the computing power into the same rack space, and use less than twice the wall-power (using low-power Xeon or Opteron CPUs and low-power DIMMs). You actually have an opportunity to SAVE EVEN MORE power because systems like VMware vSphere can pack idle virtual machines onto fewer hosts and can dynamically power on and off entire hosts (blades) as demand requires it. Because the SM10000 does non-virtualized (other than I/O) 1:1 computing, there's no opportunity to power-down completely idle CPU nodes, yet keep the software "running" for when that odd network request comes in and needs attention. Virtualization does that.

PopcornMachine - Monday, June 14, 2010

Couldn't you also use this box as a VMware server, and then save even more energy?

cdillon - Monday, June 14, 2010

No, for several reasons, but mainly the licensing costs for vSphere for 512 CPU sockets. You wouldn't want to waste a fixed per-socket licensing cost on such slow, single-core CPUs. You want the best performance/dollar ratio, and that generally means as many cores as you can fit per socket. With free virtualization solutions that wouldn't really matter, but there are no free virtualization solutions that I'd want to manage 512 individual VM hosts with. Remember, the SM10000 is NOT a single 512-core server. It's 512 independent 1-core servers. Not only that, but each single Atom core is going to have a hard time running more than one or two VM guests at a time anyway, so trying to virtualize an Atom seems rather pointless.

Sorry if this is a double post, first time I tried to post it just hung for about 10 minutes until I gave up on it and tried again...

PopcornMachine - Tuesday, June 15, 2010

In the OS section, the article mentions running Windows under a VM. So sounds like it can handle VMware to me.

On the other hand, if the box does not let the cores work together in some type of cluster, which I assumed was the point of it, then I don't see the point of it. Just a bunch of cores too weak to power a netbook properly?

fr500 - Tuesday, June 15, 2010

You got 512 cores, why would you go for virtualization?

beginner99 - Tuesday, June 15, 2010

yeah not to mention other advantages of virtualisation especially when performing upgrades on these web applications.

Or single-threaded performance. Really only useful if your apps never ever do anything moderately CPU-intensive.

PopcornMachine - Monday, June 14, 2010

When Intel introduced the Atom, this was what I thought would be one of its main uses.

Cluster a bunch together. Low power, scalable, cheap. No brainer. Makes more sense than using them in underpowered netbooks.

How come it took someone this long to do it?

It would be nice to see some benchmarks to be sure, though.

moozoo - Monday, June 14, 2010

I'd love to see something like this made using beefed-up Tegra 2 type chipsets supporting OpenCL.