Since NVIDIA did not send out review samples for the G(T) 200 series last year, we’ve been slowly building up our database of results as cards have arrived from the vendors themselves. We saw the GT 220 late last year, and last month we finally saw the GT 240. Thanks to MSI, today we’ll be looking at the final card in the G(T) 200 series: the GeForce 210. The specific card we’re looking at is MSI’s N210-MD512H.

| | 9600GT | GT 220 | 9500GT | 9400GT | G 210 |
|---|---|---|---|---|---|
| Stream Processors | 64 | 48 | 32 | 16 | 16 |
| Texture Address / Filtering | 32 / 32 | 16 / 16 | 16 / 16 | 8 / 8 | 8 / 8 |
| ROPs | 16 | 8 | 8 | 4 | 4 |
| Core Clock | 650MHz | 625MHz | 550MHz | 550MHz | 589MHz |
| Shader Clock | 1625MHz | 1360MHz | 1400MHz | 1400MHz | 1402MHz |
| Memory Clock | 900MHz (1800MHz effective) GDDR3 | 900MHz (1800MHz effective) GDDR3 | 400MHz (800MHz effective) DDR2 | 400MHz (800MHz effective) DDR2 | 500MHz (1000MHz effective) DDR2 |
| Memory Bus Width | 256-bit | 128-bit | 128-bit | 128-bit | 64-bit |
| Frame Buffer | 512MB | 512MB | 512MB | 512MB | 512MB |
| Transistor Count | 505M | 486M | 314M | 314M | 260M |
| Manufacturing Process | TSMC 55nm | TSMC 40nm | TSMC 55nm | TSMC 55nm | TSMC 40nm |
| Price Point | $69-$85 | $69-$79 | $45-$60 | $40-$60 | $30-$50 |

Like the GT 220, the G210 started life over the summer as an OEM-only card, earning its wings for a public launch in October alongside the GT 220. G210 is based on GT218, the smallest member of NVIDIA’s DirectX 10.1 GPU lineup, built out of 260M transistors and measuring a mere 57mm² on TSMC’s 40nm process. As a product it replaces NVIDIA’s similar 9400GT, while in terms of pricing it replaces the slower 8400 GS.

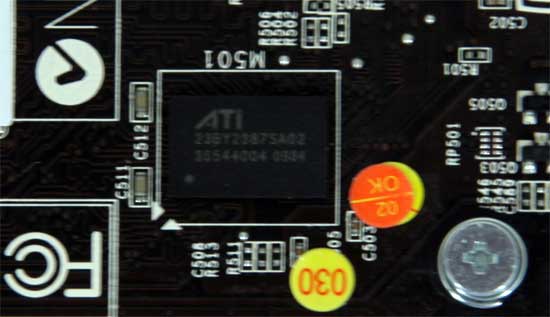

Coupled with the GT218 GPU on the G210 is 512MB of DDR2 RAM, using the customary 64-bit memory bus. Interestingly, unlike most other entry-level products, the G210 only comes in one memory configuration: 512MB. Even more interesting are the DDR2 chips on our MSI card – 4 chips we can’t identify bearing the ATI logo.

Since the G210 is intended to replace the 9400GT, the GPU configuration is basically the same, composed of 16 SPs, 8 TMUs, and 4 ROPs. The clocks are 589MHz for the core, 1402MHz for the shaders, and 500MHz (1000MHz effective) for the RAM. Compared to the GT 220, this gives the G210 around 30% of the shader power, 50% of the texture and ROP power, and 27% of the memory bandwidth. All of this consumes 30W under load, and less than 7W when idling.
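As a rough check on those ratios, the following is a minimal Python sketch. It assumes that peak shader, texture, and ROP throughput each scale as unit count times the relevant clock, and that memory bandwidth is bus width times effective data rate; real-world performance will not follow such a simple model exactly. The `Card` class and its method names are illustrative only, and the figures are the ones quoted in this article. Under these assumptions the G210 lands at roughly 34% of the GT 220's shader throughput, 47% of its texture and ROP throughput, and 28% of its memory bandwidth, in line with the rounded figures above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Headline specs for one card (illustrative helper, not a vendor API)."""
    name: str
    stream_processors: int
    texture_units: int
    rops: int
    core_mhz: float
    shader_mhz: float
    mem_effective_mhz: float  # effective data rate in MT/s
    bus_width_bits: int

    def shader_rate(self) -> float:
        # Assumption: shader throughput ~ SP count x shader clock
        return self.stream_processors * self.shader_mhz

    def texture_rate(self) -> float:
        # Assumption: texture throughput ~ TMU count x core clock
        return self.texture_units * self.core_mhz

    def rop_rate(self) -> float:
        # Assumption: pixel throughput ~ ROP count x core clock
        return self.rops * self.core_mhz

    def bandwidth_gb_s(self) -> float:
        # bytes per transfer x million transfers per second, in GB/s
        return self.bus_width_bits / 8 * self.mem_effective_mhz / 1000


GT220 = Card("GT 220", 48, 16, 8, 625, 1360, 1800, 128)
G210 = Card("G 210", 16, 8, 4, 589, 1402, 1000, 64)


def relative(card: Card, baseline: Card) -> dict:
    """Return each throughput figure of `card` as a fraction of `baseline`."""
    return {
        "shader": card.shader_rate() / baseline.shader_rate(),
        "texture": card.texture_rate() / baseline.texture_rate(),
        "rop": card.rop_rate() / baseline.rop_rate(),
        "bandwidth": card.bandwidth_gb_s() / baseline.bandwidth_gb_s(),
    }


if __name__ == "__main__":
    for metric, ratio in relative(G210, GT220).items():
        print(f"{metric:>9}: {ratio:.1%} of the GT 220")
```

The same helper can be pointed at any other pair of columns in the spec table, for example to compare the G210 against the 9400GT it replaces.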

The G210 is a low-profile card, the only reference card to be that way out of NVIDIA’s entire 200-series lineup. NVIDIA’s reference design (not that anyone quite uses it) is a single-slot card with a similarly wide active cooler. For our MSI card, MSI has forgone that for a passive design, using a single double-wide heatsink. Most G210 cards do use some form of an active cooler, so MSI’s N210 is fairly unique in that regard.

Since the G210 is a low-profile card, the port configurations are as expected: 1 DVI, 1 HDMI or DisplayPort, and then the detachable VGA port. In the case of MSI's card, they have gone with an HDMI port as the second digital port, while the VGA port can be used on a full-profile bracket or a second bracket in a low-profile case.

Finally, in terms of pricing, the G210 is the ultimate budget card. With the MSI card going for only $30 after rebate, it’s for all practical purposes as cheap as a video card can get. Even the DDR2 version of the Radeon HD 5450 we reviewed last week goes for nearly 50% more. In terms of pricing this makes its competitors the GeForce 8400GS and the DDR2-based Radeon HD 4350, the latter of which is also its closest competitor in terms of feature parity. It goes without saying that this is the ultimate entry-level card, and performs accordingly.

24 Comments


gumdrops - Thursday, February 18, 2010 - link

Where can I find this card for $30? Froogle and Newegg both list this card at $40 which is only $2 cheaper than a 5450. In fact, the cheapest 210 of *any* brand is $38.99. With only a $2-$5 difference to the 5450, is it really value for money to go with this card?

Taft12 - Thursday, February 18, 2010 - link

In a word: No.

ncix.com has the BFG version of this card on sale for $29.99 CAD, but Ryan makes it pretty clear that MSI is the only OEM that produces a G210 worth owning.

mindless1 - Thursday, February 18, 2010 - link

If building for a small form factor system you have to be a bit more concerned because you may not have any place to put bigger 92+mm fans, so for any particular airflow rate your smaller fans are running at higher RPM already.

If you are building towards low noise, your system will be quieter by having a lower intake and exhaust rate, then a very low RPM fan on a heatsink instead of a passive heatsink.

That way it will also accumulate less dust, and help cool other areas like the power regulation circuit (mosfets). It also makes a product more compatible to have a single-height heatsink without an elaborate construction to maximize surface area like you'd need if that single height sink were passive.

Don't fear or avoid fans, just avoid high(er) RPM fans. Low RPM fans are inaudible and last a long time, provided the manufacturer doesn't pick a very low quality fan.

greenguy - Wednesday, February 24, 2010 - link

You've got a good point there - that's why I went with the megahalems and a pwm fan (as opposed to ninja), and scythe kama pwm fans on both intake and exhaust (on 400rpm or so). I probably should have done the same with the graphics card, but didn't do the research. Do you have any pointers to specific cards or coolers?

I might have to come up with a more localized fan or some ducting.

AnnonymousCoward - Wednesday, February 17, 2010 - link

I just wanted to say, great article and I love the table on Page 1. Without it, it's so hard to keep model numbers straight.

teko - Wednesday, February 17, 2010 - link

Come on, does it really make sense to benchmark Crysis for this card? Choose something that the card buyer will actually use/play!

killerclick - Wednesday, February 17, 2010 - link

Once my discrete graphics card died on me on a Saturday afternoon and since I didn't have a spare or an IGP my computer was useless until Monday around noon. I'm going to get this card to keep as a spare. It has passive cooling, it's small, it's only $30 and I'm sure it'll perform better than any IGP even if I had one.

greenguy - Wednesday, February 17, 2010 - link

I was quite amazed to see this review of the card I had just purchased two of. I wasn't sure, but I have since determined that you can run two 1920x1200 monitors from the one card (using the DVI port and the HDMI port). This is pretty cool - it doesn't force you to use the D-SUB port if you want multi-monitors, so you have all that fine detailed resolutiony goodness.

It looks very promising that I will be able to get the quad monitor in portrait setup working in Linux like I wanted to, using two of these cards rather than an expensive Quadro solution. Fingers crossed that I can do it also in FreeBSD or OpenSolaris. I really want the self-healing properties of ZFS, because this will be a developer workstation and I don't want any errors not of my own introduction.

I'm using a P183 case, and I've found that the idle temperature of the heatsinks is 61 degrees C without the front fan (the one in front of the top 3.5" enclosure). Installing a Scythe Kama PWM fan there I got this down to 47 degrees C. (Note that for both of these measurements I had both exhaust fans installed, though they are only doing about 500rpm tops.)

Using nvidia-settings to monitor the actual temperature of the GPU itself, I am getting a temperature of 74 degrees C on the card that is running two displays with compiz on, while the other is running at 54 degrees C.

Note that the whole system is a Xeon 3450 (equivalent to i5-750 with HT), 8GB RAM, with Seasonic X-650, and it is idling at 62-67 Watts. Phenomenal.

Exelius - Tuesday, February 16, 2010 - link

I'd be interested in seeing how this card performs as an entry-level CAD card. I understand it's not going to set any records, but for a low-end CAD station coupled with 8GB RAM and a Core i7, does this card perform acceptably with AutoCAD 2010 (or perform at all?)

I'm not a CAD guy, btw, so don't flame me too hard if this is totally unacceptable (and I know you can't benchmark AutoCAD so I'm not expecting numbers.) This card just shows up in a lot of OEM configurations so I'm curious if I'd need to replace it with something beefier for a CAD station.

LtGoonRush - Tuesday, February 16, 2010 - link

The reality is that the cards at this price point don't really provide any advantages over onboard video to justify their cost. There's so little processing power that they still can't game at all and can't provide a decent HTPC experience; all they're capable of is the same basic video decode acceleration as any non-Atom video chipset. This sort of makes sense when you're talking about an Ion 2 drop-in accelerator for an Atom system to compete with Broadcom, but I just don't see the value proposition over AMD HD 4200 or Intel GMA X4500 (much less Intel HD Graphics in Clarkdale). I'd like to see how the upcoming AMD 800-series chipsets with onboard graphics stack up.