Why NVIDIA Is Focused On Geometry

Up until now we haven’t talked a great deal about the performance of GF100, and to some extent we still can’t. We don’t know the final clock speeds of the shipping cards, so we don’t know exactly what the card will be like. But what we can talk about is why NVIDIA made the decisions they did: why they went for the parallel PolyMorph and Raster Engines.

The DX11 specification doesn’t leave NVIDIA with a ton of room to add new features. Without the capsbits, NVIDIA can’t put new features on their hardware and easily expose them, nor would they want to at the risk of having those features (and hence die space) go unused. DX11 rigidly says what features a compliant video card should offer, and leaves you very little room to deviate.

So NVIDIA has taken a bit of a gamble. There’s no single wonder-feature in the hardware that immediately makes it stand out from AMD’s hardware – NVIDIA has post-rendering features such as 3D Vision or compute features such as PhysX, but when it comes to rendering they can only do what AMD does.

But the DX11 specification doesn’t say how quickly you have to do it.

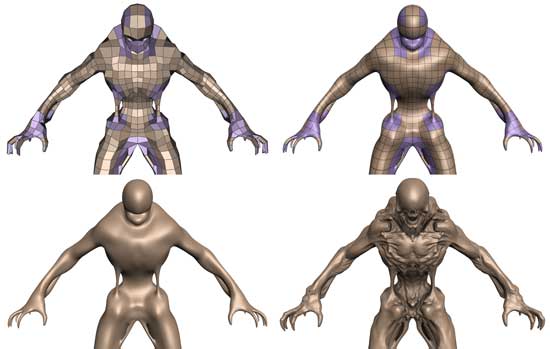

Tessellation in action

To differentiate themselves from AMD, NVIDIA is taking the tessellator and driving it for all it’s worth. While AMD merely has a tessellator, NVIDIA is counting on the tessellator in their PolyMorph Engine to give them a noticeable graphical advantage over AMD.

To put things in perspective, between NV30 (GeForce FX 5800) and GT200 (GeForce GTX 280), the geometry performance of NVIDIA’s hardware only increased roughly 3x. Meanwhile the shader performance of their cards increased by over 150x. GF100, by comparison, has 8x the geometry performance of GT200, and NVIDIA tells us this is something they have measured in their labs.
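As a rough sanity check on those multipliers, the arithmetic can be sketched as below. This uses only the figures quoted above and assumes the generation-over-generation gains simply compound; the constant and function names are ours, not NVIDIA's, and "over 150x" is treated as a lower bound.

```python
# Back-of-the-envelope arithmetic using only the multipliers quoted in the article.
GEOMETRY_NV30_TO_GT200 = 3.0    # "roughly 3x"
SHADER_NV30_TO_GT200 = 150.0    # "over 150x", treated here as a lower bound
GEOMETRY_GT200_TO_GF100 = 8.0   # NVIDIA's claimed lab measurement


def shader_to_geometry_gap(shader_gain: float, geometry_gain: float) -> float:
    """How many times faster shader throughput grew than geometry throughput."""
    return shader_gain / geometry_gain


def compounded_gain(*gains: float) -> float:
    """Multiply successive generation-over-generation gains together."""
    total = 1.0
    for gain in gains:
        total *= gain
    return total


gap = shader_to_geometry_gap(SHADER_NV30_TO_GT200, GEOMETRY_NV30_TO_GT200)
nv30_to_gf100 = compounded_gain(GEOMETRY_NV30_TO_GT200, GEOMETRY_GT200_TO_GF100)

print(f"Shader growth outpaced geometry growth by at least {gap:.0f}x")
print(f"Implied NV30 -> GF100 geometry gain: about {nv30_to_gf100:.0f}x")
```

In other words, if NVIDIA's 8x claim holds, a single generation would deliver a larger geometry jump than the previous several generations combined.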

So why does NVIDIA want so much geometry performance? Because tessellation allows them to take the same assets from the same games as AMD and generate something that will look better. With more geometry power, NVIDIA can use tessellation and displacement mapping to generate more complex characters, objects, and scenery than AMD can at the same level of performance. And this is why NVIDIA has 16 PolyMorph Engines and 4 Raster Engines: they need a lot of hardware to generate and process that much geometry.
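To give a feel for why tessellation plus displacement mapping is so geometry-hungry, here is a minimal CPU-side sketch. It is not how the PolyMorph Engine or the DX11 tessellator is implemented; it uniformly subdivides one triangle and pushes each new vertex along the face normal. The `height` callback is a hypothetical stand-in for sampling a displacement map, and all names are ours.

```python
import math
from typing import Callable, Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def _sub(p: Vec3, q: Vec3) -> Vec3:
    return (p[0] - q[0], p[1] - q[1], p[2] - q[2])


def _cross(p: Vec3, q: Vec3) -> Vec3:
    return (p[1] * q[2] - p[2] * q[1],
            p[2] * q[0] - p[0] * q[2],
            p[0] * q[1] - p[1] * q[0])


def tessellate_triangle(
    a: Vec3, b: Vec3, c: Vec3, factor: int,
    height: Callable[[float, float], float],
) -> Tuple[List[Vec3], List[Tuple[int, int, int]]]:
    """Split triangle abc into factor**2 triangles and displace the vertices.

    height(u, v) is a hypothetical displacement-map lookup in barycentric
    coordinates; each vertex moves that far along the flat face normal.
    """
    n = _cross(_sub(b, a), _sub(c, a))
    length = math.sqrt(n[0] ** 2 + n[1] ** 2 + n[2] ** 2)
    normal = (n[0] / length, n[1] / length, n[2] / length)

    vertices: List[Vec3] = []
    index: Dict[Tuple[int, int], int] = {}
    for i in range(factor + 1):
        for j in range(factor + 1 - i):
            u, v = i / factor, j / factor
            w = 1.0 - u - v
            d = height(u, v)
            index[(i, j)] = len(vertices)
            vertices.append(tuple(
                w * a[k] + u * b[k] + v * c[k] + d * normal[k] for k in range(3)
            ))

    triangles: List[Tuple[int, int, int]] = []
    for i in range(factor):
        for j in range(factor - i):
            triangles.append((index[(i, j)], index[(i + 1, j)], index[(i, j + 1)]))
            if j < factor - i - 1:  # the inverted triangle between two rows
                triangles.append(
                    (index[(i + 1, j)], index[(i + 1, j + 1)], index[(i, j + 1)])
                )
    return vertices, triangles


if __name__ == "__main__":
    for factor in (1, 4, 16):
        _, tris = tessellate_triangle(
            (0, 0, 0), (1, 0, 0), (0, 1, 0), factor, lambda u, v: 0.1 * u * v
        )
        print(factor, len(tris))
```

The triangle count grows with the square of the subdivision factor in this scheme, which is why pushing tessellation hard demands far more geometry throughput than rendering the original low-polygon mesh.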

NVIDIA believes their strategy will work, and if geometry performance is as good as they say it is, then we can see why they feel this way. Game art is usually created at far higher levels of detail than what eventually ends up being shipped, and with tessellation there’s no reason why a tessellated and displacement mapped representation of that high quality art can’t come with the game. Developers can use tessellation to scale down to whatever the hardware can do, and in NVIDIA’s world they won’t have to scale it down very far to meet up with the GF100.

At this point tessellation is a message that’s much more for developers than it is for consumers. As DX11 is required to take advantage of tessellation, only a few games that use it exist today, though plenty more are on the way. NVIDIA needs to convince developers to ship their art with detailed enough displacement maps to match GF100’s capabilities, and while that isn’t too hard, it’s also not a walk in the park. To that end they’re also extolling the other virtues of tessellation, such as the ability to do higher quality animations by only needing to animate the control points of a model, and letting tessellation take care of the rest. A lot of the success of the GF100 architecture is going to ride on how developers respond to this, so it’s going to be something worth keeping an eye on.
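The control-point idea can be illustrated with a deliberately simple 2D analogue: a quadratic Bézier curve stands in for a tessellated patch, and only its three control points are animated. This is a sketch under our own assumptions, not DX11 hull or domain shader code, and every name in it is hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def evaluate_quadratic_bezier(
    p0: Point, p1: Point, p2: Point, segments: int
) -> List[Point]:
    """Return segments + 1 points on the curve defined by three control points."""
    points: List[Point] = []
    for k in range(segments + 1):
        t = k / segments
        w0 = (1.0 - t) ** 2
        w1 = 2.0 * (1.0 - t) * t
        w2 = t ** 2
        points.append((
            w0 * p0[0] + w1 * p1[0] + w2 * p2[0],
            w0 * p0[1] + w1 * p1[1] + w2 * p2[1],
        ))
    return points


def move_control_point(p: Point, dy: float) -> Point:
    """Animate a single control point by shifting it vertically."""
    return (p[0], p[1] + dy)


if __name__ == "__main__":
    p0, p1, p2 = (0.0, 0.0), (1.0, 2.0), (2.0, 0.0)
    for frame in range(3):
        # Only the middle control point is touched per frame; the dense set
        # of curve points is regenerated from the control points each time.
        animated = move_control_point(p1, -0.5 * frame)
        curve = evaluate_quadratic_bezier(p0, animated, p2, segments=32)
        print(frame, len(curve), curve[16])
```

The animation cost scales with the number of control points rather than with the number of generated vertices, which is the benefit NVIDIA is pitching to developers.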

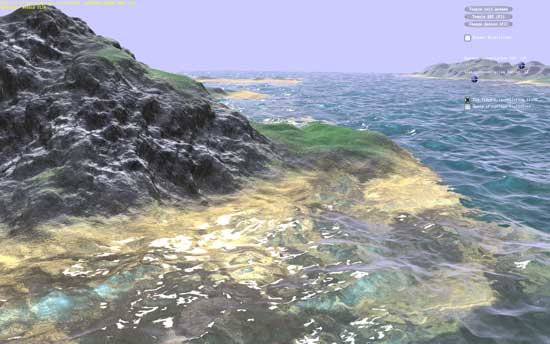

NVIDIA's water tessellation demo

NVIDIA's hair tessellation demo

115 Comments


FlyTexas - Monday, January 18, 2010 - link

I have a feeling that nVidia is taking the long road here... The past 6 months have been painful for nVidia, however I think they are looking way ahead. At its core, the 5000 series from AMD is really just a supersized 4000 series. Not a bad thing, but nothing new either (DX11 is nice, but that'll be awhile, and multiple monitors are still rare).

Games have all looked the same for years now. CPU and GPU power have gone WAY up in the past 5 years, but too much is still developed for DX9 (X360/PS3 partly to blame, as is Vista's poor adoption), and I suspect that even the 5000 series is really still designed around DX9 and games meant for it with a few "enhancements".

This new chip seems designed for DX11 and much higher detailed graphics. Polygon counts can go up with this, the number of new details can really shine, but only once games are designed from scratch for it. From that point, the 6 month wait isn't a big deal, it'll be another few years before games are really designed from scratch for DX11 ONLY. Otherwise you have DX9 games with a few "enhancements" that don't add to gameplay.

It seems like we are really skipping DX10 here, partly due to Vista's poor adoption, partly due to XP not being able to use DX10. With Windows 7 being a success and DX11 backported to Vista, I think in the next 2-3 years you'll finally see most games come out that really require Vista/7 because they will require DX10/11.

Of course, my 260GTX still runs everything I throw at it, so until games get more complex or something else changes, I see no reason to upgrade. I thought about a 5870 as an upgrade, but why? Everything already runs fast enough, what does it get me other than some headroom? If I was still on a 8800GT, it would make sense, but I'd rather wait for nVidia to launch so the prices come down.

PorscheRacer - Tuesday, January 19, 2010 - link

Well then there's the fact ATI designed their 2000 series (and 3000 and 4000 series) to comply with the full DirectX 10 specification. NVIDIA didn't have the chips required for this spec, and talked Microsoft into castrating DX10 by only adding in a few things. Tessellation was notably left out. ATI was hung out to dry on performance and features wasted on die. They finally got DX10.1 later on but the damage was done.

Sure people complained about Vista, mostly gamers as games ran slower, but I wonder how those games would have been if DX10 was run at the full spec (which was marginally lower than DX11 today)?

Scali - Wednesday, January 27, 2010 - link

I think you need to read this, and reconsider your statement: http://scalibq.spaces.live.com/blog/cns!663AD9A4F9CB0661!194.entry

jimhsu - Monday, January 18, 2010 - link

I made this post in another forum, but I think it's relevant here:

---

Yes, I'm beginning to see this [games becoming less GPU limited and more CPU limited] with more mainstream games (to repeat, Crysis is NOT a mainstream game). FLOP wise, a high end video card (i.e. 5970 at 5 TFLOP) is something like 100 TIMES the performance of a high end CPU (i7 at 50 GFLOPS).

In comparison, during the 2004 days, we had GPUs like the 6800 Ultra (54 GFLOP) and P4's (6 GFLOP) (historical data here: http://forum.beyond3d.com/showthread.php?t=51677). That's 9X the performance. We've gone from 9X to 100X the performance in a matter of 5 years. No wonder few modern games are actually pushing modern GPUs (requiring people who want to "get the most" out of their high powered GPUs to go for multiple screens, insane AA/AF, insane detail settings, complex shaders, etc).

I know this is a horrible comparison, but still - it gives you an idea of the imbalance in performance. This kind of reminds me of the whole hard drive capacity vs. transfer rate argument. Today's 2 TB monsters are actually not much faster than the few GB drives at the turn of the millennium (and even less so latency wise).

Personally, I think the days of GPU bound (for mainstream discrete GPU computing) closed when Nvidia's 8 series launched (the 8800GTX is perhaps the longest-lived video card ever made). And in general, when the industry adopted programmable compute units (aka DirectX 10).

AznBoi36 - Tuesday, January 19, 2010 - link

Actually the Radeon 9700/9800 Pro had a pretty long life too. The 9700 Pro I bought in 2002/2003 had lasted me all the way to early 2007, which was when I then bought a 8800GTS 640mb. 4 years is pretty good. It could have lasted longer, but then I was itching for a new platform and needed to get a PCI-Express card (the Radeon was AGP).

RJohnson - Monday, January 18, 2010 - link

Sorry you lost all credibility when you tried to spin this bullsh*#t "Today's 2 TB monsters are actually not much faster than the few GB drives at the turn of the millennium"

Go try and run your new rig off one of those old drives, come back and post your results in 2 hours when your system finally boots.

jimhsu - Monday, January 18, 2010 - link

A fun chart. Note the performance disparity. http://i65.photobucket.com/albums/h204/killer-ra/V...

jimhsu - Monday, January 18, 2010 - link

Disclosure: I'm still on a 8800 GTS 512, and I am in no pressure to upgrade right now. While a 58xx would be nice to have, on a single monitor I really have no need to upgrade. I may look into going i7 though.

dentatus - Monday, January 18, 2010 - link

If something works well for you then there is no real reason (or need) to upgrade.

I still run an 8800 ultra, it still runs many games well on a 22 inch monitor. The GT200 was really only a 50% boost over the 8 series on average. For comparison, I bought a second hand ultra for $60, transplanted both of them into an i7 based system and this really produced a significant boost over a GTX285 in the games I liked; about 25% more performance, roughly equivalent to HD5850, albeit not always as smooth.

It would be good to upgrade to a single GPU that is more than double the performance of this kind of setup. But a HD5800 series card is not in that league, and it remains to be seen if the GF100 is.

dentatus - Monday, January 18, 2010 - link

I agree this chip does seem designed around new or upcoming features. Many architectural shortcomings from the GT200 chip seem to be addressed and worked around getting usable performance (like tessellation) for new API features.

Anyway to be pragmatic about things, nvidia's history leaves much to be desired; performance promised and performance delivered is very variable. HardOCP mentioned the 5800 Ultra launch as a con, there is also the G80 launch on the flip side.

A GPU's theoretical performance and the expectations hanging around it are nothing to make choices by, wait for the real proof. Anyone recall the launch of the 'monstrous' 2900XT? A toothless beast that one.