AnandTech Tests GPU Accelerated Flash 10.1 Prerelease

by Anand Lal Shimpi on November 19, 2009 12:00 AM EST, posted in GPUs

I suppose I could start this article off with a tirade on how frustrating Adobe Flash is. But, I believe the phrase “preaching to the choir” would apply.

I’ve got a two-socket, 16-thread, 3GHz Nehalem Mac Pro as my main workstation. I have an EVGA GeForce GTX 285 in there. It’s fast.

It’s connected to a 30” monitor, running at its native resolution of 2560 x 1600.

The machine is fast enough to do things I’m not smart or talented enough to know how to do. But the one thing it can’t do is play anything off of Hulu in full screen without dropping frames.

This isn’t just a Mac issue; it’s a problem across all OSes and systems, regardless of hardware configuration. Chalk it up to poor development on Adobe’s part or... some other fault of Adobe’s, but Flash playback is extremely CPU intensive.

Today, that’s about to change. Adobe has just released a preview of Flash 10.1 (the final version is due out next year) for Windows, OS X and Linux. While all three platforms get performance enhancements, only the Windows version gets H.264 decode acceleration for Flash video, using DXVA (OS X and Linux are out of luck there for now).

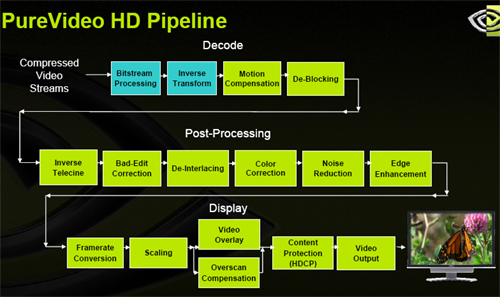

The same GPU-based decode engines that are used to offload CPU decoding of Blu-rays can now be used to decode H.264 encoded Flash video. NVIDIA also let us know that GPU acceleration for Flash animation is coming in a future version of Flash.

To get the 10.1 pre-release, just go here. NVIDIA recommends that you uninstall any existing versions of Flash before installing 10.1, but I’ve found that upgrading works just as well.

What Hardware is Supported?

As I just mentioned, Adobe is using DXVA to accelerate Flash video playback, which means you need a GPU that properly supports DXVA2. From NVIDIA, that means anything newer than G80 (sorry, GeForce 8800 GTX, GTS 640/320MB and Ultra owners, you’re out of luck). In other words, anything from the GeForce 8 series, 9 series or GeForce GT/GTX series, as well as their mobile equivalents, is supported, with the exception of the G80-based parts I just mentioned.
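The NVIDIA rule above boils down to “GeForce 8, 9 and GT/GTX series, minus the original G80 boards.” As a rough sketch only, the hypothetical Python helper below (`nvidia_flash_accel_supported` is our own name, not an NVIDIA or Adobe API) restates that rule by matching marketing names. It assumes names are spelled the usual way (e.g. “GeForce 9600 GT”) and does not try to cover ION systems or anything outside that naming pattern, so treat it as an illustration of the rule, not an official compatibility check.

```python
# Hypothetical helper restating the article's NVIDIA support rule for
# Flash 10.1's DXVA-based H.264 decode. Not an official compatibility list.

# The G80-based boards the article says are excluded.
G80_BOARDS = {
    "geforce 8800 gtx",
    "geforce 8800 gts 640mb",
    "geforce 8800 gts 320mb",
    "geforce 8800 ultra",
}


def nvidia_flash_accel_supported(board: str) -> bool:
    """Return True if the named GeForce board falls inside the supported range.

    Assumption: `board` is a marketing name such as "GeForce 9600 GT".
    Matching is case-insensitive and collapses repeated whitespace.
    """
    name = " ".join(board.lower().split())
    if name in G80_BOARDS:
        return False  # excluded: original G80-based boards
    prefix = "geforce "
    if not name.startswith(prefix):
        return False  # not a GeForce name this sketch knows how to read
    model = name[len(prefix):]
    # GeForce 8 and 9 series (desktop and mobile, e.g. "8600m gt") use
    # four-digit model numbers starting with 8 or 9.
    if model[:1] in ("8", "9") and model[:4].isdigit():
        return True
    # GeForce GT/GTX series, e.g. "gt 220" or "gtx 285".
    return model.startswith(("gt ", "gtx "))


if __name__ == "__main__":
    for card in ("GeForce GTX 285", "GeForce 8800 GTX", "GeForce 8600 GT"):
        print(card, nvidia_flash_accel_supported(card))
```

Matching on names keeps the sketch tiny, but it is only as good as the naming convention; a real check would ask the driver what DXVA2 decoder profiles the GPU actually exposes.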

Anything based on NVIDIA’s ION chipset is also supported, which will be the foundation of some of our tests today.

AMD supports the following:

- ATI Radeon™ HD 4000, HD 5700 and HD 5800 series graphics

- ATI Mobility Radeon™ HD 4000 series graphics (and higher)

- ATI Radeon™ HD 3000 integrated graphics (and higher)

- ATI FirePro™ V3750, V5700, V7750, V8700 and V8750 graphics accelerators (and later)

It’s a healthy list of supported GPUs from both camps, including integrated graphics. The only other requirement is that you have the latest drivers installed. I used 195.50 from NVIDIA and Catalyst 9.10 from AMD. (Update: The release notes now indicate that Catalyst 9.11 drivers are required, which would explain our difficulties in testing. ATI just released Catalyst 9.11, but we’re having trouble getting GPU acceleration to work; we’re waiting on a response from AMD now.)

Intel’s G45 should, in theory, work: since the acceleration is DXVA-based, anything that can offload H.264 decode from the CPU using DXVA (like G45) should work just fine. We tested it on a laptop for this article, but as you’ll see, our experiences weren’t exactly rosy.

135 Comments


Autisticgramma - Tuesday, November 17, 2009

I saw all this happening long ago, when adobe aquired flash to begin with. Adobe used to just make Acrobat reader, it sucked then it sucks now, its just so embedded in any corperate high-wire act its stoopid. Not to mention all the memory space want on start up, leaves in memory ect sloppy from day one.

Macromedia was the company that created flash (at least to my memory). When macromedia owned it, it wasn't bloated crap ware. And then again we weren't streaming whole shows, and 720I 1080P were not the buzzwords of the day.

I realize homestarrunner and illwillpress are not fully transmitted/encoded video, they are created in flash for flash.

But I don't see how this is enough to require gpu acceleration, isn't there a way to streamline this? Why doesn't other video kill everything else with such efficency? Are we sure they're not just accelerating how fast my computer can be exploited, this is a net application.

I'm not a coder, or some software guru, just a dude that works on computers. Could some one explain, or link me to something, that explains how this isn't an incoding issue, and a NEEDZ M0r3 PoWA issue? Adobe on my GPU - Sounds like "Sure I need some nike xtrainers for my ears?

cosmotic - Tuesday, November 17, 2009

Flash originally came from FutureSplash. You really need to work on your spelling. =/

Video decode is extremely CPU intensive. This is why most video decode now happens (at least partially) on the GPU.

PrinceGaz - Tuesday, November 17, 2009

Video decode is quite CPU intensive, but nowhere near as heavy as video encoding with decent quality settings. Also, all current HD video formats will be handled comfortably by the CPU alone within a few years, once six-core, eight-core or larger CPUs are mainstream.

The situation we are currently in regarding HD playback of MPEG-4 AVC video is rather like the mid-to-late 1990s with DVD MPEG-2 video, when hardware assistance was required for the CPUs of the day (typically around 200-400MHz) and you could even buy dedicated MPEG-2 decoder cards. Within a few years, the CPU was doing all of the important decoding work, with the only assistance coming from graphics cards for some later steps (and even that was not necessary, as the CPU could do it easily if required). The same will apply to HD video in due course, especially as the boundary between CPU and GPU narrows.

bcronce - Tuesday, November 17, 2009

I can watch 1080p (1920x1080) HD videos from Apple's site at 10% CPU, silky smooth. Now, that is 80% of one of my logical CPUs, but that's also some crazy nice graphics.

A dual-core Core i5 should handle full HD video at under 25% CPU usage.

Autisticgramma - Tuesday, November 17, 2009

Thanks for that. Misspellers Untie! Engrish is strictly a method of conveying information/ideas.

If ya get the gist the rest is irrelevant, at least to me.

johnsonx - Tuesday, November 17, 2009

Flash has always had a Hardware Acceleration checkbox, at least in 9 & 10. What did it do?

KidneyBean - Wednesday, November 18, 2009

For video, I think it allowed the GPU to scale the screen size. So now you can maximize or resize the video without it taking up extra CPU resources.

SanLouBlues - Tuesday, November 17, 2009

Adobe is kinda right about Linux, but we're getting closer: http://www.phoronix.com/scan.php?page=article&...

phaxmohdem - Tuesday, November 17, 2009

I'm still rocking my trusty 8800GTX card. My heart sank a little when I read that G80 cards are not supported. This is the first time since I bought the ol' girl years ago that she has not been able to perform.

However, I also have an 8600GT that runs two extra monitors in my workstation, and I always do my Hulu watching on one of those monitors anyway, so things may still work out between us for a while longer.

CharonPDX - Tuesday, November 17, 2009

I have an original early 2006 MacBook Pro (2.0 GHz Core Duo; 2 GB RAM, Radeon X1600) running Snow Leopard 10.6.2.

Not only do I not see any difference, but I think something was wrong with your Mac Pro. Hulu 480P and YouTube 720P videos have been fully watchable on my system, in full screen on a 1080p monitor, all along.

When playing the same Hulu video you used (The Office - Murder, 480P, full screen) with both versions of Flash, I get a nice, stable, full frame rate (I don't know how to measure frame rate on OS X, but it looks the same as when I watch it on broadcast TV) with 150% CPU usage. (That's the average; it varies from 130% to 160%, but hovers in the 148-152% range the vast majority of the time.)

And Legend of the Seeker, episode 1 in HD skips a few frames, but is perfectly watchable.