NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs

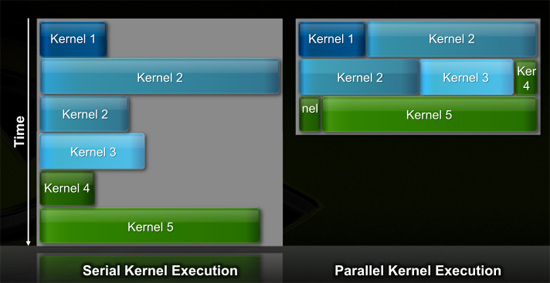

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. Because Fermi is more than twice the size of GT200, the likelihood of idle SMs goes up tremendously, so NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
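To make the idle-SM argument concrete, here is a rough toy model in Python. It is only a sketch: the SM count, the kernel sizes, and the greedy scheduling policy are all assumptions made up for illustration, and nothing here describes how NVIDIA's dispatch logic actually works. Each kernel is reduced to a pair of "SMs it can fill" and "time it runs".

```python
# Toy model of one-kernel-at-a-time vs. concurrent kernel dispatch.
# Every number below is hypothetical, chosen only for illustration.
NUM_SMS = 16  # assumed SM count for this sketch


def serial_time(kernels):
    """One kernel on the chip at a time: durations simply add up."""
    return sum(duration for _, duration in kernels)


def concurrent_time(kernels, num_sms=NUM_SMS):
    """Greedy in-order packing: start each kernel as soon as enough SMs are free."""
    running = []  # (finish_time, sms_held) for kernels still holding SMs
    now = 0
    free = num_sms
    for sms, duration in kernels:
        sms = min(sms, num_sms)
        # Retire the earliest-finishing kernels until this one fits.
        while free < sms:
            running.sort()
            finish, held = running.pop(0)
            now = max(now, finish)
            free += held
        running.append((now + duration, sms))
        free -= sms
    return max([now] + [finish for finish, _ in running])


def utilization(kernels, total_time, num_sms=NUM_SMS):
    """Fraction of available SM-time that did useful work."""
    busy = sum(min(sms, num_sms) * duration for sms, duration in kernels)
    return busy / (num_sms * total_time)


# Three narrow kernels followed by one that fills the whole machine.
kernels = [(4, 10), (4, 10), (8, 10), (16, 5)]

t_serial = serial_time(kernels)
t_concurrent = concurrent_time(kernels)
print("serial:", t_serial, "utilization:", utilization(kernels, t_serial))
print("concurrent:", t_concurrent, "utilization:", utilization(kernels, t_concurrent))
```

In this made-up workload the three narrow kernels happen to fit side by side, so the concurrent schedule finishes in well under half the serial time. Real workloads will rarely pack this neatly, but the direction of the effect is the point.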

Application switch time (moving between graphics and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
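A quick back-of-the-envelope sketch of why this matters, again in Python. The buffer sizes and the 4 GB/s link speed are assumed values rather than NVIDIA figures, and the model idealizes the overlap by assuming the two directions do not slow each other down at all.

```python
# Idealized model of CPU<->GPU copies. Sizes and bandwidth are assumptions.

def copy_ms(megabytes, gb_per_s):
    """Milliseconds to move `megabytes` at `gb_per_s`, taking 1 GB = 1000 MB."""
    return megabytes / gb_per_s


def serial_ms(upload_mb, download_mb, gb_per_s):
    """Transfers take turns, so the two copy times add up."""
    return copy_ms(upload_mb, gb_per_s) + copy_ms(download_mb, gb_per_s)


def overlapped_ms(upload_mb, download_mb, gb_per_s):
    """Both directions run at once, so the longer copy sets the total."""
    return max(copy_ms(upload_mb, gb_per_s), copy_ms(download_mb, gb_per_s))


# Hypothetical step: upload 64 MB of input while reading back 32 MB of results.
print("serial:", serial_ms(64, 32, 4.0), "ms")
print("overlapped:", overlapped_ms(64, 32, 4.0), "ms")
```

The best case is when the upload and download are the same size, where overlapping halves the transfer time. The more lopsided the two directions are, the smaller the gain.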

415 Comments


- Friday, October 2, 2009 - link

You are all talking too much about technologies. Who cares about this? DX11 from ATI is already available in Japan and they are selling like sex dolls. And why didnt NVDIA provided any benchmarks? Perhaps the drivers aren ready or Nvidia doesnt even know at what clockspeed this monster can run without exhausting your pcs power supply. Fermi is not here yet, it is a concept but not a product. ATI will cash in and Nvidia can only look. And when the Fermi-Monster will finally arrive, ATI will enroll with 5890 and X2 in the luxury class and some other products in the 100 Dollar class. Nvidia will always be a few months late and ATI will get the business. It is that easy. Who wants all this Cuda stuff? Some number crunching in the science field, ok. But if it were for physix an add-on board would do. But in reality there was never any run for physix. Why should this boom come now? I think Nvdia bet on the wrong card and they will suffer heavily for this wrong decision. They had better bought VIA or its CPU-division instead of Physix. Physix is no standard architecture and never will. In contrast, ATI is doing just what gamers want and this is were the money is. Were are the Gaming-benchmarks for FERMI? Nvidia is over!

- Friday, October 2, 2009 - link

With all this Cuda and Physix stuff Nvidia will have 20-30% more power consumption at any pricepoint and up to 50% higher production costs because of their much bigger die size. ATI will lower the price whenever necessary in order to beat Nvidia in the market place! And when will Nvida arrive? Yesterday we didnt see even a paperlaunch! It was the announcement of a paperlaunch maybe in late december but the cards wont be available until late q12010 I guess. They are so much out of the business but most people do not realise this.

Ahmed0 - Friday, October 2, 2009 - link

I know for sure SD is from Illinois (his online profiles which are related to his rants [which in turn are related to each other] point to it). So, Im going to go out on a limb here and suggest that SiliconDoc was/is this guy:

http://www.automotiveforums.com/vbulletin/member.p...

A little googling might (or might not) support the fact that he is a loony. Just type "site:forums.sohc4.net silicondoc" and youll find he has quite a reputation there (different site but seems to be the same profile, "handwriting" and same bike)

And that MIGHT lead us to the fact that he MIGHT actually be (currently) 45 and not a young raging teenage nerd called Brian.

Of course... this is just some fun guesswork I did (its all just oh so entertaining).

Ahmed0 - Friday, October 2, 2009 - link

Well... either that or all users called SiliconDoc are arsholes.

k1ckass - Friday, October 2, 2009 - link

I guess silicondoc would eat **** if nvidia says that it tastes good, LOL.

btw, fermi cards shown appears to be fake...

http://www.semiaccurate.com/2009/10/01/nvidia-fake...

and btw, I use an nvidia gtx, propable would get an hd5870 next week because of all this crap nvidia throws at its consumers.

Pastuch - Friday, October 2, 2009 - link

Below is an email I got from Anand. Thanks so much for this wonderful site.

-------------------------------------------------------------------

Thank you for your email. SiliconDoc has been banned and we're accelerating the rollout of our new comments rating/reporting system as a result of him and a few other bad apples lately.

A-

tamalero - Saturday, October 3, 2009 - link

about time, was getting boring with the constant "bubba, red roosters, morons..etc.."

sigmatau - Friday, October 2, 2009 - link

.......

SiliconDoc getting banned.... PRICELESS.

PorscheRacer - Friday, October 2, 2009 - link

So it's safe now to post again? Much thanks has to go to Anand to cleaning up the virus that has infected these comments. I mean, it's new tech. Aren't we free to postulate about what we think is going on, discuss our thoughts and feelings without fear of some person trolling us down till we can't breathe? It feels better in here now, so thanks again.

Mr Perfect - Saturday, October 3, 2009 - link

It looks like it safe... After about 37 pages. Good job though, it's actually been worse in Anandtech comments then it usually is on Daily Tech! Now that's saying something...