NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in: GPUs

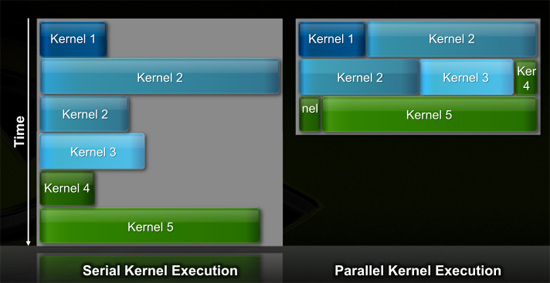

Efficiency Gets Another Boon: Parallel Kernel Support

In GPU programming, a kernel is the function or small program running across the GPU hardware. Kernels are parallel in nature and perform the same task(s) on a very large dataset.
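To make the definition concrete, here is a minimal CUDA sketch of a kernel and its launch. The kernel name `scale` and the helper `scale_on_gpu` are made up for illustration and are not from NVIDIA's material; the block size of 256 threads is an arbitrary assumption.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Kernel: every GPU thread runs this same function on a different element.
__global__ void scale(float *data, float factor, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;  // global thread index
    if (i < n)                                      // guard the partial last block
        data[i] *= factor;
}

// Hypothetical helper: copies `host` to the GPU, scales it there, copies it back.
// Returns false if any CUDA call fails.
bool scale_on_gpu(std::vector<float> &host, float factor)
{
    int n = static_cast<int>(host.size());
    if (n == 0) return true;

    size_t bytes = n * sizeof(float);
    float *dev = nullptr;
    if (cudaMalloc(&dev, bytes) != cudaSuccess) return false;

    bool ok = cudaMemcpy(dev, host.data(), bytes, cudaMemcpyHostToDevice) == cudaSuccess;
    if (ok) {
        int threads = 256;                          // assumed block size
        int blocks = (n + threads - 1) / threads;   // enough blocks to cover n
        scale<<<blocks, threads>>>(dev, factor, n);
        ok = cudaGetLastError() == cudaSuccess &&
             cudaMemcpy(host.data(), dev, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }
    cudaFree(dev);
    return ok;
}
```

With a large `n` the launch produces many blocks, which is the "same task on a very large dataset" case. A small `n` produces only a handful of blocks, which is where the single-kernel limitation starts to hurt.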

Typically, companies like NVIDIA don't disclose their hardware limitations until a developer bumps into one of them. In GT200/G80, the entire chip could only be working on one kernel at a time.

When dealing with graphics this isn't usually a problem. There are millions of pixels to render. The problem is wider than the machine. But as you start to do more general purpose computing, not all kernels are going to be wide enough to fill the entire machine. If a single kernel couldn't fill every SM with threads/instructions, then those SMs just went idle. That's bad.

GT200 (left) vs. Fermi (right)

Fermi, once again, fixes this. Fermi's global dispatch logic can now issue multiple kernels in parallel to the entire system. With Fermi at more than twice the size of GT200, the likelihood of idle SMs goes up tremendously. NVIDIA needs to be able to dispatch multiple kernels in parallel to keep Fermi fed.
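From the programmer's side, independent kernels are usually exposed to the hardware through CUDA streams. The sketch below is an illustration under assumptions, not NVIDIA's sample code: the kernel `add_one`, the helper `run_narrow_kernels`, and the choice of four 64-thread kernels are all hypothetical. Each kernel is far too narrow to fill the chip, so a GPU that reports `concurrentKernels` in its `cudaDeviceProp` is free to overlap them; on older hardware the same code still runs, just one kernel at a time.

```cuda
#include <cuda_runtime.h>

// Deliberately narrow kernel: a single 64-thread block cannot fill every SM.
__global__ void add_one(int *data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n)
        data[i] += 1;
}

// Hypothetical helper: launches several independent narrow kernels, each in
// its own stream, then checks the results. Returns false on any CUDA error.
bool run_narrow_kernels()
{
    const int kNumKernels = 4;   // assumed count, for illustration only
    const int kN = 64;           // elements (and threads) per kernel
    cudaStream_t streams[kNumKernels] = {};
    int *dev[kNumKernels] = {};
    bool ok = true;

    for (int k = 0; k < kNumKernels && ok; ++k) {
        ok = cudaStreamCreate(&streams[k]) == cudaSuccess &&
             cudaMalloc(&dev[k], kN * sizeof(int)) == cudaSuccess &&
             cudaMemset(dev[k], 0, kN * sizeof(int)) == cudaSuccess;
    }

    if (ok) {
        // Kernels in different streams have no ordering between them,
        // so the hardware may run them side by side if it is able to.
        for (int k = 0; k < kNumKernels; ++k)
            add_one<<<1, kN, 0, streams[k]>>>(dev[k], kN);
        ok = cudaGetLastError() == cudaSuccess &&
             cudaDeviceSynchronize() == cudaSuccess;
    }

    for (int k = 0; k < kNumKernels && ok; ++k) {
        int host[kN];
        ok = cudaMemcpy(host, dev[k], sizeof(host), cudaMemcpyDeviceToHost) == cudaSuccess;
        for (int i = 0; i < kN && ok; ++i)
            ok = (host[i] == 1);   // every element was incremented exactly once
    }

    for (int k = 0; k < kNumKernels; ++k) {
        if (dev[k]) cudaFree(dev[k]);
        if (streams[k]) cudaStreamDestroy(streams[k]);
    }
    return ok;
}
```

The results are identical whether or not the kernels overlap; only the elapsed time changes, which is why a profiler or timeline view is needed to confirm the overlap actually happened.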

Application switch time (moving between GPU and CUDA mode) is also much faster on Fermi. NVIDIA says the transition is now 10x faster than GT200, and fast enough to be performed multiple times within a single frame. This is very important for implementing more elaborate GPU accelerated physics (or PhysX, great ;)…).

The connections to the outside world have also been improved. Fermi now supports parallel transfers to/from the CPU. Previously CPU->GPU and GPU->CPU transfers had to happen serially.
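In CUDA terms, overlapping the two directions generally means issuing asynchronous copies from page-locked host memory in separate streams. The following is a sketch under assumptions: the helper name `copy_both_directions` and the 1M-element buffer size are invented for illustration. Whether the two copies really overlap depends on the device (the `asyncEngineCount` field of `cudaDeviceProp` reports 2 when both directions can run at once); on hardware without that ability they simply run back to back.

```cuda
#include <cuda_runtime.h>

// Hypothetical helper: uploads one buffer while downloading another.
// Returns false on any CUDA error or if the downloaded data is wrong.
bool copy_both_directions()
{
    const size_t kN = 1 << 20;                  // assumed buffer size
    const size_t bytes = kN * sizeof(float);
    float *h_up = nullptr, *h_down = nullptr;   // pinned (page-locked) host buffers
    float *d_up = nullptr, *d_down = nullptr;   // device buffers
    cudaStream_t up = nullptr, down = nullptr;

    // Async copies need pinned host memory to be truly asynchronous.
    bool ok = cudaMallocHost(&h_up, bytes) == cudaSuccess &&
              cudaMallocHost(&h_down, bytes) == cudaSuccess &&
              cudaMalloc(&d_up, bytes) == cudaSuccess &&
              cudaMalloc(&d_down, bytes) == cudaSuccess &&
              cudaStreamCreate(&up) == cudaSuccess &&
              cudaStreamCreate(&down) == cudaSuccess;

    if (ok) {
        for (size_t i = 0; i < kN; ++i) {
            h_up[i] = 1.0f;     // data to upload
            h_down[i] = -1.0f;  // sentinel, overwritten by the download
        }
        ok = cudaMemset(d_down, 0, bytes) == cudaSuccess;
    }

    if (ok) {
        // CPU->GPU in one stream, GPU->CPU in another: no ordering between
        // them, so the two transfers may proceed at the same time.
        ok = cudaMemcpyAsync(d_up, h_up, bytes, cudaMemcpyHostToDevice, up) == cudaSuccess &&
             cudaMemcpyAsync(h_down, d_down, bytes, cudaMemcpyDeviceToHost, down) == cudaSuccess &&
             cudaDeviceSynchronize() == cudaSuccess;
    }

    if (ok)
        ok = (h_down[0] == 0.0f) && (h_down[kN - 1] == 0.0f);

    if (up) cudaStreamDestroy(up);
    if (down) cudaStreamDestroy(down);
    if (d_up) cudaFree(d_up);
    if (d_down) cudaFree(d_down);
    if (h_up) cudaFreeHost(h_up);
    if (h_down) cudaFreeHost(h_down);
    return ok;
}
```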

415 Comments


SiliconDoc - Thursday, October 1, 2009 - link

Sweet ! Nice pick, looks like carbon fiber at the bracket end.

Wowzie, a real honker based on THOUSANDS OF DOLLARS of tech and core per part.

I feel SO PRIVILEGED to have a chance at the gaming segment version, all that massive power jammed into a gaming card !

Whoo! U P S C A L E !

justaviking - Thursday, October 1, 2009 - link

Look at every bright area of high contrast. All the spotlight reflections have a red ring around them. So the thumb, in front of the highly reflective gold connectors, also has the same halo effect. I think that it's as much evidence of a digital camera as it is Photoshop manipulation.

With that said, it could also be a non-functional mock-up. Holding a mock-up or prototype in your hand is not the same as benchmarking a production (ready for consumer release) product.

papapapapapapapababy - Thursday, October 1, 2009 - link

look at those irregular borders closely. ( above the watch) also, the shadows (finger) are off. that's a (terrible) shop.

v1001 - Thursday, October 1, 2009 - link

All they did was blacken out the background more. Probably was more noise and distraction going on that they didn't want in there.

justaviking - Thursday, October 1, 2009 - link

OK, so assuming it's a fake (and I'm not saying it isn't), I have three questions:

1) Where did you get the photo?

2) Why do it? (And "Who did it?", but that's closely related to Q1.)

3) Where did they get the photo of the hardware, which they then put into the person's hand?

Combining #2 and #3) If the card is from a real photo of real hardware, then what was the value of photoshopping it into someone's hand?

I'm not trying to argue, just trying to understand.

papapapapapapapababy - Thursday, October 1, 2009 - link

more fakes! source: bit-tech ( this one is even "better")

http://i34.tinypic.com/34inz9j.jpg

also, not mine ( from xnews)

http://img28.imageshack.us/img28/2883/tesafilm.png

papapapapapapapababy - Thursday, October 1, 2009 - link

also below the card... what's that sloppy white trim in the middle of a shadow? JAaAAA

UNCjigga - Thursday, October 1, 2009 - link

Seriously? I have a 1080p monitor and Radeon 4670 with UVD2, but my PS3 with 1080p output to the same monitor looks MUCH better at upscaling DVDs (night and day difference.) PowerDVD does have a better upscaling tech, but that's using software decoding. Can somebody port ffdshow/libmpeg2 for CUDA and ATI Stream (or DirectCompute?) kthxbye

Pastuch - Thursday, October 1, 2009 - link

I buy two videocards per year on average. I've owned an almost equal number of ATI/Nvidia cards. I loved my geforce 8800 GTX despite it costing a fortune but since then it's been ALL downhill. I've had driver issues with home theater PCs and Nvidia drivers. I've been totally disappointed with Nvidia's performance with high def audio formats. The fact that the entire ATI 48xx line can do 7.1 audio pass-through while only a handful of Nvidia videocards can even do 5.1 audio passthrough is just sad. The world is moving to hometheater gaming PCs and Nvidia is dragging arse.

The fact that 5850 can do bitstreaming audio for $250 RIGHT NOW and is the second fastest 1 GPU solution for gaming makes it one hell of a product in my eyes. You no longer need an Asus Xonar or Auzentech soundcard, saving me $200. Hell, with the money I saved I could almost buy a SECOND 5850! Let's see if the new Nvidia cards can do bitstreaming... if they can't then Nvidia won't be getting any more of my money.

P.S. Thanks Anand for inspiring me to build the hometheater of my dreams. Gaming on a 110 Inch screen is the future!

SiliconDoc - Thursday, October 1, 2009 - link

Well that's very nice, and since this has been declared the home of "only game fps and bang for that buck" matters, and therefore PhysX, ambient occlusion, CUDA, and other nvidia advantages, and your "outlier" htpc desires are WORTHLESS according to the home crowd, I guess they can't respond without contradicting themselves, so I will, considering I have always supported added value, and have been attacked for it.

--

Yes, throw out your $200 sound cards, or sell them, and plop that heat monster into the tiny unit, good luck. Better spend some on after market cooling, or the raging videocard fan sound will probably drive you crazy. So another $100 there.

Now the $100 you got for the used soundcard is gone.

I also wonder what sound chip you're going to use then when you aren't playing a movie or whatever, I suppose you'll use your motherboard sound chip, which might be a lousy one, and definitely is lousier than the Auzentech you just sold or tossed.

So how exactly does "passthrough" save you a dime ?

If you're going to try to copy Anand's basement theatre projection, I have to wonder why you wouldn't use the digital or optical output of the high end soundcard... or your motherboard's, if indeed it has a decent soundchip on it, which isn't exactly likely.

-

Maybe we'll all get luckier, and with TESLA like massive computing power, we'll get an NVIDIA Blu-ray dvd movie player converter that runs on the holy grail of the PhysX haters, openCL and/or direct compute, and you'll have to do with the better sound of your add on sound cards, anyway, instead of using a videocard as a transit device.

I can't imagine "cable management" as an excuse either, with a 110" curved screen home built theatre room...

---

Feel free to educate me.