AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

The Return of Supersample AA

Over the years, the methods used to implement anti-aliasing on video cards have bounced back and forth. The earliest generation of cards, such as the 3Dfx Voodoo 4/5 and ATI’s and NVIDIA’s DirectX 7 parts, implemented supersampling, which involved rendering a scene at a higher resolution and scaling it down for display. Supersampling did a great job of removing aliasing while also slightly improving overall image quality, since the whole scene was sampled at a higher resolution.
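As a rough illustration of the render-high-then-scale-down idea, here is a minimal Python sketch. It assumes a grayscale image, a hypothetical `edge` scene function, and a plain box filter (an average of each block of sub-pixels) as the downscale; real hardware resolves differ in detail, and `render` and `downsample_box` are illustrative names, not any driver's API.

```python
def render(scene, width, height, factor):
    """Render at `factor` times the display resolution in each dimension.

    `scene(x, y)` returns a grayscale value for a point given in display-pixel
    coordinates; it is evaluated at the center of every sub-pixel.
    """
    return [[scene((x + 0.5) / factor, (y + 0.5) / factor)
             for x in range(width * factor)]
            for y in range(height * factor)]


def downsample_box(hi_res, factor):
    """Scale down for display by averaging each factor x factor block."""
    rows, cols = len(hi_res), len(hi_res[0])
    if rows % factor or cols % factor:
        raise ValueError("image size must be a multiple of factor")
    out = []
    for y in range(0, rows, factor):
        row = []
        for x in range(0, cols, factor):
            block = [hi_res[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


def edge(x, y):
    """Hypothetical scene: white below the diagonal y = x, black above it."""
    return 1.0 if y > x else 0.0


no_aa = downsample_box(render(edge, 2, 2, 1), 1)    # one sample per pixel
ssaa_4x = downsample_box(render(edge, 2, 2, 2), 2)  # 2x2 samples per pixel
print(no_aa)    # [[0.0, 0.0], [1.0, 0.0]]
print(ssaa_4x)  # [[0.25, 0.0], [1.0, 0.25]]
```

Without supersampling the two pixels straddling the diagonal snap to black; with 2x2 supersampling they pick up an intermediate shade, which is the smoothing effect described above.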

But supersampling was expensive, particularly on those early cards. So the next generation implemented multisampling, which, instead of rendering a scene at a higher resolution, rendered it at the desired resolution and then sampled polygon edges to find and remove aliasing. The overall quality wasn’t quite as good as supersampling, but it was much faster, with that gap increasing as MSAA implementations became more refined.
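The trade-off can be sketched per pixel. The Python below is an illustrative model under simplifying assumptions (one primitive over a flat background, grayscale values, and an assumed 4-sample pattern): with multisampling, coverage is tested at every sample but the pixel shader runs once, at the pixel center; with supersampling, the shader runs at every covered sample. All names and sample positions are hypothetical.

```python
# Assumed 4-sample pattern, as (x, y) offsets inside a pixel; illustrative only.
SAMPLES_4X = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def msaa_pixel(px, py, covers, shade, background, samples=SAMPLES_4X):
    """Multisampling: per-sample coverage, one shader invocation per pixel.

    Returns (resolved value, number of shader invocations).
    """
    covered = sum(1 for sx, sy in samples if covers(px + sx, py + sy))
    if covered == 0:
        return background, 0
    color = shade(px + 0.5, py + 0.5)  # shaded once, at the pixel center
    uncovered = len(samples) - covered
    return (covered * color + uncovered * background) / len(samples), 1


def ssaa_pixel(px, py, covers, shade, background, samples=SAMPLES_4X):
    """Supersampling: every covered sample runs the shader itself."""
    total, invocations = 0.0, 0
    for sx, sy in samples:
        x, y = px + sx, py + sy
        if covers(x, y):
            total += shade(x, y)
            invocations += 1
        else:
            total += background
    return total / len(samples), invocations


def half(x, y):
    """Hypothetical primitive covering everything left of x = 0.5."""
    return x < 0.5


def everywhere(x, y):
    """Hypothetical primitive covering the whole pixel."""
    return True


def flat(x, y):
    """Flat shader: the same value everywhere."""
    return 1.0


def stripes(x, y):
    """High-frequency shader: alternating 0.25-pixel-wide stripes."""
    return 1.0 if int(x * 4) % 2 == 0 else 0.0


print(msaa_pixel(0, 0, half, flat, 0.0))           # (0.5, 1): edge smoothed, 1 shader run
print(ssaa_pixel(0, 0, half, flat, 0.0))           # (0.5, 2): same edge, 2 shader runs
print(msaa_pixel(0, 0, everywhere, stripes, 0.0))  # (1.0, 1): stripes not filtered
print(ssaa_pixel(0, 0, everywhere, stripes, 0.0))  # (0.5, 4): stripes averaged
```

On a plain polygon edge both methods produce the same shade, but multisampling gets there with fewer shader runs; on a fully covered pixel with fine shader detail, only supersampling averages that detail away.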

Lately we have seen a slow bounce back in the other direction, as MSAA’s imperfections became more noticeable and in need of correction. Here supersampling saw a limited reintroduction, with AMD and NVIDIA using it on certain parts of a frame as part of their Adaptive Anti-Aliasing (AAA) and Supersample Transparency Anti-Aliasing (SSTr) schemes, respectively. SSAA was used to smooth out semi-transparent textures, where the textures themselves were the aliasing artifact and MSAA could not work on them since they were not polygon edges. This still didn’t completely resolve MSAA’s shortcomings compared to SSAA, but it solved the transparent texture problem. With these technologies, the difference between MSAA and SSAA was reduced to MSAA being unable to anti-alias shader output, and MSAA not having the advantages of sampling textures at a higher resolution.
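The transparent-texture case can be modeled in the same per-pixel way. In this hedged Python sketch (grayscale, an assumed 0.5 alpha-test threshold, an assumed 4-sample pattern, and a hypothetical cut-out texture), an alpha-tested texture under plain MSAA is tested once per pixel, so the pixel is either fully kept or fully discarded; supersampling the alpha test, in the spirit of the AAA and SSTr modes described above, tests each sample instead. The function names are illustrative, not a driver API.

```python
# Assumed 4-sample pattern (x, y offsets inside a pixel); illustrative only.
SAMPLES_4X = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]
ALPHA_REF = 0.5  # assumed alpha-test threshold


def alpha_test_msaa(px, py, alpha, texel, background):
    """MSAA only: the texture's alpha is fetched and tested once, at the pixel
    center, so the whole pixel keeps or discards the texel (no partial coverage)."""
    return texel if alpha(px + 0.5, py + 0.5) >= ALPHA_REF else background


def alpha_test_supersampled(px, py, alpha, texel, background, samples=SAMPLES_4X):
    """Supersampled transparency: the alpha test runs at every sample, so a
    pixel straddling the cut-out edge of the texture is partially covered."""
    kept = sum(1 for sx, sy in samples if alpha(px + sx, py + sy) >= ALPHA_REF)
    return (kept * texel + (len(samples) - kept) * background) / len(samples)


def fence_alpha(x, y):
    """Hypothetical cut-out texture (e.g. a chain-link fence): opaque left of x = 0.6."""
    return 1.0 if x < 0.6 else 0.0


print(alpha_test_msaa(0, 0, fence_alpha, 1.0, 0.0))          # 1.0: hard, aliased edge
print(alpha_test_supersampled(0, 0, fence_alpha, 1.0, 0.0))  # 0.5: edge smoothed
```

The same once-per-pixel versus once-per-sample distinction is why MSAA also leaves shader aliasing untouched.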

With the 5800 series, things have finally come full circle for AMD. Based upon their SSAA implementation for Adaptive Anti-Aliasing, they have re-implemented SSAA as a full screen anti-aliasing mode. Now gamers can once again access the higher quality anti-aliasing offered by a pure SSAA mode, instead of being limited to the best of what MSAA + AAA could do.

Ultimately the inclusion of this feature on the 5870 comes down to two matters: the card has lots and lots of processing power to throw around, and shader aliasing was the last obstacle that MSAA + AAA could not solve. With the reintroduction of SSAA, AMD is not dropping or downplaying their existing MSAA modes; rather it’s offered as another option, particularly one geared towards use on older games.

“Older games” is an important keyword here, as there is a catch to AMD’s SSAA implementation: It only works under OpenGL and DirectX9. As we found out in our testing and after much head-scratching, it does not work on DX10 or DX11 games. Attempting to utilize it there will result in the game switching to MSAA.

When we asked AMD about this, they cited the fact that DX10 and later give developers much greater control over anti-aliasing patterns, and that using SSAA with these controls may create incompatibility problems. Furthermore the games that can best run with SSAA enabled from a performance standpoint are older titles, making the use of SSAA a more reasonable choice with older games as opposed to newer games. We’re told that AMD will “continue to investigate” implementing a proper version of SSAA for DX10+, but it’s not something we’re expecting any time soon.

Unfortunately, in our testing of AMD’s SSAA mode, there are clearly a few kinks to work out. Our first AA image quality test was going to be the railroad bridge at the beginning of Half-Life 2: Episode 2. That scene is full of aliased metal bars, cars, and trees. However, as this screenshot shows, while AMD’s SSAA mode eliminated the aliasing, it also gave the entire image a smooth makeover: too smooth. SSAA isn’t supposed to blur things; it’s only supposed to make things smoother by removing all aliasing in geometry, shaders, and textures alike.

As it turns out this is a freshly discovered bug in their SSAA implementation that affects newer Source-engine games. Presumably we’d see something similar in the rest of The Orange Box, and possibly other HL2 games. This is an unfortunate engine to have a bug in, since Source-engine games tend to be heavily CPU limited anyhow, making them perfect candidates for SSAA. AMD is hoping to have a fix out for this bug soon.
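Why a CPU-limited game makes a good SSAA candidate can be put as a back-of-the-envelope bottleneck model. This Python sketch is an assumption, not measured data: the frame rate is taken as the lower of a CPU-bound rate and a GPU-bound rate, and the GPU-bound rate is assumed to fall in proportion to the number of samples shaded per pixel. The frame rates in the example are hypothetical.

```python
def estimated_fps(cpu_fps, gpu_fps_no_aa, samples_per_pixel):
    """Crude bottleneck model (assumption): the slower of CPU and GPU sets the
    frame rate, and GPU cost scales linearly with samples shaded per pixel."""
    gpu_fps = gpu_fps_no_aa / samples_per_pixel
    return min(cpu_fps, gpu_fps)


# Hypothetical CPU-limited game: the GPU has 4x headroom, so 4x SSAA costs nothing.
print(estimated_fps(cpu_fps=100, gpu_fps_no_aa=400, samples_per_pixel=4))  # 100
# Hypothetical GPU-heavy game: 4x SSAA cuts the frame rate to a quarter.
print(estimated_fps(cpu_fps=100, gpu_fps_no_aa=120, samples_per_pixel=4))  # 30.0
```

Real scaling is rarely this clean (memory bandwidth and ROP throughput also matter), but the model captures why spare GPU headroom in a CPU-bound engine can absorb the extra shading work.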

“But wait!” you say. “Doesn’t NVIDIA have SSAA modes too? How would those do?” And indeed you would be right. While NVIDIA dropped official support for SSAA a number of years ago, it has remained as an unofficial feature that can be enabled in Direct3D games, using tools such as nHancer to set the AA mode.

Unfortunately NVIDIA’s SSAA mode isn’t even in the running here, and we’ll show you why.

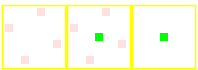

5870 SSAA

GTX 280 MSAA

GTX 280 SSAA

At the top we have the view from DX9 FSAA Viewer of ATI’s 4x SSAA mode. Notice that it’s a rotated grid with 4 geometry samples (red) and 4 texture samples. Below that we have NVIDIA’s 4x MSAA mode, a rotated grid with 4 geometry samples and a single texture sample. Finally we have NVIDIA’s 4x SSAA mode, an ordered grid with 4 geometry samples and 4 texture samples. For reasons that we won’t delve into, rotated grids are a better grid layout from a quality standpoint than ordered grids. This is why early implementations of AA using ordered grids were dropped for rotated grids, and is why no one uses ordered grids these days for MSAA.
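One commonly given reason rotated grids beat ordered grids can be checked numerically: with four samples, an ordered 2x2 grid has only two distinct horizontal positions (and two vertical ones), while a rotated grid has four of each, so a vertical or horizontal edge crossing a pixel can produce more intermediate shades. The Python sketch below sweeps such an edge across one pixel and lists the coverage fractions each pattern can produce. Both sample layouts are assumed, illustrative patterns, not necessarily ATI's or NVIDIA's exact sample positions.

```python
# Sample positions as (x, y) offsets inside a pixel. Both patterns are
# illustrative assumptions, not the exact positions either vendor uses.
ORDERED_GRID_4X = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED_GRID_4X = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage_levels(samples, axis=0, steps=100):
    """Sweep an axis-aligned edge across one pixel and return every coverage
    fraction it can produce. axis=0 moves a vertical edge along x; axis=1
    moves a horizontal edge along y. Samples before the edge count as covered."""
    levels = set()
    for i in range(steps + 1):
        edge = i / steps
        covered = sum(1 for s in samples if s[axis] < edge)
        levels.add(covered / len(samples))
    return sorted(levels)


print(coverage_levels(ORDERED_GRID_4X))          # [0.0, 0.5, 1.0]
print(coverage_levels(ROTATED_GRID_4X))          # [0.0, 0.25, 0.5, 0.75, 1.0]
print(coverage_levels(ROTATED_GRID_4X, axis=1))  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

With the same sample count, the rotated grid yields five shades along a near-vertical or near-horizontal edge versus three for the ordered grid.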

Furthermore, when actually using NVIDIA's SSAA mode, we ran into some definite quality issues with HL2: Ep2. We're not sure if these are related to the use of an ordered grid or not, but it's a possibility we can't ignore.

If you compare the two shots, with MSAA 4x the scene is almost perfectly anti-aliased, except for some trouble along the bottom/side edge of the railcar. If we switch to SSAA 4x that aliasing is solved, but we have a new problem: all of a sudden a number of fine tree branches have gone missing. While MSAA properly anti-aliased them, SSAA anti-aliased them right out of existence.

For this reason we will not be taking a look at NVIDIA’s SSAA modes. Besides the fact that they’re unofficial in the first place, the use of an ordered grid and the problems in HL2 cement the fact that they’re not suitable for general use.

327 Comments


Wreckage - Wednesday, September 23, 2009

Hot, loud, huge power draw and it barely beats a 285. A disappointment for sure.

SiliconDoc - Thursday, September 24, 2009

Thank you Wreckage, now, I was going to say draw up your shields, but it's too late, the attackers have already had at it.--

Thanks for saying what everyone was thinking. You are now "a hated fanboy", "a paid shill" for the "corporate greedy monster rip off machine", according to the real fanboy club, the ones who can't tell the truth, no matter what, and prefer their fantastical spins and lies.

Zstream - Wednesday, September 23, 2009

They still allow you to post?

yacoub - Wednesday, September 23, 2009

He's right in the first sentence but went all fanboy in the second.

Griswold - Wednesday, September 23, 2009

Not really, he's a thoroughbred fanboy with everything he said. Even on the "loud" claim compared to what previous reference designs vom ATI were like...

SiliconDoc - Wednesday, September 30, 2009

So if YOU compare one loud design of ati's fan to another fan and as loud ati card (they're all quieter than 5870* but we'll make believe for you for now),

and they're both loud, anyone complaining about one of them being loud is "an nvidia fanboy" because he isn't aware of the other loud as heck ati cards, which of course, make another loud one "just great" and "not loud". LOL

It's just amazing, and if it was NV:

" This bleepity bleep fan and card are like a leaf blower again, just like the last brute force monster core power hog but this **tard is a hurricane with no eye."

But since it's the red cards that are loud, as YOU pointed out in the plural, not singular like the commenter, according to you HE's the FANBOY, because he doesn't like it. lol

ULTIMATE CONCLUSION: The commenter just told the truth, he was hoping for more, but was disappointed. YOU, the raging red, jumped his case, and pointed out the ati cards are loud "vom" prior.. and so he has no right to be a big green whining fanboy...

ROFLMAO

I bet he's a "racist against reds" every time he validly criticizes their cards, too.

---

the 5870 is THE LOUDEST ATI CARD ON THE CHART, AND THE LOUDEST SINGLE CORE CARD.

--

Next, the clucking rooster will whiplash around and flap the stubby wings at me, claiming at idle it only draws 27 watts and is therefore quiet.

As usual, the sane would then mention it will be nice not playing any 3d games with a 3d gaming card, and enjoying the whispery hush.

--

In any case:

Congratulations, you've just won the simpleton's red rooster raving rager thread contest medal and sarcastic unity award. (It's as real as any points you've made.)

Anyhow thanks, you made me notice THE 5870 IS THE LOUDEST CARD ON THE CHARTS. I was too busy pointing out the dozen plus other major fibboes to notice.

It's the loudest ati card, ever.

GourdFreeMan - Wednesday, September 23, 2009

I thought the technical portion of your review was well written. It is clear, concise and written to the level of understanding of your target audience. However, I am less than impressed with your choice of benchmarks. Why is everything run at 4xAA, 16xAF? Speaking for most PC gamers, I would have maxed the settings in Crysis Warhead before adding AA and AF. Also, why so many console ports? Neither I nor anyone else I personally know has much interest in console ports (excluding RPGs from Bethesda). Where is Stalker: Clear Sky? As you note, its sequel will be out soon. Given the short amount of time they had to work with DX11, I imagine it will run similarly to Stalker: Call of Pripyat. Also, where is ArmA II? Other than Crysis and Stalker, it is the game most likely to be constrained by the GPU.

I don't want to sound conspiratorial, but your choice of games and AA/AF settings closely mirrors AMD's leaked marketing material. It is good that you put their claims to the test, as I trust Anandtech as an unbiased review site, but I don't think the games you covered properly cover the interests of PC gamers.

Ryan Smith - Wednesday, September 23, 2009

For the settings we use, we generally try to use the highest settings possible. That's why everything except Crysis is at max quality and 4xAA 16xAF (bear in mind that AF is practically free these days). Crysis is the exception because of its terrible performance; Enthusiast-level shaders aren't too expensive and improve the quality more than anything else, without driving performance right off a cliff. As far as playing the game goes, we would rather have AA than the rest of the Enthusiast features.

As for our choice of games, I will note that due to IDF and chasing down these crazy AA bugs, we didn't get to run everything we wanted to. GRID and Wolfenstein (our OpenGL title) didn't make the cut. As for Stalker, we've had issues in the past getting repeatable results, so it's not a very reliable benchmark. It also takes quite a bit of time to run, and I would have had to drop (at least) 2 games to run it.

Overall our game selection is based upon several factors. We want to use good games, we want to use popular games so that the results are relevant for the most people, we want to use games that give reliable results, and ideally we want to use games that we can benchmark in a reasonable period of time (which means usually having playback/benchmark tools). We can't cover every last game, so we try to get what we can using the criteria above.

GourdFreeMan - Thursday, September 24, 2009

Popularity and quality are strong arguments for World of Warcraft, Left 4 Dead, Crysis, Far Cry, the newly released Batman game... and *maybe* Resident Evil (though it has far greater popularity among console gamers). However, HAWX? Battleforge? I would never have even heard of these games had I not looked them up on Wikipedia. In retrospect I can see you using Battleforge due to it being the only DirectX 11 title, but I still don't find your list of games compelling or comprehensive.

To me, *PC* gaming needs to offer something more than simple action to justify its cost of entry. In the past this included open worlds, multiplayer, greater graphical realism and attempts at balancing realistic simulation with entertaining game play. Console gaming has since offered the first two, but the latter are still lacking.

It's games like Crysis, Stalker and ArmA II along with the potential of modding that attract people to PC gaming in the first place...

dvijaydev46 - Wednesday, September 23, 2009

Good review, but it would be good if you could also add Stream and CUDA benchmarks. Now you have a common piece of software, MediaShow Espresso, right?