i5 / P55 Lab Update - Now with more numbers

by Gary Key on September 15, 2009 12:05 AM EST - Posted in Motherboards

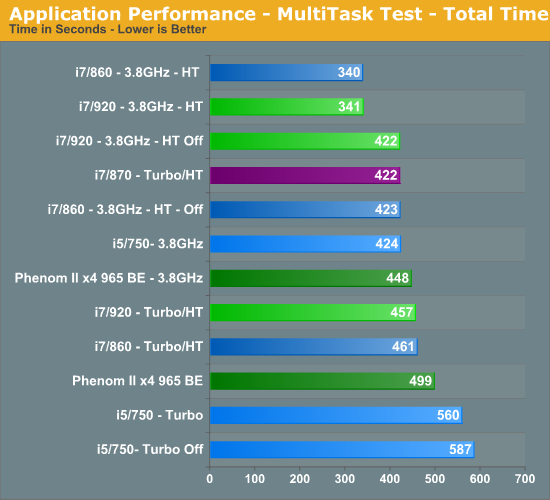

Multitasking-

The vast majority of our benchmarks are single task events that utilize anywhere from 23MB up to 1.4GB of memory space during the course of the benchmark. Obviously, this is not enough to fully stress test our 6GB or 8GB memory configurations. We devised a benchmark that would simulate a typical home workstation and consume as much of the 6GB/8GB as possible without crashing the machine.

We start by opening two instances of Internet Explorer 8.0, each with six tabs opened to Flash-intensive websites, followed by Adobe Reader 9.1 with a rather large PDF document open and iTunes 8 blaring the music selection of the day. We then open two instances of Lightwave 3D 9.6 with our standard animation, Cinema 4D R11 with the benchmark scene, Microsoft Excel and Word 2007 with large documents, and finally Photoshop CS4 x64 with our test image.

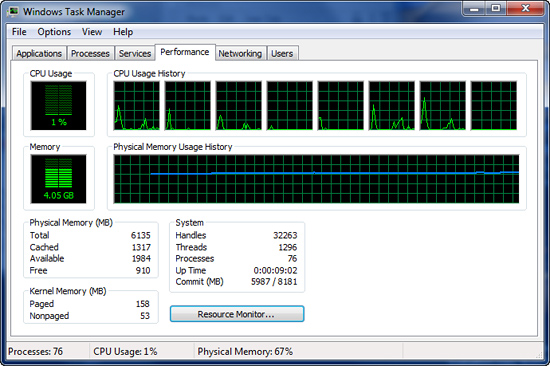

Before we start the benchmark process, our idle state memory usage is 4.05GB. Sa-weet!

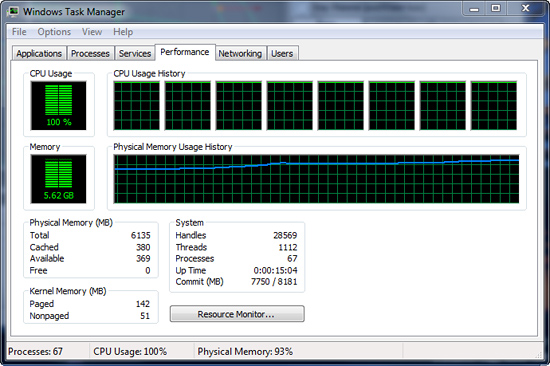

We wait two minutes for system activities to idle and then start playing Pinball Wizard via iTunes, start the render scene process in Cinema 4D R11, start a resize of our Photoshop image, and finally the render frame benchmark in Lightwave 3D. Our maximum memory usage during the benchmark is 5.62GB with 100% CPU utilization across all four or eight threads.

So far, our results have pretty much been a shampoo, rinse, and repeat event. I believe multitasking is what separates good systems from the not so good systems. I spend very little time using my system for gaming and when I do game, everything else is shut down to maximize frame rates. Otherwise, I usually have a dozen or so browser windows open, music playing, several IM programs open and in use, Office apps, and various video/audio applications open in the background.

One or two of those primary applications are normally doing something simultaneously, especially when working. As such, I usually find this scenario to be one of the most demanding on a computer that is actually utilized for something besides trying to get a few benchmark sprints run before the LN2 pot goes dry.

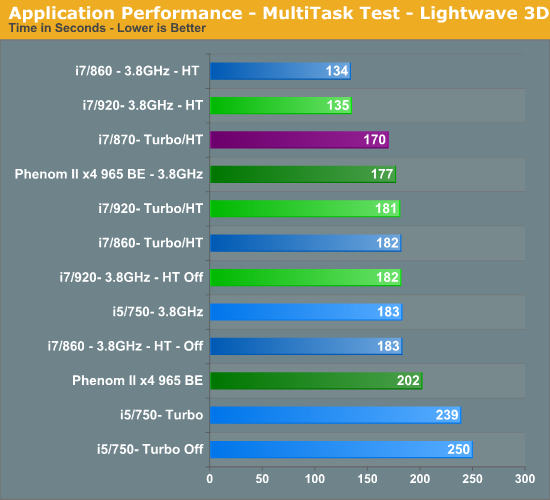

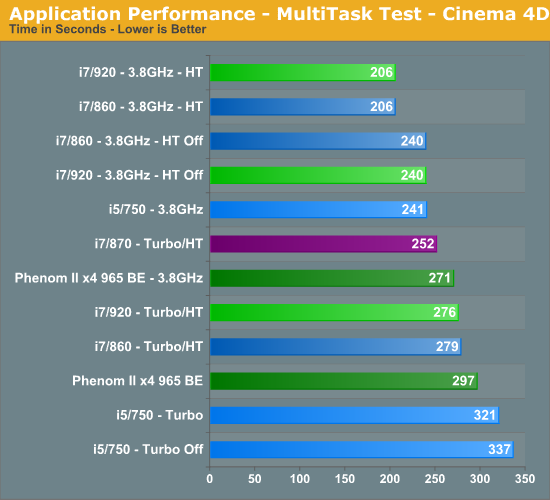

The i5/750 results actually surprised me. The system never once felt “slow” but the results do not lie. The i5/750 had its head served on a platter at stock speeds, primarily due to the lack of Hyper-Threading when compared to the other choices. The 965 BE put up very respectable numbers and scaled linearly based on clock speed. An 11% increase in clock speed resulted in a 10% improvement in the total benchmark score for the 965 BE. You cannot ask for more than that.
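The scaling figure quoted for the 965 BE can be checked with a line of arithmetic. This is a minimal sketch that uses only the two percentages given in the text; the function name `scaling_efficiency` is hypothetical and is not part of any benchmark tool we use.

```python
def scaling_efficiency(clock_gain_pct: float, score_gain_pct: float) -> float:
    """Fraction of a clock-speed increase that carries through to the score."""
    if clock_gain_pct <= 0:
        raise ValueError("clock gain must be positive")
    return score_gain_pct / clock_gain_pct


# Figures quoted in the text for the 965 BE: 11% more clock, 10% better score.
efficiency = scaling_efficiency(11.0, 10.0)
print(f"{efficiency:.0%} of the clock increase carried through to the score")
```

A value close to 1.0 means the workload is scaling almost entirely with core clock rather than being held back by memory or I/O.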

At 3.8GHz clock speeds, it is once again a tossup between the 920 and 860 processors with HT enabled. The 920 did hold a slight advantage over the 860 at stock clock settings, attributable to slightly better data throughput when under load conditions. Otherwise, on the Intel side the i7/870 provided excellent results based on its aggressive turbo mode, although at a price.

Gaming-

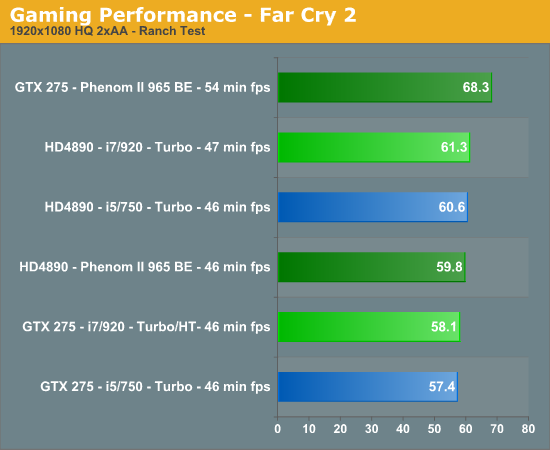

We utilize the Ranch Small demo file along with the FarCry 2 benchmark utility. This particular demo offers a balance of both GPU and CPU performance.

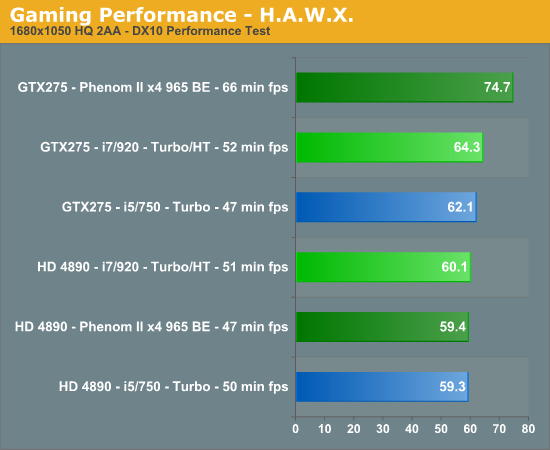

We utilize FRAPS to capture our results in a very repeatable section of the game and report the median score of our five benchmark runs. H.A.W.X. responds well to memory bandwidth improvements and scales linearly with CPU and GPU clock increases.
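Reporting the median of five runs can be sketched as follows. The frame rates below are hypothetical values chosen for illustration, not measured results, and `report_score` is an assumed helper name rather than anything provided by FRAPS.

```python
from statistics import median


def report_score(runs):
    """Return the median frame rate from exactly five benchmark runs."""
    if len(runs) != 5:
        raise ValueError("expected five benchmark runs")
    return median(runs)


# Hypothetical FPS values for illustration only; one run is an outlier.
example_runs = [61.2, 60.8, 63.5, 61.0, 60.9]
print(report_score(example_runs))
```

The median is used instead of the mean so that a single unusually fast or slow run does not shift the reported score.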

Your eyes are not deceiving you. After 100+ clean OS installs, countless video card, motherboard, memory and driver combinations, we have results that are not only repeatable, but appear to be valid. We also tracked in-game performance with FRAPS and had similar results. Put simply, unless we have something odd going on with driver optimizations, a BIOS bug, or a glitch in the OS, our NV cards perform better on the AMD platform than they do on the Intel platform. The pattern reverses itself when we utilize the AMD video cards.

It is items like this that make you lose hair and delay articles, neither of which I can afford to have happen. However, we have several suppliers assisting us with the problem (if it is a problem) and hope to have an answer shortly. These results also repeat themselves in other games like H.A.W.X. and Left 4 Dead but not in Crysis Warhead or Dawn of War II. Besides the gaming situation, we also see a similar pattern in AutoCAD 2010 and other 3D rendering applications where GPU acceleration is utilized; it is just not as pronounced.

77 Comments


MadMan007 - Tuesday, September 15, 2009 - link

I don't see many enthusiasts, even upgrade junkies, buying a $1000-1500 6c/12t CPU. It will likely only find a home in time=money systems as far as workstations.

nvmarino - Tuesday, September 15, 2009 - link

I remember reading about an issue with nvidia performance on i7 back around i7 launch. Yep, here it is (one of the few decent articles I had read over at tom's in a while...): http://www.tomshardware.com/reviews/geforce-gtx-28...

Ryun - Tuesday, September 15, 2009 - link

I was just about to post this as well. I've seen other sites come across the same issue as well: http://www.bit-tech.net/hardware/cpus/2009/04/23/a... (Far Cry 2 benchmark is more noticeable)

I would have thought the issue would've been fixed by now, though I still have not recommended GTX 2-series cards with Nehalem in spite of it.

Gary Key - Tuesday, September 15, 2009 - link

Here is the thing, the numbers lined up with the AMD HD 4890 the last time I tested on Vista with the 180 series drivers. I tried the 180s under Win7 and had the same problem, even the inbox Win7 drivers show this pattern.

This does not occur in all games either, which makes it even more confusing as the thought process a few months ago was the lack of driver optimizations in GPU bound situations. If that were the case, Crysis/Crysis Warhead should show the largest difference based on current game engines, it does not.

Instead we have titles like H.A.W.X. and L4D, not exactly GPU killers, showing this pattern besides FarCry 2. We just want an answer, but I am not going to wait much longer. ;)

neoflux - Tuesday, September 15, 2009 - link

Excuse my noobness, but are those 2 gaming benchmarks in frames per second, meaning that higher is better? Also, what is the minutes for each processor for? Thanks.

Rajinder Gill - Tuesday, September 15, 2009 - link

Higher is better. 'min' refers to minimum frame rate.

yacoub - Tuesday, September 15, 2009 - link

yeah i was wondering why "minutes" were listed. might want to change "min" to "min fps" or something similar. "min" more commonly means "minute" and leads to confusion.

neoflux - Tuesday, September 15, 2009 - link

thank you, sir.

SmCaudata - Tuesday, September 15, 2009 - link

I think with the current generation of video cards the 1156 is the way to go. The question I have is what about the next gen. With all of the games that are now video card limited it seems that the cheapest upgrade to a new computer down the road will be a second vid card. It will be interesting to see how much the 1156 bottlenecks with CrossFire in the next few weeks.

yacoub - Tuesday, September 15, 2009 - link

Most people aren't worried about multi-card systems. So long as the single card next-gen GPUs don't fully saturate a PCIe x16 bus, 1156 will be fine.